Variations of Genetic Algorithms

The goal of this project is to develop Genetic Algorithms (GAs) that solve the Schaffer F6 function in fewer than 4000 function evaluations, measured over a total of 30 runs. Four types of GA are presented: Generational GA (GGA), Steady-State (mu+1)-GA (SSGA), Steady-Generational (mu,mu)-GA (SGGA), and (mu+mu)-GA.

💡 Research Summary

The paper investigates the performance of four well‑known variants of Genetic Algorithms (GAs) on the continuous benchmark problem Schaffer F6, with the explicit goal of solving the problem in fewer than 4,000 function evaluations per run, measured over 30 independent runs. The four GA variants examined are: (1) a Generational GA (GGA), (2) a Steady‑State (µ+1)‑GA (SSGA), (3) a Steady‑Generational (µ,µ)‑GA (SGGA), and (4) a (µ+µ)‑GA. For each variant, three crossover operators are considered: Single‑Point Crossover (SPX), Mid‑Point Crossover (MPX), and Blend Crossover (BLX). All experiments use a fixed population size of 16, a mutation rate of 0.012, binary tournament selection, and a search space bounded by –100 to +100 in both dimensions.
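For orientation, the benchmark and the stated setup can be sketched in a few lines of Python. The summary does not give the formula, so the block below assumes the commonly cited minimisation form of Schaffer F6 (global minimum 0 at the origin); the paper may instead use an equivalent maximisation form. The names `schaffer_f6` and `random_population` are illustrative, not taken from the paper.

```python
import math
import random

LOW, HIGH = -100.0, 100.0   # search bounds stated in the summary
POP_SIZE = 16               # population size stated in the summary


def schaffer_f6(x: float, y: float) -> float:
    """Assumed standard minimisation form; global minimum 0 at (0, 0)."""
    r2 = x * x + y * y
    return 0.5 + (math.sin(math.sqrt(r2)) ** 2 - 0.5) / (1.0 + 0.001 * r2) ** 2


def random_population(rng: random.Random) -> list:
    """Draw POP_SIZE candidate points uniformly inside the search bounds."""
    return [(rng.uniform(LOW, HIGH), rng.uniform(LOW, HIGH))
            for _ in range(POP_SIZE)]


if __name__ == "__main__":
    rng = random.Random(42)
    population = random_population(rng)
    best = min(population, key=lambda p: schaffer_f6(*p))
    print("best initial point:", best, "fitness:", schaffer_f6(*best))
```

In this form every fitness value lies in [0, 1], and the many concentric ripples around the origin are what make the function a hard test for a small population.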

Methodology

Each GA‑crossover combination is run 30 times, and the number of function evaluations required to reach the termination criterion is recorded for each run. The authors then apply a two‑stage statistical analysis. First, an ANOVA test determines whether the mean evaluation counts of the compared algorithms differ significantly (p < 0.05). If a significant difference is found, the worst‑performing algorithm is removed and the test is repeated until all remaining algorithms are statistically indistinguishable (p ≥ 0.05). Next, a Student’s t‑test (one‑ or two‑tailed as appropriate) is used to refine the equivalence classes, with |t| > 1.7 taken as a significant difference: for example, |t| ≈ 1.5 keeps two algorithms in the same equivalence class, whereas |t| ≈ 1.9 places them in different classes. The authors also use an F‑test to decide whether to assume equal variances in the t‑test.
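The t‑test stage can be illustrated with a small standard-library sketch. It computes the two‑sample t statistic in both the equal‑variance (pooled) and unequal‑variance (Welch) forms, plus the variance ratio an F‑test would examine. The function names and the sample data are hypothetical, and the 1.7 cutoff is simply the threshold quoted above (it is close to the one‑tailed 5% critical value for two samples of 30 runs); this is not the authors' code.

```python
import math
from statistics import mean, variance


def pooled_t(a, b):
    """Student's t statistic assuming equal variances (pooled estimate)."""
    na, nb = len(a), len(b)
    sp2 = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / math.sqrt(sp2 * (1 / na + 1 / nb))


def welch_t(a, b):
    """t statistic without the equal-variance assumption (Welch's form)."""
    return (mean(a) - mean(b)) / math.sqrt(
        variance(a) / len(a) + variance(b) / len(b))


def variance_ratio(a, b):
    """Larger sample variance over the smaller one, as used in an F-test."""
    va, vb = variance(a), variance(b)
    return max(va, vb) / min(va, vb)


def same_class(a, b, threshold=1.7):
    """Apply the |t| cutoff quoted in the summary (pooled form)."""
    return abs(pooled_t(a, b)) <= threshold


if __name__ == "__main__":
    # Made-up evaluation counts, for illustration only.
    runs_a = [1500, 1620, 1480, 1710, 1550]
    runs_b = [1580, 1600, 1530, 1690, 1610]
    print("pooled t:", pooled_t(runs_a, runs_b))
    print("Welch t:", welch_t(runs_a, runs_b))
    print("same equivalence class:", same_class(runs_a, runs_b))
```

A p‑value would additionally require the t distribution's CDF, which the standard library does not provide; a statistics package would normally be used for that step.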

Algorithmic Details

- GGA creates a full set of P offspring each generation, replaces the entire parent population, and therefore performs P function evaluations per cycle. No elitism is applied, so parent and offspring generations do not overlap.

- SSGA, the (µ+1)‑GA, selects two parents, produces a single offspring, and replaces the worst individual. Only one function evaluation is required per cycle, dramatically reducing evaluation cost.

- SGGA, the (µ,µ)‑GA, also creates a single offspring per cycle, but replaces a randomly chosen non‑best individual rather than the worst. Two evaluations are required per cycle.

- (µ+µ)‑GA generates a child population of size µ, merges it with the parent population (total 2µ), and then selects the top µ individuals. This approach incurs the highest per‑cycle evaluation cost but guarantees that the best individuals from both generations survive.
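The four replacement schemes differ only in how offspring enter the population, which a minimal sketch can make concrete. The block assumes minimisation, represents each individual as a `(fitness, genes)` tuple, and takes a hypothetical `make_child(parent_a, parent_b)` callable that is expected to perform crossover, mutation and evaluation. All function names are illustrative, and the steady‑generational step follows the one‑offspring description above.

```python
import random


def tournament(pop, rng):
    """Binary tournament: of two random individuals, the lower fitness wins."""
    a, b = rng.sample(pop, 2)
    return a if a[0] <= b[0] else b


def breed(pop, make_child, rng):
    """Select two parents by tournament and produce one evaluated child."""
    return make_child(tournament(pop, rng), tournament(pop, rng))


def generational_step(pop, make_child, rng):
    """GGA: every parent is replaced by a new offspring."""
    return [breed(pop, make_child, rng) for _ in pop]


def steady_state_step(pop, make_child, rng):
    """(mu+1)-GA: one offspring replaces the current worst individual."""
    new_pop = list(pop)
    worst = max(range(len(pop)), key=lambda i: pop[i][0])
    new_pop[worst] = breed(pop, make_child, rng)
    return new_pop


def steady_generational_step(pop, make_child, rng):
    """(mu,mu)-GA: one offspring replaces a random non-best individual."""
    new_pop = list(pop)
    best = min(range(len(pop)), key=lambda i: pop[i][0])
    victim = rng.choice([i for i in range(len(pop)) if i != best])
    new_pop[victim] = breed(pop, make_child, rng)
    return new_pop


def mu_plus_mu_step(pop, make_child, rng):
    """(mu+mu)-GA: merge parents and mu offspring, keep the best mu."""
    children = [breed(pop, make_child, rng) for _ in pop]
    return sorted(pop + children, key=lambda ind: ind[0])[:len(pop)]
```

Because each step returns a new list, the same driver loop can swap one scheme for another while counting calls to `make_child` as function evaluations.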

Crossover Operators

- SPX splits each parent chromosome at a single cut point, producing two children. It works for both binary and real‑coded representations but introduces limited diversity.

- MPX computes the midpoint of each gene pair and creates a single real‑coded offspring. It tends to concentrate the search around the average of the parents, improving convergence speed on continuous problems.

- BLX selects each gene of the offspring uniformly at random within the interval defined by the two parent genes, thereby increasing diversity but potentially slowing convergence due to excessive randomness.
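The three operators, as described above, can be sketched for real‑coded chromosomes stored as Python lists. The function names are illustrative. The `blx` function implements exactly the description given here, sampling inside the parents' interval; the widely used BLX‑α variant extends that interval by a factor α on each side, and the summary does not say which form the paper uses.

```python
import random


def spx(p1, p2, rng):
    """Single-point crossover: swap tails after one random cut point."""
    cut = rng.randrange(1, len(p1))  # cut strictly inside the chromosome
    return p1[:cut] + p2[cut:], p2[:cut] + p1[cut:]


def mpx(p1, p2):
    """Mid-point crossover: the child is the gene-wise average."""
    return [(a + b) / 2.0 for a, b in zip(p1, p2)]


def blx(p1, p2, rng):
    """Blend crossover: each gene drawn uniformly between the parent genes."""
    return [rng.uniform(min(a, b), max(a, b)) for a, b in zip(p1, p2)]


if __name__ == "__main__":
    rng = random.Random(7)
    mum, dad = [10.0, -40.0], [30.0, 20.0]
    print("SPX:", spx(mum, dad, rng))
    print("MPX:", mpx(mum, dad))
    print("BLX:", blx(mum, dad, rng))
```

With only two genes, as in Schaffer F6, single‑point crossover has a single possible cut, so its two children just exchange the parents' second coordinates. That is consistent with the limited diversity noted for SPX.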

Results

The statistical analysis yields the following key findings:

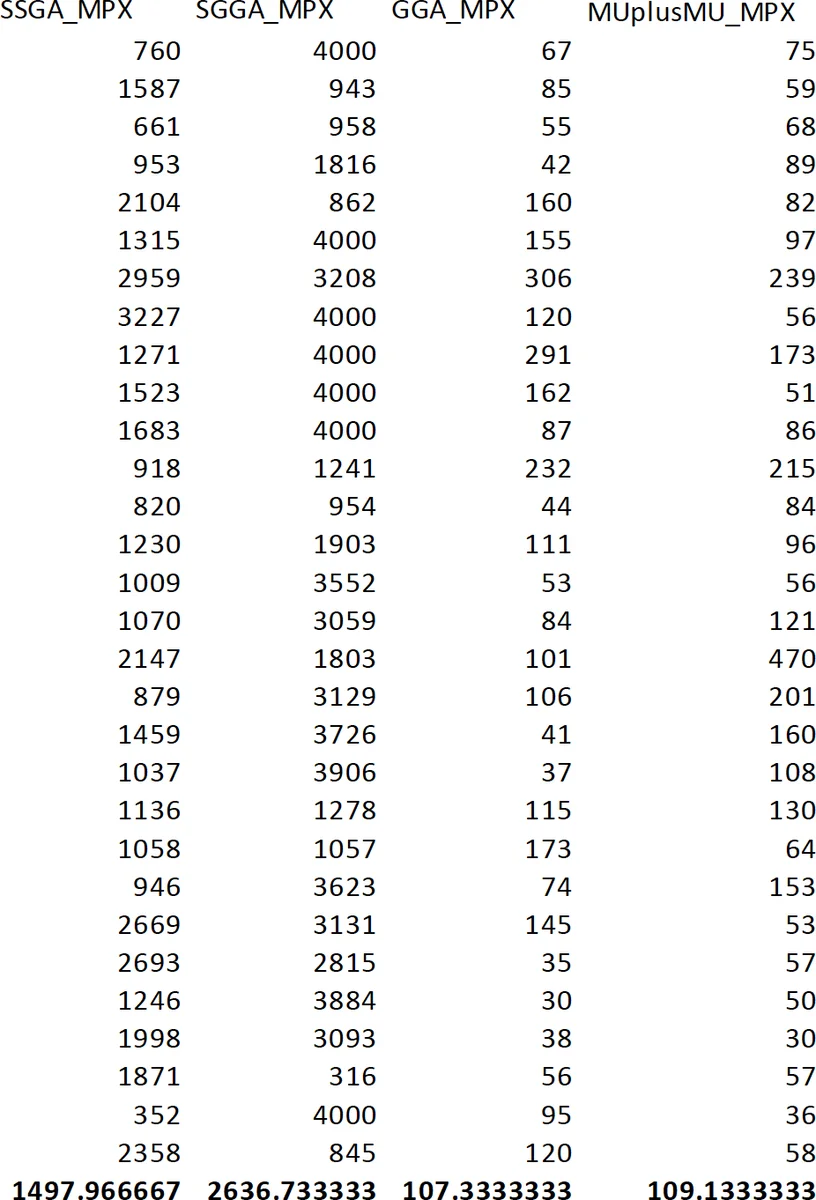

- SSGA‑MPX achieves the lowest average number of function evaluations (≈ 1,497) among all SSGA configurations, and all three SSGA crossover methods belong to the same equivalence class.

- SGGA‑MPX records an average of ≈ 2,636 evaluations, while the worst performer overall, also an SGGA configuration, averages ≈ 3,637 evaluations, placing SGGA in a distinct, less‑efficient class.

- GGA‑MPX and (µ+µ)‑GA‑MPX both achieve average evaluation counts around 1,600. ANOVA produces a p‑value > 0.05 when comparing these two, and the subsequent t‑test yields |t| ≈ 0.09, indicating that they belong to the same equivalence class. Consequently, these two configurations are identified as the most efficient overall.

- For the other crossover operators (SPX and BLX), the average evaluation counts are consistently higher than for MPX across all GA variants, and the statistical tests confirm significant differences.

Overall, the MPX operator consistently outperforms SPX and BLX in this continuous‑domain setting, regardless of the underlying GA variant. While SSGA and SGGA reduce per‑generation evaluation cost, their final convergence quality is inferior to that of GGA and (µ+µ)‑GA when paired with MPX.

Interpretation and Implications

The study demonstrates that, under a strict evaluation budget, the choice of crossover operator can have a larger impact on performance than the choice of GA variant. MPX’s averaging mechanism efficiently guides the search toward promising regions of the continuous landscape, leading to fewer evaluations needed to reach the target fitness. The (µ+µ)‑GA’s elitist selection ensures that high‑quality solutions are retained, compensating for its higher per‑generation evaluation cost. Consequently, for problems similar to Schaffer F6, practitioners should consider using a traditional generational GA or a (µ+µ)‑GA combined with MPX crossover to achieve the best trade‑off between evaluation economy and solution quality.

Limitations and Future Work

The experiments are confined to a single benchmark function; extending the analysis to a broader suite of continuous and discrete problems would test the generality of the conclusions. Moreover, exploring adaptive mutation rates, dynamic population sizes, or self‑adjusting crossover strategies could further improve efficiency. Finally, integrating multi‑objective optimization or real‑time constraint handling would broaden the applicability of the findings to more complex, real‑world scenarios.

In summary, the paper provides a thorough empirical comparison of four GA variants and three crossover operators on a constrained evaluation budget, identifies MPX‑augmented GGA and (µ+µ)‑GA as the top performers, and offers practical guidance for researchers and engineers seeking efficient evolutionary solutions under tight computational limits.
