Robust sound event detection in bioacoustic sensor networks

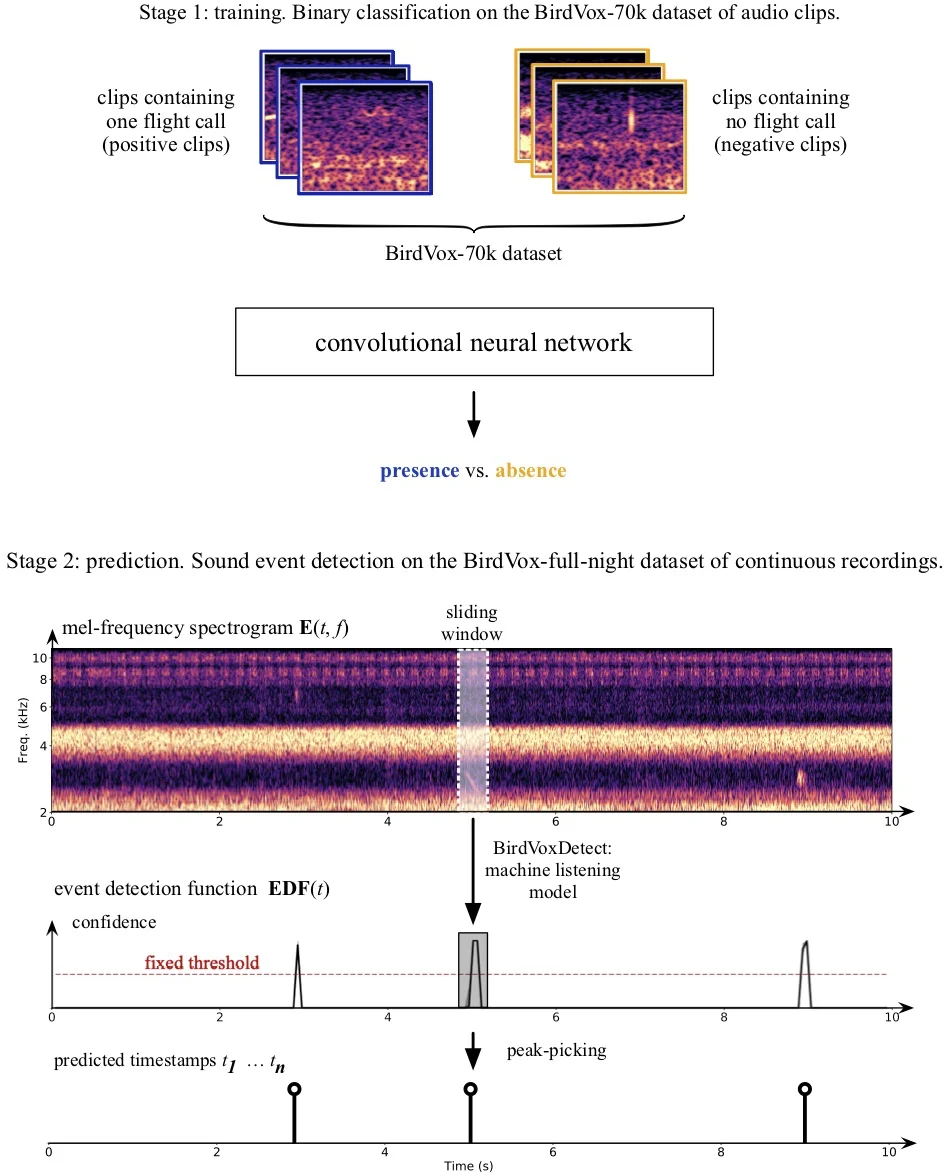

Bioacoustic sensors, sometimes known as autonomous recording units (ARUs), can record sounds of wildlife over long periods of time in scalable and minimally invasive ways. Deriving per-species abundance estimates from these sensors requires detection, classification, and quantification of animal vocalizations as individual acoustic events. Yet, variability in ambient noise, both over time and across sensors, hinders the reliability of current automated systems for sound event detection (SED), such as convolutional neural networks (CNN) in the time-frequency domain. In this article, we develop, benchmark, and combine several machine listening techniques to improve the generalizability of SED models across heterogeneous acoustic environments. As a case study, we consider the problem of detecting avian flight calls from a ten-hour recording of nocturnal bird migration, recorded by a network of six ARUs in the presence of heterogeneous background noise. Starting from a CNN yielding state-of-the-art accuracy on this task, we introduce two noise adaptation techniques, respectively integrating short-term (60 milliseconds) and long-term (30 minutes) context. First, we apply per-channel energy normalization (PCEN) in the time-frequency domain, which applies short-term automatic gain control to every subband in the mel-frequency spectrogram. Secondly, we replace the last dense layer in the network by a context-adaptive neural network (CA-NN) layer. Combining them yields state-of-the-art results that are unmatched by artificial data augmentation alone. We release a pre-trained version of our best performing system under the name of BirdVoxDetect, a ready-to-use detector of avian flight calls in field recordings.

💡 Research Summary

The paper addresses the challenge of robust sound event detection (SED) for avian flight calls in heterogeneous acoustic environments typical of large‑scale bioacoustic sensor networks. Existing convolutional neural network (CNN) detectors achieve state‑of‑the‑art performance on benchmark datasets, yet their recall varies widely over time and across sensors: it fluctuates dramatically between dusk and dawn and from one autonomous recording unit (ARU) to another, because background noise (e.g., insect stridulations) changes both over the course of the night and from site to site. This variability introduces systematic bias into downstream ecological analyses.

To mitigate these issues, the authors propose two complementary adaptation techniques. The first is per‑channel energy normalization (PCEN), a time‑frequency representation that applies a short‑term (≈60 ms) automatic gain control to each mel‑frequency channel. PCEN enhances transient events such as bird vocalizations while suppressing stationary background components, thereby reducing temporal over‑fitting. The second technique is a context‑adaptive neural network (CA‑NN) layer that replaces the final dense layer of the CNN. An auxiliary sub‑network computes low‑dimensional long‑term (≈30 min) spectral summary statistics (means, variances, spectral centroids, etc.) from the entire recording segment. These statistics are fed into the CA‑NN to dynamically modulate the weights or biases of the final classification layer, providing a form of spatial adaptation that compensates for sensor‑specific noise characteristics.
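As a rough illustration of the PCEN step described above, the following NumPy sketch applies the commonly published PCEN recurrence: a first-order smoother `M` tracks the slowly varying energy in each channel, and each frame is divided by that estimate before root compression. The function name `pcen` and all default parameter values are illustrative assumptions, not the settings used in the paper. In particular, the smoothing coefficient `s` would have to be chosen relative to the frame hop to obtain a time constant near 60 ms.

```python
import numpy as np


def pcen(E, s=0.025, alpha=0.98, delta=2.0, r=0.5, eps=1e-6):
    """Per-channel energy normalization of a non-negative spectrogram.

    E has shape (n_channels, n_frames). All default values are
    illustrative assumptions, not the paper's settings.
    """
    E = np.asarray(E, dtype=float)
    M = np.empty_like(E)
    # Initialize the smoother with the first frame of each channel.
    M[:, 0] = E[:, 0]
    # First-order IIR low-pass filter along time, one per channel.
    for t in range(1, E.shape[1]):
        M[:, t] = (1.0 - s) * M[:, t - 1] + s * E[:, t]
    # Adaptive gain control followed by root compression.
    return (E / (eps + M) ** alpha + delta) ** r - delta ** r
```

With this formulation, a channel whose energy is constant over time maps to a constant output, whereas a brief burst of energy stands out against the smoothed background, which is the behavior the summary attributes to PCEN.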

The authors evaluate the methods on the publicly available BirdVox‑full‑night dataset, which consists of one continuous ten‑hour recording from each of six ARUs, captured during nocturnal migration. They adopt a “leave‑one‑sensor‑out” cross‑validation protocol to simulate deployment on unseen sensors. The experimental factors include (i) artificial data augmentation (noise mixing, time‑stretching), (ii) choice of time‑frequency representation (PCEN vs. log‑mel spectrogram), and (iii) formulation of the context‑adaptive layer (dynamic weights vs. dynamic biases). The computational effort amounts to roughly ten CPU‑years.
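The leave‑one‑sensor‑out protocol can be sketched as a generator that holds out every clip from one sensor per fold. This is a minimal sketch, not the authors' code: the function name `leave_one_sensor_out` and the sensor labels are hypothetical, and any further split of the training sensors into training and validation subsets is omitted.

```python
def leave_one_sensor_out(sensor_ids):
    """Yield (held_out_sensor, train_indices, test_indices) per fold.

    sensor_ids[i] is the (hypothetical) label of the sensor that
    recorded clip i. Each fold tests on exactly one sensor.
    """
    for held_out in sorted(set(sensor_ids)):
        train = [i for i, s in enumerate(sensor_ids) if s != held_out]
        test = [i for i, s in enumerate(sensor_ids) if s == held_out]
        yield held_out, train, test
```

Splitting by sensor rather than by clip keeps every recording from the test sensor out of training, so the score reflects performance on a site the model has never heard.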

Key findings are: (1) PCEN alone substantially reduces temporal over‑fitting, yielding more stable recall across the night; (2) CA‑NN alone does not improve a log‑mel‑based model, indicating that context adaptation requires a representation that already attenuates stationary noise; (3) when PCEN is combined with CA‑NN, both temporal and spatial generalization improve, achieving state‑of‑the‑art F‑scores that surpass any configuration using data augmentation alone; (4) the two techniques are complementary rather than interchangeable.
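To make the context‑adaptive layer in these findings more concrete, here is a minimal sketch of the "dynamic bias" formulation: the output unit keeps fixed weights on the clip embedding, while its bias is shifted by a function of the long‑term context vector. The linear map from context to bias, the function name, and all variable names are assumptions made for illustration. The paper's actual layer may differ.

```python
import numpy as np


def context_adaptive_output(h, z, w, v, b0):
    """Detection probability with a context-dependent bias.

    h: (n_hidden,) short-term embedding of the current clip.
    z: (n_context,) long-term summary statistics of the recording.
    w: (n_hidden,) fixed output weights.
    v: (n_context,) hypothetical weights mapping context to a bias shift.
    b0: scalar static bias.
    """
    dynamic_bias = b0 + v @ z          # bias adapts to ambient conditions
    logit = w @ h + dynamic_bias       # weights on the clip stay fixed
    return 1.0 / (1.0 + np.exp(-logit))
```

When `z` is all zeros, the layer reduces to an ordinary dense output unit, so the context only shifts the decision threshold and leaves the learned clip features unchanged.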

Based on these results, the authors assemble the best performing configuration into a ready‑to‑use system called BirdVoxDetect. The software is implemented in Python, released under the MIT license, and includes a command‑line interface and an API that segment continuous recordings into individual detected events. Comprehensive documentation, a test suite, and PyPI packaging facilitate reproducibility and large‑scale deployment. The authors envision BirdVoxDetect enabling autonomous, real‑time monitoring of nocturnal bird migration, with applications ranging from aviation safety to wind‑farm impact assessment and broader ecological forecasting.
