Immersive Insights: A Hybrid Analytics System for Collaborative Exploratory Data Analysis

In the past few years, augmented reality (AR) and virtual reality (VR) technologies have improved substantially in both accessibility and hardware capabilities, encouraging the application of these devices across various domains. While researchers have demonstrated possible advantages of AR and VR for certain data science tasks, it is still unclear how these technologies perform in the context of exploratory data analysis (EDA) at large. In particular, we believe it is important to better understand which level of immersion EDA concretely benefits from, and to quantify the contribution of AR and VR with respect to standard analysis workflows. In this work, we leverage Dataspace, a reconfigurable hybrid reality environment, to study how data scientists might perform EDA in a co-located, collaborative context. Specifically, we propose the design and implementation of Immersive Insights, a hybrid analytics system combining high-resolution displays, table projections, and AR visualizations of the data. We conducted a two-part user study with twelve data scientists, in which we evaluated how different levels of data immersion affect the EDA process and compared the performance of Immersive Insights with a state-of-the-art, non-immersive data analysis system.

💡 Research Summary

The paper investigates how varying levels of immersion—ranging from traditional high‑resolution displays to augmented reality (AR) and virtual reality (VR) head‑mounted displays—affect collaborative exploratory data analysis (EDA). Leveraging the “Dataspace” environment, a reconfigurable hybrid reality space equipped with fifteen movable UHD screens, a central projection table, spatial audio, and both AR (Microsoft HoloLens, Magic Leap) and VR (Samsung Odyssey) headsets, the authors built a web‑based analytics application called Immersive Insights. The system integrates statistical visualizations, dimensionality‑reduction plots, clustering results, and feature‑sensitivity analyses. Its front‑end uses React.js and D3.js, while the back‑end runs Python libraries (NumPy, scikit‑learn, pandas) to perform real‑time computations, communicating via WebSockets. Users can interact through hand gestures, gaze, and voice commands, seamlessly switching between 2D high‑resolution screens, the table projection, and 3D AR overlays that float above the table.
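To make the back-end description more concrete, the following is a minimal sketch of the kind of computation such a Python service might run before sending results to the front-end: standardize a tabular dataset, project it to three dimensions for a 3D point cloud, and assign a cluster label to each row. The choice of PCA and k-means, the function name `project_and_cluster`, and the column names `pc1`, `pc2`, `pc3`, and `cluster` are assumptions made for illustration; the summary does not state which algorithms or data layout Immersive Insights actually uses.

```python
import numpy as np
import pandas as pd
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler


def project_and_cluster(frame, n_clusters=3, seed=0):
    """Return a copy of `frame` with 3D PCA coordinates and a cluster label per row.

    Hypothetical helper: PCA and k-means stand in for whichever
    dimensionality-reduction and clustering methods the real system offers.
    """
    # Standardize so that no single feature dominates the projection.
    scaled = StandardScaler().fit_transform(frame.to_numpy(dtype=float))

    # Three components, so the result can be drawn as a 3D point cloud.
    coords = PCA(n_components=3, random_state=seed).fit_transform(scaled)

    # Cluster in the standardized feature space, not in the projection.
    labels = KMeans(n_clusters=n_clusters, n_init=10, random_state=seed).fit_predict(scaled)

    result = frame.copy()
    for i, name in enumerate(("pc1", "pc2", "pc3")):
        result[name] = coords[:, i]
    result["cluster"] = labels
    return result


if __name__ == "__main__":
    # Synthetic data only; it does not come from the paper.
    rng = np.random.default_rng(0)
    data = pd.DataFrame(rng.normal(size=(60, 5)), columns=list("abcde"))
    print(project_and_cluster(data).head())
```

In a setup like the one described, a result of this shape could be serialized (for example with `DataFrame.to_json`) and pushed to the clients over the WebSocket connection, so that the 2D screens and the AR overlay render the same coordinates and labels.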

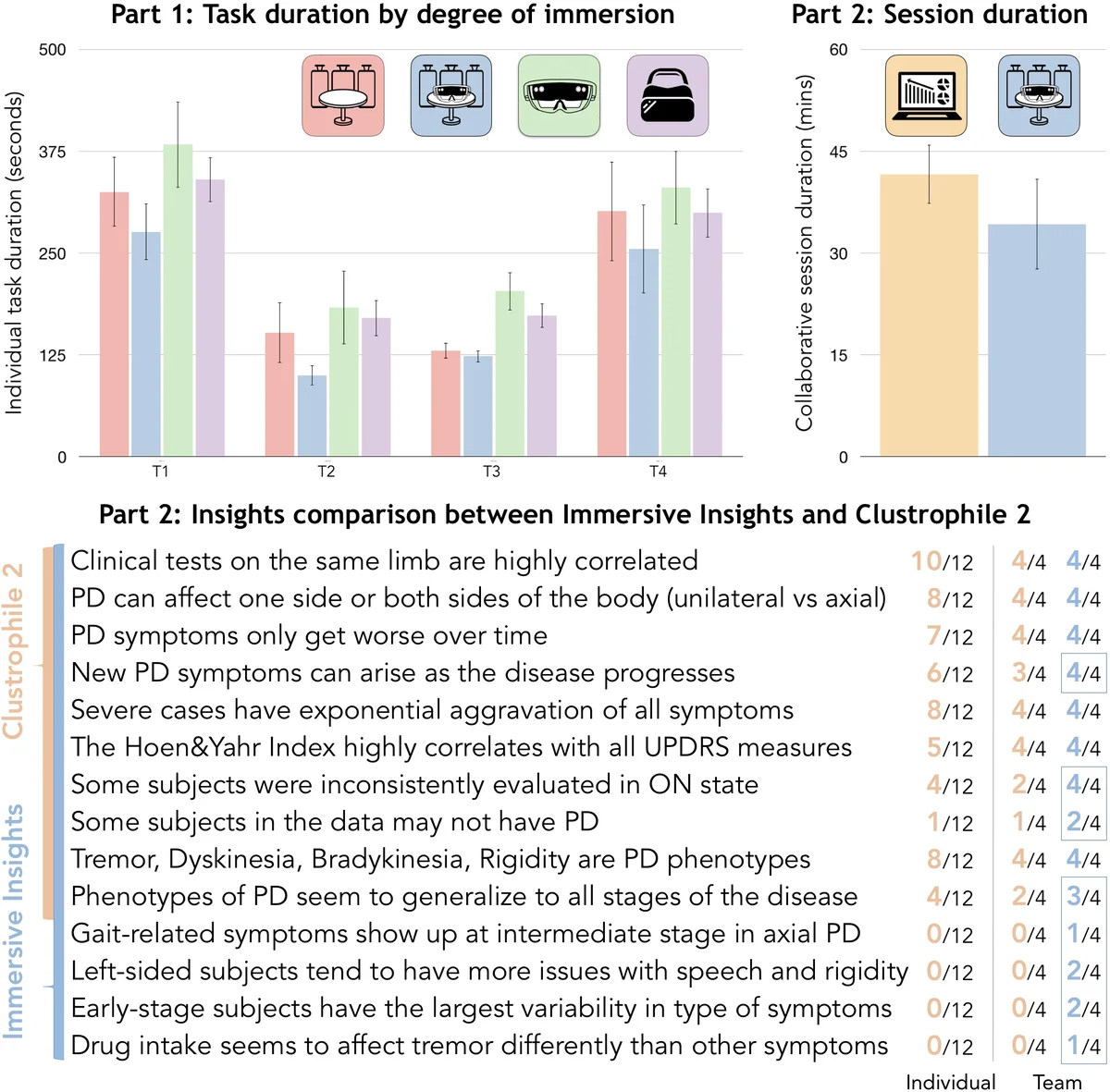

A two‑part user study with twelve professional data scientists evaluated the impact of four immersion conditions: (1) Dataspace screens only, (2) AR integrated with the screens, (3) AR used as a standalone device, and (4) VR used as a standalone device. Participants performed a series of EDA tasks—data loading, dimensionality reduction, cluster interpretation, and feature importance analysis—while the researchers recorded task completion time, accuracy, subjective immersion, and fatigue. The AR‑integrated condition yielded the best performance: it reduced task time by roughly 22 % and improved accuracy by about 9 % compared with the screen‑only baseline. Users reported that the spatial co‑location of 3D point clouds and 2D statistical charts in AR helped them quickly perceive relationships. The VR‑only condition, despite high visual immersion, suffered from interaction latency and higher fatigue, leading to longer completion times and more errors.
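For readers who want to see how percentages such as "roughly 22 %" are typically derived, here is a small sketch of a relative-change calculation against a baseline. The function name `relative_change` and the sample timings are hypothetical and chosen only to illustrate the arithmetic; they are not measurements from the study, and the summary does not say exactly how the authors computed their figures.

```python
def relative_change(baseline, treatment):
    """Percentage change of `treatment` relative to `baseline`.

    Negative values mean a reduction (e.g. shorter task time),
    positive values mean an increase (e.g. higher accuracy).
    """
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (treatment - baseline) / baseline * 100.0


if __name__ == "__main__":
    # Hypothetical mean task times in seconds, not data from the paper.
    screens_only = 100.0
    ar_integrated = 78.0
    print(f"{relative_change(screens_only, ar_integrated):.1f} %")
```

With these made-up timings, the AR-integrated condition would show a 22 % reduction relative to the screen-only baseline.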

In the second phase, Immersive Insights was directly compared with Clustrophile 2, a state‑of‑the‑art desktop clustering tool that inspired many of Immersive Insights’ visual components. Teams using Immersive Insights demonstrated superior collaborative efficiency: they exchanged more visual references, synchronized their exploration paths in real time, and produced final analysis reports that scored higher on insight quality (≈12 % improvement). Overall workflow time decreased by about 18 % relative to the desktop baseline.

The authors claim three primary contributions: (1) a fully integrated hybrid‑reality EDA platform that combines high‑resolution 2D displays, a shared projection table, and AR/VR headsets; (2) a systematic quantitative assessment of how different immersion levels influence EDA performance; and (3) empirical evidence that AR‑enhanced collaboration can outperform conventional desktop tools in both speed and insight generation. Limitations include the modest sample size (12 participants), a limited set of datasets (mostly medium‑size tabular data), and current hardware constraints such as AR headset field‑of‑view and gesture‑recognition accuracy. Planned future work includes studies with larger and more diverse user groups, larger and domain‑varied datasets, and long‑term collaborative scenarios, as well as AI‑driven interaction aids that could further reduce cognitive load in immersive environments.
