Correlation Priors for Reinforcement Learning

Many decision-making problems naturally exhibit pronounced structures inherited from the characteristics of the underlying environment. In a Markov decision process model, for example, two distinct states can have inherently related semantics or encode resembling physical state configurations. This often implies locally correlated transition dynamics among the states. In order to complete a certain task in such environments, the operating agent usually needs to execute a series of temporally and spatially correlated actions. Though there exists a variety of approaches to capture these correlations in continuous state-action domains, a principled solution for discrete environments is missing. In this work, we present a Bayesian learning framework based on Pólya-Gamma augmentation that enables an analogous reasoning in such cases. We demonstrate the framework on a number of common decision-making related problems, such as imitation learning, subgoal extraction, system identification and Bayesian reinforcement learning. By explicitly modeling the underlying correlation structures of these problems, the proposed approach yields superior predictive performance compared to correlation-agnostic models, even when trained on data sets that are an order of magnitude smaller in size.

💡 Research Summary

The paper “Correlation Priors for Reinforcement Learning” addresses a fundamental gap in the modeling of discrete‑state, discrete‑action decision problems: the lack of principled priors that capture spatial and temporal correlations among states, actions, or higher‑level intentions. Traditional Bayesian treatments of Markov decision processes (MDPs) place independent Dirichlet priors on each multinomial probability vector (e.g., transition probabilities or policy distributions). This independence assumption discards valuable domain knowledge such as the fact that neighboring states often share similar dynamics, that similar actions produce comparable effects, or that an agent’s goals evolve in a correlated fashion. When data are scarce—a common situation in real‑world robotics, networking, or multi‑agent coordination—ignoring these structures leads to poor sample efficiency and unreliable inference.
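To make the independence assumption concrete, here is a minimal sketch of the correlation-agnostic baseline: each state gets its own symmetric Dirichlet prior, so the posterior mean of a row depends only on that row's counts. The function name, the concentration `alpha`, and the count table are hypothetical illustrations, not taken from the paper.

```python
import numpy as np

def dirichlet_posterior_mean(counts, alpha=1.0):
    """Posterior mean per row under independent symmetric Dirichlet(alpha) priors."""
    counts = np.asarray(counts, dtype=float)
    n_categories = counts.shape[1]
    row_totals = counts.sum(axis=1, keepdims=True)
    return (counts + alpha) / (row_totals + alpha * n_categories)

# Hypothetical action counts for three neighbouring states and three actions.
counts = np.array([
    [9, 1, 0],  # state 0: well observed
    [0, 0, 0],  # state 1: never visited
    [8, 2, 0],  # state 2: well observed
])
policy_mean = dirichlet_posterior_mean(counts)

# The unvisited state falls back to the uniform prior mean, no matter how
# consistent its neighbours are: nothing is shared between rows.
print(policy_mean[1])
```

In this toy table both neighbours of state 1 strongly prefer the first action, yet the estimate for state 1 stays uniform. That lost information is what a correlated prior is meant to recover.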

To remedy this, the authors propose a hierarchical Bayesian model that jointly encodes all multinomial probability vectors through a set of latent real‑valued variables $\psi$. Each $\psi_{\cdot k}$ (for the $k$-th component of a $K$-category multinomial) follows a multivariate normal distribution $\mathcal{N}(\mu_k, \Sigma)$. The normal variables are mapped to the probability simplex via a stick‑breaking transformation $\Pi_{\text{SB}}(\psi)$, which is essentially a sequence of logistic functions that guarantee each transformed vector lies on the simplex. The covariance matrix $\Sigma$ is the core “correlation prior”: its entries encode how strongly the probability vectors of different covariate indices (states, state‑action pairs, goals, etc.) are expected to co‑vary. By choosing a kernel (e.g., squared‑exponential) over a distance measure on the covariate space, the prior can reflect spatial proximity, physical similarity, or any domain‑specific notion of relatedness.
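The construction above can be sketched in a few lines of NumPy. This is an illustration under stated assumptions rather than the authors' code: it uses the common convention that a `K`-category vector needs `K - 1` latent values per covariate (the last category receives the remaining stick), and the function names, the one-dimensional state layout, and the kernel settings are all hypothetical.

```python
import numpy as np

def squared_exponential_kernel(positions, length_scale=1.0, variance=1.0):
    """Covariance between covariates from their Euclidean distance."""
    positions = np.asarray(positions, dtype=float)
    diff = positions[:, None, :] - positions[None, :, :]
    sq_dist = np.sum(diff ** 2, axis=-1)
    return variance * np.exp(-0.5 * sq_dist / length_scale ** 2)

def stick_breaking(psi):
    """Map psi of shape (C, K-1) to probability vectors of shape (C, K)."""
    psi = np.asarray(psi, dtype=float)
    sigma = 1.0 / (1.0 + np.exp(-psi))           # logistic of each latent value
    remaining = np.cumprod(1.0 - sigma, axis=1)  # stick left after each break
    ones = np.ones((psi.shape[0], 1))
    remaining_before = np.hstack([ones, remaining[:, :-1]])
    head = sigma * remaining_before              # mass of the first K-1 categories
    tail = remaining[:, -1:]                     # leftover mass for the last category
    return np.hstack([head, tail])

# Draw one sample from the prior: 5 states on a line, K = 3 categories.
rng = np.random.default_rng(0)
positions = np.arange(5, dtype=float)[:, None]
Sigma = squared_exponential_kernel(positions, length_scale=2.0) + 1e-6 * np.eye(5)
psi = rng.multivariate_normal(np.zeros(5), Sigma, size=2).T  # shape (5, 2)
probs = stick_breaking(psi)
print(probs.round(3))
```

Because neighbouring rows of `psi` are correlated through `Sigma`, neighbouring rows of `probs` tend to be similar. Increasing `length_scale` strengthens that coupling.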

The stick‑breaking construction introduces a non‑conjugate link between the Gaussian prior and the multinomial likelihood, making exact Bayesian inference intractable. The authors overcome this obstacle by employing Pólya‑Gamma (PG) data augmentation, a technique originally developed for logistic regression. Introducing auxiliary PG variables $\omega$ transforms the logistic terms into conditionally Gaussian forms, restoring conjugacy in the augmented space. This enables a clean variational inference (VI) scheme: the joint variational distribution factorizes as $q(\psi, \omega) = \prod_k q(\psi_{\cdot k}) \prod_{c,k} q(\omega_{ck})$, where $q(\psi_{\cdot k})$ is Gaussian with mean $\lambda_k$ and covariance $V_k$, and $q(\omega_{ck})$ is a PG distribution parameterized by the observed counts $b_{ck}$ and a variational parameter $w_{ck}$. Closed‑form coordinate‑wise updates for $\lambda_k, V_k, w_{ck}$ are derived by maximizing the evidence lower bound (ELBO).
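The coordinate-wise updates can be sketched as follows. These are the standard mean-field updates for a Pólya-Gamma-augmented stick-breaking likelihood with a Gaussian prior, written from the factorization described above. The summary does not reproduce the paper's equations, so the exact form used by the authors may differ, and all function and variable names here are hypothetical.

```python
import numpy as np

def stick_breaking_stats(counts):
    """Per-stick binomial statistics: b[c, k] trials left, kappa = x - b / 2."""
    counts = np.asarray(counts, dtype=float)
    total = counts.sum(axis=1, keepdims=True)
    before = np.cumsum(counts, axis=1) - counts  # sum over j < k of x[c, j]
    b = (total - before)[:, :-1]                 # the last category needs no stick
    kappa = counts[:, :-1] - 0.5 * b
    return b, kappa

def pg_mean(b, w):
    """Mean of PG(b, w): b / (2 w) * tanh(w / 2), which tends to b / 4 as w -> 0."""
    w = np.asarray(w, dtype=float)
    small = np.abs(w) < 1e-8
    safe_w = np.where(small, 1.0, w)
    ratio = np.where(small, 0.25, np.tanh(safe_w / 2.0) / (2.0 * safe_w))
    return b * ratio

def variational_updates(counts, mu, Sigma, n_iters=50):
    """Iterate the updates for lam (C, K-1) and V (K-1, C, C)."""
    b, kappa = stick_breaking_stats(counts)
    n_sticks = b.shape[1]
    mu = np.asarray(mu, dtype=float)
    Sigma_inv = np.linalg.inv(Sigma)
    lam = mu.copy()
    V = np.stack([np.array(Sigma, dtype=float) for _ in range(n_sticks)])
    for _ in range(n_iters):
        for k in range(n_sticks):
            # q(omega_ck) = PG(b_ck, w_ck) with w_ck^2 = E[psi_ck^2]
            w = np.sqrt(lam[:, k] ** 2 + np.diag(V[k]))
            omega_mean = pg_mean(b[:, k], w)
            # q(psi_k) = N(lam_k, V_k): Gaussian prior times Gaussian pseudo-likelihood
            V[k] = np.linalg.inv(Sigma_inv + np.diag(omega_mean))
            lam[:, k] = V[k] @ (kappa[:, k] + Sigma_inv @ mu[:, k])
    return lam, V

# Toy data: state 1 is never observed, its two neighbours agree.
counts = np.array([[9, 1, 0], [0, 0, 0], [9, 1, 0]])
mu = np.zeros((3, 2))
idx = np.arange(3)
Sigma_corr = np.exp(-0.5 * (idx[:, None] - idx[None, :]) ** 2) + 1e-6 * np.eye(3)
lam_corr, _ = variational_updates(counts, mu, Sigma_corr)
lam_indep, _ = variational_updates(counts, mu, np.eye(3))
print(lam_corr[1, 0], lam_indep[1, 0])
```

With the correlated covariance, the posterior mean for the unobserved state is pulled toward its neighbours. With the identity covariance it stays at the prior mean, mirroring the behaviour of the independent baseline.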

Hyper‑parameter learning (i.e., estimating $\mu$ and $\Sigma$) is integrated via a variational Expectation‑Maximization (EM) loop. After each VI sweep, the ELBO is differentiated with respect to the hyper‑parameters, yielding analytic gradients. For a covariance of the form $\Sigma_\theta = \theta \tilde{\Sigma}$ (a scale parameter times a fixed kernel matrix), the optimal scale $\theta$ admits a closed‑form solution, avoiding repeated matrix inversions. More general kernel parameters (e.g., length‑scale) can be optimized numerically using the derived gradients.
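One way to see why the scale has a closed form: with the variational factors held fixed, only the expected Gaussian log-prior depends on the scale, and setting its derivative to zero gives the average of the expected quadratic forms. The sketch below implements that derivation under the Gaussian factorization described earlier. It is not confirmed to match the paper's expression, and the function names and toy inputs are hypothetical.

```python
import numpy as np

def expected_quadratics(lam, V, mu, Sigma_tilde_inv):
    """E_q[(psi_k - mu_k)^T Sigma_tilde^{-1} (psi_k - mu_k)] for every stick k."""
    values = []
    for k in range(lam.shape[1]):
        diff = lam[:, k] - mu[:, k]
        values.append(np.trace(Sigma_tilde_inv @ V[k]) + diff @ Sigma_tilde_inv @ diff)
    return np.array(values)

def expected_log_prior(theta, lam, V, mu, Sigma_tilde):
    """Sum over k of E_q[log N(psi_k | mu_k, theta * Sigma_tilde)]."""
    n_states = lam.shape[0]
    _, logdet = np.linalg.slogdet(Sigma_tilde)
    quads = expected_quadratics(lam, V, mu, np.linalg.inv(Sigma_tilde))
    per_stick = n_states * np.log(2.0 * np.pi * theta) + logdet + quads / theta
    return -0.5 * np.sum(per_stick)

def optimal_scale(lam, V, mu, Sigma_tilde):
    """Maximizer of expected_log_prior in theta; inverts Sigma_tilde only once."""
    n_states, n_sticks = lam.shape
    quads = expected_quadratics(lam, V, mu, np.linalg.inv(Sigma_tilde))
    return np.sum(quads) / (n_states * n_sticks)

# Hypothetical variational parameters for 4 states and 2 sticks.
rng = np.random.default_rng(0)
n_states, n_sticks = 4, 2
idx = np.arange(n_states)
Sigma_tilde = np.exp(-0.5 * (idx[:, None] - idx[None, :]) ** 2) + 1e-6 * np.eye(n_states)
lam = rng.normal(size=(n_states, n_sticks))
mu = np.zeros((n_states, n_sticks))
V = np.stack([0.1 * np.eye(n_states) for _ in range(n_sticks)])
theta_star = optimal_scale(lam, V, mu, Sigma_tilde)
print(theta_star)
```

Because the fixed kernel matrix does not change between EM iterations, its inverse can be cached and reused, which is consistent with the remark that the scale update avoids repeated matrix inversions.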

The authors validate the framework on four representative decision‑making tasks:

-

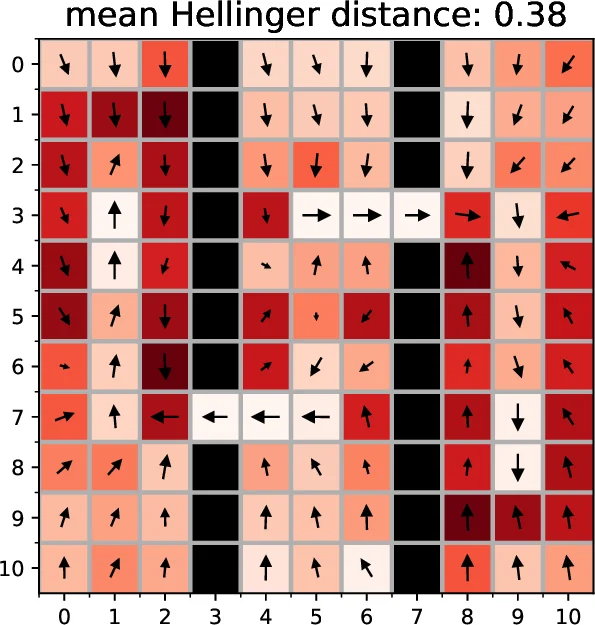

Imitation Learning – Reconstructing an expert’s policy from observed state‑action counts. The correlation prior captures similarities among states, leading to higher log‑likelihood and policy accuracy than independent Dirichlet baselines, especially when the number of demonstrations is reduced by an order of magnitude.

-

Subgoal Modeling – Decomposing a complex task into a sequence of subgoals. By placing a prior over the transition probabilities between subgoals, the method discovers a coherent subgoal graph that respects spatial proximity, outperforming methods that treat subgoals independently.

-

System Identification – Learning the transition dynamics of a queueing network. The prior encodes that neighboring queues have similar service dynamics; the resulting transition matrix estimates have lower mean‑squared error and better predictive performance under limited observation data.

-

Bayesian Reinforcement Learning – Integrating the learned transition prior into Bayesian RL algorithms (e.g., BEETLE, BAMCP). The prior guides exploration, yielding faster convergence to near‑optimal policies with fewer environment interactions.

Across all experiments, the proposed approach consistently achieves superior predictive performance while requiring substantially fewer samples. The authors also release an open‑source implementation, facilitating reproducibility.

Key strengths of the work include:

- General Applicability – The framework works for any discrete MDP where a notion of similarity among covariates can be defined, making it a discrete analogue of Gaussian‑process priors for continuous domains.

- Scalable Variational Inference – By leveraging PG augmentation, the method avoids costly Gibbs sampling and provides deterministic updates that converge quickly.

- Automatic Hyper‑parameter Tuning – The variational EM loop eliminates the need for manual prior calibration, a common bottleneck in Bayesian modeling.

Limitations and future directions are acknowledged. The covariance matrix $\Sigma$ scales quadratically with the number of covariate indices, which can become computationally burdensome for very large state‑action spaces. The authors suggest possible remedies such as low‑rank approximations, inducing‑point methods, or embedding the covariates into a lower‑dimensional latent space. Moreover, the choice of kernel function critically influences performance; learning kernel structure from data remains an open problem. Extending the approach to multi‑agent settings, where correlation may exist across agents’ policies, and to transfer learning scenarios, where priors learned in one task inform another, are promising avenues for further research.

In summary, the paper delivers a principled Bayesian machinery for embedding correlation knowledge into discrete reinforcement‑learning problems, demonstrates its practical benefits across diverse tasks, and opens the door to richer, data‑efficient modeling of structured decision‑making environments.
