A Queue-oriented Transaction Processing Paradigm

Transaction processing has been an active area of research for several decades. A fundamental characteristic of classical transaction processing protocols is non-determinism, which causes them to suffer from performance issues in modern computing environments, such as main-memory databases running on many-core, multi-socket CPUs and distributed environments. Recent proposals of deterministic transaction processing techniques have shown great potential in addressing these performance issues. In this position paper, I argue for a queue-oriented transaction processing paradigm that leads to better design and implementation of deterministic transaction processing protocols. I support my approach with extensive experimental evaluations and demonstrate significant performance gains.

💡 Research Summary

This position paper advocates for a fundamental shift in transaction processing architecture through the “Queue-oriented Transaction Processing Paradigm.” The author begins by critiquing classical non-deterministic transaction processing protocols, which suffer from performance bottlenecks in modern many-core, in-memory, and distributed computing environments, such as high abort rates under contention and the overhead of distributed commit protocols like Two-Phase Commit (2PC). While deterministic database systems have shown promise in mitigating these issues, the paper identifies inefficiencies in existing deterministic approaches and proposes a novel abstraction to overcome them.

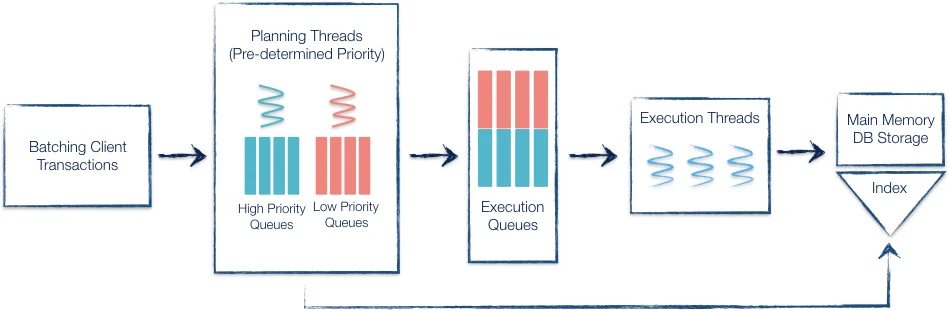

The core innovation lies in three interconnected principles. First, the Transaction Fragmentation Model breaks down transactions into smaller, self-contained units called “fragments,” which encapsulate transaction logic and abort conditions. Second, processing occurs in Two Deterministic Phases: a planning phase and an execution phase. During the planning phase, multiple threads analyze a batch of transactions, identify dependencies (data, conflict, commit, speculation) among fragments, and deterministically assign these fragments to priority-tagged queues. This phase requires minimal coordination. In the execution phase, worker threads are assigned these pre-filled queues. They simply execute the fragment logic in the order dictated by queue priority and FIFO discipline, resolving dependencies as defined in a shared data structure. Crucially, execution threads are unaware of the broader transaction context, eliminating traditional concurrency control overhead during execution.
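The two phases described above can be illustrated with a minimal Python sketch. It is not the paper's implementation: the names `Fragment`, `partition_of`, `plan`, and `execute` are hypothetical, routing fragments to executors by key is an assumption, and abort conditions and inter-fragment dependency tracking are left out entirely. It only shows how fragments planned into priority-tagged FIFO queues can be executed without locks, because each key is touched by exactly one executor in a fixed order.

```python
from collections import deque
from dataclasses import dataclass
from threading import Thread
from typing import Callable


@dataclass
class Fragment:
    """Hypothetical fragment: one operation on one record of a transaction."""
    txn_id: int
    key: str
    op: Callable[[int], int]  # computes the new value from the old value


def partition_of(key: str, num_executors: int) -> int:
    # Assumed routing rule: a stable function of the key, so every fragment
    # touching the same record lands on the same executor.
    return sum(key.encode()) % num_executors


def plan(batch, num_planners, num_executors):
    """Planning phase: returns queues[planner][executor] of fragments.

    The planner index acts as the queue priority (lower index runs first).
    Each planner's loop is independent of the others and could run in its
    own thread; it is kept sequential here for brevity.
    """
    queues = [[deque() for _ in range(num_executors)]
              for _ in range(num_planners)]
    chunk = (len(batch) + num_planners - 1) // num_planners
    for p in range(num_planners):
        for txn in batch[p * chunk:(p + 1) * chunk]:
            for frag in txn:
                queues[p][partition_of(frag.key, num_executors)].append(frag)
    return queues


def execute(queues, store, num_executors):
    """Execution phase: each executor drains its queues in priority order,
    FIFO within a queue, with no knowledge of transaction boundaries."""
    def worker(e):
        for planner_queues in queues:  # ascending planner index = priority
            q = planner_queues[e]
            while q:
                frag = q.popleft()
                store[frag.key] = frag.op(store[frag.key])

    threads = [Thread(target=worker, args=(e,)) for e in range(num_executors)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()


def demo():
    # Four transactions in batch order; each is a list of fragments.
    batch = [
        [Fragment(0, "a", lambda v: v + 10), Fragment(0, "b", lambda v: v + 1)],
        [Fragment(1, "a", lambda v: v * 2)],
        [Fragment(2, "b", lambda v: v + 5), Fragment(2, "a", lambda v: v - 3)],
        [Fragment(3, "a", lambda v: v * 3)],
    ]
    store = {"a": 0, "b": 0}
    queues = plan(batch, num_planners=2, num_executors=2)
    execute(queues, store, num_executors=2)
    return store


result = demo()
print(result)  # {'a': 51, 'b': 6}, the same as running the batch serially
```

Because the queue contents and their order are fixed during planning, the final state matches a serial execution of the batch regardless of how the executor threads are scheduled.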

Third, this paradigm employs a Priority-based Queue-oriented Representation of the workload, which provides a unified and extensible framework. It seamlessly supports various execution models (speculative or conservative) and isolation levels (serializable or read-committed), adapting to different workload characteristics and consistency requirements.

The author implemented this paradigm within the ExpoDB platform and evaluated it using industry-standard benchmarks (TPC-C, YCSB). In a centralized, high-contention TPC-C setting (1 warehouse), the queue-oriented protocol achieved 3x the throughput of state-of-the-art non-deterministic protocols (e.g., Cicada, Silo). In a distributed setting using YCSB, it outperformed the deterministic Calvin protocol by 22x under low-contention workloads. These improvements stem from the efficient exploitation of intra-transaction parallelism, minimal runtime coordination, and the elimination of 2PC for committing distributed transactions in deterministic execution.

The paper concludes by highlighting the paradigm’s broader impact and future potential. Its flexible abstraction makes it applicable not only for enhancing deterministic databases but also as a foundational design principle for building Byzantine fault-tolerant distributed transaction systems and for improving the performance of blockchain ordering services, such as in Hyperledger Fabric. The work represents a significant step towards high-performance, predictable transaction processing in next-generation computing environments.
