Hybrid Residual Attention Network for Single Image Super Resolution

The extraction and proper utilization of convolution neural network (CNN) features have a significant impact on the performance of image super-resolution (SR). Although CNN features contain both the spatial and channel information, current deep techn…

Authors: Abdul Muqeet, Md Tauhid Bin Iqbal, Sung-Ho Bae

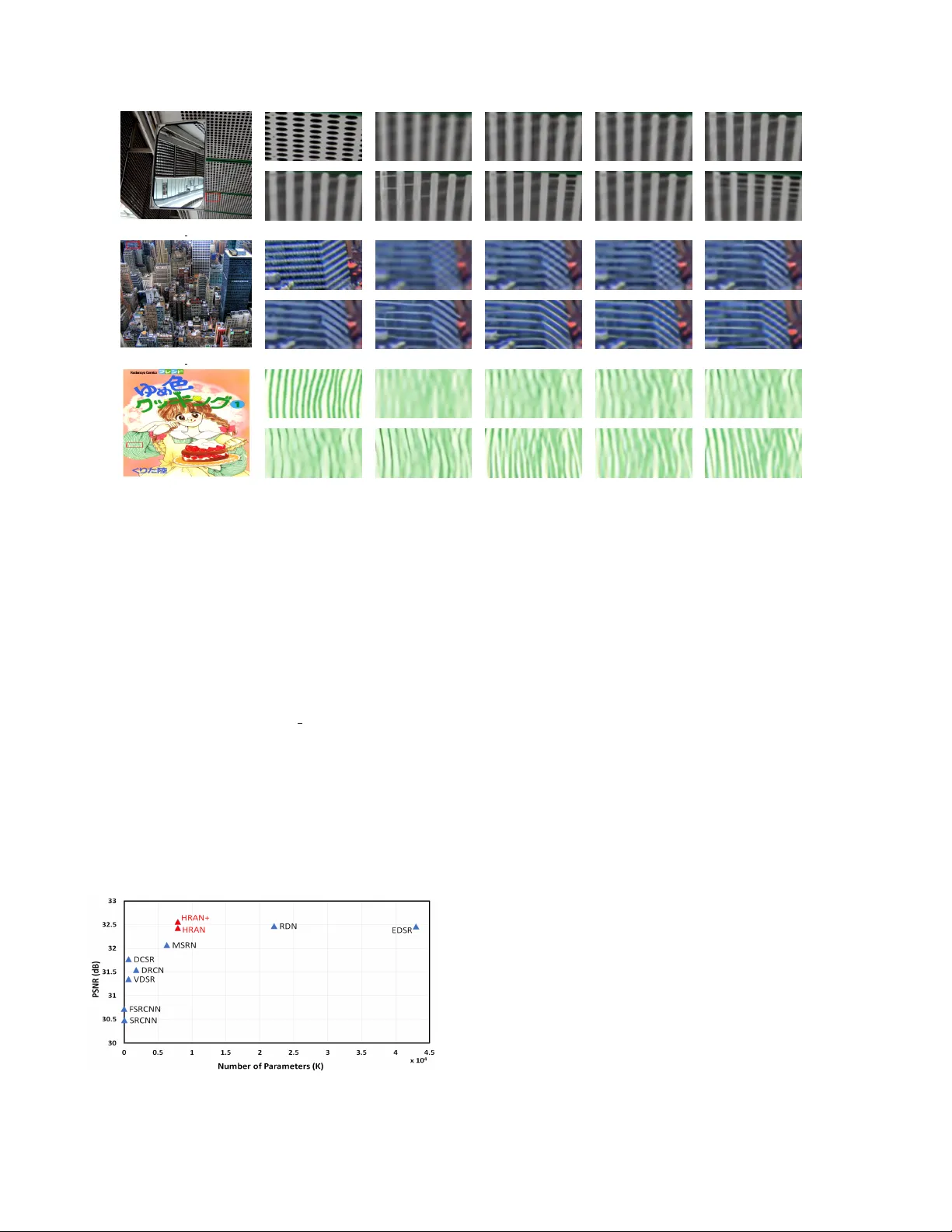

Hybrid Residual Attention Network f or Single Image Super Resolution Abdul Muqeet K yung Hee Univ ersity South K orea amuqeet@khu.ac.kr Md T auhid Bin Iqbal K yung Hee Univ ersity South K orea tauhidiq@khu.ac.kr Sung-Ho Bae K yung Hee Univ ersity South K orea shbae@khu.ac.kr Abstract The extr action and pr oper utilization of con volution neu- ral network (CNN) featur es have a significant impact on the performance of image super -r esolution (SR). Although CNN featur es contain both the spatial and channel infor - mation, curr ent deep techniques on SR often suffer to max- imize performance due to using either the spatial or chan- nel information. Moreo ver , the y inte grate such information within a deep or wide network rather than exploiting all the available features, eventually r esulting in high compu- tational complexity . T o addr ess these issues, we present a binarized featur e fusion (BFF) structur e that utilizes the ex- tracted features fr om r esidual gr oups (RG) in an effective way . Each residual group (RG) consists of multiple hy- brid residual attention blocks (HRAB) that effectively inte- grates the multiscale featur e e xtraction module and chan- nel attention mechanism in a single bloc k. Furthermor e, we use dilated con volutions with differ ent dilation factors to ex- tract multiscale features. W e also propose to adopt global, short and long skip connections and r esidual gr oups (RG) structur e to ease the flow of information without losing im- portant features details. In the paper , we call this overall network ar chitectur e as hybrid residual attention network (HRAN). In the experiment, we have observed the efficacy of our method against the state-of-the-art methods for both the quantitative and qualitative comparisons. 1. Introduction In this paper , we address the Single Image Super Reso- lution (SISR) problem, where the objecti ve is to reconstruct the accurate high-resolution (HR) image from a single lo w- resolution (LR) image. It is kno wn as an ill-posed problem, since there are multiple solutions available for mapping an y LR image to HR images. This problem is intensified when the up-sampling factor becomes lar ger . Because HR images preserve much richer information than LR images, SISR techniques are popular in many practical applications, such as surveillance [ 43 ], F ace Hallucination [ 35 ], Hyperspectral imaging [ 14 ], medical imaging [ 26 ] etc. Numerous deep learning based methods have been pro- posed in recent years to address the SISR problem. Among them, SRCNN [ 3 ] is considered as the first attempt to come up with a deep-learning based solution with its three con- volution layers. SRCNN outperformed the existing SISR approaches that typically used either multiple images with different scaling factors and/or handcrafted features. Later , Kim et al. [ 4 ] proposed an architecture named VDSR that extended the depth of CNN up to twenty layers while adding a global residual connection within the architecture. DRCN [ 11 ] also increased the depth of network through a recursiv e supervision and skip connection, and improv ed the performance. Ho wever , due to increasing depth of the networks, vanishing gradient resisted the network to be con- ver ged [ 7 ]. In the image classification domain, to solve the aforementioned problem, He et al. [ 7 ] proposed a residual block by which a network over 1000 layers was successfully trained. Inspired by its very deep architecture with residual blocks, EDSR [ 17 ] proposed much wider and deeper net- works for the SISR problem using residual blocks, called EDSR and MDSR [ 17 ], respectiv ely . V ery recently , Zhang et al. [ 40 ] proposed RCAN that utilizes a channel attention block to exploit the inter- dependencies across the feature channels. Moreover , Li et al. [ 16 ] proposed MSRN that improved the reconstruction performance by exploiting the information of spatial fea- tures rather than increasing the depth of CNNs. MSRN combines the features extracted from different con volu- tion filter sizes and concatenates the outputs of all resid- ual blocks through a hierarchical feature fusion (HFF) tech- nique, utilizing the information of the intermediate fea- ture maps. By doing so, MSRN achie ved comparable per- formance against EDSR [ 17 ] although having a 7-times smaller model size. In [ 42 ], Zhang et al. proposed DCSR in which the y proposed a mixed con volution block that com- bines dilated con volution layers and conv entional conv olu- tion layers to attain lar ger receptive field sizes. Nonethe- less, most of these CNN-based methods focused either on increasing the number of layers [ 10 , 11 , 17 , 40 ] or on ex- tending the width and height in a layer of CNN to achieve higher performance [ 16 ]. In this way , the y put less fo- cus on e xploiting the by-product CNN features, e.g ., spatial and channel information, simultaneously , and thus suf fer to maximize the performance at times. Moreov er, the strong correlations between the input LR and output HR images [ 16 ] lead us to making an assumption 1 that, apart from the high-lev el features, the both low-le vel and mid-lev el features also play vital roles for reconstruct- ing an super-resolution (SR) image. Therefore, we argue that, they should be treated precisely in this paper . In the pre vious work, dense connections were used [ 32 ], which added e very feature to subsequent features with residual connections. As a variant of dense connections, hybrid feature fusion (HFF) [ 32 , 41 , 16 ] was proposed to remov e the tri vial residual connections and to directly con- catenate all the output features from the residual blocks for the SISR problem. Howe ver , this direct feature concatena- tion prohibit the features from smooth feature transforma- tion from low to high lev els, resulting in resulting in im- proper utilization of various low-le vel and mid-lev el fea- tures. This may introduce redundanc y in feature utilization, thus increasing the cost of computation comple xity . In our ablation study in Section 4.1, this problem will be verified. T o solve this problem, in this paper , we propose a bina- rized feature fusion (BFF) scheme that combines adjacent feature maps with 1 × 1 con volutions which are repeatedly performed until remaining a single feature map. This allows all the features extracted from the CNN to be integrated smoothly , thus fully utilizing various features with differ- ent le vels. Moreover , to efficiently extract the features, un- like previous work that used main residual blocks, we pro- pose to use residual groups (RG) that constructs with the proposed hybrid residual attention block (HRAB). Our pro- posed HRAB extracts both the spatial and channel informa- tion with the notion that the both information is important in the reconstruction of high quality SR images and should be extracted simultaneously in a single module. Moreov er, compared to MSRN [ 16 ] that concatenates the conv entional conv olution layers with different kernel sizes to enlarge recepti ve field sizes, proposed method con- catenates dilated con volution layers with different dilation factors exploiting much larger recepti ve fields while signif- icantly decreasing the number of parameters, i.e ., conv o- lution weights. Furthermore, to ease the flo w of informa- tion, we introduce the short, long and global skip connec- tions. W e conduct comprehensi ve experiments to v erify the efficac y of our method, where we observe its superiority against other state-of-the-art methods. W e summarize the overall contrib utions of this work as, • W e propose a BFF to transfer all the images features smoothly by the end of the network. This structure allows the network to smoothly transform the features with dif ferent levels and generate an effecti ve feature map in the final reconstruction stage. • W e propose a hybrid residual attention block (HRAB) that considers both channel and spatial attention mech- anisms to exploit the channel and spatial dependencies. The spatial attention mechanism extracts the fine spa- tial features with larger receptiv e field sizes whereas the channel attention guides in selecting the most im- portant feature channels thus in the end, we ha ve more discriminativ e features. • Other than previous works, we employ BFF on resid- ual groups (RG) rather than residual blocks (HRAB) • For e xtracting the multiscale spatial features, we pro- pose to use a mixed dilated con v olution block with dif- ferent dilation f actors. Compared to the pre vious work in [ 16 ] that used the large kernel sizes to secure lar ge receptiv e fields, our proposed method can achiev e a similar performance even with smaller kernel sizes. Moreov er, we propose to use the dilated conv olution in an effecti ve manner to av oid the gridding problem of the con ventional dilated con volution layers. • T o ease the transmission of information through out the network, we propose to adopt the global, short and long skip in our architecture. 2. Related work There are several CNN-based SISR methods that hav e been proposed in the recent past. Pre viously , in the pre- processing step, researchers tend to use an interpolated LR image as an input that is interpolated to desired output im- age size which enables the network to ha ve the same size of input and output images. In contrast, due to the additional computation complexity of interpolation, current work em- phasizes to directly reconstruct HR image from LR image without interpolation. In 2014, Dong et al. [ 3 ] proposed SRCNN, the first CNN network architecture in the SR domain. It was a shallow 3 layers CNN architecture which achie ved the superior per- formance against the previous non-CNN methods. Later, He et al. [ 7 ] proposed a residual learning technique, and then Kim et al. [ 10 , 11 ] achiev ed remarkable performance with their proposed VDSR and DRCN methods. VDSR used the deep (20 layers) CNN and global residual connec- tion whereas DRCN [ 11 ] used a recursiv e block to increase the depth that does not require new parameters for repeti- tiv e blocks. T ai et al. [ 28 ] proposed the MemNet which had memory blocks that consist of recursiv e and gate units. All of these methods have used the interpolated LR image as input. Due to this preprocessing, these methods add addi- tional computation complexity along with artifacts, as also described in [ 25 ]. On the other end, the recent state-of-the-art methods di- rectly learn the mapping from input LR image. Dong et al. [ 4 ] proposed the FSRCNN, an improved version of SR- CNN, ha ving faster training and inference time. Ledig et al. [ 15 ] proposed the SRResNet, inspired from ResNet [ 7 ], to construct the deeper network. W ith the perceptual loss function in GAN, they proposed the SRGAN for photo- realistic SR. Lim et al. [ 17 ] removed the trivial modules (like batch normalization) of SRResNet, and proposed the EDSR (wider) and MDSR (deeper) that made a significant improv ement in SR problem. EDSR has a large number of filters (256) whereas MDSR has a small number of fil- ters though the depth of CNN network is increased to about 165 layers. It also won the first NTIRE SR challenge [ 30 ]. It has shown that deeper networks can achie ve remarkable Figure 1. The proposed netw ork architecture HRAN. The green-shaded area at top-left performs shallow feature e xtraction. the gray-shaded area at top-right indicates the internal structure of RG. The proposed BFF smoothly integrates features from low to high lev el RG blocks, and the output of BFF is element-wise summed with the shallow features and is fed into the final reconstruction stage (the orange-shaded area) to produce an HR image. The left-bottom block shows the specific descriptions. performance. Consequently , Zhang et al. [ 40 ] proposed a very deep network for SR. T o the extent of our knowledge, it has the largest depth in the SR domain. RCAN [ 40 ] has shown that only stacking the layers cannot improve the per - formance. It proposed to use the channel attention (CA) [ 8 ] mechanism to neglect the low-frequency information while selecting the valuable high-frequency feature maps. T o in- crease the depth of the network, it proposed the residual in residual (RIR) structure. Nev ertheless, RCAN [ 40 ] network is v ery deep and makes it difficult to use it in real-life appli- cations due to higher inference time. In contrast, multiscale feature extraction technique, which is less explored in SISR, has sho wn significant per- formance in object detection, [ 18 ] image segmentation, [ 24 ] and model compression [ 2 ] to achieve good tradeoffs be- tween speed and accuracy . Li et al. proposed a multi- scale residual network (MSRN) [ 16 ] having just 8 residual blocks. It used multipath con volution layers with different kernel sizes (3 × 3 and 5 × 5) to e xtract the multiscale spatial features. Furthermore, it proposed to use the hierarchical feature fusion (HFF) architecture to utilize the intermediate features. The intuition behind HFF architecture is to trans- fer the middle features at the end of the network since an increase in the depth of the network may cause the features to vanish in between the network. HFF shows comparable performance to EDSR, nevertheless its accuracy is limited. In addition, as the depth or width of a network increases, HFF also increases the computation complexity . Therefore, we need an ef ficient multiscale superresolu- tion CNN which could fully utilize the feature information as well as channel information. Considering it, we propose a hybrid residual attention network (HRAN) which com- bines the multiscale feature extraction along with the chan- nel attention [ 8 ] mechanism. In this paper , we refer the multiscale feature extraction as spatial attention. Thus, the combination of the channel and spatial attention is called hybrid attention. W e discuss the details of HRAN in the next section. 3. Hybrid Residual Attention Network 3.1. Network architectur e The proposed HRAN architecture is shown in Figure 1 . The HRAN can be decomposed into two parts: feature ex- traction and reconstruction. The feature extraction is di- vided into two parts: shallo w feature extraction and deep feature extraction. The deep feature extraction step further includes residual groups (RG) with binarized feature fu- sion (BFF) structure. Whereas, RG contains a sequence of hybrid residual attention blocks (HRAB) follo wed by 3 × 3 con volution. W e represent the input and output of HRAN as I LR and I S R respectiv ely . W e aim to reconstruct the ac- curate HR image I H R directly from LR image I LR . In the shallo w feature e xtraction, we use two con volution layers to extract the features from input I LR image. F 0 = H S F 1 ( I LR ) , (1) Here H S F 1 ( · ) represents the con volution operation. F 0 is also used for global residual learning to preserve the input features. As mentioned abov e, we pass the F 0 for further feature extraction F 1 = H S F 2 ( F 0 ) , (2) where H S F 2 ( · ) represents the con volution operation. F 1 is the output of shallow feature e xtraction step and will be used as input for the deep feature extraction. F DF = H DF ( F 1 ) + F 0 , (3) Here H DF ( · ) represents the deep feature e xtraction func- tion and F 0 shows global residual connection like VDSR [ 10 ] at the end of deep features. The deep features are se- quentially extracted through HRAB, RG and BFF . The de- tails are mentioned in later sections. I S R = H RE C ( F DF ) , (4) where H RE C denotes the reconstruction function. For the image reconstruction, pre viously researchers upsampled the input image to get the desired output dimensions. we recon- struct the I S R having similar dimensions as I H R through deep features of I LR . There are v arious techniques to serve as upsampling modules, such as Pix elShuffle layer [ 25 ], de- con volution layer [ 4 ], nearest-neighbor upsampling conv o- lution [ 5 ]. In this w ork, we use the MSRN [ 16 ] reconstruc- tion module that enables us to upscale to any upscale f actor with minor changes. W e can write the proposed HRAN function as I S R = H H RAN ( I LR ) , (5) For the optimization, numerous loss functions hav e been discussed for SISR. The mostly used loss functions are the MSE, L1, and L2 functions whereas perceptual and adver - sarial losses are also preferred. T o keep the netw ork simple and avoid the trivial training tricks, we prefer to optimize with L1 loss function. Hence, we can define the objective function of HRAN as : L (Θ) = 1 N N X i =1 H H RAN I i LR − I i H R 1 , (6) where Θ denotes the weights and bias of our network. 3.2. Binarized Featur e Fusion (BFF) structure The shallo w features lack the fine details for SISR. W e use deep networks to detect such features. Howe ver , in SISR, there is a strong correlation between I LR and I S R . It is required to fully utilize the features of I LR and transmit them to the end of network, but due to the deep network, features start gradually vanishing during the transmission. The possible solution is to use a residual connection, how- ev er , it induces the redundant information [ 16 ]. MSRN [ 16 ] uses the hierarchical feature fusion structure (HFF) to transmit the information from all the feature maps towards the end of the network. The concatenation of e very feature generates a lot of redundant information and also increase the memory computation. In contrast, we propose a binarized feature fusion (BFF) structure that as shown in Figure 1 . The notable difference in this architecture is the use of residual groups (RG) instead of Multiscale residual block (MSRB)[ 16 ]. It is called a residual group due to residual connections within itself i.e ., RGs are connected through LSC whereas its sub-module HRABs are connected through SSC. The use of RGs does not only help to increase the depth but also reduce the mem- ory ov erhead when concatenating the features map. Another difference in this architecture is the feature ex- traction from adjacent RG blocks. First, we concatenate the adjacent RG blocks and then, we remove the redundant information from adjacent blocks using 1 × 1 con volution. W e repeat this procedure for all RG blocks and the resultant blocks produced through this mechanism until all the blocks integrate into single RG block, which is con volved by 1 × 1 to produce the output features. In the end, we element-wise add this output to the shallo w features’ output ( F 0 ). W e re- fer this element-wise summation as global skip connection in Figure 1 . F i +1 = H RG ( F i ) , (7) where H RG ( · ) represents the features extracted through single RG block whereas F i shows the i th extracted fea- ture map. W e explain the details of RG in the next section. When we extract all the features through RG blocks, then we can utilize these RG blocks with HFF architecture. M j = H 1 × 1 [ F i +1 , F i +2 ] , (8) M j +1 = H 1 × 1 [ F i +3 , F i +4 ] , (9) Here, the output of two adjacent RG blocks are channel- wise concatenated and then passed into 1x1 conv olution layer to av oid the redundant information from them. Thus, the four RG blocks produce two more blocks which are then processed in a similar manner such that F i +1 = M j and F i +2 = M j +1 . Thus, in the ne xt step, M j and M j +1 will act as two RG blocks. W e repeat this procedure until we in- tegrate all the RGs and resultant blocks into a single output which is further used in the input of reconstruction step. 3.3. Residual Groups (RG) It is shown in [ 17 ] that the stacked residual blocks en- hance the performances of SR but after some extent, cause crucial information loss during transmission and also makes the training slower , af fecting the performance gain in the SISR [ 40 ]. Thus, rather than increasing the depth, we pro- pose to use the residual groups (RG) (see shaded area of Figure 1 ) in our architecture to detect deep features. The RG consists of multiple HRAB that are follo wed by 1 × 1 con volution. W e find that adding many HRAB does de- grade the SR performance. Thus, to preserve the informa- tion, we apply element-wise summation between the input of RG and output of 1 × 1 con volution and refer it as long skip connection (LSC). The RG enables the network to remember the informa- tion through LSC whereas to detect deep features, it uses SSC within its modules, in this case, HRAB. Hence, the flow of information in RG is smoothly carried out through LSC and SSC. The details of the HRAB are mentioned in the next section. Thus, we express the single RG block as H RG = W RG ∗ H n ( H n − 1 ( · · · H 1 ( F 1 ) · · · )) , (10) Figure 2. Proposed multi-path hybrid residual attention block (HRAB). T op path represents Spatial Attention (SA) that contains dilated con volutions with different dilation factors. Bottom path represents Channel Attention (CA) mechanism. Notations about different com- ponents are giv en in the right. Here H i represents the ‘B’ hybrid residual attention blocks (HRAB), which takes input features from pre vious RG block ( F i ) and produces the output ( F i +1 ). After stack- ing the ‘B’ HRAB modules, we apply 3 × 3 con volutions with weights W RG . After applying LSC, the equation 10 can be rewritten as H RG = W RG ∗ H n ( H n − 1 ( · · · H 1 ( F 1 ) · · · )) + F 1 , (11) The above equation represents the first RG block because it takes the shallo w features F 1 as input. Since, we have multiple RG blocks to extract the deep features, hence, the abov e equation can be generally written as H i RG = W i RG ∗ H i − 1 RG + H i − 1 RG (12) Here i = 1 , 2 , · · · , R . W e hav e ‘R’ RG blocks and each RG block uses the output of the previous block as its input except the first RG block that uses the shallow features F 1 as input. Thus, for the first RG block, H 0 RG = F 1 . 3.4. Hybrid Residual Attention Block (HRAB) In this section, we propose a multiscale multipath resid- ual attention block for the feature extraction, called hy- brid residual attention block (HRAB) (see Figure 2 ). Our HRAB integrates both the spatial attention (SA) and chan- nel attention (CA) mechanisms, thus, it has two separate paths for the SA and CA. H H RAB ( F i +1 ) = H S A ( F i ) · H C A ( F i ) (13) where H S A and H C A denote the functions of spatial at- tention (SA) and channel attention (CA) respectiv ely . Here ‘ · ’ represents the element-wise multiplication between SA and CA functions. Unlike RCAN [ 40 ], we propose to use element-wise multiplication between the outputs of SA and CA to extract the most informativ e spatial features. Like RCAN [ 40 ], we also add the short skip connections (SSC) to ease the flow of information through the netw ork. 3.4.1 Spatial Attention (SA) MSRN [ 16 ] pro ves that multiscale features improve the per- formance with lesser residual blocks. In MSRN [ 16 ], au- thors use the multiple CNN filters with increasing kernel sizes ( 3 × 3 and 5 × 5 ) to e xtract multiscale features. The intuition behind the larger kernel size is to take adv antage of large recepti ve fields. But, the large k ernel size causes to increase the memory computation. Thus, we propose to use the dilated con volution layers with dif ferent dilation factors which can ha ve the same receptiv e fields as large kernel size and memory consumption is similar to smaller kernel size. But, only stacking the dilated con volution layers produce gridding ef fect [ 36 ]. T o a void this problem, as illustrated in Figure 2 , we propose to use the element-wise sum operation between the dilated con volutions with dif ferent factors be- fore the concatenation operation. Suppose F i − 1 is the input of SA then the output will be F i . S 1 = Leak y ReLU ( H DC 1 ( F i )) (14) S 2 = Leak y ReLU ( H DC 2 ( F i ) + S 1 ) (15) S = S 1 , S 2 (16) S 1 = Leak y ReLU ( H DC 1 ( S )) (17) S 2 = Leak y ReLU ( H DC 2 ( S ) + S 1 ) (18) H S A ( F i +1 ) = H 1 × 1 ∗ S 1 , S 2 (19) where H DC 1 and H DC 2 denotes the con volution layers with dilation factors 1 and 2 respectiv ely . First, we con- catenate the output of two con volution layers to increase the channel size and at the end, we use 1 × 1 con volution to reduce the channels. Thus, our input and output hav e the same number of channels. Our SA architecture inspires from [ 6 ] which has sho wn that upsampling and downsam- pling module within the architecture improv es the accuracy in SR. For the activ ation unit, by following ref [ 12 , 29 ], we prefer the LeakyReLU over ReLU activ ation whereas we use the linear bottleneck layer as suggested in [ 23 ]. 3.4.2 Channel Attention (CA) The channel attention (CA) mechanism achiev es a lot of success in image classification [ 8 ]. In SISR, RCAN [ 40 ] introduces the CA layer in the network. CA plays an im- portant role in exploiting the interchannel dependencies be- cause some of them hav e trivial information while others hav e the most v aluable information. Therefore, we decide to use channel-wise features and incorporate the CA mech- anism with SA module in our HRAB. Thus, by following [ 8 , 40 ], we use the global pooling a verage to consider the channel-wise global information. W e also experiment with global pooling v ariance as we thought global v ariance could extract more high-frequencies, in contrast, we get poor re- sults as compare with global pooling av erage. Suppose if we have C channels in the feature map [ x 1 , x 2 , · · · , x C ] then we can express each ‘c’ feature map as a single value. z c ( x c ) = 1 H × W H X i =1 W X j =1 x c ( i, j ) , (20) here x c is the spatial position ( i, j ) of the feature maps. T o extract the channel-wise dependencies, we use the similar sigmoid gating mechanism as [ 8 , 40 ]. Alike SA, here, we replace the ReLU with LeakyReLU acti vation. H C A ( F i +1 ) = f ( W U LR ( W D z )) , (21) Here LR ( · ) and f ( · ) represent the Leak yReLU and sig- moid gating function respecti vely whereas W D and W U re- spectiv ely denote the weights of downscaling and upscaling con volutions. It is noted that it is channel-wise do wnscaling and upscaling with reduction ratio r . T able 1. In vestigation of HRAB module (with and without CA). W e examine the best PSNR (dB) on Urban100 (2) with same train- ing settings. Modules SSIM / PSNR Spatial attention without channel attention (SA) 32.77 / 0.9343 Spatial attention with channel attention (Hybrid Attention) 32.95 / 0.9357 3.5. Implementation details For training the HRAN network, we employ 4 RG blocks in our main architecture and in each RG block, there are 8 HRAB modules which are follo wed by 3 × 3 conv olution. For the dilated conv olution layers, we use the 3 × 3 con vo- lution with dilation factor 1 and 2. W e use C = 64 filters in all the layers except the final layer which has 3 filters to pro- duce a color image though our network can work for both gray and color images. For the channel-downscaling in CA mechanism, we set a reduction factor r = 4 . T able 2. BFF vs HFF structures. W e examine the best PSNR (dB) on Urban100 (2 × ) with same training settings. Method PSNR / SSIM MSRN with HFF [15] 32.22 / 0.9326 MSRN [15] with proposed BFF 32.44 / 0.9315 Our HRAN with HFF 32.69 / 0.9334 Our HRAN with proposed BFF 32.95 / 0.9375 4. Experimental Results In this section, we explain the e xperimental analysis of our method. For this purpose, we use se veral public datasets that are considered as the benchmark in SISR. W e provide the results of both the quantitativ e and qualitative e xperi- ments for the comparison of our method with sev eral state- of-the-art networks. For the datasets, we follow the recent trends [ 17 , 39 , 41 , 16 , 30 ] and use DIV2K dataset as the training set, since it contains the high-resolution images. For testing, we choose widely used standard datasets: Set5 [ 1 ], Set14[ 37 ], BDS100 [ 19 ], Urban100 [ 9 ] and Manga109 [ 20 ]. For the degradation, we use the Bicubic Interpolation (BI). W e ev aluate our results with peak signal-to-noise ratio (PSNR) and structural similarity (SSIM) [ 33 ] on luminance channel i.e ., Y of transformed YCbCr space and we remov e P-pixels from each border (P refers to upscaling f actor). W e provide the results for scaling factor × 2 , × 3 , × 4 , and × 8 . For the training settings, we follow the settings in [ 16 ]. W e extract 16 LR patches randomly in each training batch with the size of 64 × 64. W e use AD AM optimizer with learning rate lr = 10 − 4 which decreases to half after ev- ery 2 × 10 5 iterations of back-propagation. W e use Py- T orch framework to implement our models with GeForce R TX 2080 T i GPU. 4.1. Ablation studies W e conduct a series of ablation studies to sho w the ef- fectiv eness of our model. In the first experiment, we train our model with and without CA and compare their perfor- mance with our HRAB module. For the training, we use Urban100 dataset[ 9 ] as it consists of large dataset. The re- sults are sho wn in T able 1 . W e observe that our SA mod- ule alone achie ves 32.77 dB PSNR. W e also experimented on CA module alone though results were unsatisfactory . Whereas, when we combine SA with CA, i.e ., our HRAB module, it achie ves the 32.95 db PSNR. This study sug- gests we need HRAB module containing both the spatial and channel attention for accurate SR results. W e also in- vestigate about our BFF structure using HRAB module and tested the both BFF and HFF on MSRN [ 16 ] and our pro- posed HRAN model to verify the ef fectiveness of BFF on both models. It is evident from the results that BFF struc- ture improves the PSNR of MSRN [ 16 ] from 32.22 dB to 32.44 dB with BFF . Moreov er, proposed HRAN and BFF together significantly increase the accuracy which sho w the T able 3. Quantitati ve Comparisons of state-of-the-art methods for BI de gradation model. Best, 2nd best and 3rd best results are respectively shown with Magenta , Blue , and Green colors. Method Scale Set5 Set14 B100 Urban100 Manga109 PSNR SSIM PSNR SSIM PSNR SSIM PSNR SSIM PSNR SSIM Bicubic × 2 33.66 0.9299 30.24 0.8688 29.56 0.8431 26.88 0.8403 30.80 0.9339 SRCNN [ 3 ] × 2 36.66 0.9542 32.45 0.9067 31.36 0.8879 29.50 0.8946 35.60 0.9663 FSRCNN [ 4 ] × 2 37.05 0.9560 32.66 0.9090 31.53 0.8920 29.88 0.9020 36.67 0.9710 VDSR [ 10 ] × 2 37.53 0.9590 33.05 0.9130 31.90 0.8960 30.77 0.9140 37.22 0.9750 LapSRN [ 12 ] × 2 37.52 0.9591 33.08 0.9130 31.08 0.8950 30.41 0.9101 37.27 0.9740 MemNet [ 28 ] × 2 37.78 0.9597 33.28 0.9142 32.08 0.8978 31.31 0.9195 37.72 0.9740 EDSR [ 17 ] × 2 38.11 0.9602 33.92 0.9195 32.32 0.9013 32.93 0.9351 -/- -/- SRMDNF [ 39 ] × 2 37.79 0.9601 33.32 0.9159 32.05 0.8985 31.33 0.9204 38.07 0.9761 RDN [ 41 ] × 2 38.24 0.9614 34.01 0.9212 32.34 0.9017 32.89 0.9353 39.18 0.9780 DCSR [ 42 ] × 2 37.54 0.9587 33.14 0.9141 31.90 0.8959 30.76 0.9142 -/- -/- MSRN [ 16 ] × 2 38.08 0.9605 33.74 0.9170 32.23 0.9013 32.22 0.9326 38.82 0.9868 HRAN (ours) × 2 38.21 0.9613 33.85 0.9200 32.34 0.9016 32.95 0.9357 39.12 0.9780 HRAN+ (ours) × 2 38.25 0.9614 33.99 0.9211 32.38 0.9020 33.12 0.9370 39.29 0.9785 Bicubic × 3 30.39 0.8682 27.55 0.7742 27.21 0.7385 24.46 0.7349 26.95 0.8556 SRCNN [ 3 ] × 3 32.75 0.9090 29.30 0.8215 28.41 0.7863 26.24 0.7989 30.48 0.9117 FSRCNN [ 4 ] × 3 33.18 0.9140 29.37 0.8240 28.53 0.7910 26.43 0.8080 31.10 0.9210 VDSR [ 10 ] × 3 33.67 0.9210 29.78 0.8320 28.83 0.7990 27.14 0.8290 32.01 0.9340 LapSRN [ 12 ] × 3 33.82 0.9227 29.87 0.8320 28.82 0.7980 27.07 0.8280 32.21 0.9350 MemNet [ 28 ] × 3 34.09 0.9248 30.00 0.8350 28.96 0.8001 27.56 0.8376 32.51 0.9369 EDSR [ 17 ] × 3 34.65 0.9280 30.52 0.8462 29.25 0.8093 28.80 0.8653 -/- -/- SRMDNF [ 39 ] × 3 34.12 0.9254 30.04 0.8382 28.97 0.8025 27.57 0.8398 33.00 0.9403 RDN [ 41 ] × 3 34.71 0.9296 30.57 0.8468 29.26 0.8093 28.80 0.8653 34.13 0.9484 DCSR [ 42 ] × 3 33.94 0.9234 30.28 0.8354 28.86 0.7985 27.24 0.8308 -/- -/- MSRN [ 16 ] × 3 34.38 0.9262 30.34 0.8395 29.08 0.8041 28.08 0.8554 33.44 0.9427 HRAN (ours) × 3 34.69 0.9292 30.54 0.8463 29.25 0.8089 28.76 0.8645 34.08 0.9479 HRAN+ (ours) × 3 34.75 0.9298 30.60 0.8474 29.29 0.8098 28.96 0.8670 34.36 0.9492 Bicubic × 4 28.42 0.8104 26.00 0.7027 25.96 0.6675 23.14 0.6577 24.89 0.7866 SRCNN [ 3 ] × 4 30.48 0.8628 27.50 0.7513 26.90 0.7101 24.52 0.7221 27.58 0.8555 FSRCNN [ 4 ] × 4 30.72 0.8660 27.61 0.7550 26.98 0.7150 24.62 0.7280 27.90 0.8610 VDSR [ 10 ] × 4 31.35 0.8830 28.02 0.7680 27.29 0.0726 25.18 0.7540 28.83 0.8870 LapSRN [ 12 ] × 4 31.54 0.8850 28.19 0.7720 27.32 0.7270 25.21 0.7560 29.09 0.8900 MemNet [ 28 ] × 4 31.74 0.8893 28.26 0.7723 27.40 0.7281 25.50 0.7630 29.42 0.8942 EDSR [ 17 ] × 4 32.46 0.8968 28.80 0.7876 27.71 0.7420 26.64 0.8033 -/- -/- SRMDNF [ 39 ] × 4 31.96 0.8925 28.35 0.7787 27.49 0.7337 25.68 0.7731 30.09 0.9024 RDN [ 41 ] × 4 32.47 0.8990 28.81 0.7871 27.72 0.7419 26.61 0.8028 31.00 0.9151 DCSR [ 42 ] × 4 31.58 0.8870 28.21 0.7715 27.32 0.7264 27.24 0.8308 -/- -/- MSRN [ 16 ] × 4 32.07 0.8903 28.60 0.775 27.52 0.7273 26.04 0.7896 30.17 0.9034 HRAN (ours) × 4 32.43 0.8976 28.76 0.7863 27.70 0.7407 26.55 0.8006 30.94 0.9143 HRAN+ (ours) × 4 32.56 0.8991 28.86 0.7880 27.76 0.7420 26.74 0.8046 31.26 0.9172 Bicubic × 8 24.40 0.6580 23.10 0.5660 23.67 0.5480 20.74 0.5160 21.47 0.6500 SRCNN [ 3 ] × 8 25.33 0.6900 23.76 0.5910 24.13 0.5660 21.29 0.5440 22.46 0.6950 FSRCNN [ 4 ] × 8 20.13 0.5520 19.75 0.4820 24.21 0.5680 21.32 0.5380 22.39 0.6730 SCN [ 34 ] × 8 25.59 0.7071 24.02 0.6028 24.30 0.5698 21.52 0.5571 22.68 0.6963 VDSR [ 10 ] × 8 25.93 0.7240 24.26 0.6140 24.49 0.5830 21.70 0.5710 23.16 0.7250 LapSRN [ 12 ] × 8 26.15 0.7380 24.35 0.6200 24.54 0.5860 21.81 0.5810 23.39 0.7350 MemNet [ 28 ] × 8 26.16 0.7414 24.38 0.6199 24.58 0.5842 21.89 0.5825 23.56 0.7387 EDSR [ 17 ] × 8 26.96 0.7762 24.91 0.6420 24.81 0.5985 22.51 0.6221 -/- -/- MSRN [ 16 ] × 8 26.59 0.7254 24.88 0.5961 24.70 0.5410 22.37 0.5977 24.28 0.7517 HRAN (ours) × 8 27.11 0.7798 25.01 0.6419 24.83 0.5983 22.57 0.6223 24.64 0.7817 HRAN+ (ours) × 8 27.18 0.7828 25.12 0.6450 24.89 0.6001 22.73 0.6280 24.87 0.7878 effecti veness of our BFF structure. 4.2. Comparison with State-of-the-art Methods W e compare our method with 10 state-of-the-art SISR methods: SRCNN [ 3 ], FSRCNN[ 4 ], VDSR [ 10 ], LapSRN [ 12 ], MEMNet [ 28 ], EDSR [ 17 ] , SRMDNF [ 39 ], RDN [ 41 ], DCSR [ 42 ] and MSRN [ 16 ]. By following [ 17 , 31 ], , we also use self-ensemble strategy to improv e the accuracy of our model at test time. W e show our quantitativ e e valuation results in T able 3 for the scale factor of × 2 , × 3 , × 4 , and × 8 . It is e vident from the results that our method outperforms most of the previ- ous methods. Our self-ensemble model achie ves the high- est PSNR amongst all the models. Although RDN [ 41 ] has shown slightly better performance, from Figure 4 , we ob- serve that RDN [ 41 ] has about 22M parameters, in contrast, our HRAN model has only 7.94 M parameters though our model shows comparable performance. Instead of increas- ing the depth and dense connections, our HRAN model with HRAB and BFF detect the deep features without increas- Urban100 ( 4 × ): img 004 HR Bicubic SRCNN [ 3 ] FSRCNN [ 4 ] VDSR [ 10 ] LapSRN [ 12 ] MemNet [ 28 ] EDSR [ 17 ] SRMDNF [ 39 ] HRAN Urban100 ( 4 × ): img 073 HR Bicubic SRCNN [ 3 ] FSRCNN [ 4 ] VDSR [ 10 ] LapSRN [ 12 ] MemNet [ 28 ] EDSR [ 17 ] SRMDNF [ 39 ] HRAN Manga109 ( 4 × ): Y umeiroCooking HR Bicubic SRCNN [ 3 ] FSRCNN [ 4 ] VDSR [ 10 ] LapSRN [ 12 ] MemNet [ 28 ] EDSR [ 17 ] SRMDNF [ 39 ] HRAN Figure 3. Qualitative results for 4 × SR with BI model on Urban100 and Manga109 datasets. ing the depth of the network. Hence, this observ ation in- dicates that we can improve the network performance with HRAB and RG along with BFF without increasing the net- work depth. This also suggests that our netw ork can further improv e the accuracy with more HRAB’ s and RG’ s, though, we aim to achieve the greater accuracy by considering the memory computations. Moreov er, we present the qualitativ e results in Figure 3 . The results of other methods are derived from [ 40 ]. In Fig- ure 3 , it can be observed from ‘img 004’ image our HRAN method recov ers the lattices in more details, meanwhile, other methods experience the blurring artifacts. Similar be- havior is also observ ed in ‘Y umeiro-Cooking’ image where other methods produce blurry lines and our HRAN pro- duces the lines similar to HR image. It sho ws that our model reconstructs the fine details in output SR image through e x- tracted deep features with RGs which are then efficiently utilized by BFF . Figure 4. Comparison of memory and performance. Results are ev aluated on Set5 ( × 4 ). 4.3. Model Complexity Analysis Since, we are tar geting the maximum accuracy with lim- ited memory computation, therefore our performance is best visible when we see the T able. 3 along with Figure. 4 . In Figure. 4 , we compare our model size and its performance on Set5 [ 1 ] ( × 4). As we can observe that our HRAN model has fewer parameters compared to RDN [ 41 ] and EDSR [ 17 ], it still achie ves the comparable performance whereas our HRAN+ outperforms the state-of-the-art methods. W e hav e also shown analysis on much larger scale ( × 8) in sup- plementary materials. These results demonstrate the effec- tiv e utilization of the features that result in performance gain in SISR. 5. Conclusions In this paper , we propose a h ybrid residual attention net- work (HRAN) to detect the most informativ e multiscale spatial features for the accurate SR image. Proposed hy- brid residual attention block (HRAB) module fully utilize the high-frequency information from input features with a combination of the spatial attention (SA) and channel atten- tion (CA). In addition, the binarized feature fusion (BFF) structure allo ws us to smoothly transmit all the features at the end of the network for reconstruction. Furthermore, we propose to adopt the global, short and long skip connection and residual groups (RG) to ease the flow of information. Our comprehensiv e experiments sho w the efficacy of the proposed model. References [1] M. Bevilacqua, A. Roumy , C. Guillemot, and M. Alberi- Morel. Low-complexity single-image super-resolution based on nonnegati ve neighbor embedding. In British Machine V i- sion Confer ence (BMVC) , pages 1–10, 2012. 6 , 8 [2] C.-F . Chen, Q. Fan, N. Mallinar , T . Sercu, and R. Feris. Big-little net: An efficient multi-scale feature representa- tion for visual and speech recognition. arXiv pr eprint arXiv:1807.03848 , 2018. 3 [3] C. Dong, C. C. Loy , K. He, and X. T ang. Image super-resolution using deep con volutional networks. IEEE transactions on pattern analysis and machine intelligence , 38(2):295–307, 2016. 1 , 2 , 7 , 8 , 11 , 12 [4] C. Dong, C. C. Loy , and X. T ang. Accelerating the super - resolution con volutional neural network. In Eur opean con- fer ence on computer vision , pages 391–407. Springer , 2016. 1 , 2 , 4 , 7 , 8 , 11 , 12 [5] V . Dumoulin, J. Shlens, and M. Kudlur . A learned represen- tation for artistic style. Pr oc. of ICLR , 2, 2017. 4 [6] M. Haris, G. Shakhnarovich, and N. Ukita. Deep back- projection networks for super-resolution. In IEEE Confer - ence on Computer V ision and P attern Recognition (CVPR) , 2018. 5 [7] K. He, X. Zhang, S. Ren, and J. Sun. Deep residual learn- ing for image recognition. In Pr oceedings of the IEEE con- fer ence on computer vision and pattern reco gnition , pages 770–778, 2016. 1 , 2 [8] J. Hu, L. Shen, and G. Sun. Squeeze-and-excitation net- works. In Proceedings of the IEEE conference on computer vision and pattern r ecognition , pages 7132–7141, 2018. 3 , 6 [9] J.-B. Huang, A. Singh, and N. Ahuja. Single image super- resolution from transformed self-exemplars. In Pr oceedings of the IEEE Conference on Computer V ision and P attern Recognition , pages 5197–5206, 2015. 6 [10] J. Kim, J. K. Lee, and K. M. Lee. Accurate image super- resolution using very deep conv olutional networks. In The IEEE Conference on Computer V ision and P attern Recogni- tion (CVPR Oral) , June 2016. 1 , 2 , 4 , 7 , 8 , 11 , 12 [11] J. Kim, J. K. Lee, and K. M. Lee. Deeply-recursiv e con volu- tional network for image super-resolution. In The IEEE Con- fer ence on Computer V ision and P attern Recognition (CVPR Oral) , June 2016. 1 , 2 [12] W .-S. Lai, J.-B. Huang, N. Ahuja, and M.-H. Y ang. Deep laplacian pyramid networks for fast and accurate super- resolution. In IEEE Conferene on Computer V ision and P at- tern Recognition , 2017. 5 , 7 , 8 , 11 , 12 [13] W .-S. Lai, J.-B. Huang, N. Ahuja, and M.-H. Y ang. Fast and accurate image super-resolution with deep laplacian pyramid networks. , 2017. 11 , 12 [14] C. Lanaras, E. Baltsavias, and K. Schindler . Hyperspectral super-resolution by coupled spectral unmixing. In Proceed- ings of the IEEE International Confer ence on Computer V i- sion , pages 3586–3594, 2015. 1 [15] C. Ledig, L. Theis, F . Husz ´ ar , J. Caballero, A. Cunningham, A. Acosta, A. Aitken, A. T ejani, J. T otz, Z. W ang, et al. Photo-realistic single image super-resolution using a gener- ativ e adversarial network. In Pr oceedings of the IEEE con- fer ence on computer vision and pattern reco gnition , pages 4681–4690, 2017. 2 [16] J. Li, F . Fang, K. Mei, and G. Zhang. Multi-scale residual network for image super-resolution. In The Eur opean Con- fer ence on Computer V ision (ECCV) , September 2018. 1 , 2 , 3 , 4 , 5 , 6 , 7 [17] B. Lim, S. Son, H. Kim, S. Nah, and K. M. Lee. Enhanced deep residual networks for single image super-resolution. In The IEEE Conference on Computer V ision and P attern Recognition (CVPR) W orkshops , July 2017. 1 , 2 , 4 , 6 , 7 , 8 , 11 , 12 [18] T .-Y . Lin, P . Doll ´ ar , R. Girshick, K. He, B. Hariharan, and S. Belongie. Feature pyramid networks for object detection. In Pr oceedings of the IEEE Confer ence on Computer V ision and P attern Recognition , pages 2117–2125, 2017. 3 [19] D. Martin, C. Fo wlkes, D. T al, and J. Malik. A database of human segmented natural images and its application to ev aluating segmentation algorithms and measuring ecologi- cal statistics. In null , page 416. IEEE, 2001. 6 [20] Y . Matsui, K. Ito, Y . Aramaki, A. Fujimoto, T . Ogawa, T . Y a- masaki, and K. Aizawa. Sketch-based manga retriev al us- ing manga109 dataset. Multimedia T ools and Applications , 76(20):21811–21838, 2017. 6 [21] T . Peleg and M. Elad. A statistical prediction model based on sparse representations for single image super-resolution. IEEE transactions on image pr ocessing , 23(6):2569–2582, 2014. 11 [22] M. S. Sajjadi, B. Scholkopf, and M. Hirsch. Enhancenet: Single image super-resolution through automated te xture synthesis. In Pr oceedings of the IEEE International Con- fer ence on Computer V ision , pages 4491–4500, 2017. 11 , 12 [23] M. Sandler, A. Howard, M. Zhu, A. Zhmoginov , and L.-C. Chen. Mobilenetv2: In verted residuals and linear bottle- necks. In Pr oceedings of the IEEE Confer ence on Computer V ision and P attern Recognition , pages 4510–4520, 2018. 6 [24] S. Seferbekov , V . Iglovikov , A. Buslaev , and A. Shvets. Feature pyramid network for multi-class land segmentation. In The IEEE Conference on Computer V ision and P attern Recognition (CVPR) W orkshops , 2018. 3 [25] W . Shi, J. Caballero, F . Husz ´ ar , J. T otz, A. P . Aitken, R. Bishop, D. Rueckert, and Z. W ang. Real-time single image and video super-resolution using an efficient sub- pixel con volutional neural network. In Pr oceedings of the IEEE confer ence on computer vision and pattern reco gni- tion , pages 1874–1883, 2016. 2 , 4 [26] W . Shi, J. Caballero, C. Ledig, X. Zhuang, W . Bai, K. Bha- tia, A. M. S. M. de Marvao, T . Dawes, D. ORegan, and D. Rueckert. Cardiac image super-resolution with global cor- respondence using multi-atlas patchmatch. In International Confer ence on Medical Image Computing and Computer- Assisted Intervention , pages 9–16. Springer , 2013. 1 [27] Y . T ai, J. Y ang, and X. Liu. Image super-resolution via deep recursiv e residual network. In Pr oceedings of the IEEE Con- fer ence on Computer V ision and P attern Recognition , 2017. 11 , 12 [28] Y . T ai, J. Y ang, X. Liu, and C. Xu. Memnet: A persistent memory network for image restoration. In Proceedings of International Confer ence on Computer V ision , 2017. 2 , 7 , 8 , 11 , 12 [29] W . T an, B. Y an, and B. Bare. Feature super-resolution: Make machine see more clearly . In Pr oceedings of the IEEE Con- fer ence on Computer V ision and P attern Recognition , pages 3994–4002, 2018. 5 [30] R. T imofte, E. Agustsson, L. V an Gool, M.-H. Y ang, and L. Zhang. Ntire 2017 challenge on single image super- resolution: Methods and results. In Pr oceedings of the IEEE Confer ence on Computer V ision and P attern Recognition W orkshops , pages 114–125, 2017. 2 , 6 [31] R. T imofte, R. Rothe, and L. V an Gool. Seven ways to im- prov e example-based single image super resolution. In The IEEE Conference on Computer V ision and P attern Recogni- tion (CVPR) , June 2016. 7 [32] T . T ong, G. Li, X. Liu, and Q. Gao. Image super-resolution using dense skip connections. In Pr oceedings of the IEEE International Conference on Computer V ision , pages 4799– 4807, 2017. 2 [33] Z. W ang, A. C. Bovik, H. R. Sheikh, E. P . Simoncelli, et al. Image quality assessment: from error visibility to struc- tural similarity . IEEE transactions on image pr ocessing , 13(4):600–612, 2004. 6 [34] Z. W ang, D. Liu, J. Y ang, W . Han, and T . Huang. Deep net- works for image super-resolution with sparse prior . In Pro- ceedings of the IEEE International Confer ence on Computer V ision , pages 370–378, 2015. 7 , 11 , 12 [35] J. Y ang, J. Wright, T . S. Huang, and Y . Ma. Image super- resolution via sparse representation. IEEE transactions on image pr ocessing , 19(11):2861–2873, 2010. 1 [36] F . Y u, V . K oltun, and T . Funkhouser . Dilated residual net- works. In Proceedings of the IEEE conference on computer vision and pattern r ecognition , pages 472–480, 2017. 5 [37] R. Zeyde, M. Elad, and M. Protter . On single image scale-up using sparse-representations. In International confer ence on curves and surfaces , pages 711–730. Springer , 2010. 6 [38] K. Zhang, W . Zuo, S. Gu, and L. Zhang. Learning deep cnn denoiser prior for image restoration. In IEEE Confer ence on Computer V ision and P attern Recognition , pages 3929– 3938, 2017. 11 [39] K. Zhang, W . Zuo, and L. Zhang. Learning a single conv o- lutional super-resolution network for multiple degradations. In IEEE Confer ence on Computer V ision and P attern Recog- nition , pages 3262–3271, 2018. 6 , 7 , 8 , 11 , 12 [40] Y . Zhang, K. Li, K. Li, L. W ang, B. Zhong, and Y . Fu. Image super-resolution using very deep residual channel attention networks. In Proceedings of the Eur opean Confer ence on Computer V ision (ECCV) , pages 286–301, 2018. 1 , 3 , 4 , 5 , 6 , 8 [41] Y . Zhang, Y . Tian, Y . Kong, B. Zhong, and Y . Fu. Residual dense network for image super-resolution. In CVPR , 2018. 2 , 6 , 7 , 8 [42] Z. Zhang, X. W ang, and C. Jung. Dcsr: Dilated con volu- tions for single image super-resolution. IEEE T ransactions on Image Pr ocessing , 28(4):1625–1635, 2019. 1 , 7 [43] W . W . Zou and P . C. Y uen. V ery low resolution face recog- nition problem. IEEE T ransactions on image pr ocessing , 21(1):327–340, 2012. 1 Supplementary Material In this supplementary submission, we present more qual- itativ e results with the different scaling factors. Further - more, we also compare our method’ s computation complex- ity with the large scaling f actor . 1. Experimental Results 1.1. Model Complexity Analysis When it comes to the large scaling factor , the reconstruc- tion of the SR image becomes more difficult and the SR problem is intensified due to very limited information in the LR image. In this section, we compare our model computa- tion comple xity and performance on the large scaling factor ( 8 × ) in terms of a number of parameters and peak signal- to-noise ratio (PSNR) respectiv ely in Figure. 5 . The results in Figure. 5 shows that our HRAN and HRAN+ models outperform all the models including EDSR, and MSRN, for the scaling factor 8 × with the low number of parameters. 1.2. Visual Comparisons In this section, we compare our qualitative results with the state-of-the-art methods: SRCNN [ 3 ], SPMSR [ 21 ], FS- RCNN [ 4 ], VDSR [ 10 ], IRCNN [ 38 ], SRMDNF [ 39 ], SCN [ 34 ], DRRN [ 27 ], LapSRN [ 12 ], MSLapSRN [ 13 ], Enet- P A T [ 22 ], MemNet [ 28 ], and EDSR [ 17 ]. W e sho w the experiments’ results with the different scal- ing factors: 3 × , 4 × , and 8 × . In Figure. 6 , we can visualize that most of the methods fail to reconstruct the fine details in ‘img 062’ and ‘img 078’ and have blurry effects. Al- though SRMDNF has recovered the horizontal and vertical lines but output result is more blurry . Whereas, our results hav e no blurry effect and have sho wn similar visual perfor- mance than EDSR. For further illustrations, we also analyze our results on 8 × super-resolution (SR) in Figure 7 . When the scaling fac- tor increases, we get very limited details in the LR image. From the ‘img 040’ image, what we observe that Bicubic interpolation does not recov er the original patterns. Those methods (SRCNN, MemNet, and VDSR) which use inter- polation as pre-scaling, lose the original structure and gen- erate wrong patterns. Our HRAN results are more similar Figure 5. Comparison of memory and performance. Results are ev aluated on Set5 ( × 8 ). to EDSR, but unlike EDSR, HRAN does not produce blurry effects. Similarly , in ‘T aiyouNiSmash’ image, we observ e that most of the methods could not recover the tiny lines clearly and lose the structures and the blurry effect is also evident in most of the methods. HR Bicubic SRCNN [ 3 ] FSRCNN [ 4 ] SCN [ 34 ] VDSR [ 10 ] DRRN [ 27 ] LapSRN [ 12 ] MSLapSRN [ 13 ] ENet-P A T [ 22 ] MemNet [ 28 ] EDSR [ 17 ] SRMDNF [ 39 ] HRAN (ours) Figure 6. “img 074” from Urban100 (4 × ): State-of-the-art results with Bicubic (BI) degradation. Manga109 ( 8 × ) T aiyouNiSmash HR Bicubic SRCNN [ 3 ] SCN [ 34 ] VDSR [ 10 ] LapSRN [ 12 ] MemNet [ 28 ] EDSR [ 17 ] MSLapSRN [ 13 ] HRAN Urban100 ( 8 × ): img 040 HR Bicubic SRCNN [ 3 ] SCN [ 34 ] VDSR [ 10 ] LapSRN [ 12 ] MemNet [ 28 ] MSLapSRN [ 13 ] EDSR [ 17 ] HRAN Figure 7. Qualitative results for 8 × SR with BI model on Manga109 and Urban100.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment