Coordinated Joint Multimodal Embeddings for Generalized Audio-Visual Zeroshot Classification and Retrieval of Videos

We present an audio-visual multimodal approach for the task of zeroshot learning (ZSL) for classification and retrieval of videos. ZSL has been studied extensively in the recent past but has primarily been limited to visual modality and to images. We…

Authors: Kranti Kumar Parida, Neeraj Matiyali, Tanaya Guha, Gaurav Sharma

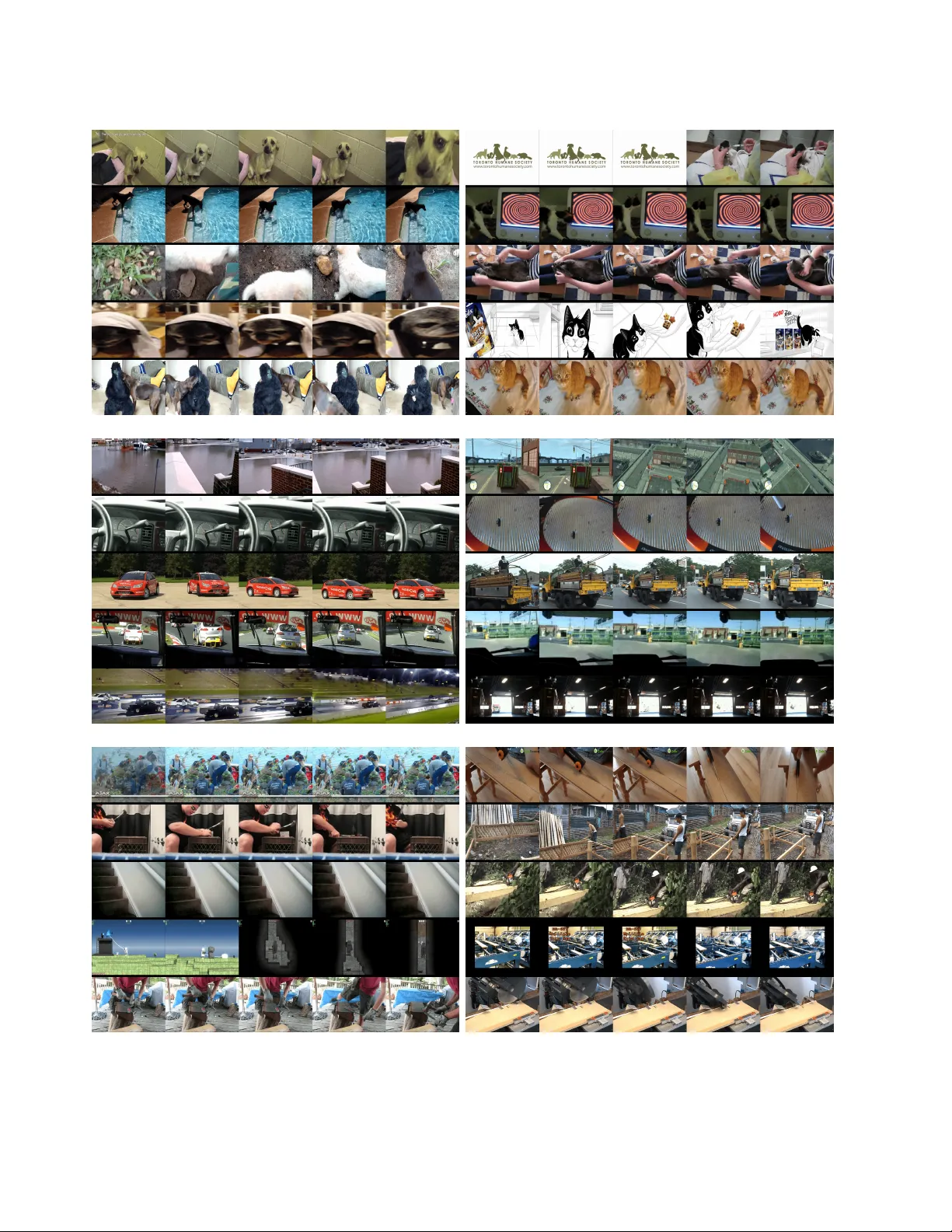

Kranti Kumar Parida (IIT Kanpur), Neeraj Matiyali (IIT Kanpur), Tanaya Guha (University of Warwick), Gaurav Sharma (NEC Labs America)

Abstract

We present an audio-visual multimodal approach for the task of zeroshot learning (ZSL) for classification and retrieval of videos. ZSL has been studied extensively in the recent past but has primarily been limited to the visual modality and to images. We demonstrate that both audio and visual modalities are important for ZSL for videos. Since a dataset to study the task is currently not available, we also construct an appropriate multimodal dataset with 33 classes containing 156,416 videos, from an existing large scale audio event dataset. We empirically show that the performance improves by adding the audio modality for both tasks of zeroshot classification and retrieval, when using multimodal extensions of embedding learning methods. We also propose a novel method to predict the ‘dominant’ modality using a jointly learned modality attention network. We learn the attention in a semi-supervised setting and thus do not require any additional explicit labelling for the modalities. We provide qualitative validation of the modality specific attention, which also successfully generalizes to unseen test classes.

1. Introduction

Zeroshot learning (ZSL) refers to the setting where test time data comes from classes that were not seen during training. In the past few years, ZSL for classification has received significant attention [1–9] due to the challenging nature of the problem and its relevance to real world settings, where a trained model deployed in the field may encounter classes for which no examples were available during training.
Initially, ZSL was proposed and studied in the setting where the test examples were from unseen classes and were classified into one of the unseen classes only [6]. This, however, is an artificial, controlled setting. More recent ZSL works thus focus on a setting where unseen test examples are classified into both seen and unseen classes [2, 9, 10]. The present work follows the latter setting, known as Generalized ZSL.

Figure 1. Illustration of the proposed method. We jointly embed all videos, audios and text labels into the same embedding space. We learn the space such that the corresponding embedding vectors for the same classes have lower distances than those of different classes. Once embeddings are learned, ZSL classification and crossmodal retrieval can be posed as a nearest neighbor search in the embedding space.

The majority of work involving generalized ZSL [3, 10] has (i) worked with images, and (ii) used only visual representations along with text embeddings of the classes. When dealing with images, this is optimal. However, for the task of video ZSL, the audio modality, if available, may help by providing complementary information. Ignoring the audio modality completely might even render an otherwise easy classification task difficult, e.g. if we are looking to classify an example from the ‘dog’ class, the dog might be highly occluded and not properly visible in the video, but the barking sound might be prominent.

In this work, we study the problem of ZSL for videos with general classes like ‘dog’, ‘sewing machine’, ‘ambulance’, ‘camera’ and ‘rain’, and propose to use the audio modality in addition to the visual modality. ZSL for videos is relatively less studied than ZSL for images. There are several works on video ZSL for the specific task of human action recognition [11–13], but they ignored the audio modality as well.
Our focus here is on leveraging both audio and video modalities to learn a joint projection space for audio, video and text (class labels). In such an embedding space, ZSL tasks can be formulated as nearest neighbor searches (Fig. 1 illustrates the point). When doing classification, a new test video is embedded into the space and the nearest class embedding is predicted to be its class. Similarly, when doing retrieval, the nearest video or audio embeddings are predicted to be its semantic retrieval outputs.

We propose cross-modal extensions of the embedding-based ZSL approach based on triplet loss for learning such a joint embedding space. We optimize an objective based on (i) two cross-modal triplet losses, one each for ensuring compatibility between the text (class labels) and the video, and the text and the audio, and (ii) another loss based on crossmodal compatibility of the audio and visual embeddings. While the triplet losses encourage the audio and video embeddings to come closer to the respective class embeddings in the common space, the audio-visual crossmodal loss encourages the audio and video embeddings from the same sample to be similar. These losses together ensure that the three embeddings of the same class are closer to each other relative to their distance from those of different classes. The crossmodal loss term is an $\ell_2$ loss and uses paired audio-video data, the annotation being trivially available from the videos. While the text-audio and text-video triplet losses use class annotations available for the seen classes during training, the crossmodal term uses the trivial constraint that audio and video from the same example are similar.

As another contribution, we also propose a modality attention based extension, which first seeks to identify the ‘dominant’ modality and then, if possible, makes a decision based on that modality only.
To clarify our intuition of ‘dominant’, we refer back to the dog video example above, where the dog may be occluded but barking is prominent. In this case, we would like the audio modality to be predicted as dominant, and subsequently be used to make the class prediction. If the attention network is not able to decide on a clear dominant modality, the inference continues using both modalities. This leads to a more interpretable model which can also indicate which modality it is basing its decision on. Furthermore, we show empirically that using such attention learning improves the performance and makes it competitive with a model trained on a concatenation of both modality features.

A suitable dataset was not available for the task of audio-visual ZSL. Hence, we construct a multimodal dataset with class level annotations. The dataset is a subset of a recently published large scale dataset, called AudioSet [14], which was primarily created for audio event detection and maintains a comprehensive sound vocabulary. We subsample the dataset to allow studying the task of audiovisual ZSL in a controlled setup. In particular, the subsampling ensures that (i) the classes have a relatively high number of examples, with the minimum number of examples in any class being 292, (ii) the classes belong to diverse groups, e.g. animals, vehicles, weather events, and (iii) the set of unseen classes is such that the pre-trained video networks could be used without violating the zeroshot condition, i.e. the pre-training did not involve classes close to the unseen classes in our dataset. We provide more details in Sec. 4.

In summary, our contributions are as follows.
(i) We introduce the problem of audiovisual ZSL for videos, (ii) we construct a suitable dataset to study the task, (iii) we propose a multimodal embedding based ZSL method for classification and crossmodal retrieval, (iv) we propose a modality attention based method, which indicates which modality is dominant and was used to make the decision. We thoroughly evaluate our method on the dataset and show that considering the audio modality, whenever appropriate, helps video ZSL tasks. We also evaluate our method on standard ZSL datasets and report results for some existing single-modality ZSL approaches on our dataset. We also present qualitative results highlighting the cases improved by the proposed methods.

2. Related Work

Zeroshot learning. ZSL has been quite popular for image classification [1–9, 15–17], and recently has been used for object detection in images as well [18–20]. The problem has often been addressed as a task of embedding learning, where the images and their class labels are embedded in a common space. The two types of class embeddings commonly used in the literature are based on (i) attributes like shape, color, and pose [2, 5, 6, 9], and (ii) semantic word embeddings [2, 4, 7, 9]. A few works have also used both embeddings together [1, 8, 16]. Different from embedding learning, a few recent works [2, 9] have proposed to generate the data for the unseen classes using a generative approach conditioned on the attribute vectors. The classifiers are then learned using the original data for the seen classes and the generated data for the unseen classes. This line of work follows the recent success of image generation methods [21, 22]. The initially studied setting in ZSL is the one where the test examples were classified into unseen test classes only [6]. However, more recently the generalized version was proposed, where they are classified into both seen and unseen classes [3].
We address this latter, more practical setting.¹ Work on ZSL involving the audio modality is scarce. We are aware of only one very recent work, where the idea of ZSL has been used to recognize unseen phonemes for multilingual speech recognition [23].

¹ Some earlier video retrieval works were called zeroshot; however, they are not strictly zeroshot in the current sense. Kindly see the Supplementary material for a detailed discussion.

Audiovisual learning. In the last few years, there has been a significant growth in research efforts that leverage information from the audio modality to aid visual learning tasks and vice-versa. The audio modality has been exploited for applications such as audiovisual correspondence learning [24–27], audiovisual source separation [28, 29] and source localization [30–32]. Among the representative works, Owens et al. [25] used CNNs to predict, in a self-supervised way, whether a given pair of audio and video clips is temporally aligned or not. The learned representations are subsequently used to perform sound source localization and audio-visual action recognition. In a task of crossmodal biometric matching, Nagrani et al. [33] proposed to match a given voice sample against two or more faces. Arandjelovic et al. [30] introduced the task of audio-visual correspondence learning, where a network comprising visual and audio subnetworks was trained to learn semantic correspondence between audio and visual data. Along similar lines, Arandjelovic et al. [26] and Senocak et al. [32] investigated the problem of localizing objects in an image corresponding to a sound input. Gao et al. [29] proposed a multi-instance multilabel learning framework to address the audiovisual source separation problem, where they extract different audio components and associate them with the visual objects in a video. Ephrat et al.
[34] proposed a joint audiovisual model to address the classical cocktail party problem (blind speech source separation). Zhao et al. [28] proposed a self-supervised learning framework to address the problem of pixel-level (audio) source localization [35].

3. Coordinated Joint Multimodal Embeddings

We now present our method in detail. Fig. 1 illustrates the basic idea and Fig. 2 gives the high level block diagram of the proposed method. Our method works by projecting all three inputs, audio, video and text, onto a common embedding space such that class constraints and crossmodal similarity constraints are satisfied. The class constraints are enforced using bimodal triplet losses between audio and text, and video and text embeddings. Denoting $a_i, v_i, t_i$ as the audio, video and text embeddings (we explain how we obtain them shortly) for an example $i$, we define the bimodal triplet losses as follows:

$$L_{TA}(a_p, t_p, a_q, t_q) = [d(a_p, t_p) - d(a_q, t_p) + \delta]_+ \tag{1}$$

$$L_{TV}(v_p, t_p, v_q, t_q) = [d(v_p, t_p) - d(v_q, t_p) + \delta]_+ \tag{2}$$

where $(a_p, v_p, t_p)$ and $(a_q, v_q, t_q)$ are two example videos with $t_p \neq t_q$. These losses force the audio and video embeddings to be closer to the correct class embedding than to the incorrect class embeddings by a margin $\delta > 0$.

We also use a third loss to ensure the crossmodal similarity, in the common embedding space, between the audio and video streams that come from the same video. This loss is simply an $\ell_2$ loss given by

$$L_{AV}(a_p, v_p) = \| a_p - v_p \|_2^2. \tag{3}$$

Figure 2. Block diagram of the proposed approach. Pairs of video, audio and text networks share weights.

The full loss function is thus a weighted sum of these three losses, given in eq. 4.
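
As a rough illustration of the three loss terms in eqs. 1–3, the following NumPy sketch evaluates them for single embedding vectors. The distance $d(\cdot,\cdot)$ is assumed here to be the squared Euclidean distance and the margin value is arbitrary; the function names are hypothetical and are not taken from the authors' implementation.

```python
import numpy as np


def sq_dist(x, y):
    """Squared Euclidean distance (an assumed choice for d in eqs. 1-2)."""
    return float(np.sum((x - y) ** 2))


def triplet_loss(x_p, t_p, x_q, margin=0.1):
    """Hinge form of eqs. 1-2: [d(x_p, t_p) - d(x_q, t_p) + delta]_+.

    x_p is the audio (or video) embedding of an example of class p,
    t_p is the text embedding of class p, and x_q is the audio (or video)
    embedding of an example from a different class q.
    """
    return max(0.0, sq_dist(x_p, t_p) - sq_dist(x_q, t_p) + margin)


def crossmodal_loss(a_p, v_p):
    """Eq. 3: squared l2 distance between audio and video embeddings of one clip."""
    return sq_dist(a_p, v_p)
```

With this form, a triplet contributes zero loss once the same-class embedding is closer to the class embedding than the other-class embedding by at least the margin.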
$$L = \lambda \sum_{p \in \mathcal{T}} L_{AV} + \gamma \sum_{\substack{p, q \in \mathcal{T} \\ y_p \neq y_q}} \{ \alpha_v L_{TV} + \alpha_a L_{TA} \}, \tag{4}$$

where $\lambda, \gamma, \alpha_v, \alpha_a$ are the hyperparameters that control the contributions of the different terms, and $\mathcal{T}$ is the index set over the training examples $\{(a_i, v_i, y_i) \mid i = 1, \ldots, N\}$ with $y_i$ being the class label. With these three losses over all pairwise combinations of the modalities, i.e. $L_{TV}, L_{TA}, L_{AV}$, we force the embeddings from all three modalities to respect the class memberships and similarities.

Representations and parameters. We now need to specify the parameters over which these losses are optimized. We represent each of the three types of inputs, i.e. audio, video, and text, using the outputs of the corresponding state-of-the-art neural networks, which we denote as $f_a(\cdot), f_v(\cdot), f_t(\cdot)$. We project each representation with corresponding neural networks which are small MLPs, denoted as $g_a(\cdot), g_v(\cdot), g_t(\cdot)$ with parameters $\theta_a, \theta_v, \theta_t$ (we give details about all these networks in the implementation details, Sec. 5). Finally, the representations are obtained by passing the input audio/video/text through the corresponding networks sequentially, i.e. $x = g_x \circ f_x(X)$ where $x \in \{a, v, t\}$ and $X$ is the corresponding raw audio/video/text input. We keep the initial network parameters fixed to those of the pretrained networks and optimize over the parameters of the projection networks. Hence, the full optimization is given as

$$\theta_a^*, \theta_v^*, \theta_t^* = \arg\min_{\theta_a, \theta_v, \theta_t} L(\mathcal{T}). \tag{5}$$

We train for the parameters using standard backpropagation for neural networks.

Inference. Once the model has been learned, we use nearest neighbor search in the embedding space for making predictions. In the case of classification, the audio and video are embedded in the space and the class embedding with the minimum average distance to them is taken as the prediction, i.e.

$$t^* = \arg\min_{t} \{ d(a, t) + d(v, t) \}. \tag{6}$$

In the case of (crossmodal) retrieval, the sorted list of audio or video examples is returned as the result, based on their distance from the query in the embedding space.

Modality attention based learning. In the formulation above, the method learns to make a prediction (classification or retrieval) using both the audio and the video modalities. We augment our method to predict modality attention to find the dominant modality for each sample, e.g. when the object is occluded or not visible but the characteristic sound is clearly present, we want the network to be able to make the decision based on the audio modality only. We incorporate such attention by adding an attention predictor network $f_{attn}(\cdot)$, with parameters $\theta_{attn}$, which takes the concatenated audio and video features as inputs and predicts a scalar $\alpha$, which gives us the relative importance weights $\alpha_v = \alpha$, $\alpha_a = 1 - \alpha$ in eq. 4. All the network parameters are then learned jointly.

To further guide the attention network, we use the intuition that when one modality is dominant, say audio, the correct class embedding is expected to be much closer to the audio embedding than the other class embeddings are, more so than for the video embedding. Hence the entropy of the prediction probability distribution over classes, for the dominant modality, should be very low. To compute such a distribution, we first compute the inverse of the distances of the query embedding to all the class embeddings, and then $\ell_1$ normalize the vector. We then derive a supervisory signal for $\alpha$ using the entropies computed w.r.t. the audio and video modalities, denoted $e_a, e_v \in [0, \log N_c]$ where $N_c$ is the number of classes over which prediction is being done, as

$$\alpha = \begin{cases} 0, & \text{if } e_v < e_a - \xi \\ 1, & \text{if } e_a < e_v - \xi \\ 0.5, & \text{otherwise} \end{cases} \tag{7}$$

where $\xi > 0$ is a threshold parameter for preferring one of the modalities based on their entropy difference.
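
The inference rule of eq. 6 and the entropy-derived supervision of eq. 7 can be sketched as below. This is a minimal NumPy illustration under stated assumptions: Euclidean distance is assumed for $d$, the small `eps` terms are added only for numerical safety, the threshold value is arbitrary, and all function names are hypothetical. The mapping from entropies to $\alpha$ follows eq. 7 exactly as written.

```python
import numpy as np


def class_distribution(query, class_embs, eps=1e-12):
    """Inverse distances from the query to every class embedding, l1-normalized."""
    dists = np.linalg.norm(class_embs - query, axis=1)
    inv = 1.0 / (dists + eps)
    return inv / inv.sum()


def entropy(p, eps=1e-12):
    """Shannon entropy (natural log) of a discrete distribution."""
    return float(-np.sum(p * np.log(p + eps)))


def attention_target(a, v, class_embs, xi=0.1):
    """Supervisory signal for alpha, following eq. 7 as written."""
    e_a = entropy(class_distribution(a, class_embs))
    e_v = entropy(class_distribution(v, class_embs))
    if e_v < e_a - xi:
        return 0.0
    if e_a < e_v - xi:
        return 1.0
    return 0.5


def classify(a, v, class_embs):
    """Eq. 6: index of the class with the minimum summed audio and video distance."""
    d = (np.linalg.norm(class_embs - a, axis=1)
         + np.linalg.norm(class_embs - v, axis=1))
    return int(np.argmin(d))
```

An embedding that sits very close to a single class embedding yields a peaked distribution and therefore a low entropy, while an embedding equidistant from all classes yields the maximum entropy $\log N_c$.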
The modality attention objective becomes $L_{attn} = L + L_{CE-\alpha}$, where $L$ is the objective from eq. 4 and $L_{CE-\alpha}$ is the cross entropy loss on $\alpha$ based on the generated supervision above. This loss is then minimized jointly over all of $\theta_a, \theta_v, \theta_t, \theta_{attn}$.

Modality selective inference with attention. Attention is useful at training time, as it helps identify the dominant modality and learn better models. We also use attention to make inference using only the predicted dominant modality at test time. When the predicted attention is higher than a threshold for one of the modalities, we only compute the distance for that modality in the embedding space and use that to make the prediction.

We could also use the $\alpha$ value computed above from the difference of entropies of the prediction distributions (eq. 7) at test time, even when not training with modality attention. We use that as a baseline to verify that learning to predict the attention helps improve the performance.

Calibrated stacking in generalized ZSL (GZSL). A common problem in the GZSL setting is that the classifier is biased towards the seen classes. This reduces the performance for the unseen classes, as the unseen examples are often misclassified into one of the seen classes. A simple approach to handle this was proposed in [3], where the authors suggested reducing the scores for the seen classes. The amount $\beta$ by which the scores are additively reduced for the seen classes is a parameter which needs to be tuned. We use the approach of calibrated stacking and, as we are working with distances instead of similarities, we use the modified prediction rule at inference, given by

$$t^* = \arg\min_{t,\, c \in \{S + U\}} \{ d_c(x, t) + \beta\, \mathbb{I}(c \in S) \}, \tag{8}$$

where $x$ can be the audio, video or concatenated feature, and $\mathbb{I}$ is the indicator function which is 1 when the input condition is true and 0 otherwise.

4. Proposed AudioSet ZSL Dataset

A large scale audio dataset, AudioSet [14], was recently released containing segments from in-the-wild YouTube videos (with audio). These videos are weakly annotated with different types of audio events ranging from human and animal sounds to musical instruments and environmental sounds. In total, there are 527 audio events, and each video segment is annotated with multiple labels.

The original dataset, being highly diverse and rich, is often used in parts to address specific tasks [29, 36]. To study the task of audiovisual ZSL, we construct a subset of AudioSet containing 156,416 video segments. We refer to this subset as AudioSetZSL. While the original dataset was multilabel, the example videos were selected such that every video in AudioSetZSL has only one label, i.e. it is a multiclass dataset. Fig. 3 shows the number of examples for the different classes in AudioSetZSL, and Tab. 1 gives some statistics.

We follow the steps below to create AudioSetZSL: (i) We remove classes with confidence score (for annotation quality) less than 0.7. (ii) We then determine the groups of classes that are semantically similar, e.g. animals, vehicles, water bodies. We do so to ensure that the seen and unseen classes for ZSL have some similarities and the task is feasible with the dataset. (iii) After selecting the classes, we discard highly correlated classes within those groups to have a challenging dataset, obtaining 33 classes. (iv) We then remove the examples which correspond to more than one of the 33 classes to keep the dataset multiclass. We then remove the examples that are no longer available on YouTube. Tab. 1 and Fig. 3 give some statistics, and more details are in the supplementary document.

Figure 3. Distribution of the different classes in AudioSetZSL (horizontal axis: number of examples, 2k to 10k). Classes shown: cashbox, thunderstorm, camera, hammer, panther*, clock*, sawing, fan*, cattle, pig*, church-bell*, boom, sewing, goat, rain, stream*, bicycle, fireworks, skateboard, horse, ocean, printer*, cat, gunshot*, ambulance, bus*, aircraft*, motorcycle, train, truck. Apart from these, three other classes included in the dataset are dog, bird and car, containing 12646, 25153 and 38315 examples respectively. The unseen classes are marked with a ‘*’.

| min | max | mean | std. dev. |
|---|---|---|---|
| 292 | 38315 | 4739.88 | 7693.10 |

Table 1. Statistics on the number of examples per class for the AudioSetZSL dataset.

To create the seen and unseen splits for ZSL tasks, we selected a total of 10 classes spanning all the groups as the zero-shot classes (marked with ‘*’ in Fig. 3). We ensure that the unseen classes have minimal overlap with the Kinetics dataset [37] training classes, as we use CNNs pre-trained on that. We do so by not choosing any class whose class embedding similarity is greater than 0.8 with any of the Kinetics train class embeddings in the word2vec space. We finally split both the seen and unseen classes 60-20-20 into train, validation and test sets.

We set the protocol to be as follows. Train on the train classes and then test on seen class examples and unseen class examples, both being classified into one of all the classes. The performance measure is the mean class accuracy for seen classes and for unseen classes, and the harmonic mean of these two values, following image based ZSL work [10].

5. Experiments

Implementation details. The audio network $f_a(\cdot)$ is based on that of [38], and is trained on the spectrograms of the audio clips in the train set of our dataset. We obtain the audio features after seven conv layers of the network, and average them to obtain a 1024-D vector. The video network, denoted as $f_v(\cdot)$, is an inflated 3D CNN which is pretrained on the Kinetics dataset [37], a large video dataset for action recognition.
We also obtain the video features from the layer before the classification layer and average them to get a 1024-D vector. Finally, the text network, denoted as $f_t(\cdot)$, is the well known word2vec network pretrained on Wikipedia [39], with an output dimension of 300. The projection model for text embeddings was fixed to be a single layer network, whereas for audio and video it was fixed to be a two layer network, with the output dimensions matching for all. In order to find the seen/unseen class bias parameter $\beta$, we divide the range between the minimum and maximum possible values of $\beta$ into 25 equal intervals and then evaluate performances on the val set. We choose the best performing $\beta$ among those.

Evaluation and performance metrics. We report the mean class accuracy (% mAcc) for the classification task and the mean average precision (% mAP) for the retrieval tasks. Examples from the seen classes are classified (retrieved) over all the classes (seen and unseen), and the resulting performance is denoted S; the performance for the unseen classes, denoted U, is reported in the same way. The harmonic mean HM of S and U indicates how well the system performs on both seen and unseen categories on average. For classification, we classify each test example, and for retrieval, we perform leave-one-out testing, i.e. each test example is considered as a query with the rest being the gallery. The performance reported is averaged over classes (mean class).

Methods reported. We report performances of audio only and video only methods, i.e. only the respective modality is used to train and test. We also report a naive combination by concatenation of features from the audio and video modalities before learning the projection to the common space. This method allows zeroshot classification and retrieval only when both modalities are available, and it does not allow crossmodal retrieval at all.
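
The calibrated stacking rule (eq. 8), the search over the bias $\beta$, and the S/U/HM metrics described above can be sketched together as follows. This is only an illustration under assumptions: the search range for $\beta$ (zero up to the spread of the validation distances) is a guess, since the text does not state the actual range, and the function names are hypothetical. Input `dist` holds one row of class distances per validation example.

```python
import numpy as np


def harmonic_mean(s, u):
    """Harmonic mean HM of seen (S) and unseen (U) performance."""
    return 0.0 if s + u == 0 else 2.0 * s * u / (s + u)


def mean_class_accuracy(y_true, y_pred):
    """Accuracy computed per class, then averaged over classes (mAcc)."""
    classes = np.unique(y_true)
    return float(np.mean([np.mean(y_pred[y_true == c] == c) for c in classes]))


def calibrated_predict(dist, seen_mask, beta):
    """Eq. 8: add beta to the distances of seen classes, then take the argmin."""
    return np.argmin(dist + beta * seen_mask.astype(float), axis=1)


def search_beta(dist, y_true, seen_mask, n_intervals=25):
    """Pick beta on validation data from an evenly spaced grid (range assumed)."""
    betas = np.linspace(0.0, float(dist.max() - dist.min()), n_intervals + 1)
    is_seen = seen_mask[y_true]  # True for examples whose class is a seen class
    best_beta, best_hm = 0.0, -1.0
    for beta in betas:
        pred = calibrated_predict(dist, seen_mask, beta)
        s = mean_class_accuracy(y_true[is_seen], pred[is_seen])
        u = mean_class_accuracy(y_true[~is_seen], pred[~is_seen])
        hm = harmonic_mean(s, u)
        if hm > best_hm:
            best_beta, best_hm = float(beta), hm
    return best_beta, best_hm
```

The selected $\beta$ would then be held fixed when evaluating on the test set. The sketch expects the validation data to contain examples of both seen and unseen classes.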
We then report performances with the proposed Coordinated Joint Multimodal Embeddings (CJME) method, both when modality attention is used and when it is not. In either case, we can choose the dominant modality (or not) based on the $\alpha$ value (eq. 7). We report both cases.

We also compare our approach to two other baseline methods, namely pre-trained features and GCCA. In the ‘Pre-trained’ method, the raw features obtained from the individual modality pre-trained networks are directly used for retrieval, as both are of the same dimension. This can be considered a lower bound, since no common projection is learned for the different modalities in this case. GCCA [40], or Generalized Canonical Correlation Analysis, is the standard extension of the Canonical Correlation Analysis (CCA) method from two sets to multiple sets, in which the correlation between corresponding examples from the different sets is maximized. Here we use GCCA to maximize the correlation between all three modalities (text, audio and video) for every example triplet in the dataset.

Figure 4. Effect on classification performance for model M1 (left) and model M2 (right) of different values of the bias parameter.

| Train Modality | Test Modality | S | U | HM |
|---|---|---|---|---|
| audio | audio | 28.35 | 18.35 | 22.22 |
| CJME | audio | 25.58 | 20.30 | 22.64 |
| video | video | 43.27 | 27.11 | 33.34 |
| CJME | video | 41.53 | 28.76 | 33.99 |
| both (concat) | both | 45.83 | 27.91 | 34.70 |
| CJME | both | 30.29 | 31.30 | 30.79 |
| CJME (no attn) | audio or video | 31.72 | 26.31 | 28.76 |
| CJME (w/ attn) | audio or video | 41.07 | 29.58 | 34.39 |

Table 2. Zeroshot classification performances (% mAcc) achieved with audio only, video only, and both audio and video used for training and test. Note that the audio and video concatenation model requires both modalities to be available during testing.

5.1. Quantitative Evaluation

Evaluation of calibrated stacking performance. Fig. 4 shows the improvement in performance obtained with calibrated stacking.
The figure plots the performances for different values of the bias parameter, i.e. accuracies for seen and unseen classes, as well as their harmonic means. We observe that the performance increases with the initial increase in bias, and then falls after a certain point, as expected. We choose the best performing value of the class bias on the val set and then fix it for the experiments on the test set.

| Model | Test | S | U | HM |
|---|---|---|---|---|
| pre-trained | T → A | 3.83 | 1.66 | 2.32 |
| GCCA [40] | T → A | 49.84 | 2.39 | 4.56 |
| audio | T → A | 43.16 | 3.34 | 6.20 |
| CJME | T → A | 48.24 | 3.32 | 6.21 |
| pre-trained | T → V | 3.83 | 2.53 | 3.05 |
| GCCA [40] | T → V | 57.67 | 3.54 | 6.67 |
| video | T → V | 48.62 | 5.25 | 9.47 |
| CJME | T → V | 59.39 | 5.55 | 10.15 |
| both (concat) | T → AV | 63.13 | 7.80 | 13.88 |
| CJME | T → AV | 65.45 | 5.40 | 9.97 |
| CJME (no attn) | T → A or V | 65.74 | 5.09 | 9.45 |
| CJME (w/ attn) | T → A or V | 62.97 | 5.67 | 10.41 |

Table 3. Zeroshot retrieval performances (% mAP) achieved by models when audio only, video only, and both audio and video modalities are used for training and test. Note that the audio and video concatenation based model also requires both modalities at test time and cannot predict using any single one.

Zeroshot audio-visual classification. Tab. 2 gives the performances of the different models for the task of zeroshot classification. We make multiple observations here. The video modality performs better than the audio modality for the task (33.34 vs. 22.22 HM), which is interesting as the original dataset was constructed for audio event detection. We also observe that when both audio and video modalities are used by simply concatenating the features from the respective pre-trained networks, the performance increases to 34.70. This shows that adding the audio modality is helpful for zeroshot classification. Our coordinated joint multimodal embeddings (denoted CJME in the table) improve the performance of the video only and audio only models on the respective test sets by modest but consistent margins.
This highlights the ef ficacy of the proposed method to learn joint embeddings which are comparable (slightly better) than in- dividually trained models. The performance of the proposed method is lower with- out attention learning and selective modality based test time prediction cf. the concatenated input model ( 30 . 79 vs. 34 . 70 ), b ut is comparable to it when trained and tested with attention ( 34 . 39 ). Also, when we do not train for atten- tion but use selectiv e modality based prediction the perfor- mance falls ( 28 . 76 ). Both these comparisons validate that the modality attention learning is an important addition to the base multimodal embedding learning framew ork. Zeroshot audio-visual retrie val. T able 3 compares the per- formances of different models for the task of zeroshot re- triev al. The performance on the unseen classes are quite poor , albeit it is approximately three times the baseline pre- trained performance. This is because of the bias towards the seen classes in generalized ZSL. This happens for clas- sification setting as well but is corrected for explicitly by reducing the scores of the seen classes. Ho we ver , in a re- triev al scenario, since the class of the gallery set member is not known in general, such correction can not be applied. W e tried classifying the gallery sets first and then apply- ing the seen/unseen class bias correction, howe ver that did not improve results possibly because of erroneous classifi- cations. W e observe, from T ab. 3 , similar trends as with zeroshot classification. The proposed CJME performs similar to audio only ( 6 . 20 vs. 6 . 21 ) and slightly better than video only ( 9 . 47 vs. 10 . 15 ) models but consistently outperforms both pre-trained and GCCA model. Compared to the au- dio and video features concatenated model, the performance without modality attention based training are lo wer ( 13 . 88 vs. 9 . 
47 ) which improv e upon using attention at training 6 Model T est S U HM pre-trained audio → video 3.61 2.37 2.86 GCCA [ 40 ] audio → video 22.12 3.65 6.26 CJME audio → video 26.87 4.31 7.43 pre-trained video → audio 4.22 2.57 3.19 GCCA [ 40 ] video → audio 26.68 2.98 5.26 CJME video → audio 29.33 4.35 7.58 T able 4. Zeroshot crossmodal retrieval performances (% mAP). L AV L T A L T V S U HM 7 7 3 1.26 10.13 2.24 7 3 7 3.00 4.18 3.49 7 3 3 31.20 28.47 29.77 3 7 3 30.39 27.31 28.76 3 3 7 30.07 25.06 27.33 3 3 3 33.29 28.18 30.53 T able 5. Ablation study to verify the contribution of different loss terms. Performances for proposed CJME (with attention) method on zeroshot classification (% mAcc) ( 10 . 41 ), albeit staying a little lower cf. similar in the classi- fication case. W e thus conclude that CJME is as good as au- dio only or video only model and is competitiv e cf. concate- nated features model, while allowing crossmodal retrie v al, which we ev aluate next. Crossmodal retrieval. Since CJME learns to embed both audio and video modality in a common space, it allows for doing crossmodal retriev al from audio to video and vice- versa. T ab . 4 gi ves the performances of such crossmodal retriev al from audio and video domains. W e observe that the retrie val accuracy in the case of crossmodal retriev al are 7 . 43 and 7 . 58 for audio to video and video to audio respec- tiv ely . Due to the inability to do seen/unseen class bias cor- rection, we observ e a lar ge gap between the retrie val perfor- mance of seen classes cf. unseen classes, which stays true in the case of crossmodal retriv al as well. The performance is still three times better than the raw pre-trained features. W e believ e these are encouraging initial results on the challeng- ing task of audio-visual crossmodal retriev al on real world unconstrained videos in zeroshot setting. Ablation of the different loss components. T ab. 
5 gives the performances in the different cases when we selectively turn off different combinations of losses in the optimization objective, eq. 4. We observe that all three losses contribute positively towards the performance. When either of the triplet losses is turned off along with the crossmodal audio-video loss, the performance falls drastically to ∼3, but when the crossmodal audio-video loss is added with one of the triplet losses turned off, it recovers to reasonable values of ∼28. Compared to the final performance of 30.53, when the text-audio, text-video and audio-video losses are turned off, the performances fall to 28.76, 27.33 and 29.77 respectively. Thus we conclude that each component in the loss function is useful and that the networks (which are already pre-trained on auxiliary classification tasks) need to be trained for the current task to give meaningful results.

5.2. Comparison with state of the art methods

We address both possible issues, i.e. (i) whether our implementation is competitive w.r.t. other methods on standard datasets, and (ii) how other methods compare on the proposed audio-visual ZSL dataset, by providing additional results. Tab. 6 gives the performance of our implementation on other datasets (existing method performances are taken from Xian et al. [10]).

We observe that our method is competitive with other methods on average. Tab. 7 gives the classification performance of other methods using our features on the proposed dataset. We see that our method performs better than many existing methods (e.g. ALE 33.0 vs. CJME 34.4). Hence we conclude that both our implementation and our method perform comparably to existing appearance based ZSL methods. Tab. 7 also shows that adding audio improves the video only ZSL from 33.3 to 34.4 HM.

In these comparisons, we have not included some of the recent generative approaches [2, 9], which handle the task by conditional generation of examples from the unseen classes.
Although these approaches increase the performance, they come with the drawback of soft-max classification, which requires the classifier to be trained from scratch once again if a new class is added to the existing setup at test time. This also requires saving all the training data for generative approaches, while in the projection based methods this is not required.

5.3. Qualitative evaluation

Fig. 5 shows qualitative crossmodal retrieval results for all three pairs of modalities, i.e. text to audio/video, audio to video and video to audio. We see that the method makes acceptable mistakes, e.g. for the car text query one of the audio retrievals contains a motorbike due to the similar sound, and for the bird video query the wrong retrieval is a cat purring sound, which is similar to a pigeon sound. In the unseen class case, the bus text query returns car, train and truck audio as the top false positives. Easier and distinct cases such as the gunshot audio query give very good video retrievals. We encourage the readers to look at the result videos available at https://www.cse.iitk.ac.in/users/kranti/avzsl.html for a better understanding of the qualitative results.

6. Conclusion

We presented a novel method, which we call Coordinated Joint Multimodal Embeddings (CJME), for the task of audio-visual zeroshot classification and retrieval of videos. The method learns to embed audio, video and text into a common embedding space and then performs nearest neighbor retrieval in that space for classification and retrieval.
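The nearest neighbor step in a common embedding space, together with the seen-class score reduction used for generalized zeroshot classification, can be illustrated with a minimal NumPy sketch. Everything here is an assumption made for illustration: the function names, the use of cosine similarity, and the `seen_penalty` constant are hypothetical and are not taken from the paper's implementation.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    """Scale vectors to unit length so dot products equal cosine similarity."""
    return x / np.maximum(np.linalg.norm(x, axis=-1, keepdims=True), eps)

def classify(query_embs, class_embs, seen_mask, seen_penalty=0.0):
    """Assign each query to its most similar class (text) embedding.

    seen_penalty (hypothetical constant) is subtracted from the similarity
    to every seen class, one simple way of reducing seen-class scores.
    """
    sims = l2_normalize(query_embs) @ l2_normalize(class_embs).T
    sims = sims - seen_penalty * seen_mask.astype(sims.dtype)
    return sims.argmax(axis=1)

def retrieve(query_emb, gallery_embs, top_k=5):
    """Rank gallery items (audio or video) by similarity to a single query."""
    sims = l2_normalize(gallery_embs) @ l2_normalize(query_emb)
    return np.argsort(-sims)[:top_k]

# Toy example: class 0 is seen, class 1 is unseen.
class_embs = np.array([[1.0, 0.0], [0.0, 1.0]])
seen_mask = np.array([True, False])
query = np.array([[0.8, 0.6]])

print(classify(query, class_embs, seen_mask))                    # [0]
print(classify(query, class_embs, seen_mask, seen_penalty=0.3))  # [1]
```

In the retrieval setting the class of each gallery item is unknown, so `retrieve` has no counterpart to `seen_penalty`, which matches the observation in the text that the bias correction cannot be applied there.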
| Method | SUN U | SUN S | SUN HM | CUB U | CUB S | CUB HM | AWA1 U | AWA1 S | AWA1 HM | AWA2 U | AWA2 S | AWA2 HM |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| CONSE [7] | 6.8 | 39.9 | 11.6 | 1.6 | 72.2 | 3.1 | 0.4 | 88.6 | 0.8 | 0.5 | 90.6 | 1.0 |
| DEVISE [4] | 16.9 | 27.4 | 20.9 | 23.8 | 53.0 | 32.8 | 13.4 | 68.7 | 22.4 | 17.1 | 74.7 | 27.8 |
| SAE [17] | 8.8 | 18.0 | 11.8 | 7.8 | 54.0 | 13.6 | 1.8 | 77.1 | 3.5 | 1.1 | 82.2 | 2.2 |
| ESZSL [5] | 11.0 | 27.9 | 15.8 | 12.6 | 63.8 | 21.0 | 6.6 | 75.6 | 12.1 | 5.9 | 77.8 | 11.0 |
| ALE [8] | 21.8 | 33.1 | 26.3 | 23.7 | 62.8 | 34.4 | 16.8 | 76.1 | 27.5 | 14.0 | 81.8 | 23.9 |
| CJME | 30.2 | 23.7 | 26.6 | 35.6 | 26.1 | 30.1 | 29.8 | 47.9 | 36.7 | 51.9 | 36.8 | 43.1 |

Table 6. Comparison with existing methods on standard datasets (projection based methods only, see sec. 5.2 for details).

| Method | Train modality | Test modality | S | U | HM |
|---|---|---|---|---|---|
| CONSE [7] | video | video | 48.5 | 19.6 | 27.9 |
| DEVISE [4] | video | video | 39.8 | 26.0 | 31.5 |
| SAE [17] | video | video | 29.3 | 19.3 | 23.2 |
| ESZSL [5] | video | video | 33.8 | 19.0 | 24.3 |
| ALE [8] | video | video | 47.9 | 25.2 | 33.0 |
| CJME | video | video | 43.2 | 27.1 | 33.3 |
| CJME | both | video | 41.5 | 28.8 | 33.9 |
| CJME | both | both | 41.0 | 29.5 | 34.4 |

Table 7. Comparison with existing methods on the proposed dataset (projection based methods only, see sec. 5.2 for details).

[Figure 5 panels: Seen class retrieval; Unseen class retrieval]

Figure 5. Qualitative crossmodal retrieval results with the proposed method. Each block of two rows from top to bottom corresponds to text to audio, text to video, audio to video and video to audio respectively. The small icon at the top left of each image indicates the modality considered for that specific video. Please see the detailed results video in the supplementary material.

The loss function we propose has three components: two bimodal text-audio and text-video triplet losses, and an audio-video crossmodal similarity based loss.
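As a rough illustration of how three such terms could be combined and selectively switched off, as in the ablation of Tab. 5, consider the NumPy sketch below. It is not the paper's eq. 4: the hinge form of the triplet loss, the squared Euclidean distance, the unit weights, the margin value, and all function and argument names are assumptions made only for this example.

```python
import numpy as np

def triplet_loss(anchor, positive, negative, margin=1.0):
    """Hinge triplet loss: pull anchor towards positive, push it from negative."""
    d_pos = np.sum((anchor - positive) ** 2, axis=1)
    d_neg = np.sum((anchor - negative) ** 2, axis=1)
    return np.maximum(0.0, d_pos - d_neg + margin).mean()

def crossmodal_loss(audio, video):
    """Mean squared distance between audio and video embeddings of the same clip."""
    return np.sum((audio - video) ** 2, axis=1).mean()

def total_loss(audio, video, text_pos, text_neg,
               use_av=True, use_ta=True, use_tv=True, margin=1.0):
    """Sum of the enabled terms; the flags mirror the on/off columns of an ablation."""
    loss = 0.0
    if use_ta:  # text-audio triplet term (assumed: audio embedding is the anchor)
        loss += triplet_loss(audio, text_pos, text_neg, margin)
    if use_tv:  # text-video triplet term (assumed: video embedding is the anchor)
        loss += triplet_loss(video, text_pos, text_neg, margin)
    if use_av:  # audio-video crossmodal term
        loss += crossmodal_loss(audio, video)
    return loss

# Toy embeddings for one clip: true-class text at the origin, wrong class at (2, 0).
audio = np.array([[0.0, 0.0]])
video = np.array([[1.0, 0.0]])
text_pos = np.array([[0.0, 0.0]])
text_neg = np.array([[2.0, 0.0]])

print(total_loss(audio, video, text_pos, text_neg))                # 2.0
print(total_loss(audio, video, text_pos, text_neg, use_av=False))  # 1.0
```

Keeping each term behind its own flag makes it straightforward to reproduce an ablation grid by iterating over the flag combinations.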
Motivated by the fact that the two modalities might carry different amounts of information for different examples, we also proposed a modality attention learning framework. The attention part learns to predict the dominant modality for the task, e.g. audio if the object is occluded but the audio is clear, and bases the prediction on that modality only. We reported extensive experiments to validate the method and showed advantages of the method over baselines, as well as demonstrated crossmodal retrieval, which is not possible with the baseline methods. We also constructed a dataset appropriate for the task, which is a subset of a large scale unconstrained dataset for audio event detection in video. We plan to release the dataset details upon acceptance.

References

[1] Zeynep Akata, Scott Reed, Daniel Walter, Honglak Lee, and Bernt Schiele. Evaluation of output embeddings for fine-grained image classification. In Computer Vision and Pattern Recognition, pages 2927–2936, 2015.

[2] Yongqin Xian, Tobias Lorenz, Bernt Schiele, and Zeynep Akata. Feature generating networks for zero-shot learning. In Computer Vision and Pattern Recognition, 2018.

[3] Wei-Lun Chao, Soravit Changpinyo, Boqing Gong, and Fei Sha. An empirical study and analysis of generalized zero-shot learning for object recognition in the wild. In European Conference on Computer Vision, pages 52–68. Springer, 2016.

[4] Andrea Frome, Greg S Corrado, Jon Shlens, Samy Bengio, Jeff Dean, Tomas Mikolov, et al. Devise: A deep visual-semantic embedding model. In Advances in Neural Information Processing Systems, pages 2121–2129, 2013.

[5] Bernardino Romera-Paredes and Philip Torr. An embarrassingly simple approach to zero-shot learning. In International Conference on Machine Learning, pages 2152–2161, 2015.

[6] Christoph H Lampert, Hannes Nickisch, and Stefan Harmeling. Attribute-based classification for zero-shot visual object categorization.
Pattern Analysis and Machine Intelligence, 36(3):453–465, 2014.

[7] Mohammad Norouzi, Tomas Mikolov, Samy Bengio, Yoram Singer, Jonathon Shlens, Andrea Frome, Greg S Corrado, and Jeffrey Dean. Zero-shot learning by convex combination of semantic embeddings. arXiv preprint, 2013.

[8] Zeynep Akata, Florent Perronnin, Zaid Harchaoui, and Cordelia Schmid. Label-embedding for image classification. Pattern Analysis and Machine Intelligence, 38(7):1425–1438, 2016.

[9] V Kumar Verma, Gundeep Arora, Ashish Mishra, and Piyush Rai. Generalized zero-shot learning via synthesized examples. In Computer Vision and Pattern Recognition, pages 4281–4289, 2018.

[10] Yongqin Xian, Christoph H Lampert, Bernt Schiele, and Zeynep Akata. Zero-shot learning - a comprehensive evaluation of the good, the bad and the ugly. Transactions on Pattern Analysis and Machine Intelligence, 2018.

[11] Ashish Mishra, Vinay Kumar Verma, M Shiva Krishna Reddy, S Arulkumar, Piyush Rai, and Anurag Mittal. A generative approach to zero-shot and few-shot action recognition. In Winter Conference on Applications of Computer Vision, pages 372–380. IEEE, 2018.

[12] Jie Qin, Li Liu, Ling Shao, Fumin Shen, Bingbing Ni, Jiaxin Chen, and Yunhong Wang. Zero-shot action recognition with error-correcting output codes. In Computer Vision and Pattern Recognition, pages 2833–2842, 2017.

[13] Xun Xu, Timothy M Hospedales, and Shaogang Gong. Multi-task zero-shot action recognition with prioritised data augmentation. In European Conference on Computer Vision, pages 343–359. Springer, 2016.

[14] Jort F Gemmeke, Daniel PW Ellis, Dylan Freedman, Aren Jansen, Wade Lawrence, R Channing Moore, Manoj Plakal, and Marvin Ritter. Audio set: An ontology and human-labeled dataset for audio events. In International Conference on Acoustics, Speech and Signal Processing, pages 776–780. IEEE, 2017.

[15] Richard Socher, Milind Ganjoo, Christopher D Manning, and Andrew Ng.
Zero-shot learning through cross-modal transfer. In Advances in Neural Information Processing Systems, pages 935–943, 2013.

[16] Yongqin Xian, Zeynep Akata, Gaurav Sharma, Quynh Nguyen, Matthias Hein, and Bernt Schiele. Latent embeddings for zero-shot classification. In Computer Vision and Pattern Recognition, pages 69–77, 2016.

[17] Elyor Kodirov, Tao Xiang, and Shaogang Gong. Semantic autoencoder for zero-shot learning. In Computer Vision and Pattern Recognition, pages 3174–3183, 2017.

[18] Ankan Bansal, Karan Sikka, Gaurav Sharma, Rama Chellappa, and Ajay Divakaran. Zero-shot object detection. arXiv preprint arXiv:1804.04340, 2018.

[19] Pengkai Zhu, Hanxiao Wang, Tolga Bolukbasi, and Venkatesh Saligrama. Zero-shot detection. arXiv preprint arXiv:1803.07113, 2018.

[20] Shafin Rahman, Salman Khan, and Fatih Porikli. Zero-shot object detection: Learning to simultaneously recognize and localize novel concepts. arXiv preprint arXiv:1803.06049, 2018.

[21] Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. Generative adversarial nets. In Advances in Neural Information Processing Systems, pages 2672–2680, 2014.

[22] Scott Reed, Zeynep Akata, Xinchen Yan, Lajanugen Logeswaran, Bernt Schiele, and Honglak Lee. Generative adversarial text to image synthesis. arXiv preprint arXiv:1605.05396, 2016.

[23] Xinjian Li, Siddharth Dalmia, David R. Mortensen, Florian Metze, and Alan W Black. Zero-shot learning for speech recognition with universal phonetic model, 2019.

[24] Yusuf Aytar, Carl Vondrick, and Antonio Torralba. Soundnet: Learning sound representations from unlabeled video. In Advances in Neural Information Processing Systems, pages 892–900, 2016.

[25] Andrew Owens, Jiajun Wu, Josh H McDermott, William T Freeman, and Antonio Torralba. Ambient sound provides supervision for visual learning.
In European Conference on Computer Vision, pages 801–816. Springer, 2016.

[26] Relja Arandjelovic and Andrew Zisserman. Look, listen and learn. In International Conference on Computer Vision, pages 609–617. IEEE, 2017.

[27] Andrew Owens and Alexei A Efros. Audio-visual scene analysis with self-supervised multisensory features. arXiv preprint arXiv:1804.03641, 2018.

[28] Hang Zhao, Chuang Gan, Andrew Rouditchenko, Carl Vondrick, Josh McDermott, and Antonio Torralba. The sound of pixels. arXiv preprint, 2018.

[29] Ruohan Gao, Rogerio Feris, and Kristen Grauman. Learning to separate object sounds by watching unlabeled video. arXiv preprint arXiv:1804.01665, 2018.

[30] Relja Arandjelović and Andrew Zisserman. Objects that sound. arXiv preprint arXiv:1712.06651, 2017.

[31] Sanjeel Parekh, Alexey Ozerov, Slim Essid, Ngoc Duong, Patrick Pérez, and Gaël Richard. Identify, locate and separate: Audio-visual object extraction in large video collections using weak supervision. arXiv preprint arXiv:1811.04000, 2018.

[32] Arda Senocak, Tae-Hyun Oh, Junsik Kim, Ming-Hsuan Yang, and In So Kweon. Learning to localize sound source in visual scenes. In Computer Vision and Pattern Recognition, pages 4358–4366, 2018.

[33] Arsha Nagrani, Samuel Albanie, and Andrew Zisserman. Seeing voices and hearing faces: Cross-modal biometric matching. In Computer Vision and Pattern Recognition, pages 8427–8436, 2018.

[34] Ariel Ephrat, Inbar Mosseri, Oran Lang, Tali Dekel, Kevin Wilson, Avinatan Hassidim, William T Freeman, and Michael Rubinstein. Looking to listen at the cocktail party: a speaker-independent audio-visual model for speech separation. ACM Transactions on Graphics, 37(4):112, 2018.

[35] Einat Kidron, Yoav Y Schechner, and Michael Elad. Pixels that sound. In Computer Vision and Pattern Recognition, volume 1, pages 88–95. IEEE, 2005.

[36] Emmanuel Vincent (INRIA), Benjamin Elizalde, Bhiksha Raj.
Large-scale weakly supervised sound event detection for smart cars, 2017.

[37] Joao Carreira and Andrew Zisserman. Quo vadis, action recognition? a new model and the kinetics dataset. In Computer Vision and Pattern Recognition, 2017.

[38] Anurag Kumar, Maksim Khadkevich, and Christian Fügen. Knowledge transfer from weakly labeled audio using convolutional neural network for sound events and scenes. In International Conference on Acoustics, Speech and Signal Processing, pages 326–330. IEEE, 2018.

[39] Tomas Mikolov, Edouard Grave, Piotr Bojanowski, Christian Puhrsch, and Armand Joulin. Advances in pre-training distributed word representations. In International Conference on Language Resources and Evaluation, 2018.

[40] Jon R Kettenring. Canonical analysis of several sets of variables. Biometrika, 58(3):433–451, 1971.

[41] Jeffrey Dalton, James Allan, and Pranav Mirajkar. Zero-shot video retrieval using content and concepts. In Proceedings of the 22nd ACM International Conference on Information & Knowledge Management, pages 1857–1860. ACM, 2013.

[42] Chuang Gan, Ming Lin, Yi Yang, Gerard De Melo, and Alexander G Hauptmann. Concepts not alone: Exploring pairwise relationships for zero-shot video activity recognition. In Thirtieth AAAI Conference on Artificial Intelligence, 2016.

A. Difference with previously claimed zeroshot video retrieval approach

There have been some earlier works [41, 42] that claimed to be zeroshot video retrieval, but their definition of zeroshot is different from the contemporary meaning [10]. In these earlier works, there is no separation of seen and unseen class queries, which clearly violates the contemporary meaning of zeroshot.

The authors claim these works to be zeroshot as there is no pairing between the query and the example retrieval lists in the training set. In order to learn from the unpaired dataset, 'concept' detectors (e.g.
airplanes, bicycles, church, computers) are used separately for both the modalities to extract the concepts. The extracted concepts are finally aligned for cross-modal retrieval. However, these concept detectors are pre-trained on external annotated datasets (e.g. ImageNet, UCF101), which means that their models have already seen the concepts prior to testing. This again violates the contemporary setting of zero-shot, where no pre-training is allowed for the unseen data.

Our definition of zero-shot is aligned with the more recent and strict definition [10], where the unseen query concept class examples are never seen during training (i.e. even pre-trained detectors are not allowed). Hence, our approach to zero-shot is different from the previously claimed approach and cannot be compared directly with them.

B. Dataset

In this section we give more details about the dataset, AudioSetZSL, as mentioned in Section 4 of the paper. The statistics for the different splits of the dataset are given in Table 8. The number of examples in the seen and unseen classes is given in Table 9, and the number of examples in each class of the dataset is shown in Table 10. We have provided some example videos from the seen and unseen classes in Fig. 6 and Fig. 7 respectively. As the dataset was collected for the audio task, it can be clearly seen that some of the frames do not contain the corresponding visual content, as suggested in the paper. This can be seen in the 2nd example of the class ambulance.

| split | min | max | mean | std. dev. |
|---|---|---|---|---|
| train | 176 | 22989 | 2844.39 | 4615.77 |
| val | 58 | 7663 | 947.61 | 1538.65 |
| test | 58 | 7663 | 947.88 | 1538.68 |

Table 8. Statistics on the number of examples per class for the AudioSetZSL dataset.

| class | train | val | test |
|---|---|---|---|
| seen classes | 79795 | 26587 | 26593 |
| unseen classes | 14070 | 4684 | 4687 |
| Total | 93865 | 31271 | 31280 |

Table 9. Number of examples in seen and unseen classes of the dataset.

| class | train | val | test |
|---|---|---|---|
| dog | 7588 | 2529 | 2529 |
| cat | 2133 | 710 | 711 |
| horse | 1862 | 620 | 620 |
| cattle | 437 | 145 | 145 |
| pig | 467 | 155 | 156 |
| goat | 1096 | 365 | 365 |
| panther | 379 | 126 | 126 |
| bird | 15092 | 5030 | 5031 |
| thunderstorm | 192 | 64 | 64 |
| rain | 1317 | 439 | 439 |
| stream | 1583 | 527 | 527 |
| ocean | 1871 | 623 | 623 |
| car | 22989 | 7663 | 7663 |
| truck | 5514 | 1837 | 1838 |
| bus | 2888 | 962 | 962 |
| ambulance | 2540 | 846 | 846 |
| motorcycle | 4000 | 1333 | 1333 |
| train | 5140 | 1713 | 1713 |
| aircraft | 3104 | 1034 | 1034 |
| bicycle | 1622 | 540 | 541 |
| skateboard | 1703 | 567 | 568 |
| clock | 389 | 129 | 130 |
| sewing | 1050 | 350 | 350 |
| fan | 434 | 144 | 144 |
| cashbox | 176 | 58 | 58 |
| printer | 1915 | 638 | 638 |
| camera | 204 | 68 | 68 |
| church-bell | 662 | 220 | 220 |
| hammer | 260 | 86 | 86 |
| sawing | 431 | 143 | 143 |
| gunshot | 2249 | 749 | 750 |
| fireworks | 1699 | 566 | 566 |
| boom | 879 | 292 | 293 |
| Total | 93865 | 31271 | 31280 |

Table 10. Number of examples per class available in the AudioSetZSL dataset. The zeroshot classes are marked with boldface.

(Dog) (Cat) (Car) (Ambulance) (Hammer) (Sawing)

Figure 6. Example videos from seen classes of the dataset. The class is given below each figure. Each row in the figure corresponds to an example video, where the frames are extracted at equal intervals from the entire video.

(Pig) (Panther) (Clock) (Gunshot)

Figure 7. Example videos from unseen classes of the dataset. The class is given below each figure. Each row in the figure corresponds to an example video, where the frames are extracted at equal intervals from the entire video.
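The summary statistics of Table 8 can be cross-checked against the per-class training counts of Table 10 with a few lines of standard-library Python. The dictionary below simply transcribes the train column; the variable names are illustrative, and since the text does not state whether the reported standard deviation is the sample or the population version, the sketch prints both without asserting which one matches.

```python
import statistics

# Train column of Table 10 (number of training examples per class).
train_counts = {
    "dog": 7588, "cat": 2133, "horse": 1862, "cattle": 437, "pig": 467,
    "goat": 1096, "panther": 379, "bird": 15092, "thunderstorm": 192,
    "rain": 1317, "stream": 1583, "ocean": 1871, "car": 22989,
    "truck": 5514, "bus": 2888, "ambulance": 2540, "motorcycle": 4000,
    "train": 5140, "aircraft": 3104, "bicycle": 1622, "skateboard": 1703,
    "clock": 389, "sewing": 1050, "fan": 434, "cashbox": 176,
    "printer": 1915, "camera": 204, "church-bell": 662, "hammer": 260,
    "sawing": 431, "gunshot": 2249, "fireworks": 1699, "boom": 879,
}

counts = list(train_counts.values())
mean_count = statistics.mean(counts)

print(len(counts), sum(counts))        # 33 93865
print(min(counts), max(counts))        # 176 22989
print(round(mean_count, 2))            # 2844.39
print(statistics.stdev(counts))        # sample standard deviation
print(statistics.pstdev(counts))       # population standard deviation
```

The class count, total, minimum, maximum and mean agree with the train row of Table 8 and the train total of Table 9.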
