Challenges of Human-Aware AI Systems

From its inception, AI has had a rather ambivalent relationship to humans, swinging between their augmentation and replacement. Now, as AI technologies enter our everyday lives at an ever-increasing pace, there is a greater need for AI systems to work synergistically with humans. To do this effectively, AI systems must pay more attention to aspects of intelligence that helped humans work with each other, including social intelligence. I will discuss the research challenges in designing such human-aware AI systems, including modeling the mental states of humans in the loop, recognizing their desires and intentions, providing proactive support, exhibiting explicable behavior, giving cogent explanations on demand, and engendering trust. I will survey the progress made so far on these challenges, and highlight some promising directions. I will also touch on the additional ethical quandaries that such systems pose. I will end by arguing that the quest for human-aware AI systems broadens the scope of the AI enterprise, necessitates and facilitates true interdisciplinary collaborations, and can go a long way towards increasing public acceptance of AI technologies.

💡 Research Summary

The paper argues that as artificial intelligence (AI) becomes ubiquitous in everyday life, the central challenge shifts from building systems that merely augment or replace humans to creating AI agents that can collaborate with people as true partners. The author defines “human‑aware AI” as autonomous agents that are capable of modeling the mental states of humans in the loop, recognizing their goals, desires, and capabilities, and using this knowledge to provide proactive assistance, behave in an explicable manner, generate on‑demand explanations, and foster trust.

The core technical contribution is a conceptual architecture that augments the classic sense‑plan‑act cycle with two mental‑model estimators: f_MH^r, which yields the AI’s model of the human’s goals and abilities, and f_MR^h, which yields the human’s model of the AI’s capabilities. With these estimators in place, every stage of decision‑making (state estimation, world prediction, action projection, and policy selection) must account for the agent’s own model (M_R), the inferred human model (M_H^r), and the human’s expectations of the agent (M_R^h). This multi‑model perspective reshapes planning: the agent seeks a plan π that balances optimality under M_R against its “distance” from the plan π₀ that the human expects, which is formalized as a multi‑objective optimization problem. Two solution families are discussed: a model‑based approach that explicitly computes distances between plans, and a model‑free approach that learns a labeling function to estimate this distance.
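To make the trade-off concrete, here is a minimal sketch of the model‑based idea in Python. It rests on several assumptions that are not in the paper: the two objectives are combined with a simple weighted sum, plan distance is measured as action‑sequence dissimilarity via the standard library's `difflib.SequenceMatcher`, and the plans, costs, and function names (`plan_distance`, `select_plan`) are hypothetical.

```python
from difflib import SequenceMatcher


def plan_distance(plan, expected):
    """Return a distance in [0, 1] between two action sequences (0 = identical)."""
    return 1.0 - SequenceMatcher(None, plan, expected).ratio()


def select_plan(candidates, expected, weight):
    """Return the plan minimizing cost + weight * distance to the expected plan.

    candidates: list of (plan, cost) pairs; each plan is a tuple of action names.
    weight: how strongly explicability is favored over raw plan cost (assumed
            weighted-sum scalarization of the two objectives).
    """
    def score(item):
        plan, cost = item
        return cost + weight * plan_distance(plan, expected)

    return min(candidates, key=score)[0]


# Hypothetical search-and-rescue example: the human expects the robot to use
# corridor A, which the robot knows is blocked by rubble.
expected_plan = ("enter_corridor_a", "exit_corridor_a")
explicable_plan = ("enter_corridor_a", "clear_rubble", "exit_corridor_a")
optimal_plan = ("enter_corridor_b", "exit_corridor_b")
candidates = [(explicable_plan, 10.0), (optimal_plan, 4.0)]

# weight = 0 ignores the human's expectations and picks the cheapest plan.
cost_only_choice = select_plan(candidates, expected_plan, weight=0.0)
# A larger weight favors the plan closest to what the human expects.
explicable_choice = select_plan(candidates, expected_plan, weight=10.0)
```

With these illustrative numbers, a weight of 0 selects the cheaper corridor B plan, while a weight of 10 selects the costlier plan that clears the rubble and stays close to π₀. A weighted sum is only one way to handle a multi‑objective problem; it is used here because it keeps the sketch short.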

When the distance is too large for the agent to adopt the human‑expected plan, the system must provide an explanation. The author casts explanation as a model‑reconciliation problem: find a minimal set of changes E to the human’s mental model M_R^h such that the chosen plan becomes explicable under M_R^h + E. This is framed as a meta‑search over the space of models, and efficient search strategies are proposed. The paper illustrates the approach with a simplified urban search‑and‑rescue scenario, showing how a robot can either clear an obstacle to follow the human‑expected path (explicable behavior) or take a shorter, optimal path and explain the blockage (explanatory behavior).
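The model‑reconciliation search can be sketched as a brute‑force enumeration over model updates, smallest first. This is an illustration under stated assumptions rather than the paper's algorithm: models are plain sets of facts, only additions to the human's model are considered, a plan counts as explicable when it is cost‑optimal under the model, and the toy domain (`BASE_COST`, `PENALTY`) and function names are hypothetical.

```python
from itertools import combinations

# Hypothetical toy domain: each route has a base cost, and a believed fact
# adds a penalty to the route it affects.
BASE_COST = {"route_a": 3, "route_b": 5}
PENALTY = {
    "rubble_in_a": ("route_a", 6),
    "lights_off_in_a": ("route_a", 1),
}


def cost(route, model):
    """Cost of a route as evaluated under a model (a set of believed facts)."""
    extra = sum(p for fact, (r, p) in PENALTY.items() if fact in model and r == route)
    return BASE_COST[route] + extra


def is_explicable(route, model):
    """Assumed criterion: a route is explicable if no other route is cheaper."""
    return all(cost(route, model) <= cost(other, model) for other in BASE_COST)


def reconcile(route, human_model, robot_model):
    """Return a smallest set E of robot facts that makes `route` explicable
    under human_model + E, or None if no such set exists."""
    missing = sorted(robot_model - human_model)
    for size in range(len(missing) + 1):  # smallest explanations first
        for update in combinations(missing, size):
            if is_explicable(route, human_model | set(update)):
                return set(update)
    return None


robot_model = {"rubble_in_a", "lights_off_in_a"}
human_model = set()  # the human is unaware of either fact

explanation = reconcile("route_b", human_model, robot_model)
```

Here the robot prefers `route_b`, which looks suboptimal to a human who believes corridor A is clear. Telling the human about the rubble alone is enough to make `route_b` optimal in the updated model, so the search returns that single fact and omits the irrelevant lighting detail. Exhaustive enumeration is exponential in the number of model differences, which is why more efficient search strategies matter in practice.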

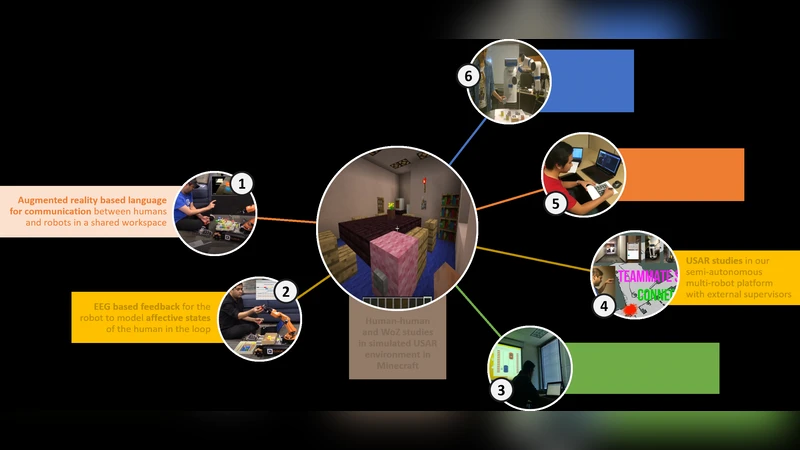

Beyond planning, the paper addresses how the required mental models can be acquired. In some domains, agents can assume a shared initial model; in others, they must learn the model from interaction traces. The learned models range from fully causal specifications (e.g., PDDL) to shallow, correlational models, and the author discusses learning techniques for both ends of this spectrum. The paper also explores sensing affective and attentional states using brain‑computer interfaces (e.g., Emotiv headsets) and augmented‑reality displays (e.g., HoloLens), arguing that such signals can dynamically modulate proactive assistance and the timing of explanations.

Trust is treated as a measurable construct, with test‑beds and micro‑worlds designed to study trust dynamics in human‑robot teams. Ethical considerations—privacy of mental‑state data, transparency of model inference, and the risk of manipulation—are highlighted as essential design constraints.

Finally, the paper emphasizes that solving these challenges requires interdisciplinary collaboration among AI, cognitive science, HCI, robotics, and ethics. By broadening AI’s scope to include human‑centric mental modeling and explainability, the field can not only advance technically but also improve public acceptance of AI technologies. The author concludes that the quest for human‑aware AI reshapes the AI enterprise, fostering true partnerships between humans and machines.
