Adversarial Colorization Of Icons Based On Structure And Color Conditions

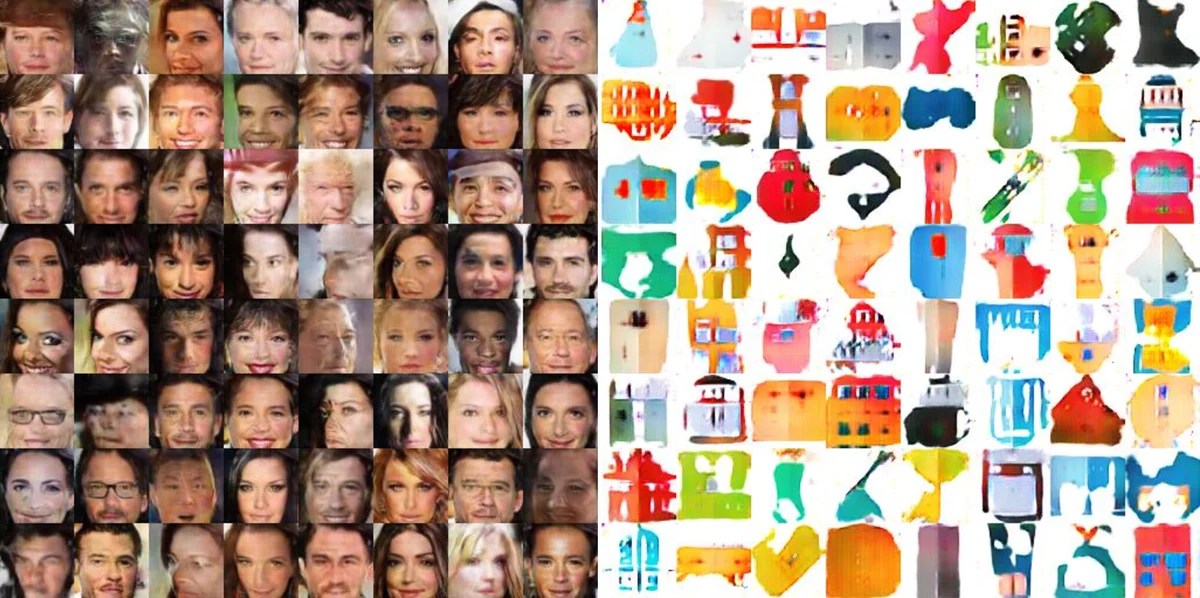

We present a system to help designers create icons, which are widely used in banners, signboards, billboards, homepages, and mobile apps. Designers are tasked with drawing contours, while our system colorizes those contours in different styles. This goal is achieved by training a dual conditional generative adversarial network (GAN) on our collected icon dataset. One condition requires the generated image and the drawn contour to share a similar contour, while the other requires the generated image and the reference icon to be similar in color style. Accordingly, the generator takes a contour image and a man-made icon image to colorize the contour, and the discriminators then determine whether the result fulfills the two conditions. The trained network is able to colorize icons as designers require and greatly reduces their workload. For the evaluation, we compared our dual conditional GAN to several state-of-the-art techniques. Experimental results demonstrate that our network outperforms the previous networks. Finally, we will provide the source code, icon dataset, and trained network for public use.

💡 Research Summary

This paper presents an automated system designed to assist graphic designers in creating icons, which are ubiquitously used in banners, signboards, mobile apps, and websites. The core idea is to reduce designers’ workload by splitting the creative process: designers focus on drawing the structural contour of an icon, while the system takes over the task of colorizing that contour according to a desired color style.

To achieve this, the authors propose a novel “Dual Conditional Generative Adversarial Network (GAN).” The system is trained on a custom-collected dataset of 12,575 icon images. From this dataset, structure conditions (contours) are automatically extracted using the Canny edge detector, and color style conditions are derived by clustering icons based on their 3D Lab color histograms, eliminating the need for manual labeling.
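The color-style condition described above rests on a 3D Lab histogram per icon. The following is a minimal sketch of that step only, using the standard sRGB to CIE Lab conversion with a D65 white point. The bin count (8 per axis), the clamping of a and b to [-128, 128), and the function names `srgb_to_lab` and `lab_histogram` are illustrative assumptions, not details taken from the paper. Contour extraction and clustering are omitted.

```python
from collections import Counter


def srgb_to_lab(r, g, b):
    """Convert one 8-bit sRGB pixel to CIE Lab (D65 reference white)."""
    def to_linear(c):
        c = c / 255.0
        return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

    rl, gl, bl = to_linear(r), to_linear(g), to_linear(b)
    # Linear sRGB -> CIE XYZ
    x = 0.4124564 * rl + 0.3575761 * gl + 0.1804375 * bl
    y = 0.2126729 * rl + 0.7151522 * gl + 0.0721750 * bl
    z = 0.0193339 * rl + 0.1191920 * gl + 0.9503041 * bl

    def f(t):
        return t ** (1.0 / 3.0) if t > 0.008856 else 7.787 * t + 16.0 / 116.0

    fx, fy, fz = f(x / 0.95047), f(y / 1.0), f(z / 1.08883)
    return 116.0 * fy - 16.0, 500.0 * (fx - fy), 200.0 * (fy - fz)


def lab_histogram(pixels, bins=8):
    """Normalized sparse 3D Lab histogram: {(L_bin, a_bin, b_bin): fraction}.

    `bins` per axis is an assumed value, not one reported in the paper.
    """
    def to_bin(value, low, high):
        idx = int((value - low) / (high - low) * bins)
        return min(max(idx, 0), bins - 1)  # clamp out-of-range values

    counts = Counter()
    for r, g, b in pixels:
        L, a, b_star = srgb_to_lab(r, g, b)
        key = (to_bin(L, 0.0, 100.0),
               to_bin(a, -128.0, 128.0),
               to_bin(b_star, -128.0, 128.0))
        counts[key] += 1

    total = sum(counts.values())
    if total == 0:
        return {}
    return {key: n / total for key, n in counts.items()}


# Toy usage: a four-pixel "icon" that is three-quarters white, one-quarter black.
toy_pixels = [(255, 255, 255)] * 3 + [(0, 0, 0)]
toy_hist = lab_histogram(toy_pixels)
```

Histograms of this form can then be fed to any clustering routine to group icons by color style. Which clustering algorithm the authors used is not stated in this summary.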

The network architecture consists of one generator and two discriminators, each dedicated to a specific condition. The generator takes two inputs: a user-drawn contour image (defining structure) and a reference man-made icon image (defining color style). It then synthesizes a new, colorized icon. This generated icon is evaluated by two separate discriminators. The Structure Discriminator (D_s) assesses whether the generated icon and the input contour form a matching pair, i.e., if their outlines align. The Color Discriminator (D_c) judges whether the generated icon and the reference icon form a matching pair in terms of overall color style and palette, not necessarily structure. The adversarial losses for each discriminator (L_s and L_c) are combined, training the generator to simultaneously fool both. This decomposition of the complex icon generation task into two simpler sub-problems (structure fidelity and style matching) is a key contribution, leading to more stable training and higher-quality results compared to using a single, all-encompassing discriminator.
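To make the combination of the two adversarial losses concrete, here is a minimal numeric sketch. It assumes each discriminator outputs a probability that its input pair is a genuine match, and it uses the common non-saturating GAN objective. The loss form, the weights `w_s` and `w_c`, and all function names are assumptions for illustration; this summary does not give the paper's exact formulation.

```python
import math

EPS = 1e-12  # keeps log() finite when a probability is exactly 0 or 1


def _log(p):
    return math.log(min(max(p, EPS), 1.0 - EPS))


def discriminator_loss(p_real_pair, p_fake_pair):
    """Binary cross-entropy for one discriminator (D_s or D_c).

    p_real_pair: D's output for a genuine pair (e.g. contour + its real icon).
    p_fake_pair: D's output for a pair containing a generated icon.
    """
    return -(_log(p_real_pair) + _log(1.0 - p_fake_pair))


def generator_loss(p_s_fake, p_c_fake, w_s=1.0, w_c=1.0):
    """Weighted sum of the structure term (L_s) and the color term (L_c).

    p_s_fake: D_s output for (input contour, generated icon).
    p_c_fake: D_c output for (reference icon, generated icon).
    The generator is rewarded only when both discriminators are fooled.
    """
    loss_s = -_log(p_s_fake)
    loss_c = -_log(p_c_fake)
    return w_s * loss_s + w_c * loss_c


# Toy usage: right structure but wrong colors still incurs a large loss.
loss_structure_only = generator_loss(p_s_fake=0.9, p_c_fake=0.1)
loss_both = generator_loss(p_s_fake=0.9, p_c_fake=0.9)
```

Keeping the two terms separate means a failure in one condition cannot be hidden by success in the other, which is the intuition behind using two dedicated discriminators rather than one.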

For user convenience, the system abstracts the color condition input through a set of 12 semantic style labels (e.g., “Casual,” “Elegant,” “Dynamic”). When a user selects a label, the system randomly picks reference icons from the dataset that match that style’s predefined color combination and feeds them to the network. Style matching is based on comparing the icon’s histogram with histograms generated from the core color combinations associated with each label.
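The label-to-reference lookup described above can be sketched as follows. The similarity measure (histogram intersection), the toy histogram values, the icon identifiers, and the function names are all hypothetical. The summary states only that icon histograms are compared with histograms built from each label's core color combination.

```python
import random


def histogram_intersection(h1, h2):
    """Similarity of two normalized sparse histograms (1.0 means identical)."""
    return sum(min(v, h2.get(k, 0.0)) for k, v in h1.items())


def assign_label(icon_hist, label_hists):
    """Return the style label whose histogram best matches the icon."""
    return max(label_hists,
               key=lambda name: histogram_intersection(icon_hist, label_hists[name]))


def pick_references(label, icon_hists, label_hists, k=1, rng=random):
    """Randomly pick up to k reference icons whose best-matching label is `label`."""
    matches = [icon_id for icon_id, hist in icon_hists.items()
               if assign_label(hist, label_hists) == label]
    return rng.sample(matches, min(k, len(matches)))


# Hypothetical label histograms over (L_bin, a_bin, b_bin) keys.
LABEL_HISTS = {
    "Casual":  {(5, 5, 6): 0.6, (7, 4, 4): 0.4},
    "Elegant": {(2, 4, 3): 0.7, (7, 4, 4): 0.3},
    "Dynamic": {(4, 6, 5): 0.5, (1, 4, 4): 0.5},
}

# Hypothetical dataset of three icons.
ICON_HISTS = {
    "icon_a": {(5, 5, 6): 0.5, (7, 4, 4): 0.5},
    "icon_b": {(2, 4, 3): 0.8, (7, 4, 4): 0.2},
    "icon_c": {(5, 5, 6): 0.7, (4, 6, 5): 0.3},
}

references = pick_references("Casual", ICON_HISTS, LABEL_HISTS,
                             k=2, rng=random.Random(0))
```

The selected reference icons would then be passed to the generator as the color condition, alongside the user's contour.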

The proposed method was evaluated extensively. It demonstrated robustness against color bleeding even with open contours, provided real-time generation feedback, and produced icons with flat colors and clean boundaries that closely resemble professionally designed assets. In qualitative comparisons with several state-of-the-art image-to-image translation techniques including iGAN, CycleGAN, Pix2Pix, Comicolorization, MUNIT, and a manga colorization method (Anime), the dual conditional GAN consistently generated results that were more structurally accurate and stylistically coherent for the icon domain. The paper concludes by stating the intention to publicly release the source code, the icon dataset, and the pre-trained network to foster further research and application.
