As You Are, So Shall You Move Your Head: A System-Level Analysis between Head Movements and Corresponding Traits and Emotions


Authors: Sharmin Akther Purabi, Rayhan Rashed, Md. Mirajul Islam

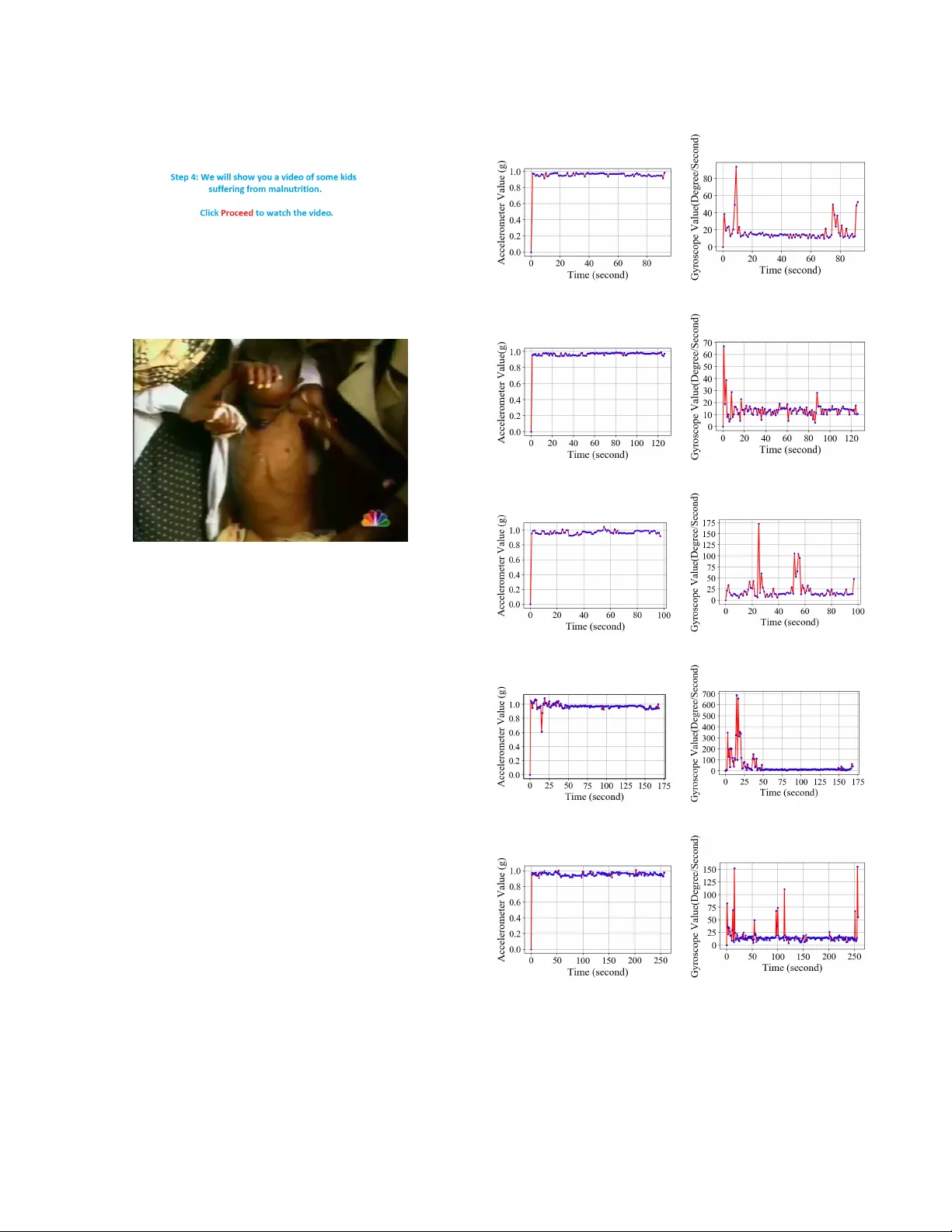

Sharmin Akther Purabi, Rayhan Rashed, Md. Mirajul Islam, Md. Nahiyan Uddin, Mahmuda Naznin, and A. B. M. Alim Al Islam
Department of Computer Science and Engineering, BUET, West Polashi, Dhaka, Bangladesh-1000
Email: {1505067.sap, 1505006.mrr, 1405119.mi, 1405102.mnu}@ugrad.cse.buet.ac.bd, {mahmudanaznin, alim_razi}@cse.buet.ac.bd

ABSTRACT
Identifying physical traits and emotions from system-sensed physical activities is a challenging problem in human-computer interaction. Our work contributes to this area by investigating an underlying connection between head movements and the corresponding traits and emotions. To do so, we use eSense, a head movement measuring device that reports the acceleration and rotation of the head. First, we conduct a thorough study of head movement data collected from 46 persons using eSense while inducing five different emotional states in them, one at a time. Our analysis reveals several new findings based on head movement. These findings lead us to a novel unified solution that identifies different human traits and emotions by applying machine learning techniques to head movement data. Our analysis confirms that the proposed solution can achieve high accuracy on the collected data. Building on these outcomes, we develop an integrated, unified solution for real-time emotion and trait identification from head movement data.

CCS CONCEPTS
• Human-centered computing → Interactive systems and tools.

KEYWORDS
head movement, emotion, machine learning, traits, ubiquitous

ACM Reference Format:
Sharmin Akther Purabi, Rayhan Rashed, Md. Mirajul Islam, Md. Nahiyan Uddin, Mahmuda Naznin, and A. B. M. Alim Al Islam. 2019.
As You Are, So Shall You Move Your Head: A System-Level Analysis between Head Movements and Corresponding Traits and Emotions. In 6th International Conference on Networking, Systems and Security (6th NSysS 2019), December 17–19, 2019, Dhaka, Bangladesh. ACM, New York, NY, USA, 9 pages. https://doi.org/10.1145/3362966.3362985

© 2019 Association for Computing Machinery. ACM ISBN 978-1-4503-7699-0/19/12.

1 INTRODUCTION
Identifying human traits and emotional states has numerous applications in diverse sectors, including health diagnosis, human-robot interaction, and humanoid research. These applications are so diverse because activity and behavior vary from person to person and are largely dictated by the person's traits and emotional state. Thus, if traits and emotional states can be identified, the corresponding activity and behavior can be predicted and generated. However, identifying human traits and emotional states has always been regarded as a challenging task. The problem arguably remains at an embryonic stage, with substantial scope for further research. Traits underlie most of the diversity in human behaviors and physical activities.
Different methodologies have been explored to identify how human activities connect with traits and emotional states [1, 2]. Some human traits can be identified through physical diagnostic procedures such as blood tests [3] or dope tests [4]. Smoking and alcohol consumption are examples of traits that can be identified this way. Although these diagnostic procedures are well accepted, they are often expensive [5]. Questionnaire- or interview-based approaches to identifying traits [6] are cheaper alternatives. However, these approaches are trait-specific [7] and therefore do not scale well. It is very difficult (if not impossible) to carry an interview-based approach over to another application, because doing so requires new domain experts to identify user traits and emotional states. Moreover, the success of these approaches often depends on overcoming socio-cultural and other human-related barriers, which limits their applicability. Therefore, questionnaire- or interview-based approaches cannot be applied in a ubiquitous manner.

To this end, we explore a ubiquitous method of identifying human traits and emotional states that goes beyond traditional tests and interview-based approaches. We adopt a common human activity, head movement, and examine whether it is related to human traits and emotional states. If it is, we can attempt to identify human traits and emotional states by analyzing the corresponding head movement data. To the best of our knowledge, no such exploration has yet been reported in the literature, and we are the first to undertake it.
Accordingly, in this paper we investigate relationships between human traits and head movement by analyzing translational and rotational head movement data. We apply machine learning techniques to relate human traits to the corresponding head movement data. We build our data set by collecting head movement data from 46 participants using a device called eSense, which has a built-in accelerometer and gyroscope [8]. While collecting data with the device, we induce five different emotional states in each participant, one at a time. We then feed the collected data set to different machine learning models to analyze the underlying relationships between head movement and the corresponding traits and emotional states. Our study reveals that spontaneous head movement is related to the corresponding traits and emotional states.

Our collected head movement data consists of translational and rotational motion along the three physical axes. We explore 14 different human traits. Examples include religious practice, religious belief, religious practice among family members, physical exercise, smoking, diabetes among family members, heart disease among family members, brain stroke among family members, and fast-food intake habits. We also explore five different emotional states, such as happy and sad. We use machine learning techniques to find relationships between the head movement data and the corresponding traits and emotional states. Our machine learning-based analysis of the collected data identifies the traits and emotional states with substantial accuracy.
Based on our work in this paper, we make the following contributions:
• We collect head movement data at one-second granularity with the eSense device. During collection, we show related videos to induce five different emotional states, one at a time, in each of our 46 participants.
• To speed up data collection, we develop an automated data collection application that forms part of an automated, integrated survey system.
• We apply machine learning techniques to the collected data to analyze how head movement data correlate with traits and emotional states. We also assess how well these techniques uncover such correlations and can therefore identify traits and emotional states from head movement data.

The rest of this paper is organized as follows. Section 2 discusses relevant research studies. Section 3 details the methodology of our approach. Section 4 presents our experimental results. Finally, Section 5 concludes the paper with future research directions.

2 BACKGROUND STUDY
Our research builds on prior examinations of movement that indicate its relationship with human emotions and other physical activities. Wallbott [9] demonstrated that body movements and postures are, to some degree, specific to certain emotions. That study analyzed 224 video takes using coding schemata for body movements and postures. In the takes, actors and actresses followed a scenario approach to portray elated joy, happiness, sadness, despair, fear, terror, cold anger, hot anger, disgust, contempt, shame, guilt, pride, and boredom. The results indicate that some emotion-specific movement and posture characteristics seem to exist. For body movements, however, differences between emotions can be partly explained by the dimension of activation.
Encoder (actor) differences are rather pronounced with respect to specific movement and posture habits, but they are largely independent of the emotion-specific differences found. The results are discussed with respect to emotion-specific discrete expression models, as opposed to dimensional models of emotion encoding. A person struck by grief typically shows lethargy and moves less. A happy person, by contrast, typically talks with excitement and in a jolly mood, and their body movement increases. We aim to explore whether similar conclusions can be drawn for head movement patterns. For this purpose, we follow a rigorous research methodology. If this succeeds, we can go further and look for correlations between physical traits, emotions, and head movement patterns. Throughout this research, our goal was to check whether any such correlation exists and, if so, to what extent.

Many studies of human physiology have provided strong evidence for a close link among human emotion, traits, and head movement. The study in [10] focused on dimensional prediction of emotions from spontaneous conversational head gestures. Its preliminary experiments show that emotions can be predicted automatically from conversational head gestures in terms of five dimensions: arousal, expectation, intensity, power, and valence. The work in [11] presents a basic method for improving the emotional value of products using temporal and dynamic design elements, in particular physical movements. As the first step of that research, the authors developed a relational framework between movement and emotion so that physical movements could be used in design. In this framework, movement representing emotion is defined in terms of three properties: velocity, smoothness, and openness.
Based on this framework, the authors developed a new interactive device, 'Emotion Palpus', and conducted a user study. The results showed that the framework improved the emotional user experience in two ways: when used as a design method, and when applied directly to design practice as an interactive element of products. In [12], the authors examined whether the emotional content of behavior can be identified from point-light displays of pairs of actors engaged in interpersonal communication. In [13], the authors suggested that nonverbal gestures such as head movements play a more direct role in speech perception than previously known. In their study, head movement correlated strongly with the pitch (fundamental frequency) and amplitude of the talker's voice. In the study by Sogon and Masutani [14], four Japanese actors and actresses, with their backs turned toward the viewer, displayed seven fundamental emotions and three affective-cognitive structures. The seven emotions were joy, surprise, fear, sadness, disgust, anger, and contempt, and the three structures were affection, anticipation, and acceptance. American and Japanese subjects then judged these portrayals. Sadness, fear, and anger, as expressed in kinetic movement, showed high agreement between the two cultural groups. Joy and surprise contained some cultural components that affected the judgments, even though they are classified as fundamental emotions. Furthermore, the American subjects identified disgust as portrayed by the Japanese actors and actresses, but the Japanese subjects did not identify the expressions of disgust or contempt. Affection, anticipation, and acceptance have some cultural components that Japanese and Americans interpret differently, and this accounted for some of the misunderstandings.
Nevertheless, the subjects of each culture correctly identified most of the scenes depicting emotions and affective-cognitive structures. Another work [15] compared how 54 subjects identified basic emotions from natural and synthetic facial expressions, in both dynamic and static form. The authors found no significant difference between the identification of static and dynamic expressions from natural faces. In contrast, some synthetic dynamic expressions were identified much more accurately than their static counterparts. This effect appeared only for synthetic facial expressions whose static displays were non-distinctive. Their results showed that dynamics do not improve the identification of static facial displays that are already distinctive. Dynamics do, however, play an important role in identifying subtle emotional expressions, particularly from computer-animated synthetic characters.

3 OUR PROPOSED APPROACH
Our working methodology has two phases: (i) data collection and training, and (ii) testing. This section describes both phases in detail.

3.1 Preliminaries
Our proposed technique relies on training data collected through the eSense device, which is Bluetooth-enabled. The collected data samples are stored and used to train a model. The training phase has two steps: (1) collecting training data and generating training results, and (2) selecting the model. It begins with the respondent wearing the eSense device. The data is collected through the device's BLE interface and sensors and stored on an Android device. The data is then synchronized with the experimental timeline and processed for analysis.

Data Collection Phase: The earable's accelerometer gives us the translational motion components along the x, y, and z axes. Its built-in gyroscope gives us the rotational components of head movement along the x, y, and z axes.
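The Training Phase below collapses each six-component reading into a translational and a rotational magnitude, then summarizes each magnitude by its mean and standard deviation. The following is a minimal Python sketch of that feature computation. Several details are our own illustrative assumptions rather than details given in the paper: the tuple layout `(ax, ay, az, gx, gy, gz)`, the function names `magnitudes` and `extract_features`, and the use of the population standard deviation.

```python
import math
import statistics
from typing import List, Sequence, Tuple

# One per-second eSense reading. The field order (accelerometer x, y, z followed
# by gyroscope x, y, z) is an assumption made for this sketch.
Sample = Tuple[float, float, float, float, float, float]


def magnitudes(sample: Sample) -> Tuple[float, float]:
    """Collapse a 6-D reading into (translational, rotational) magnitudes."""
    ax, ay, az, gx, gy, gz = sample
    translational = math.sqrt(ax * ax + ay * ay + az * az)
    rotational = math.sqrt(gx * gx + gy * gy + gz * gz)
    return translational, rotational


def extract_features(samples: Sequence[Sample]) -> List[float]:
    """Return [mean_t, std_t, mean_r, std_r] for one recording segment.

    The population standard deviation is used here; the paper does not say
    which estimator was applied.
    """
    if not samples:
        raise ValueError("at least one sample is required")
    trans, rot = zip(*(magnitudes(s) for s in samples))
    return [
        statistics.mean(trans),
        statistics.pstdev(trans),
        statistics.mean(rot),
        statistics.pstdev(rot),
    ]
```

For example, the readings `(3, 4, 0, 0, 0, 0)` and `(0, 0, 5, 1, 2, 2)` have magnitudes (5, 0) and (5, 3), which yields the feature vector `[5.0, 0.0, 1.5, 1.5]`.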
We compute the mean and standard deviation over all data points. The questionnaire responses provide demographic information about the participants as well as their 14 human traits [16]. No single movement component shows a significant outcome on its own, but a combination of components contributes to identifying traits and emotions.

Training Phase: Each head movement data point is a collection of 6 components, covering translational and rotational motion along the x, y, and z axes. It can therefore be treated as a 6-dimensional vector. Taking the magnitude $\sqrt{x^2 + y^2 + z^2}$ separately for the translational and the rotational components reduces this vector to 2 dimensions. The result expresses the overall head movement in translational and rotational form. We train on the mean and standard deviation of this reduced representation.

Testing Accuracy of the Trained Models: After training, we check each model's consistency and correctness and pick the model that best describes each trait. To do so, we pass a sample data point through the selected machine learning model to predict the trait. We then compare the prediction with the original (reported) value.

3.2 Data Collection
This section describes the details of data collection.

3.2.1 Devices Used for Data Collection. Each participant wore an earable called eSense. This device has built-in sensors, including an accelerometer and a gyroscope [8]. The sensors transmit data in real time to an Android device over a Bluetooth Low Energy (BLE) radio connection. We connect the device via Bluetooth to a smartphone running Android 9. Each participant sat in front of a computer that was used to communicate with and receive data from the sensor suites of the earable.
While head movement data was being collected, five different videos were shown on the computer screen to create different emotional environments for the participant. After the head movement data collection phase, each participant filled in our questionnaire, which provided subjective data about them.

3.2.2 Customized Application for Data Collection. Two different applications were used for the whole process. After the initial Bluetooth connection setup, we ran an Android app to start the actual data collection. At the same time, we ran a Java-based desktop application to show the videos and conduct the survey. Timestamps were recorded for each sensor sample on the Android device and for each phase of the desktop application. These timestamps were later used to integrate the collected datasets for the training phase.

3.2.3 Demography of the Participants. Participants in this experiment came from diverse backgrounds. They cover different age groups (18 to 45) and genders (45% female, 55% male), and different levels of behavioral experience and computer literacy. Of the respondents, 70% are frequent computer users, and the rest use computers occasionally. We tried to collect a balanced data set for each trait and succeeded for 7 of the 14 traits.

3.2.4 Challenges in Data Collection. Data collection requires a place free from external distraction. Head movement driven by induced emotion can only be captured if no other external factor or distraction causes even the slightest head movement. This makes the choice of setup environment very demanding, so data collection was only possible as a controlled laboratory experiment.

3.3 Machine Learning Based Analysis
We used the Auto-WEKA package of the Weka software, version 3.8.2. Given a training dataset, it automatically finds the best classification or regression algorithm.
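Auto-WEKA performs combined algorithm selection and hyperparameter optimization over Weka's classifiers. The pure-Python sketch below is not Auto-WEKA and does not reproduce its search strategy. It only illustrates the underlying idea: score several candidate classifiers by k-fold cross-validated accuracy and keep the best one. The three candidates (a majority-class baseline, 1-nearest-neighbour, and nearest centroid) and all function names are hypothetical stand-ins, chosen to keep the example self-contained.

```python
import math
from collections import Counter
from typing import Callable, Dict, List, Sequence, Tuple

Vector = Sequence[float]
# A classifier here is a function (train_x, train_y, x) -> predicted label.
Classifier = Callable[[List[Vector], List[str], Vector], str]


def majority(train_x: List[Vector], train_y: List[str], x: Vector) -> str:
    """Baseline: always predict the most frequent training label."""
    return Counter(train_y).most_common(1)[0][0]


def nearest_neighbour(train_x: List[Vector], train_y: List[str], x: Vector) -> str:
    """1-NN with Euclidean distance."""
    best = min(range(len(train_x)), key=lambda i: math.dist(train_x[i], x))
    return train_y[best]


def nearest_centroid(train_x: List[Vector], train_y: List[str], x: Vector) -> str:
    """Predict the label whose class mean is closest to x."""
    centroids = {}
    for label in sorted(set(train_y)):
        rows = [v for v, y in zip(train_x, train_y) if y == label]
        centroids[label] = [sum(col) / len(rows) for col in zip(*rows)]
    return min(centroids, key=lambda label: math.dist(centroids[label], x))


def cv_accuracy(clf: Classifier, xs: List[Vector], ys: List[str], k: int) -> float:
    """k-fold cross-validated accuracy; fold membership is index modulo k."""
    correct = 0
    for fold in range(k):
        train = [i for i in range(len(xs)) if i % k != fold]
        test = [i for i in range(len(xs)) if i % k == fold]
        tx = [xs[i] for i in train]
        ty = [ys[i] for i in train]
        correct += sum(clf(tx, ty, xs[i]) == ys[i] for i in test)
    return correct / len(xs)


def select_model(candidates: Dict[str, Classifier], xs: List[Vector],
                 ys: List[str], k: int = 5) -> Tuple[str, Dict[str, float]]:
    """Score every candidate and return the best name plus all scores."""
    scores = {name: cv_accuracy(clf, xs, ys, k) for name, clf in candidates.items()}
    best = max(scores, key=scores.get)  # the first-listed candidate wins ties
    return best, scores
```

The sketch assumes k is no larger than the number of samples. In practice the candidate set, the validation scheme, and the time budget (30 to 40 minutes in the study) determine how thorough the search is.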
We ran Auto-WEKA on two parallel cores and gave it approximately 30–40 minutes, enough to check a sufficiently large number of algorithm combinations. Because we collect head movement data while showing five different videos, we obtain five sets of accelerometer and gyroscope data, each recorded during a particular emotional state. We repeat our machine learning analysis for each of these five sets. From the survey questionnaire answered by the participants, we chose 7 traits to train on. We used each participant's answers as the ground truth for all trait- and behavior-related survey questions. For each trait we train one machine learning model. The label is the trait, and the features are the mean and standard deviation of the participant's accelerometer and gyroscope data, as described before. We therefore trained 7 models, one per trait, for each of the five emotional states, for a total of 35 trained models.

Figure 1: Workflow of our proposed system
Figure 2: eSense device
Figure 3: Axis orientation of eSense [17]
Figure 4: Pairing of eSense device

Six of these 7 traits are categorized as yes or no: smoking, practicing religion, high-fat food intake habit, high-sugar food intake habit, heart disease in family, and diabetes in family. Fast-food intake, however, was categorized into 3 ranges (Low, Medium, High) based on the average number of fast-food meals a participant takes per week. To predict a given trait during evaluation, we consider the five emotional-state models for that trait and take the one with the best training accuracy. We also built a model that predicts a participant's emotion from their accelerometer and gyroscope data.
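The emotion label for each sensor sample comes from the video being shown at that moment. Section 3.2.2 notes that both the Android app and the desktop application record timestamps, so labels can be assigned by aligning the two streams. The following is a minimal sketch of that alignment under our own assumptions, none of which the paper states: both devices share a synchronized clock in seconds, video segments do not overlap, and each segment is the half-open interval [start, end). The function name `label_samples` and the segment layout are hypothetical.

```python
from bisect import bisect_right
from typing import List, Optional, Sequence, Tuple

# (start_time, end_time, emotion) for each video segment, as logged by the
# desktop application. Shared clock in seconds is an assumption of this sketch.
Segment = Tuple[float, float, str]


def label_samples(sample_times: Sequence[float],
                  segments: Sequence[Segment]) -> List[Optional[str]]:
    """Assign each sensor timestamp the emotion of the video playing at that time.

    Samples outside every segment (e.g. while the survey is being answered)
    get None and can be dropped before training.
    """
    ordered = sorted(segments)
    starts = [start for start, _, _ in ordered]
    labels: List[Optional[str]] = []
    for t in sample_times:
        i = bisect_right(starts, t) - 1       # last segment starting at or before t
        if i >= 0 and t < ordered[i][1]:     # t inside [start, end)
            labels.append(ordered[i][2])
        else:
            labels.append(None)
    return labels
```

For instance, with segments `(0, 90, "happy")` and `(100, 220, "sad")`, timestamps 10, 95, 150, and 230 are labeled `happy`, `None`, `sad`, and `None`.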
We label the emotional state of a participant's data according to the video the participant was watching at that time. Each emotional state was induced with a specific video:
• Happy: a funny Mr. Bean video.
• Surprise: a food preparation video with background sound.
• Neutral: a video without sound or visual scenery, together with an instruction to keep their eyes closed.
• Sad: a video of people suffering from malnutrition.
• Disgust: an animation showing the effects of smoking on the human body.

Figure 5: Snapshots of the desktop application: (a) notification for the sad segment; (b) sad video.

4 EXPERIMENTAL RESULTS
This section describes the findings of our experiments. Our hypothesis was that head movement patterns change across emotional states. Figure 6 shows head movement data, with both translational and rotational components, in different emotional states. We highlight three representative ones: Figure 6(e, f) shows data from the disgust state, and Figure 6(c, d) shows samples from the sadness-induced period. Figure 6(i, j) shows data collected during a time frame with the surprise emotional state. All these data are consistent with our hypothesis.

4.1 Results for Validation
We go one step further and train and test the validity of the machine learning models described in Section 3. This lets us extract meaningful information and features from head movement data patterns. Choosing the best-suited algorithm among several machine learning algorithms shows that our proposed technique achieves reasonably high training accuracy. Moreover, our proposed technique needs significantly less memory and computation time than the other algorithms, which makes it suitable for practical applications.
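Tables 1 and 3 report weighted averages of TP rate, FP rate, precision, recall, and F-measure. The sketch below computes such values from a confusion matrix. It assumes that each per-class metric is weighted by the number of instances of that actual class; this is our assumption about how the averages were formed, not something the paper specifies. In this sketch, a class that is never predicted has undefined precision (NaN), and that NaN carries over to the weighted precision and F-measure. The function name `weighted_metrics` is hypothetical.

```python
import math
from typing import Dict, List


def weighted_metrics(cm: List[List[int]]) -> Dict[str, float]:
    """Class-frequency-weighted TP rate, FP rate, precision, recall, F-measure.

    cm[i][j] is the number of instances of actual class i predicted as class j.
    """
    n = len(cm)
    total = sum(sum(row) for row in cm)
    keys = ("tp_rate", "fp_rate", "precision", "recall", "f_measure")
    result = {key: 0.0 for key in keys}
    for c in range(n):
        actual = sum(cm[c])                          # support of class c
        if actual == 0:
            continue                                 # zero weight, skip
        predicted = sum(cm[r][c] for r in range(n))  # how often c was predicted
        tp = cm[c][c]
        fp = predicted - tp
        negatives = total - actual
        tp_rate = tp / actual                        # identical to recall
        fp_rate = fp / negatives if negatives else math.nan
        precision = tp / predicted if predicted else math.nan
        if math.isnan(precision):
            f = math.nan
        elif precision + tp_rate == 0:
            f = 0.0
        else:
            f = 2 * precision * tp_rate / (precision + tp_rate)
        weight = actual / total
        for key, value in zip(keys, (tp_rate, fp_rate, precision, tp_rate, f)):
            result[key] += weight * value
    return result
```

Consider a hypothetical 40-instance binary problem with 27 majority and 13 minority instances, where the classifier predicts the majority class every time. This gives a weighted TP rate and FP rate of 0.675 each and NaN precision and F-measure, the same pattern as the NAN rows in Table 3. The paper does not say whether those rows arose this way.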
We use the success rate as the acceptance criterion when comparing algorithms and take the one that gives the best result.

Figure 6: Sensor data for every second of a random user (accelerometer data in panels a, c, e, g, i; gyroscope data in panels b, d, f, h, j). Panels (a) and (b) were recorded while showing the neutral video, (c) and (d) the sad video, (e) and (f) the disgust video, (g) and (h) the happy video, and (i) and (j) the surprise video.

| TP Rate | FP Rate | Precision | Recall | F-measure |
|---|---|---|---|---|
| 100% | 0% | 100% | 100% | 100% |

Table 1: Performance-related values of the model for emotion prediction

| Predicted Trait - Emotion | Accuracy (%) |
|---|---|
| Smoker - Happy | 97.5 |
| Smoker - Neutral | 97.5 |
| Smoker - Surprise | 97.5 |
| Smoker - Sad | 97.5 |
| Smoker - Disgust | 97.5 |
| Practitioner - Happy | 80 |
| Practitioner - Neutral | 80 |
| Practitioner - Surprise | 62.5 |
| Practitioner - Sad | 90 |
| Practitioner - Disgust | 92.5 |
| Fast food intake - Happy | 87.2 |
| Fast food intake - Neutral | 92.3 |
| Fast food intake - Surprise | 66.7 |
| Fast food intake - Sad | 69.23 |
| Fast food intake - Disgust | 71.8 |
| Fat intake - Happy | 82.5 |
| Fat intake - Neutral | 62.5 |
| Fat intake - Surprise | 87.5 |
| Fat intake - Sad | 100 |
| Fat intake - Disgust | 55 |
| Sugar intake - Happy | 95 |
| Sugar intake - Neutral | 97.5 |
| Sugar intake - Surprise | 87.5 |
| Sugar intake - Sad | 97.5 |
| Sugar intake - Disgust | 97.5 |
| Heart disease in family - Happy | 100 |
| Heart disease in family - Neutral | 100 |
| Heart disease in family - Surprise | 92.5 |
| Heart disease in family - Sad | 85 |
| Heart disease in family - Disgust | 100 |
| Diabetes in family - Happy | 67.5 |
| Diabetes in family - Neutral | 67.5 |
| Diabetes in family - Surprise | 80 |
| Diabetes in family - Sad | 67.5 |
| Diabetes in family - Disgust | 72.5 |

Table 2: Accuracy of training models

As we mentioned in the previous section, we train 35 machine learning models for
trait prediction and 1 model for emotion prediction. Table 2 reports the training accuracy of each of the 35 trait-prediction models. The emotion-prediction model achieves 100% accuracy with the AdaBoostM1 classifier and arguments [-P, 99, -I, 20, -Q, -S, 1, -W, weka.classifiers.trees.J48, --, -O, -S, -M, 1, -C, 0.9585694907535297]. Table 1 gives the weighted averages of its performance-related values. Table 3 lists the classifiers and performance-related values of the models behind the accuracies in Table 2. Figure 7 shows, as a bar chart, the accuracy for each trait under each video. Each bar is labeled with the classifier that achieved that accuracy. Figure 7(a) shows the accuracy of fast-food intake prediction from data taken in different emotional states, and Figure 7(b) does the same for high-fat food intake prediction. Figure 7 also shows the prediction models for smoking habit, fast-food intake, high-sugar intake habit, and religious practice. Table 4 lists further details.

4.2 Hardware Requirement in Training Phase
In an evaluation with 46 users, our system shows reasonably high accuracy. The identification task takes only 12 minutes on average, including the time to show 5 videos of approximately 1.5 to 3 minutes each. While the videos play and participants answer the survey questions, the application uses 3–4% of the laptop's CPU and 100–105 MB of memory. Our Android application samples the accelerometer and gyroscope data simultaneously at one-second intervals, without disturbing participants while they watch the videos.

4.3 Accuracy of Different Predictions
After training on the data collected from the participants, we measured the prediction accuracy for each trait.
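Table 4's best accuracies follow from Table 2: for each trait, take the maximum over the five emotion-specific models. This matches the selection rule described in Section 3.3. The short sketch below reproduces that selection from the Table 2 values. The dictionary layout and the function name `best_emotion_model` are ours. Breaking ties in favour of the first-listed emotion is also an assumption, since the paper does not say how ties were resolved.

```python
from typing import Dict, Tuple

# Per-emotion training accuracies (%) copied from Table 2.
TABLE_2: Dict[str, Dict[str, float]] = {
    "Smoker": {"Happy": 97.5, "Neutral": 97.5, "Surprise": 97.5, "Sad": 97.5, "Disgust": 97.5},
    "Practitioner": {"Happy": 80, "Neutral": 80, "Surprise": 62.5, "Sad": 90, "Disgust": 92.5},
    "Fast food intake": {"Happy": 87.2, "Neutral": 92.3, "Surprise": 66.7, "Sad": 69.23, "Disgust": 71.8},
    "Fat intake": {"Happy": 82.5, "Neutral": 62.5, "Surprise": 87.5, "Sad": 100, "Disgust": 55},
    "Sugar intake": {"Happy": 95, "Neutral": 97.5, "Surprise": 87.5, "Sad": 97.5, "Disgust": 97.5},
    "Heart disease in family": {"Happy": 100, "Neutral": 100, "Surprise": 92.5, "Sad": 85, "Disgust": 100},
    "Diabetes in family": {"Happy": 67.5, "Neutral": 67.5, "Surprise": 80, "Sad": 67.5, "Disgust": 72.5},
}


def best_emotion_model(per_emotion: Dict[str, float]) -> Tuple[str, float]:
    """Return (emotion, accuracy) of the best model; ties keep the first listed."""
    emotion = max(per_emotion, key=per_emotion.get)
    return emotion, per_emotion[emotion]


if __name__ == "__main__":
    for trait, per_emotion in TABLE_2.items():
        emotion, acc = best_emotion_model(per_emotion)
        print(f"{trait}: {acc}% ({emotion})")
```

The script prints one line per trait, for example `Diabetes in family: 80% (Surprise)`, and the seven maxima equal the values in Table 4.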
Table 4 summarizes the prediction accuracy, reporting for each trait the maximum accuracy across all emotional states listed in Table 2.

4.4 Threats to Validity
We conducted our research in a controlled laboratory environment, so the same results may not hold in noisy environments. Moreover, none of the respondents to our survey system had disabilities or diseases associated with abnormal head shaking or movement. Experiments with such participants may lead to different conclusions.

5 CONCLUSION AND FUTURE WORK
This paper contributes to human trait and emotional state identification through head movement analysis using different sensors and machine learning techniques. The research finds different head movement patterns in different induced emotional states, and it also finds correlations between physical traits and head movement patterns. In the future, we will recruit more participants to enlarge our data set and improve identification accuracy. To scale further, we will also generate synthetic data from our real collected data to enlarge the training set. In addition, we have explored 14 human traits so far, but many more traits might correlate with head movement, and we plan to explore these in the future. Of the 14 traits, we could build balanced data sets for only 7. We will try to balance the data sets for the remaining traits, such as religious belief, sleep problems, and alcohol consumption. This will let us analyze whether these traits correlate with head movement.
| Predicted Trait-Emotion | Best Classifier | TP Rate (%) | FP Rate (%) | Precision (%) | Recall (%) | F-measure (%) |
|---|---|---|---|---|---|---|
| Smoker-Happy | RandomForest | 97.5 | 11.8 | 97.6 | 97.5 | 97.4 |
| Smoker-Neutral | SMO | 97.5 | 11.8 | 97.6 | 97.5 | 97.4 |
| Smoker-Surprise | Logistic | 97.5 | 11.8 | 97.6 | 97.5 | 97.4 |
| Smoker-Sad | AdaBoostM1 | 97.5 | 11.8 | 97.6 | 97.5 | 97.4 |
| Smoker-Disgust | RandomForest | 97.5 | 11.8 | 97.6 | 97.5 | 97.4 |
| Practitioner-Surprise | AdaBoostM1 | 62.5 | 56.3 | 76.9 | 62.5 | 50.4 |
| Practitioner-Neutral | RandomTree | 80 | 25.8 | 80.5 | 80.0 | 79.3 |
| Practitioner-Happy | JRip | 80 | 21.7 | 80.0 | 80.0 | 58.3 |
| Practitioner-Sad | RandomTree | 90 | 12.9 | 90.2 | 90.0 | 89.9 |
| Practitioner-Disgust | Vote | 92.5 | 5.0 | 93.7 | 92.5 | 92.6 |
| Fastfood intake-Surprise | LWL | 66.7 | 32.9 | 76.6 | 66.7 | 62.5 |
| Fastfood intake-Happy | LWL | 87.2 | 9.2 | 89.0 | 87.2 | 86.7 |
| Fastfood intake-Neutral | RandomTree | 92.3 | 8.1 | 93.3 | 92.3 | 92.0 |
| Fastfood intake-Sad | LWL | 69.2 | 28.7 | 73 | 69.2 | 67.5 |
| Fastfood intake-Disgust | OneR | 71.8 | 16.6 | 74.4 | 71.8 | 72.2 |
| Fat intake-Happy | AdaBoostM1 | 82.5 | 15.3 | 85.0 | 82.5 | 82.4 |
| Fat intake-Surprise | LWL | 87.5 | 12.2 | 87.0 | 87.5 | 87.5 |
| Fat intake-Sad | REPTree | 100 | 0 | 100 | 100 | 100 |
| Fat intake-Neutral | SMO | 62.5 | 43.8 | 65.5 | 62.5 | 58 |
| Fat intake-Disgust | RandomCommittee | 55 | 55 | NaN | 55 | NaN |
| High Sugar intake-Surprise | J48 | 87.5 | 12.8 | 87.8 | 87.5 | 87.6 |
| High Sugar intake-Neutral | REPTree | 97.5 | 4.2 | 97.6 | 97.5 | 97.5 |
| High Sugar intake-Happy | RandomForest | 95 | 5.7 | 95 | 95 | 95 |
| High Sugar intake-Sad | IBk | 97.5 | 4.2 | 97.6 | 97.5 | 97.5 |
| High Sugar intake-Disgust | OneR | 97.5 | 4.2 | 97.6 | 97.5 | 97.5 |
| Heart Disease-Meditate | OneR | 100 | 0 | 100 | 100 | 100 |
| Heart Disease-Happy | LWL | 100 | 0 | 100 | 100 | 100 |
| Heart Disease-Surprise | LWL | 55 | 45 | 55 | 55 | 55 |
| Heart Disease-Disgust | AttributeSelectedClassifier | 100 | 0 | 100 | 100 | 100 |
| Heart Disease-Sad | IBk | 100 | 0 | 100 | 100 | 100 |
| Diabetes-Happy | OneR | 67.5 | 67.5 | NaN | 67.5 | NaN |
| Diabetes-Disgust | OneR | 72.5 | 49.1 | 71.3 | 72.5 | 69 |
| Diabetes-Neutral | MultilayerPerceptron | 67.5 | 67.5 | NaN | 67.5 | NaN |
| Diabetes-Sad | LWL | 67.5 | 67.5 | NaN | 67.5 | NaN |
| Diabetes-Surprise | OneR | 80 | 25.6 | 80 | 80 | 80 |

Table 3: Classifier and performance-related values (weighted average) of training models

| Predicting Question | Best Accuracy (%) |
|---|---|
| Are you a smoker? | 97.5 |
| Do you pray regularly? | 92.5 |
| How many fast food meals in a week? | 92.3 |
| Do you take high fat food? | 100 |
| Do you take high sugar food? | 97.5 |
| Does anybody in your family have heart disease? | 100 |
| Does anybody in your family have diabetes? | 80 |

Table 4: Survey questions and summary of the prediction evaluation

The head movement sensing system could also be studied with people whose head movement patterns stem from illness or habits. These patterns may lead to a deeper understanding of their underlying causes, and we plan to investigate them in the future. Finally, we plan to study the head movement of visually impaired people to explore their traits and emotional states and thereby advance this research.

Figure 7: Accuracy and best classifier for different emotions in identifying traits (panels a and b)

6 ACKNOWLEDGMENT
This work was conducted at Bangladesh University of Engineering and Technology (BUET), Dhaka, Bangladesh. The authors also thank Dr. Fahim Kawsar, Nokia Bell Labs, for providing the eSense devices used in this study.

REFERENCES
[1] Joseph K. Pickrell, Tomaz Berisa, Jimmy Z. Liu, Laure Ségurel, Joyce Y. Tung, and David A. Hinds. Detection and interpretation of shared genetic influences on 42 human traits. Nature Genetics, 48:709, 2016.
[2] S. Samangooei, B. Guo, and M. S. Nixon. The use of semantic human description as a soft biometric. In 2008 IEEE Second International Conference on Biometrics: Theory, Applications and Systems, pages 1–7, September 2008.
[3] H. Gnann, W. Weinmann, C. Engelmann, F. M. Wurst, G. Skopp, M. Winkler, A. Thierauf, V. Auwärter, S. Dresen, and N. Ferreirós Bouzas.
Selecti ve detection of phosphatidylethanol homologues in blood as biomarkers for alcohol consumption by lc-esi-ms/ms. Journal of Mass Spectr ometry , 44(9):1293–1299, 2009. [4] J M Connor and J Mazanov . W ould you dope? a general population test of the goldman dilemma. British Journal of Sports Medicine , 43(11):871–872, 2009. [5] Y ; Ellis R P; Schlein Kramer M Ash, A S; Zhao. Finding future high-cost cases: comparing prior cost versus diagnosis-based methods. Health Services Research , 36(11):194–206, 2001. As Y ou Are, So Shall Y ou Move Y our Head 6th NSysS 2019, December 17–19, 2019, Dhaka, Bangladesh [6] Muhammad Mubashir; Ling Shao; Luke Seed. A survey on fall detection: Princi- ples and approaches. Neurocomputing , 100:144 – 152, 2013. [7] T Rogers. Determining the sex of human remains through cranial morphology . Journal of F orensic Sciences , 50(3):1–8, 2005. [8] Fahim Kawsar , Chulhong Min, Akhil Mathur , Alessandro Montanari, Utku Günay Acer , and Marc V an den Broeck. esense: Open earable platform for human sensing. In Proceedings of the 16th ACM Conference on Embedded Networked Sensor Systems , pages 371–372. A CM, 2018. [9] Harald G. W allbott. Bodily expression of emotion. Eur opean Journal of Social Psychology , 28(6):879–896, 1998. [10] Gunes H.; Pantic M. Dimensional emotion prediction from spontaneous head gestures for interaction with sensiti ve artificial listeners. Intelligent V irtual Agents , pages 371–377, 2010. [11] Jin-Y ung Nam T ek-Jin Lee, Jong-Hoon Park. Emotional interaction through physical movement. Springer Berlin Heidelber g , pages 401–410, 2007. [12] T anya J Clark e, Mark F Bradshaw , David T Field, Sarah E Hampson, and Da vid Rose. The perception of emotion from body movement in point-light displays of interpersonal dialogue. P er ception , 34(10):1171–1180, 2005. PMID: 16309112. [13] K.G. Munhall, Jeffery A. Jones, Daniel E. Callan, T akaaki Kuratate, and Eric V atikiotis-Bateson. 
Visual prosody and speech intelligibility: Head mov ement improves auditory speech perception. Psycholo gical Science , 15(2):133–137, 2004. [14] Shunya Sogon and Makoto Masutani. Identification of emotion from body move- ments: A cross-cultural study of americans and japanese. Psychological Reports , 65(1):35–46E, 1989. [15] Jari KÃd ’tsyri and Mikko Sams. The effect of dynamics on identifying basic emotions from synthetic and natural faces. International Journal of Human- Computer Studies , 66(4):233 – 242, 2008. [16] T atjana Schnell. The sources of meaning and meaning in life questionnaire (some): Relations to demographics and well-being. Routledge , 4(6):483–499, 2009. [17] F . Kawsar, C. Min, A. Mathur, and A. Montanari. Earables for personal-scale behavior analytics. IEEE P ervasive Computing , 17(3):83–89, Jul 2018.
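Table 3 reports weighted averages of per-class metrics. The classifier names in the table (for example SMO, JRip, LWL and REPTree) match classifiers available in the Weka toolkit, but this section does not state exactly how the averages were computed. The Python sketch below therefore assumes binary trait labels and per-class weights proportional to each class's number of true instances; the function name `weighted_metrics` and the confusion matrices are hypothetical. It illustrates two points. First, the weighted TP rate (weighted recall) equals overall accuracy, which is consistent with Table 4, where each "Best accuracy" value equals the highest TP rate listed for that trait in Table 3. Second, a classifier that always predicts the majority class gives identical TP and FP rates and an undefined (NaN) precision and F-measure, a pattern consistent with the NaN rows of Table 3 such as Diabetes-Happy.

```python
import math


def weighted_metrics(confusion):
    """Weighted-average TP rate, FP rate, precision, recall and F-measure.

    confusion[i][j] counts instances of true class i predicted as class j.
    Assumption: per-class values are averaged with weights proportional to
    each class's number of true instances (every class has at least one).
    Undefined ratios (0/0) become NaN.
    """
    n = len(confusion)
    total = sum(sum(row) for row in confusion)

    def ratio(num, den):
        return num / den if den else math.nan

    tp_rate = fp_rate = precision = f_measure = 0.0
    for c in range(n):
        tp = confusion[c][c]
        actual = sum(confusion[c])                          # true instances of class c
        predicted = sum(confusion[r][c] for r in range(n))  # instances predicted as c
        rec = ratio(tp, actual)
        prec = ratio(tp, predicted)                         # NaN if c is never predicted
        fpr = ratio(predicted - tp, total - actual)         # false positives / negatives
        if math.isnan(prec):
            f = math.nan
        elif prec + rec == 0:
            f = 0.0
        else:
            f = 2 * prec * rec / (prec + rec)
        w = actual / total
        tp_rate += w * rec
        fp_rate += w * fpr
        precision += w * prec                               # NaN propagates into the average
        f_measure += w * f
    # Weighted recall is the weighted TP rate, which also equals plain accuracy.
    return {"tp_rate": tp_rate, "fp_rate": fp_rate, "precision": precision,
            "recall": tp_rate, "f_measure": f_measure}


# Hypothetical data: 40 instances, 27 in class 0 and 13 in class 1.
majority_only = [[27, 0], [13, 0]]  # always predicts class 0
one_error = [[26, 1], [0, 13]]      # one mistake out of 40

for name, cm in [("majority-only", majority_only), ("one error", one_error)]:
    m = weighted_metrics(cm)
    print(name, {k: round(100 * v, 1) for k, v in m.items()})
```

For the majority-only matrix, the TP rate, FP rate and recall all come out as 67.5% while precision and F-measure are NaN, because the minority class is never predicted. For the second matrix, the weighted TP rate is 97.5%, the same as the plain accuracy of 39 correct predictions out of 40.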
