Linking emotions to behaviors through deep transfer learning

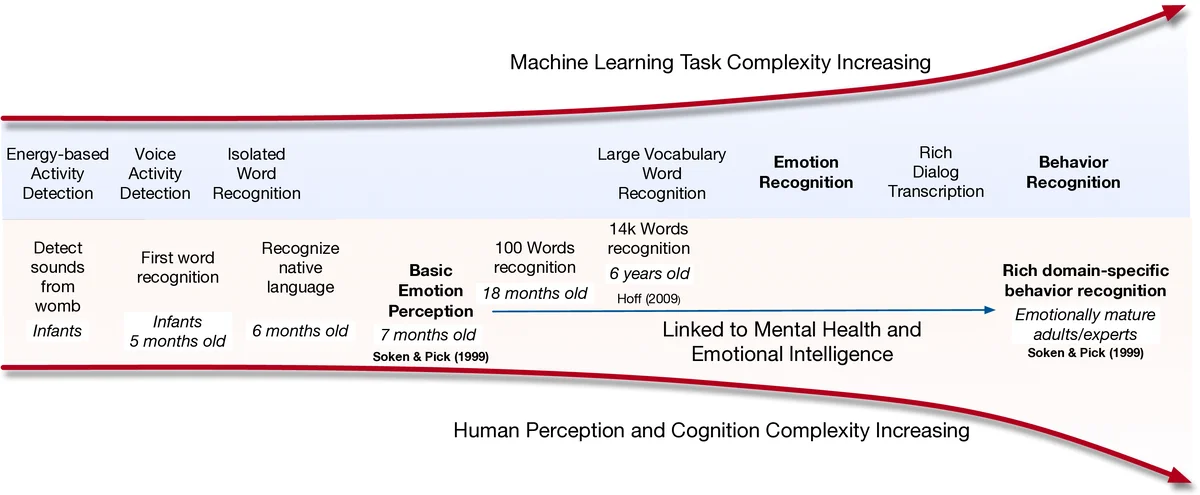

Human behavior refers to the way humans act and interact. Understanding human behavior is a cornerstone of observational practice, especially in psychotherapy. An important cue for behavior analysis is how emotions change dynamically over the course of a conversation. Domain experts integrate emotional information in a highly nonlinear manner; thus, it is challenging to explicitly quantify the relationship between emotions and behaviors. In this work, we employ deep transfer learning to analyze the inferential capacity and contextual importance of emotions for behavior. We first train a network to quantify emotions from acoustic signals and then use information from the emotion recognition network as features for behavior recognition. We treat this emotion-related information as behavioral primitives and further train higher-level layers towards behavior quantification. Through our analysis, we find that emotion-related information is an important cue for behavior recognition. Further, we investigate the importance of emotional context in the expression of behavior by constraining (or not) the neural networks’ contextual view of the data. This analysis demonstrates that the sequence of emotions is critical in behavior expression. To implement these frameworks, we employ hybrid architectures of convolutional and recurrent networks to extract emotion-related behavior primitives and facilitate automatic behavior recognition from speech.

💡 Research Summary

The paper investigates the relationship between human emotions and complex behaviors by leveraging deep transfer learning on speech data. The authors first train a robust speech‑emotion recognition (SER) system using a hybrid convolutional‑recurrent architecture. Raw audio is transformed into mel‑spectrograms, which are processed by several 2‑D convolutional layers to capture local spectral‑temporal patterns. The resulting feature maps are fed into a bidirectional LSTM (or GRU) that models the temporal evolution of emotional cues. This network is trained on a standard SER dataset to classify six basic emotions (anger, disgust, fear, happiness, sadness, surprise) and achieves performance comparable to state‑of‑the‑art systems, demonstrating reliable extraction of emotion‑related representations even from short utterances (≈2 seconds).
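To make the data flow of this hybrid front end concrete, the sketch below traces tensor shapes from a roughly 2‑second clip through three convolution-plus-pooling blocks into a bidirectional LSTM. Every setting here (16 kHz audio, 25 ms windows with a 10 ms hop, 64 mel bands, 32/64/128 channels, 128 hidden units) is a hypothetical choice for illustration, not the configuration reported by the authors, and the helper names are invented. The key step is the reshape that turns the convolutional feature map (channels × mel × time) into one feature vector per time step for the recurrent layer.

```python
# Hypothetical front-end settings for illustration (not taken from the paper).
SAMPLE_RATE = 16_000  # samples per second
WIN_LENGTH = 400      # 25 ms analysis window
HOP_LENGTH = 160      # 10 ms hop between frames
N_MELS = 64           # mel bands per frame


def num_frames(n_samples: int, win: int = WIN_LENGTH, hop: int = HOP_LENGTH) -> int:
    """Number of frames produced by framing without padding."""
    return 1 + (n_samples - win) // hop


def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Output length of a convolution or pooling layer along one axis."""
    return (size + 2 * padding - kernel) // stride + 1


def cnn_rnn_shapes(duration_s: float, channels=(32, 64, 128), rnn_hidden: int = 128):
    """Trace (channels, mel, time) through conv/pool blocks into a BiLSTM."""
    freq, frames = N_MELS, num_frames(int(duration_s * SAMPLE_RATE))
    shapes = [("mel-spectrogram", (1, freq, frames))]
    for ch in channels:
        # A 3x3 convolution with padding 1 preserves both axes...
        freq, frames = conv_out(freq, 3, 1, 1), conv_out(frames, 3, 1, 1)
        # ...and 2x2 max-pooling with stride 2 halves them (rounding down).
        freq, frames = conv_out(freq, 2, 2), conv_out(frames, 2, 2)
        shapes.append((f"conv block ({ch} ch)", (ch, freq, frames)))
    # Flatten channels x mel bands into one feature vector per time step.
    seq_len, feat_dim = frames, channels[-1] * freq
    shapes.append(("RNN input (time, features)", (seq_len, feat_dim)))
    # A bidirectional LSTM concatenates forward and backward hidden states.
    shapes.append(("BiLSTM output (time, 2 * hidden)", (seq_len, 2 * rnn_hidden)))
    return shapes


if __name__ == "__main__":
    for name, shape in cnn_rnn_shapes(2.0):
        print(f"{name:34s} {shape}")
```

Under these assumed settings, a 2‑second clip gives a 64 × 198 mel-spectrogram that ends up as 24 recurrent time steps of 1,024 features each, with 256-dimensional BiLSTM outputs. Pooling along time is what keeps the sequence short enough for the recurrent layer to model cheaply.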

Once the emotion network is trained, its intermediate activations (specifically the final LSTM hidden state or a pooled CNN embedding) are extracted and treated as “emotion primitives.” These vectors are then used as input features for a second network tasked with recognizing domain‑specific behaviors (e.g., positivity, blame, withdrawal) in therapeutic contexts such as couples therapy, depression monitoring, and suicide risk assessment. The behavior network follows the same CNN‑RNN design, but it is trained from scratch on top of the emotion encoder, whose parameters stay frozen; this is a classic transfer‑learning pipeline.
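The freezing step can be illustrated without any deep-learning framework. In the minimal sketch below, `FrozenEmotionEncoder` is a hypothetical stand-in for the pretrained emotion network (a fixed random projection followed by tanh, not the paper's CNN‑RNN), and `BehaviorHead` is a logistic-regression classifier trained with stochastic gradient descent on the encoder's outputs. The toy inputs and labels are synthetic; the point is only that gradients update the head while the encoder weights never change.

```python
import math
import random

IN_DIM, EMB_DIM = 6, 4  # hypothetical input and embedding sizes


class FrozenEmotionEncoder:
    """Stand-in for a pretrained emotion network; its weights are never updated."""

    def __init__(self, seed: int = 42):
        rng = random.Random(seed)
        self.weights = [[rng.uniform(-1, 1) for _ in range(IN_DIM)] for _ in range(EMB_DIM)]

    def embed(self, x):
        # tanh(W x): a fixed "emotion primitive" vector for one input segment.
        return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in self.weights]


class BehaviorHead:
    """Trainable logistic-regression classifier on top of the frozen embeddings."""

    def __init__(self):
        self.w = [0.0] * EMB_DIM
        self.b = 0.0

    def prob(self, emb):
        z = sum(wi * ei for wi, ei in zip(self.w, emb)) + self.b
        # Numerically stable sigmoid.
        if z >= 0:
            return 1.0 / (1.0 + math.exp(-z))
        e = math.exp(z)
        return e / (1.0 + e)

    def sgd_step(self, emb, y, lr=0.5):
        # For sigmoid + binary cross-entropy, dLoss/dz = p - y.
        g = self.prob(emb) - y
        self.w = [wi - lr * g * ei for wi, ei in zip(self.w, emb)]
        self.b -= lr * g


def bce(p, y, eps=1e-12):
    return -(y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps))


def make_toy_data(encoder, n=40, seed=7):
    """Synthetic segments whose (hypothetical) behavior label depends on the embedding."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = [rng.gauss(0, 1) for _ in range(IN_DIM)]
        data.append((x, 1 if encoder.embed(x)[0] > 0 else 0))
    return data


def train_head(encoder, head, data, epochs=50):
    """Train only the head; the encoder is used in forward mode only."""
    embs = [encoder.embed(x) for x, _ in data]
    for _ in range(epochs):
        for emb, (_, y) in zip(embs, data):
            head.sgd_step(emb, y)
    return sum(bce(head.prob(e), y) for e, (_, y) in zip(embs, data)) / len(data)


if __name__ == "__main__":
    enc = FrozenEmotionEncoder()
    before = [row[:] for row in enc.weights]
    loss = train_head(enc, BehaviorHead(), make_toy_data(enc))
    print("mean BCE after training:", round(loss, 3), "vs ln 2 at init:", round(math.log(2), 3))
    print("encoder unchanged:", enc.weights == before)
```

In a real framework the same effect is usually obtained by excluding the encoder's parameters from the optimizer (or disabling their gradients) and training only the layers stacked on top.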

A central contribution is the systematic analysis of contextual information. The authors construct two families of behavior models: (1) full‑context models that preserve the entire sequence of emotion embeddings, and (2) reduced‑context models that either truncate the sequence to fixed windows (1 s, 2 s, 4 s) or randomly shuffle the order of embeddings, effectively destroying temporal dependencies. Empirical results show that full‑context models consistently outperform reduced‑context variants, with average F1‑score improvements ranging from 8 % to 12 % across behavior categories. The gap is especially pronounced for behaviors that unfold over longer time scales (e.g., problem‑solving, cooperation), confirming that the order and continuity of emotional states are critical cues for behavior inference.
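The context conditions can be expressed as different ways of packaging the same sequence of emotion embeddings before it reaches the behavior model. The sketch below assumes embeddings arrive at a fixed rate `rate_hz` (a hypothetical parameter), uses plain list items in place of vectors, and reads "truncate to fixed windows" as cutting the sequence into non-overlapping chunks; the function names are illustrative, and the authors' exact windowing and shuffling procedure may differ.

```python
import random
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def full_context(seq: Sequence[T]) -> List[List[T]]:
    """Full context: the behavior model sees the entire sequence in order."""
    return [list(seq)]


def windowed_context(seq: Sequence[T], window_s: float, rate_hz: float) -> List[List[T]]:
    """Reduced context: non-overlapping windows of about window_s seconds.

    rate_hz is an assumed number of emotion embeddings per second. Each window
    would be classified on its own, so no context crosses window boundaries.
    """
    size = max(1, round(window_s * rate_hz))
    return [list(seq[i:i + size]) for i in range(0, len(seq), size)]


def shuffled_context(seq: Sequence[T], seed: int = 0) -> List[List[T]]:
    """Reduced context: keep every embedding but destroy their temporal order."""
    shuffled = list(seq)
    random.Random(seed).shuffle(shuffled)
    return [shuffled]


if __name__ == "__main__":
    embeddings = list(range(10))  # integers stand in for embedding vectors
    print(full_context(embeddings))
    for w in (1, 2, 4):  # window lengths mentioned in the summary, in seconds
        print(w, "s:", windowed_context(embeddings, window_s=w, rate_hz=2))
    print(shuffled_context(embeddings, seed=1))
```

With ten embeddings at an assumed 2 Hz, a 2‑second window yields chunks of four, four, and two embeddings that the behavior model would score independently, whereas shuffling keeps all ten in a single input but in random order. Both manipulations leave the embeddings themselves untouched, so any drop in performance can be attributed to the lost temporal context.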

The study also compares using discrete emotion labels versus continuous emotion embeddings as inputs to the behavior classifier. While label‑based models achieve reasonable performance, embedding‑based models perform better (a 5 %–9 % absolute gain in F1) because they retain nuanced acoustic and affective information that is lost during categorical labeling.
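The information lost by categorical labeling is easy to see with a toy example. The sketch below treats a six-way softmax posterior over the emotions listed earlier as the "embedding" (a simplification: the summarized method uses hidden activations, not posteriors) and compares it with the one-hot label obtained by taking the argmax. Both function names and the example posteriors are hypothetical.

```python
from typing import List, Sequence

# Emotion classes named in the summary.
EMOTIONS = ("anger", "disgust", "fear", "happiness", "sadness", "surprise")


def embedding_features(posteriors: Sequence[float]) -> List[float]:
    """Continuous input: pass the full distribution through unchanged."""
    return list(posteriors)


def label_features(posteriors: Sequence[float]) -> List[float]:
    """Discrete input: keep only a one-hot vector for the most likely emotion."""
    best = max(range(len(posteriors)), key=lambda i: posteriors[i])
    return [1.0 if i == best else 0.0 for i in range(len(posteriors))]


# Two hypothetical utterances: one clearly angry, one torn between anger and fear.
confident = [0.90, 0.02, 0.02, 0.02, 0.02, 0.02]
ambiguous = [0.35, 0.05, 0.30, 0.05, 0.20, 0.05]

if __name__ == "__main__":
    for name, p in (("confident", confident), ("ambiguous", ambiguous)):
        top = EMOTIONS[label_features(p).index(1.0)]
        print(name, "label:", top, "embedding:", embedding_features(p))
```

Both utterances collapse to the same one-hot "anger" label even though the second is nearly a tie with fear; only the continuous representation lets the behavior model tell them apart.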

Limitations discussed include the relatively small size of annotated behavior datasets, the subjectivity inherent in both emotion and behavior annotations, and the reliance on a single modality (speech). The authors propose future work integrating multimodal cues (text, video), applying domain‑adaptation techniques (adversarial or self‑supervised), and expanding to larger, more diverse corpora to improve generalization.

In summary, the paper presents a novel framework that treats emotion‑derived representations as foundational building blocks for behavior recognition. By demonstrating that (i) emotion embeddings significantly boost behavior classification, (ii) preserving the temporal context of emotions is essential, and (iii) deep transfer learning can bridge the gap between relatively abundant emotion data and scarce behavior annotations, the work advances the field of Behavioral Signal Processing and offers a promising direction for automated analysis in mental‑health applications.
