Learning-based Application-Agnostic 3D NoC Design for Heterogeneous Manycore Systems

The rising use of deep learning and other big-data algorithms has led to an increasing demand for hardware platforms that are computationally powerful, yet energy-efficient. Due to the amount of data parallelism in these algorithms, high-performance 3D manycore platforms that incorporate both CPUs and GPUs present a promising direction. However, as systems use heterogeneity (e.g., a combination of CPUs, GPUs, and accelerators) to improve performance and efficiency, it becomes more pertinent to address the distinct and likely conflicting communication requirements (e.g., CPU memory access latency or GPU network throughput) that arise from such heterogeneity. Unfortunately, it is difficult to quickly explore the hardware design space and choose appropriate tradeoffs between these heterogeneous requirements. To address these challenges, we propose the design of a 3D Network-on-Chip (NoC) for heterogeneous manycore platforms that considers the appropriate design objectives for a 3D heterogeneous system and explores various tradeoffs using an efficient ML-based multi-objective optimization technique. The proposed design space exploration considers the various requirements of its heterogeneous components and generates a set of 3D NoC architectures that efficiently trade off these design objectives. Our findings show that by jointly considering these requirements (latency, throughput, temperature, and energy), we can achieve 9.6% better Energy-Delay Product (EDP) on average at nearly iso-temperature conditions when compared to a thermally-optimized design for 3D heterogeneous NoCs. More importantly, our results suggest that our 3D NoCs optimized for a few applications can be generalized for unknown applications as well. These generalized 3D NoCs incur only a 1.8% (36-tile system) and 1.1% (64-tile system) average performance loss compared to application-specific NoCs.

💡 Research Summary

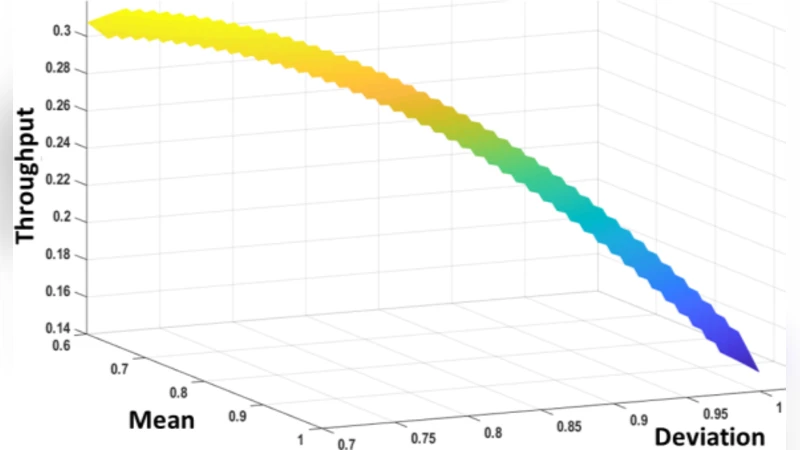

The paper tackles the problem of designing a network‑on‑chip (NoC) for heterogeneous many‑core systems that integrate CPUs, GPUs, and other accelerators in a three‑dimensional (3D) stacked‑die environment. Traditional 2D mesh NoCs and homogeneous‑core design methods do not address the conflicting communication requirements of such systems: CPUs need low memory‑access latency, while GPUs demand high network throughput. Moreover, 3D integration introduces severe thermal constraints due to higher power density. To balance latency, throughput, energy, and temperature objectives simultaneously, the authors formulate a multi‑objective optimization (MOO) problem and introduce a learning‑based optimizer called MOO‑STAGE, which extends the STAGE learning‑based search framework to multi‑objective search.
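To make the multi‑objective framing concrete, the following is a minimal Python sketch of Pareto dominance over the four objectives named above. It is an illustration under stated assumptions, not the authors' formulation: the design names, the objective values, and the choice to negate throughput so that every objective is minimized are all hypothetical.

```python
from typing import Dict, List, Sequence

# Each design is scored as (latency, -throughput, energy, temperature).
# Throughput is negated so that "smaller is better" holds for every entry.
# All values below are invented for illustration only.
designs: Dict[str, Sequence[float]] = {
    "A": (20.0, -0.80, 5.0, 85.0),
    "B": (25.0, -0.90, 5.5, 80.0),
    "C": (26.0, -0.85, 6.0, 86.0),
}


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if `a` is no worse than `b` everywhere and strictly better somewhere."""
    no_worse = all(x <= y for x, y in zip(a, b))
    strictly_better = any(x < y for x, y in zip(a, b))
    return no_worse and strictly_better


def pareto_front(candidates: Dict[str, Sequence[float]]) -> List[str]:
    """Names of the candidates that no other candidate dominates."""
    return [
        name
        for name, obj in candidates.items()
        if not any(dominates(other, obj) for other in candidates.values())
    ]


front = pareto_front(designs)
```

In this toy data, design C is worse than B on every objective and drops out, while A and B each win on different objectives and both remain. A multi‑objective optimizer returns such a set of trade‑off designs rather than a single winner.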

A key insight comes from an extensive traffic‑pattern study. Using gem5‑gpu simulations, the authors run twelve benchmarks from diverse domains (CNNs, graph algorithms, linear algebra, bio‑informatics, etc.) on a 64‑tile 3D heterogeneous platform (8 CPUs, 16 LLCs, 40 GPUs). Heat‑map analysis reveals a consistent “many‑to‑few” communication pattern: the majority of traffic flows between many CPU/GPU cores and a small set of last‑level caches (LLCs). One CPU acts as a master and generates a disproportionate share of CPU‑LLC traffic, while GPU‑LLC traffic is relatively uniform across GPUs. Direct CPU‑GPU communication is negligible. This pattern holds for both the 64‑tile system and a smaller 36‑tile configuration, indicating that traffic characteristics are dictated more by the heterogeneous architecture than by any specific application.
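The many‑to‑few pattern can be illustrated with a small synthetic traffic matrix. The sketch below uses the 8/16/40 tile mix from the text, but the packet rates, the choice of tile 0 as the master CPU, and the helper names are assumptions made for illustration; none of the numbers come from the paper's measurements.

```python
from typing import List

# Tile mix from the text: 8 CPUs, 16 LLCs, 40 GPUs (64 tiles).
KINDS: List[str] = ["CPU"] * 8 + ["LLC"] * 16 + ["GPU"] * 40
MASTER = 0  # hypothetical: tile 0 plays the role of the master CPU


def synthetic_traffic(kinds: List[str]) -> List[List[float]]:
    """Build f[src][dst] with invented rates that mimic the described pattern."""
    n = len(kinds)
    f = [[0.0] * n for _ in range(n)]
    for s in range(n):
        for d in range(n):
            if s == d:
                continue
            pair = {kinds[s], kinds[d]}
            if pair == {"GPU", "LLC"}:
                f[s][d] = 10.0  # uniform GPU-LLC traffic
            elif pair == {"CPU", "LLC"}:
                f[s][d] = 8.0 if MASTER in (s, d) else 1.0  # master CPU is heavier
            elif pair == {"CPU", "GPU"}:
                f[s][d] = 0.01  # near-negligible direct CPU-GPU traffic
    return f


traffic = synthetic_traffic(KINDS)
n = len(KINDS)
pairs = [(s, d) for s in range(n) for d in range(n)]

total = sum(traffic[s][d] for s, d in pairs)
llc_total = sum(traffic[s][d] for s, d in pairs if "LLC" in (KINDS[s], KINDS[d]))
cpu_llc = sum(traffic[s][d] for s, d in pairs if {KINDS[s], KINDS[d]} == {"CPU", "LLC"})
master_cpu_llc = sum(
    traffic[s][d]
    for s, d in pairs
    if {KINDS[s], KINDS[d]} == {"CPU", "LLC"} and MASTER in (s, d)
)

llc_share = llc_total / total               # fraction of traffic touching an LLC
master_share = master_cpu_llc / cpu_llc     # master CPU's part of CPU-LLC traffic
```

With these invented rates, nearly all traffic has an LLC endpoint and one of the eight CPUs carries more than half of the CPU‑LLC traffic. Those are the two features a heat map of a real trace would be inspected for.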

Leveraging this observation, the authors argue that a NoC optimized for a few representative workloads can be generalized to unseen applications with minimal performance loss. They therefore design an “application‑agnostic” NoC architecture that primarily addresses the many‑to‑few traffic to LLCs, rather than tailoring to individual workload nuances.

The MOO‑STAGE algorithm accelerates exploration of the very large design space. Conventional MOO methods based on evolutionary search or simulated annealing (e.g., NSGA‑II, AMOSA) are guided only by the current population or current solution and typically need many iterations. MOO‑STAGE instead learns from previous search trajectories to predict promising regions of the design space. This learned guidance reduces the number of evaluated configurations by 30‑40% while preserving solution quality. Comparative experiments show that MOO‑STAGE reaches the same Pareto front as AMOSA and a branch‑and‑bound method (PCBB) with substantially lower runtime, which indicates better scalability.
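The general idea of learning from search trajectories can be sketched on a toy problem. The code below is a single‑objective, one‑dimensional illustration of a STAGE‑style loop (local search, fit a predictor of where local search ends up, use the predictor to choose the next starting point). It is not the authors' MOO‑STAGE: the cost function, the linear predictor, and every name in it are hypothetical, and the multi‑objective machinery is omitted.

```python
from typing import Callable, List, Tuple

LOW, HIGH = 0, 100  # toy 1-D "design space" (hypothetical)


def cost(x: int) -> int:
    """Toy objective: a global trend toward x = 100 plus local ripples."""
    return (HIGH - x) + 6 * (x % 5)


def neighbors(x: int) -> List[int]:
    return [n for n in (x - 1, x + 1) if LOW <= n <= HIGH]


def descend(start: int, score: Callable[[int], float]) -> List[int]:
    """Greedy descent on `score`; returns the trajectory of visited states."""
    path = [start]
    while True:
        best = min(neighbors(path[-1]), key=score)
        if score(best) >= score(path[-1]):
            return path
        path.append(best)


def fit_line(samples: List[Tuple[int, float]]) -> Callable[[int], float]:
    """Least-squares fit V(x) = a + b*x to (state, eventual outcome) pairs."""
    n = len(samples)
    mx = sum(x for x, _ in samples) / n
    my = sum(y for _, y in samples) / n
    var = sum((x - mx) ** 2 for x, _ in samples)
    b = sum((x - mx) * (y - my) for x, y in samples) / var if var else 0.0
    return lambda x: my + b * (x - mx)


def stage_like_search(starts: List[int], rounds: int = 3) -> Tuple[int, List[int]]:
    samples: List[Tuple[int, float]] = []
    optima: List[int] = []
    queue = list(starts)
    for _ in range(rounds):
        for s in queue:
            path = descend(s, cost)              # 1) ordinary local search
            outcome = cost(path[-1])
            samples += [(x, outcome) for x in path]  # label states with outcome
            optima.append(path[-1])
        value = fit_line(samples)                # 2) learn "where does search end?"
        queue = [descend(optima[-1], value)[-1]]  # 3) pick next start using V
    return min(optima, key=cost), optima


best, optima = stage_like_search([12, 33])
```

Plain greedy descent from either start stalls at a nearby ripple (x = 10 or x = 30). The fitted predictor picks up the global trend from those two trajectories and steers the next start to x = 100, where the toy cost is zero. A linear predictor suffices only because this toy landscape has a monotone trend; a real design space would need richer features and a richer model.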

At nearly iso‑temperature conditions, the jointly optimized designs achieve an average 9.6% improvement in Energy‑Delay Product (EDP) over a thermally‑optimized baseline. On the 36‑tile and 64‑tile systems, the generalized NoC incurs only 1.8% and 1.1% average performance loss, respectively, relative to application‑specific NoCs. These figures suggest that the many‑to‑few traffic pattern dominates performance and that a single, well‑designed NoC can serve a wide range of workloads.
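For readers unfamiliar with the metric, EDP is the product of energy consumed and execution time, so a design can improve it by saving energy, by finishing sooner, or both. The short sketch below shows how a relative EDP improvement is computed. The energy and delay values are hypothetical and are not taken from the paper.

```python
def edp(energy_joules: float, delay_seconds: float) -> float:
    """Energy-Delay Product: lower is better."""
    return energy_joules * delay_seconds


def improvement_pct(baseline: float, candidate: float) -> float:
    """Relative reduction of `candidate` versus `baseline`, in percent."""
    return 100.0 * (baseline - candidate) / baseline


# Hypothetical measurements for two designs running the same workload.
thermal_only = edp(energy_joules=2.0, delay_seconds=0.50)
joint = edp(energy_joules=1.9, delay_seconds=0.48)

gain = improvement_pct(thermal_only, joint)
```

In this made‑up case a 5% energy saving and a 4% delay saving combine to an EDP gain of a little under 9%, because the two factors multiply.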

In summary, the paper makes three major contributions: (1) a comprehensive traffic‑pattern analysis revealing architecture‑driven communication characteristics across diverse applications; (2) the proposal of a generalized, application‑agnostic 3D NoC architecture that achieves near‑optimal latency, throughput, energy, and temperature trade‑offs; and (3) the development of the MOO‑STAGE optimizer, which dramatically speeds up multi‑objective NoC design without sacrificing solution quality. The work opens avenues for extending the methodology to newer accelerator types, dynamic workload adaptation, and online thermal‑aware reconfiguration, positioning learning‑driven multi‑objective optimization as a practical tool for future heterogeneous 3D many‑core systems.
