Ludwig: a type-based declarative deep learning toolbox

In this work we present Ludwig, a flexible, extensible and easy to use toolbox which allows users to train deep learning models and use them for obtaining predictions without writing code. Ludwig implements a novel approach to deep learning model bui…

Authors: Piero Molino, Yaroslav Dudin, Sai Sumanth Miryala

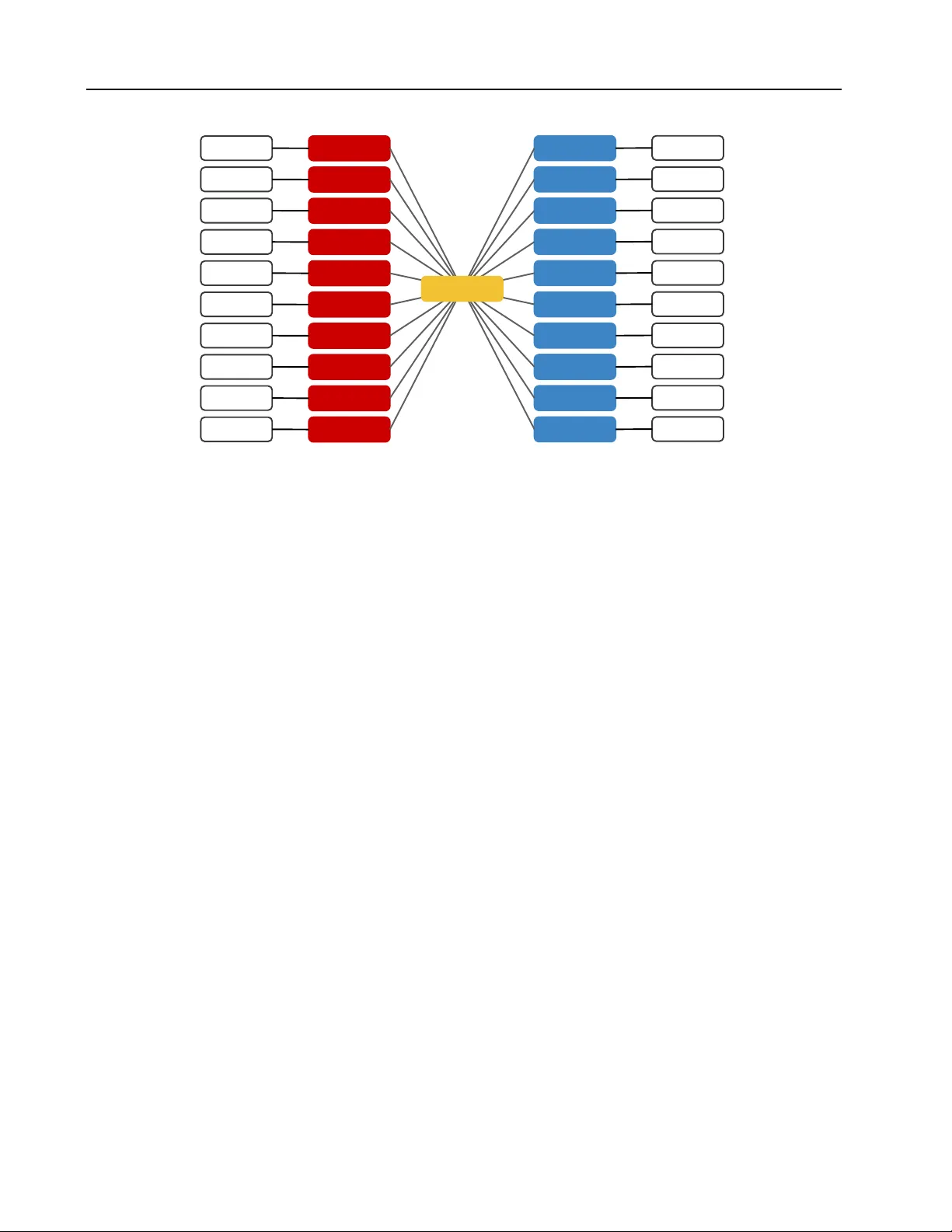

Piero Molino¹, Yaroslav Dudin¹, Sai Sumanth Miryala¹

¹ Uber AI, San Francisco, USA. Correspondence to: Piero Molino <piero@uber.com>. Preliminary work. Under review by the Systems and Machine Learning (SysML) Conference. Do not distribute.

ABSTRACT

In this work we present Ludwig, a flexible, extensible and easy-to-use toolbox that allows users to train deep learning models and use them to obtain predictions without writing code. Ludwig implements a novel approach to deep learning model building based on two main abstractions: data types and declarative configuration files. The data type abstraction allows for easier code and sub-model reuse, and the standardized interfaces imposed by this abstraction allow for encapsulation and make the code easy to extend. Declarative model definition configuration files enable inexperienced users to obtain effective models and increase the productivity of expert users. Alongside these two innovations, Ludwig introduces a general modularized deep learning architecture called Encoder-Combiner-Decoder that can be instantiated to perform a vast number of machine learning tasks. These innovations make it possible for engineers, scientists from other fields and, in general, a much broader audience to adopt deep learning models for their tasks, concretely helping in its democratization.

1 INTRODUCTION AND MOTIVATION

Over the course of the last ten years, deep learning models have proven highly effective in almost every machine learning task in different domains including (but not limited to) computer vision, natural language, speech, and recommendation. Their wide adoption in both research and industry has been greatly facilitated by increasingly sophisticated software libraries like Theano (Theano Development Team, 2016), TensorFlow (Abadi et al., 2015), Keras (Chollet et al., 2015), PyTorch (Paszke et al., 2017), Caffe (Jia et al., 2014), Chainer (Tokui et al., 2015), CNTK (Seide & Agarwal, 2016) and MXNet (Chen et al., 2015). Their main value has been to provide tensor algebra primitives with efficient implementations which, together with the massively parallel computation available on GPUs, enabled researchers to scale training to bigger datasets. Those packages, moreover, provided standardized implementations of automatic differentiation, which greatly simplified model implementation. Researchers, no longer having to spend time re-implementing these basic building blocks from scratch and having fast and reliable implementations of them, were able to focus on models and architectures, which led to the explosion of new *Net model architectures of the last five years.

With artificial neural network architectures being applied to a wide variety of tasks, common practices regarding how to handle certain types of input information emerged. When faced with a computer vision problem, a practitioner pre-processes data using the same pipeline that resizes images, augments them with some transformation and maps them into 3D tensors. Something similar happens for text data, where text is tokenized into a list of words, characters or word pieces, a vocabulary with associated numerical IDs is collected and sentences are transformed into vectors of integers. Specific architectures are adopted to encode different types of data into latent representations: convolutional neural networks are used to encode images, and recurrent neural networks are adopted for sequential data and text (more recently, self-attention architectures are replacing them).
Most practitioners working on a multi-class classification task would project latent representations into vectors of the size of the number of classes to obtain logits and apply a softmax operation to obtain probabilities for each class, while for regression tasks they would map latent representations into a single dimension with a linear layer, and the single score is the predicted value.

Observing these emerging patterns led us to define abstract functions that identify equivalence classes of model architectures. For instance, most of the different architectures for encoding images can be seen as different implementations of the abstract encoding function

$T'_{h' \times w' \times c'} = e_\theta(T_{h \times w \times c})$

where $T_{dims}$ denotes a tensor with dimensions $dims$ and $e_\theta$ is an encoding function parametrized by parameters $\theta$ that maps from tensor to tensor. In tasks like image classification, $T'$ is pooled and flattened (i.e., a reduce function is applied spatially and the output tensor is reshaped as a vector) before being provided to, again, an abstract function that computes

$T'_c = d_\theta(T_h)$

where $T_h$ is a one-dimensional tensor of hidden size $h$, $T'_c$ is a one-dimensional tensor of size $c$ equal to the number of classes, and $d_\theta$ is a decoding function parametrized by parameters $\theta$ that maps a hidden representation into logits and is usually implemented as a stack of fully connected layers. Similar abstract encoding and decoding functions that generalize many different architectures can be defined for different types of input data and different types of expected output predictions (which in turn define different types of tasks).

    {input_features: [{name: img, type: image, encoder: vgg}],
     output_features: [{name: class, type: category}]}

    {input_features: [{name: img, type: image, encoder: resnet}],
     output_features: [{name: class, type: category}]}

    {input_features: [{name: utterance, type: text, encoder: rnn}],
     output_features: [{name: tags, type: set}]}

Figure 1. Examples of declarative model definitions. The first two show two models for image classification using two different encoders, while the third shows a multi-label text classification system. Note that, apart from the names of input and output features, which are just identifiers, all that needs to be changed to encode with a different image encoder is just the name of the encoder, while for changing tasks all that needs to be changed is the types of the inputs and outputs.

We introduce Ludwig, a deep learning toolbox based on the above-mentioned level of abstraction, with the aim of encapsulating best practices and taking advantage of inheritance and code modularity. Ludwig makes it much easier for practitioners to compose their deep learning models by just declaring their data and task, and it makes code reusable and extensible while favoring best practices. The equivalence classes are named after the data type of the inputs encoded by the encoding functions (image, text, series, category, etc.) and the data type of the outputs predicted by the decoding functions. This type-based abstraction allows for a higher level interface than what is available in current deep learning frameworks, which abstract at the level of single tensor operations or at the layer level. This is achieved by defining abstract interfaces for each data type, which allows for extensibility, as any new implementation of the interface is a drop-in replacement for all the other implementations already available.
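The abstract encoding and decoding functions above can be illustrated with a small sketch. It is not Ludwig's implementation: the class names `ImageEncoder`, `MeanPoolEncoder` and `MaxPoolEncoder` are hypothetical, nested Python lists stand in for tensors, and trivial pooling operations stand in for real encoders such as VGG or ResNet. The point is only that any implementation of the encoder interface can be swapped in without touching the decoder.

```python
import math
from abc import ABC, abstractmethod
from typing import List

Image = List[List[List[float]]]  # nested lists standing in for a tensor of shape h x w x c


class ImageEncoder(ABC):
    """Abstract encoding function e_theta, followed by spatial pooling."""

    @abstractmethod
    def encode(self, image: Image) -> List[float]:
        """Map an h x w x c image to a pooled, flattened hidden vector."""


class MeanPoolEncoder(ImageEncoder):
    # Toy stand-in for a real encoder: averages each channel over all pixels.
    def encode(self, image: Image) -> List[float]:
        pixels = [px for row in image for px in row]
        return [sum(px[k] for px in pixels) / len(pixels) for k in range(len(pixels[0]))]


class MaxPoolEncoder(ImageEncoder):
    # Second toy stand-in: takes the per-channel maximum over all pixels.
    def encode(self, image: Image) -> List[float]:
        pixels = [px for row in image for px in row]
        return [max(px[k] for px in pixels) for k in range(len(pixels[0]))]


def decode(hidden, weights, bias):
    """d_theta: one linear layer mapping a hidden vector of size h to c logits."""
    return [sum(w * x for w, x in zip(row, hidden)) + b for row, b in zip(weights, bias)]


def softmax(logits):
    shift = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(v - shift) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(image, encoder, weights, bias):
    """Compose encoder and decoder; any ImageEncoder can be swapped in."""
    return softmax(decode(encoder.encode(image), weights, bias))


image = [[[0.0, 1.0, 2.0], [2.0, 1.0, 0.0]],
         [[4.0, 1.0, 2.0], [2.0, 1.0, 4.0]]]   # h = 2, w = 2, c = 3
weights = [[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]]   # c = 2 classes, hidden size 3
bias = [0.0, 0.0]

for encoder in (MeanPoolEncoder(), MaxPoolEncoder()):
    print(type(encoder).__name__, classify(image, encoder, weights, bias))
```

Because `classify` depends only on the abstract interface, replacing one encoder with another changes a single argument, which mirrors the one-word change between the first two definitions in Figure 1.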
Concretely, this allows for defining, for instance, a model that includes an image encoder and a category decoder and being able to swap in and out VGG (Simonyan & Zisserman, 2015), ResNet (He et al., 2016) or DenseNet (Huang et al., 2017) as different interchangeable implementations of an image encoder. The natural consequence of this level of abstraction is associating a name to each encoder for a specific type and enabling the user to declare what model to employ rather than requiring them to implement it imperatively, while at the same time letting the user add new and custom encoders. The same also applies to data types other than images and to decoders.

With such type-based interfaces in place and implementations of such interfaces readily available, it becomes possible to construct a deep learning model simply by specifying the type of the features in the data and selecting the implementation to employ for each data type involved. Consequently, Ludwig has been designed around the idea of a declarative specification of the model to allow a much wider audience (including people who do not code) to adopt deep learning models, effectively democratizing them. Three such model definitions are shown in Figure 1.

The main contribution of this work is that, thanks to this higher level of abstraction and its declarative nature, Ludwig allows inexperienced users to easily build deep learning models, while allowing experts to decide the specific modules to employ with their hyper-parameters and to add additional custom modules. Ludwig's other main contribution is the general modular architecture defined through the type-based abstraction, which allows for code reuse, flexibility, and the performance of a wide array of machine learning tasks under a cohesive framework.
The remainder of this work describes Ludwig's architecture in detail, explains its implementation, compares Ludwig with other deep learning frameworks and discusses its advantages and disadvantages.

Figure 2. Data type functions flow. (Diagram labels: Data point, Pre-processor, Encoder, Decoder, Post-processor, Prediction, Metrics, Scores.)

2 ARCHITECTURE

The notation used in this section is defined as follows. Let $d \sim D$ be a data point sampled from a dataset $D$. Each data point is a tuple of typed values called features. They are divided into two sets: $d_I$ is the set of input features and $d_O$ is the set of output features. $d_i$ will refer to a specific input feature, while $d_o$ will refer to a specific output feature. Model predictions given input features $d_I$ are denoted as $d_P$, so that there will be a specific prediction $d_p$ for each output feature $d_o \in d_O$. The types of the features can be either atomic (scalar) types like binary, numerical or category, or complex ones like discrete sequences or sets. Each data type is associated with abstract function types, as is explained in the following section, to perform type-specific operations on features and tensors. Tensors are a generalization of scalars, vectors, and matrices with $n$ ranks of different dimensions. Tensors are referred to as $T_{dims}$ where $dims$ indicates the dimensions for each rank, for instance $T_{l \times m \times n}$ for a rank 3 tensor of dimensions $l$, $m$ and $n$ respectively for each rank.

2.1 Type-based Abstraction

Type-based abstraction is one of the main concepts that define Ludwig's architecture.
Currently, Ludwig supports the following types: binary, numerical (floating point values), category (unique strings), set of categorical elements, bag of categorical elements, sequence of categorical elements, time series (sequence of numerical elements), text, image, audio (which doubles as speech when using different pre-processing parameters), date, H3 (Brodsky et al., 2018) (a geo-spatial indexing system), and vector (one-dimensional tensor of numerical values). The type-based abstraction makes it easy to add more types.

The motivation behind this abstraction stems from the observation of recurring patterns in deep learning projects: pre-processing code is more or less the same given certain types of inputs and specific tasks, as is the code implementing models and training loops. Small differences make models hard to compare and their code difficult to reuse. By modularizing the code on a data type basis, our aim is to improve code reuse, adoption of best practices and extensibility.

Each data type has five abstract function types associated with it, and there could be multiple implementations of each of them:

• Pre-processor: a pre-processing function $T_{dims} = \mathrm{pre}(d_i)$ maps a raw data point input feature $d_i$ into a tensor $T$ with dimensions $dims$. Different data types may have different pre-processing functions and different dimensions of $T$. A specific type may, moreover, have different implementations of $\mathrm{pre}$. A concrete example is text: $d_i$ in this case is a string of text, there could be different tokenizers that implement $\mathrm{pre}$ by splitting on space or using byte-pair encoding and mapping tokens to integers, and $dims$ is $s$, the length of the sequence of tokens.

• Encoder: an encoding function $T'_{dims'} = e_\theta(T_{dims})$ maps an input tensor $T$ into an output tensor $T'$ using parameters $\theta$. The dimensions $dims$ and $dims'$ may be different from each other and depend on the specific data type. The input tensor is the output of a $\mathrm{pre}$ function. Concretely, encoding functions for text, for instance, take as input $T_s$ and produce $T_h$, where $h$ is a hidden dimension, if the output is required to be pooled, or $T_{s \times h}$ if the output is not pooled. Examples of possible implementations of $e_\theta$ are CNNs, bidirectional LSTMs or Transformers.

• Decoder: a decoding function $\hat{T}_{dims''} = d_\theta(T'_{dims'})$ maps an input tensor $T'$ into an output tensor $\hat{T}$ using parameters $\theta$. The dimensions $dims''$ and $dims'$ may be different from each other and depend on the specific data type. $T'$ is the output of an encoding function or of a combiner (explained in the next section). Concretely, a decoder function for the category type would map a $T_h$ input tensor into a $T_c$ tensor, where $c$ is the number of classes.

• Post-processor: a post-processing function $d_p = \mathrm{post}(\hat{T}_{dims''})$ maps a tensor $\hat{T}$ with dimensions $dims''$ into a raw data point prediction $d_p$. $\hat{T}$ is the output of a decoding function. Different data types may have different post-processing functions and different dimensions of $\hat{T}$. A specific type may, moreover, have different implementations of $\mathrm{post}$. A concrete example is text: $d_p$ in this case is a string of text, and there could be different functions that implement $\mathrm{post}$ by first mapping integer predictions into tokens and then concatenating on space or using byte-pair concatenation to obtain a single string of text.

• Metrics: a metric function $s = m(d_o, d_p)$ produces a score $s$ given a ground truth output feature $d_o$ and a predicted output $d_p$ of the same dimension. $d_p$ is the output of a post-processing function. In this context, for simplicity, loss functions are considered to belong to the metric class of functions. Many different metrics may be associated with the same data type. Concrete examples of metrics for the category data type are accuracy, precision, recall, F1, and cross entropy loss, while for the numerical data type they could be mean squared error, mean absolute error, and R2.

A depiction of how the functions associated with a data type are connected to each other is provided in Figure 2.

2.2 Encoders-Combiner-Decoders

In Ludwig, every model is defined in terms of encoders that encode different features of an input data point, a combiner which combines information coming from the different encoders, and decoders that decode the information from the combiner into one or more output features. This generic architecture is referred to as Encoders-Combiner-Decoders (ECD). A depiction is provided in Figure 3.

Figure 3. Encoder-Combiner-Decoder Architecture. (Diagram: an INPUT block with one encoder per feature, a COMBINER block, and an OUTPUT block with one decoder per feature; feature types shown on both sides are Text, Category, Numerical, Binary, Sequence, Set, Image, Time Series, Audio, ...)

This architecture is introduced because it maps naturally onto most deep learning model architectures and allows for modular composition. This characteristic, enabled by the data type abstraction, allows for defining models by just declaring the data types of the input and output features involved in the task and assembling standard sub-modules accordingly rather than writing a full model from scratch.

A specific instantiation of an ECD architecture can have multiple input features of different or the same type, and the same is true for output features. For each feature in the input part, pre-processing and encoding functions are computed depending on the type of the feature, while for each feature in the output part, decoding, metrics and post-processing functions are computed, again depending on the type of each output feature.
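As an illustration of the type-specific functions listed in Section 2.1, the following sketch shows one possible pre-processor and post-processor for the text type (splitting and joining on space) and one metric for the category type (accuracy). The names `build_vocab`, `pre`, `post`, `accuracy` and the `<UNK>` token are assumptions made for this example and are not taken from Ludwig's code base; the encoder and decoder are omitted because they carry learned parameters.

```python
from typing import Dict, List

UNKNOWN = "<UNK>"  # hypothetical placeholder for out-of-vocabulary tokens


def build_vocab(texts: List[str]) -> Dict[str, int]:
    """Collect a vocabulary with associated integer IDs from training texts."""
    vocab = {UNKNOWN: 0}
    for text in texts:
        for token in text.split():
            # setdefault only assigns a new ID when the token is not yet known
            vocab.setdefault(token, len(vocab))
    return vocab


def pre(text: str, vocab: Dict[str, int]) -> List[int]:
    """pre(d_i): map a raw string into a tensor T_s of token IDs."""
    return [vocab.get(token, vocab[UNKNOWN]) for token in text.split()]


def post(ids: List[int], vocab: Dict[str, int]) -> str:
    """post(T): map predicted token IDs back into a single string."""
    inverse = {index: token for token, index in vocab.items()}
    return " ".join(inverse[i] for i in ids)


def accuracy(truth: List[str], predicted: List[str]) -> float:
    """m(d_o, d_p): fraction of predictions equal to the ground truth."""
    matches = sum(1 for t, p in zip(truth, predicted) if t == p)
    return matches / len(truth)


vocab = build_vocab(["the cat sat", "the dog"])
print(pre("the dog ran", vocab))
print(post([1, 2, 3], vocab))
print(accuracy(["a", "b", "c", "d"], ["a", "b", "x", "d"]))
```

A byte-pair tokenizer would be a second implementation of the same `pre` and `post` signatures, which is what makes the two interchangeable for the rest of the pipeline.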
When multiple input features are provided, a combiner function $\{T''\} = c_\theta(\{T'\})$ that maps a set of input tensors $\{T'\}$ into a set of output tensors $\{T''\}$ is computed. $c$ has an abstract interface and many different functions can implement it. One concrete example is what in Ludwig is called the concat combiner: it flattens all the tensors in the input set, concatenates them and passes them to a stack of fully connected layers, the output of which is provided as output, a set of only one tensor. Note that a possible implementation of a combiner function can be the identity function.

This definition of the combiner function allows for implementations where subsets of inputs are provided to different sub-modules which return subsets of the output tensors, or even for a recursive definition where the combiner function is an ECD model itself, albeit without pre-processors and post-processors, since inputs and outputs are already tensors and do not need to be pre-processed and post-processed. Although the combiner definition in the ECD architecture is theoretically flexible, the current implementations of combiner functions in Ludwig are monolithic (without sub-modules), non-recursive, and return a single tensor as output instead of a set of tensors. However, more elaborate combiners can be added easily.
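The concat combiner described above can be sketched in a few lines. This is a plain-Python illustration under stated assumptions, not Ludwig's code: nested lists stand in for tensors, the function names are hypothetical, and ReLU is assumed as the activation of each fully connected layer since the text does not name one.

```python
from typing import List, Tuple

# One fully connected layer is represented as (weights, bias).
Layer = Tuple[List[List[float]], List[float]]


def flatten(tensor) -> List[float]:
    """Recursively flatten a nested list into a one-dimensional list."""
    if isinstance(tensor, (list, tuple)):
        return [value for item in tensor for value in flatten(item)]
    return [float(tensor)]


def fully_connected(inputs: List[float], weights, bias) -> List[float]:
    """Linear layer followed by ReLU (the activation is an assumption)."""
    return [max(0.0, sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, bias)]


def concat_combiner(encoder_outputs, layers: List[Layer]) -> List[List[float]]:
    """Flatten every encoder output, concatenate, apply the layer stack."""
    hidden = [value for tensor in encoder_outputs for value in flatten(tensor)]
    for weights, bias in layers:
        hidden = fully_connected(hidden, weights, bias)
    return [hidden]  # a set containing a single output tensor


encoder_outputs = [[1.0, 2.0], [[3.0], [4.0]]]  # two encoders, different shapes
layers = [([[1.0, 1.0, 1.0, 1.0], [-1.0, 0.0, 0.0, 0.0]], [0.0, 0.0])]
combined = concat_combiner(encoder_outputs, layers)
print(combined)
```

Passing an empty `layers` list reduces the function to flatten-and-concatenate, which is close to the identity combiner mentioned in the text when there is a single input.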
The ECD architecture allows for many instantiations by combining different input features of different data types with different output features of different data types, as depicted in Figure 4.

Figure 4. Different instantiations of the ECD architecture for different machine learning tasks. (Panels: text classification; image captioning; generic regression; visual question answering; speaker verification; multi-label event detection; text part-of-speech tagging and named entity recognition; speech transcription and translation.)

An ECD with an input text feature and an output categorical feature can be trained to perform text classification or sentiment analysis, and an ECD with an input image feature and a text output feature can be trained to perform image captioning, while an ECD with categorical, binary and numerical input features and a numerical output feature can be trained to perform regression tasks like predicting house prices, and an ECD with numerical, binary and categorical input features and a binary output feature can be trained to perform tasks like fraud detection. This architecture is very flexible and is limited only by the availability of data types and the implementations of their functions.

An additional advantage of this architecture is its ability to perform multi-task learning (Caruana, 1993).
If more than one output feature is specified, an ECD architecture can be trained to minimize the weighted sum of the losses of each output feature in an end-to-end fashion. This approach has been shown to be highly effective in both vision and natural language tasks, achieving state of the art performance (Ratner et al., 2019b). Moreover, multiple outputs can be correlated or have logical or statistical dependency with each other. For example, if the task is to predict both parts of speech and named entity tags from a sentence, the named entity tagger will most likely achieve higher performance if it is provided with the predicted parts of speech (assuming the predictions are better than chance, and there is correlation between part of speech and named entity tag). In Ludwig, dependencies between outputs can be specified in the model definition, a directed acyclic graph among them is constructed at model building time, and either the last hidden representation or the predictions of the origin output feature are provided as inputs to the decoder of the destination output feature. This process is depicted in Figure 5. When non-differentiable operations are performed to obtain the predictions, for instance argmax in the case of category features performing multi-class classification, the logits or the probabilities are provided instead, keeping the multi-task training process end-to-end differentiable.

    output_features: [
      {name: OF1, type: , dependencies: [OF2]},
      {name: OF2, type: , dependencies: []},
      {name: OF3, type: , dependencies: [OF2, OF1]}
    ]

Figure 5. Output feature dependencies declared in a model definition. (Diagram: the Combiner Output feeds the OF2, OF1 and OF3 Decoders; the OF2 Loss, OF1 Loss and OF3 Loss are summed into a Combined Loss.)

This generic formulation of multi-task learning as a directed acyclic graph of task dependencies is related to the hierarchical multi-task learning in Snorkel MeTaL proposed by Ratner et al. (2018) and its adoption for improving training from weak supervision by exploiting task agreements and disagreements of different labeling functions (Ratner et al., 2019a). The main difference is that Ludwig can automatically handle heterogeneous tasks, i.e. tasks to predict different data types with support for different decoders, while in Snorkel MeTaL each task head is a linear layer. On the other hand, Snorkel MeTaL's focus on weak supervision is currently absent in Ludwig. An interesting avenue of further research to close the gap between the two approaches could be to infer dependencies and loss weights automatically given fully supervised multi-task data and to combine weak supervision with heterogeneous tasks.

3 IMPLEMENTATION

3.1 Declarative Model Definition

Ludwig adopts a declarative model definition schema that allows users to define an instantiation of the ECD architecture to train on their data.

The higher level of abstraction provided by the type-based ECD architecture allows for a separation between what a model is expected to learn to do and how it actually does it. This convinced us to provide a declarative way of defining the models in Ludwig, as the number of potential users who can define a model by declaring the inputs they are providing and the predictions they are expecting, without specifying the implementation of how the predictions are obtained, is substantially bigger than the number of developers who can code a full deep learning model on their own.
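The output-feature dependencies of Figure 5 and the weighted multi-task loss of Section 2.2 can be sketched as follows. This is an illustrative sketch, not Ludwig's implementation: `resolve_order` and `combined_loss` are hypothetical names, the depth-first topological sort is one of several ways to order a directed acyclic graph, and the loss values and weights are made up for the example.

```python
from typing import Dict, List


def resolve_order(output_features: List[dict]) -> List[str]:
    """Order output features so that each one follows its dependencies."""
    deps = {f["name"]: f.get("dependencies", []) for f in output_features}
    order: List[str] = []
    state: Dict[str, str] = {}

    def visit(name: str) -> None:
        if state.get(name) == "done":
            return
        if state.get(name) == "visiting":
            raise ValueError(f"cyclic dependency involving {name}")
        state[name] = "visiting"
        for dependency in deps[name]:
            visit(dependency)
        state[name] = "done"
        order.append(name)

    for name in deps:
        visit(name)
    return order


def combined_loss(losses: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted sum of the per-feature losses, minimized end to end."""
    return sum(weights[name] * loss for name, loss in losses.items())


# Dependencies as declared in Figure 5 (feature types omitted).
output_features = [
    {"name": "OF1", "dependencies": ["OF2"]},
    {"name": "OF2", "dependencies": []},
    {"name": "OF3", "dependencies": ["OF2", "OF1"]},
]
print(resolve_order(output_features))

# Illustrative loss values and weights, not measured results.
print(combined_loss({"OF1": 0.5, "OF2": 1.0, "OF3": 2.0},
                    {"OF1": 1.0, "OF2": 0.5, "OF3": 0.25}))
```

Decoders would then be built in the returned order, so that the hidden representation or prediction of each origin feature already exists when the decoder of a destination feature needs it.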
An additional motivation for the adoption of declarative model definitions stems from the separation of interests between the authors of the implementations of the models and the final users, analogous to the separation of interests between the authors of query planning and indexing strategies of a database and the users who query the database, which allows the former to provide improved strategies without impacting the way the latter interact with the system.

The model definition is divided into five sections:

• Input Features: in this section of the model definition, a list of input features is specified. The minimum amount of information that needs to be provided for each feature is the name of the feature, which corresponds to the name of a column in the tabular data provided by the user, and the type of the feature. Some features have multiple encoders, but if one is not specified, the default one is used. Each encoder can have its own hyper-parameters, and if they are not specified, the default hyper-parameters of the specified encoder are used.

• Combiner: in this section of the model definition, the type of combiner can be specified; if none is specified, the default concat is used. Each combiner can have its own hyper-parameters, but if they are not specified, the default ones of the specified combiner are used.

• Output Features: in this section of the model definition, a list of output features is specified. The minimum amount of information that needs to be provided for each feature is the name of the feature, which corresponds to the name of a column in the tabular data provided by the user, and the type of the feature. The data in the column is the ground truth the model is trained to predict. Some features have multiple decoders that calculate the predictions, but if one is not specified, the default one is used. Each decoder can have its own hyper-parameters, and if they are not specified, the default hyper-parameters of the specified decoder are used. Moreover, each decoder can have different losses with different parameters to compare the ground truth values and the values predicted by the decoder and, also in this case, if they are not specified, defaults are used.

• Pre-processing: pre-processing and post-processing functions of each data type can have parameters that change their behavior. They can be specified in this section of the model definition and are applied to all input and output features of a specified type, and if they are not provided, defaults are used. Note that for some use cases it would be useful to have different processing parameters for different features of the same type. Consider a news classifier where the title and the body of a piece of news are provided as two input text features. In this case, the user may be inclined to set a smaller value for the maximum length in words and the maximum size of the vocabulary for the title input feature. Ludwig allows users to specify processing parameters on a per-feature basis by providing them inside each input and output feature definition. If both type-level parameters and single-feature-level parameters are provided, the single-feature-level ones override the type-level ones.

• Training: the training process itself has parameters that can be changed, like the number of epochs, the batch size, the learning rate and its scheduling, and so on. Those parameters can be provided by the user, but if they are not provided, defaults are used.
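The precedence rule for pre-processing parameters (built-in defaults, then type-level parameters, then single-feature-level parameters) can be sketched as a chain of dictionary updates. The function `resolve_preprocessing`, the `TYPE_DEFAULTS` table and its values, and the `vocab_size` parameter are assumptions made for this example; only `length_limit` appears in the model definitions shown in this paper.

```python
from typing import Dict

# Hypothetical built-in defaults per data type (values are illustrative).
TYPE_DEFAULTS: Dict[str, dict] = {
    "text": {"length_limit": 256, "vocab_size": 10000},
}


def resolve_preprocessing(feature: dict, type_level: Dict[str, dict]) -> dict:
    """Merge parameters so that more specific settings override general ones."""
    params = dict(TYPE_DEFAULTS.get(feature["type"], {}))  # 1. built-in defaults
    params.update(type_level.get(feature["type"], {}))      # 2. type-level section
    params.update(feature.get("preprocessing", {}))         # 3. per-feature override
    return params


# Type-level parameters from the pre-processing section of a model definition.
type_level = {"text": {"length_limit": 1024}}

title = {"name": "title", "type": "text", "preprocessing": {"length_limit": 20}}
body = {"name": "body", "type": "text"}

print(resolve_preprocessing(title, type_level))
print(resolve_preprocessing(body, type_level))
```

In the news classifier example, the title feature ends up with its own shorter length limit while the body inherits the type-level setting, and both fall back to the built-in value for any parameter that neither level specifies.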
    {input_features: [{name: utterance, type: text}],
     output_features: [{name: class, type: category}]}

    {
      input_features: [
        {name: title, type: text, encoder: rnn, cell_type: lstm,
         bidirectional: true, state_size: 128, num_layers: 2,
         preprocessing: {length_limit: 20}},
        {name: body, type: text, encoder: stacked_cnn, num_filters: 128,
         num_layers: 6, preprocessing: {length_limit: 1024}}
      ],
      combiner: {type: concat, num_fc_layers: 2},
      output_features: [
        {name: class, type: category},
        {name: tags, type: set}
      ],
      training: {
        epochs: 100, learning_rate: 0.01, batch_size: 64, early_stop: 10,
        gradient_clipping: 1, decay_rate: 0.95,
        optimizer: {type: rmsprop, beta: 0.99}
      }
    }

Figure 6. On the left side (first definition above), a minimal model definition for text classification. On the right side (second definition above), a more complex model definition including input and output features and more model and training hyper-parameters.

The wide adoption of defaults allows for really concise model definitions, like the one shown on the left side of Figure 6, as well as a high degree of control over both the architecture of the model and the training parameters, as shown on the right side of Figure 6.

Ludwig uses YAML for model definitions because of its human readability, but as long as the nested structure is representable, other similar formats could be adopted. For the ever-growing list of available encoders, combiners, and decoders, their hyper-parameters, and the available pre-processing and training parameters, please consult Ludwig's user guide¹. For additional examples refer to the examples² section.

In order to allow for flexibility and ease of extensibility, two well-known design patterns are adopted in Ludwig: the strategy pattern (Gamma et al., 1994) and the registry pattern.
The strategy pattern is adopted at different levels to allow different behaviors to be performed by different instantiations of the same abstract components. It is used both to make the different data types interchangeable from the point of view of model building, training, and inference, and to make different encoders and decoders for the same type interchangeable. The registry pattern, on the other hand, is implemented in Ludwig by assigning names to code constructs (variables, functions, objects, or modules) and storing them in a dictionary. They can be referenced by their name, allowing for straightforward extensibility; adding an additional behavior is as simple as adding a new entry in the registry.

In Ludwig, the combination of these two patterns allows users to add new behaviors by simply implementing the abstract function interface of the encoder of a specific type and adding that function implementation to the registry of available implementations. The same applies to adding new decoders, new combiners, and additional data types.

¹ http://ludwig.ai/user_guide/
² http://ludwig.ai/examples

The problem with this approach is that different implementations of the same abstract functions have to conform to the same interface, but in our case some parameters of the function may be different. As a concrete example, consider two text encoders: a recurrent neural network (RNN) and a convolutional neural network (CNN). Although they both conform to the same abstract encoding function in terms of the rank of the input and output tensors, their hyper-parameters are different, with the RNN requiring a boolean parameter indicating whether to apply bi-direction or not, and the CNN requiring the size of the filters. Ludwig solves this problem by exploiting **kwargs, a Python feature that allows passing additional parameters to functions by specifying their names and collecting them into a dictionary.
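A minimal sketch of the registry pattern combined with `**kwargs` follows. It is an illustration under assumptions rather than Ludwig's code: the names `ENCODER_REGISTRY`, `register_encoder` and `encode_feature` are hypothetical, the default values are made up, and the toy encoders return a small description of what they would do instead of a tensor.

```python
from typing import Callable, Dict, List

# The registry: names mapped to implementations of the same abstract function.
ENCODER_REGISTRY: Dict[str, Callable] = {}


def register_encoder(name: str):
    """Decorator that stores a function in the registry under the given name."""
    def decorator(function):
        ENCODER_REGISTRY[name] = function
        return function
    return decorator


@register_encoder("rnn")
def rnn_encoder(tokens: List[int], **kwargs):
    # Retrieve the encoder-specific parameter by name, with a default.
    bidirectional = kwargs.get("bidirectional", False)
    return {"encoder": "rnn", "bidirectional": bidirectional, "length": len(tokens)}


@register_encoder("cnn")
def cnn_encoder(tokens: List[int], **kwargs):
    filter_size = kwargs.get("filter_size", 3)
    return {"encoder": "cnn", "filter_size": filter_size, "length": len(tokens)}


def encode_feature(feature: dict, tokens: List[int]):
    """Look up the encoder by name and forward every other key as a parameter."""
    reserved = ("name", "type", "encoder")
    params = {key: value for key, value in feature.items() if key not in reserved}
    encoder = ENCODER_REGISTRY[feature.get("encoder", "rnn")]  # "rnn" default is assumed
    return encoder(tokens, **params)


print(encode_feature({"name": "title", "type": "text", "encoder": "cnn",
                      "filter_size": 5}, [4, 8, 15]))
print(encode_feature({"name": "body", "type": "text"}, [16, 23]))
```

Adding a new encoder then amounts to writing one decorated function; neither `encode_feature` nor the existing encoders need to change.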
Collecting parameters through **kwargs allows different functions implementing the same abstract interface to have the same signature and then retrieve the specific additional parameters from the dictionary using their names. This also greatly simplifies the implementation of default parameters, because if the dictionary does not contain the keyword of a required parameter, the default value for that parameter is used automatically.

3.2 Training Pipeline

Given a model definition, Ludwig builds a training pipeline as shown at the top of Figure 7. The process is not particularly different from many other machine learning tools and consists of a metadata collection phase, a data pre-processing phase, and a model training phase. The metadata mappings in particular are needed in order to apply exactly the same pre-processing to input data at prediction time, while model weights and hyper-parameters are saved in order to load the exact same model obtained during training. The main notable innovation is the fact that every single component, from the pre-processing to the model to the training loop, is dynamically built depending on the declarative model definition.

Figure 7. A depiction of the training and prediction pipeline. (Training: raw data (CSV) goes through metadata pre-processing, producing a metadata dictionary file (JSON), and through data pre-processing, producing processed data with an optional HDF5 / Numpy cache; model training then produces tensor weights and JSON hyper-parameters. Prediction: raw data (CSV) is pre-processed with the metadata dictionary into processed data (Numpy), model prediction produces predictions and scores (Numpy), and post-processing produces the predicted data (CSV, JSON).)
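The role of the metadata collected during training can be sketched as follows. This is a simplified illustration, not Ludwig's pipeline: the function names, the metadata keys and the choice of normalizing a numerical feature are assumptions, and a JSON string round trip stands in for writing and reading the metadata file.

```python
import json
from typing import List


def collect_metadata(values: List[float], labels: List[str]) -> dict:
    """Training time: collect what is needed to repeat the pre-processing."""
    mean = sum(values) / len(values)
    variance = sum((v - mean) ** 2 for v in values) / len(values)
    return {
        "mean": mean,
        "std": variance ** 0.5 or 1.0,      # avoid dividing by zero later
        "idx2str": sorted(set(labels)),     # class index -> class name
    }


def preprocess(value: float, metadata: dict) -> float:
    """Apply exactly the normalization that was fitted on the training data."""
    return (value - metadata["mean"]) / metadata["std"]


def postprocess(class_index: int, metadata: dict) -> str:
    """Map a predicted class index back into data space."""
    return metadata["idx2str"][class_index]


# Training phase: collect metadata and serialize it (a file in a real pipeline).
metadata = collect_metadata([1.0, 3.0], ["spam", "ham", "spam"])
saved = json.dumps(metadata)

# Prediction phase: load the same metadata and reuse the same mappings.
loaded = json.loads(saved)
print(preprocess(4.0, loaded))
print(postprocess(1, loaded))
```

Recomputing the mean or the label mapping on the prediction data instead of loading them would silently change the meaning of the model's inputs and outputs, which is why the mappings are stored alongside the weights and hyper-parameters.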
One of the main use cases of Ludwig is the quick exploration of different model alternatives for the same data, so, after pre-processing, the pre-processed data is optionally cached in an HDF5 file. The next time the same data is accessed, the HDF5 file is used instead, saving the time needed to pre-process it.

3.3 Prediction Pipeline

The prediction pipeline is depicted at the bottom of Figure 7. It uses the metadata obtained during the training phase to pre-process the new input data, loads the model by reading its hyper-parameters and weights, and uses it to obtain predictions, which are mapped back into data space by a post-processor that uses the same mappings obtained at training time.

4 EVALUATION

One of the positive effects of the ECD architecture and its implementation in Ludwig is the ability to specify a potentially complex model architecture with a concise declarative model definition. To analyze how much of an impact this has on the amount of code needed to implement a model (including pre-processing, the model itself, the training loop, and the metrics calculation), the number of lines of code required to implement four reference architectures using different libraries is compared: WordCNN (Kim, 2014) and Bi-LSTM (Tai et al., 2015), both models for text classification and sentiment analysis; Tagger (Lample et al., 2016), a sequence tagging model with an RNN encoder and per-token classification; and ResNet (He et al., 2016), an image classification model. Although this evaluation is imprecise in nature (the same model can be implemented in a more or less concise way, and writing a parameter in a configuration file is substantially simpler than writing a line of code), it could provide intuition about the amount of effort needed to implement a model with different tools. To calculate the mean for the different libraries, openly available implementations on GitHub are collected and the number of lines of code of each of them is counted (the list of repositories is available in the appendix). For Ludwig, the number of lines in the configuration file needed to obtain the same models is reported both in the case where no hyper-parameter is specified and in the case where all hyper-parameters are specified.

| Model | TensorFlow (mean) | Keras (mean) | PyTorch (mean) | Ludwig (w/o) | Ludwig (w) |
|---|---|---|---|---|---|
| WordCNN | 406.17 | 201.50 | 458.75 | 8 | 66 |
| Bi-LSTM | 416.75 | 439.75 | 323.40 | 10 | 68 |
| Tagger | 1067.00 | 1039.25 | 1968.00 | 10 | 68 |
| ResNet | 1252.75 | 779.60 | 479.43 | 9 | 61 |

Table 1. Number of lines of code for implementing different models. The mean columns are the mean lines of code needed to write a program from scratch for the task. w and w/o in the Ludwig columns refer to the number of lines needed to write a model definition specifying every single model hyper-parameter and pre-processing parameter, and without specifying any hyper-parameter, respectively.

The results in Table 1 show that even when specifying all its hyper-parameters, a Ludwig declarative model configuration is an order of magnitude smaller than even the most concise alternative. This supports the claim that Ludwig can be useful as a tool to reduce the effort needed for training and using deep learning models.

5 LIMITATIONS AND FUTURE WORK

Although Ludwig's ECD architecture is particularly well-suited for supervised and self-supervised tasks, how suitable it is for other machine learning tasks is not immediately evident.

One notable example of such tasks is Generative Adversarial Networks (GANs) (Goodfellow et al., 2014): their architecture contains two models that learn, respectively, to generate data and to discriminate synthetic from real data, and that are trained with inverted losses.
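As a reminder of what "inverted losses" refers to, the original GAN formulation (Goodfellow et al., 2014) trains a discriminator D and a generator G on a single value function with opposite objectives. The notation below follows that paper and is not otherwise used in this work:

```latex
\min_{G} \max_{D} V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)} \left[ \log D(x) \right]
  + \mathbb{E}_{z \sim p_{z}(z)} \left[ \log \left( 1 - D(G(z)) \right) \right]
```

Here D is trained to maximize V while G is trained to minimize it.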
In order to replicate a GAN within the boundaries of the ECD architecture, the inputs to both models would have to be defined at the encoder level, the discriminator output would have to be defined as a decoder, and the remaining parts of both models would have to be defined as one big combiner, which is inelegant; for instance, changing just the generator would result in an entirely new implementation. An elegant solution would allow for disentangling the two models and changing them independently. The recursive graph extension of the combiner described in Section 2.2 allows a more elegant solution by providing a mechanism for defining the generator and discriminator as two independent sub-graphs, improving modularity and extensibility. An extension of the toolbox in this direction is planned for the future.

Another example is reinforcement learning. Although ECD can be used to build the vast majority of deep architectures currently adopted in reinforcement learning, some of the techniques they employ are relatively hard to represent, such as, for instance, double inference with fixed weights in Deep Q-Networks (Mnih et al., 2015), which can currently be implemented only with a highly customized and inelegant combiner. Moreover, supporting the dynamic interaction with an environment for data collection, and more clever ways to collect it such as Go-Explore's (Ecoffet et al., 2019) archive or prioritized experience replay (Schaul et al., 2016), is currently out of the scope of the toolbox: a user would have to build these capabilities on their own and call Ludwig functions only inside the inner loop of the interaction with the environment. Extending the toolbox to allow for easy adoption in reinforcement learning scenarios, for example by allowing training through policy gradient methods like REINFORCE (Williams, 1992) or off-policy methods, is a potential direction of improvement.
Although these two cases highlight current limitations of Ludwig, it is worth noting that most of the current industrial applications of machine learning are based on supervised learning, and that is where the proposed architecture fits best and the toolbox provides most of its value.

Although the declarative nature of Ludwig's model definition allows for easier model development, as the number of encoders and their hyper-parameters increases, the need for automatic hyper-parameter optimization arises. In Ludwig, however, different encoders and decoders, i.e., sub-modules of the whole architecture, are themselves hyper-parameters. For this reason, Ludwig is well-suited for performing both hyper-parameter search and architecture search, and blurs the line between the two.

A future addition to the model definition file will be a hyper-parameter search section that will allow users to define which of the available strategies to adopt for the optimization and, if the optimization process itself has parameters, to provide them in this section as well. Currently, an approach based on Bayesian optimization over combinatorial structures (Baptista & Poloczek, 2018) is in development, and more strategies can be added.

Finally, more feature types will be added in the future, in particular videos and graphs, together with a number of pre-trained encoders and decoders, which will allow training a full model in a few iterations.

6 RELATED WORK

TensorFlow (Abadi et al., 2015), Caffe (Jia et al., 2014), Theano (Theano Development Team, 2016) and other similar libraries are tensor computation frameworks that allow for automatic differentiation and declarative model definition through a computation graph. They all provide similar underlying primitives and support computation graph optimizations that allow for training of large-scale deep neural networks. PyTorch (Paszke et al., 2017), on the other hand, provides the same level of abstraction but allows users to define models imperatively: this has the advantage of making a PyTorch program easier to debug and inspect. By adding eager execution, TensorFlow 2.0 allows for both declarative and imperative programming styles. In contrast, Ludwig, which is built on top of TensorFlow, provides a higher level of abstraction for the user. Users can declare full model architectures rather than underlying tensor operations, which allows for more concise model definitions, while flexibility is ensured by allowing users to change each parameter of each component of the architecture if they wish to.

Sonnet (Reynolds et al., 2017), Keras (Chollet et al., 2015), and AllenNLP (Gardner et al., 2017) are similar to Ludwig in the sense that they provide a higher level of abstraction over TensorFlow and PyTorch primitives. However, while they provide modules that can be used to build a desired network architecture, what distinguishes Ludwig from them is its declarative nature and its being built around the data type abstraction. This allows for the flexible ECD architecture, which can cover many use cases beyond the natural language processing covered by AllenNLP, and does not require writing code for both model implementation and pre-processing, as Sonnet and Keras do.

Scikit-learn (Buitinck et al., 2013), Weka (Hall et al., 2009), and MLLib (Meng et al., 2016) are popular machine learning libraries among researchers and industry practitioners. They contain implementations of several different traditional machine learning algorithms and provide common interfaces for using them, so that algorithms become in most cases interchangeable and users can easily compare them.
Ludwig follows this API design philosophy in its programmatic interface, but focuses on deep learning models that are not available in those tools.

7 CONCLUSIONS

This work presented Ludwig, a deep learning toolbox built around type-based abstraction and a flexible ECD architecture that allows model definition through a declarative language.

The proposed tool has many advantages in terms of flexibility, extensibility, and ease of use, which allow both experts and novices to train deep learning models, employ them for obtaining predictions, and experiment with different architectures without the need to write code, while still allowing users to easily add custom sub-modules.

In conclusion, Ludwig's general and flexible architecture and its ease of use make it a good option for democratizing deep learning by making it more accessible, streamlining and speeding up experimentation, and unlocking many new applications.

REFERENCES

Abadi, M., Agarwal, A., Barham, P., Brevdo, E., Chen, Z., Citro, C., Corrado, G. S., Davis, A., Dean, J., Devin, M., Ghemawat, S., Goodfellow, I., Harp, A., Irving, G., Isard, M., Jia, Y., Jozefowicz, R., Kaiser, L., Kudlur, M., Levenberg, J., Mané, D., Monga, R., Moore, S., Murray, D., Olah, C., Schuster, M., Shlens, J., Steiner, B., Sutskever, I., Talwar, K., Tucker, P., Vanhoucke, V., Vasudevan, V., Viégas, F., Vinyals, O., Warden, P., Wattenberg, M., Wicke, M., Yu, Y., and Zheng, X. TensorFlow: Large-scale machine learning on heterogeneous systems, 2015. URL https://www.tensorflow.org/. Software available from tensorflow.org.

Baptista, R. and Poloczek, M. Bayesian optimization of combinatorial structures. In Dy, J. G. and Krause, A. (eds.), Proceedings of the 35th International Conference on Machine Learning, ICML 2018, Stockholmsmässan, Stockholm, Sweden, July 10-15, 2018, volume 80 of Proceedings of Machine Learning Research, pp. 471–480.
PMLR, 2018. URL http://proceedings.mlr.press/v80/baptista18a.html.

Brodsky, I. et al. H3. https://github.com/uber/h3, 2018.

Buitinck, L., Louppe, G., Blondel, M., Pedregosa, F., Mueller, A., Grisel, O., Niculae, V., Prettenhofer, P., Gramfort, A., Grobler, J., Layton, R., VanderPlas, J., Joly, A., Holt, B., and Varoquaux, G. API design for machine learning software: experiences from the scikit-learn project. In ECML PKDD Workshop: Languages for Data Mining and Machine Learning, pp. 108–122, 2013.

Caruana, R. Multitask learning: A knowledge-based source of inductive bias. In Utgoff, P. E. (ed.), Machine Learning, Proceedings of the Tenth International Conference, University of Massachusetts, Amherst, MA, USA, June 27-29, 1993, pp. 41–48. Morgan Kaufmann, 1993. doi: 10.1016/b978-1-55860-307-3.50012-5. URL https://doi.org/10.1016/b978-1-55860-307-3.50012-5.

Chen, T., Li, M., Li, Y., Lin, M., Wang, N., Wang, M., Xiao, T., Xu, B., Zhang, C., and Zhang, Z. Mxnet: A flexible and efficient machine learning library for heterogeneous distributed systems. arXiv preprint, 2015.

Chollet, F. et al. Keras. https://keras.io, 2015.

Ecoffet, A., Huizinga, J., Lehman, J., Stanley, K. O., and Clune, J. Go-explore: a new approach for hard-exploration problems. CoRR, abs/1901.10995, 2019. URL .

Gamma, E., Helm, R., Johnson, R., and Vlissides, J. Design Patterns: Elements of Reusable Object-Oriented Software. Addison-Wesley Professional Computing Series. Pearson Education, 1994. ISBN 9780321700698. URL https://books.google.com/books?id=6oHuKQe3TjQC.

Gardner, M., Grus, J., Neumann, M., Tafjord, O., Dasigi, P., Liu, N. F., Peters, M., Schmitz, M., and Zettlemoyer, L. S. Allennlp: A deep semantic natural language processing platform. 2017.

Goodfellow, I., Pouget-Abadie, J., Mirza, M., Xu, B., Warde-Farley, D., Ozair, S., Courville, A., and Bengio, Y. Generative adversarial nets.
In Advances in neural information processing systems, pp. 2672–2680, 2014.

Hall, M. A., Frank, E., Holmes, G., Pfahringer, B., Reutemann, P., and Witten, I. H. The WEKA data mining software: an update. SIGKDD Explorations, 11(1):10–18, 2009. doi: 10.1145/1656274.1656278. URL https://doi.org/10.1145/1656274.1656278.

He, K., Zhang, X., Ren, S., and Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE conference on computer vision and pattern recognition, pp. 770–778, 2016.

Huang, G., Liu, Z., van der Maaten, L., and Weinberger, K. Q. Densely connected convolutional networks. In The IEEE Conference on Computer Vision and Pattern Recognition (CVPR), July 2017.

Jia, Y., Shelhamer, E., Donahue, J., Karayev, S., Long, J., Girshick, R., Guadarrama, S., and Darrell, T. Caffe: Convolutional architecture for fast feature embedding. In Proceedings of the 22nd ACM international conference on Multimedia, pp. 675–678. ACM, 2014.

Kim, Y. Convolutional neural networks for sentence classification. In Moschitti, A., Pang, B., and Daelemans, W. (eds.), Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing, EMNLP 2014, October 25-29, 2014, Doha, Qatar, A meeting of SIGDAT, a Special Interest Group of the ACL, pp. 1746–1751. ACL, 2014. URL http://aclweb.org/anthology/D/D14/D14-1181.pdf.

Lample, G., Ballesteros, M., Subramanian, S., Kawakami, K., and Dyer, C. Neural architectures for named entity recognition. In Knight, K., Nenkova, A., and Rambow, O. (eds.), NAACL HLT 2016, The 2016 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, San Diego California, USA, June 12-17, 2016, pp. 260–270. The Association for Computational Linguistics, 2016. URL http://aclweb.org/anthology/N/N16/N16-1030.pdf.
Meng, X., Bradley, J., Yavuz, B., Sparks, E., Venkataraman, S., Liu, D., Freeman, J., Tsai, D., Amde, M., Owen, S., et al. Mllib: Machine learning in apache spark. The Journal of Machine Learning Research, 17(1):1235–1241, 2016.

Mnih, V., Kavukcuoglu, K., Silver, D., Rusu, A. A., Veness, J., Bellemare, M. G., Graves, A., Riedmiller, M. A., Fidjeland, A., Ostrovski, G., Petersen, S., Beattie, C., Sadik, A., Antonoglou, I., King, H., Kumaran, D., Wierstra, D., Legg, S., and Hassabis, D. Human-level control through deep reinforcement learning. Nature, 518(7540):529–533, 2015. doi: 10.1038/nature14236. URL https://doi.org/10.1038/nature14236.

Paszke, A., Gross, S., Chintala, S., Chanan, G., Yang, E., DeVito, Z., Lin, Z., Desmaison, A., Antiga, L., and Lerer, A. Automatic differentiation in pytorch. 2017.

Ratner, A., Hancock, B., Dunnmon, J., Goldman, R. E., and Ré, C. Snorkel metal: Weak supervision for multi-task learning. In Schelter, S., Seufert, S., and Kumar, A. (eds.), Proceedings of the Second Workshop on Data Management for End-To-End Machine Learning, DEEM@SIGMOD 2018, Houston, TX, USA, June 15, 2018, pp. 3:1–3:4. ACM, 2018. doi: 10.1145/3209889.3209898. URL https://doi.org/10.1145/3209889.3209898.

Ratner, A., Hancock, B., Dunnmon, J., Sala, F., Pandey, S., and Ré, C. Training complex models with multi-task weak supervision. In The Thirty-Third AAAI Conference on Artificial Intelligence, AAAI 2019, The Thirty-First Innovative Applications of Artificial Intelligence Conference, IAAI 2019, The Ninth AAAI Symposium on Educational Advances in Artificial Intelligence, EAAI 2019, Honolulu, Hawaii, USA, January 27 - February 1, 2019, pp. 4763–4771. AAAI Press, 2019a. URL https://aaai.org/ojs/index.php/AAAI/article/view/4403.

Ratner, A. J., Hancock, B., and Ré, C. The role of massively multi-task and weak supervision in software 2.0.
In CIDR 2019, 9th Biennial Conference on Innovative Data Systems Research, Asilomar, CA, USA, January 13-16, 2019, Online Proceedings. www.cidrdb.org, 2019b. URL http://cidrdb.org/cidr2019/papers/p58-ratner-cidr19.pdf.

Reynolds, M., Barth-Maron, G., Besse, F., de Las Casas, D., Fidjeland, A., Green, T., Puigdomènech, A., Racanière, S., Rae, J., and Viola, F. Open sourcing Sonnet - a new library for constructing neural networks. https://deepmind.com/blog/open-sourcing-sonnet/, 2017.

Schaul, T., Quan, J., Antonoglou, I., and Silver, D. Prioritized experience replay. In Bengio, Y. and LeCun, Y. (eds.), 4th International Conference on Learning Representations, ICLR 2016, San Juan, Puerto Rico, May 2-4, 2016, Conference Track Proceedings, 2016. URL http://arxiv.org/abs/1511.05952.

Seide, F. and Agarwal, A. Cntk: Microsoft's open-source deep-learning toolkit. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp. 2135–2135. ACM, 2016.

Simonyan, K. and Zisserman, A. Very deep convolutional networks for large-scale image recognition. In Bengio, Y. and LeCun, Y. (eds.), 3rd International Conference on Learning Representations, ICLR 2015, San Diego, CA, USA, May 7-9, 2015, Conference Track Proceedings, 2015. URL 1556 .

Tai, K. S., Socher, R., and Manning, C. D. Improved semantic representations from tree-structured long short-term memory networks. In Proceedings of the 53rd Annual Meeting of the Association for Computational Linguistics and the 7th International Joint Conference on Natural Language Processing of the Asian Federation of Natural Language Processing, ACL 2015, July 26-31, 2015, Beijing, China, Volume 1: Long Papers, pp. 1556–1566. The Association for Computer Linguistics, 2015. URL https://www.aclweb.org/anthology/P15-1150/.

Theano Development Team.
Theano: A Python framework for fast computation of mathematical expressions. arXiv e-prints, abs/1605.02688, May 2016. URL http://arxiv.org/abs/1605.02688.

Tokui, S., Oono, K., Hido, S., and Clayton, J. Chainer: a next-generation open source framework for deep learning. In Proceedings of workshop on machine learning systems (LearningSys) in the twenty-ninth annual conference on neural information processing systems (NIPS), volume 5, pp. 1–6, 2015.

Williams, R. J. Simple statistical gradient-following algorithms for connectionist reinforcement learning. Machine Learning, 8:229–256, 1992. doi: 10.1007/BF00992696. URL https://doi.org/10.1007/BF00992696.

A FULL LIST OF GITHUB REPOSITORIES

| repository | loc | model | notes |
|---|---|---|---|
| https://github.com/dennybritz/cnn-text-classification-tf | 308 | WordCNN | |
| https://github.com/randomrandom/deep-atrous-cnn-sentiment | 621 | WordCNN | |
| https://github.com/jiegzhan/multi-class-text-classification-cnn | 284 | WordCNN | |
| https://github.com/TobiasLee/Text-Classification | 335 | WordCNN | cnn.py + files in utils directory |
| https://github.com/zackhy/TextClassification | 405 | WordCNN | cnn classifier .pt + train.py + test.py |
| https://github.com/YCG09/tf-text-classification | 484 | WordCNN | all files minus the rnn related ones |
| https://github.com/roomylee/rnn-text-classification-tf | 305 | Bi-LSTM | |
| https://github.com/dongjun-Lee/rnn-text-classification-tf | 271 | Bi-LSTM | |
| https://github.com/TobiasLee/Text-Classification | 397 | Bi-LSTM | attn bi lstm.py + files in utils directory |
| https://github.com/zackhy/TextClassification | 459 | Bi-LSTM | rnn classifier .pt + train.py + test.py |
| https://github.com/YCG09/tf-text-classification | 506 | Bi-LSTM | all files minus the cnn related ones |
| https://github.com/ry/tensorflow-resnet | 2243 | ResNet | |
| https://github.com/wenxinxu/resnet-in-tensorflow | 635 | ResNet | |
| https://github.com/taki0112/ResNet-Tensorflow | 472 | ResNet | |
| https://github.com/ShHsLin/resnet-tensorflow | 1661 | ResNet | |
| https://github.com/guillaumegenthial/sequence_tagging | 959 | Tagger | |
| https://github.com/guillaumegenthial/tf_ner | 1877 | Tagger | |
| https://github.com/kamalkraj/Named-Entity-Recognition-with-Bidirectional-LSTM-CNNs | 365 | Tagger | |

Table 2. List of TensorFlow repositories used for the evaluation.

| repository | loc | model | notes |
|---|---|---|---|
| https://github.com/Jverma/cnn-text-classification-keras | 228 | WordCNN | |
| https://github.com/bhaveshoswal/CNN-text-classification-keras | 117 | WordCNN | |
| https://github.com/alexander-rakhlin/CNN-for-Sentence-Classification-in-Keras | 258 | WordCNN | |
| https://github.com/junwang4/CNN-sentence-classification-keras-2018 | 295 | WordCNN | |
| https://github.com/cmasch/cnn-text-classification | 122 | WordCNN | |
| https://github.com/diegoschapira/CNN-Text-Classifier-using-Keras | 189 | WordCNN | |
| https://github.com/shashank-bhatt-07/Keras-LSTM-Sentiment-Classification | 425 | Bi-LSTM | |
| https://github.com/AlexGidiotis/Document-Classifier-LSTM | 678 | Bi-LSTM | |
| https://github.com/pinae/LSTM-Classification | 547 | Bi-LSTM | |
| https://github.com/susanli2016/NLP-with-Python/blob/master/Multi-Class%20Text%20Classification%20LSTM%20Consumer%20complaints.ipynb | 109 | Bi-LSTM | |
| https://github.com/raghakot/keras-resnet | 292 | ResNet | |
| https://github.com/keras-team/keras-applications/blob/master/keras_applications/resnet50.py | 297 | ResNet | Only model, no preprocessing |
| https://github.com/broadinstitute/keras-resnet | 2285 | ResNet | |
| https://github.com/yuyang-huang/keras-inception-resnet-v2 | 560 | ResNet | |
| https://github.com/keras-team/keras-contrib/blob/master/keras_contrib/applications/resnet.py | 464 | ResNet | Only model, no preprocessing |
| https://github.com/Hironsan/anago | 2057 | Tagger | |
| https://github.com/floydhub/named-entity-recognition-template | 150 | Tagger | |
| https://github.com/digitalprk/KoreaNER | 501 | Tagger | |
| https://github.com/vunb/anago-tagger | 1449 | Tagger | |

Table 3. List of Keras repositories used for the evaluation.

| repository | loc | model | notes |
|---|---|---|---|
| https://github.com/Shawn1993/cnn-text-classification-pytorch | 311 | WordCNN | |
| https://github.com/yongjincho/cnn-text-classification-pytorch | 247 | WordCNN | |
| https://github.com/srviest/char-cnn-text-classification-pytorch | 778 | WordCNN | ignored model CharCNN2d.py |
| https://github.com/threelittlemonkeys/cnn-text-classification-pytorch | 499 | WordCNN | |
| https://github.com/keishinkickback/Pytorch-RNN-text-classification | 414 | Bi-LSTM | |
| https://github.com/Jarvx/text-classification-pytorch | 421 | Bi-LSTM | |
| https://github.com/jiangqy/LSTM-Classification-Pytorch | 324 | Bi-LSTM | |
| https://github.com/a7b23/text-classification-in-pytorch-using-lstm | 188 | Bi-LSTM | |
| https://github.com/claravania/lstm-pytorch | 270 | Bi-LSTM | |
| https://github.com/hysts/pytorch_resnet | 447 | ResNet | |
| https://github.com/a-martyn/resnet | 286 | ResNet | |
| https://github.com/hysts/pytorch_resnet_preact | 535 | ResNet | |
| https://github.com/ppwwyyxx/GroupNorm-reproduce/tree/master/ImageNet-ResNet-PyTorch | 1095 | ResNet | |
| https://github.com/KellerJordan/ResNet-PyTorch-CIFAR10 | 199 | ResNet | |
| https://github.com/mbsariyildiz/resnet-pytorch | 450 | ResNet | |
| https://github.com/akamaster/pytorch_resnet_cifar10 | 344 | ResNet | |
| https://github.com/ZhixiuYe/NER-pytorch | 1184 | Tagger | |
| https://github.com/sgrvinod/a-PyTorch-Tutorial-to-Sequence-Labeling | 840 | Tagger | |
| https://github.com/epwalsh/pytorch-crf | 3243 | Tagger | |
| https://github.com/LiyuanLucasLiu/LM-LSTM-CRF | 2605 | Tagger | |

Table 4. List of PyTorch repositories used for the evaluation.
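To make the link between the appendix and Table 1 explicit, the following sketch recomputes the PyTorch mean column from the line counts listed in Table 4 and prints it next to the Ludwig figures from Table 1. The numbers are copied from those two tables; the variable and function names are only illustrative.

```python
# Lines of code per repository, copied from Table 4 (PyTorch), by model.
PYTORCH_LOC = {
    "WordCNN": [311, 247, 778, 499],
    "Bi-LSTM": [414, 421, 324, 188, 270],
    "ResNet": [447, 286, 535, 1095, 199, 450, 344],
    "Tagger": [1184, 840, 3243, 2605],
}

# Ludwig model definition sizes from Table 1: (w/o, w) hyper-parameters.
LUDWIG_LOC = {
    "WordCNN": (8, 66),
    "Bi-LSTM": (10, 68),
    "Tagger": (10, 68),
    "ResNet": (9, 61),
}


def mean_loc(loc_by_model):
    """Arithmetic mean of the line counts for each model."""
    return {model: sum(locs) / len(locs)
            for model, locs in loc_by_model.items()}


pytorch_means = mean_loc(PYTORCH_LOC)
for model, average in pytorch_means.items():
    without, with_all = LUDWIG_LOC[model]
    print(f"{model}: PyTorch mean {average:.2f}, "
          f"Ludwig {without} (w/o) and {with_all} (w) lines")
```

Rounded to two decimals, the computed means agree with the PyTorch column of Table 1 (458.75, 323.40, 1968.00, and 479.43).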
