They Might NOT Be Giants: Crafting Black-Box Adversarial Examples with Fewer Queries Using Particle Swarm Optimization

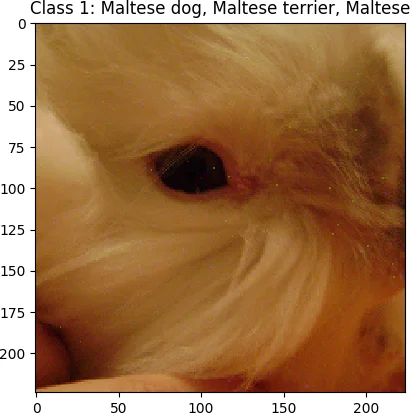

Machine learning models have been found to be susceptible to adversarial examples that are often indistinguishable from the original inputs. These adversarial examples are created by applying adversarial perturbations to input samples, which cause them to be misclassified by the target models. Attacks that search for and apply perturbations to create adversarial examples are performed in both white-box and black-box settings, depending on the information available to the attacker about the target. In a black-box attack, the attacker's only capability is to query the target with specially crafted inputs and observe the labels returned by the model. Current black-box attacks either have low success rates, require a high number of queries, or produce adversarial examples that are easily distinguishable from their sources. In this paper, we present AdversarialPSO, a black-box attack that uses fewer queries to create adversarial examples with high success rates. AdversarialPSO is based on the evolutionary search algorithm Particle Swarm Optimization, a population-based, gradient-free optimization algorithm. It is flexible in balancing the number of queries submitted to the target against the quality of the imperceptible adversarial examples. The attack has been evaluated using the image classification benchmark datasets CIFAR-10, MNIST, and ImageNet, achieving success rates of 99.6%, 96.3%, and 82.0%, respectively, while submitting substantially fewer queries than the state of the art. We also present a black-box method for isolating salient features used by models when making classifications. This method, called Swarms with Individual Search Spaces or SWISS, creates adversarial examples by finding and modifying the most important features in the input.

💡 Research Summary

The paper introduces a novel black‑box adversarial attack called AdversarialPSO that leverages Particle Swarm Optimization (PSO), a gradient‑free population‑based search algorithm, to craft adversarial images with far fewer queries than existing methods. Traditional black‑box attacks either rely on training a surrogate model (which may not transfer well) or on finite‑difference gradient estimation such as ZOO, both of which demand a large number of model queries, especially for high‑dimensional inputs.
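To make the query cost of finite-difference estimation concrete, here is a minimal sketch of a coordinate-wise symmetric-difference gradient estimator. It is not ZOO itself (which adds sampling and other optimizations); the function name `estimate_gradient` and the toy objective are illustrative assumptions. The point is only that a full gradient estimate costs two queries per input coordinate.

```python
def estimate_gradient(f, x, h=1e-3):
    """Coordinate-wise symmetric finite differences.

    `f` stands in for a black-box model score (hypothetical); every call
    to `f` counts as one query against the target.
    """
    grad, queries = [], 0
    for i in range(len(x)):
        up = x[:]
        up[i] += h
        down = x[:]
        down[i] -= h
        grad.append((f(up) - f(down)) / (2 * h))
        queries += 2  # two model queries per coordinate
    return grad, queries


def toy_score(x):
    # Illustrative objective only: sum of squares.
    return sum(v * v for v in x)


grad, queries = estimate_gradient(toy_score, [1.0, 2.0, 3.0])

# A CIFAR-10 image has 32 * 32 * 3 = 3072 coordinates, so one full
# gradient estimate of this kind would already cost 6144 queries.
cifar_queries_per_gradient = 2 * 32 * 32 * 3
```

Because the cost scales linearly with input dimension, a single full gradient estimate on a larger image is far more expensive, which is the motivation for a population-based search that queries only once per candidate.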

AdversarialPSO treats each particle as a perturbed version of the original image. The fitness function combines two objectives: (1) increase the target class probability (or decrease the true class confidence for untargeted attacks) beyond a predefined threshold, and (2) keep the perturbation within an L₂ or L∞ budget that ensures imperceptibility. By updating particle velocities with an inertia weight that linearly decays, a constriction factor, and random coefficients, the swarm balances global exploration and local exploitation. The authors also experiment with hybrid inertia‑constriction updates to improve convergence speed.
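The update loop described above can be sketched as follows. This is a simplified illustration under stated assumptions, not the authors' implementation: it uses only a linearly decaying inertia weight (no constriction factor), an L∞ budget `eps`, an untargeted objective (lower true-class confidence is better), and a hypothetical `toy_confidence` function in place of the real black-box model. All parameter names and default values are assumptions.

```python
import random


def clip(v, lo, hi):
    return max(lo, min(hi, v))


def toy_confidence(x):
    """Hypothetical stand-in for the target model's true-class confidence."""
    mean = sum(x) / len(x)
    return clip(1.0 - mean, 0.0, 1.0)


def pso_attack(x0, query, eps=0.1, n_particles=5, n_iters=20,
               w_start=0.9, w_end=0.4, c1=2.0, c2=2.0, seed=0):
    rng = random.Random(seed)
    dim = len(x0)
    # Each particle is a perturbation bounded by eps in the L-infinity norm.
    pos = [[rng.uniform(-eps, eps) for _ in range(dim)]
           for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    queries = 0

    def fitness(delta):
        # One fitness evaluation is exactly one query to the target.
        x = [clip(a + d, 0.0, 1.0) for a, d in zip(x0, delta)]
        return query(x)

    pbest = [p[:] for p in pos]
    pbest_fit = []
    for p in pos:
        pbest_fit.append(fitness(p))
        queries += 1
    g = min(range(n_particles), key=lambda i: pbest_fit[i])
    gbest, gbest_fit = pbest[g][:], pbest_fit[g]

    for t in range(n_iters):
        # Inertia weight decays linearly from w_start to w_end.
        w = w_start - (w_start - w_end) * t / max(1, n_iters - 1)
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                # Keep the perturbation inside the eps budget.
                pos[i][d] = clip(pos[i][d] + vel[i][d], -eps, eps)
            f = fitness(pos[i])
            queries += 1
            if f < pbest_fit[i]:
                pbest[i], pbest_fit[i] = pos[i][:], f
                if f < gbest_fit:
                    gbest, gbest_fit = pos[i][:], f
    return gbest, gbest_fit, queries


x0 = [0.5] * 16
delta, fit, queries = pso_attack(x0, toy_confidence)
```

Note that the query count is fixed by the swarm size and iteration limit, `n_particles * (n_iters + 1)`, independent of the input dimension. A real attack would also stop early once the model's label changes.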

A second contribution, SWISS (Swarms With Individual Search Spaces), builds on the same PSO infrastructure but restricts particles to specific image sub‑regions. The method first scans the whole image with coarse blocks, measures the change in model output when each block is perturbed, and recursively refines the most influential blocks. Once salient regions are identified, a fine‑grained PSO run is performed only within those regions, dramatically reducing the number of queries needed to achieve high visual quality. This design provides a natural trade‑off: more queries yield finer localization and smaller perturbations, while fewer queries produce coarser but still successful attacks.
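The coarse-to-fine scan can be illustrated with a small sketch. It covers only the localization step, not the subsequent PSO run, and it is an assumption-laden simplification rather than the SWISS algorithm as published: the block sizes, the fixed brightening step `eps`, the `keep` count, and the `toy_model` (whose output depends on a single pixel) are all hypothetical.

```python
def block_saliency(img, query, block, eps, regions=None):
    """Score blocks by how much perturbing each one changes the model output."""
    h, w = len(img), len(img[0])
    if regions is None:
        regions = [(r, c) for r in range(0, h, block)
                   for c in range(0, w, block)]
    base = query(img)
    scores = {}
    for (r0, c0) in regions:
        pert = [row[:] for row in img]
        for r in range(r0, min(r0 + block, h)):
            for c in range(c0, min(c0 + block, w)):
                pert[r][c] = min(1.0, pert[r][c] + eps)
        scores[(r0, c0)] = abs(query(pert) - base)
    return scores


def refine(img, query, start_block=4, min_block=1, keep=1, eps=0.2):
    """Recursively subdivide the most influential blocks."""
    block, regions, queries = start_block, None, 0
    while True:
        scores = block_saliency(img, query, block, eps, regions)
        queries += len(scores) + 1  # one query per block plus the baseline
        top = sorted(scores, key=scores.get, reverse=True)[:keep]
        if block <= min_block:
            return top, block, queries
        half = block // 2
        # Split each retained block into four sub-blocks.
        regions = [(r + dr, c + dc) for (r, c) in top
                   for dr in (0, half) for dc in (0, half)]
        block = half


def toy_model(img):
    # Hypothetical model whose output depends only on pixel (5, 2).
    return img[5][2]


img = [[0.5] * 8 for _ in range(8)]
top, block, queries = refine(img, toy_model)
```

On this 8×8 toy input the scan isolates the single influential pixel in 15 queries, compared with 65 for an exhaustive per-pixel scan. Stopping at a larger `min_block` would use fewer queries and return a coarser region, which mirrors the trade-off described above.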

The authors evaluate both techniques on three benchmark datasets: MNIST, CIFAR‑10, and ImageNet. Results show that AdversarialPSO attains success rates of 96.3 % (MNIST), 99.6 % (CIFAR‑10), and 82.0 % (ImageNet) while using on average 1.2 K, 2.8 K, and 7.5 K queries, respectively. These numbers are 5‑10× lower than the query budgets reported for ZOO and GenAttack, yet the perturbation magnitudes (measured by L₂ distance and SSIM) are comparable or better. When SWISS is applied, the same query budget yields noticeably higher image fidelity, confirming the effectiveness of the region‑localization strategy.

The paper also discusses limitations: PSO does not guarantee global optimality, and the computational cost grows with particle count and iteration limit, which may be significant for very large images. Moreover, the fitness function may need adjustment for strict targeted attacks that require exact label changes rather than probability thresholds.

Overall, the work demonstrates that gradient‑free evolutionary search can be a powerful tool for black‑box adversarial generation, offering a practical balance between query efficiency, attack success, and perceptual quality. It provides a solid baseline for future research on both more sophisticated attacks and robust defensive mechanisms.
