X-Ray CT Reconstruction of Additively Manufactured Parts using 2.5D Deep Learning MBIR

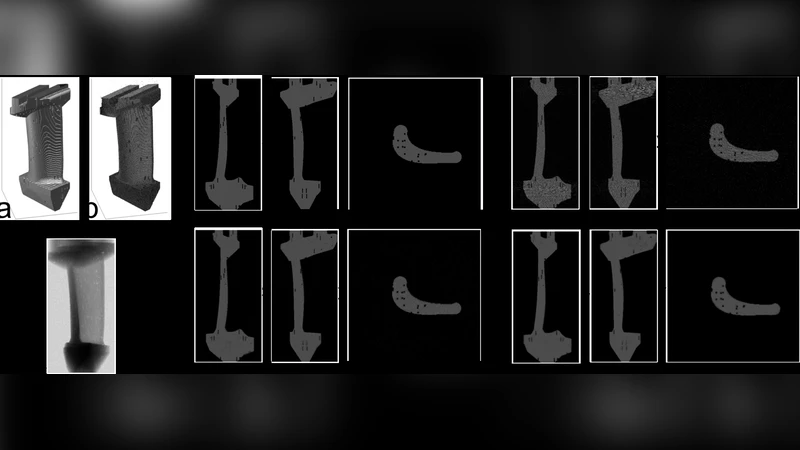

In this paper, we present a deep learning algorithm to rapidly obtain high-quality CT reconstructions of additively manufactured (AM) parts. In particular, we propose to take CAD models of the parts to be manufactured, introduce typical defects, and simulate X-ray CT (XCT) measurements. These simulated measurements are reconstructed using both filtered back-projection (FBP), which is computationally simple but results in noisy images, and model-based iterative reconstruction (MBIR). We then train a 2.5D deep convolutional neural network [4], termed 2.5D Deep Learning MBIR (2.5D DL-MBIR), on these pairs of noisy and high-quality 3D volumes to learn a fast, non-linear mapping function. The 2.5D DL-MBIR reconstructs a 3D volume in a 2.5D scheme, where each output slice is reconstructed from multiple slices of the FBP input. Given this trained system, we can take a small set of measurements on an actual part and process them using FBP followed by 2.5D DL-MBIR. Both steps can be performed rapidly on GPUs, resulting in a real-time algorithm that achieves the high quality of MBIR at the speed of standard techniques. Intuitively, since CAD models are typically available for parts to be manufactured, they provide a strong “prior” constraint that can be leveraged to improve the reconstruction.

💡 Research Summary

The paper introduces a novel framework for rapid, high‑quality X‑ray computed tomography (CT) reconstruction of additively manufactured (AM) parts by leveraging the CAD models that are typically available for such components. The authors first generate synthetic defect‑laden part geometries from the CAD data, then simulate X‑ray projection measurements for these virtual parts. The simulated projections are reconstructed using two conventional methods: filtered back‑projection (FBP), which is computationally cheap but yields noisy, artifact‑prone images, and model‑based iterative reconstruction (MBIR), which produces high‑fidelity images at the cost of substantial computation time.

These paired low‑quality (FBP) and high‑quality (MBIR) 3‑D volumes constitute a supervised training set for a deep convolutional neural network (CNN) that operates in a “2.5‑D” fashion. In a 2.5‑D scheme each output slice is inferred not only from the corresponding input slice but also from a small stack of neighboring slices (typically five to seven). This design preserves inter‑slice continuity while avoiding the memory and runtime burdens of full 3‑D convolutions. The network architecture follows the 2.5‑D CNN described in reference
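The 2.5‑D input scheme described above can be illustrated with a minimal sketch. It only shows how the stack of neighboring FBP slices for each output slice might be assembled; it is not the authors' implementation. The names `neighbor_indices` and `stack_2p5d`, the `half_width` parameter, and the choice to repeat the edge slice at the ends of the volume are all assumptions made for illustration.

```python
from typing import List, Sequence

# One 2-D image, stored as rows of pixel values (illustrative type alias).
Slice = List[List[float]]


def neighbor_indices(z: int, depth: int, half_width: int) -> List[int]:
    """Indices of the input slices that feed output slice z.

    Indices falling outside the volume are clamped to the nearest valid
    slice (edge replication). This boundary rule is an assumption.
    """
    return [
        min(max(z + dz, 0), depth - 1)
        for dz in range(-half_width, half_width + 1)
    ]


def stack_2p5d(volume: Sequence[Slice], half_width: int = 2) -> List[List[Slice]]:
    """For every slice of `volume`, gather its (2 * half_width + 1)-slice stack.

    Each stack would be passed to a 2-D CNN as a multi-channel input, and the
    network would predict the single MBIR-quality slice at the stack's center.
    """
    depth = len(volume)
    return [
        [volume[i] for i in neighbor_indices(z, depth, half_width)]
        for z in range(depth)
    ]


if __name__ == "__main__":
    # Toy 6-slice "FBP volume" of 2x2 images; each slice holds its own index.
    fbp = [[[float(z)] * 2 for _ in range(2)] for z in range(6)]
    stacks = stack_2p5d(fbp, half_width=2)
    print(len(stacks), "stacks of", len(stacks[0]), "slices each")
    print("slices feeding output 0:", [s[0][0] for s in stacks[0]])
```

With `half_width=2` each output slice draws on five input slices, the low end of the range quoted above; `half_width=3` would give seven. Because every stack is processed with 2‑D convolutions over a handful of channels, memory use grows with the stack size rather than with the full volume depth.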
