A sufficient condition for a linear speedup in competitive parallel computing

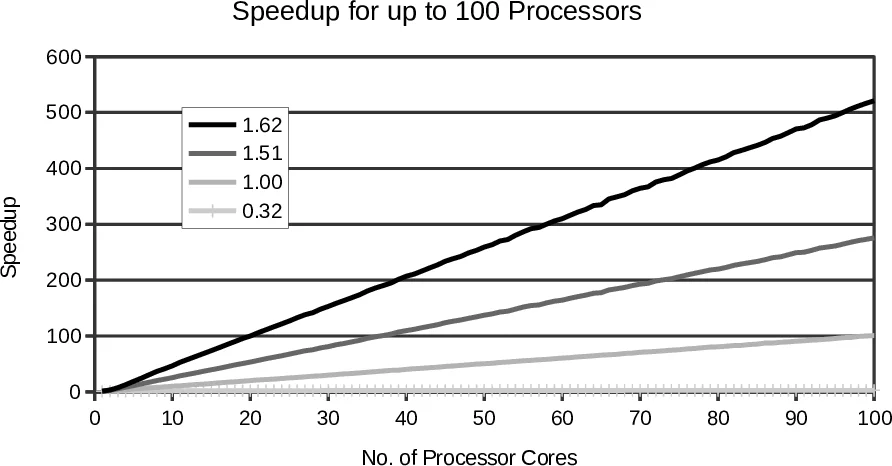

In competitive parallel computing, identical copies of the code in one phase of a sequential program are assigned to processor cores, and the result of the fastest core is adopted. The literature reports that a superlinear speedup can be achieved if the execution times consumed by the cores fluctuate sufficiently. Competitive parallel computing is a promising approach to using a huge number of cores effectively. At present, however, there are few theoretical studies of the speedups it can achieve. In this paper, we present a behavioral model of competitive parallel computing and, through theoretical analyses and simulations, provide a means to predict the speedup it yields. We also found a sufficient condition for competitive parallel computing to yield a linear speedup: it is sufficient for the execution times consumed by the cores to follow an exponential distribution. In addition, we found that different distributions with the same coefficient of variation (CV) do not always yield the same speedup. While CV is a convenient measure for predicting a speedup, it is not enough to provide an exact prediction.
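Why the exponential case gives a linear speedup can be seen in a few lines. The derivation below is a sketch under two assumptions not spelled out in the abstract: the n execution times X_i are i.i.d. exponential with rate λ, and speedup is measured as the ratio of expected times S_n = E[X] / E[Y_n], where Y_n is the time of the fastest core.

```latex
P(Y_n > y) = \prod_{i=1}^{n} P(X_i > y) = \left(e^{-\lambda y}\right)^{n} = e^{-n\lambda y}, \qquad y \ge 0,

\text{so } Y_n \sim \mathrm{Exp}(n\lambda), \qquad
E[Y_n] = \frac{1}{n\lambda}, \qquad
S_n = \frac{E[X]}{E[Y_n]} = \frac{1/\lambda}{1/(n\lambda)} = n.
```

The minimum of n exponential variables is again exponential with n times the rate, which is what makes the speedup exactly n for every n.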

💡 Research Summary

The paper addresses the growing challenge of exploiting ever‑increasing numbers of processor cores without the overhead of traditional parallel programming. It proposes “competitive parallel computing” (CPC), a paradigm in which identical copies of a program phase are dispatched to multiple cores and the result from the fastest core is accepted while the others are terminated. This approach eliminates the need for explicit parallelization and can be applied even to algorithms that are inherently difficult to parallelize.
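The dispatch-and-race pattern described above can be sketched with Python's `concurrent.futures`. This is a toy under stated assumptions, not the paper's implementation: `randomized_search` is a hypothetical stand-in for a program phase whose running time varies between copies (here an exponentially distributed sleep with an assumed mean of 0.1 s), threads stand in for cores, and because Python cannot kill a thread, the losing copies are terminated cooperatively through a shared `threading.Event`.

```python
import concurrent.futures as cf
import random
import threading
import time


def randomized_search(seed: int, stop: threading.Event) -> tuple[int, float]:
    """Hypothetical phase whose running time differs from copy to copy.

    Returns (seed, intended_duration), or (seed, nan) if the copy was told to stop.
    """
    duration = random.Random(seed).expovariate(10.0)  # assumed: mean 0.1 s
    step, elapsed = 0.005, 0.0
    while elapsed < duration:
        if stop.is_set():          # a faster copy has already won
            return seed, float("nan")
        time.sleep(step)           # stands in for a slice of real work
        elapsed += step
    return seed, duration


def run_competitively(n: int) -> tuple[int, float]:
    """Run n identical copies and adopt the result of the first to finish."""
    stop = threading.Event()
    with cf.ThreadPoolExecutor(max_workers=n) as pool:
        futures = [pool.submit(randomized_search, s, stop) for s in range(n)]
        done, _ = cf.wait(futures, return_when=cf.FIRST_COMPLETED)
        # Every future in `done` finished before `stop` was set, so none is nan.
        winner = min((f.result() for f in done), key=lambda r: r[1])
        stop.set()                 # cooperatively terminate the slower copies
    return winner


if __name__ == "__main__":
    seed, t = run_competitively(8)
    print(f"copy {seed} won after about {t:.3f} s")
```

Leaving the `with` block waits for the losing threads, which return within one sleep step of `stop.set()`. With processes instead of threads, the losers could be terminated outright, at the cost of more start-up overhead.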

A mathematical model is introduced: the execution time of core i is represented by an independent, identically distributed (i.i.d.) random variable X_i, i = 1, …, n. The overall execution time of the phase is the minimum Y_n = min(X_1, …, X_n). Because Y_n exceeds y only when every X_i exceeds y, independence and order statistics give the cumulative distribution function (CDF) of Y_n as

F_{Y_n}(y) = 1 − (1 − F_X(y))^n,

where F_X is the CDF of the individual execution times.
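The formula and the CV remark in the abstract can be checked numerically. The Python sketch below simulates Y_n and estimates the speedup as E[X] / E[Y_n]. The three distributions are an illustrative assumption, not the paper's experimental setup: all have mean 1, the exponential has CV = 1, and Uniform(0, 2) and Erlang-3 both have CV = 1/√3. The names `DISTRIBUTIONS`, `cdf_of_min`, `empirical_cdf_of_min`, and `estimated_speedup` are hypothetical.

```python
import math
import random

# Hypothetical execution-time distributions, all with mean 1.
# Uniform(0, 2) and Erlang-3 are chosen because they share CV = 1/sqrt(3).
DISTRIBUTIONS = {
    "exponential": lambda rng: rng.expovariate(1.0),        # CV = 1
    "uniform": lambda rng: rng.uniform(0.0, 2.0),           # CV = 1/sqrt(3)
    "erlang3": lambda rng: rng.gammavariate(3, 1 / 3),      # CV = 1/sqrt(3)
}


def sample_min(sample, n, rng):
    """One draw of Y_n = min(X_1, ..., X_n) with X_i i.i.d. from `sample`."""
    return min(sample(rng) for _ in range(n))


def cdf_of_min(cdf_x, n, y):
    """F_{Y_n}(y) = 1 - (1 - F_X(y))^n."""
    return 1.0 - (1.0 - cdf_x(y)) ** n


def empirical_cdf_of_min(sample, n, y, trials, rng):
    """Fraction of simulated runs in which the fastest of n copies finishes by y."""
    return sum(sample_min(sample, n, rng) <= y for _ in range(trials)) / trials


def estimated_speedup(sample, n, trials, rng):
    """Monte Carlo estimate of E[X] / E[Y_n]."""
    mean_x = sum(sample(rng) for _ in range(trials)) / trials
    mean_y = sum(sample_min(sample, n, rng) for _ in range(trials)) / trials
    return mean_x / mean_y


if __name__ == "__main__":
    rng = random.Random(1)
    n, y = 8, 0.1
    exp_cdf = lambda t: 1.0 - math.exp(-t)
    print("F_Y(0.1) predicted:", round(cdf_of_min(exp_cdf, n, y), 4))
    print("F_Y(0.1) simulated:",
          round(empirical_cdf_of_min(DISTRIBUTIONS["exponential"], n, y, 20000, rng), 4))
    for name, sample in DISTRIBUTIONS.items():
        print(name, "speedup, n = 8:", round(estimated_speedup(sample, n, 20000, rng), 2))
```

For n = 8 the simulated F_Y(0.1) should agree with 1 − e^(−0.8) ≈ 0.551 up to Monte Carlo noise. The exponential speedup should land near 8, and the uniform one near (n + 1)/2 = 4.5, because the minimum of n Uniform(0, 2) draws has mean 2/(n + 1). The Erlang-3 estimate comes out well below the uniform one even though the two distributions share a CV.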

Four probability distributions are examined for X_i: exponential, Erlang, hyper‑exponential, and uniform. All are calibrated to have the same mean (E
