AI for Earth: Rainforest Conservation by Acoustic Surveillance

Saving rainforests is key to halting adverse climate change. In this paper, we introduce an innovative solution built on acoustic surveillance and machine learning technologies to help rainforest conservation. In particular, we propose new convolutional neural network (CNN) models for environmental sound classification and achieve promising preliminary results on two datasets: a public audio dataset and our real rainforest sound dataset. The proposed audio classification models can be easily extended in an automated machine learning paradigm and integrated in cloud-based services for real-world deployment.

💡 Research Summary

The paper “AI for Earth: Rainforest Conservation by Acoustic Surveillance” presents a novel approach that leverages acoustic monitoring and deep‑learning techniques to detect illegal activities and monitor biodiversity in tropical rainforests. Recognizing that visual surveillance is hampered by dense canopy, limited field of view, and variable lighting, the authors argue that sound offers a low‑bandwidth, high‑information‑density alternative that can be captured with inexpensive, battery‑efficient devices in remote, power‑constrained environments.

Two custom convolutional neural network (CNN) architectures are introduced to address the domain gap between existing urban‑centric audio datasets and the unique acoustic conditions of rainforests, while also meeting the computational constraints of edge devices.

-

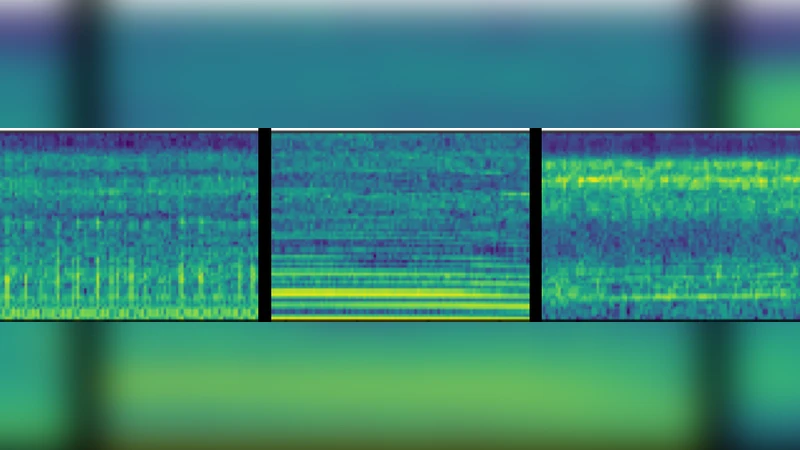

Aug‑VGGish – A heavily pruned version of the widely used VGG‑ish model. The original 72.1 M‑parameter network is reduced to 4.7 M parameters through layer pruning, replacement of the final dense layers with a global pooling operation, and shrinking the fully‑connected layers from 4096 to 256 units. Batch Normalization is added after each convolutional layer to enable higher learning rates and improve training stability. This design allows the model to accept spectrograms of varying dimensions, a practical necessity for field‑deployed microphones.

- FCN‑VGGish – Building on Aug‑VGGish, this model adopts a fully‑convolutional architecture with eight convolutional layers (18.7 M parameters in total). By eliminating all fully‑connected layers, the network preserves spatial resolution throughout the forward pass, thereby capturing finer‑grained acoustic cues that may be lost in a global pooling step.
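
The parameter figures above are easier to interpret with a little arithmetic. The sketch below counts the weights of a VGGish-style network and shows why the dense layers dominate. The channel plan, the 96 x 64 input patch, and the 4096/4096/128 dense stack are assumptions taken from the publicly released VGGish reference model, not from this summary, and the helper names are hypothetical. It does not attempt to reproduce the 4.7 M or 18.7 M figures, since the exact layer layouts of Aug‑VGGish and FCN‑VGGish are not given here.

```python
# Parameter arithmetic for a VGGish-style network.
# ASSUMPTION: layer sizes follow the public VGGish reference model;
# they are not stated in the summary above.

def conv_params(in_ch, out_ch, kernel=3):
    """Weights plus biases of one kernel x kernel convolution."""
    return in_ch * out_ch * kernel * kernel + out_ch

def dense_params(in_units, out_units):
    """Weights plus biases of one fully-connected layer."""
    return in_units * out_units + out_units

def feature_map_size(height, width, num_pools=4):
    """Spatial size after num_pools 2x2 max-pools ('same'-padded convs assumed)."""
    for _ in range(num_pools):
        height, width = height // 2, width // 2
    return height, width

# Assumed channel plan: 1 -> 64 -> 128 -> 256 -> 256 -> 512 -> 512.
CHANNELS = [1, 64, 128, 256, 256, 512, 512]
conv_total = sum(conv_params(a, b) for a, b in zip(CHANNELS, CHANNELS[1:]))

# Assumed input: a 96 x 64 log-mel patch, which pools down to 6 x 4 x 512.
h, w = feature_map_size(96, 64)
flat = h * w * CHANNELS[-1]
dense_total = (
    dense_params(flat, 4096)
    + dense_params(4096, 4096)
    + dense_params(4096, 128)
)
total = conv_total + dense_total

print(f"conv layers : {conv_total / 1e6:.1f} M")
print(f"dense layers: {dense_total / 1e6:.1f} M")
print(f"total       : {total / 1e6:.1f} M")

# A longer spectrogram yields a larger feature map, so its flattened length
# no longer matches the first dense layer. Pooling over the map, or staying
# fully convolutional, removes that fixed-size requirement.
print(feature_map_size(96, 64), feature_map_size(200, 64))
```

Under these assumptions the total comes to about 72.1 M, matching the size quoted for the original network, with roughly 4.5 M of that in the convolutional stack. This is consistent with the summary's point that removing or shrinking the dense layers accounts for most of the reduction.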

Both models are pre‑trained on the weakly labeled AudioSet (≈2 M audio clips) to learn generic sound representations, then fine‑tuned on two target tasks:

- ESC‑50 benchmark – A balanced dataset of 2 000 five‑second clips spanning 50 environmental sound classes. Using 5‑fold cross‑validation, Aug‑VGGish achieves 87.5 % accuracy (F1 = 0.870) and FCN‑VGGish reaches 90.1 % accuracy (F1 = 0.898), surpassing the vanilla VGG‑ish baseline (81.3 %) and the previously reported state‑of‑the‑art method (86.5 %).

- Real‑world rainforest recordings – Collected on‑site with Huawei smartphones (chosen for their durability and long battery life), the dataset comprises 22 000 one‑second clips annotated for the presence of chainsaw noise. The data are heavily imbalanced, reflecting the rarity of illegal logging events. Transfer learning from AudioSet followed by fine‑tuning yields precision‑recall curves showing that Aug‑VGGish outperforms the original VGG‑ish, while FCN‑VGGish delivers the best overall performance. The authors note that the current collection lacks extreme background sounds (e.g., insect buzzing), which may inflate performance metrics relative to a fully realistic acoustic environment.
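
The two tasks are reported with different metrics: accuracy and F1 for the balanced ESC‑50 benchmark, and precision-recall curves for the imbalanced chainsaw detector. The following minimal sketch shows how such metrics can be computed and why accuracy alone is misleading when positives are rare. The function names and toy data are made up for illustration, and the use of macro-averaged F1 is an assumption, since the summary does not say which averaging the authors used.

```python
# Evaluation metrics sketch. All data below is made up for illustration.

def accuracy(y_true, y_pred):
    """Fraction of clips whose predicted label matches the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 (ASSUMPTION: macro averaging)."""
    scores = []
    for c in sorted(set(y_true)):
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

def precision_recall_curve(labels, scores):
    """(threshold, precision, recall) per distinct score, highest threshold first."""
    positives = sum(labels)
    points = []
    for thr in sorted(set(scores), reverse=True):
        predicted = [s >= thr for s in scores]
        tp = sum(p and l == 1 for p, l in zip(predicted, labels))
        fp = sum(p and l == 0 for p, l in zip(predicted, labels))
        precision = tp / (tp + fp)  # tp + fp >= 1: thr is one of the scores
        recall = tp / positives if positives else 0.0
        points.append((thr, precision, recall))
    return points

# Toy imbalanced detector: 1 = chainsaw present, 0 = background.
labels = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]
scores = [0.1, 0.2, 0.1, 0.7, 0.3, 0.2, 0.1, 0.4, 0.9, 0.6]

# A detector that never fires still looks accurate on imbalanced data.
never_fires = [0] * len(labels)
print("accuracy of 'never fires':", accuracy(labels, never_fires))
print("macro F1 of 'never fires':", round(macro_f1(labels, never_fires), 3))

for thr, precision, recall in precision_recall_curve(labels, scores):
    print(f"thr={thr:.1f}  precision={precision:.2f}  recall={recall:.2f}")
```

In this toy example the detector that never fires is correct on 80 % of clips while finding no chainsaws at all, which is why a precision-recall view is the more informative choice for rare events.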

The paper also discusses practical deployment considerations. The lightweight nature of Aug‑VGGish makes it suitable for on‑device inference on IoT nodes, whereas FCN‑VGGish, though larger, can be hosted in a cloud environment to provide higher accuracy for periodic batch analyses. Integration with Huawei Cloud services is proposed to enable automated model updates, scalable storage of audio streams, and real‑time alert generation for conservation partners.

Future work outlined by the authors includes:

- Exploring few‑shot and meta‑learning strategies to reduce dependence on large labeled datasets, which are costly to obtain in remote rainforest settings.

- Extending the binary chainsaw detector to multi‑class scenarios such as animal vocalization identification, habitat modeling for species like spider monkeys, and broader biodiversity monitoring.

- Building a full end‑to‑end cloud‑AI pipeline that connects field sensors, edge inference, cloud‑based model training, and a user‑friendly dashboard for NGOs and forest rangers.

In summary, the study demonstrates that acoustic surveillance, when combined with carefully engineered CNN models and transfer learning, can achieve high‑accuracy detection of illegal logging sounds in challenging rainforest environments. The proposed models balance computational efficiency with performance, making them viable for both on‑device and cloud‑based deployments. By providing concrete experimental results, a clear path toward scalable cloud services, and a roadmap for future enhancements, the paper makes a substantive contribution to the emerging field of AI‑enabled environmental conservation.
