Forecasting Cardiology Admissions from Catheterization Laboratory

Emergent and unscheduled cardiology admissions from the cardiac catheterization laboratory add complexity to the management of cardiology and inpatient departments. In this article, we study the behavior of cardiology admissions from the catheterization laboratory using time series models. Our research uses retrospective cardiology admission data from March 1, 2012, to November 3, 2016, retrieved from a hospital in Iowa. Autoregressive integrated moving average (ARIMA), Holt's method, the mean method, the naïve method, the seasonal naïve method, exponential smoothing, and the drift method were implemented to forecast weekly cardiology admissions from the catheterization laboratory. ARIMA (2,0,2) (1,1,1) was selected as the best-fit model, with the minimum sum of error, Akaike information criterion, and Schwarz Bayesian criterion. The model failed to reject the null hypothesis of stationarity, lacked the evidence of independence, and rejected the null hypothesis of normality. The findings of this study can not only improve catheterization laboratory staff scheduling and promote efficient use of imaging equipment and inpatient telemetry beds, but also equip management to proactively tackle inpatient overcrowding, plan for physical capacity expansion, and so forth.

💡 Research Summary

This paper addresses the operational challenge posed by emergent and unscheduled cardiology admissions originating from a cardiac catheterization laboratory. The authors aim to develop a reliable forecasting tool for weekly admissions to improve staff scheduling, equipment utilization, and inpatient telemetry bed management.

Data were collected retrospectively from a single Iowa hospital, covering the period March 1 2012 to November 3 2016, yielding 244 weekly admission counts. The first 200 weeks (up to December 31 2015) served as the training set, while the remaining 44 weeks were used for validation.

A suite of time‑series methods was implemented in R: ARIMA, Holt’s linear trend, simple mean, naïve, seasonal naïve, single exponential smoothing (SES), and drift. Model selection relied on Akaike Information Criterion (AIC), Schwarz Bayesian Criterion (BIC), and a single “sum of error” metric. The ARIMA(2,0,2)(1,1,1) specification achieved the lowest AIC (1528), BIC (1553), and sum of error (27.9), outperforming the alternative approaches (Holt 34.0, SES 37.7, mean 44.6, drift 45.4, naïve 45.7, seasonal naïve 60.7).
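The simpler benchmarks named above are easy to state in code. The following is a minimal Python sketch of the mean, naïve, seasonal naïve, drift, and SES forecasts, not the authors' R implementation. The function names, the smoothing parameter `alpha`, the seasonal `period`, and the sample counts are all assumptions made for illustration.

```python
from statistics import mean


def mean_forecast(history, horizon):
    """Forecast every future week as the average of all past weeks."""
    return [mean(history)] * horizon


def naive_forecast(history, horizon):
    """Forecast every future week as the last observed value."""
    return [history[-1]] * horizon


def seasonal_naive_forecast(history, horizon, period):
    """Repeat the value observed one full season (period weeks) earlier."""
    start = len(history) - period
    return [history[start + (h % period)] for h in range(horizon)]


def drift_forecast(history, horizon):
    """Extend the straight line joining the first and last observations."""
    slope = (history[-1] - history[0]) / (len(history) - 1)
    return [history[-1] + slope * h for h in range(1, horizon + 1)]


def ses_forecast(history, horizon, alpha=0.3):
    """Simple exponential smoothing; alpha is an assumed, untuned value."""
    level = history[0]
    for y in history[1:]:
        level = alpha * y + (1 - alpha) * level
    return [level] * horizon


# Made-up weekly counts for illustration only (not the study's data).
weekly = [12, 15, 11, 14, 13, 16, 12, 15]
train, valid = weekly[:6], weekly[6:]

for name, forecast in [
    ("mean", mean_forecast(train, len(valid))),
    ("naive", naive_forecast(train, len(valid))),
    ("seasonal naive", seasonal_naive_forecast(train, len(valid), period=4)),
    ("drift", drift_forecast(train, len(valid))),
    ("SES", ses_forecast(train, len(valid))),
]:
    print(name, forecast)
```

Each function takes only the training history, which mirrors the train/validation split described earlier: forecasts are produced from the training weeks and compared against the held-out weeks afterwards.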

Stationarity was examined with three tests. The Augmented Dickey‑Fuller (ADF) and Phillips‑Perron (PP) unit‑root tests both rejected non‑stationarity (p = 0.01), and the KPSS test, whose null hypothesis is stationarity, failed to reject it (p = 0.1), so all three results are consistent with stationarity. Autocorrelation of residuals was assessed via the Box‑Ljung test (p = 0.73), suggesting no significant autocorrelation, though the authors ambiguously describe a “lack of evidence of independence.”
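For readers unfamiliar with the Box‑Ljung test, its statistic is Q = n(n + 2) Σ ρ_k² / (n − k), summed over lags k = 1…h, where ρ_k is the lag‑k sample autocorrelation of the residuals. The sketch below computes Q in plain Python. The function names and the toy residuals are assumptions for illustration, and the paper's own lag choice is not stated in this summary.

```python
def autocorrelation(x, lag):
    """Sample autocorrelation of x at the given lag."""
    n = len(x)
    m = sum(x) / n
    denom = sum((v - m) ** 2 for v in x)
    num = sum((x[t] - m) * (x[t - lag] - m) for t in range(lag, n))
    return num / denom


def ljung_box_q(residuals, max_lag):
    """Ljung-Box Q statistic over lags 1..max_lag."""
    n = len(residuals)
    return n * (n + 2) * sum(
        autocorrelation(residuals, k) ** 2 / (n - k)
        for k in range(1, max_lag + 1)
    )


# Toy residuals that alternate in sign, i.e. strongly autocorrelated.
toy = [1, -1, 1, -1, 1, -1]
print(ljung_box_q(toy, max_lag=1))
```

Under the null hypothesis of no autocorrelation, Q is compared against a chi‑square distribution; a large p‑value such as the reported 0.73 means the test found no evidence of residual autocorrelation.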

Residual normality was tested using the Anderson‑Darling, Shapiro‑Wilk, Cramér‑von Mises, Kolmogorov‑Smirnov, Shapiro‑Francia, and Pearson χ² tests; all yielded p < 0.05, indicating non‑normal residuals. Consequently, prediction intervals derived from the model may be unreliable.

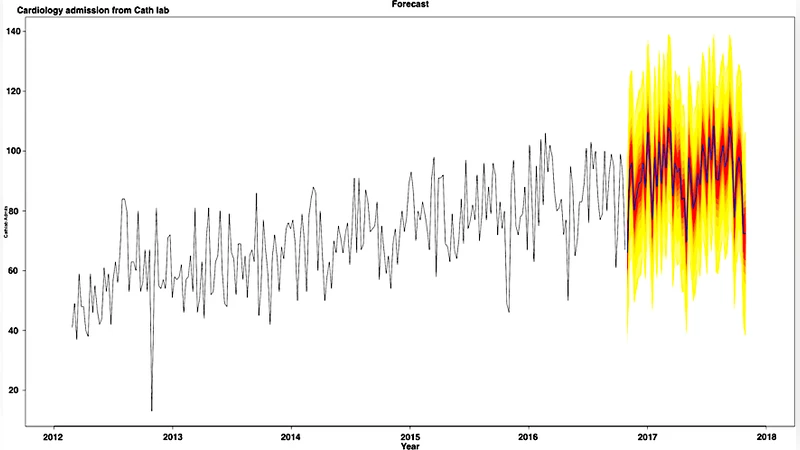

Forecast performance was illustrated by projecting 52 weeks ahead (December 3 2016 – December 2 2017) and visually comparing predicted versus observed admissions. The plot shows reasonable alignment, but the paper provides no quantitative error measures (e.g., MAE, RMSE, MAPE) for this out‑of‑sample period, limiting the assessment of predictive accuracy.
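The error measures named above are simple to compute once held‑out observations are available. This is a minimal Python sketch with assumed function names and made‑up numbers; it does not reproduce any result from the paper.

```python
from math import sqrt


def mae(actual, predicted):
    """Mean absolute error."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def rmse(actual, predicted):
    """Root mean squared error; penalizes large misses more than MAE."""
    return sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def mape(actual, predicted):
    """Mean absolute percentage error; undefined if any actual value is 0."""
    return 100 * sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted)) / len(actual)


# Made-up observed and forecast weekly counts, for illustration only.
observed = [10, 20]
forecast = [12, 16]
print(mae(observed, forecast), rmse(observed, forecast), mape(observed, forecast))
```

Because weekly admission counts can be zero, MAPE may be undefined for some weeks, which is one reason to report MAE and RMSE alongside it.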

The discussion emphasizes that ARIMA outperforms simpler benchmarks and can support proactive resource allocation, potentially reducing overcrowding and informing capacity expansion. However, several methodological limitations are evident: (1) the analysis is confined to a single institution, precluding external validation; (2) non‑normal residuals raise concerns about the validity of prediction intervals; (3) reliance on a single error metric obscures a fuller picture of model performance; (4) the treatment of seasonality (weekly cycles) is not deeply explored; and (5) no comparison with more advanced approaches (e.g., neural networks, fuzzy logic, TBATS) is performed, even though these are mentioned as future work.

In conclusion, the study demonstrates that an ARIMA(2,0,2)(1,1,1) model can capture the weekly pattern of cardiology admissions from a catheterization lab within the examined dataset and may serve as a decision‑support tool for hospital administrators. To translate this into robust, generalizable practice, future research should incorporate multi‑center data, conduct cross‑validation, address residual non‑normality (e.g., via transformation or robust error modeling), employ multiple forecast accuracy metrics, and benchmark against advanced predictive algorithms. Such enhancements would strengthen confidence in the model’s utility for real‑time staffing, bed allocation, and overall patient flow management.
