Attributing Fake Images to GANs: Learning and Analyzing GAN Fingerprints

Recent advances in Generative Adversarial Networks (GANs) have shown increasing success in generating photorealistic images. But they also raise challenges to visual forensics and model attribution. We present the first study of learning GAN fingerprints towards image attribution and using them to classify an image as real or GAN-generated. For GAN-generated images, we further identify their sources. Our experiments show that (1) GANs carry distinct model fingerprints and leave stable fingerprints in their generated images, which support image attribution; (2) even minor differences in GAN training can result in different fingerprints, which enables fine-grained model authentication; (3) fingerprints persist across different image frequencies and patches and are not biased by GAN artifacts; (4) fingerprint finetuning is effective in immunizing against five types of adversarial image perturbations; and (5) comparisons also show our learned fingerprints consistently outperform several baselines in a variety of setups.

💡 Research Summary

The paper tackles two pressing challenges posed by the rapid advancement of Generative Adversarial Networks (GANs): reliable visual forensics for detecting synthetic imagery, and protection of the intellectual property of GAN models. Existing forensic tools either focus on semantic or physical inconsistencies in specific manipulation scenarios or rely on handcrafted artifacts tied to a single GAN architecture. Digital watermarking and traditional device‑fingerprinting techniques are also unsuitable because they require white‑box access or embed external signals. To address these gaps, the authors introduce the notion of “GAN fingerprints”: intrinsic, model‑specific signatures that are automatically embedded in every image a GAN generates.

A fingerprint is defined on two levels. The model fingerprint is a reference vector (implemented as the weight vector of the final fully‑connected layer) that uniquely characterizes a particular GAN instance, encompassing architecture, training data, loss functions, optimizer settings, and even random initialization seeds. The image fingerprint is the feature representation extracted from an individual image (the 1×1×512 tensor before the final classifier), which captures the stable statistical patterns left by the generating model.

The core methodology consists of training a deep convolutional classifier on a labeled set of images drawn from several GANs and from real photographs. The classifier learns a unified embedding space where images from different sources are linearly separable. By interpreting the classifier’s final layer weights as model fingerprints and its penultimate features as image fingerprints, the system simultaneously learns both representations without any explicit supervision for the fingerprints themselves.
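The relationship between the two fingerprints can be illustrated with a minimal sketch. It assumes the final layer is a plain linear map without a bias term, so each source's logit is the dot product between the image fingerprint and that source's model fingerprint. The function name `attribute` and the toy 4-D vectors are hypothetical; the summary describes 512-D features.

```python
import math

def attribute(image_fp, model_fps):
    """Score one image fingerprint against every model fingerprint.

    image_fp  : list of floats, penultimate-layer features of one image
    model_fps : dict mapping source name -> final-layer weight row
    Returns (predicted_source, softmax probability per source).
    """
    # Logit for each source = dot product of the two fingerprints.
    logits = {name: sum(w * x for w, x in zip(fp, image_fp))
              for name, fp in model_fps.items()}
    # Numerically stable softmax over the logits.
    peak = max(logits.values())
    exps = {name: math.exp(v - peak) for name, v in logits.items()}
    total = sum(exps.values())
    probs = {name: e / total for name, e in exps.items()}
    return max(probs, key=probs.get), probs

# Toy values for illustration only (not taken from the paper).
model_fps = {
    "real":   [1.0, 0.0, 0.0, 0.0],
    "ProGAN": [0.0, 1.0, 0.0, 0.0],
    "SNGAN":  [0.0, 0.0, 1.0, 0.0],
}
image_fp = [0.1, 0.9, 0.2, 0.0]
source, probs = attribute(image_fp, model_fps)
```

Under this reading, attribution amounts to finding the model fingerprint that best aligns with the image fingerprint, which is why no separate fingerprint supervision is needed beyond the source labels.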

To probe where the fingerprints reside, the authors design three families of network variants:

- Pre‑downsampling networks replace early convolutions with fixed Gaussian down‑sampling, thereby forcing the model to rely on low‑frequency information.

- Pre‑downsampling residual networks compute the difference between two successive resolutions (akin to a Laplacian pyramid) to isolate high‑frequency residuals.

- Post‑pooling networks start average pooling at different spatial resolutions, effectively varying the patch size over which statistics are aggregated.

Across all variants, attribution accuracy remains high (often >90 % even at 8×8 resolution), demonstrating that fingerprints are not confined to conspicuous visual artifacts but are distributed throughout the frequency spectrum and across local patches.
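The low-frequency and residual inputs used by the pre-downsampling variants can be sketched as follows. This is a simplified illustration, not the authors' implementation: it assumes a grayscale image stored as a list of rows, uses a separable [1, 2, 1]/4 binomial kernel as an approximation of Gaussian filtering, and upsamples by nearest neighbour. All function names are hypothetical.

```python
def blur_downsample(img):
    """Halve resolution: 3x3 binomial blur, then keep every second pixel."""
    h, w = len(img), len(img[0])

    def px(y, x):
        # Clamp coordinates so border pixels are replicated.
        return img[min(max(y, 0), h - 1)][min(max(x, 0), w - 1)]

    k = (1, 2, 1)
    out = []
    for y in range(0, h, 2):
        row = []
        for x in range(0, w, 2):
            acc = sum(k[i] * k[j] * px(y + i - 1, x + j - 1)
                      for i in range(3) for j in range(3))
            row.append(acc / 16.0)  # kernel weights sum to 16
        out.append(row)
    return out

def upsample_nearest(img, h, w):
    """Nearest-neighbour upsampling back to an h x w grid."""
    return [[img[y // 2][x // 2] for x in range(w)] for y in range(h)]

def split_bands(img):
    """Return (low-frequency image, high-frequency residual)."""
    h, w = len(img), len(img[0])
    low = blur_downsample(img)
    up = upsample_nearest(low, h, w)
    residual = [[img[y][x] - up[y][x] for x in range(w)] for y in range(h)]
    return low, residual

flat = [[5.0] * 4 for _ in range(4)]
low, residual = split_bands(flat)  # a flat image has no high-frequency content
```

Feeding only `low` to the classifier corresponds to the low-frequency variant, and feeding only `residual` corresponds to the Laplacian-style variant described above.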

Robustness is evaluated against five common perturbations (additive noise, Gaussian blur, JPEG compression, cropping, and relighting) as well as random combinations of them. The baseline classifier’s performance degrades sharply under these perturbations, but a simple fine‑tuning step on adversarially perturbed data restores accuracy to >80 % in most cases, indicating that the fingerprint representation can be made resilient through targeted adaptation.
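A data-augmentation routine for this kind of fine-tuning might look like the sketch below. It is an assumption-laden illustration: images are grayscale lists of rows with values in [0, 255], a 3x3 mean filter stands in for Gaussian blur, relighting is modelled as a global gain and offset, and JPEG compression is omitted because the Python standard library has no JPEG codec. All parameter ranges and function names are hypothetical.

```python
import random

def add_noise(img, rng, sigma=5.0):
    """Additive Gaussian noise (sigma is a hypothetical strength)."""
    return [[p + rng.gauss(0.0, sigma) for p in row] for row in img]

def box_blur(img, rng=None):
    """3x3 mean filter, a simple stand-in for Gaussian blur."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            vals = [img[j][i]
                    for j in range(max(0, y - 1), min(h, y + 2))
                    for i in range(max(0, x - 1), min(w, x + 2))]
            row.append(sum(vals) / len(vals))
        out.append(row)
    return out

def random_crop(img, rng, margin=1):
    """Drop `margin` rows and columns in total, at a random offset."""
    h, w = len(img), len(img[0])
    top, left = rng.randint(0, margin), rng.randint(0, margin)
    return [row[left:w - (margin - left)]
            for row in img[top:h - (margin - top)]]

def relight(img, rng):
    """Global brightness/contrast change: gain * pixel + offset."""
    gain, offset = rng.uniform(0.8, 1.2), rng.uniform(-10.0, 10.0)
    return [[gain * p + offset for p in row] for row in img]

OPS = [add_noise, box_blur, random_crop, relight]

def perturb(img, rng):
    """Apply a random non-empty subset of OPS, then clip to [0, 255]."""
    chosen = [op for op in OPS if rng.random() < 0.5] or [rng.choice(OPS)]
    for op in chosen:
        img = op(img, rng)
    return [[min(255.0, max(0.0, p)) for p in row] for row in img]

rng = random.Random(0)
clean = [[float(16 * (x + y)) for x in range(8)] for y in range(8)]
augmented = [perturb(clean, rng) for _ in range(4)]
```

Each perturbed copy keeps the source label of its clean original, so fine-tuning the classifier on `augmented` exposes it to the same distortions it will face at test time.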

For interpretability, the authors propose a visualization pipeline that replaces the implicit feature‑based fingerprint with an explicit image‑domain representation. An auto‑encoder reconstructs the input image; the reconstruction residual is defined as the image fingerprint. Simultaneously learned model fingerprints (free parameters of the same spatial size) are correlated with the residuals, and the correlation scores feed a softmax classifier. This yields visually interpretable “fingerprint images” that differ markedly across GAN families, confirming that the learned signatures correspond to genuine, model‑specific patterns rather than arbitrary classifier quirks.
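The scoring step of this pipeline can be sketched as below, assuming the auto-encoder reconstruction is already available. The sketch uses a mean-centred, normalized correlation as the similarity measure, which is an assumption; the summary does not specify the exact correlation used. Function names and the 2x2 toy values are hypothetical.

```python
import math

def correlate(a, b):
    """Normalized correlation between two same-sized images."""
    fa = [p for row in a for p in row]
    fb = [p for row in b for p in row]
    ma, mb = sum(fa) / len(fa), sum(fb) / len(fb)
    num = sum((x - ma) * (y - mb) for x, y in zip(fa, fb))
    den = math.sqrt(sum((x - ma) ** 2 for x in fa) *
                    sum((y - mb) ** 2 for y in fb))
    return num / den if den else 0.0

def classify_residual(image, reconstruction, model_fps):
    """Image fingerprint = image - reconstruction; softmax over correlations."""
    residual = [[p - q for p, q in zip(r1, r2)]
                for r1, r2 in zip(image, reconstruction)]
    scores = {name: correlate(residual, fp) for name, fp in model_fps.items()}
    peak = max(scores.values())
    exps = {name: math.exp(s - peak) for name, s in scores.items()}
    total = sum(exps.values())
    probs = {name: e / total for name, e in exps.items()}
    return residual, max(probs, key=probs.get), probs

# Toy 2x2 example; values are illustrative only.
image = [[10.0, 12.0], [9.0, 11.0]]
reconstruction = [[9.0, 11.0], [10.0, 10.0]]
model_fps = {
    "A": [[1.0, 1.0], [-1.0, 1.0]],
    "B": [[-1.0, 1.0], [1.0, 1.0]],
}
residual, source, probs = classify_residual(image, reconstruction, model_fps)
```

Because both the residual and the model fingerprints live in image space, each can be rendered directly as a picture, which is what makes this variant interpretable.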

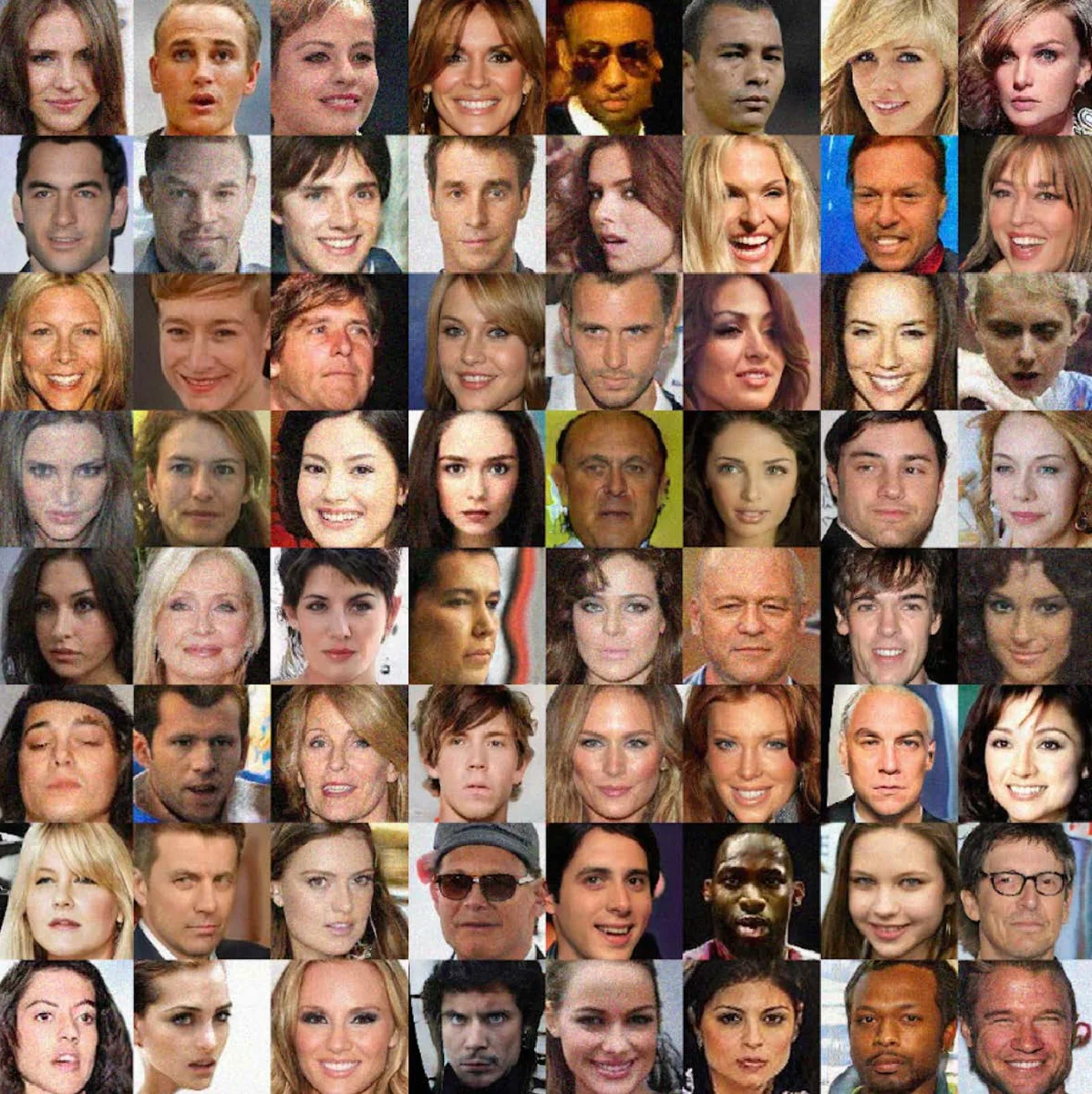

Extensive experiments involve four state‑of‑the‑art unconditional GANs (ProGAN, SNGAN, CramerGAN, MMDGAN) trained on high‑resolution face datasets, as well as diverse real‑world image collections. The proposed method consistently outperforms three baselines: (i) raw Inception‑V3 features, (ii) a recent CNN‑based forensic detector, and (iii) a PRNU‑based GAN fingerprint approach. Gains range from 12 % to 18 % in top‑1 attribution accuracy, and the system can even discriminate between two instances of the same architecture that differ only by random seed, achieving >95 % accuracy.

In summary, the paper demonstrates that GANs leave stable, unique, and persistent fingerprints in their outputs. By learning these fingerprints jointly with a classifier, one can reliably detect synthetic images, attribute them to the exact generating model, and visualize the underlying signatures. The approach is robust to common image perturbations, scalable to multiple GAN families, and offers a practical tool for forensic analysts and model owners seeking to protect their intellectual property against unauthorized use or piracy. Future work may extend the framework to conditional GANs, text‑to‑image models, and real‑time API monitoring scenarios.
