Adversarial Attacks in Sound Event Classification

Adversarial attacks refer to a set of methods that perturb the input to a classification model in order to fool the classifier. In this paper we apply different gradient-based adversarial attack algorithms to five deep learning models trained for sound event classification. Four of the models use mel-spectrogram input and one model uses raw audio input. The models represent standard architectures such as convolutional, recurrent and dense networks. The dataset used for training is the Freesound dataset released for task 2 of the DCASE 2018 challenge, and the models used are from participants of the challenge who open-sourced their code. Our experiments show that adversarial attacks can be generated with high confidence and low perturbation. In addition, we show that the adversarial attacks are very effective across the different models.

💡 Research Summary

This paper investigates the vulnerability of deep‑learning sound event classification systems to adversarial attacks. Using the Freesound‑derived FSDKaggle2018 dataset (task 2, the audio tagging task, of DCASE 2018), the authors evaluate five publicly available models open‑sourced by challenge participants: three convolution‑based architectures (VGG13, CRNN, GCNN) that operate on log‑mel spectrograms, and two DenseNet‑based variants (dense‑mel and dense‑wav) that use log‑mel spectrograms and raw waveform input respectively. All audio is resampled to 32 kHz; spectrogram‑based models use a 1024‑sample window, a 512‑sample hop, and 64 mel bands, and their input is normalized by the absolute maximum value.
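To make the framing parameters concrete, the following minimal sketch computes the time dimension of a spectrogram for a clip at the stated 32 kHz, 1024‑sample window and 512‑sample hop, and applies absolute‑maximum normalization to a short sequence. It assumes no padding at the clip edges, and the 2‑second clip length is hypothetical; the summary does not say how the original models pad or frame their input.

```python
def num_frames(n_samples, win_length=1024, hop_length=512):
    """Count full analysis frames, assuming no edge padding (an assumption)."""
    if n_samples < win_length:
        return 0
    return 1 + (n_samples - win_length) // hop_length


def peak_normalize(values):
    """Scale a sequence so that its largest absolute value becomes 1."""
    peak = max(abs(v) for v in values)
    if peak == 0:
        return list(values)  # silent input: nothing to scale
    return [v / peak for v in values]


SAMPLE_RATE = 32_000  # Hz, from the summary
N_MELS = 64           # mel bands, from the summary

clip_seconds = 2      # hypothetical clip length, for illustration only
frames = num_frames(clip_seconds * SAMPLE_RATE)
print((N_MELS, frames))                    # (64, 124)
print(peak_normalize([0.5, -2.0, 1.0]))    # [0.25, -1.0, 0.5]
```

With these settings, consecutive frames overlap by half a window, so each second of audio contributes roughly 62 frames.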

Four untargeted attack methods are applied: white noise (as a naïve baseline), Fast Gradient Sign Method (FGSM), DeepFool, and Carlini‑Wagner (C&W). Two targeted attacks, L‑BFGS and C&W, are also employed. All attacks are performed in a white‑box setting (full knowledge of architecture and weights). For transferability analysis, adversarial examples generated by DeepFool and C&W on one model are tested on the remaining four models, simulating a zero‑knowledge scenario.
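As an illustration of the simplest gradient‑based attack listed above, here is a minimal FGSM sketch. It assumes a toy two‑class linear softmax classifier (the matrix `W`, the input `x` and the step size `epsilon` are all made up for illustration), not any of the five audio models. FGSM takes a single step of size `epsilon` along the sign of the loss gradient with respect to the input.

```python
import math


def softmax(z):
    m = max(z)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def linear_logits(weights, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def predict(weights, x):
    z = linear_logits(weights, x)
    return z.index(max(z))


def input_gradient(weights, x, label):
    """Gradient of the cross-entropy loss w.r.t. x for a linear softmax model."""
    probs = softmax(linear_logits(weights, x))
    # dL/dz_k = p_k - 1[k == label]; the chain rule through z = W x gives W^T (p - y).
    err = [p - (1.0 if k == label else 0.0) for k, p in enumerate(probs)]
    return [sum(err[k] * weights[k][j] for k in range(len(weights)))
            for j in range(len(x))]


def sign(v):
    return (v > 0) - (v < 0)


def fgsm(weights, x, label, epsilon):
    """One-step untargeted FGSM: move each input dimension by +/- epsilon."""
    grad = input_gradient(weights, x, label)
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]


W = [[1.0, -1.0], [-1.0, 1.0]]  # hypothetical toy weights
x = [0.6, 0.4]                  # hypothetical toy input
y = predict(W, x)
x_adv = fgsm(W, x, y, epsilon=0.2)
print(y, predict(W, x_adv))     # 0 1
```

Because FGSM takes only one step with a fixed budget, it can fail where iterative attacks such as DeepFool or C&W, which keep refining the perturbation, succeed.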

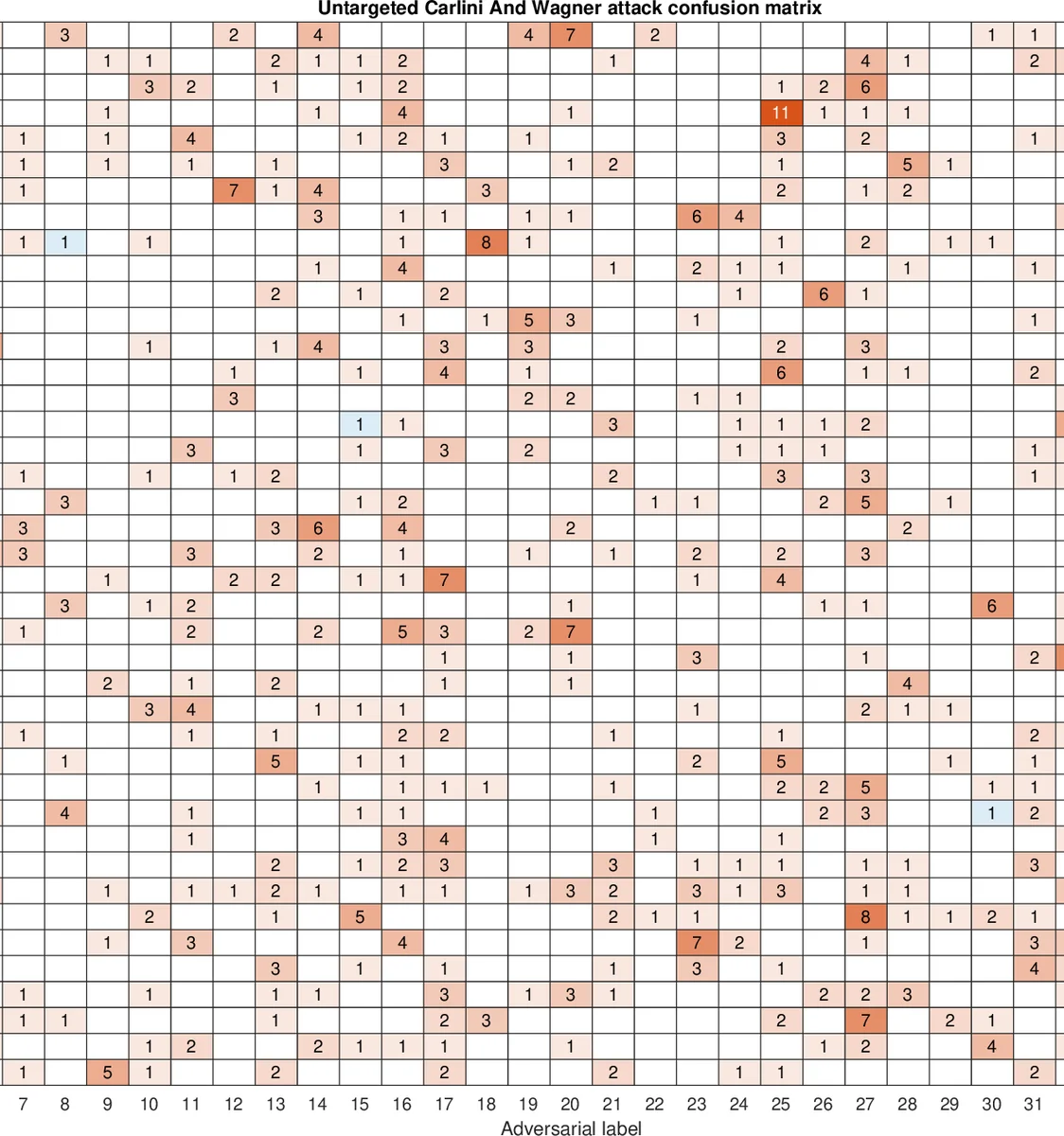

Experiment 1 – Untargeted attacks

A subset of 246 audio clips (six random examples per class across 41 classes) is used to keep computation tractable. Success rate, confidence of the new label, confidence of the original label on the perturbed input, and signal‑to‑noise ratio (SNR) are reported. White noise achieves only 21 % success with an average SNR of 0.37 dB. FGSM improves success to 31 % but still lags far behind the iterative DeepFool and C&W attacks. DeepFool and C&W reach near‑perfect success (99.99 % and 99.51 % respectively), with reported SNRs of ≈0.35 dB for DeepFool and ≈0.71 dB for C&W. Model‑wise, dense‑mel shows the highest SNR (0.36 dB), indicating slightly higher susceptibility, whereas dense‑wav (raw waveform) records the lowest SNR (0.31 dB), suggesting that raw‑waveform models are marginally more robust.
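The two headline metrics can be sketched as follows. This assumes the common definition of SNR as ten times the base‑10 logarithm of signal power over perturbation power; the summary does not state the exact formula the authors used. Under this definition, a smaller perturbation yields a higher SNR. The sample values are made up for illustration.

```python
import math


def snr_db(clean, adversarial):
    """SNR in dB, treating (adversarial - clean) as the noise."""
    signal_power = sum(c * c for c in clean)
    noise_power = sum((a - c) ** 2 for c, a in zip(clean, adversarial))
    if noise_power == 0:
        return math.inf  # identical signals: no perturbation at all
    return 10.0 * math.log10(signal_power / noise_power)


def success_rate(original_labels, adversarial_labels):
    """Fraction of inputs whose predicted label changed (untargeted success)."""
    flipped = sum(o != a for o, a in zip(original_labels, adversarial_labels))
    return flipped / len(original_labels)


clean = [1.0, -1.0, 1.0, -1.0]          # toy signal
adv = [1.1, -0.9, 1.1, -0.9]            # toy signal plus a 0.1 offset
print(round(snr_db(clean, adv), 2))     # 20.0
print(success_rate([0, 1, 2, 3], [1, 1, 0, 0]))  # 0.75
```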

Experiment 2 – Targeted attacks

Six instrument classes (bass drum, snare drum, cello, violin, clarinet, oboe) are selected. For each original class, the attack is run five times against each of the other five classes, yielding 180 targeted attempts. Average SNR required to force a mis‑classification is reported per class pair. C&W consistently needs less perturbation than L‑BFGS (average SNR 4–5 dB vs. 5–6 dB). The snare drum is the hardest target, demanding the highest SNR across all source‑target pairs. Acoustic similarity between instruments does not translate into lower SNR, reinforcing the observation that adversarial label changes are not acoustically intuitive. Success rates for targeted attacks are markedly lower than for untargeted attacks, reflecting the stricter output constraints.
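The per‑pair reporting described above can be sketched as a simple aggregation over ordered source‑target pairs. The function name `mean_snr_per_pair` and the record layout are hypothetical, and the numbers in `toy_results` are made up purely to exercise the code; they are not results from the paper.

```python
from collections import defaultdict
from itertools import permutations

CLASSES = ["bass drum", "snare drum", "cello", "violin", "clarinet", "oboe"]

# Every ordered (source, target) pair with source != target.
PAIRS = list(permutations(CLASSES, 2))


def mean_snr_per_pair(results):
    """Average SNR per (source, target) pair.

    results: iterable of (source, target, snr_db) tuples, one per attack run.
    """
    buckets = defaultdict(list)
    for source, target, snr in results:
        buckets[(source, target)].append(snr)
    return {pair: sum(vals) / len(vals) for pair, vals in buckets.items()}


# Made-up values for illustration only.
toy_results = [
    ("cello", "violin", 4.0),
    ("cello", "violin", 6.0),
    ("oboe", "snare drum", 3.0),
]
print(len(PAIRS))                      # 30
print(mean_snr_per_pair(toy_results))
```

Keeping the pairs ordered matters because an attack from cello to violin need not cost the same perturbation as one from violin to cello.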

Experiment 3 – Transferability

Adversarial examples generated by DeepFool and C&W on a source model are fed to the other four models. Transfer rates are modest: 5 %–10 % of the examples succeed on a different architecture. This low transferability suggests that each model learns a distinct decision boundary, and that adversarial examples which fool several heterogeneous audio classifiers at once are difficult to obtain.
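A transfer rate of this kind can be computed with a few lines. The sketch below assumes that an example "transfers" when the target model's prediction on the adversarial input differs from the original label; the summary does not spell out the authors' exact criterion. The classifier `toy_target_model` and the inputs are hypothetical stand‑ins.

```python
def transfer_rate(target_predict, adversarial_inputs, original_labels):
    """Fraction of adversarial inputs that the target model also mislabels."""
    fooled = sum(
        target_predict(x_adv) != label
        for x_adv, label in zip(adversarial_inputs, original_labels)
    )
    return fooled / len(original_labels)


def toy_target_model(x):
    """Hypothetical second classifier: thresholds the mean of the input."""
    return int(sum(x) / len(x) > 0.5)


# Adversarial inputs crafted against some other (source) model, toy values.
adv_inputs = [[0.9, 0.8], [0.1, 0.2], [0.7, 0.6], [0.2, 0.3]]
labels = [1, 0, 0, 0]
print(transfer_rate(toy_target_model, adv_inputs, labels))  # 0.25
```

A stricter variant would count only examples that the target model classified correctly before the perturbation was applied.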

Key Insights

- Iterative gradient‑based attacks (DeepFool, C&W) are extremely effective against audio classifiers, achieving near‑perfect success with low perturbation.

- Raw‑waveform input (dense‑wav) appears to offer a slight robustness advantage over log‑mel spectrogram input, possibly because the waveform retains more temporal detail that is harder to corrupt without noticeable distortion.

- Targeted attacks are substantially harder; they require larger perturbations and achieve lower success rates, especially for classes with distinctive time‑frequency signatures (e.g., snare drum).

- Transferability is limited, implying that an attacker must tailor adversarial examples to the specific victim model rather than rely on a one‑size‑fits‑all approach.

- The findings raise serious security concerns for real‑world deployments such as surveillance, autonomous vehicles, and content moderation, where mis‑classifications could have safety or legal ramifications.

Future Directions

The authors suggest exploring defensive strategies such as adversarial training, input preprocessing (e.g., denoising, randomization), model ensembles, and detection mechanisms that flag anomalous inputs. Additionally, extending the analysis to limited‑knowledge (black‑box) attacks and evaluating robustness under real‑world audio conditions (background noise, reverberation) would provide a more comprehensive risk assessment.

In summary, this work demonstrates that contemporary sound event classification systems are highly vulnerable to carefully crafted adversarial perturbations, and it provides a thorough empirical baseline for both attack and defense research in the audio domain.
