Data analysis challenges in transient gravitational-wave astronomy

Gravitational waves are radiative solutions of space-time dynamics predicted by Einstein’s theory of General Relativity. A world-wide array of large-scale and highly sensitive interferometric detectors constantly scrutinizes the geometry of the local space-time in the hope of detecting deviations that would signal an impinging gravitational wave from a remote astrophysical source. Finding the rare and weak signature of gravitational waves buried in non-stationary and non-Gaussian instrument noise is a particularly challenging problem. We will give an overview of the data-analysis techniques and associated observational results obtained so far by Virgo (in Europe) and LIGO (in the US), along with the prospects offered by the upcoming advanced versions of those detectors.

💡 Research Summary

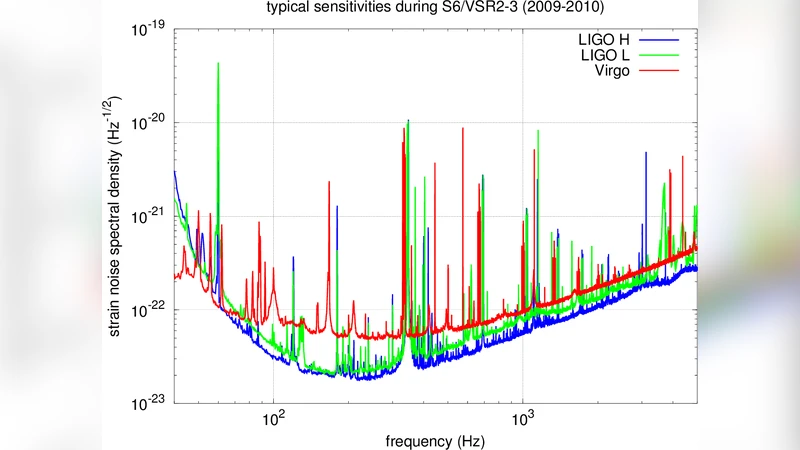

Gravitational waves (GWs), ripples in spacetime predicted by Einstein’s General Relativity, are now routinely searched for by a global network of kilometer-scale laser interferometers, principally LIGO in the United States and Virgo in Europe. The central challenge addressed in this paper is the extraction of short-duration, weak GW transients from data that are far from the idealized assumptions of stationary, Gaussian noise. Real detector output is contaminated by a mixture of persistent spectral lines (originating from mechanical resonances, power-line harmonics, etc.) and sudden, non-Gaussian glitches caused by environmental disturbances, hardware malfunctions, or anthropogenic sources. These features undermine detection strategies that rely on a single, stationary power-spectral-density (PSD) model of the noise, and they demand sophisticated preprocessing, noise-mitigation, and statistical inference techniques.
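To make the effect of a glitch on noise characterization concrete, the following minimal sketch compares a mean-averaged and a median-averaged PSD estimate on simulated data. It is an illustration under stated assumptions, not the pipeline used by LIGO or Virgo: the noise is white and Gaussian, the glitch is a hypothetical sine-Gaussian burst, and all function names and parameter values are invented for this example.

```python
import numpy as np

def segment_periodograms(x, seg_len, fs):
    """One-sided periodograms of non-overlapping, Hann-windowed segments."""
    n_seg = len(x) // seg_len
    segs = x[: n_seg * seg_len].reshape(n_seg, seg_len)
    window = np.hanning(seg_len)
    spec = np.fft.rfft(segs * window, axis=1)
    # Normalised so that white noise of variance s^2 has a PSD of 2 s^2 / fs.
    return 2.0 * np.abs(spec) ** 2 / (fs * np.sum(window ** 2))

def mean_psd(x, seg_len, fs):
    """Welch-style average: every segment, glitchy or not, gets equal weight."""
    return segment_periodograms(x, seg_len, fs).mean(axis=0)

def median_psd(x, seg_len, fs):
    """Median average, far less sensitive to a few contaminated segments."""
    # For Gaussian noise the periodogram is exponentially distributed, so its
    # median is ln(2) times its mean (large-sample approximation).
    return np.median(segment_periodograms(x, seg_len, fs), axis=0) / np.log(2.0)

def simulate_glitchy_noise(fs=1024, duration=64, seed=0):
    """Unit-variance white noise plus one loud sine-Gaussian glitch (assumed shape)."""
    rng = np.random.default_rng(seed)
    t = np.arange(fs * duration) / fs
    noise = rng.normal(0.0, 1.0, t.size)
    t0, tau, f0, amp = 10.5, 0.02, 100.0, 50.0  # hypothetical glitch parameters
    glitch = amp * np.exp(-0.5 * ((t - t0) / tau) ** 2) * np.sin(2 * np.pi * f0 * (t - t0))
    return noise + glitch

fs = 1024
data = simulate_glitchy_noise(fs=fs)
true_level = 2.0 / fs   # PSD of the underlying unit-variance white noise
k = 100                 # 1 s segments give 1 Hz bins, so bin 100 is 100 Hz
print("mean / true   at 100 Hz:", mean_psd(data, fs, fs)[k] / true_level)
print("median / true at 100 Hz:", median_psd(data, fs, fs)[k] / true_level)
```

In this toy setup a single glitch in one of 64 segments is enough to bias the mean estimate near the glitch frequency, while the median stays close to the true noise level. This is one reason robust averaging is attractive when the data are not stationary.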

The authors first review the traditional model‑based approach: matched filtering using a bank of theoretically computed waveforms (templates) that span the astrophysical parameter space (component masses, spins, orbital eccentricity, etc.). Because the template bank must be densely populated to avoid loss of signal‑to‑noise ratio (SNR), hierarchical searches, multi‑band filtering, and fast Fourier‑transform (FFT) acceleration are employed to keep computational costs tractable. Matched‑filter SNRs are supplemented by χ² consistency tests and time‑slide background estimation to control false‑alarm rates in the presence of non‑Gaussian noise.
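The FFT-based matched filter mentioned above can be sketched in a few lines. This is a toy under simplifying assumptions: the noise is white with known standard deviation (real searches weight by the measured PSD), the template is a linear chirp rather than a physical inspiral waveform, and the function names are hypothetical.

```python
import numpy as np

def chirp_template(fs, duration, f0, f1):
    """Hann-windowed linear chirp from f0 to f1 Hz; a stand-in for a real waveform."""
    t = np.arange(int(fs * duration)) / fs
    phase = 2 * np.pi * (f0 * t + 0.5 * (f1 - f0) / duration * t ** 2)
    return np.hanning(t.size) * np.sin(phase)

def matched_filter_snr(data, template, noise_sigma):
    """SNR time series for a known template in white noise of std noise_sigma.

    The circular cross-correlation corr[k] = sum_j data[j + k] * template[j]
    is evaluated with FFTs, so samples near the end wrap around.
    """
    n = len(data)
    padded = np.zeros(n)
    padded[: len(template)] = template
    corr = np.fft.irfft(np.fft.rfft(data) * np.conj(np.fft.rfft(padded)), n)
    # Under noise alone corr[k] has variance sigma^2 * sum(template^2),
    # so this normalisation yields a unit-variance statistic.
    return corr / (noise_sigma * np.sqrt(np.sum(template ** 2)))

fs, sigma = 1024, 1.0
rng = np.random.default_rng(0)
template = chirp_template(fs, duration=1.0, f0=30.0, f1=200.0)
data = rng.normal(0.0, sigma, 16 * fs)
inject_at = 5000                                   # sample index of the injection
data[inject_at : inject_at + template.size] += template
snr = matched_filter_snr(data, template, sigma)
peak = int(np.argmax(np.abs(snr)))
print("loudest trigger at sample", peak, "with SNR", abs(snr[peak]))
```

A full search repeats this correlation for every template in the bank, which is why the cost of each FFT and the size of the bank dominate the computational budget.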

Next, the paper surveys data-driven techniques that complement or replace pure matched filtering. Machine-learning methods (convolutional neural networks, recurrent networks, and variational auto-encoders) have been trained on large simulated datasets and on real detector glitches to learn discriminative features that separate true GW transients from noise artifacts. These approaches can operate in near-real time and are especially valuable for identifying and vetoing glitches that would otherwise produce spuriously loud triggers. The authors discuss how hybrid pipelines combine model-based triggers with machine-learning classifiers to improve overall sensitivity.
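As a rough illustration of the hybrid idea, the sketch below trains a classifier on two quantities that a matched-filter search already produces for each trigger: the SNR and a reduced χ² consistency value. Plain logistic regression is used here as a deliberately simple stand-in for the neural networks named above, and the trigger distributions are invented for the example (signals cluster near a reduced χ² of 1, glitches sit higher); none of this reflects real detector data.

```python
import numpy as np

def make_triggers(rng, n):
    """n synthetic signals (label 1) and n glitches (label 0) as (SNR, reduced chi^2)."""
    snr = 8.0 + rng.exponential(3.0, size=2 * n)          # same SNR law for both classes
    chi2 = np.concatenate([rng.normal(1.0, 0.2, n),       # signals: consistent with template
                           rng.normal(4.0, 1.0, n)])      # glitches: poor template fit
    labels = np.concatenate([np.ones(n), np.zeros(n)])
    return np.column_stack([snr, chi2]), labels

def fit_logistic(X, y, lr=0.5, n_iter=2000):
    """Logistic regression by batch gradient descent; returns [bias, weights...]."""
    Xb = np.column_stack([np.ones(len(X)), X])
    w = np.zeros(Xb.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-Xb @ w))
        w -= lr * Xb.T @ (p - y) / len(y)                 # gradient of mean log-loss
    return w

def signal_probability(X, w):
    """Classifier output: estimated probability that each trigger is a signal."""
    Xb = np.column_stack([np.ones(len(X)), X])
    return 1.0 / (1.0 + np.exp(-Xb @ w))

rng = np.random.default_rng(0)
X_train, y_train = make_triggers(rng, 500)
X_test, y_test = make_triggers(rng, 500)
mean, std = X_train.mean(axis=0), X_train.std(axis=0)     # standardise with training stats
w = fit_logistic((X_train - mean) / std, y_train)
p_test = signal_probability((X_test - mean) / std, w)
accuracy = np.mean((p_test > 0.5) == (y_test == 1))
print("held-out accuracy:", accuracy)
```

Because the SNR distribution is identical for both classes in this toy, the classifier can only separate them through the χ² feature, which mirrors the role such consistency tests play in vetoing loud glitches.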

For parameter estimation, a Bayesian framework is described in detail. Posterior probability distributions for source properties (masses, spins, sky location, distance, inclination) are obtained using Markov-Chain Monte Carlo, nested sampling, or Hamiltonian Monte Carlo algorithms. Multi-detector coherence, which exploits time-delay, amplitude, and phase consistency across LIGO Hanford, LIGO Livingston, and Virgo, sharpens sky localization (to within a few square degrees in the most favorable cases) and refines polarization information. The paper emphasizes the importance of accurate noise modeling in the likelihood function, especially when the underlying noise deviates from Gaussianity.
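The sampling step can be illustrated with a random-walk Metropolis sampler on a two-parameter toy problem. This is a sketch under strong assumptions, not the samplers used in production codes: the signal is a sine-Gaussian burst with unknown amplitude and frequency, the noise is white and Gaussian with known variance (so the likelihood is a simple sum of squared residuals), the priors are flat in a box, and every name and number is hypothetical.

```python
import numpy as np

def burst(t, amp, freq, t0=0.5, tau=0.05):
    """Sine-Gaussian burst centred at t0 with envelope width tau (toy signal model)."""
    return amp * np.exp(-0.5 * ((t - t0) / tau) ** 2) * np.sin(2 * np.pi * freq * (t - t0))

def log_posterior(theta, t, data, sigma):
    """Gaussian white-noise log-likelihood plus flat priors on amplitude and frequency."""
    amp, freq = theta
    if not (0.0 < amp < 5.0 and 50.0 < freq < 150.0):
        return -np.inf                                  # outside the prior box
    resid = data - burst(t, amp, freq)
    return -0.5 * np.sum(resid ** 2) / sigma ** 2

def metropolis(log_post, start, step, n_steps, rng):
    """Random-walk Metropolis with independent Gaussian proposals of width `step`."""
    chain = np.empty((n_steps, len(start)))
    current = np.asarray(start, dtype=float)
    current_lp = log_post(current)
    for i in range(n_steps):
        proposal = current + rng.normal(0.0, step)
        proposal_lp = log_post(proposal)
        # Accept with probability min(1, posterior ratio).
        if np.log(rng.uniform()) < proposal_lp - current_lp:
            current, current_lp = proposal, proposal_lp
        chain[i] = current
    return chain

fs, sigma = 1024, 1.0
rng = np.random.default_rng(0)
t = np.arange(fs) / fs
data = burst(t, amp=1.5, freq=100.0) + rng.normal(0.0, sigma, t.size)
chain = metropolis(lambda th: log_posterior(th, t, data, sigma),
                   start=[1.0, 98.0], step=np.array([0.1, 0.3]),
                   n_steps=6000, rng=rng)
samples = chain[1000:]                                  # discard burn-in
amp_mean, freq_mean = samples.mean(axis=0)
print("posterior mean amplitude:", amp_mean, "frequency:", freq_mean)
```

If the noise were non-Gaussian, the quadratic residual term in `log_posterior` would no longer be the correct likelihood, which is the point made above about noise modeling.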

Looking ahead, the authors outline the impact of the upcoming Advanced LIGO and Advanced Virgo upgrades, which are designed to improve strain sensitivity by roughly an order of magnitude. Because the surveyed volume grows as the cube of the detection range, this corresponds to an increase in observable volume of about three orders of magnitude. This sensitivity boost will raise the detection rate dramatically but will also increase data throughput and the complexity of the noise environment. Consequently, the authors advocate for scalable, GPU-accelerated, and distributed computing infrastructures, as well as open-source, community-maintained software stacks such as PyCBC, GstLAL, and Bilby. They also stress the need for standardized metadata and low-latency alert systems to enable rapid multimessenger follow-up with electromagnetic and neutrino observatories.

In summary, the paper provides a comprehensive overview of the current state of transient GW data analysis: it details the noise challenges, the dual model‑based and data‑driven detection strategies, the Bayesian inference tools for source characterization, and the computational and collaborative frameworks required for the next generation of high‑sensitivity detectors.