Interaction With Tilting Gestures In Ubiquitous Environments

In this paper, we introduce a tilting interface that controls direction-based applications in ubiquitous environments. A tilt interface is useful for situations that require remote and quick interactions or that take place in public spaces. Augmenting objects with sensors can potentially address the lack of intuitive and natural input devices in ubiquitous environments. We explored the proposed tilting interface with different application types and classified the tilting interaction techniques. We conducted an experiment to test the usability of the proposed tilting interface and to compare it with conventional input devices and hand gestures. The results showed that tilt gestures outperformed hand gestures in terms of speed, accuracy, and user satisfaction.

💡 Research Summary

The paper introduces a “tilt interface” that transforms the physical act of tilting a sensor‑augmented object into directional commands for ubiquitous computing applications. The authors recognize that conventional input devices (keyboards, touch screens, joysticks) are often unsuitable for remote, quick, or public‑space interactions, and that vision‑based hand‑gesture systems suffer from lighting and background noise. They therefore propose using low‑cost inertial sensors (a 3‑axis accelerometer and a gyroscope) embedded in everyday objects. The tilt gestures are classified along two dimensions, absolute versus relative tilts and single‑axis versus multi‑axis motions, and each class is mapped to UI functions such as selection, navigation, and parameter adjustment.
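The absolute versus relative distinction can be illustrated with a minimal Python sketch. It reflects one plausible reading of the classification, not the authors' implementation: the function names, the 45° range, and the 15° threshold are all hypothetical, and the angle formulas are the standard gravity-based ones for a stationary 3‑axis accelerometer.

```python
import math

def tilt_angles(ax, ay, az):
    """Return (pitch, roll) in degrees from one accelerometer sample.

    Assumes the object is nearly stationary, so the reading is dominated
    by gravity. Axis conventions here are an assumption.
    """
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    roll = math.degrees(math.atan2(ay, az))
    return pitch, roll

def absolute_value(angle_deg, max_angle=45.0):
    """Absolute tilt: the angle itself sets a parameter in [0, 1]."""
    clamped = max(-max_angle, min(max_angle, angle_deg))
    return (clamped + max_angle) / (2 * max_angle)

def relative_step(angle_deg, reference_deg, threshold=15.0):
    """Relative tilt: the change from a reference pose yields a step.

    Returns +1 or -1 when the change exceeds the (hypothetical)
    threshold, otherwise 0.
    """
    delta = angle_deg - reference_deg
    if delta > threshold:
        return 1
    if delta < -threshold:
        return -1
    return 0
```

Under this reading, an absolute mapping suits parameter adjustment (the held angle is the value), while a relative mapping suits navigation (each tilt away from the resting pose moves one step). A multi‑axis gesture would apply the same functions to pitch and roll together.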

The hardware prototype consists of an ARM Cortex‑M0 microcontroller, a BLE (Bluetooth Low Energy) radio, and the inertial sensor module. Sensor data are passed through a low‑pass filter and a Kalman filter to reduce noise, then processed by a state‑machine‑based gesture recognizer that compares the estimated tilt angle against predefined thresholds. The system relies on a low‑power sleep mode to reach an average battery life of about 14 days, and the component cost is estimated at $5–7 per module, which suggests commercial viability.
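The filter-then-threshold pipeline described above might look roughly like the following Python sketch. It is a simplified illustration, not the prototype's firmware: it uses only a first‑order low‑pass filter (the Kalman stage is omitted), handles a single axis, and the class name, state names, smoothing factor, and the two thresholds (with hysteresis between them) are all assumptions.

```python
class TiltRecognizer:
    """Single-axis tilt recognizer: low-pass filter plus a small state machine."""

    def __init__(self, alpha=0.2, enter_deg=20.0, exit_deg=10.0):
        self.alpha = alpha          # smoothing factor of the low-pass filter
        self.enter_deg = enter_deg  # angle that must be exceeded to fire a gesture
        self.exit_deg = exit_deg    # angle below which the object counts as level again
        self.filtered = 0.0
        self.state = "NEUTRAL"

    def update(self, angle_deg):
        """Feed one raw angle sample; return "LEFT", "RIGHT", or None."""
        # First-order low-pass filter (exponential smoothing).
        self.filtered += self.alpha * (angle_deg - self.filtered)

        if self.state == "NEUTRAL":
            if self.filtered > self.enter_deg:
                self.state = "TILTED_RIGHT"
                return "RIGHT"
            if self.filtered < -self.enter_deg:
                self.state = "TILTED_LEFT"
                return "LEFT"
        elif abs(self.filtered) < self.exit_deg:
            # Only re-arm once the object has returned close to level.
            self.state = "NEUTRAL"
        return None


recognizer = TiltRecognizer()
events = [recognizer.update(40.0) for _ in range(10)]   # hold a right tilt
events += [recognizer.update(0.0) for _ in range(20)]   # return to level
events += [recognizer.update(-40.0) for _ in range(10)] # hold a left tilt
gestures = [e for e in events if e is not None]
```

Using a lower exit threshold than entry threshold means a held tilt fires once rather than repeatedly, and sensor jitter near the entry angle does not retrigger the gesture.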

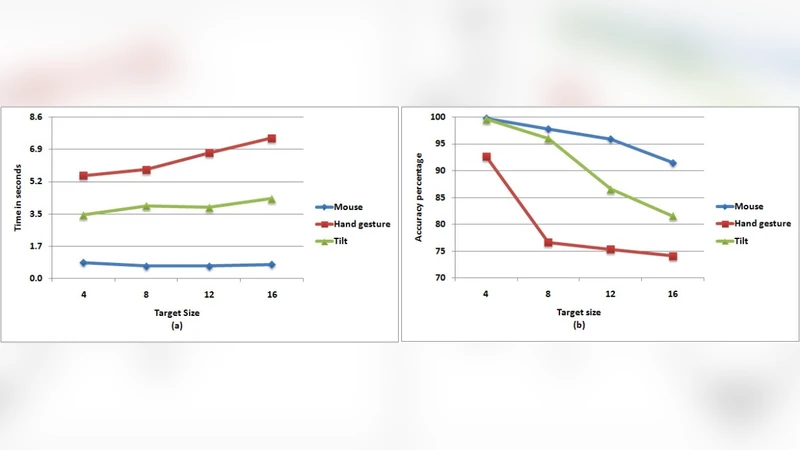

To evaluate usability, a controlled experiment with 30 participants compared three input modalities: (1) traditional button/joystick input, (2) vision‑based hand‑gesture recognition, and (3) the proposed tilt interface. Participants performed identical direction‑based tasks (menu scrolling, page turning, parameter tweaking). The tilt interface achieved the fastest average task completion time (1.8 s vs. 2.5 s for hand gestures and 2.4 s for buttons), the lowest error rate (4.2 % vs. 9.8 % and 7.5 %, respectively), and the highest System Usability Scale (SUS) score (85 points vs. 71 points and 73 points, respectively). The tilt interface’s performance also remained stable across varying lighting conditions, whereas hand‑gesture accuracy degraded significantly under poor illumination.
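To put the reported figures in relative terms, the short Python calculation below derives percentage reductions from the numbers quoted above. It assumes the error rates are listed in the same order as the completion times (hand gestures first, then buttons); the helper name `percent_reduction` is illustrative only.

```python
def percent_reduction(baseline, value):
    """Percentage by which `value` is lower than `baseline`."""
    return (baseline - value) / baseline * 100.0

# Figures as quoted in the summary; the ordering of the error rates
# (hand gestures, then buttons) is an assumption.
results = {
    "time_s": {"tilt": 1.8, "hand": 2.5, "button": 2.4},
    "error_pct": {"tilt": 4.2, "hand": 9.8, "button": 7.5},
}

reductions = {
    metric: {
        other: round(percent_reduction(vals[other], vals["tilt"]), 1)
        for other in ("hand", "button")
    }
    for metric, vals in results.items()
}

for metric, by_modality in reductions.items():
    for other, pct in by_modality.items():
        print(f"{metric}: tilt is {pct}% lower than {other}")
```

On these figures, completion time is roughly 28 % lower than with hand gestures and 25 % lower than with buttons, and the error rate is roughly 57 % and 44 % lower, respectively. These are simple ratios of the reported means and say nothing about statistical significance.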

The authors discuss ergonomic considerations, noting that prolonged tilting may cause wrist fatigue, a factor not fully explored in the current study. They propose future work that includes haptic or auditory feedback to confirm gesture recognition, collision‑avoidance protocols for multi‑user environments sharing the same BLE channel, and extensive field trials embedding sensors in diverse everyday objects (cups, books, furniture) to assess real‑world applicability.

In conclusion, the research demonstrates that tilt‑based interaction offers a fast, accurate, and satisfying alternative to both traditional hardware controls and vision‑based gestures in ubiquitous environments. By combining inexpensive inertial sensing, low‑power wireless communication, and robust gesture‑recognition algorithms, the tilt interface provides a scalable foundation for smart‑home controls, wearable devices, and industrial remote‑operation scenarios, addressing a critical gap in natural, intuitive input for pervasive computing.