GenerationMania: Learning to Semantically Choreograph

Beatmania is a rhythm action game where players must reproduce some of the sounds of a song by pressing specific controller buttons at the correct time. In this paper we investigate the use of deep neural networks to automatically create game stages, called charts, for arbitrary pieces of music. Our technique uses a multi-layer feed-forward network trained on sound sequence summary statistics to predict which sounds in the music are to be played by the player and which will play automatically. We use another neural network along with rules to determine which controls should be mapped to which sounds. We evaluated our system on the ability to reconstruct charts in a held-out test set, achieving an $F_1$-score that significantly beats LSTM baselines.

💡 Research Summary

The paper addresses the problem of automatically generating charts for Beatmania IIDX, a rhythm action game that relies on “keysounds” – a one‑to‑one mapping between audio samples and player actions. Hand‑crafted charts are labor‑intensive and limit the number of songs that can be played, so the authors propose a data‑driven pipeline that learns to decide which sound events should be player‑controlled (playable) and which should be background (non‑playable), and then assigns each playable event to one of eight controller buttons.

The authors first construct a new dataset, BOF2011, consisting of 366 songs, 1,454 charts, and over 4.3 million sound events (≈29 % playable). They also collect a separate labeled set (BOFU) of 60 k audio samples for training an instrument classifier.

The pipeline consists of four stages:

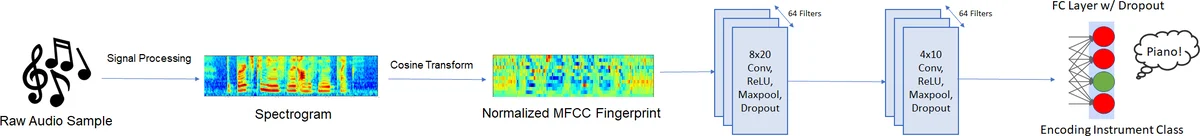

Sample Classification – Audio samples are classified into 27 instrument categories using MFCC‑based spectrograms fed to a small 2‑D CNN (two convolution‑ReLU‑max‑pool layers followed by a fully‑connected layer). The classifier reaches 84 % accuracy on a held‑out split.
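
The classifier's layer stack can be traced with a minimal, untrained sketch in plain Python. It assumes a single‑channel 20 × 32 MFCC map, 3 × 3 kernels, 2 × 2 pooling and random weights; these sizes and all function names are hypothetical rather than the authors' configuration, and a practical version would use a deep‑learning framework with many channels per layer.

```python
import random

# Hypothetical sizes: a 20 x 32 MFCC map (coefficients x frames) and the
# 27 instrument categories mentioned above.
N_MFCC, N_FRAMES, N_CLASSES = 20, 32, 27


def conv2d_valid(x, k):
    """Single-channel 2-D cross-correlation with no padding ('valid')."""
    kh, kw = len(k), len(k[0])
    out_h, out_w = len(x) - kh + 1, len(x[0]) - kw + 1
    return [[sum(x[i + a][j + b] * k[a][b] for a in range(kh) for b in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]


def relu(x):
    return [[max(0.0, v) for v in row] for row in x]


def max_pool2(x):
    """2 x 2 max pooling with stride 2; an odd trailing row/column is dropped."""
    return [[max(x[i][j], x[i][j + 1], x[i + 1][j], x[i + 1][j + 1])
             for j in range(0, len(x[0]) - 1, 2)]
            for i in range(0, len(x) - 1, 2)]


def init_params(seed=0):
    """Random (untrained) weights; shapes: 20x32 -> 18x30 -> 9x15 -> 7x13 -> 3x6."""
    rng = random.Random(seed)
    flat_dim = 3 * 6

    def kernel():
        return [[rng.gauss(0.0, 0.3) for _ in range(3)] for _ in range(3)]

    return {
        "k1": kernel(),
        "k2": kernel(),
        "fc_w": [[rng.gauss(0.0, 0.1) for _ in range(flat_dim)] for _ in range(N_CLASSES)],
        "fc_b": [0.0] * N_CLASSES,
    }


def classify(mfcc, params):
    """Two conv-ReLU-max-pool blocks, then a fully connected layer to class logits."""
    h = max_pool2(relu(conv2d_valid(mfcc, params["k1"])))
    h = max_pool2(relu(conv2d_valid(h, params["k2"])))
    flat = [v for row in h for v in row]
    logits = [sum(w * v for w, v in zip(w_row, flat)) + b
              for w_row, b in zip(params["fc_w"], params["fc_b"])]
    label = max(range(N_CLASSES), key=lambda c: logits[c])
    return label, logits


# Usage with a random stand-in for a real MFCC map.
rng = random.Random(1)
mfcc = [[rng.gauss(0.0, 1.0) for _ in range(N_FRAMES)] for _ in range(N_MFCC)]
label, logits = classify(mfcc, init_params())
```

Because the weights are random, the predicted label is arbitrary; the sketch only shows how two conv‑ReLU‑pool blocks shrink the input before the fully connected layer emits one score per instrument category.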

Chart Knowledge Extraction – Three types of knowledge are extracted from existing charts: (a) beat phase, the position of each event within a four‑beat measure, quantized to 16 steps (0‑15); (b) a challenge model that computes a “strain” value for each event from inter‑event intervals and simultaneous control activations, then max‑pools it over 400 ms windows; and (c) a relational summary that aggregates, at multiple time scales (2, 4, 8, 16, and 32 beats), the distribution of instrument classes among playable and non‑playable events. This yields an S × C × 2 tensor for each event, where S is the number of scales, C the number of instrument classes, and the final axis separates playable from non‑playable events.
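
The three kinds of chart knowledge can be approximated as below. This is a sketch under stated assumptions: beat phase uses a grid of four steps per beat, the per‑event strain formula is invented for illustration (only the 400 ms max‑pool comes from the description), and the relational summary uses a causal window ending just before each event. All names are hypothetical.

```python
from collections import Counter

SCALES = (2, 4, 8, 16, 32)  # window lengths in beats, as listed above
STEPS_PER_MEASURE = 16      # 16 phase values (0-15) per four-beat measure


def beat_phase(beat):
    """Quantized position (0-15) of an onset inside its four-beat measure.

    `beat` is the onset time in beats from the start of the song; each beat is
    split into 4 steps (assumption: sixteenth-note grid in 4/4 time).
    """
    return round(beat * STEPS_PER_MEASURE / 4) % STEPS_PER_MEASURE


def strain(times_ms, controls, window_ms=400.0):
    """Hypothetical strain curve, max-pooled over a trailing 400 ms window.

    times_ms: strictly increasing onset times; controls: number of controls
    pressed together at each onset. The raw formula is illustrative only.
    """
    raw = []
    for i, t in enumerate(times_ms):
        gap = t - times_ms[i - 1] if i > 0 else float("inf")
        raw.append(1000.0 / max(gap, 1.0) + controls[i])  # density + chord size
    # Each window contains the current onset, so max() never sees an empty input.
    return [max(r for tj, r in zip(times_ms, raw) if t - window_ms < tj <= t)
            for t in times_ms]


def relational_summary(events, index, classes):
    """S x C x 2 nested list describing the context of events[index].

    events: (beat, instrument_class, playable) tuples sorted by beat.
    [s][c][0] is the share of all events in the preceding SCALES[s] beats that
    have class c and are playable; [s][c][1] the share with class c that are
    non-playable (assumption: causal window ending before the current event).
    """
    t = events[index][0]
    summary = []
    for scale in SCALES:
        counts = Counter((c, p) for b, c, p in events if t - scale <= b < t)
        n = sum(counts.values())
        summary.append([[counts[(c, True)] / n if n else 0.0,
                         counts[(c, False)] / n if n else 0.0]
                        for c in classes])
    return summary


events = [(0.0, "drum", True), (0.5, "bass", False),
          (1.0, "drum", True), (2.0, "piano", False)]
s = relational_summary(events, 3, ["drum", "bass", "piano"])
# s[0] (2-beat window) -> [[2/3, 0.0], [0.0, 1/3], [0.0, 0.0]]
```

Each entry of the summary is a share of the surrounding events, so a non‑empty window sums to 1 across classes and both playability slots; this lets one fixed‑size vector describe context regardless of how dense the song is.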

Sample Selection – The relational summary, together with beat phase and strain, is fed into a four‑layer feed‑forward network (sizes 64‑32‑16‑2, ReLU activations). The output is a binary decision (playable vs. non‑playable). Because non‑playable events dominate, a weighted mean‑squared‑error loss is used to mitigate class imbalance. The model is trained end‑to‑end on the BOF2011 charts.
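
A minimal plain‑Python sketch of the selection network's forward pass and a class‑weighted MSE loss follows. The 64‑32‑16‑2 widths and ReLU hidden layers come from the description; the softmax output, He initialisation, and inverse‑frequency class weights derived from the ≈29 % playable rate are assumptions, and training (backpropagation) is omitted.

```python
import math
import random

LAYER_SIZES = (64, 32, 16, 2)  # hidden widths and output width from the summary


def init_mlp(input_dim, seed=0):
    """Random weights and zero biases for each dense layer."""
    rng = random.Random(seed)
    layers, fan_in = [], input_dim
    for width in LAYER_SIZES:
        scale = math.sqrt(2.0 / fan_in)  # He initialisation (assumption)
        w = [[rng.gauss(0.0, scale) for _ in range(fan_in)] for _ in range(width)]
        layers.append((w, [0.0] * width))
        fan_in = width
    return layers


def forward(layers, x):
    """ReLU on hidden layers; softmax on the 2-unit output (assumption)."""
    h = x
    for i, (w, b) in enumerate(layers):
        z = [sum(wi * hi for wi, hi in zip(row, h)) + bi for row, bi in zip(w, b)]
        h = z if i == len(layers) - 1 else [max(0.0, v) for v in z]
    m = max(h)
    e = [math.exp(v - m) for v in h]  # subtract max for numerical stability
    total = sum(e)
    return [v / total for v in e]  # [P(non-playable), P(playable)]


def weighted_mse(preds, labels, class_weights):
    """Mean squared error per event, scaled by the weight of its true class."""
    total = 0.0
    for p, y in zip(preds, labels):
        target = [1.0 - y, float(y)]  # one-hot target; index 1 = playable
        total += class_weights[y] * sum((pi - ti) ** 2 for pi, ti in zip(p, target))
    return total / len(preds)


# Assumed inverse-frequency weights: the rarer playable class counts more.
PLAYABLE_RATE = 0.29
CLASS_WEIGHTS = {0: 1.0 / (1.0 - PLAYABLE_RATE), 1: 1.0 / PLAYABLE_RATE}

net = init_mlp(input_dim=10)
probs = forward(net, [0.1] * 10)
loss = weighted_mse([probs], [1], CLASS_WEIGHTS)
```

With these weights a missed playable event costs about 2.4 times as much as a misclassified background event, which counteracts the tendency of an unweighted loss to favour the majority class.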

Note Placement – Once events are labeled playable, a second feed‑forward network (same architecture, with a final softmax over 8 controls) assigns each playable event to a specific button. The authors note that any mapping that never assigns two simultaneous events to the same control yields a playable chart, so this step is not a major research contribution.
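
One simple way to honour the no‑collision requirement is a greedy assignment over the placement network's control scores. This is a hypothetical sketch rather than the authors' rules: events that share a timestamp are placed in order of confidence, each taking its highest‑scoring free control, and any event beyond the eighth simultaneous one is left unassigned.

```python
from collections import defaultdict

NUM_CONTROLS = 8  # controller buttons available for playable events


def place_notes(events):
    """Greedily map playable events to controls without simultaneous collisions.

    events: list of (time, scores), where scores holds NUM_CONTROLS model scores.
    Returns one control index per event, or None when all controls are already
    taken at that time (such an event would have to become a background sound).
    """
    by_time = defaultdict(list)
    for i, (t, _) in enumerate(events):
        by_time[t].append(i)

    assignment = [None] * len(events)
    for idxs in by_time.values():
        used = set()
        # Most confident events first, so they are most likely to get their top choice.
        for i in sorted(idxs, key=lambda j: -max(events[j][1])):
            ranked = sorted(range(NUM_CONTROLS), key=lambda c: -events[i][1][c])
            free = [c for c in ranked if c not in used]
            if free:
                assignment[i] = free[0]
                used.add(free[0])
    return assignment


s1 = [0.1] * 8
s1[3] = 0.9
s2 = [0.1] * 8
s2[3], s2[5] = 0.8, 0.5
print(place_notes([(0, s1), (0, s2), (1, s2)]))  # [3, 5, 3]
```

Both events at time 0 prefer control 3; the more confident one keeps it and the other falls back to its next choice, while the event at time 1 is free to reuse control 3.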

Evaluation focuses on the ability to reconstruct held‑out charts. The proposed feed‑forward model achieves a significantly higher F1‑score than several baselines, including LSTM‑based approaches such as Dance Dance Convolution. The authors attribute the improvement to the multi‑scale relational summary, which captures both local and global musical context without the overhead of recurrent networks.
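
Assuming the reported F1‑score is computed on the playable class over chart events (the exact averaging scheme is not stated here), it can be sketched as follows; the function name is hypothetical.

```python
def f1_playable(predicted, actual):
    """F1 for the playable class; inputs are parallel lists of booleans."""
    tp = sum(p and a for p, a in zip(predicted, actual))      # correctly playable
    fp = sum(p and not a for p, a in zip(predicted, actual))  # wrongly playable
    fn = sum(a and not p for p, a in zip(predicted, actual))  # missed playable
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


actual = [True, True, False, False, True]
predicted = [True, False, False, True, True]
score = f1_playable(predicted, actual)  # precision = recall = 2/3, so F1 = 2/3
```

The metric matters under class imbalance: a predictor that marks every event as background on data with 30 % playable events reaches 70 % accuracy but an F1 of 0.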

Key contributions include: (i) a novel formulation of Beatmania chart generation as a binary playability classification problem; (ii) the introduction of a multi‑scale relational summary that acts as a style and difficulty prior; (iii) a fully feed‑forward architecture that outperforms recurrent baselines in both accuracy and computational efficiency; and (iv) the release of the BOF2011 dataset for future research on procedural content generation via machine learning (PCG‑ML).

The paper also discusses limitations: the dataset is drawn from a single community and may not generalize to other genres; note placement does not consider the ergonomic constraints of real players; evaluation is limited to reconstruction metrics without user studies on perceived fun or difficulty; and the feed‑forward model may struggle with very long‑range musical structures such as verses and choruses.

Future work suggested includes incorporating multi‑task learning to jointly predict playability and difficulty, using reinforcement learning to adapt charts based on player feedback, expanding the dataset to cover a broader range of musical styles, and exploring Transformer‑based architectures to better capture long‑term dependencies.

Overall, the paper presents a solid, data‑centric approach to Beatmania chart generation, demonstrates clear empirical gains over prior work, and opens several avenues for further research in procedural content generation for rhythm games.
