Scalable Database Access Technologies for ATLAS Distributed Computing

ATLAS event data processing requires access to non-event data (detector conditions, calibrations, etc.) stored in relational databases. The database-resident data are crucial for the event data reconstruction steps and are often required for user analysis. A main focus of ATLAS database operations is the worldwide distribution of the Conditions DB data, which are necessary for every ATLAS data processing job. Since Conditions DB access is critical for operations with real data, we have developed a system in which a different technology can be used as a redundant backup. This redundant database operations infrastructure fully satisfies the requirements of ATLAS reprocessing, as proven on a scale of one billion database queries during two reprocessing campaigns of 0.5 PB of single-beam and cosmics data on the Grid. To collect experience and inform the choice of technologies, several promising options for efficient database access in user analysis were evaluated successfully. We present the ATLAS experience with scalable database access technologies and describe our approach to preventing database access bottlenecks in a Grid computing environment.

💡 Research Summary

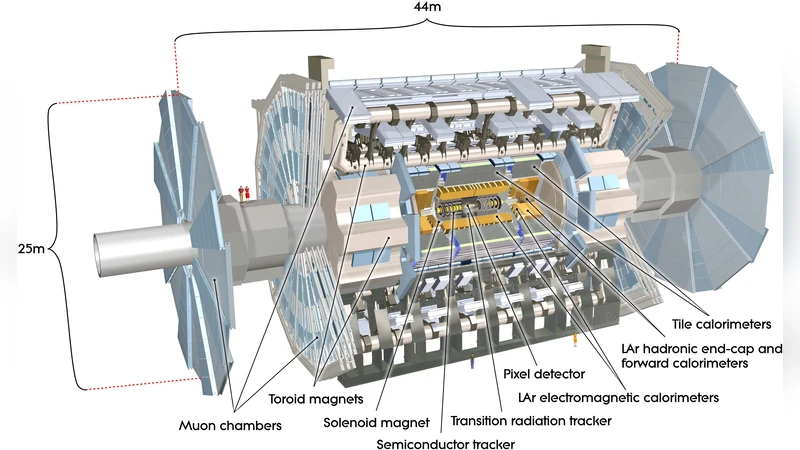

The ATLAS experiment at the Large Hadron Collider relies not only on massive streams of raw event data but also on a substantial amount of non‑event information (detector conditions, calibrations, alignment constants, and run‑time parameters) that is stored in relational databases. Access to this “Conditions DB” is mandatory for every reconstruction, re‑processing, and user‑analysis job, so the ATLAS computing model must guarantee fast, reliable, and globally consistent database access across a heterogeneous Grid infrastructure that spans more than two hundred sites worldwide.

This paper describes the architecture, operational experience, and performance results of the ATLAS Conditions DB distribution system, focusing on how a multi‑technology, multi‑path strategy was designed to avoid single points of failure and to scale to the extreme workloads required by real data processing. The baseline solution is a centrally managed Oracle database that holds the authoritative copy of all condition data. To protect against network latency, server overload, and hardware failures, ATLAS has deployed three complementary backup mechanisms:

- Local SQLite replicas – Periodic extracts of the Oracle tables are shipped to each site and stored as read‑only SQLite files. Jobs that can tolerate slightly stale data read directly from the local file system, eliminating network traffic entirely. This approach is simple to deploy and provides an immediate fallback when the Oracle service is unavailable, but the refresh interval (typically 12 hours) limits its usefulness for analyses that require the most recent calibrations.

- Frontier/Squid HTTP caching – Condition queries are translated into HTTP requests that pass through a hierarchy of Squid proxy caches distributed around the world. The cache layer stores query results and serves them to subsequent jobs, achieving hit rates above 95 % in production. Dynamic cache invalidation is triggered whenever the underlying Oracle data changes, ensuring that the cached view remains consistent with the master database. Frontier dramatically reduces the bandwidth consumed by condition queries and smooths out load spikes, making it the preferred solution for large‑scale user analysis where thousands of parallel jobs issue identical queries.

- Replicated Oracle endpoints – Additional Oracle instances are deployed at major Tier‑1 and Tier‑2 sites. A load‑balancing service routes queries to the least‑loaded replica, preserving full ACID guarantees and providing the freshest data. However, the replication overhead and the need for careful synchronization make this option more expensive and less scalable for massive concurrent access.
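The caching behaviour attributed to the Frontier/Squid path above can be illustrated with a small read-through cache. This is only a sketch under stated assumptions: `QueryCache`, `fake_origin`, the query string, and the 60-second TTL are hypothetical names and values chosen for illustration, and the code does not use or reproduce the actual Frontier or Squid interfaces.

```python
import time
from typing import Callable, Dict, Tuple


class QueryCache:
    """Read-through cache keyed by query string (hypothetical, not the Frontier API)."""

    def __init__(self, fetch: Callable[[str], str], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch          # called only on a miss (stands in for the origin database)
        self._ttl = ttl_seconds      # entries older than this are refetched
        self._clock = clock          # injectable so expiry can be tested without sleeping
        self._entries: Dict[str, Tuple[float, str]] = {}
        self.hits = 0
        self.misses = 0

    def get(self, query: str) -> str:
        now = self._clock()
        entry = self._entries.get(query)
        if entry is not None and now - entry[0] < self._ttl:
            self.hits += 1
            return entry[1]
        # Miss or expired entry: go to the origin and remember the answer.
        self.misses += 1
        result = self._fetch(query)
        self._entries[query] = (now, result)
        return result

    def invalidate(self, query: str) -> None:
        """Drop a cached result, e.g. after the underlying data changed."""
        self._entries.pop(query, None)

    def hit_rate(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


# Twenty identical queries: only the first one reaches the origin.
origin_calls = []

def fake_origin(query: str) -> str:
    origin_calls.append(query)
    return f"payload for {query}"

cache = QueryCache(fake_origin, ttl_seconds=60.0)
for _ in range(20):
    cache.get("pixel_alignment/run=1000")
print(len(origin_calls), cache.hit_rate())  # 1 0.95

# After an invalidation the next request goes back to the origin.
cache.invalidate("pixel_alignment/run=1000")
cache.get("pixel_alignment/run=1000")
print(len(origin_calls))  # 2
```

The point of the sketch is the ratio: when many jobs issue the same query, the number of requests reaching the origin depends on the number of distinct queries and the invalidation rate, not on the number of jobs.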

The robustness of this architecture was validated during two major re‑processing campaigns that together handled 0.5 PB of single‑beam and cosmic‑ray data. Over the course of these campaigns more than one billion condition queries were executed, with an average response time of roughly 28 ms and peak concurrent connections exceeding 12 000. The system automatically switched to SQLite replicas when Oracle nodes experienced overload, and the Frontier cache layer absorbed the majority of repeated queries, cutting overall network traffic by about 70 % compared with a pure Oracle‑only configuration.
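The automatic switch from an overloaded primary to a local replica can be sketched as an ordered list of access paths that are tried in turn. The names below (`BackendUnavailable`, `query_with_failover`, the `conditions` table, and the tag `pixel_alignment`) are assumptions made for this illustration; the summary does not describe the real ATLAS client code, and an in-memory SQLite database stands in for a site-local replica file.

```python
import sqlite3
from typing import Callable, Optional, Sequence, Tuple


class BackendUnavailable(Exception):
    """Raised by a backend that cannot serve the query right now."""


Backend = Tuple[str, Callable[[str], str]]


def query_with_failover(query: str, backends: Sequence[Backend]) -> Tuple[str, str]:
    """Try each backend in order; return (backend_name, result) from the first that answers."""
    last_error: Optional[Exception] = None
    for name, fetch in backends:
        try:
            return name, fetch(query)
        except BackendUnavailable as exc:
            last_error = exc  # remember why this path failed, then try the next one
    raise BackendUnavailable(f"all backends failed for {query!r}") from last_error


# Hypothetical primary that is currently overloaded.
def overloaded_primary(tag: str) -> str:
    raise BackendUnavailable("primary overloaded")


# Hypothetical local replica; a real one would be a read-only file on the site's file system.
replica = sqlite3.connect(":memory:")
replica.execute("CREATE TABLE conditions (tag TEXT PRIMARY KEY, payload TEXT)")
replica.execute("INSERT INTO conditions VALUES (?, ?)", ("pixel_alignment", "v7"))


def sqlite_replica(tag: str) -> str:
    row = replica.execute(
        "SELECT payload FROM conditions WHERE tag = ?", (tag,)
    ).fetchone()
    if row is None:
        raise BackendUnavailable(f"tag {tag!r} missing from replica")
    return row[0]


source, payload = query_with_failover(
    "pixel_alignment",
    [("primary", overloaded_primary), ("sqlite", sqlite_replica)],
)
print(source, payload)  # sqlite v7
```

Returning the backend name alongside the result lets a job record which path served it, which matters when the fallback may hold slightly older data than the primary.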

In the user‑analysis context, the authors performed a systematic evaluation of the three access methods. Frontier/Squid emerged as the most efficient for high‑throughput, low‑latency workloads because its HTTP‑based caching eliminates redundant round‑trips to the database and can be tuned with short TTL values to keep calibrations up‑to‑date. Direct Oracle access, while offering the highest data freshness, suffered from significant latency growth once the number of simultaneous jobs passed roughly 5 000, making it suitable only for small, precision‑critical analyses. SQLite provided the simplest deployment and zero network overhead but was unsuitable when the latest conditions were required.

Key operational practices that underpin the success of the ATLAS Conditions DB service include:

- Dynamic routing integrated with the ATLAS Distributed Data Management (DDM) system, which automatically balances query traffic across available Oracle replicas and redirects jobs to the cache layer when thresholds are exceeded.

- Cache invalidation policies that propagate condition‑update notifications to all Squid proxies, guaranteeing that stale data are purged promptly.

- Real‑time monitoring and automated alarms that track query latency, failure rates, and cache hit ratios; when anomalies are detected, the system triggers a fail‑over to the next backup path without human intervention.
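The monitoring practice in the list above, where latency and failure rates are tracked and a fail-over is triggered once a threshold is crossed, might look roughly like the following. This is a hedged sketch: `PathMonitor`, the 100 ms latency limit, the 10 % failure limit, and the window size are all hypothetical values, not figures from the paper.

```python
from collections import deque
from statistics import mean
from typing import Deque, Tuple


class PathMonitor:
    """Sliding-window health check for one access path (hypothetical thresholds)."""

    def __init__(self, latency_limit_ms: float, failure_limit: float,
                 window: int = 100) -> None:
        self._latency_limit_ms = latency_limit_ms
        self._failure_limit = failure_limit
        # Only the most recent `window` samples are kept; older ones fall off.
        self._samples: Deque[Tuple[float, bool]] = deque(maxlen=window)

    def record(self, latency_ms: float, ok: bool) -> None:
        self._samples.append((latency_ms, ok))

    def should_fail_over(self) -> bool:
        if not self._samples:
            return False  # no evidence yet, stay on the current path
        avg_latency = mean(latency for latency, _ in self._samples)
        failures = sum(1 for _, ok in self._samples if not ok)
        failure_rate = failures / len(self._samples)
        return (avg_latency > self._latency_limit_ms
                or failure_rate > self._failure_limit)


monitor = PathMonitor(latency_limit_ms=100.0, failure_limit=0.10)

# Healthy period: ten fast, successful queries.
for _ in range(10):
    monitor.record(28.0, ok=True)
print(monitor.should_fail_over())  # False

# Overload: ten slow queries push the window average above the limit.
for _ in range(10):
    monitor.record(500.0, ok=True)
print(monitor.should_fail_over())  # True
```

A windowed average reacts to sustained degradation rather than to a single slow query, which avoids flapping between paths on isolated outliers.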

The paper concludes that a “redundant‑technology” approach is essential for meeting ATLAS’s stringent data‑access requirements in a distributed Grid environment. The authors also outline future directions, such as exploring cloud‑native NoSQL caches, applying machine‑learning models for proactive load prediction, and standardising condition‑metadata schemas to facilitate cross‑experiment data sharing within the LHC community. Overall, the ATLAS experience demonstrates that careful layering of database technologies, combined with automated monitoring and fail‑over mechanisms, can deliver the scalability and reliability needed for petabyte‑scale scientific computing.
