Learning Stabilizable Nonlinear Dynamics with Contraction-Based Regularization

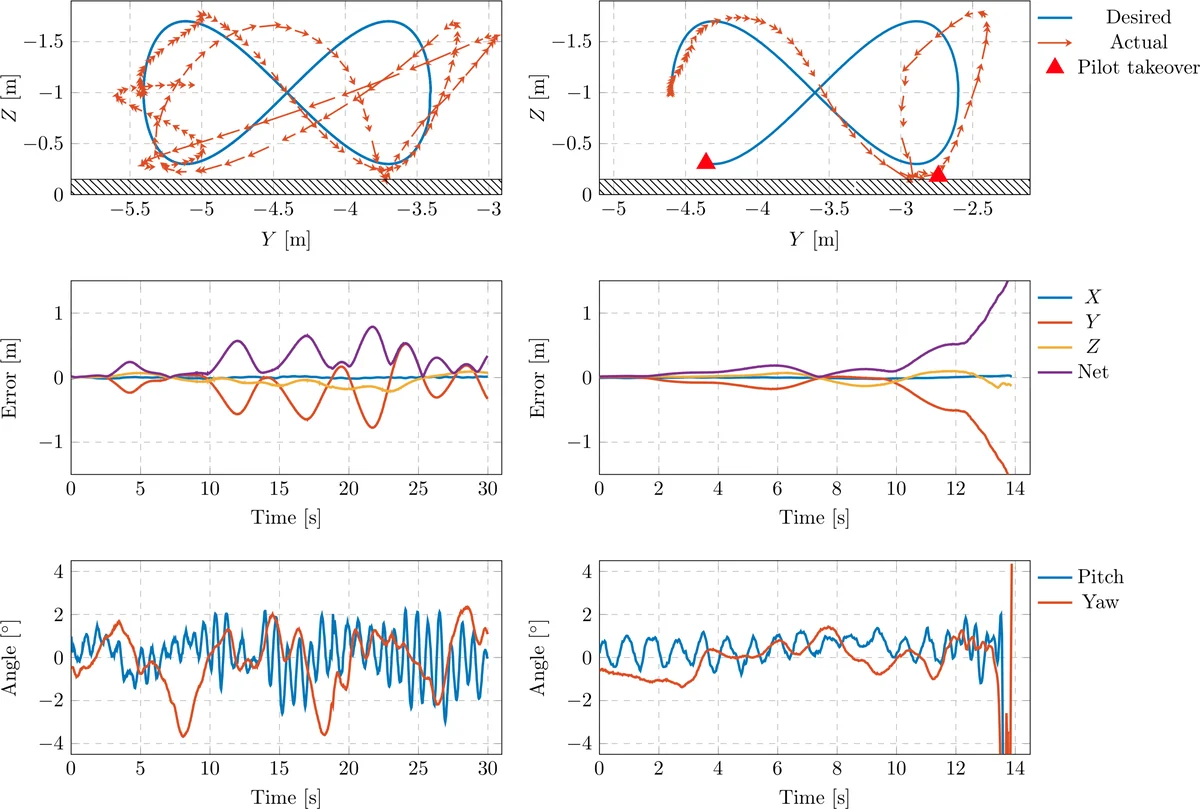

We propose a novel framework for learning stabilizable nonlinear dynamical systems for continuous control tasks in robotics. The key contribution is a control-theoretic regularizer for dynamics fitting rooted in the notion of stabilizability, a constraint which guarantees the existence of robust tracking controllers for arbitrary open-loop trajectories generated with the learned system. Leveraging tools from contraction theory and statistical learning in Reproducing Kernel Hilbert Spaces, we formulate stabilizable dynamics learning as a functional optimization with convex objective and bi-convex functional constraints. Under a mild structural assumption and relaxation of the functional constraints to sampling-based constraints, we derive the optimal solution with a modified Representer theorem. Finally, we utilize random matrix feature approximations to reduce the dimensionality of the search parameters and formulate an iterative convex optimization algorithm that jointly fits the dynamics functions and searches for a certificate of stabilizability. We validate the proposed algorithm in simulation for a planar quadrotor, and on a quadrotor hardware testbed emulating planar dynamics. We verify, both in simulation and on hardware, significantly improved trajectory generation and tracking performance with the control-theoretic regularized model over models learned using traditional regression techniques, especially when learning from small supervised datasets. The results support the conjecture that the use of stabilizability constraints as a form of regularization can help prune the hypothesis space in a manner that is tailored to the downstream task of trajectory generation and feedback control, resulting in models that are not only dramatically better conditioned, but also data efficient.

💡 Research Summary

This paper introduces a novel framework for learning nonlinear dynamical models that are guaranteed to be stabilizable, targeting continuous‑control tasks in robotics. The authors observe that standard supervised regression (e.g., deep networks or kernel ridge regression) often yields models that, when used for trajectory generation or feedback control, quickly become unstable, especially when training data are scarce. To address this, they embed a control‑theoretic regularizer directly into the learning objective: the learned dynamics must admit a Control Contraction Metric (CCM), which certifies that for any open‑loop trajectory there exists a feedback controller that exponentially tracks it.
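To make the idea of a stabilizability certificate concrete, the sketch below checks a contraction-metric condition in the simplest possible setting: a linear system ẋ = Ax + Bu with a constant dual metric W. In that special case the condition reduces to W ≻ 0 together with B_⊥ᵀ(AW + WAᵀ + 2λW)B_⊥ ≺ 0, where the columns of B_⊥ span the null space of Bᵀ and λ is the contraction rate. This is an illustrative simplification, not the paper's learning algorithm: the function names `left_annihilator` and `ccm_certifies`, the double-integrator system, and the candidate metrics are all assumptions chosen for the example.

```python
import numpy as np


def left_annihilator(B, tol=1e-10):
    """Return a matrix whose columns span the null space of B.T."""
    U, s, _ = np.linalg.svd(B, full_matrices=True)
    rank = int(np.sum(s > tol))
    return U[:, rank:]


def ccm_certifies(A, B, W, lam):
    """Check the constant-metric condition for x_dot = A x + B u.

    Returns True when W is positive definite and
    B_perp^T (A W + W A^T + 2*lam*W) B_perp is negative definite.
    """
    B_perp = left_annihilator(B)
    W_is_pd = np.all(np.linalg.eigvalsh(W) > 0)
    S = A @ W + W @ A.T + 2.0 * lam * W  # symmetric because W is symmetric
    projected = B_perp.T @ S @ B_perp
    contracts = np.all(np.linalg.eigvalsh(projected) < 0)
    return bool(W_is_pd and contracts)


# Hypothetical example system: a double integrator (position, velocity).
A = np.array([[0.0, 1.0], [0.0, 0.0]])
B = np.array([[0.0], [1.0]])

W_good = np.array([[1.0, -1.0], [-1.0, 2.0]])  # candidate dual metric
W_bad = np.eye(2)                              # identity fails the test

print(ccm_certifies(A, B, W_good, lam=0.5))
print(ccm_certifies(A, B, W_bad, lam=0.5))
```

For the double integrator the projected condition collapses to the scalar inequality 2·W₁₂ + 2λ·W₁₁ < 0, so `W_good` passes (the value is −1) while the identity metric does not (the value is +1). In the nonlinear setting described above, the metric depends on the state and must be searched for jointly with the dynamics, which is what makes the learning problem harder than this fixed-matrix check.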

Mathematically, the problem is posed as a functional optimization over a Reproducing Kernel Hilbert Space (RKHS) \(\mathcal{H}\).
