Training capsules as a routing-weighted product of expert neurons

Capsules are the multidimensional analogue of scalar neurons in neural networks, and because they are multidimensional, much more complex routing schemes can be used to pass information forward through the network than in traditional neural networks. This work treats capsules as collections of neurons in a fully connected neural network, where sub-networks connecting capsules are weighted according to the routing coefficients determined by routing by agreement. An energy function is designed to reflect this model, and it follows that capsule networks with dynamic routing can be formulated as a product of expert neurons. By alternating between dynamic routing, which acts both to find sub-networks within the overall network and to mix the model distribution, and updating the parameters by the gradient of the contrastive divergence, a bottom-up, unsupervised learning algorithm is constructed for capsule networks with dynamic routing. The model and its training algorithm are tested qualitatively in the generative sense, and the model is able to produce realistic-looking images from standard vision datasets.

💡 Research Summary

The paper proposes a novel unsupervised learning framework for capsule networks that treats capsules as collections of scalar neurons within a fully‑connected network and interprets the routing‑by‑agreement coefficients as weighting factors in a Product‑of‑Experts (PoE) model. The authors first review the PoE formulation for binary visible/hidden units, showing how the energy function leads to a contrastive‑divergence learning rule that requires only a single Gibbs step per parameter update. They then recap the dynamic routing algorithm used in capsule networks, where each lower‑level capsule predicts the activity of higher‑level capsules via learned transformation matrices and the predictions are combined using routing coefficients that are iteratively refined based on agreement (measured by cosine similarity).
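The routing step recapped above can be sketched in NumPy. This is a minimal illustration, not the authors' implementation: the tensor layout of `W`, the `squash` non-linearity, the softmax over upper-level capsules, and the iteration count are assumptions borrowed from the usual dynamic-routing recipe, and agreement is scored by cosine similarity as the summary describes.

```python
import numpy as np

def squash(s, eps=1e-9):
    """Rescale each vector so its norm lies in [0, 1) while keeping its direction."""
    sq = np.sum(s * s, axis=-1, keepdims=True)
    return (sq / (1.0 + sq)) * s / np.sqrt(sq + eps)

def softmax(b, axis):
    """Numerically stable softmax along the given axis."""
    e = np.exp(b - b.max(axis=axis, keepdims=True))
    return e / e.sum(axis=axis, keepdims=True)

def route(x_low, W, n_iter=3):
    """Routing by agreement between two adjacent capsule layers.

    x_low: (n_low, d_low) lower-level capsule vectors.
    W:     (n_low, n_high, d_high, d_low) transformation matrices (assumed layout).
    Returns routing coefficients c (n_low, n_high) and upper capsules v (n_high, d_high).
    """
    # Prediction of every upper capsule j made by every lower capsule i.
    u_hat = np.einsum("ijkl,il->ijk", W, x_low)
    b = np.zeros(W.shape[:2])  # routing logits, start uniform
    for _ in range(n_iter):
        c = softmax(b, axis=1)                  # each lower capsule splits its vote
        s = np.einsum("ij,ijk->jk", c, u_hat)   # routing-weighted sum of predictions
        v = squash(s)
        # Agreement: cosine similarity between each prediction and the current output.
        num = np.einsum("ijk,jk->ij", u_hat, v)
        den = np.linalg.norm(u_hat, axis=-1) * np.linalg.norm(v, axis=-1)[None, :] + 1e-9
        b = b + num / den
    return c, v
```

Each row of `c` sums to one, so a lower-level capsule distributes a fixed budget of coupling over the upper-level capsules.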

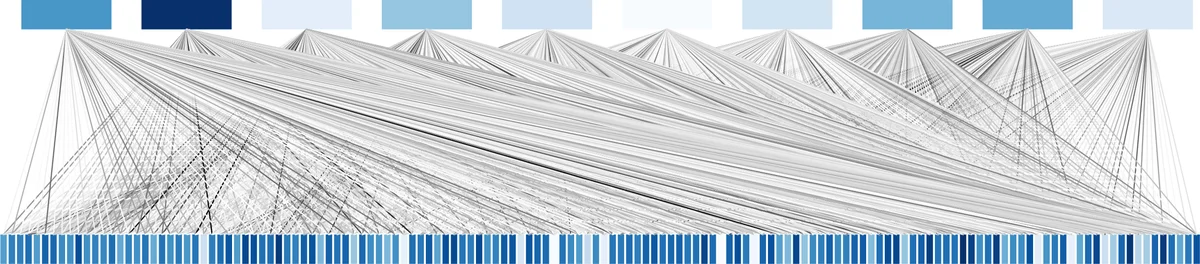

By defining an energy between a lower‑level capsule \(x_i^{(l)}\) and an upper‑level capsule \(x_j^{(l+1)}\) as \(E_{ij} = -x_j^{(l+1)T} W_{ij}^{(l)} x_i^{(l)}\), and weighting each pairwise energy with the routing coefficient \(c_{ij}^{(l)}\), the total energy of two adjacent layers becomes \(E = \sum_{i,j} c_{ij}^{(l)} E_{ij}\). The corresponding joint probability distribution takes the Boltzmann form \(P \propto \exp(-E)\) and can be factorised as a product of expert terms, each expert being a pair of capsule collections whose contribution is scaled by the routing coefficient. This yields a clean probabilistic interpretation of the routing process: high‑agreement capsule pairs receive larger weight, effectively lowering the energy of the sub‑network that connects them.
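The energy and its factorisation into experts can be checked numerically. The sketch below assumes the transformation matrices are stored as one array `W` of shape `(n_low, n_high, d_high, d_low)`; that layout and the function names are illustrative choices, not taken from the paper.

```python
import numpy as np

def pair_energy(x_low, x_high, W):
    """E_ij = -x_j^T W_ij x_i for every (lower i, upper j) pair; shape (n_low, n_high)."""
    return -np.einsum("jk,ijkl,il->ij", x_high, W, x_low)

def total_energy(x_low, x_high, W, c):
    """E = sum_ij c_ij * E_ij for two adjacent layers."""
    return float(np.sum(c * pair_energy(x_low, x_high, W)))

def unnormalised_prob(x_low, x_high, W, c):
    """exp(-E), written as a product with one expert term exp(-c_ij E_ij) per pair."""
    return float(np.prod(np.exp(-c * pair_energy(x_low, x_high, W))))
```

Because the exponent is a sum over pairs, `unnormalised_prob` equals `exp(-total_energy(...))`: a pair with a routing coefficient near zero contributes a factor near one and drops out of the product.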

Training proceeds in two alternating steps. First, with the transformation matrices \(W\) fixed, the routing coefficients are updated through the standard dynamic routing iterations, thereby discovering a data‑dependent sub‑network structure. Second, with the routing coefficients held constant, the parameters \(W\) are updated by maximizing the log‑likelihood using contrastive divergence. The gradient of the log‑likelihood with respect to a weight matrix is derived as

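\[
\frac{\partial \log P}{\partial W_{ij}^{(l)}} = c_{ij}^{(l)} \left( \left\langle x_j^{(l+1)} x_i^{(l)T} \right\rangle_{\text{data}} - \left\langle x_j^{(l+1)} x_i^{(l)T} \right\rangle_{\text{model}} \right)
\]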
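With the routing coefficients held fixed, differentiating \(-E\) with respect to \(W_{ij}^{(l)}\) gives \(c_{ij}^{(l)} x_j^{(l+1)} x_i^{(l)T}\), so a contrastive-divergence update compares this outer product under the data with the same quantity under a reconstruction. The sketch below assumes the data-phase and reconstruction-phase capsule states are already available; how the reconstruction is sampled is not specified in this summary, so it is left to the caller. The function names and the learning rate are hypothetical.

```python
import numpy as np

def cd_gradient(x_low_data, x_high_data, x_low_recon, x_high_recon, c):
    """Contrastive-divergence estimate of d log P / d W with routing coefficients c fixed.

    Capsule arrays have shapes (n_low, d_low) and (n_high, d_high); c is (n_low, n_high).
    Returns an array of shape (n_low, n_high, d_high, d_low), one matrix per capsule pair.
    """
    pos = np.einsum("jk,il->ijkl", x_high_data, x_low_data)    # data-phase outer products
    neg = np.einsum("jk,il->ijkl", x_high_recon, x_low_recon)  # reconstruction-phase outer products
    return c[:, :, None, None] * (pos - neg)

def cd_step(W, grad, lr=0.01):
    """One gradient-ascent step on the log-likelihood estimate (lr is an assumed value)."""
    return W + lr * grad
```

When the reconstruction matches the data the two phases cancel and the weights stop changing, and pairs with a small routing coefficient receive proportionally small updates.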
