Semisupervised Adversarial Neural Networks for Cyber Security Transfer Learning

On the path to establishing a global cybersecurity framework where each enterprise shares information about malicious behavior, an important question arises. How can a machine learning representation characterizing a cyber attack on one network be used to detect similar attacks on other enterprise networks if each network has wildly different distributions of benign and malicious traffic? We address this issue by comparing two baselines, naively transferring a model across network domains and using CORrelation ALignment, with our novel adversarial Siamese neural network. Our proposed model learns attack representations that are more invariant to each network’s particularities via an adversarial approach. It uses a simple ranking loss that prioritizes labeling the most egregious malicious events correctly over average accuracy. This is appropriate for driving an alert triage workflow wherein an analyst only has time to inspect the few events ranked highest by the model. In terms of accuracy, the other approaches fail completely to detect any malicious events among the first 100 events when models trained on one dataset are evaluated on another, whereas the method presented here retrieves sizable proportions of malicious events, at the expense of some training instabilities due to adversarial modeling. We evaluate these approaches using two publicly available networking datasets and suggest areas for future research.

💡 Research Summary

The paper tackles a fundamental problem in collaborative cyber‑security: how to reuse a machine‑learning model trained on traffic from one enterprise to detect similar attacks on another enterprise whose benign and malicious traffic distributions differ dramatically. The authors first demonstrate, using the Kuiper test, that the feature distributions of two widely used public datasets (UNSW‑NB15 and CICIDS2017) are statistically distinct across virtually all features. Consequently, both baselines fail: naïve model transfer, and CORAL (Correlation Alignment), a simple domain‑alignment technique that matches source and target covariance matrices. When the transferred model is evaluated on the top‑100 ranked events of the target domain, the malicious detection rate is essentially zero.
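As a rough illustration of the per-feature distribution check, the two-sample Kuiper statistic can be computed directly from the empirical CDFs of one feature in each dataset. The sketch below is not the authors' code: the function name `kuiper_statistic` and the toy values are hypothetical, and it returns only the statistic V = D+ + D-, without the p-value a full test would report.

```python
from bisect import bisect_right


def kuiper_statistic(sample_a, sample_b):
    """Two-sample Kuiper statistic V = D+ + D- from empirical CDFs.

    Hypothetical helper for illustration; no p-value is computed.
    """
    a = sorted(sample_a)
    b = sorted(sample_b)
    if not a or not b:
        raise ValueError("both samples must be non-empty")

    d_plus = 0.0   # largest amount by which ECDF(a) exceeds ECDF(b)
    d_minus = 0.0  # largest amount by which ECDF(b) exceeds ECDF(a)

    # The ECDFs only change at observed values, so checking the pooled
    # sample points is enough to find both maxima.
    for x in set(a) | set(b):
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        d_plus = max(d_plus, cdf_a - cdf_b)
        d_minus = max(d_minus, cdf_b - cdf_a)
    return d_plus + d_minus


# Toy values standing in for one flow feature from each dataset.
same = kuiper_statistic([1.0, 2.0, 3.0], [1.0, 2.0, 3.0])
shifted = kuiper_statistic([1.0, 2.0, 3.0], [10.0, 11.0, 12.0])
print(same, shifted)
```

A value near 0 suggests the two samples are distributed similarly for that feature, while larger values (the statistic ranges from 0 to 2) indicate a stronger mismatch.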

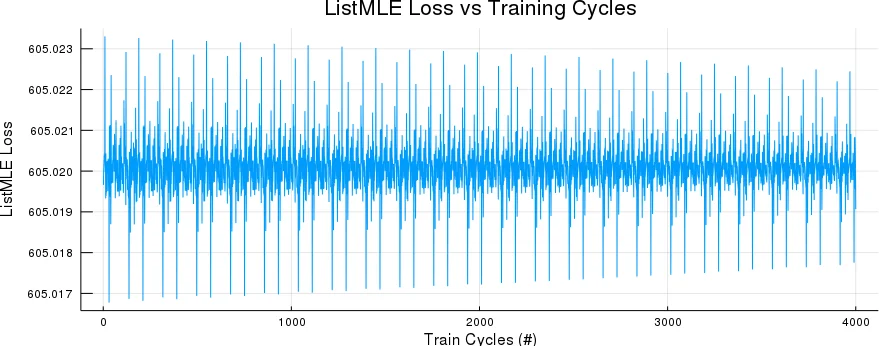

To overcome this, the authors propose a semi‑supervised adversarial neural network that combines three components: (1) an embedding network (E) that maps raw flow features into a low‑dimensional representation, (2) a ListMLE ranking head (L) that learns to order flows so that the most dangerous malicious flows receive the highest scores, and (3) an adversarial Siamese head (D) that tries to discriminate whether a pair of flows originates from the same dataset or from different datasets. The Siamese branch uses a contrastive loss with a margin of 2, and its gradients are reversed (ReverseGrad) before being applied to the shared embedding, thereby encouraging the embedding to be domain‑invariant. Training proceeds with two shuffled mini‑batches per iteration: the ListMLE loss is minimized via standard gradient descent, while the contrastive loss is maximized (gradient ascent) to create the adversarial game.
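The two loss terms can be sketched in plain Python to make the objectives concrete. This is a minimal illustration under stated assumptions, not the paper's implementation: ListMLE is written as the negative Plackett-Luce log-likelihood of the ordering induced by the labels, the contrastive loss uses one common formulation (squared distance for same-dataset pairs, squared hinge on the margin otherwise), and the function names are hypothetical. The gradient reversal step requires an autodiff framework and is not shown.

```python
import math


def listmle_loss(scores, relevance):
    """Negative Plackett-Luce log-likelihood of the relevance-sorted order.

    Items with equal relevance keep their input order (stable sort), which
    is an arbitrary tie-breaking choice made for this sketch.
    """
    order = sorted(range(len(scores)), key=lambda i: relevance[i], reverse=True)
    ordered = [scores[i] for i in order]
    loss = 0.0
    for i in range(len(ordered)):
        tail = ordered[i:]
        m = max(tail)  # subtract the max for a numerically stable log-sum-exp
        log_sum_exp = m + math.log(sum(math.exp(s - m) for s in tail))
        loss += log_sum_exp - ordered[i]
    return loss


def contrastive_loss(emb_a, emb_b, same_domain, margin=2.0):
    """Contrastive loss on one pair of embeddings (assumed formulation).

    Same-dataset pairs are penalized by squared distance; different-dataset
    pairs are penalized only while they sit closer than the margin.
    """
    d = math.dist(emb_a, emb_b)
    if same_domain:
        return d ** 2
    return max(0.0, margin - d) ** 2


# Scores that agree with the labels give a lower ranking loss.
good = listmle_loss([3.0, 2.0, 1.0], [2, 1, 0])
bad = listmle_loss([1.0, 2.0, 3.0], [2, 1, 0])
print(good < bad)
print(contrastive_loss([0.0, 0.0], [0.0, 1.0], same_domain=False))
```

In the adversarial setup described above, the ranking loss would be minimized as usual, while the sign of the contrastive gradient is flipped before it reaches the shared embedding, so the embedding is pushed toward making pairs from different datasets hard to tell apart.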

The authors evaluate the approach on DoS attacks only, rebalancing each dataset to a 1:1 ratio of DoS to normal traffic. They introduce a new metric, “Rolling Top‑N Accuracy,” which measures the proportion of malicious events recovered within the top‑N ranked alerts—a metric that mirrors the practical constraints of security analysts who can only triage a limited number of alerts. Under this metric, both naïve transfer and CORAL achieve 0 % detection in the first 100 alerts, whereas the adversarial model recovers roughly 30‑45 % of the malicious events, demonstrating a meaningful improvement despite the challenging domain shift.
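One plausible reading of the Rolling Top‑N metric is the fraction of truly malicious events among the k highest-scored alerts, tracked for every k from 1 to N. The sketch below follows that reading, which is an assumption, since the summary does not give the exact formula; the function name `rolling_top_n_accuracy` and the toy scores are hypothetical.

```python
def rolling_top_n_accuracy(scores, labels, n_max):
    """Fraction of malicious events (label 1) in the top-k alerts, k = 1..n_max.

    Assumed interpretation of the metric, for illustration only.
    """
    # Rank events from highest to lowest model score.
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits = 0
    curve = []
    for k, (_, label) in enumerate(ranked[:n_max], start=1):
        hits += int(label == 1)
        curve.append(hits / k)
    return curve


# Four toy events; 1 marks a malicious flow, 0 a benign one.
curve = rolling_top_n_accuracy(
    scores=[0.9, 0.1, 0.8, 0.4],
    labels=[1, 0, 0, 1],
    n_max=3,
)
print(curve)
```

Under this reading, a model whose top 100 alerts contain no malicious events produces a curve that is zero at every position, which matches the 0 % result reported for the two baselines.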

Training instability is reported: the adversarial loss can cause oscillations, and performance is sensitive to hyper‑parameters such as learning rates, loss weighting, and the margin in the contrastive loss. The authors acknowledge that more sophisticated optimization schedules, adaptive loss weighting, or larger, deeper architectures could mitigate these issues. They also note that their network is deliberately simple (few hidden layers) and that richer architectures might yield better results.

In addition to the empirical results, the paper contributes methodological insights: (i) the use of ListMLE for ranking aligns model objectives with real‑world triage workflows, (ii) the combination of adversarial domain‑invariance with a ranking loss is novel in the cyber‑security transfer‑learning literature, and (iii) the Rolling Top‑N metric offers a more operationally relevant evaluation than traditional accuracy or F1 scores.

The discussion highlights several avenues for future work: stabilizing adversarial training, extending the approach to multi‑domain (more than two enterprises) scenarios, incorporating few‑shot or meta‑learning to reduce the need for labeled data in new domains, and testing the system in live SOC environments with continuous feedback loops. Overall, the study provides a promising proof‑of‑concept that adversarial Siamese networks, when paired with ranking objectives, can partially bridge the gap caused by heterogeneous network traffic distributions, moving the field closer to practical, cross‑enterprise cyber‑threat detection.
