A computational model for grid maps in neural populations

Grid cells in the entorhinal cortex, together with head direction, place, speed and border cells, are major contributors to the organization of spatial representations in the brain. In this work we introduce a novel theoretical and algorithmic framework able to explain the emergence of hexagonal grid-like response patterns from head direction cells’ responses. We show that this pattern is a result of minimal-variance encoding by the neurons. The novelty lies in the formulation of the encoding problem in the language of modern Frame Theory, specifically that of equiangular frames, which provides new insights into the optimality of hexagonal grid receptive fields. The proposed model overcomes some crucial limitations of the current attractor and oscillatory models. It is based on the well-accepted and tested hypothesis of Hebbian learning, providing a simplified cortical-based framework that does not require the presence of theta velocity-driven oscillations (oscillatory model) or translational symmetries in the synaptic connections (attractor model). We moreover demonstrate that the proposed encoding mechanism naturally explains the axis alignment of neighboring grid cells and maps shifts, rotations and scalings of the stimuli onto the shape of grid cells’ receptive fields, giving a straightforward explanation of the experimental evidence of grid-cell remapping under transformations of environmental cues.

💡 Research Summary

The paper proposes a novel computational framework that explains how hexagonal grid‑cell firing patterns can emerge from the activity of head‑direction (HD) cells. The authors start from three biologically plausible assumptions: (1) the second‑order statistics of the upstream inputs are stationary, implying that the input covariance matrix is diagonalized by Fourier bases; (2) synaptic weights evolve according to Oja’s rule, a normalized Hebbian learning rule that converges to the principal components of the input; and (3) the neural population aims to encode the animal’s position with minimal variance, i.e., to maximize the Fisher information about position.

Under these assumptions, the learned weight functions become complex exponentials e^{i k·ξ}, i.e., Fourier components characterized by two‑dimensional frequency vectors k. The response of each “simple” HD cell to a translated stimulus is a phase‑shifted Fourier coefficient, while a change in head direction corresponds to a rotation of the frequency vectors. A “complex” grid cell linearly aggregates the responses of several such simple cells, so its overall response is a sum of phase‑modulated complex exponentials.
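The link between stationary inputs, Oja's rule, and Fourier-like weights can be illustrated with a small one-dimensional sketch. This is not the paper's simulation: the template signal, the function name `oja_on_shifted_inputs`, and all parameter values are assumptions chosen for illustration. Inputs are random circular shifts of one fixed signal, which makes their covariance shift-invariant, and a single linear unit is trained with Oja's update.

```python
import numpy as np

def oja_on_shifted_inputs(n=32, steps=20000, eta=0.002, seed=0):
    """Train one linear unit with Oja's rule on circular shifts of a template.

    Hypothetical setup: the template mixes a strong component at frequency 3
    and a weak one at frequency 5, so the leading principal subspace is
    spanned by the cosine and sine at frequency 3.
    """
    rng = np.random.default_rng(seed)
    t = np.arange(n)
    template = (2.0 * np.cos(2 * np.pi * 3 * t / n)
                + 0.5 * np.cos(2 * np.pi * 5 * t / n + 0.3))
    w = rng.standard_normal(n)
    w /= np.linalg.norm(w)
    for _ in range(steps):
        # Random shift: the input statistics are stationary by construction.
        x = np.roll(template, rng.integers(n))
        y = w @ x
        # Oja's rule: Hebbian term y*x with a decay term that keeps |w| near 1.
        w += eta * y * (x - y * w)
    return w

w = oja_on_shifted_inputs()
power = np.abs(np.fft.rfft(w)) ** 2
dominant = int(np.argmax(power))               # frequency bin with most energy
concentration = power[dominant] / power.sum()  # share of energy in that bin
print(dominant, round(float(concentration), 3))
```

In this toy setting the learned weight vector is close to a single sinusoid at the dominant input frequency, with an arbitrary phase, which is the one-dimensional, real-valued analogue of the complex exponentials described above.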

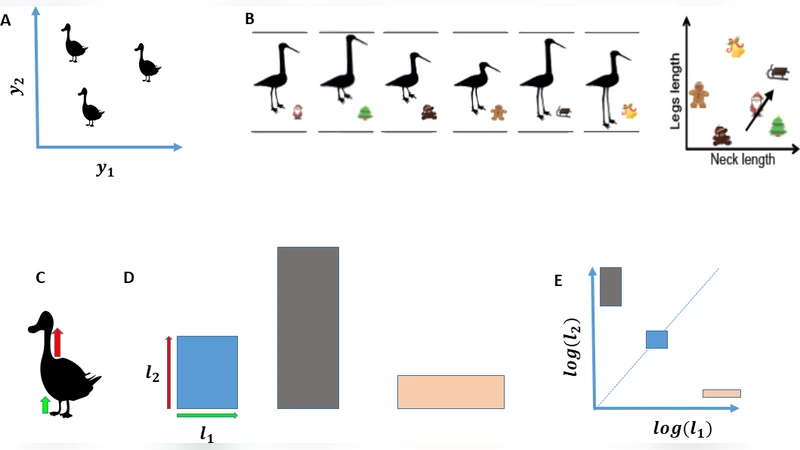

The authors then apply the Cramér‑Rao bound to the noisy population responses (with noise assumed Gaussian, independent, and of identical variance) and show that the lower bound on position‑estimation variance is minimized when the set of frequency vectors forms an equiangular frame. In two dimensions, the optimal frames are: (i) an orthonormal pair for N = 2, and (ii) the Mercedes‑Benz frame for N = 3, consisting of three vectors separated by 120°. The latter yields an interference pattern that is precisely a hexagonal lattice. The paper proves this result analytically (Theorem 1) and demonstrates it in simulations using a two‑phase learning procedure: the first phase extracts Fourier‑like receptive fields from natural‑image translations, and the second phase selects three equiangular components that, when summed, produce a robust hexagonal grid.
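A short numerical check can make the frame argument concrete. The sketch below is not the paper's derivation. It assumes unit‑modulus complex exponential responses with independent Gaussian noise of variance σ², in which case the Fisher information about position is (1/σ²) Σ_j k_j k_jᵀ. The helper names (`unit_vectors`, `crb_trace`, `grid_response`) and the comparison set of vectors are hypothetical.

```python
import numpy as np

def unit_vectors(angles_deg):
    """Rows are unit frequency vectors at the given angles (degrees)."""
    a = np.deg2rad(np.asarray(angles_deg, dtype=float))
    return np.stack([np.cos(a), np.sin(a)], axis=1)

def crb_trace(K, sigma=1.0):
    """Trace of the Cramer-Rao bound under the assumed noise model.

    Assumption: Fisher information J = (1/sigma^2) * sum_j k_j k_j^T,
    so the total variance bound is sigma^2 * trace(inv(K^T K)).
    """
    S = K.T @ K  # frame operator: sum of outer products k_j k_j^T
    return sigma ** 2 * np.trace(np.linalg.inv(S))

mb = unit_vectors([0, 120, 240])   # Mercedes-Benz frame
skewed = unit_vectors([0, 30, 60]) # non-equiangular comparison (illustrative)

frame_op = mb.T @ mb               # equals 1.5 * identity (tight frame)
gram = mb @ mb.T                   # off-diagonal inner products all -0.5
bound_mb = crb_trace(mb)           # 4/3, the minimum for three unit vectors
bound_skewed = crb_trace(skewed)   # strictly larger

def grid_response(points, K):
    """Sum of cosines cos(k_j . x) over the frame, one value per point."""
    return np.cos(points @ K.T).sum(axis=-1)

# Lattice vectors with k_j . a in 2*pi*Z for every frame vector k_j.
a1 = 2 * np.pi * np.array([1.0, 1 / np.sqrt(3)])
a2 = 2 * np.pi * np.array([0.0, 2 / np.sqrt(3)])
cos_angle = a1 @ a2 / (np.linalg.norm(a1) * np.linalg.norm(a2))

pts = np.random.default_rng(0).uniform(-10, 10, size=(200, 2))
periodic = (np.allclose(grid_response(pts, mb), grid_response(pts + a1, mb))
            and np.allclose(grid_response(pts, mb), grid_response(pts + a2, mb)))
```

Under these assumptions the frame operator of the Mercedes‑Benz frame is a multiple of the identity, so position is estimated equally well in every direction. The summed response repeats along two equal‑length lattice vectors 60° apart, which is the signature of a hexagonal lattice.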

Crucially, the model predicts that neighboring grid cells share the same orientation because cells receiving similar inputs will learn receptive fields with similar frequencies and orientations. It also accounts for grid‑cell remapping under environmental transformations: rotating or scaling visual cues rotates or rescales the frequency vectors, leading to a corresponding rotation or scaling of the grid pattern.
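The remapping argument can be written out in one line. The following is a sketch, assuming the grid response is modeled as a sum of cosines over the three frequency vectors; the symbols g, s and R_θ (a rotation by angle θ) are introduced here for illustration and are not taken from the paper.

```latex
g(\mathbf{x}) = \sum_{j=1}^{3} \cos(\mathbf{k}_j \cdot \mathbf{x}),
\qquad
\mathbf{k}_j \mapsto s\,R_\theta\,\mathbf{k}_j
\;\Longrightarrow\;
g'(\mathbf{x})
= \sum_{j=1}^{3} \cos\!\big(s\,R_\theta \mathbf{k}_j \cdot \mathbf{x}\big)
= \sum_{j=1}^{3} \cos\!\big(\mathbf{k}_j \cdot s\,R_\theta^{\top} \mathbf{x}\big)
= g\big(s\,R_{-\theta}\,\mathbf{x}\big).
```

A field located at x₀ in the original pattern therefore appears at (1/s) R_θ x₀ in the transformed one: the grid rotates by θ and its spacing changes by a factor 1/s.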

Compared with existing theories, the proposed framework avoids the problematic assumptions of the oscillatory interference model (requiring theta‑velocity‑driven oscillations, which are absent in some species) and the continuous attractor model (requiring unrealistically precise translational symmetry of synaptic weights). Instead, it relies solely on Hebbian learning, a well‑established biological mechanism, and on the statistical optimality of equiangular frames.

In summary, the authors present a mathematically rigorous, biologically plausible, and experimentally consistent account of grid‑cell formation. By linking stationary input statistics, Oja’s learning rule, and Fisher‑information‑based optimal encoding, they show that hexagonal grid fields arise naturally as the minimal‑variance solution for a population driven by head‑direction cells, providing an alternative to previous models and opening new avenues for understanding spatial and possibly abstract cognitive maps.
