Efficient Novelty-Driven Neural Architecture Search

One-Shot Neural architecture search (NAS) attracts broad attention recently due to its capacity to reduce the computational hours through weight sharing. However, extensive experiments on several recent works show that there is no positive correlation between the validation accuracy with inherited weights from the supernet and the test accuracy after re-training for One-Shot NAS. Different from devising a controller to find the best performing architecture with inherited weights, this paper focuses on how to sample architectures to train the supernet to make it more predictive. A single-path supernet is adopted, where only a small part of weights are optimized in each step, to reduce the memory demand greatly. Furthermore, we abandon devising complicated reward based architecture sampling controller, and sample architectures to train supernet based on novelty search. An efficient novelty search method for NAS is devised in this paper, and extensive experiments demonstrate the effectiveness and efficiency of our novelty search based architecture sampling method. The best architecture obtained by our algorithm with the same search space achieves the state-of-the-art test error rate of 2.51% on CIFAR-10 with only 7.5 hours search time in a single GPU, and a validation perplexity of 60.02 and a test perplexity of 57.36 on PTB. We also transfer these search cell structures to larger datasets ImageNet and WikiText-2, respectively.

💡 Research Summary

The paper tackles a fundamental limitation of One‑Shot Neural Architecture Search (NAS): the weak correlation between validation accuracy obtained from shared weights in a supernet and the true performance after full training. Traditional One‑Shot methods rely on a reward‑based controller (e.g., reinforcement learning, gradient‑based architecture parameters) that optimizes this deceptive proxy, often leading to sub‑optimal architectures.

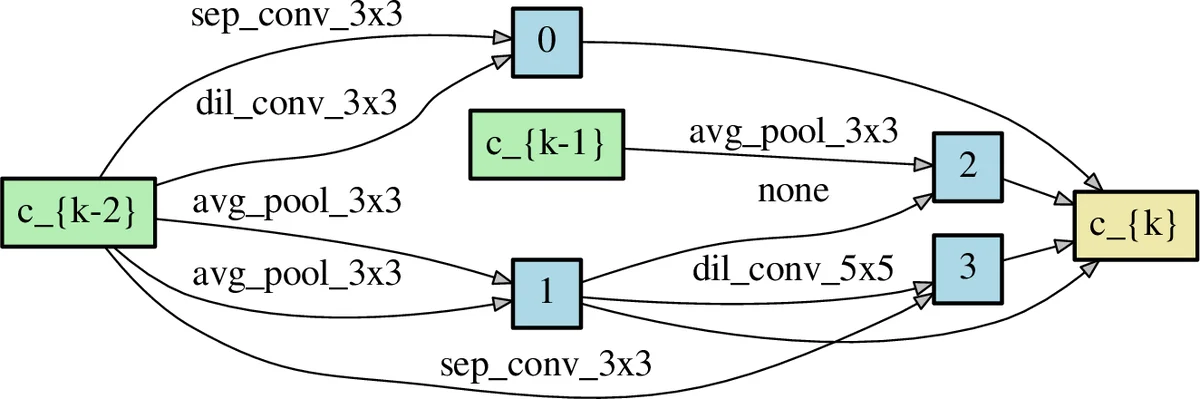

To address this, the authors propose two complementary innovations. First, they adopt a single‑path supernet architecture. The entire search space is encoded as a large directed acyclic graph (DAG), but during each training iteration only one path (i.e., one candidate architecture) is activated and its weights are updated. This drastically reduces memory consumption, allowing the whole process to run on a single GPU without the need to store all weights simultaneously.

Second, instead of a reward‑driven controller, they employ Novelty Search (NS) to sample architectures for supernet training. NS treats the architecture as a behavior characterized by a vector representation of its graph structure. At each step, the novelty of a candidate α is computed as the average Euclidean distance to its k‑nearest neighbors in an archive A of previously visited architectures:

N(α, A) = (1/|S|) Σ_{β∈S} ||b(α) – b(β)||², where S are the k nearest neighbors.

The architecture with the highest novelty score is selected for weight update, and the archive is updated (old low‑novelty entries may be replaced). To keep the distance computation tractable, the authors encode each cell as a binary vector (presence/absence of operations and connections) and use approximate nearest‑neighbor search via hashing.

The overall algorithm (EN²AS) proceeds as follows:

- Initialize an empty archive and random supernet weights.

- For a fixed number of training steps, either (a) if the archive is not full, sample uniformly and update weights, or (b) once full, select the most novel architecture, update its representation via gradient steps (Eq. 8/9), and train the corresponding path.

- After supernet training, perform a final architecture selection phase using either random search or a lightweight evolutionary algorithm on the validation set, then fully retrain the chosen architecture.

Experiments cover both convolutional (CIFAR‑10, ImageNet) and recurrent (Penn Treebank, WikiText‑2) domains. On CIFAR‑10, the method discovers a cell that achieves 2.51 % test error after only 7.5 hours of search on a single GPU—comparable to or better than state‑of‑the‑art methods that require dozens of GPU‑days. On PTB, it reaches validation perplexity 60.02 and test perplexity 57.36, again outperforming many weight‑sharing baselines. The discovered cells transfer well to larger datasets, confirming the generality of the approach.

Key strengths of the work include:

- Memory efficiency: Single‑path training eliminates the need for full‑supernet weight storage, enabling large‑scale search on modest hardware.

- Exploration‑focused sampling: Novelty Search drives the supernet to cover diverse regions of the architecture space, mitigating the deceptive‑reward problem and reducing reliance on complex controllers.

- Simplicity and reproducibility: The method replaces sophisticated RL or gradient‑based controllers with a straightforward archive‑based mechanism, easing implementation.

However, several limitations are noted:

- Novelty metric design: The binary vector embedding and Euclidean distance may not capture functional similarity between architectures; a learned similarity metric could be more predictive.

- Hyper‑parameter sensitivity: The archive size (S) and neighbor count (k) are critical but not extensively ablated; performance may vary across search spaces.

- Exploitation deficit: Pure novelty search emphasizes diversity but lacks a direct performance‑driven exploitation component, potentially slowing convergence to the true optimum.

- Search space scope: Experiments focus on cell‑based spaces; extending to more heterogeneous or multi‑scale architectures may require additional engineering.

Future directions suggested by the authors include integrating a performance‑aware term into the novelty score (forming a hybrid “novelty‑plus‑reward” objective), learning a more expressive architecture embedding for distance calculation, and applying the framework to broader search spaces such as transformer‑style blocks or hardware‑aware NAS.

Overall, the paper demonstrates that encouraging diverse architectural exploration via novelty search, combined with a memory‑light single‑path supernet, can yield state‑of‑the‑art results with dramatically reduced computational resources, offering a compelling alternative to reward‑centric One‑Shot NAS paradigms.

Comments & Academic Discussion

Loading comments...

Leave a Comment