Short-term Electric Load Forecasting Using TensorFlow and Deep Auto-Encoders

This paper studies short-term electric load forecasting in a big-data setting. It builds a new load forecasting model based on Deep Auto-Encoder Networks (DAENs) that takes multidimensional load-related data into account, including historical load values, temperature, and day type. On this basis, a distributed short-term load forecasting method combining TensorFlow and DAENs is proposed, together with an algorithm flowchart. The method overcomes shortcomings of traditional neural network methods such as over-fitting, slow convergence, and convergence to local optima. Case study results show that, compared with methods based on traditional neural networks, the proposed method has clear advantages in prediction accuracy, stability, and scalability. The model therefore better meets the demands of short-term load forecasting in big-data scenarios.

💡 Research Summary


The paper addresses the challenge of short‑term electric load forecasting in the era of big data by proposing a novel framework that combines TensorFlow‑based distributed computing with Deep Auto‑Encoder Networks (DAENs). Recognizing the limitations of traditional statistical methods (e.g., time‑series, regression) and earlier AI approaches such as Back‑Propagation Neural Networks (BPNNs) and Extreme Learning Machines (ELMs)—which suffer from over‑fitting, slow convergence, and difficulty handling high‑dimensional data—the authors adopt a deep learning architecture that is inherently suited for feature extraction from large, multi‑variate datasets.

Data preprocessing

Four categories of input variables are used: historical load values, daily average temperature, day type (day of the week), and a holiday indicator. Load values are normalized to the range [0, 1] by min-max scaling. Temperature is transformed through three fuzzy membership functions (low, medium, high) to obtain smooth, continuous representations. Day type is encoded with a weighted one-hot scheme in which the active position takes the value 0.5 instead of 1. The holiday indicator takes two values (0.5 for a holiday, 0 otherwise). The resulting input vector has 57 dimensions for each forecasting horizon.
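A minimal NumPy sketch of the four preprocessing steps is given below. The triangular membership shapes, the breakpoints (0, 10 and 20 °C), the function names, and the use of 24 past loads in the usage example are hypothetical choices for illustration; the summary does not specify the membership functions or how the 57 input dimensions are composed.

```python
import numpy as np


def min_max_scale(x, x_min, x_max):
    """Scale load values into [0, 1] with min-max normalization."""
    x = np.asarray(x, dtype=float)
    return (x - x_min) / (x_max - x_min)


def fuzzy_temperature(t, low=0.0, mid=10.0, high=20.0):
    """Map a temperature to (low, medium, high) fuzzy memberships.

    Hypothetical triangular shapes and breakpoints; memberships sum to 1.
    """
    mu_low = np.clip((mid - t) / (mid - low), 0.0, 1.0)    # 1 at/below `low`, 0 at `mid`
    mu_high = np.clip((t - mid) / (high - mid), 0.0, 1.0)  # 0 at `mid`, 1 at/above `high`
    mu_med = 1.0 - mu_low - mu_high                        # triangle peaking at `mid`
    return np.array([mu_low, mu_med, mu_high])


def weighted_one_hot(index, size, weight=0.5):
    """One-hot vector whose active entry is `weight` instead of 1."""
    v = np.zeros(size)
    v[index] = weight
    return v


def holiday_flag(is_holiday):
    """0.5 for a holiday, 0 otherwise."""
    return np.array([0.5 if is_holiday else 0.0])


def build_features(past_loads, load_min, load_max, temp, weekday, is_holiday):
    """Concatenate the four encoded input groups into one feature vector."""
    return np.concatenate([
        min_max_scale(past_loads, load_min, load_max),
        fuzzy_temperature(temp),
        weighted_one_hot(weekday, 7),
        holiday_flag(is_holiday),
    ])


# Usage with 24 hypothetical past loads: 24 + 3 + 7 + 1 = 35 features.
x = build_features(np.linspace(500, 700, 24), 500, 700, temp=12.0,
                   weekday=0, is_holiday=False)
```

The fuzzy encoding changes smoothly with temperature, so two days a fraction of a degree apart receive nearly identical inputs instead of falling into different hard bins.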

Model architecture

The forecasting model consists of stacked auto-encoders followed by a linear regression output layer. The layers are sized 57 → 24 → 12 → 1, progressively compressing the input space. Each auto-encoder is trained to reconstruct its input by minimizing a cross-entropy reconstruction loss plus a sparsity penalty based on the Kullback-Leibler (KL) divergence. The penalty keeps the average activation of each hidden unit close to a small target value, so that most hidden units stay near zero for any given input. This encourages compact, discriminative representations and reduces the risk of over-fitting.
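The objective of one sparse auto-encoder can be written out as follows. This is a sketch assuming sigmoid encoder and decoder units; the target activation `rho = 0.05` and penalty weight `beta = 3.0` are illustrative defaults, not values taken from the paper.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def kl_sparsity(rho, rho_hat, eps=1e-8):
    """Sum over hidden units of KL(rho || rho_hat_j) between Bernoulli variables."""
    rho_hat = np.clip(rho_hat, eps, 1 - eps)
    return np.sum(rho * np.log(rho / rho_hat)
                  + (1 - rho) * np.log((1 - rho) / (1 - rho_hat)))


def sparse_ae_loss(X, W1, b1, W2, b2, rho=0.05, beta=3.0, eps=1e-8):
    """Cross-entropy reconstruction loss plus beta-weighted KL sparsity penalty.

    X has shape (n_samples, n_inputs) with entries in [0, 1].
    """
    H = sigmoid(X @ W1 + b1)                  # hidden code, (n, n_hidden)
    X_rec = np.clip(sigmoid(H @ W2 + b2), eps, 1 - eps)  # reconstruction, (n, n_inputs)
    recon = -np.mean(np.sum(X * np.log(X_rec) + (1 - X) * np.log(1 - X_rec), axis=1))
    rho_hat = H.mean(axis=0)                  # average activation of each hidden unit
    return recon + beta * kl_sparsity(rho, rho_hat)
```

The penalty is zero only when every hidden unit's average activation equals `rho`, and grows as units become active more often, which is what drives most units toward zero.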

Training strategy

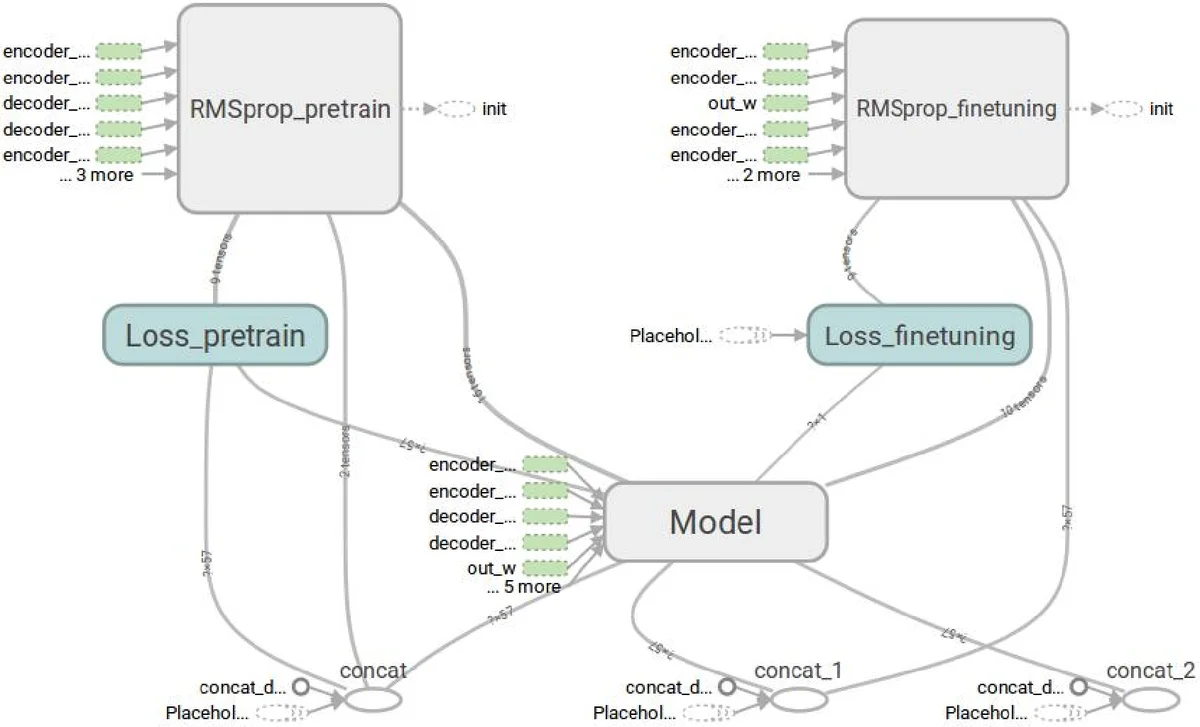

Training proceeds in two stages. First, unsupervised layer-wise pre-training initializes the weights of each auto-encoder using stochastic gradient descent (SGD) or Adam, for a maximum of 2000 iterations. This step mitigates the vanishing-gradient problem and provides a good starting point for the subsequent supervised phase. Second, the entire network is fine-tuned on labeled data (actual load values) using Adam with a learning rate of 0.01 for up to 250 epochs. Compared with training a deep network from scratch, the two-stage approach converges faster and generalizes better.
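The two stages can be sketched in NumPy as below. To keep the code short it swaps Adam for plain full-batch gradient descent; the 2000 pre-training iterations and 250 fine-tuning epochs follow the figures above, while the learning rates and sparsity parameters are illustrative. It also assumes one reading of the architecture: 57 → 24 and 24 → 12 are two stacked auto-encoders, and the final 1 is the linear regression output. All function names are hypothetical.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def pretrain_layer(X, n_hidden, rho=0.05, beta=3.0, lr=0.1, iters=2000, seed=0):
    """Pre-train one sparse auto-encoder on X and return its encoder (W, b).

    Full-batch gradient descent on cross-entropy reconstruction loss plus a
    beta-weighted KL sparsity penalty.
    """
    rng = np.random.default_rng(seed)
    n, d = X.shape
    W1, b1 = rng.normal(0, 0.1, (d, n_hidden)), np.zeros(n_hidden)
    W2, b2 = rng.normal(0, 0.1, (n_hidden, d)), np.zeros(d)
    for _ in range(iters):
        H = sigmoid(X @ W1 + b1)                   # hidden code
        X_rec = sigmoid(H @ W2 + b2)               # reconstruction
        dZ2 = (X_rec - X) / n                      # sigmoid + cross-entropy gradient
        rho_hat = np.clip(H.mean(axis=0), 1e-6, 1 - 1e-6)
        d_kl = beta * (-rho / rho_hat + (1 - rho) / (1 - rho_hat)) / n
        dZ1 = (dZ2 @ W2.T + d_kl) * H * (1 - H)    # back through the encoder
        W2 -= lr * H.T @ dZ2
        b2 -= lr * dZ2.sum(axis=0)
        W1 -= lr * X.T @ dZ1
        b1 -= lr * dZ1.sum(axis=0)
    return W1, b1


def forward(X, layers, w_out, b_out):
    """Return the activations of every layer and the linear regression output."""
    acts = [X]
    for W, b in layers:
        acts.append(sigmoid(acts[-1] @ W + b))
    return acts, acts[-1] @ w_out + b_out


def fine_tune(X, y, layers, lr=0.05, epochs=250, seed=0):
    """Supervised fine-tuning of all layers on 0.5 * mean squared error."""
    rng = np.random.default_rng(seed)
    w_out, b_out = rng.normal(0, 0.1, layers[-1][0].shape[1]), 0.0
    layers = list(layers)
    n = len(y)
    for _ in range(epochs):
        acts, y_hat = forward(X, layers, w_out, b_out)
        err = (y_hat - y) / n                      # dLoss / dy_hat
        dA = np.outer(err, w_out)                  # gradient w.r.t. top hidden layer
        w_out = w_out - lr * acts[-1].T @ err
        b_out = b_out - lr * err.sum()
        for i in range(len(layers) - 1, -1, -1):   # backpropagate layer by layer
            W, b = layers[i]
            dZ = dA * acts[i + 1] * (1 - acts[i + 1])
            dA = dZ @ W.T
            layers[i] = (W - lr * acts[i].T @ dZ, b - lr * dZ.sum(axis=0))
    return layers, w_out, b_out


def train_daen(X, y, hidden_sizes=(24, 12)):
    """Greedy layer-wise pre-training followed by end-to-end fine-tuning."""
    layers, H = [], X
    for k, n_hidden in enumerate(hidden_sizes):
        W, b = pretrain_layer(H, n_hidden, seed=k)
        layers.append((W, b))
        H = sigmoid(H @ W + b)                     # codes feed the next auto-encoder
    return fine_tune(X, y, layers)
```

Each auto-encoder is trained only on the codes produced by the layer below it, so pre-training never sees the load targets; only `fine_tune` uses `y`, and it updates the pre-trained weights together with the output layer.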

Distributed implementation with TensorFlow

TensorFlow's data-flow graph model and multi-GPU support are used to parallelize both pre-training and fine-tuning. The training data are partitioned across the available GPUs, and each GPU processes its subset simultaneously. The flowchart in Fig. 3 summarizes the pipeline: data loading → normalization, fuzzification, and encoding → tensor creation → distribution to GPUs → parallel training → aggregation of model parameters → testing. Parallel training reduces wall-clock training time, which makes the approach scalable to larger datasets and higher-frequency forecasting tasks.
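The partition-and-aggregate idea behind this pipeline can be illustrated with a small NumPy simulation of synchronous data parallelism, in which each array shard stands in for one GPU. This is not TensorFlow code and not the paper's implementation; it uses a linear model and hypothetical function names for brevity, and only shows why averaging per-device gradients reproduces the full-batch gradient.

```python
import numpy as np


def mse_grad(w, X, y):
    """Gradient of 0.5 * mean squared error for a linear model y ≈ X @ w."""
    return X.T @ (X @ w - y) / len(y)


def data_parallel_grad(w, X, y, n_devices):
    """Split the batch into shards, compute one gradient per shard, average them.

    Each shard plays the role of one GPU in synchronous data parallelism.
    """
    X_shards = np.array_split(X, n_devices)
    y_shards = np.array_split(y, n_devices)
    grads = [mse_grad(w, Xs, ys) for Xs, ys in zip(X_shards, y_shards)]
    return np.mean(grads, axis=0)   # aggregation step before the shared update
```

A plain average matches the full-batch gradient only when all shards have the same size; with uneven shards, each per-device gradient should be weighted by its shard size.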

Experimental setup

The authors evaluate the method on real-world data from the European Network on Intelligent Technologies for Smart Adaptive Systems (EUNITE). The dataset includes hourly load measurements, temperature, day type, and holiday flags for a full year. A separate model is trained for each of the 24 hourly horizons (hours 1 through 24 of the day), resulting in 24 distinct DAEN predictors. Data from January 1 to November 30 are used for training (both pre-training and fine-tuning), and December 24 to 31 serves as the test set. The hardware platform is a Dell T430 server with multiple PCIe 3.0 x16 GPU slots, running Ubuntu 14.04 and TensorFlow 0.9.0.
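The date-based split and the one-model-per-hour setup can be sketched with the Python standard library. The year (1998) and the helper names are hypothetical; substitute the year of the records actually used.

```python
from datetime import date, timedelta


def date_range(start, end):
    """Inclusive list of calendar days from start to end."""
    return [start + timedelta(days=i) for i in range((end - start).days + 1)]


def split_by_hour(daily_loads):
    """Turn a list of 24-value daily load profiles into 24 per-hour series.

    Series `hour` (1..24) is the target of the model that forecasts that hour.
    """
    return {hour: [day[hour - 1] for day in daily_loads] for hour in range(1, 25)}


YEAR = 1998  # hypothetical year; use the year of the records actually loaded
train_days = date_range(date(YEAR, 1, 1), date(YEAR, 11, 30))
test_days = date_range(date(YEAR, 12, 24), date(YEAR, 12, 31))
```

In a non-leap year this gives 334 training days and 8 test days; December 1 to 23 falls in neither set under the split described above.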

Performance metrics

Three error metrics are reported, all expressed as percentages: maximum relative error (MaxRe), minimum relative error (MinRe), and mean absolute error (MAE). Compared with the BPNN and ELM baselines, the DAEN-TensorFlow solution achieves lower MaxRe and MinRe values, indicating improved stability, and reduces MAE by roughly 10% on average, indicating higher accuracy. The authors attribute these gains to (1) richer feature representation through deep auto-encoding, (2) effective regularization through sparsity constraints, and (3) accelerated training that permits deeper architectures without over-fitting.
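The three metrics can be computed from per-forecast relative errors as sketched below. Because MAE is reported as a percentage, this sketch assumes it is the mean of the absolute relative errors (that is, a mean absolute percentage error); the paper's exact definition may differ.

```python
import numpy as np


def relative_errors(y_true, y_pred):
    """Absolute relative error of each forecast, in percent."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return np.abs(y_pred - y_true) / np.abs(y_true) * 100.0


def summarize(y_true, y_pred):
    """MaxRe, MinRe and (assumed percentage) MAE over a test period."""
    re = relative_errors(y_true, y_pred)
    return {"MaxRe": re.max(), "MinRe": re.min(), "MAE": re.mean()}


# Usage: relative errors are 10%, 5% and 0%.
scores = summarize([100, 200, 400], [110, 190, 400])
```

MaxRe and MinRe bound the spread of the errors, which is why the summary reads lower values as better stability, while MAE summarizes average accuracy.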

Contributions and significance

- Integrated preprocessing – The combination of min‑max scaling, fuzzy temperature encoding, and weighted one‑hot day‑type encoding creates a robust, low‑dimensional representation of heterogeneous load‑related factors.

- Deep auto‑encoder with sparsity – By embedding KL‑divergence sparsity penalties, the network learns compact latent features that capture nonlinear dependencies while avoiding the identity mapping problem.

- Two‑stage training – Layer‑wise unsupervised pre‑training followed by supervised fine‑tuning yields faster convergence and better generalization than end‑to‑end training of shallow networks.

- Scalable TensorFlow implementation – Multi-GPU parallelism shortens training and suggests that deep learning can scale to the large data volumes that power system operators handle.

Limitations and future work

The study fixes the network depth (four layers) and hyper‑parameters (learning rate, sparsity weight β) based on a single dataset; transferring the model to other regions or markets may require re‑tuning. The fuzzy membership functions and weighted one‑hot encoding parameters are hand‑crafted rather than learned, leaving potential performance gains untapped. Moreover, the paper does not discuss inference latency or integration with dispatch‑level decision support systems, which are critical for operational deployment. Future research could explore automated hyper‑parameter optimization (e.g., Bayesian search), adaptive fuzzy encoding, model compression for edge deployment, and real‑time closed‑loop testing within an energy market simulation.

Overall assessment

The paper presents a well‑structured, technically sound approach that convincingly demonstrates the advantages of deep auto‑encoders combined with TensorFlow’s distributed capabilities for short‑term load forecasting. The methodological rigor—clear mathematical formulation, thorough preprocessing, and transparent training pipeline—makes the work reproducible. Empirical results substantiate the claim that the proposed framework outperforms traditional neural‑network baselines in both accuracy and stability. While additional work is needed to generalize the model across diverse power systems and to address operational integration, the contribution represents a meaningful step toward leveraging deep learning for large‑scale, real‑time power system analytics.
