Neural Predictive Coding using Convolutional Neural Networks towards Unsupervised Learning of Speaker Characteristics

Learning speaker-specific features is vital in many applications like speaker recognition, diarization and speech recognition. This paper provides a novel approach, we term Neural Predictive Coding (NPC), to learn speaker-specific characteristics in …

Authors: Arindam Jati, Panayiotis Georgiou

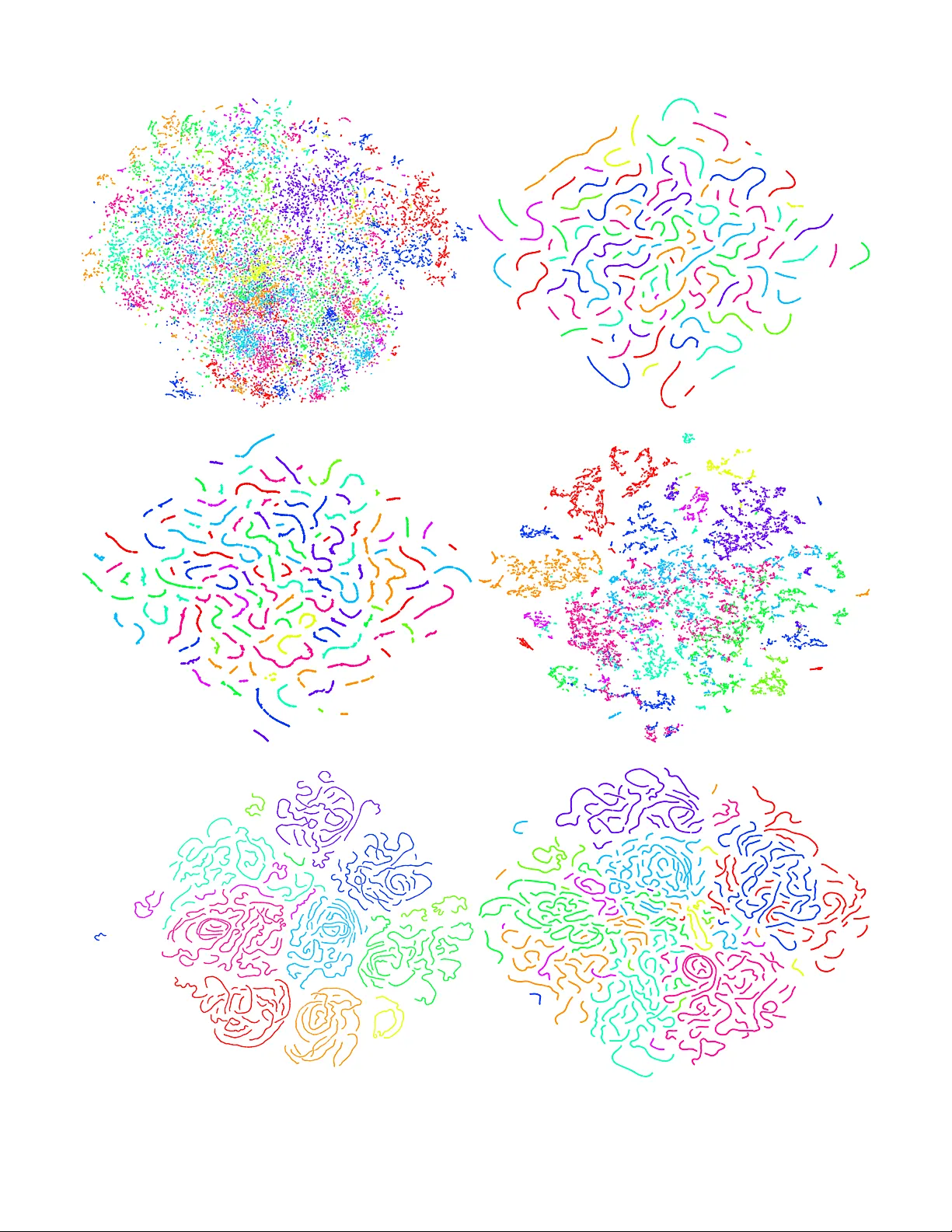

Arindam Jati, and Panayiotis Georgiou, Senior Member, IEEE

Abstract: Learning speaker-specific features is vital in many applications like speaker recognition, diarization and speech recognition. This paper provides a novel approach, which we term Neural Predictive Coding (NPC), to learn speaker-specific characteristics in a completely unsupervised manner from large amounts of unlabeled training data that even contain many non-speech events and multi-speaker audio streams. The NPC framework exploits the proposed short-term active-speaker stationarity hypothesis, which assumes that two temporally close short speech segments belong to the same speaker, and thus a common representation that can encode the commonalities of both segments should capture the vocal characteristics of that speaker. We train a convolutional deep siamese network to produce "speaker embeddings" by learning to separate 'same' vs 'different' speaker pairs, which are generated from an unlabeled dataset of audio streams. Two sets of experiments are done in different scenarios to evaluate the strength of NPC embeddings and compare with state-of-the-art in-domain supervised methods. First, two speaker identification experiments with different context lengths are performed in a scenario with comparatively limited within-speaker channel variability. NPC embeddings are found to perform the best in the short-duration experiment, and they provide complementary information to i-vectors for full-utterance experiments. Second, a large-scale speaker verification task having a wide range of within-speaker channel variability is adopted as an upper-bound experiment where comparisons are drawn with in-domain supervised methods.

Index Terms: Speaker-specific characteristics, unsupervised learning, Convolutional Neural Networks (CNN), siamese network, speaker recognition.
I. INTRODUCTION

Acoustic modeling of speaker characteristics is an important task for many speech-related applications. It is also a very challenging problem due to the highly complex information that the speech signal modulates, from lexical content to emotional and behavioral attributes [1], [2], and the multi-rate encoding of this information. A major step towards speaker modeling is to identify features that focus only on the speaker-specific characteristics of the speech signal. Learning these characteristics has various applications in speaker segmentation [3], diarization [4], verification [5], and recognition [6]. State-of-the-art methods for most of these applications use short-term acoustic features [7] like MFCC [8] or PLP [9] for signal parameterization. In spite of the effectiveness of the algorithms used for building speaker models [6] or clustering speech segments [4], sometimes these features fail to produce high between-speaker variability and low within-speaker variability [7]. This is because MFCCs contain a lot of supplementary information like phoneme characteristics, and they are frequently deployed in speech recognition [10].

A. Prior work

Significant research effort has gone into solving the above-mentioned discrepancies of short-term features by incorporating long-term or prosodic features [11] into existing systems. These features can specifically be used in speaker recognition or verification systems since they are calculated at utterance level [7]. Another way to tackle the problem is to calculate mathematical functionals or transformations on top of MFCC features to expand the context and project them onto a "speaker space" which is supposed to capture speaker-specific characteristics. One popular method [12] is to build a GMM-UBM [7] on training data and utilize MAP-adapted GMM supervectors [12] as fixed-dimensional representations of variable-length utterances.
Along this line of research, there has been ample effort in exploring different factor analysis techniques on the high-dimensional supervectors to estimate contributions of different latent factors like speaker- and channel-dependent variabilities [13]. Eigenvoice and eigenchannel methods were proposed by Kenny et al. [14] to separately determine the contributions of speaker and channel variabilities respectively. In 2007, Joint Factor Analysis (JFA) [15] was proposed to model speaker variabilities and compensate for channel variabilities, and it outperformed the former technique in capturing speaker characteristics.

Introduction of i-vectors: In 2011, Dehak et al. proposed i-vectors [16] for speaker verification. The i-vectors were inspired by JFA, but unlike JFA, the i-vector approach trains a unified model for speaker and channel variability. One inspiration for proposing the Total Variability Space [16] was the observation that the channel effects obtained by JFA also had speaker factors. The i-vectors have been used by many researchers for numerous applications including speaker recognition [16], [17], diarization [18], [19] and speaker adaptation during speech recognition [20] due to their state-of-the-art performance. However, the performance of i-vector systems tends to deteriorate as the utterance length decreases [21], especially when there is a mismatch between the lengths of training and test utterances. Also, i-vector modeling, similar to most factor analysis methods, is constrained by the GMM assumption, which might degrade the performance in some cases [7].

DNN-based methods in speaker characteristics learning: Recently, Deep Neural Network (DNN) [22] derived "speaker embeddings" [23] or bottleneck features [24] have been found to be very powerful for capturing speaker characteristics.
For example, in [25], [26] and [27], frame-level bottleneck features have been extracted using DNNs trained in a supervised fashion over a finite set of speakers, and aggregation techniques like GMM-UBM [12] or i-vectors have been used on top of the frame-level features for utterance-level speaker verification. Chen et al. [28], [29] developed a deep neural architecture and trained it for a frame-level speaker comparison task in a supervised way. They achieved promising results in speaker verification and segmentation tasks even when they evaluated their system on out-of-domain data [28]. In [30], the authors proposed an end-to-end text-independent speaker verification method using DNN embeddings. It uses a similar approach to generate the embeddings, but the utterance-level statistics are computed internally by a pooling layer in the DNN architecture. In more recent work [31], different combinations of Convolutional Neural Networks (CNN) and Recurrent Neural Networks (RNN) [22] have been exploited to find speaker embeddings using the triplet loss function, which minimizes intra-speaker distance and maximizes inter-speaker distance [31]. The model also incorporates a pooling and normalization layer to produce utterance-level speaker embeddings.

Need for unsupervised methods and existing works: In spite of the wide range of DNN variants, all of these need one or more annotated dataset(s) for supervised training. This limits the learning power of the methods, especially given the data-hungry needs of advanced neural network-based models. Supervised training can also limit robustness due to over-tuning to the specific training environment. This can cause degradation in performance if the testing condition is very different from that of the training. Moreover, transfer learning [32] of the supervised models to a new domain also needs labeled data.
This points to a desire and opportunity in employing unlabeled data and unsupervised methods for learning speaker embeddings. There have been a few efforts [33]–[35] in the past to employ neural networks for acoustic space division, but these works focused on speaker clustering and did not exploit short-term stationarity towards embedding learning. In [36], an unsupervised training scheme using convolutional deep belief networks was proposed for audio feature learning. The authors applied those features to phoneme, speaker, gender and music classification tasks. Although the training employed there was unsupervised, the proposed system for speaker classification was trained on the TIMIT dataset [37], where every utterance is guaranteed to come from a single speaker, and PCA whitening was applied to the spectrogram on a per-utterance basis. Moreover, performance of the system on out-of-domain data was not evaluated.

B. Proposed work

In this paper, we propose a completely unsupervised method for learning features having speaker-specific characteristics from unlabeled audio streams that contain many non-speech events (music, noise, and anything else available on YouTube). We term the general learning of signal characteristics via the short-term stationarity assumption Neural Predictive Coding (NPC), since it was inspired by the idea of predicting the present value of a signal from a past context as done in Linear Predictive Coding (LPC) [38]. The short-term stationarity assumption can take place, according to the frame size, along different characteristics. For example, we can assume that the behaviors expressed in the signal will be mostly stationary within a window of a few seconds, as we did in [39]. In this work we assume that any potentially active speaker will be mostly stationary within a short window: the active speaker is unlikely to change multiple times within a couple of seconds.
LPC predicts future values from past data via a filter described by its coefficients. NPC can predict future values from past data via a neural network. The embedding inside the NPC neural network can serve as a feature. Moreover, while predicting future values from the past, the NPC model can incorporate knowledge learned from big, unlabeled datasets.

The short-term speaker stationarity assumption was exploited in our previous work [40] via an encoder-decoder model to predict future values from the past through a bottleneck layer. The training involved in that work was able to see past and future values of the signal only from the 'same speaker', assuming speaker stationarity. In contrast, the currently employed siamese architecture [41], [42] lets the model encounter pairs of speech segments and compare whether they come from the same speaker or from two different speakers, based on unlabeled data via the short-term stationarity assumption.

We perform experiments under different scenarios and for different applications to explore the ability of the proposed method to learn speaker characteristics. Moreover, the NPC training is done on out-of-domain data, and its performance is compared with i-vectors and recently introduced x-vectors [43] trained on in-domain data. Note that the NPC training needs no labels at all, not even speaker-homogeneous regions. For that reason, we do not expect NPC-derived features to beat in-domain supervised algorithms, but rather present this as an upper-bound aim. The comparison reveals interesting directions that can arise through further introduction of context. For example, if the algorithm employs a longer same-speaker context (than the 2 s assumed by this work), similar to i-vector systems, then it can allow for variable-length features and increased channel normalization.
Below are the major aims of the proposed work towards establishing a robust speaker embedding:

1) Training should require no labels of any kind (no speaker ID labels, or speaker-homogeneous utterances for training);
2) System should be highly scalable, relying on the plentiful availability of unlabeled data;
3) Embedding should represent short-term characteristics and be suitable as an alternative to MFCCs in an aggregation system like [25] or [27]; and,
4) The training scheme should be readily applicable for unsupervised transfer learning.

The rest of the paper is organized as follows. The NPC methodology is described in Section II. Section III provides details about the evaluation methodology and the required experimental setup. Results are tabulated and discussed in Section IV. A qualitative analysis and future scope are provided in Section V. Finally, conclusions are drawn in Section VI.

II. NEURAL PREDICTIVE CODING (NPC) OF SPEAKER CHARACTERISTICS

Our ultimate goal is to learn a non-linear mapping (the employed DNN or part of it) that can project a small window of speech from any speaker to a lower-dimensional embedding space where it will retain the maximum possible speaker-specific characteristics and reject other information as much as possible.

We expect that, given the unsupervised training paradigm we employ, the embedding may also capture additional information, mainly channel characteristics; we intend to address that in future work, as further discussed in Section V.

A. Contrastive sample creation

NPC learns to extract speaker characteristics in a contrastive way, i.e., by distinguishing between different speakers. During the training phase, it possesses no information about the actual speaker identities, but only learns whether two input audio chunks are generated from the same speaker or not. We provide the NPC model two kinds of samples [41].
The first kind consists of pairs of speech segments that come from the same speaker, called genuine pairs. The second kind consists of speech segments from two different speakers, called impostor pairs. This approach has been used in the past for numerous applications [41], [42], [44], but all of them needed labeled datasets. The challenge is how to create such samples when we do not have labeled acoustic data. We exploit the characteristics of speaker turn-taking that result in short-term speaker stationarity [40]. The hypothesis of short-term speaker stationarity is based on the notion that, given a long observation of human interaction, the probability of fast speaker changes will be at the tails of the distribution. In short: it is very unlikely to have extremely fast speaker changes (for example, every 1 second). So, if we take pairs of consecutive short segments from such a long audio stream, most of the pairs will contain two audio segments from the same speaker (genuine pairs). There will definitely be some pairs containing segments from two different speakers, but the number of such pairs will probably be small compared to the total number of genuine pairs.

To find the impostor pairs, we choose two random segments from two different audio streams in our unsupervised dataset. Again, intuitively, the probability of finding the same speaker in an impostor pair is relatively lower than the probability of getting two different speakers in it, provided a sufficiently large unsupervised dataset. For example, when sampling two random YouTube videos, the likelihood of getting the same speaker in both is very low.

The left part of Fig. 1 shows this contrastive sample creation process. Audio stream 1 and audio stream i (for any i between 2 and N, where N is the number of audio streams in the dataset) are shown here. Assume the vertical red lines denote (unknown) speaker change points.
$(w_1, w_2)$ is a window pair where each of the two windows has $d$ feature frames. This window pair is moved over the audio streams with a shift of $\Delta$ to get the genuine pairs. For every $w_1$, we randomly pick a window $w'_2$ of the same length from a different audio stream to get an impostor pair. All these samples are then fed into the siamese DNN for binary classification of whether an input pair is genuine or impostor.

A siamese neural network (please see the right part of Fig. 1), first introduced for signature verification [45], consists of two identical twin networks with shared weights that are joined at the top by some energy function [41], [42], [44]. Generally, siamese networks are provided with two inputs and trained by minimizing the energy function, which is a predefined metric between the highest-level feature transformations of both inputs. The weight sharing ensures that similar inputs are transformed into embeddings in close proximity to each other. The inherent structure of a siamese network enables us to learn similar or dissimilar input pairs with discriminative energy functions [41], [42]. Similar to [44], we use an $L_1$ distance loss between the highest-level outputs of the siamese twin networks for the two inputs.

We first describe in Section II-B the CNN that processes the speech spectrogram to automatically learn features to generate the embeddings. Next, in Section II-C, we discuss the top part of the neural network of Fig. 1, which involves comparing the two embeddings and deriving the final output and error for back-propagation.

B. Siamese Convolutional layers

The effectiveness of CNNs has been well established in the computer vision field [46], [47]. Recently, speech scientists have also been applying CNNs to different challenging tasks like speech recognition [48], [49], speaker recognition [31], [50], [51], large-scale audio classification [52], etc.
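
The sampling procedure of Section II-A can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the pipeline used for the reported experiments: the function name `make_contrastive_pairs`, the in-memory list of feature streams, and the uniform random choice of the other stream are hypothetical, and the defaults for `d` and `delta` simply mirror the 100 and 200 frames quoted in Section III-D.

```python
import numpy as np

def make_contrastive_pairs(streams, d=100, delta=200, seed=0):
    """Build (w1, w2, label) samples from unlabeled feature streams.

    streams: list of arrays, each of shape (num_frames, num_features).
    Label 0 marks an assumed genuine pair, label 1 an assumed impostor pair.
    No speaker labels are used anywhere (hypothetical helper, for illustration).
    """
    rng = np.random.default_rng(seed)
    samples = []
    for idx, stream in enumerate(streams):
        # Streams other than the current one that can supply a d-frame window.
        candidates = [j for j in range(len(streams))
                      if j != idx and len(streams[j]) >= d]
        # Slide the window pair (w1, w2) over the stream with a shift of delta.
        for start in range(0, len(stream) - 2 * d + 1, delta):
            w1 = stream[start:start + d]
            w2 = stream[start + d:start + 2 * d]  # next window, same stream
            samples.append((w1, w2, 0))

            if not candidates:
                continue
            # Impostor: pair w1 with a random window from a different stream.
            other = streams[rng.choice(candidates)]
            offset = rng.integers(0, len(other) - d + 1)
            samples.append((w1, other[offset:offset + d], 1))
    return samples
```

The labels produced this way are only assumed: a genuine pair that straddles a speaker change, or an impostor pair that happens to share a speaker, is label noise that the training has to tolerate.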
The general benefits of using CNNs can be found in [22] and in the above papers. In our work, the inspiration to use CNNs comes from the need to explore spectral and temporal contexts together through 2D convolution over the mel-spectrogram features (please see Section III-D for more information). The benefits of such a 2D convolution have also been shown with more traditional signal processing feature sets such as Gabor features [53].

Our siamese network (one of the identical twins), built using multiple CNN layers and one dense layer at the highest level, is shown in Fig. 2. We gradually reduce the kernel size from 7 × 7 to 5 × 5, 4 × 4, and 3 × 3. We have used 2 × 2 max-pooling layers after every two convolutional layers. The size of stride for all convolution and max-pooling operations has been chosen to be 1. We have used Leaky ReLU nonlinearity [54] after every convolutional or fully connected layer (omitted from Fig. 2 for clearer visualization). We have applied batch normalization [55] after every layer to reduce the "internal covariate shift" [55] of the network. It helped the network to avoid overfitting and converge faster without the need for dropout layers [56]. After the last convolutional layer, we get 32 feature maps, each of size 20 × 5. We flatten these maps to get a 3200-dimensional vector, which is connected to the final 512-dimensional NPC embedding through a fully connected layer. The embeddings are obtained before applying the Leaky ReLU non-linearity¹.

¹ Following standard convention for extracting embeddings from DNNs, such as in [57].

[Fig. 1 diagram. Left: unlabeled dataset of audio streams (Audio 1, ..., Audio i) with a window pair shifted by ∆, yielding a genuine pair (w_1, w_2) and an impostor pair (w_1, w'_2). Right: Network 1 and Network 2 with shared weights (Section II-B) produce Embedding 1 and Embedding 2, followed by the L1 distance vector, FC layer, and binary classification with 0 = genuine pair, 1 = impostor pair (Section II-C).]

Fig. 1: NPC training scheme utilizing the short-term speaker stationarity hypothesis. Left: Contrastive sample creation from an unlabeled dataset of audio streams. Genuine and impostor pairs are created from the unlabeled dataset as explained in Section II-A. Right: The siamese network training method. The genuine and impostor pairs are fed into it for binary classification. "FC" denotes a Fully Connected hidden layer in the DNN. Note that the siamese convolutional layers are discussed in Section II-B, and the derivation of the loss functions by comparing the siamese embeddings is shown in Section II-C.

C. Comparing Siamese embeddings: Loss functions

Let $f(x_1)$ and $f(x_2)$ be the highest-level outputs of the siamese twin networks for inputs $x_1$ and $x_2$ (in other words, $(x_1, x_2)$ is one contrastive sample obtained from the window pair $(w_1, w_2)$ or $(w_1, w'_2)$). We will use this transformation $f(x)$ as our "embedding" for any input $x$ (please see the right part of Fig. 1). Here $x$ is a matrix of size $d \times m$, and it denotes $d$ frames of $m$-dimensional MFCC feature vectors in window $w$. Similarly, $x_i$ denotes the feature frames in window $w_i$ for $i = 1, 2$. We have deployed two different types of loss functions for training the NPC model. They are described below.

1) Cross entropy loss: Inspired by [44], the loss function is designed such that it decreases the weighted $L_1$ distance between the embeddings $f(x_1)$ and $f(x_2)$ if $x_1$ and $x_2$ are from a genuine pair, and increases it if they are from an impostor pair. The "$L_1$ distance vector" (Fig. 1, right) is obtained by calculating the element-wise absolute difference between the two embedding vectors $f(x_1)$ and $f(x_2)$ and is given by:

$$L(x_1, x_2) = |f(x_1) - f(x_2)|. \tag{1}$$

We connect $L(x_1, x_2)$ to two outputs $g_i(x_1, x_2)$ using a fully connected layer:

$$g_i(x_1, x_2) = \sum_{k=1}^{D} w_{i,k} \times |f(x_1)_k - f(x_2)_k| + b_i \tag{2}$$

for $i = 1, 2$. Here, $f(x_1)_k$ and $f(x_2)_k$ are the $k$th elements of the $f(x_1)$ and $f(x_2)$ vectors respectively, and $D$ is the length of those vectors (so $D$ is the embedding dimension). The $w_{i,k}$'s and $b_i$'s are the weights and bias for the $i$th output. Note that these weights and biases affect only the binary classifier, and they are not part of the siamese network. A softmax layer produces the final probabilities:

$$p_i(x_1, x_2) = s(g_i(x_1, x_2)) \quad \text{for } i = 1, 2. \tag{3}$$

Here $s(\cdot)$ is the softmax function given by

$$s(g_i(x_1, x_2)) = \frac{e^{g_i(x_1, x_2)}}{e^{g_1(x_1, x_2)} + e^{g_2(x_1, x_2)}} \quad \text{for } i = 1, 2. \tag{4}$$

The network in Fig. 1 is provided with the genuine and impostor pairs as explained in Section II-A. We use cross entropy loss here. It is given by

$$e(x_1, x_2) = -\mathcal{I}(y(x_1, x_2) = 0) \log(p_1(x_1, x_2)) - \mathcal{I}(y(x_1, x_2) = 1) \log(p_2(x_1, x_2)) \tag{5}$$

where $\mathcal{I}(\cdot)$ is the indicator function defined as:

$$\mathcal{I}(t) = \begin{cases} 1, & \text{if } t \text{ is true} \\ 0, & \text{if } t \text{ is false} \end{cases}$$

and $y(x_1, x_2)$ is the true label for the sample $(x_1, x_2)$, defined as:

$$y(x_1, x_2) = \begin{cases} 0, & \text{if } (x_1, x_2) \text{ is a genuine pair} \\ 1, & \text{if } (x_1, x_2) \text{ is an impostor pair.} \end{cases} \tag{6}$$

Using Equation 6, we can write the error as

$$e(x_1, x_2) = -(1 - y(x_1, x_2)) \log(p_1(x_1, x_2)) - y(x_1, x_2) \log(p_2(x_1, x_2)). \tag{7}$$

[Fig. 2 diagram: input of MFCC features over time (frames), then 7 × 7 conv → 64@94 × 34, 5 × 5 conv → 64@90 × 30, 2 × 2 maxpool → 64@45 × 15, 4 × 4 conv → 32@42 × 12, 3 × 3 conv → 32@40 × 10, 2 × 2 maxpool → 32@20 × 5, flatten → 3200, FC → 512.]

Fig. 2: The DNN architecture employed in each of the siamese twins. All the weights are shared between the twins. The kernel sizes are denoted under the red squares. 2 × 2 max-pooling is used as shown by yellow squares. All the feature maps are denoted as N@x × y, where N = number of feature maps and x × y = size of each feature map. Dimension of the speaker embedding is 512. "FC" = Fully Connected layer.

TABLE I: NPC Training Datasets

| Name of the dataset | Size (hours) | Number of samples |
|---|---|---|
| Tedlium | 100 | 358K |
| Tedlium-Mix | 110 | 395K |
| YoUSCTube | 584 | 2.1M |

2) Cosine embedding loss: We also analyze the performance of the network when we directly minimize a contrastive loss function between the embeddings, so there is no need to add an extra fully connected layer at the end. The employed cosine embedding loss is defined below.

$$L_{\cos}(x_1, x_2) = \begin{cases} 1 - C(f(x_1), f(x_2)), & \text{if } y(x_1, x_2) = 0 \\ C(f(x_1), f(x_2)), & \text{if } y(x_1, x_2) = 1 \end{cases}$$

Here $C(f(x_1), f(x_2))$ is the cosine similarity between $f(x_1)$ and $f(x_2)$, defined as

$$C(f(x_1), f(x_2)) = \frac{f(x_1) \cdot f(x_2)}{\|f(x_1)\|_2 \, \|f(x_2)\|_2},$$

where $\|\cdot\|_2$ denotes the $L_2$ norm. In Section IV, performances of the two types of loss functions will be analyzed through experimental evidence.

D. Extracting NPC embeddings for test audio

Once the DNN model is trained, we use it for extracting speaker embeddings from any test audio stream. As discussed in Section II-C, the transformation achieved by the siamese network on an input segment $x$ of length $d$ frames is given by $f(x)$. We use only this siamese part of the network to transform a sequence of MFCC frames of any speech segment into NPC embeddings by using a sliding window $w$ of $d$ frames and shifting it by 1 frame along the entire sequence.

III. EVALUATION METHODOLOGY

The nature of the proposed method introduces a great challenge in its evaluation. All existing speaker identification methods employ some level of supervision. For example, x-vector systems [43] employ data with complete speaker labels, while i-vector systems [16] require labeling of speaker-homogeneous regions.
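
For reference, both training losses of Section II-C fit in a short NumPy sketch. It evaluates a single pair only and is meant to make Equations (1) to (7) and the cosine embedding loss concrete. The function names, and the layout of the classifier weights as a 2 × D array `W` with a bias vector `b`, are assumptions made for illustration; subtracting the maximum inside the softmax is a standard numerical safeguard rather than something the text prescribes.

```python
import numpy as np

def cross_entropy_pair_loss(f1, f2, y, W, b):
    """Weighted L1 distance -> 2-way softmax -> cross entropy, Eqs. (1)-(7).

    f1, f2: embeddings of shape (D,); y: 0 (genuine) or 1 (impostor).
    W: classifier weights of shape (2, D); b: biases of shape (2,).
    """
    l1_vec = np.abs(f1 - f2)            # Eq. (1): element-wise |f(x1) - f(x2)|
    g = W @ l1_vec + b                  # Eq. (2): two classifier outputs
    g = g - g.max()                     # numerical safeguard, softmax unchanged
    p = np.exp(g) / np.exp(g).sum()     # Eqs. (3)-(4): softmax probabilities
    return -(1 - y) * np.log(p[0]) - y * np.log(p[1])   # Eq. (7)

def cosine_embedding_loss(f1, f2, y):
    """Cosine embedding loss of Section II-C2 for a single pair."""
    c = f1 @ f2 / (np.linalg.norm(f1) * np.linalg.norm(f2))
    return 1.0 - c if y == 0 else c
```

With all-zero `W` and `b` the classifier is uninformative and the cross entropy equals log 2 for either label, which is a convenient sanity check; for the cosine loss, identical embeddings give 0 for a genuine pair and 1 for an impostor pair.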
In our proposed work we intend to establish a low-level speaker-specific feature, on which subsequent supervised methods or layers can operate. Given the above evaluation challenge, we perform two sets of comparisons with existing methods:

1) Speaker identification evaluation: Speaker identification (i.e., closed-set multi-class speaker classification) experiments are performed at different context lengths. In that case we compare with other low-level features such as MFCCs and statistics of MFCCs, as well as an i-vector system.
2) Upper-bound comparison: A large-scale speaker verification experiment is done to set upper bounds on performance by in-domain supervised methods. We present this to observe the margin of improvement available to the proposed out-of-domain unsupervised method via additional higher-level integration methods or layers. We note that our method only integrates 1-second-level information, while the i-vector and x-vector upper-bound methods use all the available data.

The experimental setting for NPC training and the above experiments is described below.

A. NPC Training Datasets

Table I shows the training datasets along with their approximate total durations and the number of contrastive samples created from each dataset. We train three different models individually on these datasets, and we refer to every trained model by the name of the dataset used for training along with the NPC prefix (for example, the NPC model trained on YoUSCTube data will be called NPC YoUSCTube).

1) Tedlium dataset: The Tedlium dataset is built from the Tedlium training corpus [58]. It originally contained 666 unique speakers, but we have removed the 19 speakers who are also present in the Tedlium development and test sets (since the Tedlium dataset was originally developed for speech recognition purposes, it has speaker overlap between train and dev/test sets).
The contrastive samples created from the Tedlium dataset are less noisy (compared to the case for YoUSCTube data, as will be discussed next), because most of the audio streams in the Tedlium data are from a single speaker talking in the same environment for a long time (although there is some noise, for example, speech of the audience, clapping, etc.). The reason for employing this dataset is two-fold: First, the model trained on the Tedlium data will provide a comparison with the models trained on the Tedlium-Mix and YoUSCTube datasets for a validation of the short-term speaker stationarity hypothesis. Second, since the test set of the speaker identification experiment will be from the Tedlium test data, this will help demonstrate the difference in performance for in-domain and out-of-domain evaluation.

2) Tedlium-Mix dataset: The Tedlium-Mix dataset is created mainly to validate the short-term speaker stationarity hypothesis (please see Section IV-B). We create the Tedlium-Mix dataset by creating artificial dialogs through randomly concatenating utterances. Tedlium is annotated, so we know the utterance boundaries. We thus simulate a dialog that has a random speaker every other utterance of the main speaker. For every audio stream, we reject half of the total utterances, and between every two utterances we concatenate a randomly chosen utterance from a randomly chosen speaker (i.e., $S, R_1, S, R_2, S, R_3, \ldots$, where the $S$'s are the utterances of the main speaker and $R_i$ (for $i = 1, 2, 3, \ldots$) is a random utterance from a randomly chosen speaker, i.e., a random utterance from another Ted recording). In this way we create the Tedlium-Mix dataset, which has a speaker change after every utterance for every audio stream. It also has almost the same size as the Tedlium dataset.

3) YoUSCTube dataset: A large amount of various types of audio data has been collected from YouTube to create the YoUSCTube dataset.
We have chosen YouTube for this purpose because of its virtually unlimited supply of data from diverse environments. The dataset has multilingual data including English, Spanish, Hindi and Telugu from heterogeneous acoustic conditions like monologues, multi-speaker conversations, movie clips with background music and noise, outdoor discussions, etc.

4) Validation data: The Tedlium development set (8 unique speakers) has been used as validation data for all training cases. We used the utterance start and end time stamps and the speaker IDs provided in the transcripts of the Tedlium dataset to create the validation set so that it does not have any noisy labels.

[Fig. 3 plot: binary classification accuracy (%) versus number of epochs, with train and validation curves for NPC Tedlium, NPC Tedlium-Mix, and NPC YoUSCTube; the marked best validation accuracies are 90.19, 90.48, and 92.16.]

Fig. 3: Binary classification accuracies of classifying genuine or impostor pairs for NPC models trained on the Tedlium, Tedlium-Mix, and YoUSCTube datasets. Both training and validation accuracies are shown. The best validation accuracies for all the models are marked by big stars (*).

B. Data for speaker identification experiment

The Tedlium test set (11 unique speakers from 11 different Ted recordings) has been employed for the speaker identification experiment. Similar to the development dataset, it has the start and end time of every utterance for every speaker as well as the speaker IDs. We have extracted the utterances from every speaker, and all utterances of a particular speaker have been assigned the corresponding speaker ID. Those have been used for creating the experimental scenarios for speaker classification (Section IV-C2 and Section IV-C4).
Similar to the validation set, the labels of this dataset are very clean since they are created using the human-labeled speaker IDs.

C. Data for speaker verification experiment

A recently released large speaker verification corpus, VoxCeleb (version 1) [59], is employed for the speaker verification experiment. It has a total of 1251 unique speakers with ∼154K utterances at a 16 kHz sample rate. The average number of sessions per speaker is 18. We use the default development and test split provided with the dataset and mentioned in [59].

D. Feature and model parameters

We employ 40-dimensional MFCC features computed from 16 kHz audio with a 25 ms window and 10 ms shift using the Kaldi toolkit [60]. We choose $d = 100$ frames (1 s) and $\Delta = 200$ frames (2 s). Therefore, each window is a 100 × 40 matrix, and we feed this to the first CNN layer of our network (Fig. 2). The employed model has a total of 1.8M parameters, and it has been trained using the RMSProp optimizer [61] with a learning rate of $10^{-4}$ and a weight decay of $10^{-6}$. The held-out validation set (Section III-A4) has been used for model selection.

IV. EXPERIMENTAL RESULTS

A. Convergence curves

Fig. 3 shows the convergence curves in terms of binary classification accuracies of classifying genuine or impostor pairs for training the DNN model separately on different datasets, along with the corresponding validation accuracies. The development set for calculating the validation accuracy is the same for all the training sets, and it doesn't contain any noisy samples. In contrast, our training set is noisy since it is unsupervised and relies on short-term stationarity to assign same/different speaker pairs. We can see from Fig. 3 that NPC Tedlium reaches almost 100% training accuracy, but NPC Tedlium-Mix converges at a lower training accuracy, as expected.
The lower training accuracy is due to the larger portion of noisy samples in the Tedlium-Mix dataset, which arise from the artificially introduced fast speaker changes combined with the simultaneous hypothesis of short-term speaker stationarity (see footnote 2). However, this doesn't hurt the validation accuracy on the development set, which is both distinct from the training set and correctly labeled: we obtain 90.19% and 90.48% for the NPC Tedlium and NPC Tedlium-Mix trained models respectively. We believe this is because the large amount of correct data compensates for the smaller amount of mislabeled pairs, so the model correctly learns not to label some of the assumed same-speaker pairs as same-speaker when there is a speaker change that we introduced via our mixing.

The NPC YoUSCTube model reaches much better training accuracy than the NPC Tedlium-Mix model even with fewer epochs. This points both to increased robustness due to the increased data variability and to speaker changes in real dialogs not being as fast as we simulated in the Tedlium-Mix dataset. It is interesting to see that the NPC YoUSCTube model achieves a little better validation accuracy (92.16%) than the other two models even when the training dataset had no explicit domain overlap with the validation data. We think this is because of the huge size (approximately 6 times larger than the Tedlium dataset) and widely varying types of acoustic environments of the YoUSCTube dataset.

B. Validation of the short-term speaker stationarity hypothesis

Here we analyze the validation accuracies obtained by the NPC models trained separately on the Tedlium and Tedlium-Mix datasets. From Fig. 3 it is quite clear that both models could achieve similar validation accuracies, although the Tedlium-Mix dataset has audio streams containing speaker changes at every utterance and the Tedlium dataset contains mostly single-speaker audio streams.
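The scale of the label noise in Tedlium-Mix can be checked with quick arithmetic from the corpus figures given in footnote 2 (54,778 speaker change points in 115 hours of audio). The sketch below is only one reading of that footnote: it assumes uniformly spread change points and that roughly one 1 s context per change point straddles a speaker change. The authors do not spell out their calculation, so the variable names and the model are assumptions made here.

```python
# Back-of-the-envelope check; the uniform-spacing model is an assumption.
TOTAL_HOURS = 115          # audio duration of Tedlium-Mix (footnote 2)
CHANGE_POINTS = 54_778     # speaker change points (footnote 2)
CONTEXT_S = 1.0            # d = 100 frames = 1 s

total_seconds = TOTAL_HOURS * 3600
mean_turn_s = total_seconds / CHANGE_POINTS

# With uniformly spread changes, about one 1 s context per change point
# straddles a speaker change; the remaining contexts stay within one speaker.
affected_fraction = CHANGE_POINTS * CONTEXT_S / total_seconds
clean_fraction = 1.0 - affected_fraction

print(f"mean turn length: {mean_turn_s:.2f} s")
print(f"contexts within one speaker: {clean_fraction:.1%}")
```

Under these assumptions the mean turn lasts about 7.6 s and roughly 87% of the 1 s contexts fall within a single speaker, which agrees with the upper bound quoted in the footnote.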
The two models reach similar validation accuracy because, even though there are frequent speaker turns in the Tedlium-Mix dataset, the short length of context (d = 100 frames = 1 s) chosen to learn the speaker characteristics ensures that the total number of correct same-speaker pairs dominates the falsely-labeled same-speaker pairs. Therefore the sudden speaker changes are of little impact and do not deteriorate the performance of the neural network on the development set. This result validates the short-term speaker stationarity hypothesis.

Footnote 2: The corpus is created by mixing turns. This means that there are 54,778 speaker change points in the 115 hours of audio. However in this case we assumed that there are no speaker changes in consecutive frames. If the change points were uniformly distributed then that would result in an upper-bound of 87%.

C. Experiments: Speaker identification evaluation

1) Frame-level Embedding visualization: Visualization of high dimensional data is vital in many scenarios because it can reveal the inherent structure of the data points. For speaker characteristics learning, visualizing the employed features can manifest the clusters formed around different speakers and thus demonstrate the efficacy of the features. We use t-SNE visualization [62] for this purpose. We compare the following features (the terms in boldface show the names we will use to call the features).

1) **MFCC**: Raw MFCC features.
2) **MFCC stats**: This is generated by moving a sliding window of 1 s along the raw MFCC features with a shift of 1 frame (10 ms) and taking the statistics (mean and standard deviation in the window) to produce a new feature stream. This is done for a fair comparison of MFCC and the embeddings (since the embeddings are generated using 1 s context).
3) **NPC YoUSCTube Cross Entropy**: Embeddings extracted with NPC YoUSCTube model using cross entropy loss.
4) **NPC Tedlium Cross Entropy**: Embeddings extracted with NPC Tedlium model using cross entropy loss.
5) **NPC YoUSCTube Cosine**: Embeddings extracted with NPC YoUSCTube model using cosine embedding loss.
6) **i-vector**: 400 dimensional i-vectors extracted independently every 1 s using a sliding window with 10 ms shift. The i-vector system (Kaldi VoxCeleb v1 recipe) was trained on the VoxCeleb dataset [59] (16 kHz audio). It is not possible to train an i-vector system on YoUSCTube since it contains no labels on speaker-homogeneous regions.

Fig. 4 shows the 2 dimensional t-SNE visualizations of the frames (at 10 ms resolution) of the above features extracted from the Tedlium test dataset containing 11 unique speakers. For better visualization of the data, we chose only 2 utterances from every speaker, and the feature frames from a total of 22 utterances become our input dataset for the t-SNE algorithm.

From Fig. 4 we can see that the raw MFCC features are very noisy, but the inherent smoothing applied to compute MFCC stats features helps the features of the same speaker to come closer. However we notice that although some same-speaker features cluster in lines, these lines are far apart in the space, which denotes that the MFCC features capture additional information. For example we see that the speaker denoted with green occupies both the very left and very right parts of the t-SNE space. The i-vector plot looks similar to the MFCC stats and does not cluster the speakers very well. This is consistent with existing literature [21] that showed that i-vectors do not perform well for short utterances especially when the training utterances are comparatively longer. In Section IV-C4, we will see that the utterance-level i-vectors perform much better for speaker classification.

The NPC YoUSCTube Cosine embeddings underperform the cross entropy-based methods, possibly because of poorer convergence as we observed during training.
The NPC YoUSCTube Cosine embeddings are also noisier than MFCC stats and i-vectors, indicating that even a little change in the input (just 10 ms of extra audio) perturbs the embedding space, which might not be desirable.

[Figure 4 panels: (a) MFCC, (b) MFCC stats, (c) i-vector, (d) NPC YoUSCTube Cosine, (e) NPC YoUSCTube Cross Entropy, (f) NPC Tedlium Cross Entropy.]

Fig. 4: t-SNE visualizations of the frames of different features for the Tedlium test data containing 11 speakers (2 utterances per speaker). Different colors represent different speakers.

TABLE II: Frame-level speaker classification accuracies (%) of different features with kNN classifier (k=1). All features below are trained on unlabeled data except i-vector which requires speaker-homogeneous files. The table has two blocks, one for the Tedlium test set and one for the Tedlium development set.

First block:

| # of enrollment utterances | MFCC | MFCC stats | NPC YoUSCTube Cross Entropy | NPC Tedlium Cross Entropy | NPC YoUSCTube Cosine | i-vector VoxCeleb |
|---|---|---|---|---|---|---|
| 1 | 48.75 | 72.70 | 79.05 | 80.25 | 62.97 | 70.26 |
| 2 | 54.12 | 81.33 | 87.26 | 88.32 | 70.04 | 79.07 |
| 3 | 57.05 | 84.11 | 89.56 | 89.62 | 73.77 | 82.58 |
| 5 | 61.36 | 88.85 | 92.34 | 92.00 | 78.59 | 87.80 |
| 8 | 63.38 | 89.73 | 91.62 | 91.33 | 79.07 | 88.91 |
| 10 | 64.13 | 90.17 | 92.42 | 91.88 | 80.84 | 89.12 |

Second block:

| # of enrollment utterances | MFCC | MFCC stats | NPC YoUSCTube Cross Entropy | NPC Tedlium Cross Entropy | NPC YoUSCTube Cosine | i-vector VoxCeleb |
|---|---|---|---|---|---|---|
| 1 | 38.02 | 70.45 | 75.62 | 76.40 | 56.30 | 64.02 |
| 2 | 44.08 | 79.43 | 83.75 | 83.21 | 58.18 | 74.50 |
| 3 | 46.39 | 81.98 | 85.06 | 84.79 | 59.05 | 76.76 |
| 5 | 50.24 | 86.20 | 89.12 | 88.65 | 62.18 | 81.65 |
| 8 | 51.56 | 87.70 | 89.66 | 89.07 | 64.33 | 84.21 |
| 10 | 52.65 | 88.46 | 90.34 | 89.94 | 65.79 | 88.13 |

The NPC YoUSCTube Cross Entropy and NPC Tedlium Cross Entropy embeddings provide much better distinction between different speaker clusters. Moreover, they also provide much better cluster compactness compared to the MFCC and i-vector features. Among the NPC embeddings, NPC YoUSCTube Cross Entropy features provide possibly the best t-SNE visualization.
The NPC YoUSCTube Cross Entropy embeddings even produce better clusters than NPC Tedlium Cross Entropy, although the latter is trained on in-domain data. The larger size of the YoUSCTube dataset might be the reason behind this observation.

2) Frame-level speaker identification: We perform frame-level speaker identification experiments on the Tedlium development set (8 speakers) and the Tedlium test set (11 speakers). By frame-level classification we mean that every frame in the utterance is independently classified as to its speaker ID. The reason for evaluating with frame-level speaker classification is that better frame-level performance conveys the inherent strength of the system to derive short-term features that carry speaker-specific characteristics. It also shows the possibility of replacing MFCCs with the proposed embeddings by incorporating them in systems such as [25] and [27].

Table II shows a detailed comparison between the 6 different features described in Section IV-C1 in terms of frame-wise speaker classification accuracies. We have tabulated the accuracies for different numbers of enrollment utterances (in other words, training utterances for the speaker ID classifier) per speaker. We have used a kNN classifier (with k=1) for speaker classification. The reason for using such a naive classifier is to reveal the true potential of the features, and not to harness the strength of the classifier. We have repeated each experiment 5 times and the average accuracies have been reported here. Each time we have held out 5 random utterances from each speaker for testing. The same seen (enrollment) and test utterances have been used for all types of features and in all cases the test and enrollment sets are distinct.

From Table II we can see that MFCC stats perform much better than raw MFCC features. We think the reason is that the raw features are much noisier than the MFCC stats features, which benefit from the implicit smoothing performed during the statistics computation.
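The frame-level protocol described above (every test frame classified independently by its single nearest enrollment frame) can be sketched as follows. This is a minimal illustration, not the authors' code: the function names, the toy 2-dimensional features, and the speaker labels are hypothetical, and Euclidean distance is used for the nearest-neighbor search.

```python
import math


def nearest_neighbor_label(frame, enroll_frames, enroll_labels):
    """Return the label of the enrollment frame closest in Euclidean distance."""
    best_index = min(
        range(len(enroll_frames)),
        key=lambda i: math.dist(frame, enroll_frames[i]),
    )
    return enroll_labels[best_index]


def frame_level_accuracy(test_frames, test_labels, enroll_frames, enroll_labels):
    """Classify every test frame independently; return accuracy in percent."""
    correct = sum(
        nearest_neighbor_label(frame, enroll_frames, enroll_labels) == label
        for frame, label in zip(test_frames, test_labels)
    )
    return 100.0 * correct / len(test_frames)


# Toy 2-D "features" for two hypothetical speakers (not real data).
enroll_frames = [(0.0, 0.1), (0.2, 0.0), (1.0, 1.1), (0.9, 1.0)]
enroll_labels = ["spk_a", "spk_a", "spk_b", "spk_b"]
test_frames = [(0.1, 0.1), (1.1, 0.9), (0.4, 0.3)]
test_labels = ["spk_a", "spk_b", "spk_b"]

accuracy = frame_level_accuracy(test_frames, test_labels, enroll_frames, enroll_labels)
print(f"frame-level accuracy: {accuracy:.2f}%")
```

In this toy case the third test frame lies closer to the `spk_a` enrollment frames than to its own speaker, so two of the three frames are classified correctly. Because 1-NN has no trainable parameters, any accuracy difference between feature types comes from the features themselves, which is the stated motivation for the choice.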
The NPC YoUSCTube and NPC Tedlium models with cross entropy loss perform pretty similarly (for test data, NPC YoUSCTube even performs better) even though the former is trained on out-of-domain data. This highlights the benefits and possibilities of employing out-of-domain unsupervised learning using publicly available data. NPC YoUSCTube Cosine doesn't perform well compared to other NPC embeddings. The i-vectors perform worse than NPC embeddings and MFCC stats for frame-level classification due to the reasons discussed in Section IV-C1 and as reported by [21].

3) Analyzing network weights: We have seen in the previous experiments that the embeddings learned using the cross entropy loss performed better than those learned through minimizing the cosine loss. Here we analyze the learned weights in the last fully connected (FC) layer (size = 512 × 2) in the network that uses cross entropy loss. From Fig. 5 we can see that the weights are learned in a way such that the weight value for a particular position of the first embedding, $w_{1,k}$, is approximately of the same value and opposite sign as the weight value for that particular position in the second embedding, $w_{2,k}$ (please see Equation 2 for the notations). Experimentally, for the NPC YoUSCTube Cross Entropy model, we found the mean of the absolute value of $w_{1,k} + w_{2,k}$ to be 0.0284, with a standard deviation of 0.0206 (mean and standard deviation computed over all the embedding dimensions, i.e. k varying from 1 to D = 512). The two bias values we found are 1.0392 and −0.9876. In other words, the experimental evidence shows $w_{1,k} \approx -w_{2,k}$ and $b_1 \approx -b_2$.

One possible and intuitive explanation would be that the individual absolute weight values provide importance to different dimensions/features in the embedding (this has also been explained in [44]).
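A consequence of the mirrored pattern $w_{1,k} \approx -w_{2,k}$, $b_1 \approx -b_2$ is that the two-class softmax behaves like a single logistic unit with doubled logit. The numerical check below is a sketch under stated assumptions: it takes the last layer to be affine in the element-wise distance vector $L(x_1, x_2)$, as the term $w_{1,k}|f(x_1)_k - f(x_2)_k|$ in the text suggests, it idealizes the mirroring as exact, and it uses random placeholder values rather than the trained weights.

```python
import math
import random

random.seed(0)
D = 512  # embedding dimension, as in the text

# Placeholder distance vector L(x1, x2) and first-row weights (not trained values).
L = [abs(random.gauss(0.0, 1.0)) for _ in range(D)]
w1 = [random.gauss(0.0, 0.1) for _ in range(D)]
b1 = 1.0

# Idealized exact mirroring: w2 = -w1 and b2 = -b1.
w2 = [-w for w in w1]
b2 = -b1

z1 = sum(w * l for w, l in zip(w1, L)) + b1
z2 = sum(w * l for w, l in zip(w2, L)) + b2

# Numerically stable two-class softmax probability of the first class.
m = max(z1, z2)
softmax_class1 = math.exp(z1 - m) / (math.exp(z1 - m) + math.exp(z2 - m))

# Logistic function evaluated at twice the first logit.
sigmoid_2z1 = 1.0 / (1.0 + math.exp(-2.0 * z1))

print(abs(softmax_class1 - sigmoid_2z1) < 1e-12)
```

With exact mirroring, $z_2 = -z_1$ and the softmax probability of the first class reduces to $\sigma(2 z_1)$, so the layer effectively learns one signed importance weight per embedding dimension.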
The mirrored nature of the weights and biases possibly ensures cancellation of same-speaker embeddings while maximizing the impostor pair distance. For example, if $w_{1,k}$ is a high positive number then it ensures a higher contribution of the k-th dimension of $L(x_1, x_2)$ in the softmax output for the genuine pair class (since $w_{1,k} |f(x_1)_k - f(x_2)_k|$ will be higher). On the other hand, $w_{2,k} \approx -w_{1,k}$ ensures an “equally lower” contribution of $|f(x_1)_k - f(x_2)_k|$ to the probability of the input being an impostor pair.

For the cosine embedding loss, these automatically learned importance weights are not present, which might be the reason it underperforms the cross entropy embeddings; all embedding dimensions contribute equally to the loss.

[Figure 5 panels: (a) Two rows of the full 512 × 2 matrix shown as an image (x-axis: weight index; rows: Row 1, Row 2; color scale: weight value, −0.4 to 0.4). (b) Weights in the two rows plotted, along with their sum (x-axis: weight index, 0 to 100; y-axis: weight value; curves: Row 1, Row 2, Row 1 + Row 2).]

Fig. 5: Visualization of the learned weights in the last fully connected (FC) layer: (a) The matrix of the last FC layer. Note the opposite signs of the weight values in ‘Row 1’ and ‘Row 2’ for a particular weight index. (b) This figure shows a zoomed version of the figure in (a) for only 100 weights. Note the mirrored nature of the weight values in ‘Row 1’ and ‘Row 2’, and the oscillation of their sum around zero.

4) Utterance-level speaker identification: Here we are interested in the utterance-level speaker identification task. We compare NPC YoUSCTube Cross Entropy (out-of-domain (OOD) YouTube), MFCC, and i-vector (OOD VoxCeleb) methods. For MFCC and NPC embeddings, we calculate the mean and standard deviation vectors over all frames in a particular utterance, and concatenate them to produce a single vector for every utterance. For i-vector, we calculate one i-vector for the whole utterance using the same i-vector system as mentioned in Section IV-C1.

We applied LDA (trained on the development part of VoxCeleb) to project the 400 dimensional i-vectors to a 200 dimensional space. This gave better performance for i-vectors (also observed in literature [57]) and let us compare unsupervised NPC embeddings with the best possible i-vector configuration. We again classify using a k-NN classifier with k = 1, as explained in Section IV-C2, to focus on the feature performance and not on the next layer of trained classifiers.

Table III shows the classification accuracies for different features with increasing number of enrollment utterances (see footnote 3). In each enrollment scenario, 5 randomly held-out utterances from each of the 11 speakers have been used for testing, and the process has been repeated 20 times to report the average accuracies.

TABLE III: Utterance-level speaker classification accuracies (%) of different features with kNN classifier (k=1). In the original table, red italics indicate the best performing single feature classification result while bold text indicates the best overall performance.

| # of enrollment utterances | Tedlium test set: MFCC stats | Tedlium test set: NPC YoUSCTube stats | Tedlium test set: i-vector VoxCeleb | Tedlium test set: NPC YoUSCTube + i-vector | Tedlium development set: MFCC stats | Tedlium development set: NPC YoUSCTube stats | Tedlium development set: i-vector VoxCeleb | Tedlium development set: NPC YoUSCTube + i-vector |
|---|---|---|---|---|---|---|---|---|
| 1 | 75.12 | 82.12 | 86.38 | 85.62 | 80.00 | 83.27 | 85.00 | 86.82 |
| 2 | 83.00 | 87.88 | 91.75 | 92.12 | 87.64 | 92.36 | 92.82 | 95.73 |
| 3 | 84.88 | 89.88 | 93.12 | 93.12 | 92.18 | 95.45 | 94.82 | 97.09 |
| 5 | 91.25 | 94.50 | 95.25 | 95.88 | 92.09 | 95.36 | 93.91 | 95.45 |
| 8 | 92.12 | 95.00 | 96.62 | 97.25 | 95.27 | 97.36 | 95.82 | 97.36 |
| 10 | 92.50 | 95.25 | 95.12 | 96.50 | 96.54 | 98.00 | 96.73 | 98.09 |

Footnote 3: Note that due to the small-size window for our feature, even two utterances provide significant information; hence we do not see significant change as the enrollment utterances increase.

Both i-vectors and NPC YoUSCTube embeddings perform similarly. It is interesting to note the complementarity of the concatenated i-vector-embedding feature. From Table III we can see that NPC YoUSCTube Cross Entropy + i-vector performs the best for almost all the cases.

An additional important point is that the classifier used is the simple 1-Nearest Neighbor classifier. We believe that the highly non-Gaussian nature of the embeddings (as can be observed from Fig. 4) might not be captured well by the 1-NN, since it is based on Euclidean distance, which will underperform in complex manifolds such as those we observe with NPC embeddings. This motivates future work in higher-layer, utterance-based, neural network-derived features that build on top of these embeddings.

D. Experiments: Upper-bound comparison

Table IV compares the performance of i-vector, x-vector [43], and the proposed NPC embeddings for the speaker verification task on VoxCeleb v1 data using the default Dev and Test splits [59] distributed with the dataset.

TABLE IV: Speaker verification on VoxCeleb v1 data. i-vector and x-vector use the full utterance in a supervised manner for evaluation while the proposed embedding operates at the 1 second window with simple statistics (mean+std) over an utterance.

| Method | Training domain | Feature context | Speaker labels | Speaker homogeneity | minDCF | EER (%) |
|---|---|---|---|---|---|---|
| i-vector [59] | ID | Full | No | Yes | 0.73 | 8.80 |
| x-vector | ID | Full | Full | Yes | 0.61 | 7.21 |
| NPC stats | OOD | 1 sec | No | No | 0.87 | 15.54 |

We want to highlight that since the assumption for our system is that we have absolutely no labels during DNN training (in fact our YouTube downloaded data are not even guaranteed to be speech!), the comparison with x-vector or i-vector is highly asymmetric. To simplify this explanation:
• Our proposed method uses “some random audio”: completely unsupervised and challenging data.
• i-vector uses “speech” with labels on “speaker homogeneous regions”: unsupervised with a supervised step on clean data.
• x-vector uses “speech” with labels of “id of speaker”: completely supervised on clean data.

Moreover, i-vector and x-vector here are trained on the in-domain (ID) Dev part of the VoxCeleb dataset. On the other hand, the NPC model is trained out-of-domain (OOD) on unlabeled YouTube data. Please note that here “out-of-domain” refers to the generic characteristics of the YoUSCTube dataset compared to the VoxCeleb dataset. For example, the VoxCeleb dataset was mined using the keyword “interview” [59] along with the speaker name, and the active speaker detection [59] ensured active presence of that speaker in the video. On the other hand, the YoUSCTube dataset is mined without any constraints, thus generalizing more to realistic acoustic conditions (see Section III-A3). Moreover, having only celebrities [59] in the VoxCeleb dataset helped it to find multiple sessions of the same speaker, which subsequently helped the supervised DNN models to be more channel-invariant. However, such freedom is not available in the YoUSCTube dataset, thus paving a way to build unsupervised models that can be trained or adapted in such conditions.

Finally, the features employed by i-vector and x-vector use the whole utterance of average length 8.2 s (min = 4 s, max = 145 s) [59] while the NPC model is only producing 1 second estimates. While we do intend to incorporate more contextual learning for longer sequences, in this work we are focusing on the low-level feature and hence employ statistics (mean and std) of the embeddings. This is suboptimal and creates an uninformed information bottleneck; however, it is a necessary and easy way to establish an utterance-based feature, thus enabling comparison with the existing methods.
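The utterance-level aggregation described above (mean and standard deviation of the frame-level embeddings, concatenated) can be sketched in a few lines. This is an illustrative sketch, not the authors' implementation: the function name `utterance_stats` and the toy 3-dimensional embeddings are hypothetical, and the population standard deviation is an assumption since the text does not say which estimator is used.

```python
import statistics


def utterance_stats(frame_embeddings):
    """Concatenate per-dimension mean and standard deviation over all frames."""
    dims = list(zip(*frame_embeddings))            # one tuple of values per dimension
    means = [statistics.fmean(d) for d in dims]
    stds = [statistics.pstdev(d) for d in dims]    # population std (assumption)
    return means + stds


# Toy utterance: 4 frames of a hypothetical 3-dimensional embedding.
frames = [
    [1.0, 0.0, 2.0],
    [3.0, 0.0, 2.0],
    [1.0, 4.0, 2.0],
    [3.0, 4.0, 2.0],
]
vector = utterance_stats(frames)
print(vector)  # [2.0, 2.0, 2.0, 1.0, 2.0, 0.0]
```

The output vector has twice the embedding dimension regardless of utterance length, which makes utterances of different durations directly comparable but discards all temporal ordering, the "uninformed information bottleneck" noted in the text.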
For all the above reasons we expect that any evaluation with i-vector and x-vector can only be seen as a very upper-bound and we don't expect to beat either of these two in performance.

The i-vector performance is as reported in [59]. No data augmentation is performed for x-vector for a fair comparison. To maintain standard scoring mechanisms we employed LDA to project the embeddings on a lower dimensional space and then PLDA scoring as in [43], [59]. The same VoxCeleb Dev data is utilized to train LDA and PLDA models for all methods for a fair comparison. The LDA dimension is 200 for the x-vector and i-vector [59] systems, and 100 for the NPC system. We report the minimum normalized detection cost function (minDCF) for $P_{target} = 0.01$ and Equal Error-Rate (EER). We can see that the best in-domain supervised method (x-vector, minDCF 0.61) is about 30% better than unsupervised NPC (minDCF 0.87) in terms of minDCF, measured relative to the NPC value.

V. DISCUSSION AND FUTURE WORK

A. Discussion

Based on the visualization of Fig. 4 and the experiments of Section IV-C we have established that the resulting embedding is capturing significant information about the speakers' identity. The feature has been shown to be considerably better than knowledge-driven features such as MFCCs or statistics of MFCCs, and even more robust than supervised features such as i-vector operating on 1 second windows.

Importantly the proposed embedding showed extreme portability by operating better on the Tedlium dataset when trained on larger amounts of random audio from the collected YoUSCTube corpus than when trained in-domain on the Tedlium dataset itself.

Also importantly we have shown in Sections IV-A and IV-B that if we on purpose create a fast changing dialog by mixing the Tedlium utterances, the short-term stationarity hypothesis still holds. This encourages the use of unlabeled data.

Evaluating this embedding however is challenging as its use is not obvious until it is used for a full blown speaker identification framework.
A full evaluation requires several more stages of development that we will discuss further in this work, along with the shortcomings of this embedding. However, we can, and we do, provide some early evidence that the embedding does indeed capture significant information about the speaker.

In Section IV-C4 we present results that compare utterance-based classification systems on the Tedlium data. We are comparing the i-vector system optimized for utterance-level classification, and which employs supervised data, with a very simple statistic (mean and std) of our proposed unsupervised embedding. We show that our embedding provides very robust results that are comparable to the i-vector system. The shortcoming of this comparison is that the utterances are drawn from the Tedlium dataset, and they are likely also incorporating channel information. We provide some suggestions for overcoming this shortcoming further in this section.

We proceeded, in Section IV-D, to present results that compare utterance-based classification systems on the VoxCeleb v1 test set. Here we wanted to provide an upper-bound comparison. We evaluated i-vector and x-vector VoxCeleb trained supervised methods. These methods are able to employ the full utterance as a single observation, while the proposed embedding only operates on a < 1 second resolution, hence we again aggregate via an uninformed information bottleneck (mean and std). We see that despite the information bottleneck and the completely unsupervised and out-of-domain nature of the experiment our proposed system still achieves an acceptable performance, with a minDCF of 0.87 against 0.61 for x-vector (the x-vector minDCF is about 30% lower).

B. Future work

The above observations and analysis provide many directions for future work.

Given that all our same-speaker examples come from the same channel, we believe that the proposed embedding captures both channel and speaker characteristics.
This channel dependence provides an opportunity for data augmentation, and hence reduction of the channel influence. In future work we intend to augment the near-by frames such that contextual pairs are coming from a range of different channels through augmentation.

This also provides another opportunity for joint channel and speaker learning. Through the above augmentation we can jointly learn same vs different speakers and same vs different channels, thus providing disentanglement and more robust speaker representations.

Further, triplet learning [31], especially with hard triplet mining, has been shown to provide improved performance and we intend to use such an architecture in future work to directly optimize intra- and inter-class distances in the manifold.

One additional opportunity for improvement is to employ a larger neural network. We employed a CNN with only 1.8M parameters for our training (as an initial try to check the validity of the proposed method). But recent CNN-based speaker verification systems employ much deeper networks (e.g., VoxCeleb's baseline CNN comprises 67M parameters). We think utilizing recent state-of-the-art deep architectures will improve the performance of the proposed technique for large scale speaker verification experiments.

Finally, and more applicable to the speaker ID task, we need embeddings that can capture information from longer sequences. As we see in Section IV-D the supervised speaker identification methods are able to exploit longer term context while the proposed embedding is only able to serve as a short-term feature. This requires either supervised methods for higher-level information integration or, more in line with our interests, better unsupervised context exploitation.
For example we can employ a better aggregation mechanism via unsupervised diarization using this embedding to identify speaker-homogeneous regions and then employ Recurrent Neural Networks (RNN) [22] towards longitudinal information integration.

VI. CONCLUSION

In this paper, we proposed an unsupervised technique to learn speaker-specific characteristics from unlabeled data that contain any kind of audio, including speech, environmental sounds, and multiple overlapping speakers of many languages. The proposed system exploits the short-term active-speaker stationarity hypothesis to create contrastive samples from unlabeled data, and feeds them into a deep convolutional siamese network which learns the NPC embeddings by learning to classify same vs different speaker pairs.

We trained the proposed siamese model on both the YoUSCTube and Tedlium training sets. We performed two sets of evaluation experiments: a closed set speaker identification experiment, and a large scale speaker verification experiment for upper-bound comparison. The NPC embeddings outperform i-vectors at frame-level speaker identification, and provide complementary information to i-vectors at the utterance-level speaker identification task.

As an upper-bound task we employed the VoxCeleb speaker verification set. As expected NPC embeddings underperform in-domain supervised x-vector and in-domain i-vector methods. The analysis of the proposed out-of-domain unsupervised method with the in-domain supervised methods helps identify challenges and raises a range of opportunities for future work, including in longitudinal information integration and in introducing robustness to channel characteristics.

ACKNOWLEDGMENT

The U.S. Army Medical Research Acquisition Activity, 820 Chandler Street, Fort Detrick MD 21702-5014 is the awarding and administering acquisition office.
This work was supported by the Office of the Assistant Secretary of Defense for Health Affairs through the Military Suicide Research Consortium under Award No. W81XWH-10-2-0181, and through the Psychological Health and Traumatic Brain Injury Research Program under Award No. W81XWH-15-1-0632. Opinions, interpretations, conclusions and recommendations are those of the author and are not necessarily endorsed by the Department of Defense.

REFERENCES

[1] X. Huang, A. Acero, and H.-W. Hon, Spoken Language Processing: A Guide to Theory, Algorithm, and System Development, 1st ed. Upper Saddle River, NJ, USA: Prentice Hall PTR, 2001.
[2] S. Narayanan and P. G. Georgiou, “Behavioral signal processing: Deriving human behavioral informatics from speech and language,” Proceedings of the IEEE, vol. 101, no. 5, pp. 1203–1233, 2013.
[3] M. Kotti, V. Moschou, and C. Kotropoulos, “Speaker segmentation and clustering,” Signal processing, vol. 88, no. 5, pp. 1091–1124, 2008.
[4] X. Anguera, S. Bozonnet, N. Evans, C. Fredouille, G. Friedland, and O. Vinyals, “Speaker diarization: A review of recent research,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 20, no. 2, pp. 356–370, 2012.
[5] K. Chen, “Towards better making a decision in speaker verification,” Pattern Recognition, vol. 36, no. 2, pp. 329–346, 2003.
[6] J. P. Campbell, “Speaker recognition: A tutorial,” Proceedings of the IEEE, vol. 85, no. 9, pp. 1437–1462, 1997.
[7] J. H. Hansen and T. Hasan, “Speaker recognition by machines and humans: A tutorial review,” IEEE Signal processing magazine, vol. 32, no. 6, pp. 74–99, 2015.
[8] S. Davis and P. Mermelstein, “Comparison of parametric representations for monosyllabic word recognition in continuously spoken sentences,” IEEE transactions on acoustics, speech, and signal processing, vol. 28, no. 4, pp. 357–366, 1980.
[9] H. Hermansky, “Perceptual linear predictive (plp) analysis of speech,” the Journal of the Acoustical Society of America, vol. 87, no. 4, pp. 1738–1752, 1990.
[10] L. R. Rabiner and B.-H. Juang, “Fundamentals of speech recognition,” 1993.
[11] E. Shriberg, L. Ferrer, S. Kajarekar, A. Venkataraman, and A. Stolcke, “Modeling prosodic feature sequences for speaker recognition,” Speech Communication, vol. 46, no. 3, pp. 455–472, 2005.
[12] D. A. Reynolds, T. F. Quatieri, and R. B. Dunn, “Speaker verification using adapted gaussian mixture models,” Digital signal processing, vol. 10, no. 1-3, pp. 19–41, 2000.
[13] P. Kenny and P. Dumouchel, “Disentangling speaker and channel effects in speaker verification,” in Acoustics, Speech, and Signal Processing, 2004. Proceedings.(ICASSP’04). IEEE International Conference on, vol. 1. IEEE, 2004, pp. I–37.
[14] P. Kenny, M. Mihoubi, and P. Dumouchel, “New map estimators for speaker recognition.” in INTERSPEECH, 2003.
[15] P. Kenny, G. Boulianne, P. Ouellet, and P. Dumouchel, “Joint factor analysis versus eigenchannels in speaker recognition,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 15, no. 4, pp. 1435–1447, 2007.
[16] N. Dehak, P. J. Kenny, R. Dehak, P. Dumouchel, and P. Ouellet, “Front-end factor analysis for speaker verification,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 19, no. 4, pp. 788–798, 2011.
[17] N. Dehak, R. Dehak, P. Kenny, N. Brümmer, P. Ouellet, and P. Dumouchel, “Support vector machines versus fast scoring in the low-dimensional total variability space for speaker verification,” in Tenth Annual conference of the international speech communication association, 2009.
[18] G. Sell and D. Garcia-Romero, “Speaker diarization with plda i-vector scoring and unsupervised calibration,” in Spoken Language Technology Workshop (SLT), 2014 IEEE. IEEE, 2014, pp. 413–417.
[19] G. Dupuy, M. Rouvier, S. Meignier, and Y. Esteve, “I-vectors and ILP clustering adapted to cross-show speaker diarization,” in Thirteenth Annual Conference of the International Speech Communication Association, 2012.
[20] G. Saon, H. Soltau, D. Nahamoo, and M. Picheny, “Speaker adaptation of neural network acoustic models using i-vectors.” in ASRU, 2013, pp. 55–59.
[21] A. Kanagasundaram, R. Vogt, D. B. Dean, S. Sridharan, and M. W. Mason, “I-vector based speaker recognition on short utterances,” in Proceedings of the 12th Annual Conference of the International Speech Communication Association. International Speech Communication Association (ISCA), 2011, pp. 2341–2344.
[22] I. Goodfellow, Y. Bengio, and A. Courville, Deep Learning. MIT Press, 2016, http://www.deeplearningbook.org.
[23] M. Rouvier, P.-M. Bousquet, and B. Favre, “Speaker diarization through speaker embeddings,” in Signal Processing Conference (EUSIPCO), 2015 23rd European. IEEE, 2015, pp. 2082–2086.
[24] G. E. Hinton and R. R. Salakhutdinov, “Reducing the dimensionality of data with neural networks,” science, vol. 313, no. 5786, pp. 504–507, 2006.
[25] T. Yamada, L. Wang, and A. Kai, “Improvement of distant-talking speaker identification using bottleneck features of dnn.” in Interspeech, 2013, pp. 3661–3664.
[26] E. Variani, X. Lei, E. McDermott, I. L. Moreno, and J. Gonzalez-Dominguez, “Deep neural networks for small footprint text-dependent speaker verification,” in Acoustics, Speech and Signal Processing (ICASSP), 2014 IEEE International Conference on. IEEE, 2014, pp. 4052–4056.
[27] S. H. Ghalehjegh and R. C. Rose, “Deep bottleneck features for i-vector based text-independent speaker verification,” in Automatic Speech Recognition and Understanding (ASRU), 2015 IEEE Workshop on. IEEE, 2015, pp. 555–560.
[28] K. Chen and A. Salman, “Learning speaker-specific characteristics with a deep neural architecture,” IEEE Transactions on Neural Networks, vol. 22, no. 11, pp. 1744–1756, 2011.
[29] K. Chen and A. Salman, “Extracting speaker-specific information with a regularized siamese deep network,” in Advances in Neural Information Processing Systems, 2011, pp. 298–306.
[30] D. Snyder, P. Ghahremani, D. Povey, D. Garcia-Romero, Y. Carmiel, and S. Khudanpur, “Deep neural network-based speaker embeddings for end-to-end speaker verification,” in Spoken Language Technology Workshop (SLT), 2016 IEEE. IEEE, 2016, pp. 165–170.
[31] C. Li, X. Ma, B. Jiang, X. Li, X. Zhang, X. Liu, Y. Cao, A. Kannan, and Z. Zhu, “Deep speaker: an end-to-end neural speaker embedding system,” arXiv preprint, 2017.
[32] S. J. Pan, Q. Yang et al., “A survey on transfer learning,” IEEE Transactions on knowledge and data engineering, vol. 22, no. 10, pp. 1345–1359, 2010.
[33] M. M. Saleem and J. H. Hansen, “A discriminative unsupervised method for speaker recognition using deep learning,” in Machine Learning for Signal Processing (MLSP), 2016 IEEE 26th International Workshop on. IEEE, 2016, pp. 1–5.
[34] X.-L. Zhang, “Multilayer bootstrap network for unsupervised speaker recognition,” arXiv preprint, 2015.
[35] I. Lapidot, H. Guterman, and A. Cohen, “Unsupervised speaker recognition based on competition between self-organizing maps,” IEEE Transactions on Neural Networks, vol. 13, no. 4, pp. 877–887, 2002.
[36] H. Lee, P. Pham, Y. Largman, and A. Y. Ng, “Unsupervised feature learning for audio classification using convolutional deep belief networks,” in Advances in neural information processing systems, 2009, pp. 1096–1104.
[37] J. S. Garofolo, L. F. Lamel, W. M. Fisher, J. G. Fiscus, D. S. Pallett, N. L. Dahlgren, and V. Zue, “Timit acoustic-phonetic continuous speech corpus ldc93s1,” 1993. [Online]. Available: https://catalog.ldc.upenn.edu/LDC93S1
[38] D. O’Shaughnessy, “Linear predictive coding,” IEEE potentials, vol. 7, no. 1, pp. 29–32, 1988.
[39] H. Li, B. Baucom, and P. Georgiou, “Unsupervised latent behavior manifold learning from acoustic features: Audio2behavior,” in Proceedings of IEEE International Conference on Audio, Speech and Signal Processing (ICASSP), New Orleans, Louisiana, March 2017.
[40] A. Jati and P. Georgiou, “Speaker2vec: Unsupervised learning and adaptation of a speaker manifold using deep neural networks with an evaluation on speaker segmentation,” in Proceedings of Interspeech, August 2017.
[41] S. Chopra, R. Hadsell, and Y. LeCun, “Learning a similarity metric discriminatively, with application to face verification,” in Computer Vision and Pattern Recognition, 2005. CVPR 2005. IEEE Computer Society Conference on, vol. 1. IEEE, 2005, pp. 539–546.
[42] R. Hadsell, S. Chopra, and Y. LeCun, “Dimensionality reduction by learning an invariant mapping,” in Computer vision and pattern recognition, 2006 IEEE computer society conference on, vol. 2. IEEE, 2006, pp. 1735–1742.
[43] D. Snyder, D. Garcia-Romero, G. Sell, D. Povey, and S. Khudanpur, “X-vectors: Robust dnn embeddings for speaker recognition,” Submitted to ICASSP, 2018.
[44] G. Koch, R. Zemel, and R. Salakhutdinov, “Siamese neural networks for one-shot image recognition,” in ICML Deep Learning Workshop, vol. 2, 2015.
[45] J. Bromley, I. Guyon, Y. LeCun, E. Säckinger, and R. Shah, “Signature verification using a “siamese” time delay neural network,” in Advances in Neural Information Processing Systems, 1994, pp. 737–744.
[46] A. Krizhevsky, I. Sutskever, and G. E. Hinton, “Imagenet classification with deep convolutional neural networks,” in Advances in neural information processing systems, 2012, pp. 1097–1105.
[47] K. Simonyan and A. Zisserman, “Very deep convolutional networks for large-scale image recognition,” arXiv preprint, 2014.
[48] O. Abdel-Hamid, A. R. Mohamed, H. Jiang, L. Deng, G. Penn, and D.
Y u, “Con volutional neural networks for speech recognition, ” IEEE/ACM T ransactions on audio, speech, and languag e pr ocessing , vol. 22, no. 10, pp. 1533–1545, 2014. [49] G. Hinton, L. Deng, D. Y u, G. E. Dahl, A.-r . Mohamed, N. Jaitly , A. Senior , V . V anhoucke, P . Nguyen, T . N. Sainath et al. , “Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups, ” IEEE Signal Processing Magazine , vol. 29, no. 6, pp. 82–97, 2012. [50] M. McLaren, Y . Lei, N. Schef fer, and L. Ferrer, “ Application of con vo- lutional neural networks to speaker recognition in noisy conditions, ” in F ifteenth Annual Conference of the International Speech Communication Association , 2014. [51] Y . Lukic, C. V ogt, O. D ¨ urr , and T . Stadelmann, “Speaker identifica- tion and clustering using con volutional neural networks, ” in Machine Learning for Signal Processing (MLSP), 2016 IEEE 26th International W orkshop on . IEEE, 2016, pp. 1–6. [52] S. Hershey , S. Chaudhuri, D. P . W . Ellis, J. F . Gemmeke, A. Jansen, R. C. Moore, M. Plakal, D. Platt, R. A. Saurous, B. Seybold, M. Slaney , R. J. W eiss, and K. W . Wilson, “CNN architectures for large-scale audio classification, ” CoRR , vol. abs/1609.09430, 2016. [Online]. A vailable: http://arxiv .org/abs/1609.09430 [53] S.-Y . Chang and N. Morgan, “Robust cnn-based speech recognition with gabor filter kernels, ” in Fifteenth Annual Conference of the International Speech Communication Association , 2014. [54] A. L. Maas, A. Y . Hannun, and A. Y . Ng, “Rectifier nonlinearities improve neural network acoustic models, ” in Proc. ICML , vol. 30, no. 1, 2013. [55] S. Iof fe and C. Szegedy , “Batch normalization: Accelerating deep network training by reducing internal cov ariate shift, ” in Proceedings of the 32nd International Confer ence on Machine Learning , ser . Proceedings of Machine Learning Research, F . Bach and D. Blei, Eds., vol. 37. Lille, France: PMLR, 07–09 Jul 2015, pp. 
448–456. [Online]. A vailable: http://proceedings.mlr .press/v37/ioffe15.html [56] N. Sriv astava, G. E. Hinton, A. Krizhevsky , I. Sutskev er , and R. Salakhutdinov , “Dropout: a simple way to prev ent neural networks from overfitting. ” Journal of Machine Learning Researc h , v ol. 15, no. 1, pp. 1929–1958, 2014. [57] D. Snyder, D. Garcia-Romero, D. Pov ey , and S. Khudanpur , “Deep neural network embeddings for text-independent speaker verification, ” Pr oc. Interspeech 2017 , pp. 999–1003, 2017. [58] A. Rousseau, P . Del ´ eglise, and Y . Estev e, “T ed-lium: an automatic speech recognition dedicated corpus. ” in LREC , 2012, pp. 125–129. [59] A. Nagrani, J. S. Chung, and A. Zisserman, “V oxCeleb: a large-scale speaker identification dataset, ” arXiv pr eprint arXiv:1706.08612 , 2017. [60] D. Pove y , A. Ghoshal, G. Boulianne, L. Burget, O. Glembek, N. Goel, M. Hannemann, P . Motlicek, Y . Qian, P . Schwarz, J. Silo vsky , G. Stem- mer , and K. V esely , “The kaldi speech recognition toolkit, ” in IEEE 2011 W orkshop on Automatic Speech Recognition and Under standing . IEEE Signal Processing Society , Dec. 2011, iEEE Catalog No.: CFP11SR W - USB. [61] T . Tieleman and G. Hinton, “Lecture 6.5-RMSProp: Divide the gradient by a running average of its recent magnitude, ” COURSERA: Neural networks for machine learning , vol. 4, no. 2, pp. 26–31, 2012. [62] L. V an Der Maaten and G. Hinton, “V isualizing data using t-SNE, ” Journal of Machine Learning Research , vol. 9, no. Nov , pp. 2579–2605, 2008.
