Mask2Lesion: Mask-Constrained Adversarial Skin Lesion Image Synthesis

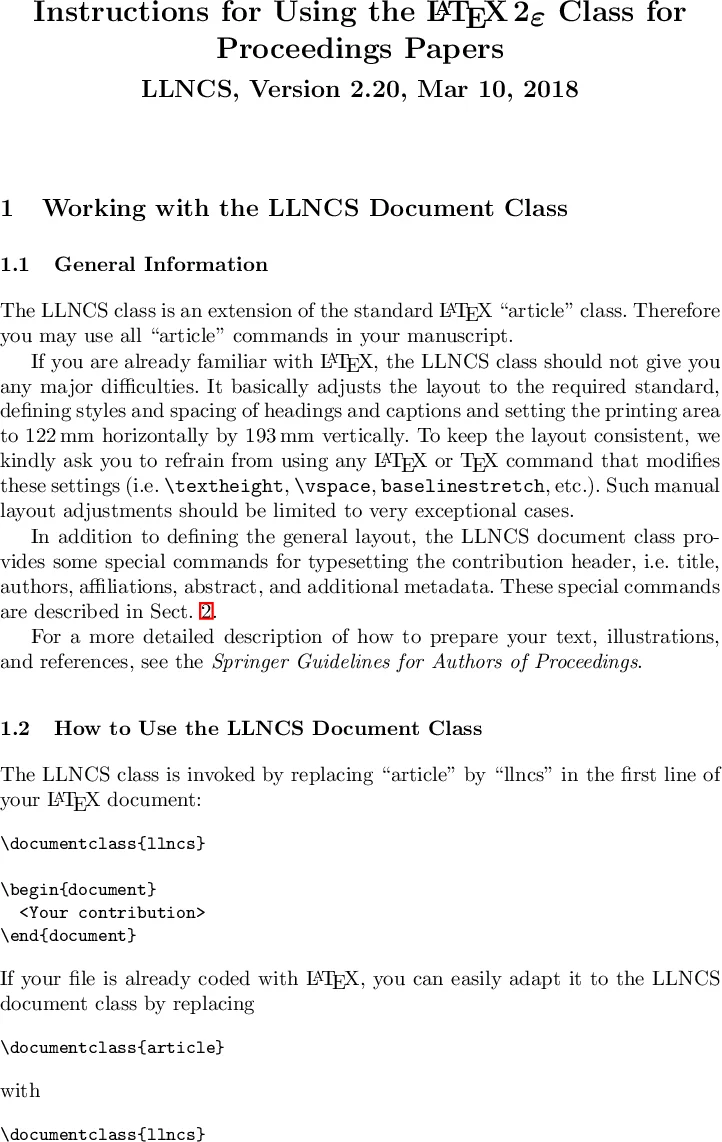

Skin lesion segmentation is a vital task in skin cancer diagnosis and further treatment. Although deep learning based approaches have significantly improved the segmentation accuracy, these algorithms are still reliant on having a large enough dataset in order to achieve adequate results. Inspired by the immense success of generative adversarial networks (GANs), we propose a GAN-based augmentation of the original dataset in order to improve the segmentation performance. In particular, we use the segmentation masks available in the training dataset to train the Mask2Lesion model, and use the model to generate new lesion images given any arbitrary mask, which are then used to augment the original training dataset. We test Mask2Lesion augmentation on the ISBI ISIC 2017 Skin Lesion Segmentation Challenge dataset and achieve an improvement of 5.17% in the mean Dice score as compared to a model trained with only classical data augmentation techniques.

💡 Research Summary

The paper addresses a persistent challenge in skin lesion segmentation: the need for large, accurately annotated datasets. Deep learning models have achieved impressive segmentation accuracy, but their performance still hinges on the availability of sufficient training data. To alleviate this bottleneck, the authors propose Mask2Lesion, a conditional generative adversarial network (cGAN) that synthesizes realistic skin lesion images directly from binary segmentation masks. Because the masks are already part of most segmentation datasets, the method can generate new image–mask pairs without any additional manual annotation.

Mask2Lesion builds upon the pix2pix framework. The generator adopts a U‑Net encoder‑decoder architecture with skip connections, enabling the preservation of low‑frequency structural information from the mask while allowing the network to learn high‑frequency texture details. An L1 reconstruction loss is combined with the adversarial loss to encourage both global similarity and realistic detail. The discriminator is a PatchGAN that evaluates 70 × 70 patches, ensuring that local texture patterns are indistinguishable from real lesions. Training is performed on 128 × 128 pixel images (resized from the ISIC 2017 dataset) for 200 epochs, using 4 × 4 convolutions with stride 2, batch normalization, dropout (keep probability 0.5), and ReLU/LeakyReLU activations.
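The combined generator objective described above can be illustrated with a minimal, framework-free sketch. It assumes the usual pix2pix formulation (adversarial cross-entropy plus a weighted L1 term). The function names and the weight `l1_weight=100.0` are illustrative assumptions; the summary does not state the weighting used for Mask2Lesion.

```python
import math

def adversarial_term(patch_probs):
    """Mean cross-entropy of the discriminator's per-patch 'real' probabilities
    against an all-real target; low when the generator fools the PatchGAN."""
    eps = 1e-12  # guards against log(0)
    return -sum(math.log(max(p, eps)) for p in patch_probs) / len(patch_probs)

def l1_term(target_pixels, generated_pixels):
    """Mean absolute difference between the real and the generated image."""
    total = sum(abs(t - g) for t, g in zip(target_pixels, generated_pixels))
    return total / len(target_pixels)

def generator_loss(patch_probs, target_pixels, generated_pixels, l1_weight=100.0):
    """Adversarial loss plus weighted L1 reconstruction loss.
    l1_weight is an assumed value, not one taken from the paper."""
    return adversarial_term(patch_probs) + l1_weight * l1_term(target_pixels, generated_pixels)

# Toy example with flattened "images" and two discriminator patches.
loss = generator_loss([0.5, 0.5], [1.0, 0.0, 0.5, 0.5], [0.0, 0.0, 0.5, 0.5], l1_weight=10.0)
print(round(loss, 4))
```

In a real training loop the two terms would be computed on tensors by a deep learning framework; the sketch only shows how the L1 term anchors the output to the target image while the adversarial term rewards realistic local texture.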

To assess the utility of the synthetic data, four augmentation strategies are compared: (i) NoAug – training only on the original dataset; (ii) ClassicAug – conventional augmentations such as rotations and flips; (iii) Mask2LesionAug – adding only the synthetic images generated by Mask2Lesion; and (iv) AllAug – a combination of classic augmentations and Mask2Lesion‑generated images. All segmentation models are identical U‑Nets trained with stochastic gradient descent (batch size = 32). Evaluation follows the ISIC challenge metrics: Dice coefficient, sensitivity, specificity, and pixel‑wise accuracy.
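For reference, the four evaluation metrics can be computed from the pixel-level confusion counts of a predicted mask against its ground truth. The sketch below works on flattened binary masks and uses the standard definitions. The function names are hypothetical, and returning 0.0 for an empty denominator is an assumption rather than the challenge's rule.

```python
def confusion_counts(pred, truth):
    """Count true/false positives and negatives over flattened binary masks."""
    tp = fp = fn = tn = 0
    for p, t in zip(pred, truth):
        if p and t:
            tp += 1
        elif p and not t:
            fp += 1
        elif t:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn

def safe_div(num, den):
    """Return 0.0 for an empty denominator (an assumed convention)."""
    return num / den if den else 0.0

def segmentation_metrics(pred, truth):
    tp, fp, fn, tn = confusion_counts(pred, truth)
    return {
        "dice": safe_div(2 * tp, 2 * tp + fp + fn),
        "sensitivity": safe_div(tp, tp + fn),   # fraction of lesion pixels found
        "specificity": safe_div(tn, tn + fp),   # fraction of background pixels kept
        "accuracy": safe_div(tp + tn, tp + fp + fn + tn),
    }

# Toy example: six pixels, 1 = lesion, 0 = background.
pred = [1, 1, 0, 0, 1, 0]
truth = [1, 0, 0, 0, 1, 1]
print(segmentation_metrics(pred, truth))
```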

Quantitative results show that AllAug consistently outperforms the other three strategies. The mean Dice score rises from 0.7723 ± 0.0185 (NoAug) and 0.7743 ± 0.0203 (ClassicAug) to 0.8144 ± 0.0160 (AllAug), a relative gain of 5.17 % over ClassicAug. Sensitivity and specificity also increase modestly, and pixel‑wise accuracy rises from 0.9316 to 0.9375. Visual inspection of segmentation outputs confirms that AllAug reduces false positives and produces masks that align more closely with ground truth. Kernel density estimates of the three primary metrics further illustrate that AllAug shifts the distribution toward higher values and narrows the spread, especially for specificity, indicating more reliable predictions.
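The relative Dice gains can be checked with a few lines of arithmetic on the rounded means quoted above. The helper name is hypothetical. Because the inputs are rounded to four decimals, the recomputed figure can differ from the reported 5.17 % in the last digit.

```python
def relative_gain_percent(baseline, improved):
    """Relative improvement of `improved` over `baseline`, in percent."""
    return (improved - baseline) / baseline * 100.0

dice_noaug = 0.7723
dice_classic = 0.7743
dice_allaug = 0.8144

gain_over_classic = relative_gain_percent(dice_classic, dice_allaug)
gain_over_noaug = relative_gain_percent(dice_noaug, dice_allaug)

print(f"AllAug vs ClassicAug: {gain_over_classic:.2f} %")
print(f"AllAug vs NoAug:      {gain_over_noaug:.2f} %")
```

The gain over ClassicAug comes out at roughly 5.2 %, which agrees with the reported figure up to rounding of the published means.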

Beyond numeric improvements, the authors demonstrate the flexibility of Mask2Lesion. Synthetic lesions are generated not only from the original ISIC masks but also from simple geometric shapes, hand‑drawn masks, and masks deformed via elastic or PCA‑based transformations. In every case, the generated images respect the mask boundaries, confirming that the model can be driven by arbitrary mask designs. This property opens the door to controlled data synthesis, such as creating rare lesion shapes or augmenting under‑represented classes.
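As an illustration of the "simple geometric shapes" case, a binary elliptical mask of the kind that could be fed to a trained mask-to-image generator can be built as follows. This is a minimal sketch with hypothetical names and parameters. The 128 × 128 size matches the training resolution mentioned earlier, but the ellipse geometry is arbitrary.

```python
def ellipse_mask(height, width, center_row, center_col, radius_row, radius_col):
    """Return a height x width binary mask (nested lists) with 1 inside the ellipse."""
    mask = []
    for r in range(height):
        row = []
        for c in range(width):
            # Standard ellipse equation; points on the boundary count as inside.
            d = ((r - center_row) / radius_row) ** 2 + ((c - center_col) / radius_col) ** 2
            row.append(1 if d <= 1.0 else 0)
        mask.append(row)
    return mask

# A centered ellipse on a 128 x 128 canvas (geometry chosen arbitrarily).
mask = ellipse_mask(128, 128, 64, 64, 30, 45)
lesion_fraction = sum(map(sum, mask)) / (128 * 128)
print(f"lesion covers {lesion_fraction:.1%} of the image")
```

Varying the center and radii, or applying the elastic and PCA-based deformations mentioned above, would yield a family of masks from which new image–mask pairs could be synthesized.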

The study acknowledges several limitations. The training resolution (128 × 128) is modest compared to clinical imaging standards, potentially limiting fine‑grained texture fidelity. The quality of generated images depends heavily on the quality of input masks; noisy or incomplete masks could degrade synthesis. Moreover, the clinical realism of the synthetic lesions is assessed only indirectly through segmentation performance, without direct validation by dermatologists.

Future work is outlined along four axes: (1) scaling the model to higher resolutions and incorporating full‑color dermoscopic channels; (2) extending the mask‑to‑image paradigm to 3D modalities such as CT and MRI; (3) conducting systematic expert reviews to quantify clinical plausibility; and (4) integrating automated mask generation pipelines to further reduce manual effort.

In summary, Mask2Lesion provides a practical, mask‑driven GAN framework that can substantially enrich skin lesion training datasets without additional labeling. By demonstrating measurable gains in segmentation accuracy and showcasing the method’s adaptability to diverse mask inputs, the paper positions mask‑constrained image synthesis as a valuable tool for medical image analysis, with promising extensions to broader imaging domains.
