A Highly Efficient Distributed Deep Learning System For Automatic Speech Recognition

Modern Automatic Speech Recognition (ASR) systems rely on distributed deep learning to for quick training completion. To enable efficient distributed training, it is imperative that the training algorithms can converge with a large mini-batch size. I…

Authors: Wei Zhang, Xiaodong Cui, Ulrich Finkler

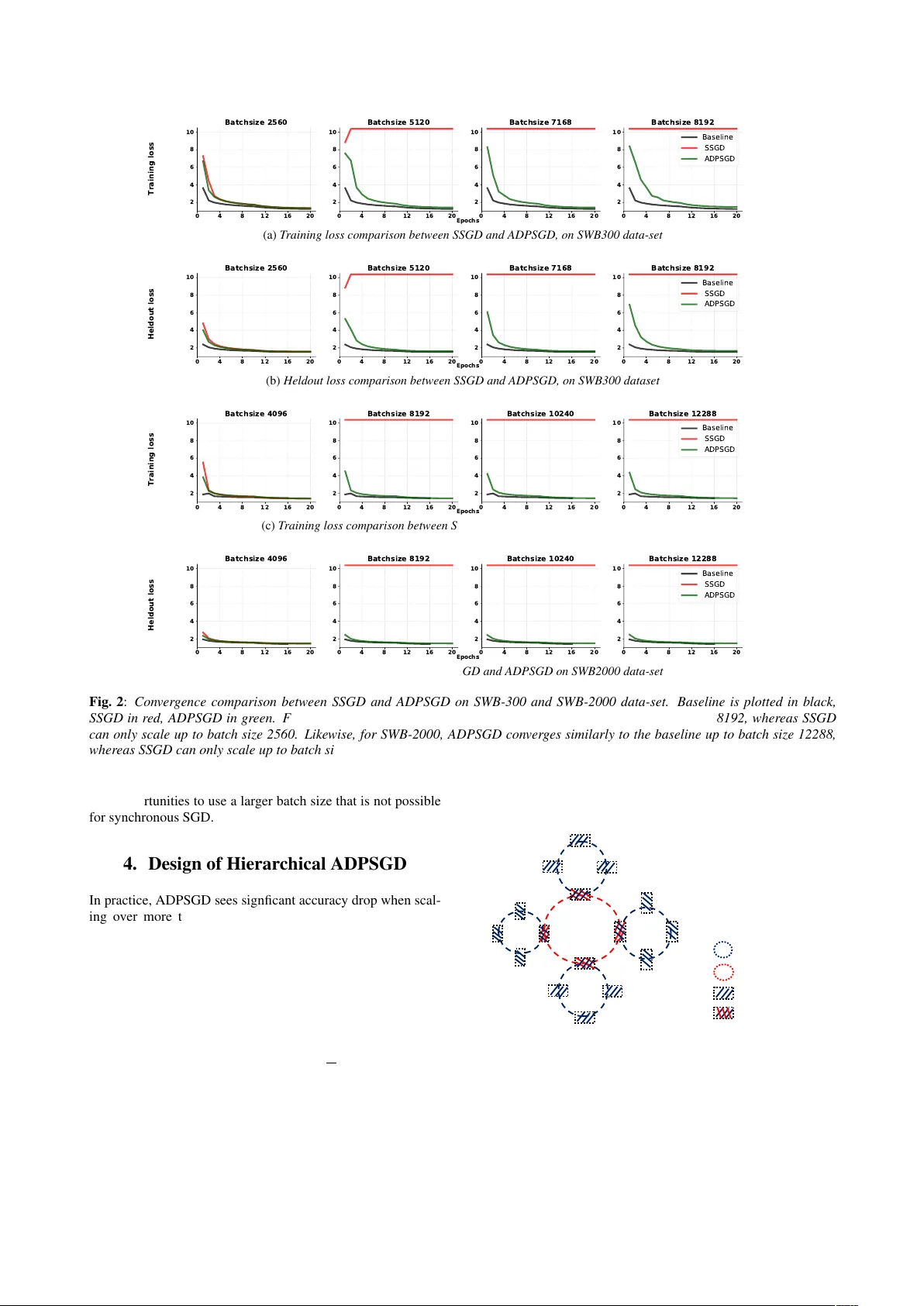

A Highly Efficient Distrib uted Deep Learning System F or A utomatic Speech Recognition W ei Zhang, Xiaodong Cui, Ulric h F inkler , Geor ge Saon, Abdullah Kayi, Alper Buyuktosuno glu, Brian Kingsbury , David K ung, Mic hael Picheny IBM Research { weiz,cuix,ufinkler,gsaon,kayi,alperb,bedk,kung,picheny } @us.ibm.com Abstract Modern Automatic Speech Recognition (ASR) systems rely on distributed deep learning to for quick training completion. T o enable efficient distrib uted training, it is imperati ve that the training algorithms can con ver ge with a large mini-batch size. In this work, we discovered that Asynchronous Decentralized Parallel Stochastic Gradient Descent (ADPSGD) can work with much larger batch size than commonly used Synchronous SGD (SSGD) algorithm. On commonly used public SWB-300 and SWB-2000 ASR datasets, ADPSGD can con ver ge with a batch size 3X as large as the one used in SSGD, thus enable training at a much larger scale. Further , we proposed a Hierarchical- ADPSGD (H-ADPSGD) system in which learners on the same computing node construct a super learner via a fast allreduce implementation, and super learners deploy ADPSGD algorithm among themselves. On a 64 Nvidia V100 GPU cluster con- nected via a 100Gb/s Ethernet network, our system is able to train SWB-2000 to reach a 7.6% WER on the Hub5-2000 Switchboard (SWB) test-set and a 13.2% WER on the Call- home (CH) test-set in 5.2 hours. T o the best of our knowledge, this is the fastest ASR training system that attains this lev el of model accuracy for SWB-2000 task to be ev er reported in the literature. Index T erms : speech recognition, distrib uted systems, deep learning 1. Introduction Deep Learning (DL) driv es the current Automatic Speech Recognition (ASR) systems and has yielded models of unprece- dented accuracy [1, 2]. Stochastic Gradients Descent (SGD) and its v ariants are the de f acto learning algorithms deployed in DL training systems. Distrib uted Deep Learning (DDL), which deploys dif ferent v ariants of parallel SGD algorithms in its core, is known today as the most effecti ve method to enable fast DL model training. Several DDL algorithms exist – most notably , Synchronous SGD (SSGD) [3], parameter-server based Asyn- chronous SGD (ASGD) [4], and Asynchronous Decentralized Parallel SGD (ADPSGD) [5]. Among them, ASGD has lost its fa vor among practitioners due to its poor conv ergence behav- ior [6, 3, 7, 8]. In our previous work [9], we systematically studied the application of state-of-the-art SSGD (SSGD) and ADPSGD to challenging SWB-2000 tasks. T o date, it is com- monly believed that ADPSGD and SSGD con ver ge with sim- ilar batch sizes [5]. In this paper, we find ADPSGD can po- tentially smoothen objecti ve function landscape and work with much larger batch sizes than SSGD. As a result of this find- ing, we made the following contributions in this paper: (1) W e systematically studied the con ver gence behavior of SSGD and ADPSGD on two public ASR datasets – SWB-300 and SWB- 2000– with a state-of-the-art LSTM model and confirmed that ADPSGD enables distributed training on a much larger scale. T o the best of our knowledge, this is the first demonstration conducted on large-scale public datasets that an asynchronous system can scale with a larger batch size than SSGD. (2) T o reduce system staleness, improv e system scalability w .r .t num- ber of learners and enable better communication and computa- tion efficienc y , we implemented a Hierarchical-ADPSGD (H- ADPSGD) system in which learners on the same computing node construct a “super-learner” via a fast allreduce 1 imple- mentation and the super-learners communicate with each other in the ADPSGD fashion. Our system shortens the SWB-2000 training from over 8 days on one V100 gpu to 5.2 hours on 64 gpus (i.e., 40X speedup), and the resulting model reaches 7.6% WER on SWB and 13.2% on CH. T o the best of our knowledge, this is the fastest system for state-of-the-art SWB-2000 model training ev er reported in the literature. 2. Background and Pr oblem Formulation Consider the following stochastic optimization problem min θ F ( θ ) = E ξ [ f ( θ ; ξ )] (1) where F is the objectiv e function, θ is the parameters to be op- timized (it is the weights of networks for DL) and ξ ∼ p ( x ) is a random variable on the training data x obeying distribution p ( x ) . Supposing that there are n training samples and ξ as- sumes a uniform distribution, ξ ∼ Uniform { 1 , · · · , n } , we hav e min θ F ( θ ) = E ξ [ f ( θ ; ξ )] = 1 n n X i =1 f i ( θ ) (2) where f i ( θ ) is evaluated at training sample x i . In mini-batch based SGD, at each iteration k , we hav e F ( θ k +1 ) = F ( θ k ) − α k g ( θ k , ξ k ) (3) where α k is the learning rate and g ( θ k , ξ k ) , 1 m m X s =1 ∇ f ( θ k , ξ k,s ) (4) with m being the size of the mini-batch. DL problem is an optimization problem described abov e. DDL is the distributed computing paradigm that solves DL problems. At the dawn of DDL research, the Parameter Server (PS) architecture [4] was wildy popular . Figure 1(a) illustrates a PS design: each learner calculates gradients and sends them to the PS, before the PS sends the updated weights back to 1 Allreduce is a broacast operation followed by a reduction operation (e.g., summation). (a) P arameter Server (b) Decen tralized Ar chitec tur e Fig. 1 : A centralized distrib uted learning ar chitectur e (aka P arameter-Server ar chitecture) and a decentralized distributed learning ar chitecture the learners. The core of PS architecture is the ASGD algo- rithm that allows each learner to calculate gradients and asyn- chronously push/pull the gradients/weights to/from the PS. The weight update rule in ASGD is giv en in Equation (5): F ( θ k +1 ) = F ( θ k ) − α k g ( θ ζ i k , ξ ζ i k ) (5) where ζ i ∈ { 1 , · · · , λ } is an indicator of the learner which made the model update at iteration k . Soon researchers real- ized that such design led to sub-par models that can be trained fast but accuracy-wise significantly lag behind single-learner baseline because of the large system staleness issue [10, 6, 8]. SSGD regained its popularity mainly because the synchroniza- tion problems exacerbated by the cheap commodity systems could be addressed by decades of High Performance Comput- ing (HPC) research. In SSGD, the weight update rule is gi ven in Equation (6) : each learner calculates gradients and receives updated weights in lockstep with the others. 2 SSGD has e xactly the same conv ergence rate as the single learner baseline while enjoying reasonable speedup. F ( θ k +1 ) = F ( θ k ) − α k " 1 λ λ X i =1 g ( θ i k , ξ i k ) # (6) The summation and the following broadcast in Equation (6) is known as the “ All-Reduce” operation in the HPC, which is a well-studied operation. When the message to be “ AllReduced” is large, as in the DL case, an optimal algorithm exists that max- imizes the communication bandwidth utilization [11]. Many in- carnations of this algorithm exist, such as [12, 13, 14]. Like an y synchronous algorithm, SSGD is subject to the straggler pr ob- lem , which means a slo w learner slows do wn the entire training system. W ildfire [15] is the first decentralized training system that has been demonstrated to work on modern deep learning tasks. The follow-up work in [16, 5] rigorously proved the decen- tralized parallel SGD algorithm in both synchronous and asyn- chronous forms can maintain the SSGD con vergence rate while resolving the straggler problem. Figure 1(b) illustrates a decen- tralized parallel SGD system , where each learner i calculates the gradients, updates its weights, and av erages its weights with its neighbor j in a ring topology . DPSGD/ADPSGD weights update rule is defined in Equation (7). Θ k +1 = Θ k T k − α k g (Θ k , Ξ k ) (7) 2 Throughout this paper , we use λ to denote the number of learners. where the columns of Θ k = [ θ 1 k , · · · , θ λ k ] are the weights of each learner; the columns of Ξ k = [ ξ 1 k , · · · , ξ λ k ] are ran- dom variables associated with batch samples; the columns of g (Θ k , Ξ k ) = [ g ( θ 1 k , ξ 1 k ) , · · · , g ( θ λ k , ξ λ k )] are the gradients of each learner , and T k is a symmetric stochastic matrix (therefore doubly stochastic) with two 0 . 5 s at neighboring positions for each row and column and 0s everywhere else. This amounts to performing model av eraging with one learner’ s left or right neighbor in the ring for each mini-batch weights update. The key difference between DPSGD and ADPSGD is that ADPSGD allows overlapping of gradients calculation and weights av erag- ing and removes the global barrier in DPSGD that forces every learner to synchronize at the end of each minibatch training. Naturally , ADPSGD runs much faster than DPSGD. 3. Scalability of ADPSGD It is well known that when batch-size is increased, it is difficult for a DDL system to maintain model accuracy [3, 8]. In our previous work [9], we designed a principled method to increase batch size while maintaining model accuracy with respect to training epochs for both SSGD and ADPSGD on ASR tasks, up to batch size 2560. The key technique is as follo ws: (1) learning rate linear scaling by the ratio between effecti ve batch size and baseline batch size. (2) linearly warmup the learning rate for the first 10 epochs before annealing at a rate 1 √ (2) per epoch for the remaining epochs. For example, if the baseline batch size is 256 and learning rate is 0.1 and assume now the total batch size is 2560, then for the first 10 epochs, the learning rate increases by 0.1 every epoch before reaching 1.0 at the 10th epoch. W e demonstrated that SSGD and ADPSGD can both scale up to the batch size of 2560 on ASR tasks in [9]. In this paper , we adopt the same hyper-parameter setup and study the conv ergence be- havior for SSGD and ADPSGD under larger batch sizes. Fig- ure 2a and Figure 2b plot the training loss and held-out loss w .r .t epochs for SSGD (in red) and ADPSGD (in green) under differ - ent batch sizes on SWB-300. The single-learner baseline with batch size 256 is plotted in black. ADPSGD can scale with a batch size 3x larger than SSGD (i.e., 8192 vs 2560). Figure 2c and Figure 2d show a similar trend for SWB-2000– SSGD only scales up to batch size 4096 whereas ADPSGD scales up to batch size 12288. T o mak e SSGD con verge with the same batch size as ADPSGD, one may use a much less aggressive learning rate (e.g. 4x smalle learning rate), which will take many more epochs to con verge to the same le vel of accurac y . W e speculate ADPSGD can scale with a larger batch size than SSGD for the following reason: ADPSGD is carried out with local gradient computation and update on each learner (second term on the RHS of Equation (7)) and global model av- eraging (first term of the RHS of Equation (7)) across the ring. The locally updated information is transferred to other learners in the ring by performing model av eraging through neighbor- ing learners. If we halt the local updates and only conduct the model av eraging through the doubly stochastic matrix T k , the system will con verge to the equilibrium where all learners share the identical weights, which is 1 n P n i =1 θ i k . After the con ver - gence, each learner pulls the weights, computes the gradients, updates the model and conducts another round of model av- eraging to con vergence. This amounts to synchronous SGD. Therefore, ADPSGD has synchronous SGD as its special case. Howe ver , in ADPSGD, local updates and model averaging run simultaneously . It is speculated that the local updates are car- ried out on 1 n P n i =1 θ i k perturbed by a noise term. This may 0 4 8 12 16 20 2 4 6 8 10 Batchsize 2560 0 4 8 12 16 20 2 4 6 8 10 Batchsize 5120 0 4 8 12 16 20 2 4 6 8 10 Batchsize 7168 0 4 8 12 16 20 2 4 6 8 10 Batchsize 8192 Baseline SSGD ADPSGD Epochs Training loss (a) T raining loss comparison between SSGD and ADPSGD, on SWB300 data-set 0 4 8 12 16 20 2 4 6 8 10 Batchsize 2560 0 4 8 12 16 20 2 4 6 8 10 Batchsize 5120 0 4 8 12 16 20 2 4 6 8 10 Batchsize 7168 0 4 8 12 16 20 2 4 6 8 10 Batchsize 8192 Baseline SSGD ADPSGD Epochs Heldout loss (b) Heldout loss comparison between SSGD and ADPSGD, on SWB300 dataset 0 4 8 12 16 20 2 4 6 8 10 Batchsize 4096 0 4 8 12 16 20 2 4 6 8 10 Batchsize 8192 0 4 8 12 16 20 2 4 6 8 10 Batchsize 10240 0 4 8 12 16 20 2 4 6 8 10 Batchsize 12288 Baseline SSGD ADPSGD Epochs Training loss (c) T raining loss comparison between SSGD and ADPSGD on SWB2000 data-set 0 4 8 12 16 20 2 4 6 8 10 Batchsize 4096 0 4 8 12 16 20 2 4 6 8 10 Batchsize 8192 0 4 8 12 16 20 2 4 6 8 10 Batchsize 10240 0 4 8 12 16 20 2 4 6 8 10 Batchsize 12288 Baseline SSGD ADPSGD Epochs Heldout loss (d) Heldout loss comparison between SSGD and ADPSGD on SWB2000 data-set Fig. 2 : Conver gence comparison between SSGD and ADPSGD on SWB-300 and SWB-2000 data-set. Baseline is plotted in black, SSGD in r ed, ADPSGD in green. F or SWB-300, ADPSGD con verg es similarly to the baseline up to batch size 8192, whereas SSGD can only scale up to batch size 2560. Likewise , for SWB-2000, ADPSGD con ver ges similarly to the baseline up to batch size 12288, wher eas SSGD can only scale up to batch size 4096. F or all SSGD and ADPSGD experiments, we use 16 learners. giv e opportunities to use a larger batch size that is not possible for synchronous SGD. 4. Design of Hierarchical ADPSGD In practice, ADPSGD sees signficant accuracy drop when scal- ing over more than 16 learners on ASR tasks [5, 9] due to system staleness issue. W e built H-ADPSGD, which is a hi- erarchical system as depicted in Figure 3, to address the stal- eness issue. N learners constructs a super-learner , which ap- plies the weight update rule as in Equation (6). The staleness across learners in this super-learner is ef fectiv ely 0. Each super - learner then participates in the ADPSGD ring and update its weights using Equation (7). Further, assume there are a total of λ learners; then the conver gence behavior of this λ -learner H- ADPSGD system is equivalent to that of a λ N -learner ADPSGD with the same total batch size . This design ensures H-ADPSGD scales with N more learners than its ADPSGD counterpart, while maintaining the same le vel of model accuracy . Moreover , H-ADPSGD improv es communication ef ficiency and reduces main memory traffic and CPU pressure across super -learners. Sync- Ring Learners in local Sync- Ring ADPSGD - Ring GPUs in ADPSGD - Ring Fig. 3 : System ar chitectur e for H-ADPSGD. Learners, on the same computing node, constitute a super-learner via a synchr onous ring (blue). The super-learners constructs the ADPSGD ring (r ed). BS256 BS4096 BS8192 BS10240 BS12288 Baseline SSGD (H-)ADPSGD SSGD (H-)ADPSGD SSGD (H-)ADPSGD SSGD (H-)ADPSGD SWB 7.5 7.6 7.5 – 7.6 – 7.5 – 7.8 CH 13.0 13.1 13.2 – 13.2 – 13.5 – 13.6 T able 1 : WER comparison between baseline, SSGD, and ADPSGD for differ ent batch sizes. –: not con verged, BS: batch size. (H- )ADPSGD scales with 3X lar ger batch size than SSGD. ADPSGD scales up to 16 GPUs, H-ADPSGD scales up to 64 gpus. 5. Methodology 5.1. Softwar e and Hardwar e PyT orch 0.4.1 is our DL framew ork. Our communication li- brary is built with CUD A 9.2 compiler, the CUD A-aware Open- MPI 3.1.1, and g++ 4.8.5 compiler . W e run our experiments on a 64-GPU 8-server cluster . Each server has 2 sockets and 9 cores per socket. Each core is an Intel Xeon E5-2697 2.3GHz processor . Each serv er is equipped with 1TB main memory and 8 V100 GPUs. Between servers are 100Gbit/s Ethernet connec- tions. GPUs and CPUs are connected via PCIe Gen3 bus, which has a 16GB/s peak bandwidth in each direction per socket. 5.2. DL Models and Dataset The acoustic model is an LSTM with 6 bi-directional layers. Each layer contains 1,024 cells (512 cells in each direction). On top of the LSTM layers, there is a linear projection layer with 256 hidden units, followed by a softmax output layer with 32,000 units corresponding to context-dependent HMM states. The LSTM is unrolled with 21 frames and trained with non- ov erlapping feature subsequences of that length. The feature input is a fusion of FMLLR (40-dim), i-V ector (100-dim), and logmel with its delta and double delta (40-dim × 3). This model contains ov er 43 million parameters and is about 165MB large. The language model (LM) is built using publicly available training data, including Switchboard, Fisher , Gigaword, and Broadcast News, and Con versations. Its vocabulary has 85K words, and it has 36M 4-grams. The two training datasets used are SWB-300, which contains over 300 hours of training data and has a capacity of 30GB, and SWB-2000, which contains ov er 2000 hours of training data and has a capacity of 216GB. The two training data-sets are stored in HDF5 data format. The test set is the Hub5 2000 ev aluation set, composed of two parts: 2.1 hours of switchboard (SWB) data and 1.6 hours of call- home (CH) data. 6. Experimental Results 6.1. Con vergence Results T able 1 records the WER of SWB-2000 models trained by SSGD and ADPSGD under different batch sizes. Single-gpu training baseline is also given as a reference. ADPSGD can con verge with a batch size 3x larg er than that of SSGD, while maintaining model accuracy . 6.2. Speedup Figure 4 shows the H-ADPSGD speedup. Using a batch size 128 per gpu, our system achieves 40X speedup over 64 gpus. It takes 203 hours to finish SWB-2000 training in 16 epochs on one V100 GPU. It takes H-ADPSGD 5.2 hours to train for 16 epochs to r each WER 7.6% on SWB and WER 13.2% on CH, on 64 gpus. 0 8 16 24 32 40 48 56 64 # of GPUs 0 10 20 30 40 50 60 Speedup Linear speedup H-ADPSGD Fig. 4 : Speedup up to 64 gpus. Batch-size per gpu is 128. 7. Conclusion and Future W ork In this work, we made the following contributions: (1) W e dis- cov ered that ADPSGD can scale with much larger batch sizes than the commonly used SSGD algorithm for ASR tasks. T o the best of our knowledge, this is the first asynchronous sys- tem that scales with larger batch sizes than a synchronous sys- tem for public large-scale DL tasks. (2) T o make ADPSGD more scalable w .r .t number of learners, we designed a ne w al- gorithm H-ADPSGD, which trains a SWB-2000 model to reach WER 7.6% on SWB and WER 13.2% on CH in 5.2 hours, on 64 Nvidia V100 GPUs. T o the best of our knowledge, this is the fastest system that trains SWB-2000 to this lev el of accu- racy . W e plan to in vestigating the theoretical justification for why ADPSGD scales with a lar ger batch size than SSGD in our future work. 8. Related W ork DDL systems enable many AI applications with unprecedented accuracy , such as speech recognition [7, 17], computer vision [3], language modeling [18], and machine translation [19]. Dur- ing the early days of DDL system research, researchers could only rely on loosely-coupled inexpensiv e computing systems and adopted PS-based ASGD algorithm [4]. ASGD has infe- rior model performance when learners are many , and SSGD has now become a mainstream DL training method [6, 3, 7, 8, 20]. Consequently , these systems encounter the classical straggler problem in distributed system design. Recently , researchers proposed ADPSGD, which is proved to ha ve the same con ver - gence rate as SSGD while completely eliminating the straggler problem [5]. Previous work [9] applied ADPSGD to the chal- lenging SWB2000-LSTM task to achieve state-of-the-art model accuracy in a record time (11.5 hours). In this work, we discov- ered that ADPSGD can scale with much larger batch sizes than SSGD and designed a hierarchical ADPSGD training system that further improves training efficienc y , with the resulting sys- tem improving training speed o ver [9] by o ver 2X. 9. References [1] G. Saon, G. Kurata, T . Sercu, K. Audhkhasi, S. Thomas, D. Dim- itriadis, X. Cui, B. Ramabhadran, M. Picheny , L.-L. Lim, B. Roomi, and P . Hall, “English con versational telephone speech recognition by humans and machines, ” in Interspeech , 2017. [2] W . Xiong, J. Droppo, X. Huang, F . Seide, M. L. Seltzer , A. Stol- cke, D. Y u, and G. Zweig, “T oward human parity in conversa- tional speech recognition, ” IEEE/A CM T ransactions on Audio, Speech, and Language Pr ocessing , vol. 25, no. 12, pp. 2410– 2423, Dec 2017. [3] P . Goyal, P . Doll ´ ar , R. B. Girshick, P . Noordhuis, L. W esolowski, A. Kyrola, A. Tulloch, Y . Jia, and K. He, “ Accurate, large minibatch SGD: training imagenet in 1 hour, ” CoRR , vol. abs/1706.02677, 2017. [Online]. A vailable: http://arxiv .org/abs/ 1706.02677 [4] J. Dean, G. S. Corrado, R. Monga, K. Chen, M. Devin, Q. V . Le, M. Z. Mao, M. Ranzato, A. Senior, P . T ucker , K. Y ang, and A. Y . Ng, “Large scale distrib uted deep networks, ” in NIPS , 2012. [5] X. Lian, W . Zhang, C. Zhang, and J. Liu, “ Asynchronous decen- tralized parallel stochastic gradient descent, ” in ICML , 2018. [6] J. Chen, R. Monga, S. Bengio, and R. Jozefowicz, “Revisiting distributed synchronous sgd, ” in International Confer ence on Learning Repr esentations W orkshop T rack , 2016. [Online]. A vailable: https://arxiv .org/abs/1604.00981 [7] D. Amodei(et.al.), “Deep speech 2 : End-to-end speech recognition in english and mandarin, ” in ICML’16 . PMLR, 2016, pp. 173–182. [Online]. A vailable: http://proceedings.mlr . press/v48/amodei16.html [8] W . Zhang, S. Gupta, and F . W ang, “Model accuracy and runtime tradeoff in distrib uted deep learning: A systematic study , ” in IEEE International Confer ence on Data Mining , 2016. [9] W . Zhang, X. Cui, U. Finkler, B. Kingsbury , G. Saon, D. Kung, and M. Picheny , “Distributed deep learning strategies for auto- matic speech recognition, ” in ICASSP’2019 , May 2019. [10] W . Zhang, S. Gupta, X. Lian, and J. Liu, “Staleness-aware async- sgd for distributed deep learning, ” in Pr oceedings of the T wenty- F ifth International Joint Confer ence on Artificial Intelligence, IJ- CAI 2016, New Y ork, NY , USA, 9-15 July 2016 , 2016, pp. 2350– 2356. [11] P . Patarasuk and X. Y uan, “Bandwidth optimal all-reduce algo- rithms for clusters of workstations, ” J. P arallel Distrib . Comput. , vol. 69, pp. 117–124, 2009. [12] Baidu, Effectively Scaling Deep Learning F rameworks , available at https://github .com/baidu- research/baidu- allreduce. [13] Nvidia, NCCL: Optimized primitives for collective multi-GPU communication , available at https://github.com/NVIDIA/nccl. [Online]. A vailable: https://github.com/NVIDIA/nccl [14] M. Cho, U. Finkler , S. Kumar , D. S. Kung, V . Saxena, and D. Sreedhar , “Powerai DDL, ” CoRR , vol. abs/1708.02188, 2017. [Online]. A vailable: http://arxiv .org/abs/1708.02188 [15] R. Nair and S. Gupta, “W ildfire: Approximate synchronization of parameters in distributed deep learning, ” IBM Journal of Resear ch and Development , v ol. 61, no. 4/5, pp. 7:1–7:9, July 2017. [16] X. Lian, C. Zhang, H. Zhang, C.-J. Hsieh, W . Zhang, and J. Liu, “Can decentralized algorithms outperform centralized al- gorithms? A case study for decentralized parallel stochastic gra- dient descent, ” in NIPS , 2017. [17] K. Chen and Q. Huo, “Scalable training of deep learning machines by incremental block training with intra-block parallel optimiza- tion and blockwise model-update filtering, ” in ICASSP’2016 , March 2016. [18] R. Puri, R. Kirby , N. Y akov enko, and B. Catanzaro, “Large scale language modeling: Conv erging on 40gb of text in four hours, ” CoRR , vol. abs/1808.01371, 2018. [19] M. Ott, S. Edunov , D. Grangier, and M. Auli, “Scaling neural machine translation, ” EMNLP 2018 THIRD CONFERENCE ON MACHINE TRANSLA TION , vol. abs/1806.00187, 2018. [20] W . Zhang, M. Feng, Y . Zheng, Y . Ren, Y . W ang, J. Liu, P . Liu, B. Xiang, L. Zhang, B. Zhou, and F . W ang, “Gadei: On scale-up training as a service for deep learning. ” The IEEE International Conference on Data Mining series(ICDM’2017), 2017.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment