Deep Learning with ConvNET Predicts Imagery Tasks Through EEG

Deep learning with convolutional neural networks (ConvNets) has dramatically improved the learning capabilities of computer vision applications by operating directly on raw data, without any prior feature extraction. Interest is now growing in interpreting and analyzing electroencephalography (EEG) dynamics with ConvNets. Our study focused on ConvNets of different structures, constructed to predict imagined left and right movements on a subject-independent basis from raw EEG data. Results showed that recent advances in the machine learning field, i.e. adaptive moments and batch normalization together with a dropout strategy, improved the ConvNets' predictive ability, outperforming conventional fully-connected neural networks trained on widely used spectral features.

💡 Research Summary

The paper investigates the use of deep convolutional neural networks (ConvNets) for decoding imagined left‑right hand movements from raw electroencephalography (EEG) recordings in a subject‑independent setting. A publicly available EEG motor‑imagery dataset (EEGMMI) comprising 64 channels sampled at 160 Hz from 109 participants was employed. Each participant performed a task in which a visual cue appeared on either the left or right side of a screen, prompting the subject to imagine opening and closing the corresponding hand. For each subject, 45 labeled segments (15 left, 15 right, repeated three times) were extracted, yielding a sizable multi‑subject corpus that far exceeds the scale of most prior works (which typically involve ≤20 subjects).
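A subject-independent evaluation means that no participant contributes data to both the training and the test partition. The sketch below illustrates one way to split by subject ID and to check the corpus size implied by the figures above (109 participants, 45 segments each). The function name `subject_wise_split`, the 20 % test fraction, and the random seed are illustrative assumptions, not details taken from the paper.

```python
import random

N_SUBJECTS = 109           # from the dataset description above
SEGMENTS_PER_SUBJECT = 45  # from the dataset description above


def subject_wise_split(n_subjects, test_fraction=0.2, seed=0):
    """Split subject IDs so that no subject appears in both partitions.

    test_fraction and seed are illustrative assumptions, not paper values.
    """
    subject_ids = list(range(1, n_subjects + 1))
    rng = random.Random(seed)
    rng.shuffle(subject_ids)
    n_test = round(n_subjects * test_fraction)
    test_ids = sorted(subject_ids[:n_test])
    train_ids = sorted(subject_ids[n_test:])
    return train_ids, test_ids


train_ids, test_ids = subject_wise_split(N_SUBJECTS)
total_segments = N_SUBJECTS * SEGMENTS_PER_SUBJECT

print("total labeled segments:", total_segments)
print("training subjects:", len(train_ids), "test subjects:", len(test_ids))
```

Splitting at the subject level, rather than shuffling individual segments, is what keeps a model from being scored on people it has already seen.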

Preprocessing was deliberately minimal: a low‑pass Butterworth filter (order 3) removed frequencies above 30 Hz, leaving the raw temporal dynamics largely intact. This design choice was intended to let the ConvNet discover spatial‑temporal patterns directly from the data, without the bias introduced by extensive feature engineering.
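To give a feel for how gently a third-order Butterworth filter rolls off, the sketch below evaluates the textbook analog magnitude response, assuming the filter attenuates content above a 30 Hz cutoff, as the description of removing frequencies above 30 Hz implies. The function name `butterworth_lowpass_gain` is hypothetical, and a digital implementation would deviate slightly from this analog formula.

```python
import math


def butterworth_lowpass_gain(freq_hz, cutoff_hz=30.0, order=3):
    """Magnitude response |H(f)| of an analog Butterworth low-pass filter.

    |H(f)| = 1 / sqrt(1 + (f / fc)^(2n)), with cutoff fc and order n.
    """
    return 1.0 / math.sqrt(1.0 + (freq_hz / cutoff_hz) ** (2 * order))


# Alpha-band content (around 10 Hz) passes almost untouched, the cutoff
# sits at the -3 dB point, and 60 Hz is strongly attenuated.
for f in (10.0, 30.0, 60.0):
    print(f"{f:5.1f} Hz -> gain {butterworth_lowpass_gain(f):.4f}")
```

Under these assumptions the 8 to 12 Hz band used by the spectral baseline is essentially preserved, while the attenuation above the cutoff is gradual rather than abrupt.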

A conventional baseline was constructed for comparison. Spectral analysis using the Welch method was applied to each trial: the EEG was segmented into overlapping 0.15 s Hann‑windowed epochs (50 % overlap) and periodograms were computed with a resolution of 1.67 Hz. Power in the alpha band (8‑12 Hz) served as the feature vector for a multilayer perceptron (MLP) with two hidden layers (100 and 75 neurons) and tanh activations. Training used a batch size of 1 and a fixed learning rate of 0.01; it ran for at most 100 epochs and stopped early when validation loss failed to improve for 15 consecutive updates. The MLP performed poorly, essentially failing to discriminate the two imagined directions.
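The stopping rule described for the baseline (a cap of 100 epochs, or 15 consecutive validation checks without improvement) can be sketched as follows. This is a minimal illustration rather than the authors' code: the function name `train_with_early_stopping` is hypothetical, each list entry is treated as one validation check, and the loss values are made up.

```python
def train_with_early_stopping(val_losses, max_epochs=100, patience=15):
    """Return (stop_epoch, best_loss) for a sequence of validation losses.

    Stops when the loss has not improved for `patience` consecutive checks
    or when `max_epochs` checks have been consumed, whichever comes first.
    """
    best_loss = float("inf")
    checks_without_improvement = 0
    epoch = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss:
            best_loss = loss
            checks_without_improvement = 0
        else:
            checks_without_improvement += 1
        if checks_without_improvement >= patience or epoch >= max_epochs:
            break
    return epoch, best_loss


# HYPOTHETICAL loss curve: improves for 5 checks, then plateaus.
plateau_losses = [1.0, 0.9, 0.8, 0.7, 0.6] + [0.65] * 200
stop_epoch, best_loss = train_with_early_stopping(plateau_losses)
print("stopped at check", stop_epoch, "with best loss", best_loss)
```

In a real training loop the best weights would usually be saved whenever the loss improves, so that the model restored at the end is the one from the best check rather than the last one.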

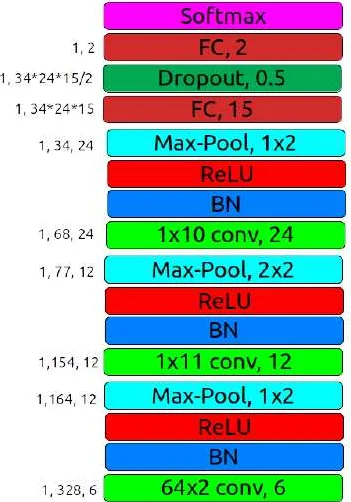

The core contribution is a deep ConvNet architecture specifically adapted for EEG. The network consists of three convolution‑max‑pooling blocks followed by two fully‑connected layers and a dropout layer (p = 0.5). The first convolution is 2‑D, preserving the spatial layout of the 64 electrodes; the subsequent two convolutions are conventional 1‑D filters. After each convolution, batch normalization and ReLU activation are applied, stabilizing training and mitigating internal covariate shift. Max‑pooling is performed without overlap, progressively reducing temporal resolution. The final dense layers map the learned representations to a binary output.
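Because max‑pooling is applied without overlap, the temporal axis shrinks at every block. The sketch below tracks that shrinkage through three convolution and pooling stages. The kernel sizes, pool sizes, and the 640-sample trial length (4 s at 160 Hz) are hypothetical values chosen only for illustration; the summary above does not state them.

```python
def conv_out_len(length, kernel, stride=1):
    """Output length of an unpadded ('valid') 1-D convolution."""
    return (length - kernel) // stride + 1


def pool_out_len(length, pool):
    """Output length of non-overlapping max-pooling (stride equals pool size)."""
    return length // pool


# ASSUMPTIONS (not from the paper): trial length and per-block sizes.
N_SAMPLES = 640                      # hypothetical: 4 s at 160 Hz
BLOCKS = [(5, 2), (5, 2), (5, 2)]    # hypothetical (kernel, pool) per block


def temporal_lengths(n_samples, blocks):
    """Temporal length at the input and after each conv + pool block."""
    lengths = [n_samples]
    for kernel, pool in blocks:
        after_conv = conv_out_len(lengths[-1], kernel)
        lengths.append(pool_out_len(after_conv, pool))
    return lengths


print(temporal_lengths(N_SAMPLES, BLOCKS))
```

The final length, multiplied by the number of feature maps in the last block, determines the input width of the first fully‑connected layer.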

Training employed three optimization schemes with identical hyper‑parameters: Adam (β₁ = 0.9, β₂ = 0.99, ε = 1e‑8), stochastic gradient descent with momentum (SGDM, momentum = 0.9), and RMSprop (ρ = 0.99, ε = 1e‑8). The learning rate started at 0.001 and decayed by a factor of 0.1 every 10 epochs. Weight updates occurred after every 100 mini‑batches. Training ran for at most 100 epochs and stopped early when validation cross‑entropy failed to improve for 15 consecutive checks.
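The learning-rate schedule and the Adam update can be written out in a few lines. The sketch below uses the hyper‑parameters quoted above (β₁ = 0.9, β₂ = 0.99, ε = 1e‑8, initial rate 0.001, decay factor 0.1 every 10 epochs) on a toy scalar objective. The function names and the zero-based epoch indexing are assumptions made for illustration, and the toy objective has nothing to do with EEG.

```python
import math


def step_decay_lr(epoch, base_lr=0.001, factor=0.1, every=10):
    """Learning rate for a 0-indexed epoch under step decay."""
    return base_lr * factor ** (epoch // every)


def adam_step(param, grad, m, v, t, lr, beta1=0.9, beta2=0.99, eps=1e-8):
    """One Adam update for a scalar parameter; t is the 1-indexed step count."""
    m = beta1 * m + (1 - beta1) * grad          # first-moment estimate
    v = beta2 * v + (1 - beta2) * grad * grad   # second-moment estimate
    m_hat = m / (1 - beta1 ** t)                # bias correction
    v_hat = v / (1 - beta2 ** t)
    param = param - lr * m_hat / (math.sqrt(v_hat) + eps)
    return param, m, v


# Toy objective f(x) = x^2 with gradient 2x, purely for illustration.
x, m, v = 1.0, 0.0, 0.0
for t in range(1, 4):
    x, m, v = adam_step(x, 2.0 * x, m, v, t, lr=step_decay_lr(0))
    print(f"step {t}: x = {x:.6f}")

print("learning rate at epochs 0, 10, 25:",
      step_decay_lr(0), step_decay_lr(10), step_decay_lr(25))
```

Because of the bias correction, the very first Adam step has a magnitude close to the learning rate regardless of the gradient scale, which is one reason the method is often less sensitive to input scaling than plain gradient descent.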

Results show that the ConvNet markedly outperforms the spectral‑MLP baseline. Using Adam, the model achieved 79.16 % classification accuracy, surpassing SGDM (slightly lower) and RMSprop (significantly lower). Confusion matrices indicate balanced performance across left and right classes, confirming that the network captures discriminative spatial‑temporal EEG patterns rather than exploiting class imbalance. The superiority of Adam and SGDM over RMSprop suggests that adaptive moment estimation and momentum‑based updates are better suited to the noisy, non‑stationary nature of EEG signals.
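The point about balanced confusion matrices can be made concrete: two classifiers may share the same accuracy while treating the classes very differently. The sketch below computes accuracy and per-class recall from a 2×2 confusion matrix. The counts are entirely hypothetical and are not results from the paper, and the function name `binary_metrics` is an illustrative choice.

```python
def binary_metrics(confusion):
    """Accuracy and per-class recall from confusion[true][predicted].

    Row and column 0 stand for 'left', row and column 1 for 'right'.
    """
    (left_as_left, left_as_right), (right_as_left, right_as_right) = confusion
    total = left_as_left + left_as_right + right_as_left + right_as_right
    accuracy = (left_as_left + right_as_right) / total
    recall_left = left_as_left / (left_as_left + left_as_right)
    recall_right = right_as_right / (right_as_left + right_as_right)
    return accuracy, recall_left, recall_right


# HYPOTHETICAL counts for illustration only, not results from the paper.
balanced = [[40, 10], [10, 40]]
skewed = [[50, 0], [20, 30]]

for name, matrix in (("balanced", balanced), ("skewed", skewed)):
    acc, rec_l, rec_r = binary_metrics(matrix)
    print(f"{name}: accuracy={acc:.2f} recall_left={rec_l:.2f} recall_right={rec_r:.2f}")
```

Both made-up matrices give the same accuracy, but only the first has matching recall for the two classes, which is the property the summary describes as balanced performance.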

The study's significance lies in three aspects: (1) demonstrating that raw EEG can be fed directly into a ConvNet with only minimal filtering, eliminating the need for handcrafted spectral or time‑frequency features; (2) showing that modern deep‑learning techniques (batch normalization, dropout, and adaptive optimizers) substantially boost performance on a challenging subject‑independent BCI task; (3) validating the approach on a large, heterogeneous cohort (109 subjects), thereby providing evidence of generalizability beyond the subject‑specific models that dominate the literature.

The authors conclude that ConvNets are a promising tool for neuroscience and BCI research, capable of learning robust representations from minimally pre‑processed EEG. They suggest future directions such as deeper architectures, incorporation of time‑frequency windows, multimodal fusion (e.g., EEG + MEG), and transfer learning from large‑scale neuroimaging datasets to further improve accuracy and robustness.
