StrokeSave: A Novel, High-Performance Mobile Application for Stroke Diagnosis using Deep Learning and Computer Vision

According to the WHO, cerebrovascular stroke (CS) is the second largest cause of death worldwide. Current diagnosis of CS relies on labor- and cost-intensive neuroimaging techniques that are unsuitable for areas with inadequate access to quality medical facilities. Thus, there is a great need for an efficient diagnostic alternative. StrokeSave is a platform that lets users screen themselves for possible stroke. The mobile app is continuously updated with heart rate, blood pressure, and blood oxygen data from sensors on the patient’s wrist. Once these measurements reach a threshold for possible stroke, the patient takes facial images and vocal recordings to screen for paralysis attributed to stroke. A custom-designed lens attached to the phone’s camera then takes retinal images, which the deep learning model classifies based on the presence of retinopathy before sending a comprehensive diagnosis. The deep learning model, which consists of an RNN trained on 100 slurred-voice audio files, an SVM trained on 410 vascular data points, and a CNN trained on 520 retinopathy images, achieved a holistic accuracy of 95.0 percent when validated on 327 samples. This value exceeds the accuracy of clinical examination, which is around 40 to 89 percent, further demonstrating the vital utility of such a medical device. Through this automated platform, users receive efficient, highly accurate diagnosis without professional medical assistance, revolutionizing medical diagnosis of CS and potentially saving millions of lives.

💡 Research Summary

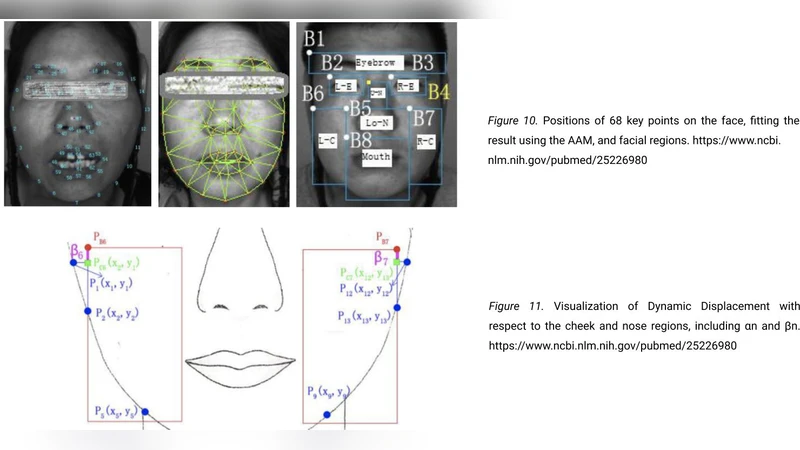

StrokeSave is presented as a mobile‑first, multimodal diagnostic platform aimed at addressing the global burden of cerebrovascular stroke (CS), which the World Health Organization identifies as the second leading cause of death worldwide. The authors argue that conventional stroke diagnosis relies on expensive, infrastructure‑intensive neuroimaging (CT, MRI) that is unavailable in many low‑resource settings, creating an urgent need for a low‑cost, widely accessible alternative. StrokeSave attempts to fill this gap by continuously monitoring three physiological parameters (heart rate, blood pressure, and blood oxygen saturation) via a wrist‑worn sensor. When any of these metrics crosses a pre‑established risk threshold, the app prompts the user to perform a self‑assessment consisting of three data‑capture modalities: a facial photograph, a short voice recording, and a retinal image obtained through a custom lens attached to the phone’s camera.
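
The trigger logic described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the `Limits` values, the `Reading` type, and both function names are hypothetical, and the numeric limits are placeholders rather than thresholds taken from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    # Placeholder alert limits, assumed for illustration only;
    # the paper's actual thresholds are not stated in this summary.
    max_heart_rate_bpm: float = 120.0
    max_systolic_mmhg: float = 180.0
    min_spo2_percent: float = 92.0


@dataclass(frozen=True)
class Reading:
    heart_rate_bpm: float
    systolic_mmhg: float
    spo2_percent: float


def breached_metrics(reading, limits=Limits()):
    """Return the names of the metrics that fall outside their limits."""
    breached = []
    if reading.heart_rate_bpm > limits.max_heart_rate_bpm:
        breached.append("heart_rate")
    if reading.systolic_mmhg > limits.max_systolic_mmhg:
        breached.append("blood_pressure")
    if reading.spo2_percent < limits.min_spo2_percent:
        breached.append("blood_oxygen")
    return breached


def should_prompt_self_assessment(reading, limits=Limits()):
    """Prompt for face, voice, and retina capture if any metric is breached."""
    return len(breached_metrics(reading, limits)) > 0
```

Returning the list of breached metrics, rather than a bare boolean, would let the app tell the user which measurement triggered the prompt.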

The analytical core of the system is a three‑branch machine‑learning ensemble. The voice branch employs a recurrent neural network (RNN) trained on 100 slurred‑speech audio clips to detect dysarthria, a common post‑stroke symptom. The vascular branch uses a support vector machine (SVM) trained on 410 vascular data points derived from the wrist sensor (e.g., systolic/diastolic pressure, pulse‑rate variability, SpO₂ trends) to flag hemodynamic abnormalities. The retinal branch relies on a convolutional neural network (CNN) trained on 520 retinal photographs to identify microvascular changes and retinopathy that have been correlated with cerebrovascular disease. Each branch outputs a probability score; the scores are combined via a weighted average to produce a final “stroke risk” decision.
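
A weighted average of branch probabilities can be written as follows. This is a sketch under stated assumptions: the branch names, the weights, and the 0.5 decision threshold are illustrative values, since the summary does not report the ones the authors used.

```python
def fuse_scores(scores, weights, threshold=0.5):
    """Combine per-branch probabilities with a weighted average.

    scores and weights are dicts keyed by branch name. The weights and
    the threshold are assumptions for illustration, not values from the paper.
    Returns (fused_probability, is_flagged).
    """
    if scores.keys() != weights.keys():
        raise ValueError("scores and weights must cover the same branches")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    fused = sum(scores[name] * weights[name] for name in scores) / total_weight
    return fused, fused >= threshold


# Hypothetical branch outputs and weights.
example_scores = {"voice": 0.8, "vascular": 0.6, "retina": 0.9}
example_weights = {"voice": 1.0, "vascular": 1.0, "retina": 2.0}
fused_probability, is_flagged = fuse_scores(example_scores, example_weights)
```

Dividing by the sum of the weights keeps the fused value in the range 0 to 1 even when the weights are not normalized in advance.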

The authors report a holistic accuracy of 95.0 % on an internal validation set of 327 samples, surpassing the cited clinical examination accuracy range of 40–89 %. They claim that this performance, together with the app’s low cost and ease of deployment, could revolutionize stroke screening, especially in underserved regions.
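
To put the reported figure in context, one can estimate the sampling uncertainty of an accuracy measured on 327 samples. The sketch below computes a Wilson score interval, assuming the validation samples are independent and treating accuracy as a simple binomial proportion; neither assumption is confirmed by the paper, and the function name is illustrative.

```python
import math


def wilson_interval(p_hat, n, z=1.96):
    """Wilson score interval for a proportion p_hat observed on n samples.

    z=1.96 corresponds to an approximate 95% confidence level.
    """
    if n <= 0 or not 0.0 <= p_hat <= 1.0:
        raise ValueError("need n > 0 and 0 <= p_hat <= 1")
    denom = 1.0 + z * z / n
    center = (p_hat + z * z / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z * z / (4 * n * n)) / denom
    return center - half_width, center + half_width


# Reported accuracy (0.95) and validation-set size (327) from the abstract.
lower, upper = wilson_interval(0.95, 327)
print(f"approximate 95% interval: {lower:.3f} to {upper:.3f}")
```

Under these assumptions the interval spans roughly 0.92 to 0.97. It reflects sampling noise only and says nothing about how the model would perform on data from other sites or devices.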

While the concept is compelling, several methodological and practical concerns emerge upon closer inspection. First, the dataset sizes are modest: only 100 voice recordings, 410 sensor feature vectors, and 520 retinal images were used for training. Such limited data raise the specter of overfitting, particularly for the CNN, which typically requires thousands of images to achieve robust generalization. The validation set is drawn from the same institution and likely shares acquisition conditions (lighting, sensor calibration, demographic distribution) with the training data, making it difficult to assess true external validity. A more rigorous evaluation would involve multi‑center, prospective cohorts with diverse ethnic, age, and comorbidity profiles.

Second, the paper provides scant detail on preprocessing and quality control. Wrist‑sensor signals are notoriously noisy, especially when users move or wear the device incorrectly. No description is given of artifact rejection, signal smoothing, or calibration procedures. Similarly, the retinal imaging workflow depends on a custom lens that could introduce optical distortion, focus drift, or illumination heterogeneity. Without explicit calibration or correction algorithms, the CNN may learn spurious patterns tied to device artifacts rather than genuine pathology.
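
As an illustration of the kind of preprocessing the paper leaves undescribed, the sketch below rejects physiologically implausible samples and then applies a rolling median. It is one simple option among many, not the authors' pipeline; the plausibility range and window size are assumed values.

```python
from statistics import median


def reject_artifacts(samples, low, high):
    """Replace samples outside the plausible range [low, high] with None."""
    return [x if low <= x <= high else None for x in samples]


def rolling_median(samples, window=5):
    """Smooth with a centered rolling median, skipping rejected (None) samples."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    smoothed = []
    for i in range(len(samples)):
        neighbours = [x for x in samples[max(0, i - half): i + half + 1] if x is not None]
        # If every sample in the window was rejected, keep the gap explicit.
        smoothed.append(median(neighbours) if neighbours else None)
    return smoothed


# Hypothetical heart-rate trace with two motion artifacts (250 and 0 bpm).
raw_heart_rate = [72, 74, 250, 73, 75, 0, 76]
cleaned = reject_artifacts(raw_heart_rate, low=30, high=220)
smoothed_heart_rate = rolling_median(cleaned, window=3)
```

A median filter is used here because, unlike a moving average, it is not pulled toward isolated spikes that slip past the range check.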

Third, the integration of three heterogeneous modalities raises questions about decision‑fusion strategy. The authors use a simple weighted average, but do not justify the chosen weights, nor do they explore alternative fusion techniques (e.g., hierarchical Bayesian models, attention mechanisms) that could dynamically adjust the influence of each modality based on data quality. Moreover, the relative contribution of each branch to the final accuracy is not quantified, leaving clinicians uncertain about which signals are most informative.
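
One simple way to let data quality influence the fusion, far less elaborate than a hierarchical Bayesian model or an attention mechanism, is to scale each branch's weight by a per-sample quality score. The sketch below assumes such quality scores in the range 0 to 1 are available (for example from a blur or noise check); the function name, the scores, and the weights are all hypothetical.

```python
def quality_weighted_fusion(scores, base_weights, quality):
    """Weighted average in which each weight is scaled by a quality score in [0, 1].

    Returns None when every modality has zero effective weight,
    so the caller can ask the user to repeat the capture.
    """
    if not (scores.keys() == base_weights.keys() == quality.keys()):
        raise ValueError("all inputs must cover the same branches")
    effective = {name: base_weights[name] * quality[name] for name in scores}
    total = sum(effective.values())
    if total == 0:
        return None
    return sum(scores[name] * effective[name] for name in scores) / total


# Hypothetical case: the retinal image is too blurred to trust (quality 0).
branch_scores = {"voice": 0.2, "vascular": 0.6, "retina": 0.9}
equal_weights = {"voice": 1.0, "vascular": 1.0, "retina": 1.0}
sharp = quality_weighted_fusion(branch_scores, equal_weights,
                                {"voice": 1.0, "vascular": 1.0, "retina": 1.0})
blurred = quality_weighted_fusion(branch_scores, equal_weights,
                                  {"voice": 1.0, "vascular": 1.0, "retina": 0.0})
```

In this example the fused score drops once the retinal branch is discounted, which shows how much a single low-quality input can otherwise sway a fixed-weight average.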

Fourth, privacy and regulatory considerations are largely omitted. The system transmits highly sensitive biometric data (voice, facial image, retinal scan) to a cloud backend for inference. Compliance with GDPR, HIPAA, or comparable data‑protection frameworks would require end‑to‑end encryption, anonymization, and explicit user consent mechanisms, none of which are discussed. From a medical‑device standpoint, StrokeSave would likely be classified as a Class II (or higher) device in many jurisdictions, necessitating FDA clearance, CE marking, or equivalent regulatory approval before market release. The paper does not outline a pathway for such approval, nor does it address post‑market surveillance or liability issues.

Fifth, the claim that users can receive a diagnosis “without professional medical assistance” is overstated. While a high‑accuracy screening tool can flag individuals who need urgent care, false positives could overwhelm already strained emergency services, and false negatives could delay life‑saving interventions. The authors should present sensitivity, specificity, positive predictive value, and negative predictive value alongside overall accuracy to allow a balanced risk assessment.
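
The metrics requested above all follow from a 2×2 confusion matrix. The sketch below uses invented counts purely for illustration (they are not results from the paper) to show that high overall accuracy can coexist with a noticeably lower positive predictive value.

```python
def screening_metrics(tp, fp, fn, tn):
    """Compute standard screening metrics from confusion-matrix counts."""
    def ratio(numerator, denominator):
        # Return None instead of dividing by zero when a row or column is empty.
        return numerator / denominator if denominator else None

    return {
        "sensitivity": ratio(tp, tp + fn),  # true-positive rate
        "specificity": ratio(tn, tn + fp),  # true-negative rate
        "ppv": ratio(tp, tp + fp),          # positive predictive value
        "npv": ratio(tn, tn + fn),          # negative predictive value
        "accuracy": ratio(tp + tn, tp + fp + fn + tn),
    }


# Invented counts for a hypothetical cohort of 1000 people, 100 with stroke.
metrics = screening_metrics(tp=90, fp=20, fn=10, tn=880)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With these made-up counts, accuracy is 0.97 while the positive predictive value is about 0.82, meaning nearly one in five positive alerts would be a false alarm. The gap widens further as the condition becomes rarer in the screened population.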

In summary, StrokeSave introduces an innovative combination of wearable sensing, computer vision, and speech analysis to create a low‑cost, portable stroke‑screening solution. Its reported 95 % accuracy is promising, but the evidence base is limited by small, homogeneous datasets, insufficient external validation, and a lack of detailed methodological transparency. Future work should focus on (1) expanding the training and validation cohorts across multiple sites, (2) implementing rigorous signal‑quality checks and device calibration, (3) exploring more sophisticated multimodal fusion strategies, (4) establishing robust data‑privacy safeguards, and (5) navigating the regulatory landscape through formal clinical trials. If these challenges are addressed, StrokeSave could become a valuable adjunct in global stroke prevention, particularly in regions where conventional neuroimaging is inaccessible.