Privacy-Preserving Deep Learning via Weight Transmission

This paper considers the scenario in which multiple data owners wish to apply a machine learning method over the combined dataset of all owners to obtain the best possible learning output, but do not want to share their local datasets owing to privacy concerns. We design systems for the case in which the stochastic gradient descent (SGD) algorithm is used as the machine learning method, because SGD (or one of its variants) is at the heart of recent deep learning techniques over neural networks. Our systems differ from existing systems in the following features: (1) any activation function can be used, meaning that no privacy-preserving-friendly approximation is required; (2) gradients computed by SGD are not shared; the weight parameters are shared instead; and (3) the systems are robust against colluding parties, even in the extreme case that only one honest party exists. We prove that our systems, while privacy-preserving, achieve the same learning accuracy as SGD and hence retain the merit of deep learning with respect to accuracy. Finally, we conduct several experiments using benchmark datasets and show that our systems outperform previous systems in terms of learning accuracy.

💡 Research Summary

The paper addresses the problem of collaborative deep‑learning training when multiple data owners wish to keep their local datasets private. Existing privacy‑preserving approaches either share selected gradients (which still leak information) or rely on heavyweight cryptographic primitives such as homomorphic encryption or secret sharing. Both strategies have notable drawbacks: gradient sharing can be reverse‑engineered, and cryptographic solutions incur large communication and computation overheads, especially when many participants are involved.

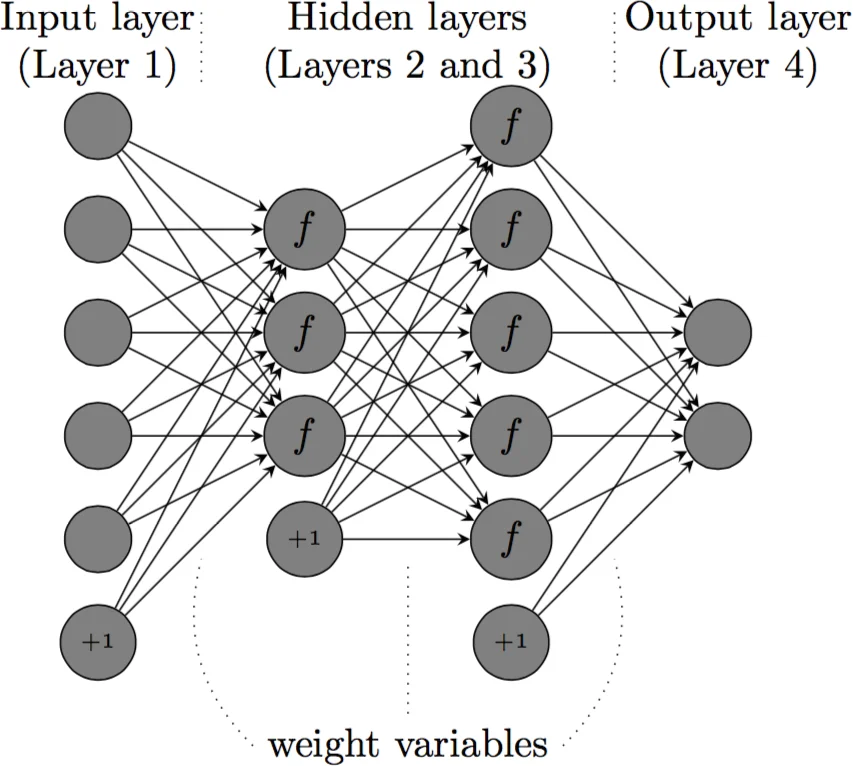

The authors propose a fundamentally different paradigm: weight transmission. Instead of sending gradients, each participant performs a local SGD step on its own data, encrypts the resulting weight vector, and sends the encrypted weights to a central server (SNT topology) or directly to the next participant (FNT topology). In SNT the server never sees the plaintext weights, because they are encrypted under a symmetric key that the trainers share and the server does not know; TLS protects the communication channels themselves. After decryption, the next participant continues training from the received weight vector. Because the weight vector after a local update equals the previous weight vector plus a weighted sum of gradients, and handing the vector to another trainer does not change it, the overall training trajectory is identical to that of standard SGD on the union of all datasets.
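The equivalence argument can be illustrated with a small sketch. Everything here is an assumption made for illustration and not taken from the paper: the least-squares model, the helper names `sgd_step` and `make_data`, the three trainers, the learning rate, and the fixed round-robin order. Encryption is left out so that only the training dynamics are visible.

```python
import random

def sgd_step(w, batch, lr):
    """One SGD step for a least-squares linear model (illustrative only)."""
    grad = [0.0] * len(w)
    for x, y in batch:
        err = sum(wi * xi for wi, xi in zip(w, x)) - y
        for j, xj in enumerate(x):
            grad[j] += 2.0 * err * xj / len(batch)
    return [wi - lr * gi for wi, gi in zip(w, grad)]

def make_data(n, rng):
    """Synthetic local dataset; the target weights are hypothetical."""
    true_w = [2.0, -1.0]
    data = []
    for _ in range(n):
        x = [rng.uniform(-1, 1), rng.uniform(-1, 1)]
        data.append((x, sum(a * b for a, b in zip(true_w, x))))
    return data

rng = random.Random(0)
local_datasets = [make_data(8, rng) for _ in range(3)]  # three trainers
ROUNDS, LR = 50, 0.1

# Weight transmission: each trainer updates the weights on its own data,
# then hands the weight vector (never the data or gradients) to the next.
w_relay = [0.0, 0.0]
for _ in range(ROUNDS):
    for data in local_datasets:
        w_relay = sgd_step(w_relay, data, LR)

# Centralized SGD over the combined data with the same batch schedule.
schedule = [data for _ in range(ROUNDS) for data in local_datasets]
w_central = [0.0, 0.0]
for batch in schedule:
    w_central = sgd_step(w_central, batch, LR)

print(w_relay == w_central)
```

Both loops apply the same sequence of updates, so the two weight vectors coincide exactly. The only thing that changes in the relay version is which party holds the weights at each step.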

Two system architectures are described:

- SNT (Server‑aided Network Topology) – a single honest‑but‑curious server stores and forwards encrypted weights. This model scales well when the number of trainers (L) is large (≥ 20) because each round requires only O(1) communication per trainer.

- FNT (Fully‑connected Network Topology) – every trainer maintains a TLS link to every other trainer, eliminating the need for a central server. This is more suitable for a modest number of participants (≤ 20).
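The SNT data flow can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: `RelayServer`, `encrypt_weights`, and `decrypt_weights` are hypothetical names, and the cipher is a toy construction (SHA-256 keystream plus HMAC) chosen only so the example runs with the standard library. A real deployment would use a vetted authenticated cipher such as AES-GCM, with separate keys for encryption and authentication.

```python
import hashlib
import hmac
import os
import struct

def _keystream(key, nonce, n):
    """Toy keystream from SHA-256 in counter mode (illustration only)."""
    out, counter = b"", 0
    while len(out) < n:
        out += hashlib.sha256(key + nonce + struct.pack(">Q", counter)).digest()
        counter += 1
    return out[:n]

def encrypt_weights(key, weights):
    """Serialize floats, XOR with the keystream, append an HMAC tag."""
    plaintext = struct.pack(f">{len(weights)}d", *weights)
    nonce = os.urandom(16)
    stream = _keystream(key, nonce, len(plaintext))
    body = bytes(a ^ b for a, b in zip(plaintext, stream))
    tag = hmac.new(key, nonce + body, hashlib.sha256).digest()
    return nonce + body + tag

def decrypt_weights(key, blob):
    """Verify the tag, then recover the weight vector."""
    nonce, body, tag = blob[:16], blob[16:-32], blob[-32:]
    expected = hmac.new(key, nonce + body, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("ciphertext failed authentication")
    stream = _keystream(key, nonce, len(body))
    plaintext = bytes(a ^ b for a, b in zip(body, stream))
    return list(struct.unpack(f">{len(plaintext) // 8}d", plaintext))

class RelayServer:
    """Hypothetical SNT server: stores and forwards opaque bytes only."""
    def __init__(self):
        self._blob = None
    def upload(self, blob):
        self._blob = blob
    def download(self):
        return self._blob

shared_key = os.urandom(32)   # known to the trainers, never to the server
server = RelayServer()
server.upload(encrypt_weights(shared_key, [0.5, -1.25, 3.0]))  # trainer A
received = decrypt_weights(shared_key, server.download())      # trainer B
print(received)
```

The server object only ever holds ciphertext, which mirrors the honest-but-curious model: it can relay the bytes but cannot read the weights without the trainers' key.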

Security analysis is formalized through five theorems. Theorem 1 proves that an honest‑but‑curious server learns nothing about any participant’s data or intermediate weights. Theorems 2 and 4 address the “extreme collusion” scenario where only one trainer is honest while all others (including the server) collude; they show that reconstructing the honest trainer’s data from observed encrypted weights reduces to solving an under‑determined system of nonlinear equations, which is computationally infeasible. Theorems 3 and 5 guarantee that the proposed protocols achieve exactly the same convergence and final accuracy as plain SGD, because the weight updates are mathematically equivalent to aggregating all local gradients.
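The accuracy claim can be made concrete by unrolling the SGD update. The notation is assumed here for illustration and may differ from the paper's: $W_t$ is the weight vector at step $t$, $\alpha_t$ the learning rate, $G$ the gradient of the loss on a batch, $B_t$ the batch used at step $t$, and $D_{i(t)}$ the local dataset of the trainer $i(t)$ that holds the weights at that step.

$$
W_{t+1} = W_t - \alpha_t \, G(W_t, B_t), \qquad B_t \subseteq D_{i(t)}
$$

$$
W_T = W_0 - \sum_{t=0}^{T-1} \alpha_t \, G(W_t, B_t)
$$

Transmitting $W_{t+1}$ to the next trainer leaves its value unchanged, so the sequence $W_0, W_1, \dots, W_T$ is the one plain SGD would produce on the combined dataset $D_1 \cup \dots \cup D_L$ under the same batch schedule.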

Experimental evaluation spans several benchmark datasets: UCI classification tasks, MNIST (both MLP and CNN), and CIFAR‑10/100 with ResNet architectures. Results demonstrate:

- Accuracy – The proposed systems match or slightly exceed the accuracy of the baseline SGD and outperform prior privacy‑preserving methods (e.g., Shokri‑Shmatikov, Aono et al.) that rely on gradient selection or polynomial approximations.

- Performance overhead – Encryption/decryption and weight transmission add modest latency. For MNIST the total runtime is under three times that of plain SGD; for deeper networks (ResNet on CIFAR) cryptographic overhead accounts for less than 5% of total training time.

- Scalability – Communication cost per round remains constant in SNT, whereas alternative secure aggregation schemes grow linearly with the number of participants.

The authors also discuss integration with differential privacy: adding Laplace noise to the transmitted weights yields ε-DP guarantees without affecting the core protocol, thereby providing an additional privacy layer.
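A minimal sketch of the Laplace mechanism applied to a weight vector is shown below. The function names and the `sensitivity` parameter are assumptions for illustration: the summary does not state how the paper bounds the sensitivity of the weights, and in practice that bound would have to be justified (for example by clipping) for the ε-DP guarantee to hold.

```python
import random

def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponentials."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)

def privatize_weights(weights, sensitivity, epsilon, rng=None):
    """Add Laplace noise with scale sensitivity/epsilon to each weight.

    `sensitivity` is an assumed bound on how much one record can change
    a weight; it is a placeholder, not a value taken from the paper.
    """
    rng = rng or random.Random()
    scale = sensitivity / epsilon
    return [w + laplace_noise(scale, rng) for w in weights]

weights = [0.12, -0.80, 1.50]
noisy = privatize_weights(weights, sensitivity=0.01, epsilon=1.0,
                          rng=random.Random(42))
print(noisy)
```

A smaller ε gives a larger noise scale and therefore stronger privacy at the cost of accuracy. The noisy vector would then be encrypted and transmitted in place of the exact weights.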

Limitations are acknowledged. Managing the shared symmetric key becomes more complex as the participant pool grows, and the current reliance on classical TLS does not provide quantum‑resistance. Moreover, the trained weight vector itself may be considered intellectual property, so practical deployments must enforce access‑control policies beyond the cryptographic guarantees.

In summary, the paper introduces a novel, practical approach to privacy‑preserving deep learning by transmitting encrypted model weights rather than gradients. The method preserves the exact learning dynamics of standard SGD, offers strong security even under severe collusion, incurs low computational and communication overhead, and is validated across a range of datasets and network depths. This contribution significantly advances the feasibility of collaborative, privacy‑aware deep learning in real‑world settings.
