Visualizing Uncertainty and Saliency Maps of Deep Convolutional Neural Networks for Medical Imaging Applications

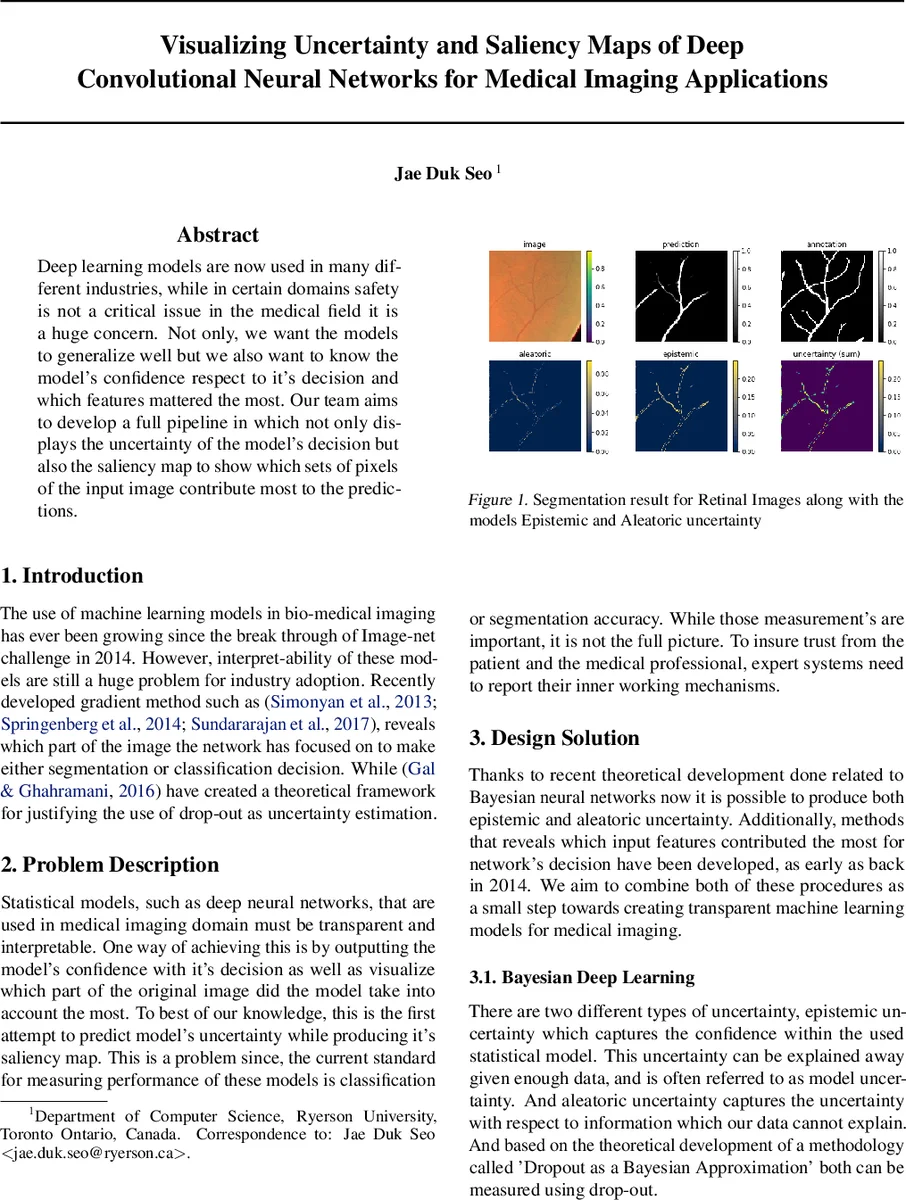

Deep learning models are now used in many different industries. While safety is not a critical issue in some domains, in the medical field it is a major concern. We want models not only to generalize well but also to report their confidence in each decision and to indicate which features matter most. Our team aims to develop a full pipeline that displays not only the uncertainty of the model's decision but also a saliency map showing which pixels of the input image contribute most to the prediction.

💡 Research Summary

The paper presents an integrated framework that simultaneously quantifies predictive uncertainty and visualizes pixel‑level importance for deep convolutional neural networks (CNNs) applied to medical imaging. Recognizing that high classification or segmentation accuracy alone is insufficient for clinical adoption, the authors combine Bayesian deep learning with gradient‑based saliency methods to produce both epistemic and aleatoric uncertainty maps as well as interpretable importance maps.

The Bayesian component relies on the “Dropout as a Bayesian Approximation” principle (Gal & Ghahramani, 2016). By keeping dropout active during inference and performing Monte‑Carlo (MC) sampling, the model generates a distribution of predictions for each input. The mean of this distribution serves as the final prediction, while the variance is decomposed into epistemic uncertainty (model uncertainty that diminishes with more data) and aleatoric uncertainty (intrinsic data noise). These two uncertainty types are visualized as separate heat‑maps, enabling clinicians to see where the model is confident and where it is not.
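The sampling-and-decomposition idea described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: the single-layer "model", the function names (`stochastic_forward`, `mc_dropout_uncertainty`), the weights, and the dropout rate are all hypothetical, and the split used here (variance of the sampled probabilities as the epistemic term, mean of p(1 - p) as the aleatoric term) is one common choice for binary outputs that the paper may or may not follow.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def stochastic_forward(x, weights, bias, drop_prob, rng):
    """One forward pass of a toy single-layer model with dropout left ON."""
    keep = 1.0 - drop_prob
    z = bias
    for xi, wi in zip(x, weights):
        # Inverted dropout: drop each input with probability drop_prob,
        # and rescale the surviving inputs by 1 / keep.
        if rng.random() < keep:
            z += wi * xi / keep
    return sigmoid(z)


def mc_dropout_uncertainty(x, weights, bias, drop_prob=0.5, n_samples=200, seed=0):
    """Return (mean prediction, epistemic term, aleatoric term) for one output."""
    rng = random.Random(seed)
    probs = [stochastic_forward(x, weights, bias, drop_prob, rng)
             for _ in range(n_samples)]
    mean = sum(probs) / n_samples
    # Epistemic: how much the sampled predictions disagree with each other.
    epistemic = sum((p - mean) ** 2 for p in probs) / n_samples
    # Aleatoric: average Bernoulli noise implied by each sampled prediction.
    aleatoric = sum(p * (1.0 - p) for p in probs) / n_samples
    return mean, epistemic, aleatoric


# Hypothetical input features and weights, for illustration only.
mean, epistemic, aleatoric = mc_dropout_uncertainty(
    x=[0.8, -0.3, 1.2], weights=[1.5, -2.0, 0.7], bias=0.1
)
print(mean, epistemic, aleatoric)
```

With this particular split, the two terms sum to mean × (1 − mean), the total variance of the predicted Bernoulli outcome. In a segmentation setting the same computation would be applied per pixel, producing one heat-map for each term.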

For interpretability, the authors adopt Integrated Gradients (Sundararajan et al., 2017), together with SmoothGrad, a smoothing technique that averages attributions over noise‑perturbed copies of the input, to compute attribution scores for each pixel. By integrating gradients along a straight‑line path from a baseline (typically a black image) to the actual input, Integrated Gradients yields a quantitative measure of each pixel’s contribution to the network’s output. The resulting saliency maps highlight regions that drive the decision, which is especially valuable in medical contexts where the relevance of specific anatomical structures must be verified.
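The path integral behind Integrated Gradients is usually approximated with a Riemann sum. The sketch below assumes a toy logistic model with an analytic gradient in place of a CNN; `model`, `model_gradient`, the weights, and the four-"pixel" input are hypothetical and serve only to show the mechanics. In practice the gradient would come from automatic differentiation.

```python
import math


def model(x, weights, bias):
    """Toy differentiable model: logistic regression over the pixels."""
    z = bias + sum(w * xi for w, xi in zip(weights, x))
    return 1.0 / (1.0 + math.exp(-z))


def model_gradient(x, weights, bias):
    """Analytic gradient of the toy model's output with respect to each pixel."""
    p = model(x, weights, bias)
    return [p * (1.0 - p) * w for w in weights]


def integrated_gradients(x, baseline, weights, bias, steps=100):
    """Approximate Integrated Gradients with a midpoint Riemann sum."""
    total = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps  # midpoint of the k-th sub-interval of [0, 1]
        # Point on the straight line from the baseline to the input.
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        grad = model_gradient(point, weights, bias)
        total = [t + g for t, g in zip(total, grad)]
    # Scale the averaged gradient by the input-minus-baseline difference.
    return [(xi - b) * t / steps for xi, b, t in zip(x, baseline, total)]


# Hypothetical 4-pixel "image" and an all-black baseline.
pixels = [0.9, 0.1, 0.5, 0.0]
black = [0.0, 0.0, 0.0, 0.0]
w, b = [2.0, -1.0, 0.5, 3.0], -0.5

attributions = integrated_gradients(pixels, black, w, b)
print(attributions)
print(sum(attributions), model(pixels, w, b) - model(black, w, b))
```

The two values on the last line should agree closely: the attributions sum to the difference between the model's output at the input and at the baseline (the completeness property). A pixel that equals its baseline value receives zero attribution regardless of its weight, which is one reason the choice of baseline matters for dark regions of an image.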

Two experimental use‑cases illustrate the pipeline. First, a nine‑layer U‑Net is trained on the retinal vessel dataset of Staal et al. (2004) using Dice loss. The model achieves a training loss of 0.22 and a validation loss of 0.26, a small gap that suggests the model is not severely overfitting. MC dropout sampling produces uncertainty maps that are higher in thin, ambiguous vessel regions, indicating that the model’s confidence aligns with visual ambiguity. Second, a pre‑trained VGG‑16 is fine‑tuned on the ChestX‑ray8 dataset (Wang et al., 2017) for disease classification. Saliency visualizations consistently point to the lower‑left quadrant of the X‑ray as the most influential area across multiple attribution methods, which the authors interpret as the network focusing on clinically relevant patterns.
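For readers unfamiliar with the Dice loss mentioned above, a minimal sketch of the common "soft Dice" form follows. The exact variant used in the paper is not stated here, so the smoothing constant `eps` and the flattened-list representation of the masks are assumptions made for illustration.

```python
def dice_loss(pred, target, eps=1e-6):
    """Soft Dice loss over flattened masks.

    pred:   predicted foreground probabilities in [0, 1]
    target: ground-truth labels (0 or 1)
    """
    intersection = sum(p * t for p, t in zip(pred, target))
    denom = sum(pred) + sum(target)
    # eps keeps the ratio defined when both masks are empty.
    return 1.0 - (2.0 * intersection + eps) / (denom + eps)


# Hypothetical 4-pixel prediction against a ground-truth vessel mask.
print(dice_loss([0.8, 0.2, 0.6, 0.1], [1, 0, 1, 0]))
```

If the loss is defined this way, as one minus the Dice coefficient, the reported losses of 0.22 and 0.26 would correspond to Dice scores of roughly 0.78 and 0.74; that reading depends on the assumed definition.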

Implementation considerations address the reality that medical institutions rarely share raw imaging data. To facilitate adoption, the authors propose a step‑by‑step tutorial (video or web‑based) that can be executed locally on institutional hardware. They also outline future extensions, including dual‑task networks for microscopic cell images (segmentation plus cell counting) and a web‑application that allows clinicians to upload their own scans and instantly obtain predictions, uncertainty estimates, and saliency maps.

Overall, the contribution lies in merging Bayesian uncertainty estimation with modern attribution techniques into a single, reproducible pipeline. This dual‑output approach not only quantifies how much the model “knows” but also reveals what it is looking at, thereby addressing two major barriers to the clinical deployment of deep learning: trustworthiness and interpretability. The paper’s experimental results, while limited to 2‑D datasets, provide compelling evidence that uncertainty maps can flag low‑confidence regions and that saliency maps can corroborate clinically meaningful focus areas, paving the way for more transparent AI‑assisted diagnostics.
