Region-Manipulated Fusion Networks for Pancreatitis Recognition

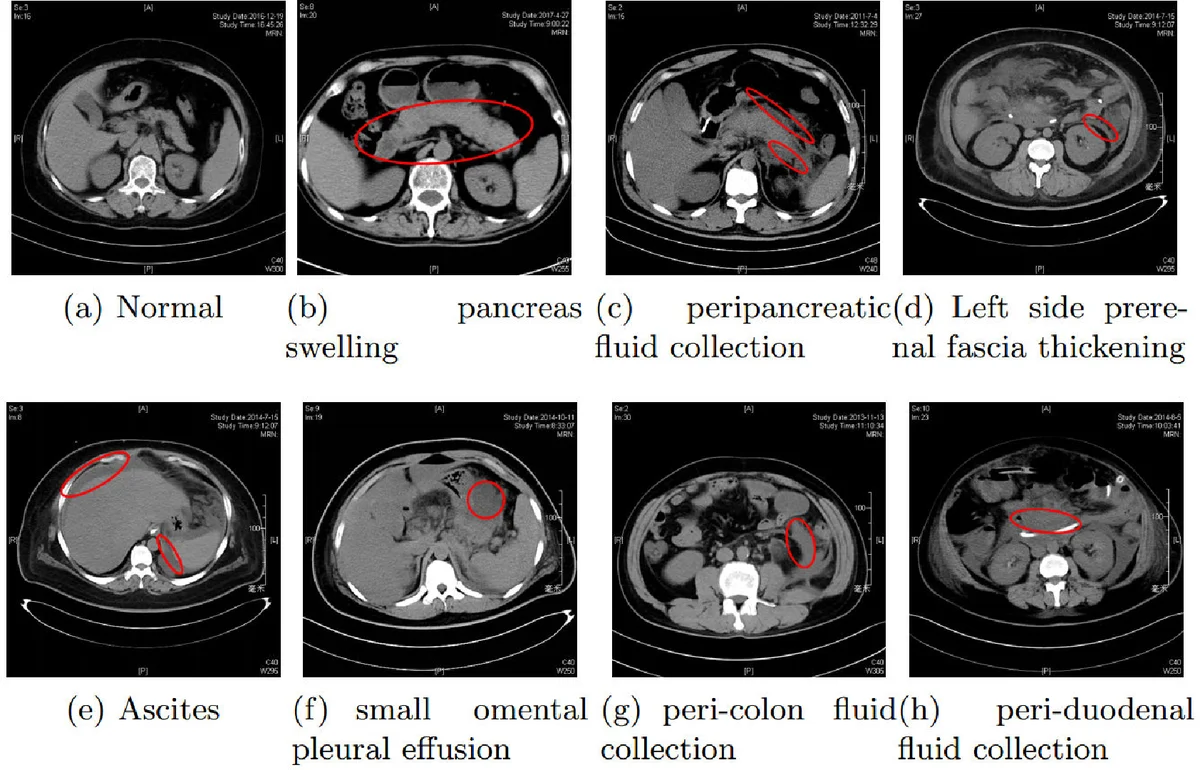

This work is a first attempt to automatically recognize pancreatitis on CT scan images. Unlike traditional object recognition, pancreatitis recognition is challenging because of the fine-grained and non-rigid appearance variability of the local diseased regions. To this end, we propose customized Region-Manipulated Fusion Networks (RMFN) to capture the key characteristics of local lesions for pancreatitis recognition. Specifically, to highlight the barely perceptible lesion regions, RMFN introduces a novel region-manipulated scheme that strengthens the lesion regions while weakening the non-lesion regions by ceaselessly aggregating multi-scale local information onto the feature maps. The proposed scheme can be flexibly integrated into existing neural networks, such as AlexNet and VGG. To evaluate the performance of the proposed method, a real CT image database of pancreatitis was collected from hospitals (the database will be made available later). Experimental results on this database demonstrate the effectiveness of the proposed method for pancreatitis recognition.

💡 Research Summary

This paper presents a pioneering deep learning approach for the automated recognition of pancreatitis in abdominal CT scan images, introducing the novel Region-Manipulated Fusion Networks (RMFN). Diagnosing acute pancreatitis, especially in its critical early stages, is challenging due to the small, fine-grained, non-rigid, and highly variable appearance of local lesion regions, which are easily missed by human observers or standard convolutional neural networks (CNNs) designed for natural images.

The core innovation of RMFN lies in its unique architecture and “region-manipulated” fusion scheme designed to address these specific challenges. The network consists of a main pathway (based on VGG11) for capturing global context and multiple subsidiary sub-networks that process multi-scale local regions of the input image. These local regions are obtained by dividing the image into grids at different scales (e.g., 32x32 and 48x48 pixel patches).
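The grid division described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the helper name `grid_patches`, the 96x96 input size, the non-overlapping tiling, and the clipping of edge tiles are all hypothetical choices, since the summary gives only the two patch sizes (32 and 48) and does not say how borders or overlaps are handled.

```python
def grid_patches(height, width, patch):
    """Return (top, left, bottom, right) boxes tiling an image with square cells.

    Assumption: tiles do not overlap, and tiles at the right/bottom edge are
    clipped to the image border. The source does not specify either detail.
    """
    boxes = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            bottom = min(top + patch, height)
            right = min(left + patch, width)
            boxes.append((top, left, bottom, right))
    return boxes


# Hypothetical 96x96 input; 32 and 48 are the patch sizes quoted in the summary.
boxes_by_scale = {patch: grid_patches(96, 96, patch) for patch in (32, 48)}
```

Each box could then be cropped from the image and passed to the sub-network for that scale, while the box coordinates record where the resulting local features belong in the full image.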

The key technical contribution is the fusion mechanism. Instead of merely combining features at the final layer, RMFN performs intermediate fusion. The feature maps from a sub-network, which encode detailed local information from a specific scale, are element-wise added onto the spatially corresponding regions of the feature maps produced by an intermediate layer of the main network. This process is repeated across multiple scales. This “ceaseless aggregation” forces the network to continually enhance the feature activations corresponding to potential lesion areas while diminishing the influence of non-lesion background areas throughout the forward propagation, effectively guiding the model to focus on discriminative local clues.
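As a rough illustration of the element-wise addition step, the following dependency-free sketch adds a small local feature map onto the matching window of a larger one. It is not the authors' implementation. The function name `fuse_region`, the single-channel 2D lists, and the toy values are assumptions made for readability, and the sketch further assumes that the local map has already been resized to the window it covers and that `top` and `left` are given in feature-map coordinates.

```python
def fuse_region(main_map, local_map, top, left):
    """Add local_map element-wise onto the window of main_map starting at (top, left).

    Returns a new 2D list and leaves main_map unchanged.
    Assumption: local_map fits entirely inside main_map at that offset.
    """
    fused = [row[:] for row in main_map]  # copy each row so the input is untouched
    for i, local_row in enumerate(local_map):
        for j, value in enumerate(local_row):
            fused[top + i][left + j] += value
    return fused


# Toy values only: a 4x4 "main pathway" map and a 2x2 "sub-network" map.
main_map = [[1.0] * 4 for _ in range(4)]
local_map = [[0.5, -0.5], [2.0, 0.0]]

# Fuse the local map into the bottom-left 2x2 window of the main map.
fused = fuse_region(main_map, local_map, top=2, left=0)
```

Positive local responses raise the activations inside the window and negative ones lower them, while positions outside the window are left as they were. Repeating the call for every region and every scale would give the repeated aggregation the summary describes.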

The authors collected a real-world CT image database from hospitals to evaluate their method. Experimental results on this dataset demonstrate that RMFN achieves superior performance compared to standard baseline networks like VGG. The proposed region-manipulated fusion scheme is presented as a flexible plug-in component that can be integrated into existing CNN architectures (e.g., AlexNet, VGG) to improve their capability in fine-grained recognition tasks, not only in medical image analysis but potentially also in broader computer vision applications where local, subtle features are critical for accurate classification.
