Seismic data denoising and deblending using deep learning

An important step of seismic data processing is removing noise, including interference due to simultaneous and blended sources, from the recorded data. Traditional methods are time-consuming to apply as they often require manual choosing of parameters to obtain good results. We use deep learning, with a U-net model incorporating a ResNet architecture pretrained on ImageNet and further trained on synthetic seismic data, to perform this task. The method is applied to common offset gathers, with adjacent offset gathers of the gather being denoised provided as additional input channels. Here we show that this approach leads to a method that removes noise from several datasets recorded in different parts of the world with moderate success. We find that providing three adjacent offset gathers on either side of the gather being denoised is most effective. As this method does not require parameters to be chosen, it is more automated than traditional methods.

💡 Research Summary

This paper addresses the long‑standing challenge of removing noise and blending interference from seismic recordings without the need for extensive manual parameter tuning. The authors propose a deep‑learning solution based on a U‑Net architecture whose encoder is a ResNet‑34 network pretrained on ImageNet. By leveraging the pretrained low‑level filters (edge detectors, textures), the model can be trained efficiently on seismic data, while the high‑level layers are fine‑tuned for the specific task of denoising and deblending.

A key innovation is the use of multiple adjacent common‑offset gathers as additional input channels. The central gather to be cleaned is supplemented with three gathers on each side, giving six adjacent gathers and seven input channels in total. Because the pretrained ResNet‑34 expects three input channels, the first convolutional layer is re‑parameterised: the pretrained weights are summed across the original RGB channels, divided by the new number of input channels, and then duplicated for each new channel. This preserves the benefit of the pretrained filters while adapting to the higher‑dimensional seismic input.
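The re‑parameterisation of the first convolutional layer can be illustrated with a minimal NumPy sketch. It is not the authors' code: the function name `adapt_first_conv` is hypothetical, and random numbers stand in for the pretrained weights. The shape (64, 3, 7, 7) is that of the first convolution in a standard ResNet‑34.

```python
import numpy as np

def adapt_first_conv(w_rgb: np.ndarray, n_in: int) -> np.ndarray:
    """Adapt first-layer weights from 3 input channels to n_in channels.

    w_rgb has shape (out_channels, 3, kH, kW); the result has shape
    (out_channels, n_in, kH, kW).
    """
    # Sum the filters over the three RGB input channels.
    summed = w_rgb.sum(axis=1, keepdims=True)      # (out, 1, kH, kW)
    # Divide by the new number of input channels.
    scaled = summed / n_in
    # Duplicate the scaled filter for every new input channel.
    return np.repeat(scaled, n_in, axis=1)         # (out, n_in, kH, kW)

# Stand-in for pretrained weights (assumption: random values for illustration).
rng = np.random.default_rng(0)
w_rgb = rng.standard_normal((64, 3, 7, 7))

# Seven channels: the central gather plus three on each side.
w_new = adapt_first_conv(w_rgb, n_in=7)
print(w_new.shape)
```

With this scaling, the sum of the new weights over all input channels equals the sum of the original weights over RGB, so an input whose channels are identical produces the same first‑layer response as a grey image did in the pretrained network.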

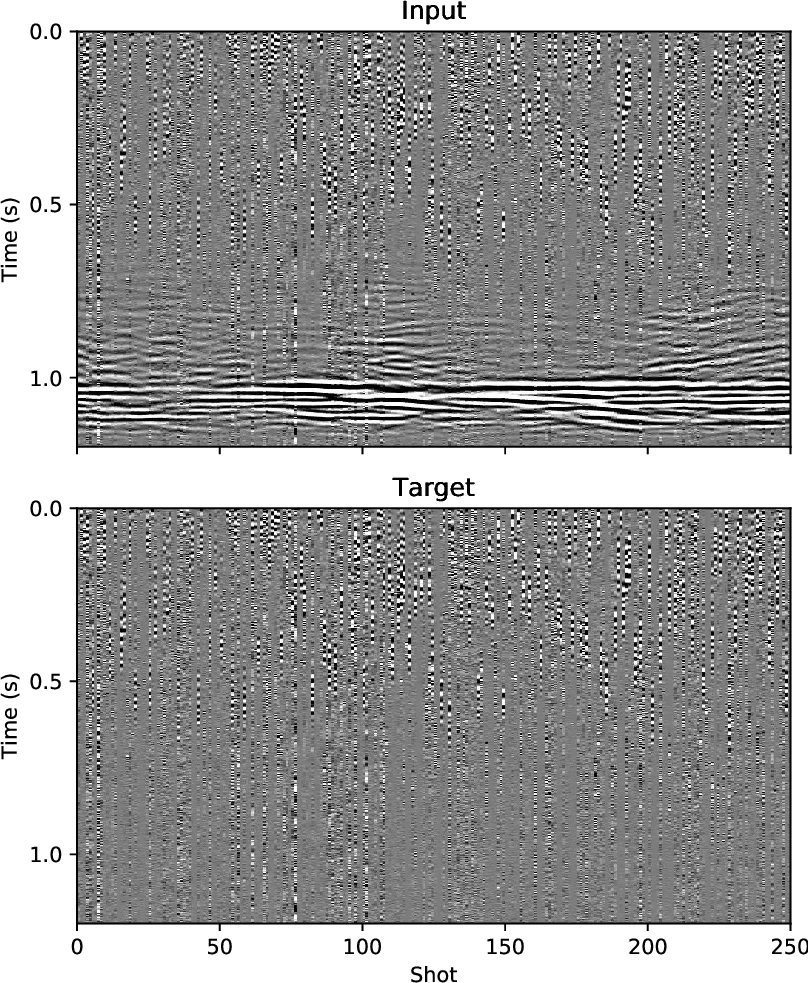

Training data are generated synthetically using a 2‑D acoustic wave propagator and twenty randomly sampled velocity models. Each model yields 300 receivers and 250 shots, with a 35 Hz Ricker source and 5 m spacing. Blending noise is simulated by overlapping shot records with random inter‑shot delays (0.5–1.5 s) and jitter (0.1–0.6 s). To increase realism, field data from three Rolling Deck to Repository cruises and white Gaussian noise are mixed into the synthetic set, providing cable, swell, and cross‑survey interference. The network is trained to predict the noise component (input minus clean signal); both inputs and targets are normalized by the standard deviation of the noisy gather.
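The target construction described above (noise as input minus clean signal, with both scaled by the standard deviation of the noisy gather) can be sketched as follows. This is an illustrative sketch only: the function names and the small `eps` guard are assumptions, and a single 2‑D gather is used for simplicity.

```python
import numpy as np

def make_training_pair(clean: np.ndarray, noise: np.ndarray, eps: float = 1e-12):
    """Return (network input, noise target, scale) for one gather."""
    noisy = clean + noise
    # Scale by the standard deviation of the noisy gather.
    # eps is an assumed guard against division by zero on all-zero gathers.
    scale = noisy.std() + eps
    x = noisy / scale                 # normalized input
    y = (noisy - clean) / scale       # normalized noise target
    return x, y, scale

def apply_prediction(x: np.ndarray, predicted_noise: np.ndarray, scale: float):
    """Subtract the predicted noise and undo the normalization."""
    return (x - predicted_noise) * scale

rng = np.random.default_rng(1)
clean = rng.standard_normal((224, 224))
noise = 0.5 * rng.standard_normal((224, 224))

x, y, scale = make_training_pair(clean, noise)
# With a perfect noise prediction, the clean gather is recovered.
recovered = apply_prediction(x, y, scale)
```

Predicting the noise rather than the clean signal means the denoised gather is obtained by a simple subtraction, and the removed noise can be inspected directly for leaked signal.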

Training follows a two‑stage schedule. First, only the randomly initialized layers are updated for five epochs at a learning rate of 0.01 while the pretrained ResNet weights remain frozen. Then, all layers are trained for 350 epochs using the 1‑cycle policy and the AdamW optimizer, with discriminative learning rates that increase from 1 × 10⁻⁶ in the shallowest layers to 1 × 10⁻³ in the deepest layers. Patches of size 224 × 224 are extracted randomly from each gather, and data augmentation includes random time‑domain convolution, a variable time stride (1–5), and a variable receiver‑spacing stride (1–4) to mimic different acquisition geometries.
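Two parts of this schedule lend themselves to a short sketch: the per‑layer learning rates and the strided patch extraction. The summary does not say how the learning rate is interpolated between 1 × 10⁻⁶ and 1 × 10⁻³, so the geometric spacing below is an assumption, as are the function names, the number of layer groups, and the array layout (time on axis 0, traces on axis 1).

```python
import numpy as np

def layer_group_lrs(lr_min: float, lr_max: float, n_groups: int) -> np.ndarray:
    """Learning rates from shallowest to deepest layer group.

    Assumption: geometric (log-uniform) spacing between the two end values.
    """
    return np.geomspace(lr_min, lr_max, n_groups)

def random_patch(gather, rng, size=224, max_t_stride=5, max_x_stride=4):
    """Cut a size x size patch using random strides along time and trace axes."""
    st = int(rng.integers(1, max_t_stride + 1))   # time stride, 1..5
    sx = int(rng.integers(1, max_x_stride + 1))   # trace stride, 1..4
    span_t = (size - 1) * st + 1                  # samples covered in time
    span_x = (size - 1) * sx + 1                  # traces covered
    if span_t > gather.shape[0] or span_x > gather.shape[1]:
        raise ValueError("gather too small for the chosen stride")
    t0 = int(rng.integers(0, gather.shape[0] - span_t + 1))
    x0 = int(rng.integers(0, gather.shape[1] - span_x + 1))
    return gather[t0:t0 + span_t:st, x0:x0 + span_x:sx]

lrs = layer_group_lrs(1e-6, 1e-3, n_groups=4)     # 4 groups is illustrative

rng = np.random.default_rng(2)
gather = rng.standard_normal((1200, 900))         # synthetic stand-in gather
patch = random_patch(gather, rng)
print(lrs, patch.shape)
```

Subsampling with a stride before cropping changes the effective sample interval and trace spacing seen by the network, which is one simple way to imitate surveys recorded with different geometries.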

Four real marine streamer datasets (North Sea, Venezuela, Norway, New Zealand) are used for testing. The North Sea data are synthetically blended to evaluate deblending performance; the other three are real noisy datasets. Experiments varying the number of adjacent gathers show that providing three gathers on each side yields the lowest validation loss, confirming that the model can exploit spatial redundancy without being overwhelmed by excessive channels.
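The multi‑channel input used in these experiments can be assembled as sketched below for a configurable number of neighbours per side. The summary does not describe the channel order or how gathers at the edge of the offset range are handled, so placing the target gather in the middle channel and repeating the nearest available gather at the edges are both assumptions, as is the function name.

```python
import numpy as np

def stack_adjacent_offsets(volume: np.ndarray, center: int, k: int = 3) -> np.ndarray:
    """Build a (2k+1)-channel input from a volume of common-offset gathers.

    volume has shape (n_offsets, n_time, n_traces). Channel k of the result
    is the gather being denoised; the others are its neighbours in offset.
    """
    n_offsets = volume.shape[0]
    idx = np.arange(center - k, center + k + 1)
    # Assumption: at the edges, repeat the nearest available offset gather.
    idx = np.clip(idx, 0, n_offsets - 1)
    return volume[idx]

# Toy volume in which every sample of gather i has the value i.
volume = np.arange(10, dtype=float)[:, None, None] * np.ones((10, 4, 5))

inner = stack_adjacent_offsets(volume, center=5, k=3)
edge = stack_adjacent_offsets(volume, center=0, k=3)
print(inner[:, 0, 0], edge[:, 0, 0])
```

Changing `k` reproduces the kind of comparison described above, with `k = 3` corresponding to the seven‑channel configuration that gave the lowest validation loss.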

On the blended North Sea data, the method improves signal‑to‑noise ratio (SNR) from –3.6 dB to 16.5 dB, comparable to state‑of‑the‑art deblending techniques reported in the literature (e.g., Chen et al. 2018, Zu et al. 2018). CMP‑stack images demonstrate a clear reduction of blending artifacts, although a modest attenuation of the underlying signal is observed. When applied to the three real noisy datasets, the network removes the majority of cable, swell, and cross‑survey noise, but a small amount of signal leakage into the estimated noise is evident, especially for low‑amplitude events. The authors attribute this to the synthetic‑dominant training set, which lacks the subtle amplitude variations present in field data.
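The summary does not state how SNR is computed. A definition commonly used in the deblending literature is the ratio of the energy of the unblended reference to the energy of the residual error, expressed in decibels. The sketch below assumes that definition; the function name is hypothetical.

```python
import numpy as np

def snr_db(reference: np.ndarray, estimate: np.ndarray) -> float:
    """SNR in dB: 10 * log10(||reference||^2 / ||reference - estimate||^2)."""
    error = reference - estimate
    return float(10.0 * np.log10(np.sum(reference**2) / np.sum(error**2)))

# Toy check: an estimate that is 10% too weak everywhere has an error
# energy 100 times smaller than the signal energy, i.e. about 20 dB.
reference = np.ones((50, 50))
estimate = 0.9 * reference
print(snr_db(reference, estimate))
```

Under this definition, the SNR of the blended input is obtained by passing the blended data as `estimate`, and the SNR of the result by passing the network output, both against the same unblended reference.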

The discussion highlights several limitations and future directions. First, loss increases slightly when more than three adjacent gathers are supplied, likely because additional channels increase the number of trainable parameters without providing commensurate new information. Second, signal leakage suggests that incorporating a larger proportion of real data into the training mix could improve fidelity. Third, the authors note that alternative input configurations—such as using NMO‑corrected CMP gathers as channels—might further decorrelate blending noise but would require additional preprocessing and a reliable velocity model. Fourth, the method’s applicability to land data is uncertain due to static shifts and irregular acquisition geometry; preprocessing steps like static correction and interpolation would be necessary. Finally, the “black‑box” nature of deep networks is acknowledged; while they outperform explicit filters, interpretability remains a challenge.

In summary, the paper demonstrates that a pretrained ResNet‑34‑based U‑Net, fed with multiple adjacent gathers, can automatically denoise and deblend seismic data across diverse marine environments without manual parameter selection. The approach achieves competitive SNR gains, generalizes reasonably well to unseen datasets, and offers a practical, low‑maintenance alternative to conventional methods. Future work should focus on expanding the training corpus with more field data, exploring alternative multi‑dimensional input representations, improving interpretability, and extending the framework to land seismic acquisition scenarios.
