Noise Adaptive Speech Enhancement using Domain Adversarial Training

In this study, we propose a novel noise adaptive speech enhancement (SE) system, which employs a domain adversarial training (DAT) approach to tackle the issue of a noise type mismatch between the training and testing conditions. Such a mismatch is a critical problem in deep-learning-based SE systems. A large mismatch may cause a serious performance degradation to the SE performance. Because we generally use a well-trained SE system to handle various unseen noise types, a noise type mismatch commonly occurs in real-world scenarios. The proposed noise adaptive SE system contains an encoder-decoder-based enhancement model and a domain discriminator model. During adaptation, the DAT approach encourages the encoder to produce noise-invariant features based on the information from the discriminator model and consequentially increases the robustness of the enhancement model to unseen noise types. Herein, we regard stationary noises as the source domain (with the ground truth of clean speech) and non-stationary noises as the target domain (without the ground truth). We evaluated the proposed system on TIMIT sentences. The experiment results show that the proposed noise adaptive SE system successfully provides significant improvements in PESQ (19.0%), SSNR (39.3%), and STOI (27.0%) over the SE system without an adaptation.

💡 Research Summary

This paper presents a novel framework for noise-adaptive speech enhancement (SE) that addresses the critical issue of noise type mismatch between training and testing conditions, a common pitfall in deep learning-based SE systems. The core innovation lies in the application of Domain Adversarial Training (DAT), a technique prevalent in computer vision, to the regression task of speech enhancement.

The proposed system operates under a realistic scenario where abundant labeled data from a “source domain” (noisy speech with stationary noises like car or engine noise, paired with clean speech) is available, but only a limited amount of unlabeled data from a “target domain” (noisy speech with non-stationary noises like a baby cry, without clean references) exists for adaptation. The model architecture comprises three key components: an encoder that extracts features from noisy input, a decoder that reconstructs the clean speech spectrogram from these features, and a domain discriminator that attempts to classify the noise type (domain) based on the encoder’s features.

The training process involves a dual-objective loss. The primary objective is a regression loss (L_regress) calculated only on the source domain data, which minimizes the difference between the decoder’s output and the ground-truth clean spectrogram. The secondary and crucial objective is the domain adversarial loss (L_DAT). Here, the discriminator is trained to correctly identify the noise type of the input features. Simultaneously, the encoder is trained adversarially to produce features that are indistinguishable across different noise types, thereby “fooling” the discriminator and maximizing its classification error. This adversarial min-max game encourages the encoder to learn noise-invariant representations, making the subsequent enhancement performed by the decoder more robust to unseen noise types.

Extensive experiments were conducted on the TIMIT corpus. The source domain training set was created by corrupting clean speech with five types of stationary noise at various SNRs. For the target domain, non-stationary baby-cry noise was used to create unlabeled adaptation data. The system was evaluated on test sets corrupted with the same baby-cry noise (seen during adaptation) and a completely unseen cafeteria babble noise.

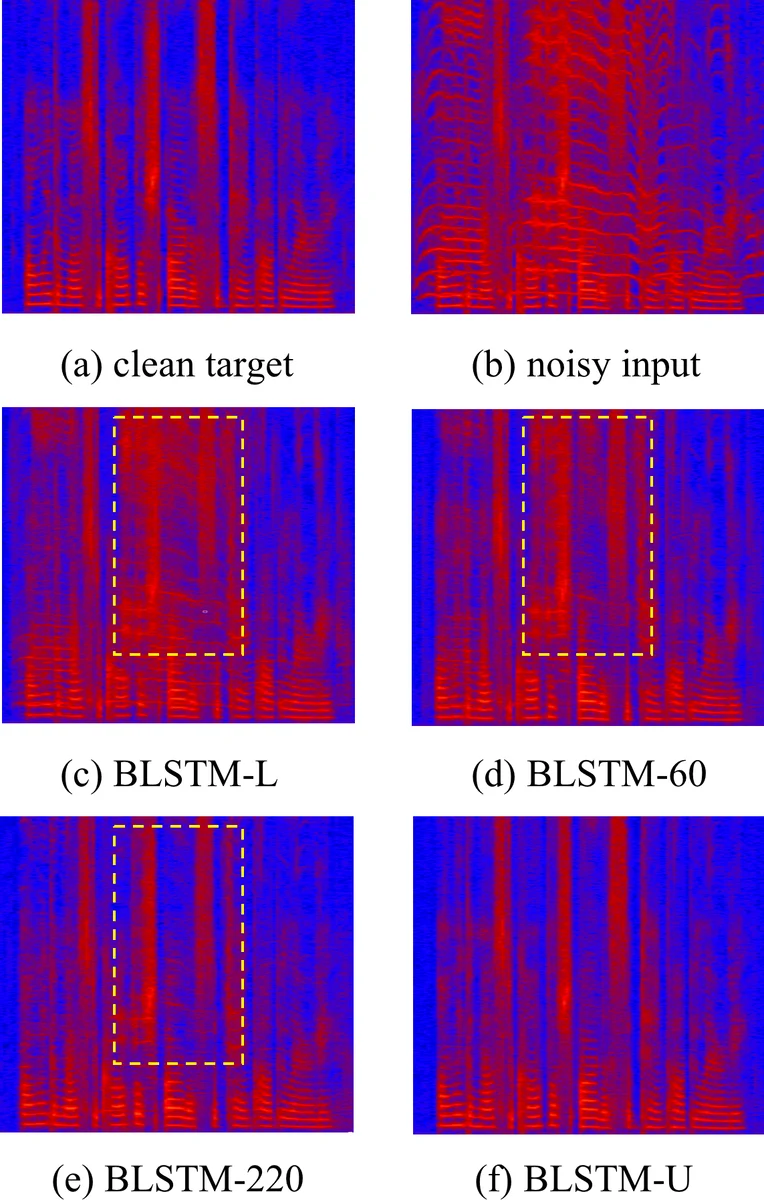

The results demonstrated significant improvements. Compared to a baseline model (BLSTM-L) trained only on source domain data, the DAT-adapted model using just 60 unlabeled target utterances (BLSTM-60) showed substantial gains across all SNR levels and objective metrics: Perceptual Evaluation of Speech Quality (PESQ) improved by 19.0%, Segmental Signal-to-Noise Ratio (SSNR) by 39.3%, and Short-Time Objective Intelligibility (STOI) by 27.0% on average for the baby-cry noise. Importantly, the adapted model also generalized well to the unseen cafeteria noise, outperforming the baseline, which indicates that the model learned genuinely noise-invariant features rather than overfitting to the specific adaptation noise. Performance was further shown to improve gradually with more unlabeled adaptation data.

In conclusion, this study successfully adapts Domain Adversarial Training for speech enhancement, proving its efficacy in bridging the domain gap between training and real-world noisy environments. By leveraging unlabeled data from a target noise environment, the proposed method provides a practical and powerful pathway to build robust SE systems capable of handling a wide array of unseen noises, moving a step closer to deployable real-world applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment