Spectrogram Feature Losses for Music Source Separation

In this paper we study deep learning-based music source separation and explore an alternative to the standard pixel-level L2 spectrogram loss for model training. Our main contribution is to demonstrate that adding a high-level feature loss term, extracted from the spectrograms using a VGG net, can improve separation quality compared with a pure pixel-level loss. We show this improvement in the context of MMDenseNet, a state-of-the-art deep learning model for this task, for the extraction of drum and vocal sounds from songs in the musdb18 database, which covers a broad range of Western music genres. We believe this finding can be generalized and applied to broader machine learning-based systems in the audio domain.

💡 Research Summary

The paper investigates an alternative loss function for deep‑learning based music source separation. It moves beyond the conventional pixel‑level L2 loss, which treats a spectrogram as a simple image and penalises differences in each time‑frequency bin independently. The authors argue that such a loss ignores higher‑level patterns characteristic of different instruments: drums, for example, produce vertical energy bands, while vocals generate horizontal harmonic lines. To capture these structures, they adopt the perceptual‑loss paradigm from computer vision. Both the estimated and target spectrograms are passed through a pre‑trained VGG‑19 network, and feature maps are extracted from several convolutional layers. From these maps they compute two additional terms, following the formulation used in the style‑transfer literature:

- a feature reconstruction loss, the distance between the feature maps;
- a style reconstruction loss, the distance between the Gram matrices of those feature maps.

Each of these two terms is weighted by 0.25 and combined with the standard L2 loss (weighted 0.5) to form a composite spectrogram loss.
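A minimal NumPy sketch of the composite loss follows. It assumes the VGG‑19 feature maps have already been extracted; the network itself is not shown. It uses the 0.5/0.25/0.25 weights from the text. The sketch normalises each Gram matrix by C·H·W and averages the per-layer terms. Those normalisation choices, and which VGG layers supply the features, are assumptions rather than details confirmed by the paper.

```python
import numpy as np

def gram_matrix(feat):
    """Gram matrix of a (C, H, W) feature map, normalised by C*H*W (assumed normalisation)."""
    c, h, w = feat.shape
    f = feat.reshape(c, h * w)
    return f @ f.T / (c * h * w)

def composite_loss(est_spec, tgt_spec, est_feats, tgt_feats,
                   w_pixel=0.5, w_feat=0.25, w_style=0.25):
    """Weighted sum of pixel, feature-reconstruction and style-reconstruction terms.

    est_feats / tgt_feats: lists of (C, H, W) feature maps, one per VGG layer,
    assumed to be precomputed from the estimated and target spectrograms.
    """
    # Pixel-level L2 term: mean squared error over all time-frequency bins.
    pixel = np.mean((est_spec - tgt_spec) ** 2)
    # Feature reconstruction term: per-layer MSE between feature maps, averaged over layers.
    feat = np.mean([np.mean((fe - ft) ** 2) for fe, ft in zip(est_feats, tgt_feats)])
    # Style term: squared Frobenius distance between Gram matrices, averaged over layers.
    style = np.mean([np.sum((gram_matrix(fe) - gram_matrix(ft)) ** 2)
                     for fe, ft in zip(est_feats, tgt_feats)])
    return w_pixel * pixel + w_feat * feat + w_style * style
```

Because the Gram matrix sums over all spatial positions, the style term compares which feature channels co-activate rather than where they do. This makes it sensitive to texture-like spectrogram patterns such as repeated drum onsets.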

The study uses the state‑of‑the‑art MMDenseNet architecture as the backbone model. MMDenseNet is a multi‑scale, multi‑band DenseNet that splits the input spectrogram along the frequency axis into sub‑bands, processes each sub‑band with its own DenseNet auto‑encoder, and finally concatenates the sub‑band feature maps. This design allows the network to learn frequency‑specific filters, which is beneficial for music signals that exhibit different characteristics in low and high frequencies. Because the original MMDenseNet implementation is not publicly available, the authors re‑implemented it following the specifications in the original paper (FFT size 2048, hop 1024, RMSProp optimizer, bottleneck factor 4, compression factor 0.2, etc.).
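The band-splitting idea can be sketched as follows. With an FFT size of 2048, each STFT frame has 2048/2 + 1 = 1025 non-negative frequency bins. The band edges in `EDGES` are hypothetical, and so are the identity functions standing in for the per-band DenseNets. The sketch only shows how sub-bands are cut along the frequency axis, processed independently, and joined back together.

```python
import numpy as np

N_FFT = 2048
N_BINS = N_FFT // 2 + 1  # 1025 non-negative frequency bins per STFT frame

# Hypothetical band edges (bin indices); not the values used in the paper.
EDGES = [0, 256, 512, N_BINS]

def split_bands(spec, edges):
    """Cut a (freq, time) spectrogram into sub-bands along the frequency axis."""
    return [spec[lo:hi] for lo, hi in zip(edges[:-1], edges[1:])]

def multiband_forward(spec, edges, band_nets):
    """Run each sub-band through its own model, then re-join along frequency."""
    bands = split_bands(spec, edges)
    outputs = [net(band) for net, band in zip(band_nets, bands)]
    return np.concatenate(outputs, axis=0)

# Identity functions stand in for the band-specific DenseNets (placeholders only).
placeholder_nets = [lambda x: x for _ in range(len(EDGES) - 1)]
spec = np.random.rand(N_BINS, 100)  # 100 frames of a random magnitude spectrogram
out = multiband_forward(spec, EDGES, placeholder_nets)
print(out.shape)  # (1025, 100)
```

Each sub-band keeps its own contiguous frequency rows. Concatenating the outputs along axis 0 therefore restores the full 1025-bin range, while each band network only ever sees its own frequency region.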

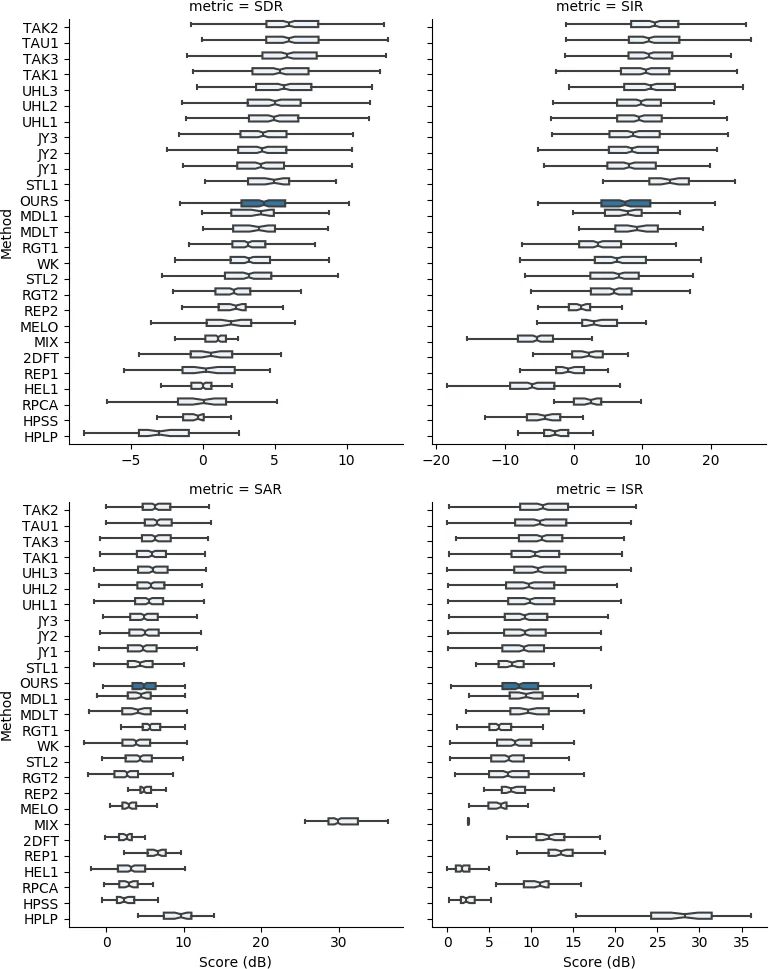

Experiments are conducted on the musdb18 dataset, which provides 100 training tracks and 50 test tracks with isolated ground‑truth stems for vocals, drums, bass, and “other”. The authors focus on three sources (vocals, drums, bass) and evaluate performance using the Signal‑to‑Distortion Ratio (SDR) from the BSS Eval toolkit. For each source they train two versions of the model: one with the pure pixel‑level L2 loss and one with the composite spectrogram loss. To account for stochasticity in training (random initialization, GPU nondeterminism), each configuration is trained four times with different random seeds. The validation loss is monitored, and the epoch with the lowest composite validation loss is selected for the composite‑loss model; for the pixel‑loss model the epoch with the lowest L2 validation loss is used. A two‑sample t‑test is applied to the resulting SDR values to assess statistical significance.
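The selection and testing protocol can be sketched with the standard library. The function names are illustrative. The source does not say which t-test variant was used, so this sketch assumes Student's pooled-variance form. It returns only the t statistic and degrees of freedom; a p-value would come from the t distribution, for example via `scipy.stats.ttest_ind`.

```python
import math
import statistics

def best_epoch(val_losses):
    """Index of the epoch with the lowest validation loss (first one on ties)."""
    return min(range(len(val_losses)), key=val_losses.__getitem__)

def two_sample_t(a, b):
    """Student's two-sample t statistic (pooled variance) and its degrees of freedom.

    Assumption: equal variances in both groups; a Welch test would drop this.
    """
    na, nb = len(a), len(b)
    va, vb = statistics.variance(a), statistics.variance(b)  # sample variances (n - 1)
    pooled = ((na - 1) * va + (nb - 1) * vb) / (na + nb - 2)
    t = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(pooled * (1 / na + 1 / nb))
    return t, na + nb - 2
```

With four seeds per configuration, `a` and `b` would each hold four SDR values, giving 4 + 4 − 2 = 6 degrees of freedom. That small sample is why a gain of a few tenths of a dB can sit right at the edge of significance.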

Results show an improvement for every source when the composite loss is used:

- Vocals: mean SDR rises from 4.32 dB (pixel loss) to 4.59 dB (composite loss). This 0.27 dB gain is statistically significant (p = 0.008).
- Drums: SDR improves from 4.54 dB to 4.78 dB. With p ≈ 0.051, this gain falls just short of the conventional 0.05 significance threshold.
- Bass: SDR improves from 3.92 dB to 4.10 dB, with p < 0.05.

A further experiment used a single‑channel (mono) input, obtained by averaging the stereo channels. It indicates that the benefit of the composite loss persists under a different input configuration.
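Because SDR is measured in decibels, a difference in dB corresponds to a multiplicative change in the underlying power ratio, 10^(ΔdB/10). The sketch below applies that conversion to the mean SDRs reported above. The reported values are means of SDR in dB, so this is only a rough reading of the difference of means, not a per-track result.

```python
# Mean SDRs in dB as reported above: (pixel loss, composite loss).
REPORTED = {"vocals": (4.32, 4.59), "drums": (4.54, 4.78), "bass": (3.92, 4.10)}

def db_to_power_ratio(delta_db):
    """Multiplicative change in power ratio implied by a dB difference."""
    return 10 ** (delta_db / 10)

def gains(reported):
    """Map each source to (gain in dB, implied power-ratio factor)."""
    return {src: (comp - pix, db_to_power_ratio(comp - pix))
            for src, (pix, comp) in reported.items()}

for src, (delta, factor) in gains(REPORTED).items():
    print(f"{src}: +{delta:.2f} dB -> x{factor:.3f}")
```

This prints factors of about 1.064, 1.057 and 1.042. That is roughly 6.4%, 5.7% and 4.2% more signal power relative to distortion power for vocals, drums and bass respectively.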

The authors acknowledge several limitations. VGG‑19 is trained on natural images, so its feature maps are not tailored to audio‑specific characteristics. Consequently, the perceptual loss is more a proxy for visual patterns in spectrograms than a true auditory perceptual metric. They suggest that future work should develop an audio‑domain analogue of VGG, perhaps a network trained on large‑scale audio classification or music tagging tasks, to derive more meaningful feature losses. Additionally, the re‑implemented MMDenseNet lacks some enhancements present in top‑performing submissions to the SiSEC challenge, such as data augmentation, LSTM layers, and source‑specific architectural tweaks. This likely accounts for the modest absolute gap to the state of the art.

In summary, the paper demonstrates that augmenting the standard pixel‑level loss with high‑level spectrogram feature losses extracted from a visual CNN can yield measurable gains in music source separation quality. The work serves as an early proof‑of‑concept that perceptual‑style losses, originally devised for images, can be transferred to the audio domain, and it opens avenues for more sophisticated audio‑centric perceptual loss designs and for integrating such losses into increasingly powerful separation architectures.
