A general-purpose deep learning approach to model time-varying audio effects

Audio processors whose parameters are modified periodically over time are often referred to as time-varying or modulation-based audio effects. Most existing methods for modeling these types of effect units are optimized for a very specific circuit and cannot be efficiently generalized to other time-varying effects. Based on convolutional and recurrent neural networks, we propose a deep learning architecture for generic black-box modeling of audio processors with long-term memory. We explore the capabilities of deep neural networks to learn such long temporal dependencies and we show the network modeling various linear and nonlinear, time-varying and time-invariant audio effects. In order to measure the performance of the model, we propose an objective metric based on the psychoacoustics of modulation frequency perception. We also analyze what the model is actually learning and how the given task is accomplished.

💡 Research Summary

This paper presents a general‑purpose deep learning framework for black‑box modeling of time‑varying (modulation‑based) audio effects. While traditional virtual‑analog approaches rely on detailed circuit analysis and are tailored to specific components (e.g., transistors, diodes), the proposed method learns directly from raw audio without any prior knowledge of the underlying hardware. The architecture consists of three main stages: an adaptive front‑end, a latent space built from bidirectional LSTM layers, and a synthesis back‑end.

The adaptive front‑end receives the current audio frame together with k preceding and k following frames (2k + 1 frames total). It applies a first Conv1D layer with 32 filters of length 64 followed by an absolute‑value activation, which extracts smooth envelope‑like features. A second locally‑connected Conv1D layer with 32 filters of length 128 and a soft‑plus activation refines these features on a per‑frequency‑band basis, dramatically reducing the number of trainable parameters compared with fully‑connected convolutions. Max‑pooling (window size N/64) reduces temporal resolution while preserving the most salient values; the same operations are applied in a time‑distributed manner to each context frame.
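The context windowing described above (the current frame plus k preceding and k following frames) can be illustrated with a minimal NumPy sketch. The function name `frames_with_context`, the `hop` parameter, and the zero-padding at the signal edges are assumptions made for illustration; the summary does not state how the authors handle boundaries or overlap.

```python
import numpy as np

def frames_with_context(x, frame_len, hop, k):
    """Slice x into frames and attach k past and k future frames to each.

    Returns an array of shape (n_frames, 2*k + 1, frame_len), where
    index k along axis 1 is the current frame.
    """
    n_frames = 1 + (len(x) - frame_len) // hop
    frames = np.stack([x[i * hop : i * hop + frame_len] for i in range(n_frames)])
    # Assumption: frames outside the signal are filled with zeros.
    pad = np.zeros((k, frame_len), dtype=frames.dtype)
    padded = np.concatenate([pad, frames, pad], axis=0)
    # Frame i of the original sits at padded[i + k], so padded[i : i + 2k + 1]
    # is centred on it.
    return np.stack([padded[i : i + 2 * k + 1] for i in range(n_frames)])
```

Each slice along the first axis is what a time-distributed front-end would receive for one output frame; the same convolution and pooling weights would then be applied to all 2k + 1 frames in that slice.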

The latent space comprises three stacked bidirectional LSTM (Bi‑LSTM) layers with 64, 32 and 16 units respectively. By processing the sequence forward and backward, the Bi‑LSTMs capture long‑range dependencies required for low‑frequency LFOs (typically < 20 Hz) that modulate effect parameters over several seconds. Dropout (0.1) and recurrent dropout mitigate over‑fitting, while the final Bi‑LSTM uses a Smooth Adaptive Activation Function (SAAF). SAAFs are piecewise second‑order polynomials (25 intervals between –1 and 1) that can approximate any continuous function while being regularised by a Lipschitz constant, providing a smooth, learnable non‑linearity well suited for modeling the subtle, continuous modulation curves of audio effects.
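To make the bidirectional processing concrete, here is a small NumPy sketch of a stacked Bi-LSTM forward pass using the standard LSTM cell equations. It is not the authors' implementation: the weights are random, dropout and the SAAF activation are omitted, and the helper names (`lstm_forward`, `bilstm`, `init_params`, `bilstm_stack`) are hypothetical. It only shows how the forward and backward passes are combined and how unit counts of 64, 32 and 16 shape the output.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_forward(x, W, U, b):
    """Run one LSTM over x of shape (T, d); returns hidden states (T, h)."""
    T, h_dim = x.shape[0], U.shape[0]
    h, c = np.zeros(h_dim), np.zeros(h_dim)
    out = np.zeros((T, h_dim))
    for t in range(T):
        z = x[t] @ W + h @ U + b
        i, f, g, o = np.split(z, 4)            # input, forget, candidate, output
        c = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
        h = sigmoid(o) * np.tanh(c)
        out[t] = h
    return out

def bilstm(x, params_fwd, params_bwd):
    """Concatenate a forward pass and a time-reversed pass: (T, 2h)."""
    fwd = lstm_forward(x, *params_fwd)
    bwd = lstm_forward(x[::-1], *params_bwd)[::-1]
    return np.concatenate([fwd, bwd], axis=1)

def init_params(rng, d, h):
    """Random illustrative weights (not trained)."""
    return (rng.normal(0.0, 0.1, (d, 4 * h)),
            rng.normal(0.0, 0.1, (h, 4 * h)),
            np.zeros(4 * h))

def bilstm_stack(x, units=(64, 32, 16), seed=0):
    """Three stacked Bi-LSTM layers; output has 2 * units[-1] features."""
    rng = np.random.default_rng(seed)
    h = x
    for u in units:
        d = h.shape[1]
        h = bilstm(h, init_params(rng, d, u), init_params(rng, d, u))
    return h
```

Because the backward pass reads the sequence in reverse, the output at the first time step already depends on the last input frame, which is the property that lets the latent space use future context.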

The synthesis back‑end reconstructs the output waveform from the current input frame and the learned modulation representation Ẑ. It first un‑pools Ẑ and multiplies the up‑sampled result element‑wise with the residual connection from the front‑end (R), implementing a frequency‑specific amplitude modulation. The modulated feature map is then processed by a dense block that includes SAAF and Squeeze‑and‑Excitation (SE) layers (DNN‑SAAF‑SE). The SE module adaptively rescales each of the 32 channels based on global average pooling followed by two fully‑connected layers (512 and 32 units) with ReLU and sigmoid activations, thereby modelling inter‑channel dependencies that correspond to frequency‑dependent modulation depth. The block's output is added back to the modulated map (forming a nonlinear delay‑line structure) and finally passed through a transposed convolution whose kernels are the fixed transpose of the first Conv1D filters. The output is assembled with a Hann window and overlap‑add, yielding a time‑domain waveform identical in length to the input. The entire network contains roughly 300 k trainable parameters, making it lightweight enough for real‑time applications.
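The channel rescaling performed by the SE module can be sketched in a few lines of NumPy. This follows only what the summary states (global average pooling, a 512-unit layer with ReLU, a 32-unit layer with sigmoid, then per-channel scaling); the function name `squeeze_excite`, the weight shapes, and the (time, channels) layout are assumptions, and any further details of the authors' block are not reproduced here.

```python
import numpy as np

def squeeze_excite(x, W1, b1, W2, b2):
    """Rescale each channel of x (shape (T, C)) by a learned gain in (0, 1).

    W1: (C, 512), b1: (512,), W2: (512, C), b2: (C,).
    Returns the rescaled feature map and the per-channel gains.
    """
    squeezed = x.mean(axis=0)                           # global average pooling -> (C,)
    hidden = np.maximum(0.0, squeezed @ W1 + b1)        # fully connected + ReLU
    gains = 1.0 / (1.0 + np.exp(-(hidden @ W2 + b2)))   # fully connected + sigmoid
    return x * gains, gains                             # broadcast gains over time
```

With 32 channels, `gains` holds one scalar per channel, so channels the network considers less relevant for the current frame are attenuated as a whole rather than sample by sample.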

To evaluate the model, the authors introduce an objective metric based on the modulation spectrum, which reflects human perception of modulation frequency and depth. This metric complements traditional waveform‑level measures (MSE, SDR) by directly quantifying how accurately the learned system reproduces the LFO‑induced variations that listeners are most sensitive to.
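As a rough illustration of what a modulation-spectrum comparison involves, the sketch below extracts an amplitude envelope, takes its Fourier transform, and compares the normalised spectra of a reference and an estimate. This is a deliberately simplified stand-in and not the authors' metric: the block-averaged envelope, the 100 Hz envelope rate, the function names, and the mean-absolute-difference score are all assumptions.

```python
import numpy as np

def modulation_spectrum(x, sr, env_sr=100):
    """Magnitude spectrum of the amplitude envelope of x.

    The envelope is the rectified signal averaged over blocks of
    sr // env_sr samples, so it is sampled at roughly env_sr Hz.
    """
    block = sr // env_sr
    n_blocks = len(x) // block
    env = np.abs(x[: n_blocks * block]).reshape(n_blocks, block).mean(axis=1)
    env = env - env.mean()                      # remove DC so the LFO peak stands out
    spec = np.abs(np.fft.rfft(env * np.hanning(n_blocks)))
    freqs = np.fft.rfftfreq(n_blocks, d=block / sr)
    return freqs, spec

def modulation_error(ref, est, sr):
    """Mean absolute difference between normalised modulation spectra."""
    _, s_ref = modulation_spectrum(ref, sr)
    _, s_est = modulation_spectrum(est, sr)
    s_ref = s_ref / (s_ref.sum() + 1e-12)
    s_est = s_est / (s_est.sum() + 1e-12)
    return float(np.mean(np.abs(s_ref - s_est)))
```

For a tremolo whose LFO runs at a few hertz, the modulation spectrum shows a peak near the LFO rate, so a model that reproduces the waveform shape but drifts in LFO rate or depth would score worse here even if its waveform-level error looks small.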

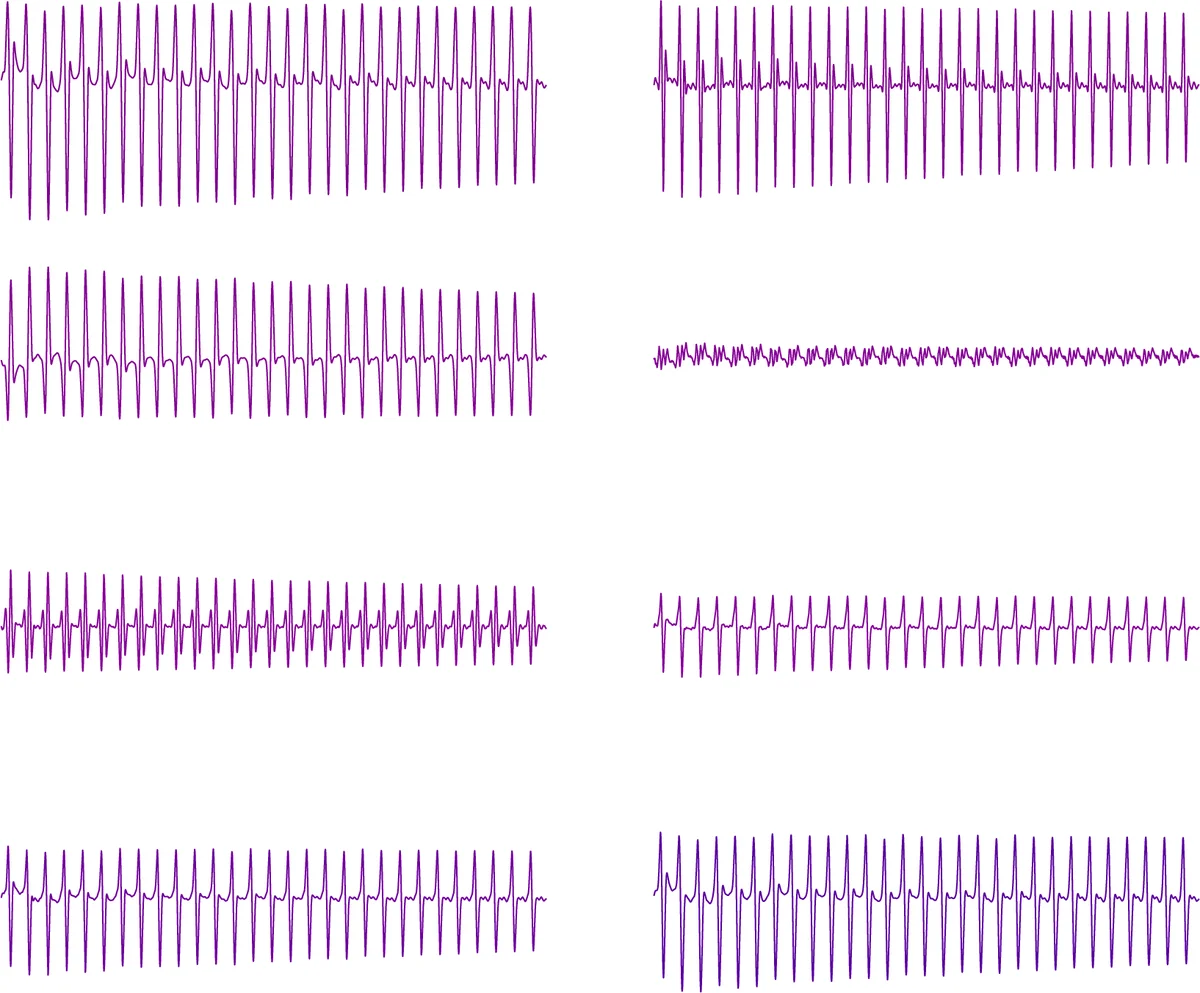

Experiments cover eleven effects: eight modulation‑based units (phaser, wah‑wah, flanger, chorus, tremolo, vibrato, ring modulator, Leslie speaker) and three long‑memory, time‑invariant units (auto‑wah, compressor, multiband compressor). For each effect, a dataset of dry‑wet audio pairs was generated, and the network was trained on 90 % of the data, validated on 5 %, and tested on the remaining 5 %. Across all cases, the proposed model achieved near‑transparent reconstruction: spectrograms, waveform envelopes, and modulation spectra of the synthesized output were virtually indistinguishable from the reference. Subjective listening tests confirmed this, with mean opinion scores (MOS) exceeding 4.5/5 for most effects. Notably, the model successfully captured the complex interaction of amplitude, pitch, and spatial modulation in the Leslie speaker, as well as the nonlinear diode clipping behavior of the ring modulator, demonstrating that the combination of Bi‑LSTM memory and SAAF non‑linearity can learn both linear time‑varying filters and nonlinear static distortions simultaneously.
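The 90 % / 5 % / 5 % partition mentioned above can be reproduced with a short helper. The function name `split_indices`, the random shuffling, and the fixed seed are assumptions; the summary does not say whether the authors shuffled the dry-wet pairs or split them in recording order.

```python
import numpy as np

def split_indices(n_items, train=0.90, val=0.05, seed=0):
    """Return disjoint index arrays for the train, validation and test sets.

    The test set receives whatever remains after train and validation.
    """
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_items)     # assumption: pairs are shuffled
    n_train = round(train * n_items)
    n_val = round(val * n_items)
    return (order[:n_train],
            order[n_train : n_train + n_val],
            order[n_train + n_val :])
```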

A detailed analysis of internal activations shows that specific LSTM cells respond strongly to the LFO phase, while SAAF parameters adapt to the characteristic distortion curves of each effect. The SE layers modulate channel gains in a way that mirrors the frequency‑dependent depth curves of phasers and flangers, providing an interpretable link between learned weights and audio‑engineering concepts.

In summary, the paper delivers a versatile, data‑driven solution for modeling a wide range of time‑varying audio effects, overcoming the limitations of circuit‑centric approaches. The architecture’s modularity (front‑end, recurrent latent space, synthesis back‑end) allows easy extension to multi‑channel or higher‑sample‑rate scenarios, and the proposed modulation‑spectrum metric offers a perceptually meaningful benchmark for future research. Future work may explore real‑time deployment on embedded DSPs, integration of user‑defined LFO waveforms, and the creation of a standardized evaluation suite for modulation‑based effect modeling.
