VoiceFilter: Targeted Voice Separation by Speaker-Conditioned Spectrogram Masking

In this paper, we present a novel system that separates the voice of a target speaker from multi-speaker signals, by making use of a reference signal from the target speaker. We achieve this by training two separate neural networks: (1) A speaker rec…

Authors: Quan Wang, Hannah Muckenhirn, Kevin Wilson

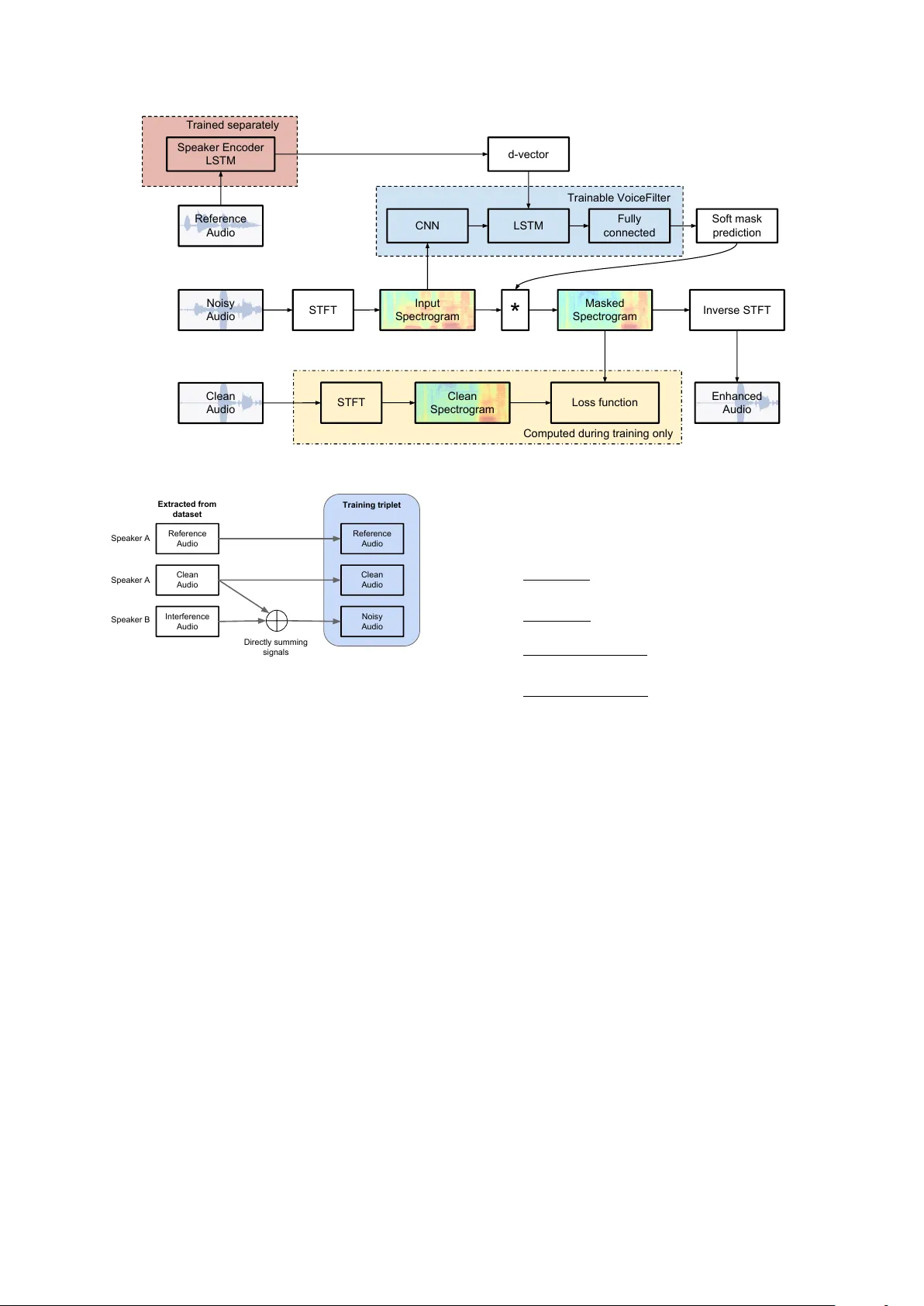

V oiceFilter: T argeted V oice Separation by Speaker -Conditioned Spectrogram Masking Quan W ang * 1 Hannah Muck enhirn * 2,3 K evin W ilson 1 Prashant Sridhar 1 Zelin W u 1 J ohn Hershe y 1 Rif A. Saur ous 1 Ron J . W eiss 1 Y e Jia 1 Ignacio Lopez Mor eno 1 1 Google Inc., USA 2 Idiap Research Institute, Switzerland 3 EPFL, Switzerland quanw@google.com hannah.muckenhirn@idiap.ch Abstract In this paper , we present a novel system that separates the v oice of a target speak er from multi-speaker signals, by making use of a reference signal from the target speaker . W e achiev e this by training two separate neural networks: (1) A speaker recogni- tion network that produces speaker-discriminati ve embeddings; (2) A spectrogram masking netw ork that takes both noisy spec- trogram and speaker embedding as input, and produces a mask. Our system significantly reduces the speech recognition WER on multi-speaker signals, with minimal WER degradation on single-speaker signals. Index T erms : Source separation, speaker recognition, spectro- gram masking, speech recognition 1. Introduction Recent adv ances in speech recognition ha ve led to performance improv ement in challenging scenarios such as noisy and far - field conditions. Ho wever , speech recognition systems still per - form poorly when the speaker of interest is recorded in crowded en vironments, i.e. , with interfering speakers in the foreground or background. One way to deal with this issue is to first apply a speech separation system on the noisy audio in order to separate the voices from different speakers. Therefore, if the noisy signal contains N speakers, this approach would yield N outputs with a potential additional output for the ambient noise. A classical speech separation task like this needs to cope with two main challenges. First, identifying the number of speak ers N in the recording, which in realistic scenarios is unknown. Secondly , the optimization of a speech separation system may be required to be in variant to the permutation of speaker labels, as the order of the speakers should not ha ve an impact during training [ 1 ]. Lev eraging the advances in deep neural networks, several suc- cessful works have been introduced to address these problems, such as deep clustering [ 1 ], deep attractor network [ 2 ], and per- mutation in variant training [ 3 ]. This work addresses the task of isolating the voices of a subset of speakers of interest from the commonality of all the other speakers and noises. For example, such subset can be formed by a single target speaker issuing a spoken query to a personal mobile de vice, or the members of a house talking to a shared home device. W e will also assume that the speaker(s) of interest can be individually characterized by previous refer- ence recordings, e.g. through an enrollment stage. This task is closely related to classical speech separation, but in a way that it is speaker-dependent. In this paper , we will refer to the task of speak er-dependent speech separation as voice filtering (some * Equal contribution. Hannah performed this work as an intern at Google. literature call it speak er extr action ). W e argue that for voice fil- tering, speaker-independent techniques such as those presented in [ 1 , 2 , 3 ] may not be a good fit. In addition to the challenges described previously , these techniques require an extra step to determine which output – out of the N possible outputs of the speech separation system – corresponds to the target speaker(s), by e.g. choosing the loudest speaker , running a speaker verifi- cation system on the outputs, or matching a specific keyw ord. A more end-to-end approach for the voice filtering task is to treat the problem as a binary classification problem, where the positive class is the speech of the speaker of interest, and the negativ e class is formed by the combination of all fore- ground and background interfering speakers and noises. By speaker -conditioning the system, this approach suppresses the three aforementioned challenges: unknown number of speak- ers, permutation problem, and selection from multiple outputs. In this work, we aim to condition the system on the speaker embedding vector of a reference recording. The proposed ap- proach is the following. W e first train a LSTM-based speaker encoder to compute robust speaker embedding v ectors. W e then train separately a time-frequency mask-based system that takes two inputs: (1) the embedding vector of the target speaker , pre- viously computed with the speaker encoder; and (2) the noisy multi-speaker audio. This system is trained to remove the inter- fering speakers and output only the v oice of the target speaker . 1 This approach can be easily extended to more than one speaker of interest by repeating the process in turns, for the reference recording of each target speak er . Similar related literature exists for the task of voice filter- ing. For example, in [ 4 , 5 ], the authors achiev ed impressive results by doing an indirect speaker conditioning of the system on the visual information (lips movement). Howe ver , a solution like that would require simultaneously using speech and visual information, which may not be av ailable in certain type of appli- cations, where a reference speech signal may be more practical. The systems proposed in [ 6 , 7 , 8 , 9 ] are also very similar to ours, with a few major differences: (1) Unlike using one-hot vectors, i-vectors or speaker posteriors deriv ed from a cross- entropy classification network, our speaker encoder network is specifically designed for large-scale end-to-end speaker recog- nition [ 10 ], which proves to perform much better in speaker recognition tasks, especially for unseen speakers. (2) Instead of using a GEV beamformer [ 6 , 8 ], our system directly opti- mizes the power -law compressed reconstruction error between the clean and enhanced signals [ 11 ]. (3) In addition to source- to-distortion ratio [ 6 , 7 ], we focus on W ord Error Rate improv e- ments for ASR systems. (4) W e use dilated con volutional lay- ers to capture low-le vel acoustic features more effecti vely . (5) 1 Samples of output audios are av ailable at: https://google. github.io/speaker- id/publications/VoiceFilter W e prefer separately trained speaker encoder network over joint training like [ 8 , 9 ], due to the very different requirements for data in speaker recognition and source separation tasks. The rest of this paper is organized as follows. In Section 2 , we describe our approach to the problem, and provide the de- tails of how we train the neural networks. In Section 3 , we describe our experimental setup, including the datasets we use and the evaluation metrics. The experimental results are pre- sented in Section 4 . W e draw our conclusions in Section 5 , with discussions on future work directions. 2. A pproach The system architecture is sho wn in Fig. 1 . The system consists of two separately trained components: the speaker encoder (in red), and the V oiceFilter system (in blue), which uses the output of the speak er encoder as an additional input. In this section, we will describe these two components. 2.1. Speak er encoder The purpose of the speak er encoder is to produce a speak er em- bedding from an audio sample of the target speaker . This sys- tem is based on a recent work from W an et al. [ 10 ], which achiev es great performance on both text-dependent and text- independent speak er v erification tasks, as well as on speak er di- arization [ 12 , 13 ], multispeaker TTS [ 14 ], and speech-to-speech translation [ 15 ]. The speaker encoder is a 3-layer LSTM network trained with the generalized end-to-end loss [ 10 ]. It takes as inputs log-mel filterbank energies extracted from windows of 1600 ms, and outputs speaker embeddings, called d-vectors , which have a fixed dimension of 256. T o compute a d-vector on one utter- ance, we extract sliding windows with 50% overlap, and aver - age the L2-normalized d-vectors obtained on each windo w . 2.2. V oiceFilter system The V oiceFilter system is based on the recent work of Wilson et al. [ 11 ], developed for speech enhancement. As shown in Fig. 1 , the neural network takes two inputs: a d-v ector of the target speaker , and a magnitude spectr ogram computed from a noisy audio. The network predicts a soft mask, which is element-wise multiplied with the input (noisy) magnitude spec- trogram to produce an enhanced magnitude spectrogram. T o obtain the enhanced waveform, we directly merge the phase of the noisy audio to the enhanced magnitude spectrogram, and apply an in verse STFT on the result. The network is trained to minimize the difference between the masked magnitude spec- trogram and the target magnitude spectrogram computed from the clean audio. The V oiceFilter network is composed of 8 con volutional layers, 1 LSTM layer , and 2 fully connected layers, each with ReLU activ ations except the last layer , which has a sigmoid ac- tiv ation. The values of the parameters are provided in T able 1 . The d-v ector is repeatedly concatenated to the output of the last con volutional layer in e very time frame. The resulting con- catenated vector is then fed as the input to the follo wing LSTM layers. W e decide to inject the d-vector between the con vo- lutional layers and the LSTM layer and not before the con vo- lutional layers for two reasons. First, the d-vector is already a compact and rob ust representation of the target speaker , thus we do not need to modify it by applying con volutional layers on top of it. Secondly , conv olutional layers assume time and frequenc y homogeneity , and thus cannot be applied on an input composed T able 1: P arameters of the V oiceF ilter network. Layer Width Dilation Filters / Nodes time freq time freq CNN 1 1 7 1 1 64 CNN 2 7 1 1 1 64 CNN 3 5 5 1 1 64 CNN 4 5 5 2 1 64 CNN 5 5 5 4 1 64 CNN 6 5 5 8 1 64 CNN 7 5 5 16 1 64 CNN 8 1 1 1 1 8 LSTM - - - - 400 FC 1 - - - - 600 FC 2 - - - - 600 of two completely different signals: a magnitude spectrogram and a speaker embedding. While training the V oiceFilter system, the input audios are divided into segments of 3 seconds each and are con verted, if necessary , to single channel audios with a sampling rate of 16 kHz. 3. Experimental setup In this section, we describe our experimental setup: the data used to train the two components of the system separately , as well as the metrics to assess the systems. 3.1. Data 3.1.1. Datasets Speaker encoder: Although our speaker encoder network has exactly the same network topology as the text-independent model described in [ 10 ], we use more training data in this sys- tem. Our speaker encoder is trained with two datasets com- bined by the MultiReader technique introduced in [ 10 ]. The first dataset consists of anonymized voice query logs in English from mobile and farfield devices. It has about 34 million utter- ances from about 138 thousand speakers. The second dataset consists of LibriSpeech [ 16 ], V oxCeleb [ 17 ], and V oxCeleb2 [ 18 ]. This model (referred to as “d-vector V2” in [ 13 ]) has a 3.06% equal error rate (EER) on our internal en-US phone au- dio test dataset, compared to the 3.55% EER of the one reported in [ 10 ]. V oiceFilter: W e cannot use a “standard” benchmark cor- pus for speech separation, such as one of the CHiME chal- lenges [ 19 ], because we need a clean reference utterance of each target speaker in order to compute speaker embeddings. Instead, we train and ev aluate the V oiceFilter system on our own generated data, deriv ed either from the VCTK dataset [ 20 ] or from the LibriSpeech dataset [ 16 ]. F or VCTK, we randomly take 99 speakers for training, and 10 speakers for testing. For LibriSpeech, we used the training and de velopment sets defined in the protocol of the dataset: the training set contains 2338 speakers, and the dev elopment set contains 73 speakers. These two datasets contain read speech, and each utterance contains the v oice of one speak er . W e e xplain in the ne xt section how we generate the data used to train the V oiceFilter system. 3.1.2. Data generation From the system diagram in Fig. 1 , we see that one training step inv olves three inputs: (1) the clean audio from the target speaker , which is the ground truth; (2) the noisy audio contain- ing multiple speakers; and (3) a reference audio from the target Input Spectrogram Trainable VoiceFilter LSTM Fully connected Soft mask prediction CNN Trained separately Reference Audio Speaker Encoder LSTM d-vector STFT STFT Computed during training only Loss function * Inverse STFT Clean Spectrogram Masked Spectrogram Clean Audio Noisy Audio Enhanced Audio Figure 1: System arc hitectur e. Clean Audio Reference Audio Noisy Audio Training triplet Interference Audio Clean Audio Reference Audio Extracted from dataset Speaker A Directly summing signals Speaker A Speaker B Figure 2: Input data pr ocessing workflow . speaker (different from the clean audio) ov er which the d-vector will be computed. This training triplet can be obtained by using three audios from a clean dataset, as shown in Fig. 2 . The reference au- dio is picked randomly among all the utterances of the target speaker , and is different from the clean audio. The noisy audio is generated by mixing the clean audio and an interfering audio randomly selected from a different speaker . More specifically , it is obtained by directly summing the clean audio and the inter- fering audio, then trimming the result to the length of the clean audio. W e hav e also tried to multiply the interfering audio by a ran- dom weight following a uniform distribution either within [0 , 1] or within [0 , 2] . Howe ver , this did not affect the performance of the V oiceFilter system in our experiments. 3.2. Evaluation T o ev aluate the performance of different V oiceFilter mod- els, we use two metrics: the speech recognition W ord Error Rate (WER) and the Source to Distortion Ratio (SDR). 3.2.1. W or d err or rate As mentioned in Sec. 1 , the main goal of our system is to improve speech recognition. Specifically , we want to re- duce the WER in multi-speaker scenarios, while preserving the same WER in single-speaker scenarios. The speech recognizer we use for WER ev aluation is a version of the con ventional phone models discussed in [ 21 ], which is trained on a Y ouTube dataset. For each V oiceFilter model, we care about four WER num- bers: • Clean WER: W ithout V oiceFilter , the WER on the clean audio. • Noisy WER: Without V oiceFilter, the WER on the noisy (clean + interence) audio. • Clean-enhanced WER: the WER on the clean audio pro- cessed by the V oiceFilter system. • Noisy-enhanced WER: the WER on the noisy audio pro- cessed by the V oiceFilter system. A good V oiceFilter model should hav e these tw o properties: 1. Noisy-enhanced WER is significantly lower than Noisy WER, meaning that the V oiceFilter is improving speech recognition in multi-speaker scenarios. 2. Clean-enhanced WER is very close to Clean WER, meaning that the V oiceFilter has minimal negati ve im- pact on single-speaker scenarios. 3.2.2. Source to distortion r atio The SDR is a very common metric to e v aluate source separation systems [ 22 ], which requires to know both the clean signal and the enhanced signal. It is an energy ratio, expressed in dB, be- tween the energy of the target signal contained in the enhanced signal and the ener gy of the errors (coming from the interfering speakers and artifacts). Thus, the higher it is, the better . 4. Results 4.1. W ord error rate In T able 2 , we present the results of V oiceFilter models trained and ev aluated on the LibriSpeech dataset. The architecture of the V oiceFilter system is shown in T able 1 , with a few dif ferent variations of the LSTM layer: (1) no LSTM layer , i.e . , only con- volutional layers directly followed by fully connected layers; (2) a uni-directional LSTM layer; (3) a bi-directional LSTM layer . In general, after applying V oiceFilter , the WER on the T able 2: Speech recognition WER on LibriSpeech. V oiceF ilter is trained on LibriSpeec h. V oiceFilter Model Clean Noisy WER (%) WER (%) No V oiceFilter 10.9 55.9 V oiceFilter: no LSTM 12.2 35.3 V oiceFilter: LSTM 12.2 28.2 V oiceFilter: bi-LSTM 11.1 23.4 T able 3: Speech r ecognition WER on VCTK. LSTM layer is uni- dir ectional. Model arc hitectur e is shown in T able 1 . V oiceFilter Model Clean Noisy WER (%) WER (%) No V oiceFilter 6.1 60.6 T rained on VCTK 21.1 37.0 T rained on LibriSpeech 5.9 34.3 noisy data is significantly lo wer than before, while the WER on the clean dataset remains close to before. There is a significant gap between the first and second model, meaning that process- ing the data sequentially with an LSTM is an important compo- nent of the system. Morev er , using a bi-directional LSTM layer we achieve the best WER on the noisy data. W ith this model, applying the V oiceFilter system on the noisy data reduces the speech recognition WER by a relativ e 58.1%. In the clean sce- nario, the performance degradation caused by the V oiceFilter system is very small: the WER is 11.1% instead of 10.9%. In T able 3 , we present the WER results of V oiceFilter mod- els ev aluated on the VCTK dataset. With a V oiceFilter model trained also on VCTK, the WER on the noisy data after applying V oiceFilter is significantly lo wer than before, reduced relati vely by 38.9%. Howe ver , the WER on the clean data after applying V oiceFilter is significantly higher . This is mostly because the VCTK training set is too small, containing only 99 speakers. If we use a V oiceFilter model trained on LibriSpeech instead, the WER on the noisy dataset further decreases, while the WER on the clean data reduces to 5.9%, which is ev en smaller than before applying V oiceFilter . This means: (1) The V oiceFilter model is able to generalize from one dataset to another; (2) W e are improving the acoustic quality of the original clean audios, ev en if we did not explicitly train it this way . Note that the LibriSpeech training set contains about 20 times more speakers than VCTK (2338 speakers instead of 99 speakers), which is the major difference between the two mod- els sho wn in T able 3 . Thus, the results also imply that we could further improve our V oiceFilter model by training it with ev en more speakers. 4.2. Source to distortion ratio W e present the SDR numbers in T able 4 . The results follow the same trend as the WER in T able 2 . The bi-directional LSTM approach in the V oiceFilter achie ves the highest SDR. W e also compare the V oiceFilter results to a speech sepa- ration model that uses the permutation inv ariant loss [ 3 ]. This model has the same architecture as the V oiceFilter system (with a bi-directional LSTM), presented in T able 1 , but is not fed with speaker embeddings. Instead, it separates the noisy input into two components, corresponding to the clean and the interfering audio, and chooses the output that is the closest to the ground truth, i.e. , with the lowest SDR. This system can be seen as an “oracle” system as it knows both the number of sources con- tained in the noisy signal as well as the ground truth clean sig- T able 4: Sour ce to distortion ratio on LibriSpeech. Unit is dB. P ermIn v stands for permutation in variant loss [ 3 ]. Mean SDR for “No V oiceFilter” is high since some clean signals ar e mixed with silent parts of interfer ence signals. V oiceFilter Model Mean SDR Median SDR No V oiceFilter 10.1 2.5 V oiceFilter: no LSTM 11.9 9.7 V oiceFilter: LSTM 15.6 11.3 V oiceFilter: bi-LSTM 17.9 12.6 PermIn v: bi-LSTM 17.2 11.9 nal. As explained in Section 1 , using such a system in practice would require to: 1) estimate how many speakers are in the noisy input, and 2) choose which output to select, e.g. by run- ning a speaker v erification system on each output (which might not be efficient if there are a lot of interfering speak ers). W e observe that the V oiceFilter system outperforms the per - mutation inv ariant loss based system. This shows that not only our system solves the two aforementioned issues, but using a speaker embedding also improves the capability of the system to extract the source of interest (with a higher SDR). 4.3. Discussions In T able 2 , we tried a few variants of the V oiceFilter model on LibriSpeech, and the best WER performance was achieved with a bi-directional LSTM. Howe ver , it is likely that a similar per- formance could be achiev ed by adding more layers or nodes to uni-directional LSTM. Future work includes exploring more variants and fine-tuning the hyper-parameters to achieve better performance with lower computational cost, but that is beyond the focus of this paper . 5. Conclusions and future work In this paper , we have demonstrated the ef fectiv eness of us- ing a discriminatively-trained speaker encoder to condition the speech separation task. Such a system is more applicable to real scenarios because it does not require prior kno wledge about the number of speakers and av oids the permutation problem. W e hav e sho wn that a V oiceFilter model trained on the LibriSpeech dataset reduces the speech recognition WER from 55.9% to 23.4% in two-speaker scenarios, while the WER stays approxi- mately the same on single-speaker scenarios. This system could be improved by taking a few steps: (1) training on larger and more challenging datasets such as V ox- Celeb 1 and 2 [ 18 ]; (2) adding more interfering speakers; and (3) computing the d-vectors over several utterances instead of only one to obtain more robust speaker embeddings. Another interesting direction would be to train the V oiceFilter system to perform joint voice separation and speech enhancement, i.e. , to remov e both the interfering speakers and the ambient noise. T o do so, we could add different noises when mixing the clean au- dio with interfering utterances. This approach will be part of future in vestigations. Finally , the V oiceFilter system could also be trained jointly with the speech recognition system to further increase the WER improv ement. 6. Acknowledgements The authors would like to thank Seungwon P ark for open sourc- ing a third-party implementation of this system. 2 W e would like to thank Y iteng (Arden) Huang, Jason Pelecanos, and Fadi Biadsy for the helpful discussions. 2 https://github.com/mindslab- ai/voicefilter 7. References [1] J. R. Hershey , Z. Chen, J. Le Roux, and S. W atanabe, “Deep clus- tering: Discriminative embeddings for segmentation and separa- tion, ” in International Confer ence on Acoustics, Speech and Sig- nal Pr ocessing (ICASSP) . IEEE, 2016, pp. 31–35. [2] Z. Chen, Y . Luo, and N. Mesgarani, “Deep attractor network for single-microphone speaker separation, ” in International Con- fer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2017. [3] D. Y u, M. K olbæk, Z.-H. T an, and J. Jensen, “Permutation in- variant training of deep models for speaker -independent multi- talker speech separation, ” in International Conference on Acous- tics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2017, pp. 241–245. [4] A. Ephrat, I. Mosseri, O. Lang, T . Dekel, K. W ilson, A. Hassidim, W . T . Freeman, and M. Rubinstein, “Looking to listen at the cock- tail party: A speaker-independent audio-visual model for speech separation, ” in SIGGRAPH , 2018. [5] T . Afouras, J. S. Chung, and A. Zisserman, “The conversation: Deep audio-visual speech enhancement, ” in Interspeech , 2018. [6] K. Zmolikova, M. Delcroix, K. Kinoshita, T . Higuchi, A. Ogawa, and T . Nakatani, “Speaker-aw are neural network based beam- former for speaker e xtraction in speech mixtures, ” in Interspeec h , 2017. [7] J. W ang, J. Chen, D. Su, L. Chen, M. Y u, Y . Qian, and D. Y u, “Deep extractor network for target speaker recovery from sin- gle channel speech mixtures, ” arXiv pr eprint arXiv:1807.08974 , 2018. [8] K. ˇ Zmol ´ ıkov ´ a, M. Delcroix, K. Kinoshita, T . Higuchi, A. Ogawa, and T . Nakatani, “Learning speak er representation for neu- ral network based multichannel speaker e xtraction, ” in A uto- matic Speech Recognition and Understanding W orkshop (ASR U) . IEEE, 2017, pp. 8–15. [9] M. Delcroix, K. Zmolikov a, K. Kinoshita, A. Ogaw a, and T . Nakatani, “Single channel target speaker extraction and recog- nition with speaker beam, ” in International Confer ence on Acous- tics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2018, pp. 5554–5558. [10] L. W an, Q. W ang, A. Papir , and I. L. Moreno, “Generalized end- to-end loss for speaker verification, ” in International Confer ence on Acoustics, Speech and Signal Processing (ICASSP) . IEEE, 2018, pp. 4879–4883. [11] K. W ilson, M. Chinen, J. Thorpe, B. Patton, J. Hershey , R. A. Saurous, J. Skoglund, and R. F . L yon, “Exploring tradeoffs in models for low-latenc y speech enhancement, ” in International W orkshop on Acoustic Signal Enhancement (IW AENC) . IEEE, 2018, pp. 366–370. [12] Q. W ang, C. Do wney , L. W an, P . A. Mansfield, and I. L. Moreno, “Speaker diarization with lstm, ” in International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2018, pp. 5239–5243. [13] A. Zhang, Q. W ang, Z. Zhu, J. Paisley , and C. W ang, “Fully su- pervised speaker diarization, ” arXiv preprint , 2018. [14] Y . Jia, Y . Zhang, R. J. W eiss, Q. W ang, J. Shen, F . Ren, Z. Chen, P . Nguyen, R. Pang, I. L. Moreno et al. , “T ransfer learning from speaker verification to multispeaker text-to-speech synthesis, ” in Confer ence on Neural Information Pr ocessing Systems (NIPS) , 2018. [15] Y . Jia, R. J. W eiss, F . Biadsy , W . Macherey , M. Johnson, Z. Chen, and Y . W u, “Direct speech-to-speech translation with a sequence- to-sequence model, ” arXiv preprint , 2019. [16] V . Panayoto v , G. Chen, D. Povey , and S. Khudanpur , “Lib- rispeech: an asr corpus based on public domain audio books, ” in International Confer ence on Acoustics, Speech and Signal Pr o- cessing (ICASSP) . IEEE, 2015, pp. 5206–5210. [17] A. Nagrani, J. S. Chung, and A. Zisserman, “V oxceleb: a large- scale speaker identification dataset, ” in Interspeech , 2017. [18] J. S. Chung, A. Nagrani, and A. Zisserman, “V oxceleb2: Deep speaker recognition, ” in Interspeech , 2018. [19] J. P . Barker , R. Marxer , E. Vincent, and S. W atanabe, “The CHiME challenges: Robust speech recognition in e veryday envi- ronments, ” in New Er a for Rob ust Speec h Reco gnition . Springer , 2017, pp. 327–344. [20] C. V eaux, J. Y amagishi, K. MacDonald et al. , “Superseded-cstr vctk corpus: English multi-speaker corpus for cstr voice cloning toolkit, ” 2016. [21] H. Soltau, H. Liao, and H. Sak, “Neural speech recognizer: Acoustic-to-word lstm model for large vocab ulary speech recog- nition, ” in Interspeech , 2017. [22] E. V incent, R. Gribon val, and C. F ´ evotte, “Performance measure- ment in blind audio source separation, ” IEEE transactions on au- dio, speech, and language processing , vol. 14, no. 4, pp. 1462– 1469, 2006.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment