Network Representation Learning: Consolidation and Renewed Bearing

Graphs are a natural abstraction for many problems where nodes represent entities and edges represent a relationship across entities. An important area of research that has emerged over the last decade is the use of graphs as a vehicle for non-linear dimensionality reduction in a manner akin to previous efforts based on manifold learning with uses for downstream database processing, machine learning and visualization. In this systematic yet comprehensive experimental survey, we benchmark several popular network representation learning methods operating on two key tasks: link prediction and node classification. We examine the performance of 12 unsupervised embedding methods on 15 datasets. To the best of our knowledge, the scale of our study – both in terms of the number of methods and number of datasets – is the largest to date. Our results reveal several key insights about work-to-date in this space. First, we find that certain baseline methods (task-specific heuristics, as well as classic manifold methods) that have often been dismissed or are not considered by previous efforts can compete on certain types of datasets if they are tuned appropriately. Second, we find that recent methods based on matrix factorization offer a small but relatively consistent advantage over alternative methods (e.g., random-walk based methods) from a qualitative standpoint. Specifically, we find that MNMF, a community preserving embedding method, is the most competitive method for the link prediction task. While NetMF is the most competitive baseline for node classification. Third, no single method completely outperforms other embedding methods on both node classification and link prediction tasks. We also present several drill-down analysis that reveals settings under which certain algorithms perform well (e.g., the role of neighborhood context on performance) – guiding the end-user.

💡 Research Summary

This paper presents the most extensive systematic benchmark of unsupervised network representation learning (NRL) methods to date. The authors evaluate twelve widely used embedding algorithms—Laplacian Eigenmaps, DeepWalk, Node2Vec, GraRep, NetMF, M‑NMF, HOPE, LINE, and several classic baselines—across fifteen heterogeneous graph datasets that vary in size, density, directionality, and weighting. Two downstream tasks are considered: link prediction and node classification. For each method, the authors use the original authors’ code whenever possible, conduct exhaustive hyper‑parameter searches (embedding dimension, walk length, number of walks, window size, negative sampling, regularization parameters, etc.), and repeat experiments with multiple random train‑test splits (10 %/90 % and 50 %/50 %) to report averaged results, thereby addressing the reproducibility gaps that have plagued prior work.

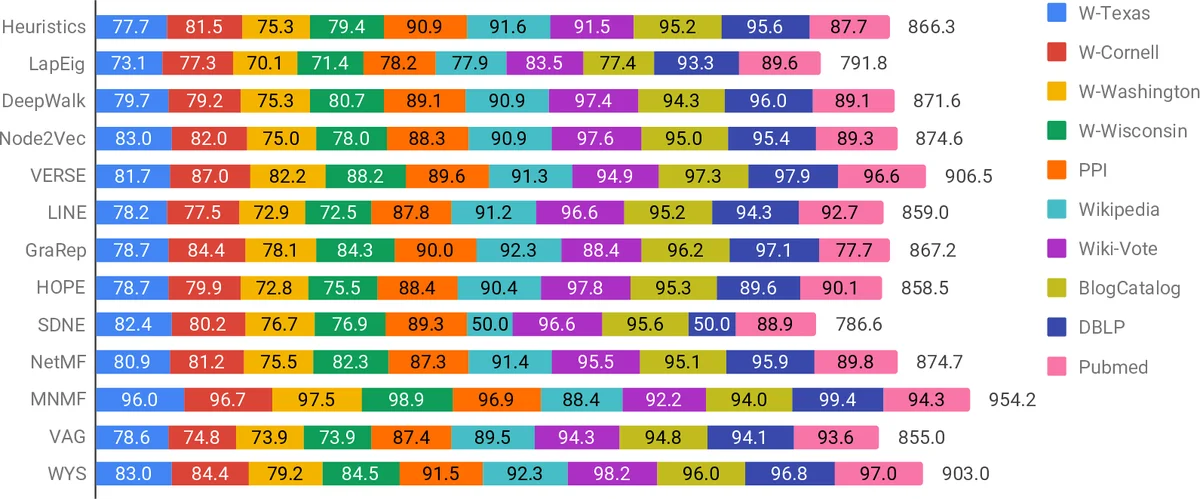

Key findings are as follows. First, matrix‑factorization‑based approaches show a modest but consistent edge over random‑walk‑based methods. Specifically, M‑NMF, which jointly preserves first‑order, second‑order, and community‑level structure, achieves the highest AUC on link‑prediction across most datasets. NetMF, which analytically derives the DeepWalk co‑occurrence matrix and factorizes it via SVD, consistently yields the best Macro‑F1 scores for multi‑label node classification. Second, classic baselines that are often dismissed—such as simple heuristic link‑prediction scores (common neighbors, Adamic‑Adar) and traditional manifold learning (Laplacian Eigenmaps)—can be highly competitive when their hyper‑parameters (e.g., logistic‑regression regularization for Eigenmaps) are carefully tuned. Third, no single method dominates both tasks; performance is strongly influenced by graph characteristics (size, sparsity, label cardinality) and by the evaluation protocol. For instance, on sparse graphs with few labels, heuristic methods outperform sophisticated embeddings, while on dense, richly labeled graphs, matrix‑factorization methods excel.

The authors also dissect methodological nuances. They compare two common link‑prediction evaluation strategies: (i) training a logistic‑regression classifier on concatenated node embeddings, and (ii) ranking candidate edges by the dot product of embeddings. The classifier‑based approach generally yields higher accuracy but is computationally expensive for large graphs, whereas dot‑product ranking scales well and is more realistic for production systems. Additionally, they study the effect of context window size: larger windows benefit random‑walk‑based models but have diminishing returns for factorization‑based methods. The paper highlights that many prior studies suffer from “tuning strawman” bias—new methods are heavily tuned while baselines receive default settings—leading to misleading conclusions. By applying uniform, exhaustive tuning to all methods, the authors demonstrate that baseline performance can close much of the reported gaps.

Beyond empirical results, the paper makes several methodological contributions. It proposes a standardized benchmark protocol (fixed dataset splits, multiple random seeds, reporting of confidence intervals), releases all code, scripts, and hyper‑parameter logs, and provides a detailed taxonomy of graph characteristics that correlate with method performance. The authors argue that future NRL research should adopt these standards to enable fair comparison and to focus on genuine algorithmic advances rather than incremental tuning tricks.

Limitations are acknowledged: the study is confined to non‑attributed graphs, omitting recent attribute‑aware graph neural networks (e.g., GraphSAGE, GAT). Moreover, the benchmark does not include supervised or semi‑supervised GNNs, which are increasingly popular for the same downstream tasks. Nonetheless, the work offers a valuable reference point for practitioners deciding which unsupervised embedding to deploy and for researchers seeking to understand where current methods succeed or fail.

In summary, this comprehensive survey demonstrates that (1) matrix‑factorization methods (M‑NMF for link prediction, NetMF for node classification) are currently the most robust unsupervised embeddings, (2) well‑tuned classic baselines can rival or surpass many recent methods, and (3) rigorous, standardized evaluation—covering hyper‑parameter search, multiple splits, and clear reporting—is essential for credible progress in network representation learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment