Source Generator Attribution via Inversion

With advances in Generative Adversarial Networks (GANs) leading to dramatically-improved synthetic images and video, there is an increased need for algorithms which extend traditional forensics to this new category of imagery. While GANs have been shown to be helpful in a number of computer vision applications, there are other problematic uses such as `deep fakes’ which necessitate such forensics. Source camera attribution algorithms using various cues have addressed this need for imagery captured by a camera, but there are fewer options for synthetic imagery. We address the problem of attributing a synthetic image to a specific generator in a white box setting, by inverting the process of generation. This enables us to simultaneously determine whether the generator produced the image and recover an input which produces a close match to the synthetic image.

💡 Research Summary

The paper addresses the emerging forensic challenge of attributing synthetic images—particularly those generated by GANs and auto‑encoders—to their specific source generators. Traditional image forensics rely on low‑level physical cues such as sensor pattern noise (PRNU) to identify camera provenance, but synthetic imagery lacks any physical capture process, rendering those cues unavailable. The authors therefore propose a unified framework that simultaneously solves two problems: (1) limited source attribution—determining which of a known set of generators produced a given probe image, and (2) generator inversion—recovering the latent vector z that, when fed into the identified generator, reproduces the probe as closely as possible.

Mathematically, each generator Gᵢ is a deterministic mapping from a low‑dimensional latent space (vector z) to a high‑dimensional image space. For a probe image I, the loss Lᵢ(z)=‖I−Gᵢ(z)‖²/(M·N) is minimized with respect to z. This optimization is performed independently for every candidate generator. The generator that yields the smallest minimum loss Lᵢ^min is selected as the source, and the corresponding ẑ =arg min Lᵢ(z) is taken as the recovered latent code. Because neural networks are differentiable, the loss can be optimized efficiently with gradient‑based methods; the authors use the Adam optimizer.

A key practical difficulty is the non‑convex nature of the loss landscape, which can trap optimization in local minima. To mitigate this, the authors employ a multi‑start strategy: each inversion is run multiple times from different random initializations of z, and the best result (lowest loss) is kept. To quantify the confidence of an attribution decision, they define a normalized score

S = (Lⱼ^min – Lᵢ^min) / (Lⱼ^min + Lᵢ^min)

which ranges from +1 (perfect reconstruction by i) to –1 (perfect reconstruction by j) and 0 when both generators perform equally. By sweeping a threshold on S, they generate Receiver Operating Characteristic (ROC) curves and compute the Area Under the Curve (AUC) as a performance metric.

The experimental evaluation proceeds in two parts. First, the authors train two fully‑connected auto‑encoders on the MNIST digit dataset. Each auto‑encoder has a 32‑dimensional latent space and a symmetric decoder that serves as a frozen generator. Four scenarios are examined: (a) non‑overlapping training data (one generator sees only even digits, the other only odd digits); (b) identical data but different training order; (c) identical data, identical order, but different random weight initializations; and (d) the same as (c) with JPEG compression applied to the probe images. In the non‑overlapping case, the method achieves near‑perfect AUC≈0.999, demonstrating that distinct data distributions produce clearly separable manifolds. When the data are identical, performance degrades modestly (AUC≈0.96–0.98 for different order, ≈0.70–0.85 for identical order) but remains well above random guessing. JPEG compression introduces quantization noise that shifts the probe off the generator’s manifold; nevertheless, multi‑start inversion still yields AUC≈0.90–0.94, indicating robustness to typical forensic perturbations.

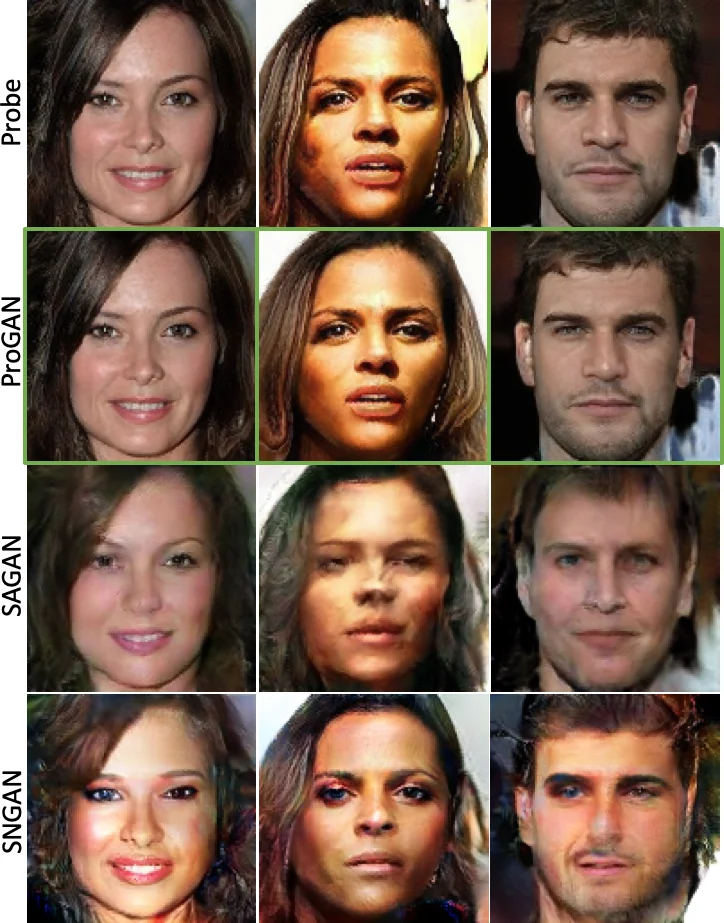

The second part of the study scales the approach to high‑resolution facial imagery generated by ProGAN. Two ProGAN models (latent dimension 512, output resolution 1024×1024) are trained on the same dataset. Despite the vastly larger image dimensionality (≈3 million pixels) and the complex, highly non‑linear manifolds involved, the inversion‑based attribution still achieves AUC≈0.94–0.97. Moreover, the recovered latent vectors allow the authors to reconstruct images that are visually indistinguishable from the originals, thereby providing not only a binary attribution decision but also a plausible reconstruction of the generation process—a valuable asset for forensic explainability.

The authors discuss several limitations. The method assumes a white‑box setting: the architecture and weights of all candidate generators must be known in advance, which may not hold in real‑world investigations where only black‑box access is available. The inversion step is computationally intensive, especially for large generators, because each probe requires multiple gradient‑based optimizations. Finally, when two generators are extremely similar—identical architecture, identical training data, identical order, and only differing by random seed—their output manifolds can overlap substantially, leading to occasional mis‑attributions.

Future work suggested includes extending the framework to black‑box scenarios via meta‑learning or surrogate models, developing more efficient initialization schemes (e.g., using encoder networks trained to predict z directly), and incorporating probabilistic modeling to handle sets of many generators simultaneously. The authors also note the potential for integrating this technique with existing deep‑fake detection pipelines, thereby enriching detection with source provenance information.

In summary, the paper presents a novel, gradient‑based inversion approach for source generator attribution that works across a range of difficulty levels—from clearly distinct training data to nearly identical generators—and remains robust to common image degradations such as JPEG compression. By coupling attribution with latent‑code recovery, the method offers both a decision and an explanatory reconstruction, advancing the state of the art in synthetic‑image forensics and opening avenues for practical deployment in digital evidence analysis, copyright enforcement, and deep‑fake mitigation.

Comments & Academic Discussion

Loading comments...

Leave a Comment