Statistical time analysis for regular events with high count rate

In physics, one frequently needs to measure the count rate of a process precisely. Quite often one must account for electronics dead time, pile-up, and other features of the data acquisition system to avoid systematic shifts of the count rate. In this article, we present a statistical method that reduces or completely eliminates systematic errors arising from correlations between events. We also present examples of applying this method to the analysis of the “Troitsk nu-mass” and “Tristan in Troitsk” experiments.

💡 Research Summary


The paper addresses a common problem in experimental physics: accurately determining the count rate of a process when the data acquisition system introduces systematic distortions such as electronic dead time, pulse pile‑up, and irregular background bursts. The traditional method—simply dividing the total number of recorded events by the measurement duration—ignores the timing information of individual events and therefore cannot correct for these distortions.

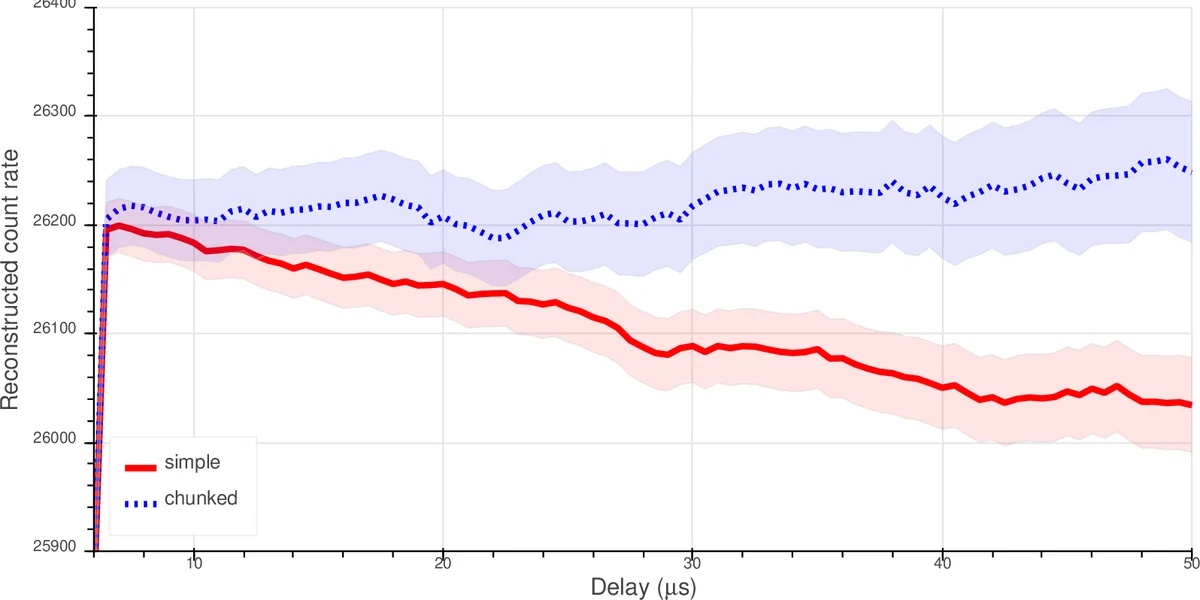

The authors propose a statistical technique that makes full use of the inter‑event time distribution. For a pure Poisson process with rate \(\mu\), the intervals follow an exponential law \(p(t)=\mu e^{-\mu t}\). In real measurements, however, short intervals are often corrupted. The key idea is to introduce an arbitrary cutoff time \(t_{0}\) and retain only those intervals larger than \(t_{0}\). The resulting probability density becomes \(p^{*}(t)=\mu e^{\mu t_{0}}e^{-\mu t}\) for \(t\ge t_{0}\). Maximizing the likelihood for the retained intervals yields a simple estimator

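\[
\hat{\mu}=\frac{n}{\sum_{i=1}^{n}\left(t_{i}-t_{0}\right)}=\frac{1}{\bar{t}-t_{0}},
\]

where \(t_{i}\) are the \(n\) retained intervals and \(\bar{t}\) is their mean.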
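As a rough illustration of the cutoff idea, the sketch below compares the naive estimate (events divided by duration) with the maximum-likelihood estimate that follows from the truncated density, namely the number of retained intervals divided by the sum of their excesses over \(t_{0}\). The non-paralyzable dead-time model, the function names, and the numerical values (true rate, dead time, duration) are assumptions made for this example only; they are not taken from the paper or from the experiments it mentions.

```python
import random


def simulate_recorded_times(rate, duration, dead_time, seed=0):
    """Return timestamps that survive a non-paralyzable dead time (assumed model)."""
    rng = random.Random(seed)
    t, last, recorded = 0.0, None, []
    while True:
        t += rng.expovariate(rate)  # true Poisson arrivals
        if t >= duration:
            return recorded
        # An event is lost if it arrives within dead_time of the last recorded one.
        if last is None or t - last >= dead_time:
            recorded.append(t)
            last = t


def naive_rate(times, duration):
    """Traditional estimate: recorded events divided by measurement time."""
    return len(times) / duration


def cutoff_rate(times, t0):
    """MLE for the truncated exponential: n / sum(t_i - t0) over intervals >= t0."""
    intervals = (b - a for a, b in zip(times, times[1:]))
    kept = [dt for dt in intervals if dt >= t0]
    if not kept:
        raise ValueError("no intervals at or above the cutoff t0")
    return len(kept) / sum(dt - t0 for dt in kept)


# Hypothetical numbers: 1000 counts per unit time, dead time of 1e-4 time units.
TRUE_RATE = 1000.0
DEAD_TIME = 1e-4
DURATION = 100.0

times = simulate_recorded_times(TRUE_RATE, DURATION, DEAD_TIME)
print("naive :", naive_rate(times, DURATION))
print("cutoff:", cutoff_rate(times, t0=2 * DEAD_TIME))
```

In this toy model the naive estimate falls noticeably below the true rate, while the cutoff estimate stays close to it as long as \(t_{0}\) is at least as long as the dead time. A larger \(t_{0}\) discards more intervals, so it trades a smaller systematic shift for a larger statistical uncertainty.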
