Neural Style Representations and the Large-Scale Classification of Artistic Style

The artistic style of a painting is a subtle aesthetic judgment used by art historians for grouping and classifying artwork. The recently introduced `neural-style' algorithm substantially succeeds in merging the perceived artistic style of one image …

Authors: Jeremiah Johnson

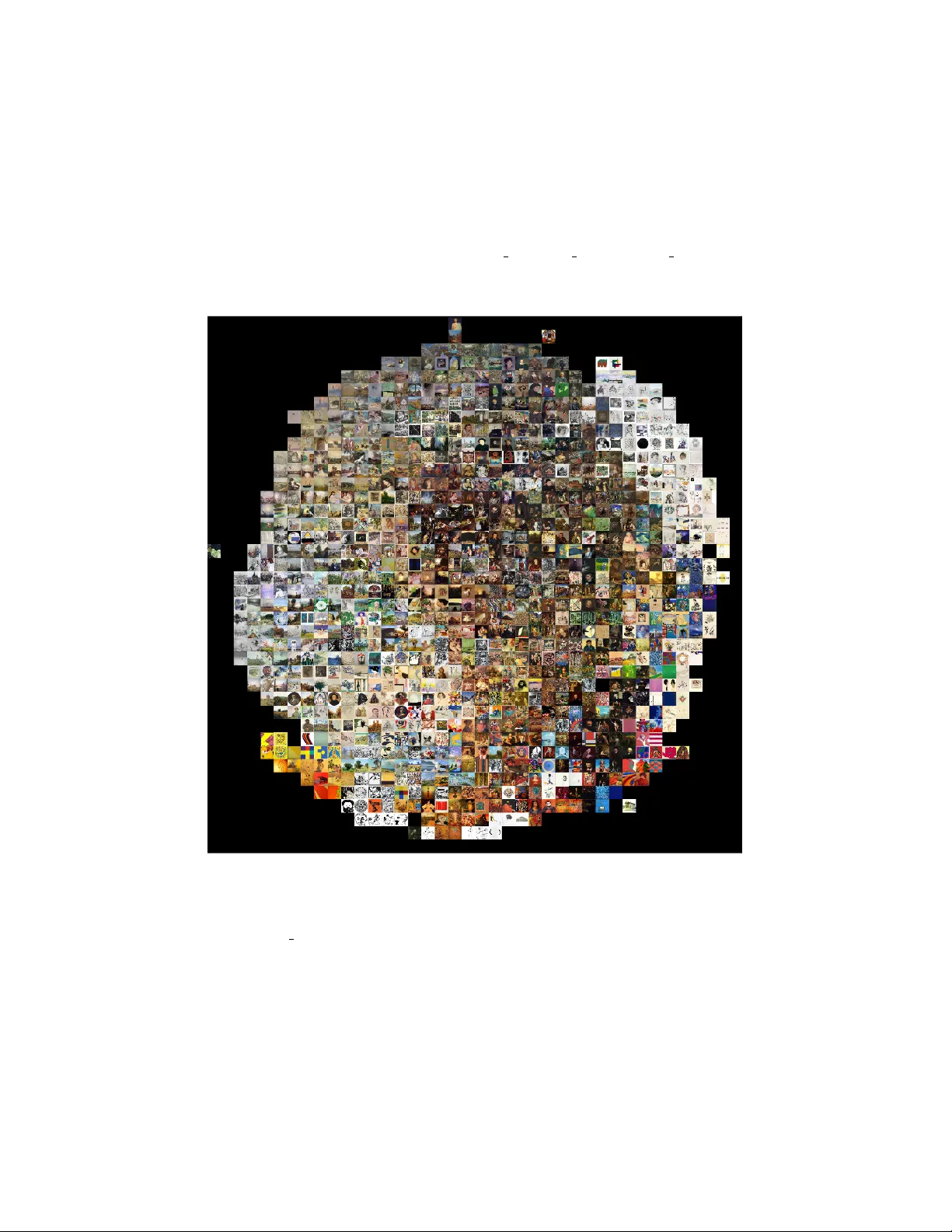

Neural Style Representations and the Lar ge-Scale Classification of Artistic Style Jeremiah W . Johnson Department of Applied Sciences & Engineering Uni versity of Ne w Hampshire Manchester , NH 03101 jeremiah.johnson@unh.edu Nov ember 17, 2016 Abstract The artistic style of a painting is a subtle aesthetic judgment used by art histo- rians for grouping and classifying artwork. The recently introduced ‘neural-style’ algorithm substantially succeeds in merging the perceiv ed artistic style of one im- age or set of images with the perceived content of another . In light of this and other recent de velopments in image analysis via con v olutional neural networks, we inv estigate the effecti v eness of a ‘neural-style’ representation for classifying the artistic style of paintings. 1 Intr oduction Any observer can sense the artistic style of painting, e ven if it takes training to articulate it. T o an art historian, the artistic style is the primary means of classifying the painting [10]. Howe ver , artistic style is not well defined, and may be loosely described as “.. a distinctive manner which permits the grouping of works into related categories” [1]. Algorithmically determining the artistic style of an artwork is a challenging problem which may include analysis of features such as the painting’ s color , its texture, and its subject matter , or none of those at all. Detecting the style of a digitized image of a painting poses additional challenges raised by the digiti zation process, which itself has consequences that may affect the ability of a machine to correctly detect artistic style; for instance, textures may be affected by the resolution of the digitization. Despite these challenges, intelligent systems for detecting artistic style would be useful for identification and retriev al of images of a similar style. In this paper we in vestigate several methods based on recent adv ances in con volu- tional neural networks for large-scale determination of artistic style. In particular , we adapt the neural-style algorithm introduced in [2] for large-scale style classification, showing performance that is competitiv e with other deep con v olutional neural network based approaches. 1 Figure 1: Original image on the left, after application of the ‘neural-style’ algorithm (style image ’Starry Night’, by V an Gogh) on the right. 2 Related W ork Algorithmic determination of artistic style in paintings has only been considered spo- radically in the past. Examples of early efforts at style classification are [8] and [15], where the datasets used are quite small, and only a handful of very distinct artistic style categories considered. Several complex models are constructed in [14] by hand- engineering features on a large dataset similar to the one used for this work. And in [7], it is demonstrated that con v olutional neural networks may be ef fecti ve for under- standing image style in general, including artistic style in paintings. In the papers just mentioned the number of artistic style categories is held to a relativ ely small 25 and 27 broadly defined style categories arespecti vely . In the paper “ A Neural Algorithm of Artistic Style”, it is demonstrated that the correlations between the low-le vel feature activ ations in a deep con volutional neural network encode sufficient information about the style of the input image to permit a tranfer of the visual style of the input image onto a ne w image via an algorithm informally referred to as the “neural-style” algorithm [2]. An example of the output of this algorithm is presented in Figure 1. Several authors hav e built upon the work of Gatys et. al. in the past year [13], [12], [12], [6]. These inv estigations have primarily focused on ways to improve either the quality of the style transfer or the efficiency of the algorithm. T o the best of our kno wledge the only other look at the use of the style representation of an image as a classifier is in [11]. 3 Data and Methods 3.1 Data The data used for this in vestigation consists of 76449 digitized images of fine art paintings. The vast majority of the images were originally obtained from http: //www.wikiart.org , the largest online repository of fine-art paintings. For con- 2 venience, we utilize a prepackaged set of images sourced and prepared by Kiri Nichols and hosted by the data-science competition website http://www.kaggle.com . A stratified 10% of the dataset was held out for v alidation purposes. W e chose to use a finer set of style categories for classification than has been used in pre vious work on image style, as we believ e that finer classification is likely necessary for practical ap- plication. W e utilize 70 distinct style cate gories, the maximum amount possible while maintaining at least 100 observations of each style category . This noticably increases the comple xity of the classification task as many of the class boundaries are not well- defined, the classes are unbalanced, and there are not nearly as man y e xamples of each of the artistic styles as in previous attempts at lar ge-scale artistic style classification. 3.2 The Neural Style Algorithm The primary insight in the neural-style algorithm outlined by Gatys et. al. is that the correlations between low-le vel feature activ ations in a conv olutional neural network capture information about the style of the image, while higher-le vel feature acti vations capture information about the content of the image. Thus, to construct an image x that merges both the style of an image a and the content of an image p , an image is initialized as white noise and the following two loss functions are simultaneously minimized: L content ( p , x ) = X l ∈ L content 1 N l M l X i,j F l ij − P l ij 2 , (1) and L sty l e ( a , x ) = X l ∈ L style 1 N 2 l M 2 l X i,j ( G l ij − A l ij ) 2 , (2) where N l is the number of filters in the layer, M l is the spatial dimensionality of the fea- ture map, F l and P l represent the feature maps e xtracted by the network at layer l from the images x and p respectiv ely , and letting S l represent the feature maps extracted by the network at layer l from the image a , G l ij = M l X k =1 F l ik F l j k and A l ij = M l X k =1 S l ik S l j k . That is, the style loss, which encodes the images style, is a loss taken ov er Gram ma- trices for filter activ ations. 3.3 Style Classification T o establish a baseline for style classification, we first trained a single con volutional neural network from scratch. The network has a uniform structure consisting of con- volutional layers with 3x3 kernels and leaky ReLUs acti vations ( α = 0 . 333 ). Between ev ery pair of conv olutional layers is a fractional max pooling layer with a 3x3 kernel. Fractional max-pooling is used as gi ven the relatively small size of the dataset, the more commonly used av erage or max-pooling operations would lead to rapid data loss and a relati vely shallo w network [3]. The con volutional layer sizes are 3 → 32 → 96 → 128 → 160 → 192 → 224 , followed by a fully-connected layer and 70-way softmax. 3 T able 1: Baseline Results Model Accuracy (top 1%) Con volutional Neural Network 27.47 Pretrained Residual Neural Network 36.99 10% dropout is applied to the fully connected layer . Aside from mean normalization and horizontal flips, the data were not augmented in any way . The model was trained ov er 55 epochs using stochastic gradient descent and achieved a top 1% accuracy of 27.468%. W e then finetuned a pretrained object classification model for style classification. The pretrained model used was a residual neural network with 50 layers pretrained on the ImageNet 2015 dataset. There are two motiv ating factors for choosing to finetune this network. The first is that residual networks currently exhibit the best on object recognition tasks, and previous work on style classification suggests that a network trained for the task of object recognition and then finetuned for image style detection will perform the task well [4], [7]. The second and more interesting reason from the standpoint of artistic style classification is that the architecture of a residual neural network makes the outputs of lower levels of the network av ailable to higher levels in the network. In this way , the net functions similar to a Long Short-T erm Memory network without gates [17]. For style classification, this is particularly appealing as a means of allowing the higher le vels in the net to consider both lower -le vel features and higher -lev el features when forming an artistic style classification, where the style may very much be determined by the lo wer-le vel features. The residual neural network model obtained top-1% accuracy of 36.985%. T o determine whether or not the style representation encoded in the Gram matrices for a giv en image has any power as a classifier , we extracted the Gram matrices of feature acti vations at layers ReLU1 1, ReLU2 1, ReLu3 1, ReLu4 1, and ReLU5 1 from a V GG-19 network for the paintings described abov e [16]. The choice of network and layers was based on the quality of the style transfers obtained with these choices in [2]. The pretrained VGG-19 model was obtained from the Caffe Model Zoo [5]. The Gram matrices were then reshaped to account for symmetry , producing a total of 304,416 distinct features per image, nearly a f actor of four greater that the total number of observations in the dataset. Analyzing the style representation was approached in two ways. First, the full feature vector was normalized and then passed to a single-layer linear classifier which was trained using Adam ov er 55 epochs, producing a top 1% accuracy of 13.23% [9]. W e then built random forest classifiers on the individual Gram matrices extracted from the activ ations of the network. The dimensionality of the Gram matrices post- reshaping is 2016, 8128, 32640, 130816, and 130816 respectively . Considered sepa- rately , the random forest classifiers b uilt on the first three of these style representations performed better than the linear classifier based on the full style representation and bet- ter than the baseline con volutional neural network, with top-1% accuracies of 27.84%, 4 T able 2: Style Representation Results Model Accuracy (top 1%) Full Style Representation - Linear Classifier 13.21 ReLU1 1 Random Forest 27.84 ReLU2 1 Random Forest 28.97 ReLU3 1 Random Forest 33.46 ReLU4 1 Random Forest 9.79 ReLU5 1 Random forest 10.18 28.97%, and 33.46%. The random forests built on the latter two layers performed considerably worse. The results are presented in table 2. In contrast to results reported in [11], we observed a significant loss in accuracy when dimensionality reduction was even lightly utilized on these smaller layers. F or instance, performing PCA while preserving 90% of the variance in the data from the layer ReLU1 1 style representation reduced the accuracy of the random forest model on that layer from 27.84% to 17%, perhaps due to our use of a larger , less homogeneous dataset. W e also saw no significant gains when the data were normalized. 4 Conclusion & Futur e W ork The ‘neural-style’ representation of an artwork of fers competiti ve performance as an artistic style classifier; nev ertheless, in our experiments a finetuned deep neural net- work still obtains superior results. Our best results using the ‘neural-style’ represen- tation of artistic style were obtained when models suitable for high-dimensional non- linear data were constructed indi vidually on the first three Gram matrices that form the building blocks of the style representation. It appears that the art-historical definition of artistic style is not quite what is cap- tured by the neural style algorithm using this network and these layers. Nevertheless it is clear that this information is relev ant and has some predictiv e ability , and under- standing and improving on these results is a tar get for future work. Acknowledgments The author would like to thank NVIDIA for GPU donation to support this research, W ikiart.org for providing many of the images, the website Kaggle.com for hosting the data, and Kiri Nichols for sourcing the data. Refer ences [1] F E R N I E , E . Art History and its Methods: A Critical Anthology . London: Phaidon, 1995. 5 [2] G A T Y S , L . A . , E C K E R , A . S . , A N D B E T H G E , M . Image style transfer using con- volutional neural netw orks. In Pr oceedings of the IEEE Confer ence on Computer V ision and P attern Recognition (2016), pp. 2414–2423. [3] G R A H A M , B . Fractional max-pooling. CoRR abs/1412.6071 (2014). [4] H E , K . , Z H A N G , X . , R E N , S . , A N D S U N , J . Deep residual learning for image recognition. CoRR abs/1512.03385 (2015). [5] J I A , Y . , S H E L H A M E R , E . , D O N A H U E , J . , K A R AY E V , S . , L O N G , J . , G I R S H I C K , R . , G UA D A R R A M A , S . , A N D D A R R E L L , T. Caf fe: Con volutional architecture for fast feature embedding. arXiv preprint arXiv:1408.5093 (2014). [6] J O H N S O N , J . , A L A H I , A . , A N D F E I - F E I , L . Perceptual losses for real-time style transfer and super-resolution. In European Conference on Computer V ision (2016). [7] K A R AY E V , S . , H E RT Z M A N N , A . , W I N N E M O E L L E R , H . , A G A RW A L A , A . , A N D D A R R E L L , T. Recognizing image style. CoRR abs/1311.3715 (2013). [8] K E R E N , D . Recognizing image style and acti vities in video using local features and naiv e bayes. P attern Recogn. Lett. 24 , 16 (Dec 2003), 2913–2922. [9] K I N G M A , D . P . , A N D B A , J . Adam: A method for stochastic optimization. In Pr oceedings of the 3r d International Conference on Learning Repr esentations (ICLR) (2014). [10] L A N G , B . The Concept of Style . Cornell Univ ersity Press, 1987. [11] M A T S U O , S . , A N D Y A N A I , K . Cnn-based style vector for style image retriev al. In Pr oceedings of the 2016 A CM on International Conference on Multimedia Retrieval (Ne w Y ork, NY , USA, 2016), ICMR ’16, A CM, pp. 309–312. [12] N OV A K , R . , A N D N I K U L I N , Y . Improving the neural algorithm of artistic style. CoRR abs/1605.04603 (2016). [13] R U D E R , M . , D O S O V I T S K I Y , A . , A N D B R O X , T . Artistic style transfer for videos. arXiv pr eprint arXiv:1604.08610 (2016). [14] S A L E H , B . , A N D E L G A M M A L , A . Lar ge-scale classification of fine-art paintings: Learning the right metric on the right feature. In International Confer ence on Data Mining W orkshops (2015), IEEE. [15] S H A M I R , L . , M A C U R A , T. , O R L OV , N . , E C K L E Y , D . M . , A N D G O L D B E R G , I . G . Impressionism, expressionism, surrealism: Automated recognition of painters and schools of art. A CM T rans. Appl. P ercept. 7 , 2 (feb 2010), 8:1–8:17. [16] S I M O N YAN , K . , A N D Z I S S E R M A N , A . V ery deep con v olutional networks for large-scale image recognition. CoRR abs/1409.1556 (2014). 6 [17] S R I V A S T A V A , R . K . , G R E FF , K . , A N D S C H M I D H U B E R , J . T raining very deep networks. In Advances in Neural Information Pr ocessing Systems (2015), pp. 2377–2385. 7 Supplementary Material T o create the follo wing visualizations, 20000 images were selected at random and their Gram matrices of activ ations at layers ReLU1 1, ReLU2 1, and ReLU3 1 were ex- tracted. The visualizations were produced by then running the Barnes-Hut t -SNE al- gorithm on the Gram matrices at each layer , rather than on the raw pixel data. Figure 2: A Barnes-Hut t -SNE visualization of 20000 randomly selected images. The features used in creating the visualization are the components of the Gram matrix from layer ReLU1 1. 8 Figure 3: A Barnes-Hut t -SNE visualization of 20000 randomly selected images. The features used in creating the visualization are the components of the Gram matrix from layer ReLU2 1. 9 Figure 4: A Barnes-Hut t -SNE visualization of 20000 randomly selected images. The features used in creating the visualization are the components of the Gram matrix from layer ReLU3 1. 10

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment