Attentional Policies for Cross-Context Multi-Agent Reinforcement Learning

Many potential applications of reinforcement learning in the real world involve interacting with other agents whose numbers vary over time. We propose new neural policy architectures for these multi-agent problems. In contrast to methods that train an individual, discrete policy for each agent and then enforce cooperation through some additional inter-policy mechanism, we follow the spirit of recent work on the power of relational inductive biases in deep networks by learning multi-agent relationships at the policy level via an attentional architecture. In our method, all agents share the same policy, but independently apply it in their own context to aggregate the other agents’ state information when selecting their next action. The structure of our architectures allows them to be applied to environments with varying numbers of agents. We demonstrate our architecture on a benchmark multi-agent autonomous vehicle coordination problem, obtaining superior results to a full-knowledge, fully-centralized reference solution, and significantly outperforming it when scaling to large numbers of agents.

💡 Research Summary

The paper introduces a novel neural policy architecture for multi‑agent reinforcement learning (MARL) that leverages self‑attention to enable a single shared policy to operate across a variable number of agents. Unlike traditional approaches that either train separate policies per agent or employ a centralized critic that aggregates all agents’ observations, the proposed “cross‑context attentional policy” embeds a scaled‑dot‑product multi‑head attention layer directly within both the actor and the critic networks. Each agent receives its own local observation and, optionally, observations from other agents it is allowed to attend to (encoded as edges in a directed graph). The attention mechanism computes query, key, and value projections for every agent, adds class‑specific relative positional embeddings to differentiate relationship types (e.g., front‑back, same lane, different lane), and produces an aggregated representation that is then fed into a small multilayer perceptron to output the action distribution (for the actor) or a scalar value (for the critic).
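One common way to write the aggregation described above is the relation‑aware form of scaled dot‑product attention, sketched here for a single head. The symbols are assumptions made for illustration and may differ from the paper's notation: $h_i$ is agent $i$'s encoded observation, $\mathcal{N}(i)$ is the set of agents that $i$ is allowed to attend to, $c(i,j)$ is the class of the edge from $i$ to $j$, $a^{K}_{c}$ and $a^{V}_{c}$ are the class‑specific key and value embeddings, and $d$ is the key dimension.

```latex
\begin{aligned}
q_i &= W^{Q} h_i, \qquad k_j = W^{K} h_j, \qquad v_j = W^{V} h_j, \\
e_{ij} &= \frac{q_i^{\top}\left(k_j + a^{K}_{c(i,j)}\right)}{\sqrt{d}}, \qquad j \in \mathcal{N}(i), \\
\alpha_{ij} &= \frac{\exp(e_{ij})}{\sum_{l \in \mathcal{N}(i)} \exp(e_{il})}, \\
z_i &= \sum_{j \in \mathcal{N}(i)} \alpha_{ij}\left(v_j + a^{V}_{c(i,j)}\right).
\end{aligned}
```

Under this reading, $z_i$ is the aggregated representation that the MLP head consumes. None of the weight matrices has a dimension that depends on the number of agents.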

Key technical contributions include:

- Parameter sharing – All agents use the same set of weights θ, dramatically reducing the total number of learnable parameters and eliminating the need to train a separate network for each possible team size.

- Dynamic handling of agent count – The input to the attention layer is an |Iₜ| × n tensor (where |Iₜ| is the current number of agents and n the per‑agent feature dimension). No padding or truncation is required; the attention computation scales naturally with the number of agents present, so the same network can be trained and evaluated at any team size.

- Relational inductive bias – By incorporating edge‑class embeddings (aᶜᴷ, aᶜⱽ) the network can learn distinct interaction patterns for different relationship types, embodying a relational inductive bias that has been shown to improve sample efficiency in graph‑structured domains.

- Compatibility with single‑agent RL algorithms – Because the actor and the critic are each an ordinary differentiable network (attention followed by an MLP), standard on‑policy methods such as PPO or A2C can be applied without modification. No separate centralized critic is required, which simplifies both training and deployment.

- Decentralized execution – At test time each agent independently runs the shared network using only locally available observations and the observations it receives from neighboring agents, achieving fully decentralized control with minimal communication overhead.
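The properties listed above can be illustrated with a minimal, standard‑library‑only sketch of single‑head attention over a directed agent graph. This is not the authors' implementation: the function names, parameter shapes, random initialization, and the two example edge classes are all hypothetical. The sketch only shows that one shared parameter set works for any number of agents, and that an agent's output depends only on its own observation and those of its neighbors.

```python
import math
import random

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def softmax(scores):
    """Numerically stable softmax over a non-empty list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def make_params(obs_dim, d, num_edge_classes, seed=0):
    """Hypothetical shared parameters; no shape depends on the agent count."""
    rng = random.Random(seed)
    def mat(rows, cols):
        return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]
    return {
        "Wq": mat(d, obs_dim), "Wk": mat(d, obs_dim), "Wv": mat(d, obs_dim),
        "aK": mat(num_edge_classes, d),  # per-class key embeddings
        "aV": mat(num_edge_classes, d),  # per-class value embeddings
    }

def attend(params, obs, edges):
    """Aggregate neighbor information for every agent in `obs`.

    obs:   {agent_id: observation vector}
    edges: {agent_id: [(neighbor_id, edge_class), ...]}  (directed graph)
    Returns {agent_id: aggregated vector of length d}.
    """
    d = len(params["Wq"])
    out = {}
    for i, h_i in obs.items():
        q = matvec(params["Wq"], h_i)
        scores, values = [], []
        for j, c in edges.get(i, []):
            k = [a + b for a, b in zip(matvec(params["Wk"], obs[j]), params["aK"][c])]
            v = [a + b for a, b in zip(matvec(params["Wv"], obs[j]), params["aV"][c])]
            scores.append(sum(a * b for a, b in zip(q, k)) / math.sqrt(d))
            values.append(v)
        if not scores:
            out[i] = [0.0] * d  # agent has no neighbors to attend to
            continue
        weights = softmax(scores)
        out[i] = [sum(w * v[t] for w, v in zip(weights, values)) for t in range(d)]
    return out

# Illustrative edge classes (assumed): 0 = vehicle ahead, 1 = vehicle behind.
params = make_params(obs_dim=3, d=4, num_edge_classes=2)

# The same parameters handle two agents ...
out_two = attend(params,
                 {0: [1.0, 0.0, 0.5], 1: [0.2, 0.1, 0.0]},
                 {0: [(1, 0)], 1: [(0, 1)]})

# ... and four agents, with no padding or retraining.
obs4 = {0: [1.0, 0.0, 0.5], 1: [0.2, 0.1, 0.0], 2: [0.3, 0.9, 0.1], 3: [0.0, 0.4, 0.7]}
edges4 = {0: [(1, 0)], 1: [(0, 1), (2, 0)], 2: [(1, 1), (3, 0)], 3: [(2, 1)]}
out_four = attend(params, obs4, edges4)

# Decentralized execution: agent 0 needs only its own and its neighbor's observation.
local = attend(params, {0: obs4[0], 1: obs4[1]}, {0: edges4[0]})
```

In a full policy, each aggregated vector would be passed through an MLP head to produce an action distribution or a value estimate; that part is omitted here.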

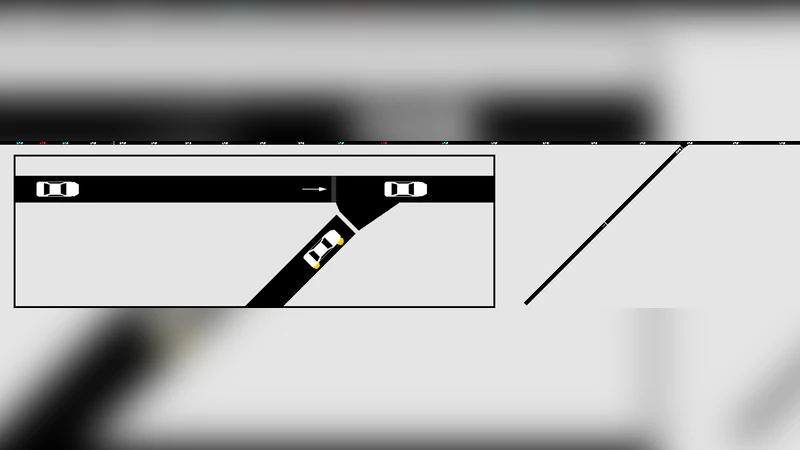

The authors evaluate the approach on the “Merge” benchmark from Vinitsky et al. (2018), a mixed‑autonomy traffic scenario where two single‑lane roads merge into one. A subset of vehicles is controllable (autonomous) while the rest follow an Intelligent Driver Model. The goal is to minimize congestion and collisions by learning acceleration policies for the autonomous vehicles. Two policies are compared: (a) a fully centralized baseline that stacks all observations into a fixed‑size vector and processes them with a conventional multilayer perceptron, and (b) the proposed attentional policy. Performance metrics include average collision count, average waiting time, and cumulative reward.
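To make the contrast with the centralized baseline concrete, the sketch below shows one way observations could be stacked into a fixed‑size vector. The zero‑padding and truncation scheme, the function name, and the `max_agents` parameter are assumptions for illustration; the summary does not say how the baseline handles a changing number of vehicles.

```python
def stack_observations(obs_list, max_agents, obs_dim, pad_value=0.0):
    """Flatten per-agent observations into one vector of length max_agents * obs_dim.

    Assumed scheme: agents beyond max_agents are dropped, missing slots are padded.
    """
    flat = []
    for obs in obs_list[:max_agents]:
        if len(obs) != obs_dim:
            raise ValueError("unexpected observation size")
        flat.extend(obs)
    flat.extend([pad_value] * (max_agents * obs_dim - len(flat)))
    return flat

# Three agents: one slot is padded with zeros.
three = stack_observations([[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]], max_agents=4, obs_dim=2)

# Six agents: the last two are silently dropped.
six = stack_observations([[float(i), 0.0] for i in range(6)], max_agents=4, obs_dim=2)
```

A fixed input size of this kind ties the network to a maximum team size chosen in advance, which is the constraint the attentional policy avoids.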

Results show that the attentional policy consistently outperforms the centralized baseline across all metrics. The advantage becomes more pronounced as the number of agents increases (e.g., the gap widens with 30% more agents), suggesting that the attention mechanism focuses on the most relevant neighboring vehicles and down‑weights irrelevant information, and therefore scales gracefully. Moreover, the same trained network can be deployed in a fully decentralized fashion without any loss of performance, which supports the practicality of the method for real‑world traffic control where communication bandwidth is limited.

The paper also discusses limitations: the experiments are confined to simulation, edge‑class definitions are hand‑crafted, and the O(|I|²) attention cost may become prohibitive for very large fleets. Future work is suggested on approximate attention (local windows, sampling), automatic learning of edge classes via meta‑learning, and real‑world validation with sensor noise and communication delays.
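The quadratic cost and the local‑window remedy mentioned above can be illustrated by counting attention scores. In this sketch, vehicles are reduced to one‑dimensional positions along a road and each vehicle attends only to others within a fixed radius. The function names, the 10‑unit spacing, and the 25‑unit radius are hypothetical values chosen for the example and do not come from the paper.

```python
def local_window_edges(positions, radius):
    """Return {i: [j, ...]} with j != i and |positions[i] - positions[j]| <= radius."""
    order = sorted(range(len(positions)), key=lambda i: positions[i])
    edges = {i: [] for i in range(len(positions))}
    for a, i in enumerate(order):
        for j in order[a + 1:]:
            if positions[j] - positions[i] > radius:
                break  # positions are sorted, so later agents are even farther away
            edges[i].append(j)
            edges[j].append(i)
    return edges

def count_scores(edges):
    """Number of attention scores that must be computed for a given edge set."""
    return sum(len(neighbors) for neighbors in edges.values())

# 100 vehicles spaced 10 units apart; each attends to vehicles within 25 units.
positions = [10.0 * i for i in range(100)]
edges = local_window_edges(positions, radius=25.0)

full = len(positions) ** 2   # dense attention: every agent scores every agent
local = count_scores(edges)  # windowed attention: only nearby pairs are scored
```

Here dense attention needs 10,000 scores and the windowed variant needs 394. For evenly spaced vehicles the windowed count grows roughly linearly with fleet size.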

In summary, by embedding relational attention directly into the policy network, this work achieves parameter efficiency, scalability to varying agent counts, and decentralized execution, all while remaining compatible with existing single‑agent RL algorithms. The approach opens a promising path toward deploying MARL solutions in traffic management, robotic swarms, and other domains where agents must cooperate under dynamic population sizes.
