Implicit Background Estimation for Semantic Segmentation

Scene understanding and semantic segmentation are at the core of many computer vision tasks, many of which involve interacting with humans in potentially dangerous ways. It is therefore paramount that techniques for the principled design of robust models be developed. In this paper, we provide analytic and empirical evidence that correcting the potentially errant non-distinct mappings produced by the softmax function can improve the robustness characteristics of a state-of-the-art semantic segmentation model, with minimal impact on performance and minimal changes to the code base.

💡 Research Summary


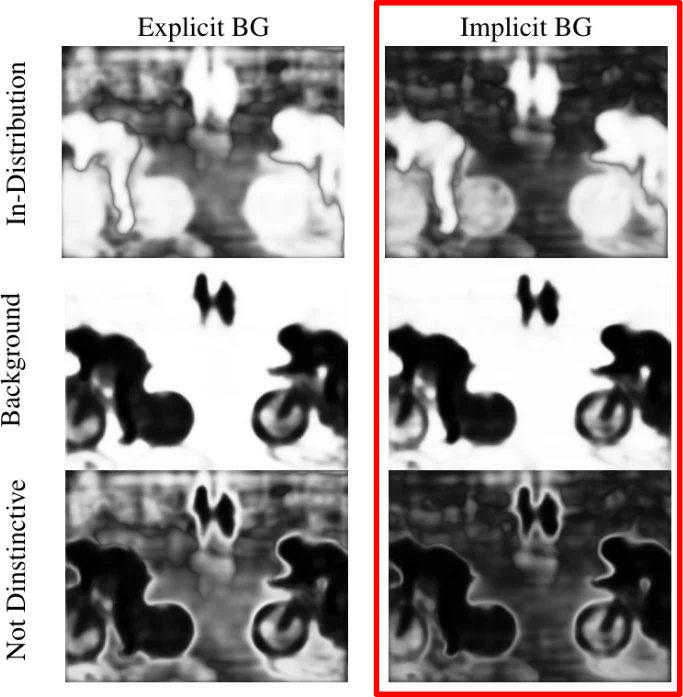

The paper “Implicit Background Estimation for Semantic Segmentation” investigates a subtle but important flaw in the way modern semantic segmentation networks treat the background class. In most state-of-the-art models the softmax function is applied to a per-pixel logit vector \(v\in\mathbb{R}^{k}\). While softmax is surjective onto the interior of the \((k-1)\)-simplex, the authors prove (Lemmas 1.1-1.3) that it is not injective: when two or more components become equal, or when some components tend to \(-\infty\), distinct logit vectors collapse to the same probability distribution. This “non-distinctiveness” is especially problematic for the background class, which often shares the same logit magnitude as foreground classes in ambiguous regions. The consequence is twofold: (1) the model’s built-in out-of-distribution (OOD) detector, i.e., the background prediction, fails to reliably flag pixels that do not belong to any known class; (2) confidence scores become poorly calibrated because softmax pushes in-distribution inputs toward the simplex vertices, inflating confidence even when the model is uncertain.
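The non-injectivity described above can be checked numerically. The following is a minimal sketch, not the authors' code: it uses a hand-written `softmax` helper and made-up logit values for a hypothetical three-class pixel. It illustrates two standard ways distinct logit vectors map to the same output (adding a constant to every logit, and floating-point saturation when some logits are very negative), plus the way larger logit magnitudes push the output toward a simplex vertex. These are well-known properties of softmax and are not necessarily the exact statements of Lemmas 1.1-1.3.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical per-pixel logits for k = 3 classes (values are illustrative only).
v = [1.0, 2.0, 3.0]

# 1) Shift invariance: adding the same constant to every logit
#    yields a different vector with the same probabilities.
shifted = [x + 10.0 for x in v]
p = softmax(v)
p_shifted = softmax(shifted)
same_after_shift = all(math.isclose(a, b) for a, b in zip(p, p_shifted))

# 2) Saturation: once some logits are very negative, their exact values
#    no longer matter in floating point, so distinct inputs collapse.
p_sat_a = softmax([0.0, -1000.0, -1000.0])
p_sat_b = softmax([0.0, -2000.0, -3000.0])

# 3) Scaling the logits moves the output toward a vertex of the simplex,
#    raising the reported confidence without any new evidence.
confidence = max(softmax(v))
confidence_scaled = max(softmax([5.0 * x for x in v]))

print(same_after_shift, p_sat_a == p_sat_b, confidence < confidence_scaled)
```

In this sketch the shifted and unshifted logits are indistinguishable after softmax, which is the kind of ambiguity that makes a learned background logit an unreliable out-of-distribution signal.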

To address these issues, the authors propose Implicit Background Estimation (IBE). Instead of learning a separate background logit, they define the background logit mathematically as

\
