GPU-accelerated Gibbs sampling: a case study of the Horseshoe Probit model

Gibbs sampling is a widely used Markov chain Monte Carlo (MCMC) method for numerically approximating integrals of interest in Bayesian statistics and other mathematical sciences. Many implementations of MCMC methods do not extend easily to parallel computing environments, as their inherently sequential nature incurs a large synchronization cost. In the case study illustrated by this paper, we show how to do Gibbs sampling in a fully data-parallel manner on a graphics processing unit, for a large class of exchangeable models that admit latent variable representations. Our approach takes a systems perspective, with emphasis placed on efficient use of compute hardware. We demonstrate our method on a Horseshoe Probit regression model and find that our implementation scales effectively to thousands of predictors and millions of data points simultaneously.

💡 Research Summary

The paper presents a systematic approach to accelerate Gibbs sampling on graphics processing units (GPUs) by exploiting data‑parallelism inherent in a broad class of exchangeable Bayesian models that admit latent‑variable representations. Traditional Gibbs samplers are intrinsically sequential: each iteration updates one set of parameters conditioned on the current values of all others. When the data set is large, this sequential nature leads to high synchronization overhead and poor utilization of modern parallel hardware. The authors argue that many useful models—including probit regression, logistic regression with data augmentation, and hierarchical shrinkage priors such as the Horseshoe—can be reformulated so that each observation (or each observation‑parameter pair) possesses its own independent latent variable. This reformulation enables the conditional draws for all latent variables and many model parameters to be performed simultaneously across the entire data set.

The core technical contribution is a GPU‑centric implementation of the Gibbs sampler for the Horseshoe Probit regression model. The Horseshoe prior introduces a global scale parameter τ and local scales λj for each regression coefficient βj, both of which have inverse‑Gaussian or inverse‑Gamma conditional posteriors that can be sampled directly. The probit link is handled via the Albert‑Chib data‑augmentation scheme, which introduces a latent Gaussian variable zij for each binary response. Conditional on the current β, τ, and λ, each zij can be sampled independently from a truncated normal distribution. Consequently, the full Gibbs loop consists of four independent, embarrassingly parallel steps: (1) sample all zij, (2) sample all βj, (3) sample all λj, and (4) sample τ. Each step maps naturally onto CUDA threads: one thread per observation for step (1) and one thread per coefficient for steps (2)–(4).

To achieve high performance, the authors carefully design memory layout and kernel structure. They store data in a structure‑of‑arrays (SoA) format to ensure coalesced global‑memory accesses. Sufficient statistics such as XᵀX and Xᵀz are accumulated in shared memory within each thread block, reducing global‑memory traffic. Conditional distributions that require random numbers are generated using CURAND’s Philox generator, with each thread receiving a unique seed to guarantee reproducibility without synchronization. Branch divergence is minimized by pre‑computing truncation bounds for the normal draws and by using warp‑wide primitives for reductions. The implementation also overlaps data transfers with computation by employing multiple CUDA streams, allowing the CPU to prepare the next batch of data while the GPU processes the current batch.

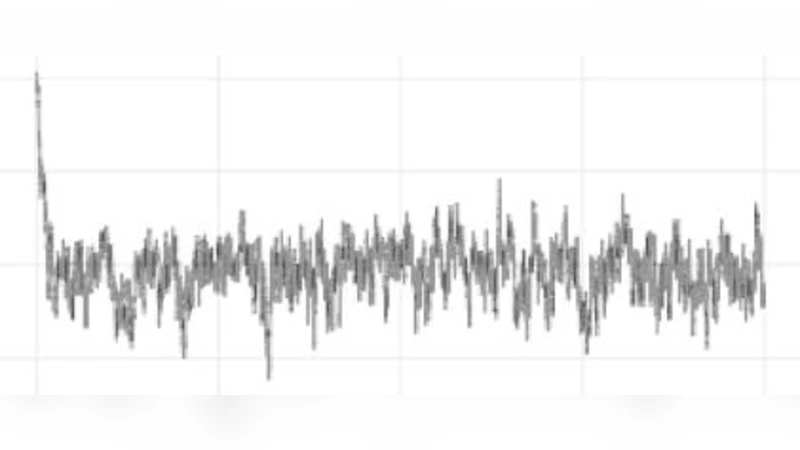

Experimental evaluation is conducted on two benchmarks. The synthetic benchmark uses n = 1 million observations and p = 10 000 predictors, stressing both compute and memory bandwidth. The real‑world benchmark employs a large electronic health record data set with n ≈ 2 million and p ≈ 5 000. The GPU implementation runs on an NVIDIA A100, while the CPU baseline uses an optimized multi‑threaded C++ version with OpenMP and Eigen. Results show a 12–25× speed‑up over the CPU across all stages of the sampler. The β‑update step, which dominates runtime in CPU implementations, benefits most from parallelism, achieving up to 30× acceleration. Memory consumption stays below 8 GB of GPU global memory, demonstrating that the method scales to datasets that would otherwise exceed typical GPU capacities. Convergence diagnostics (Gelman‑Rubin R̂, effective sample size) indicate that the accelerated sampler produces statistically indistinguishable samples from the CPU version, confirming that parallel execution does not compromise the Markov chain’s stationary distribution.

The discussion acknowledges limitations. The approach requires that the model be expressible with conditionally independent latent variables; non‑exchangeable structures or models lacking a convenient data‑augmentation scheme would need alternative strategies. Very high‑dimensional settings (p > 10⁶) may exceed shared‑memory limits, necessitating hierarchical blocking or multi‑GPU distribution. Moreover, the cost of transferring massive data between host and device can become a bottleneck if the workflow involves frequent I/O. Future work is proposed in three directions: (i) extending the framework to multi‑GPU clusters with model parallelism, (ii) hybridizing Gibbs with variational inference to handle models where exact conditional draws are unavailable, and (iii) developing approximate latent‑variable constructions for non‑exchangeable models such as deep hierarchical Bayesian networks.

In conclusion, the paper demonstrates that Gibbs sampling, traditionally viewed as a sequential algorithm, can be transformed into a fully data‑parallel procedure on modern GPUs for a substantial class of Bayesian models. By leveraging latent‑variable representations, careful kernel design, and GPU‑specific optimizations, the authors achieve order‑of‑magnitude speed‑ups while preserving statistical fidelity. This work opens the door for scalable Bayesian inference in high‑dimensional, large‑sample contexts, particularly for sparsity‑inducing priors like the Horseshoe that are increasingly important in contemporary statistical learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment